Many workflows require sensitive information such as credentials, API keys, and tokens. Rather than entering these values directly into notebooks or storing them in plain text, you can securely store them using Databricks secrets and reference them in your notebooks and jobs. This approach enhances security and simplifies credential management. This page provides an overview of Databricks secrets.

Note

Databricks recommends using Unity Catalog to configure access to data in cloud storage. See Connect to cloud object storage using Unity Catalog.

Secrets overview

To configure and use secrets you:

- Create a secret scope. A secret scope is a collection of secrets identified by a name.

- Add secrets to the scope.

- Assign permissions on the secret scope.

- Reference secrets in your code.

For an end-to-end example of how to use secrets in your workflows, see Tutorial: Create and use a Databricks secret. To use a secret in a Spark configuration property or environment variable, see Use a secret in a Spark configuration property or environment variable.

Warning

Workspace admins, secret creators, and users who have been granted permission can access and read Databricks secrets. Although Databricks attempts to redact secret values in notebook outputs, it is not possible to fully prevent these users from viewing secret contents. Always assign secret access permissions carefully to protect sensitive information.

Manage secret scopes

A secret scope is a collection of secrets identified by a name. Databricks recommends aligning secret scopes to roles or applications rather than individuals.

There are two types of secret scope:

- Azure Key Vault-backed: You can reference secrets stored in an Azure Key Vault using Azure Key Vault-backed secret scopes. An Azure Key Vault-backed secret scope is a read-only interface to the Key Vault, so you must manage the secrets in these scopes in Azure.

- Databricks-backed: A Databricks-backed secret scope is stored in an encrypted database owned and managed by Azure Databricks.

After creating a secret scope, you can assign permissions to grant users access to read, write, and manage secret scopes.

Create an Azure Key Vault-backed secret scope

This section describes how to create an Azure Key Vault-backed secret scope using the Azure portal and the Azure Databricks workspace UI. You can also create an Azure Key Vault-backed secret scope using the Databricks CLI.

Requirements

- You must have an Azure key vault instance. If you do not have a key vault instance, follow the instructions in Create a Key Vault using the Azure portal.

- You must have the Key Vault Contributor, Contributor, or Owner role on the Azure key vault instance that you want to use to back the secret scope.

Note

Creating an Azure Key Vault-backed secret scope requires the Contributor or Owner role on the Azure key vault instance even if the Azure Databricks service has previously been granted access to the key vault.

If the key vault exists in a different tenant than the Azure Databricks workspace, the Azure AD user who creates the secret scope must have permission to create service principals in the key vault's tenant. Otherwise, the following error occurs:

Unable to grant read/list permission to Databricks service principal to KeyVault 'https://xxxxx.vault.azure.net/': Status code 403, {"odata.error":{"code":"Authorization_RequestDenied","message":{"lang":"en","value":"Insufficient privileges to complete the operation."},"requestId":"XXXXX","date":"YYYY-MM-DDTHH:MM:SS"}}

Configure your Azure key vault instance for Azure Databricks

Log in to the Azure portal, then find and select the Azure key vault instance.

Under Settings, click the Access configuration tab.

Set Permission model to Vault access policy.

Note

Creating an Azure Key Vault-backed secret scope grants the Get and List permissions to the application ID for the Azure Databricks service using key vault access policies. The Azure role-based access control permission model is not supported with Azure Databricks.

Under Settings, select Networking.

In Firewalls and virtual networks, set Allow access from to Allow public access from specific virtual networks and IP addresses.

Under Exception, check Allow trusted Microsoft services to bypass this firewall.

Note

You can also set Allow access from: to Allow public access from all networks.

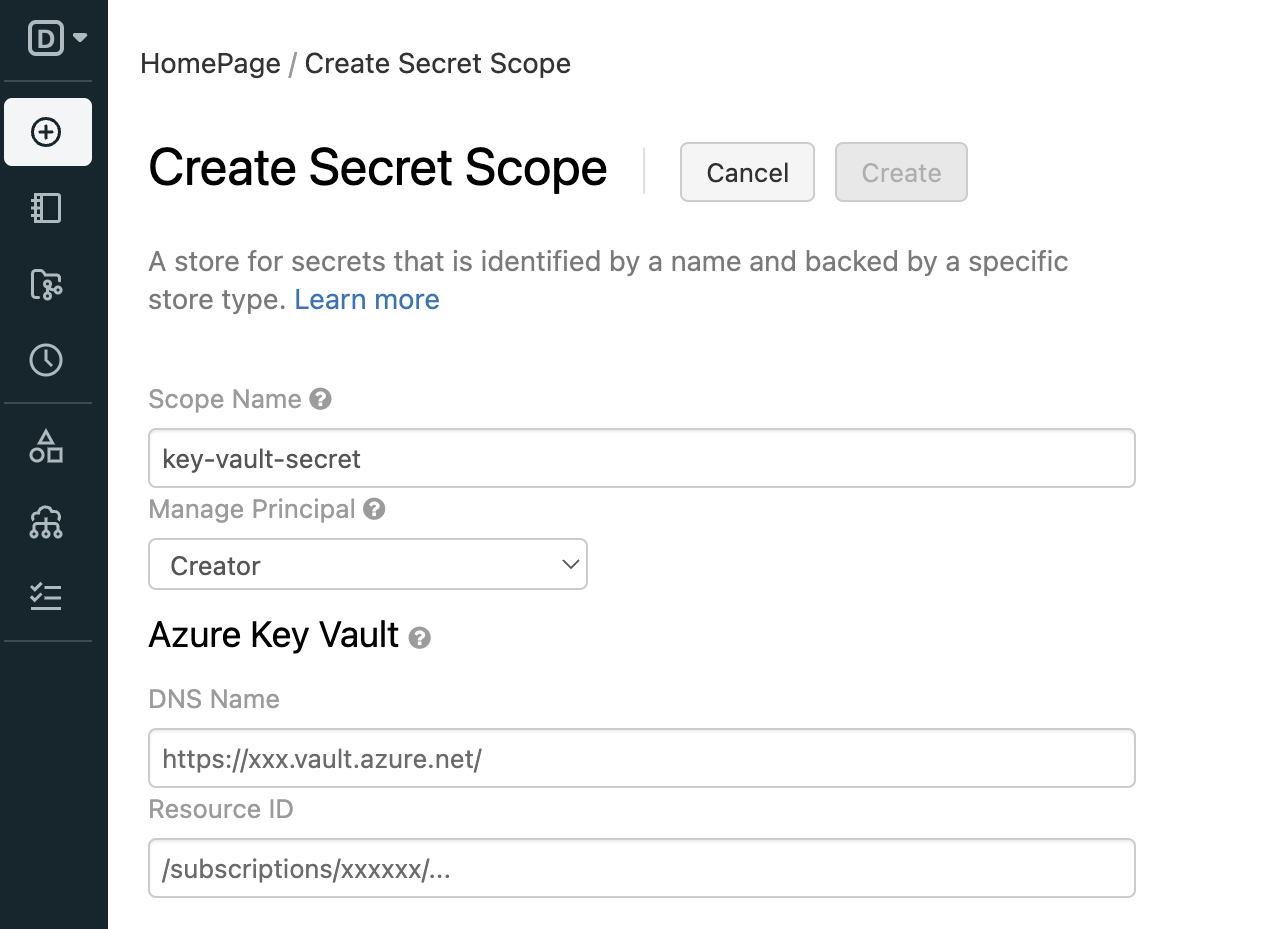

Create an Azure Key Vault-backed secret scope

Go to https://<databricks-instance>#secrets/createScope. Replace <databricks-instance> with the workspace URL of your Azure Databricks deployment. This URL is case sensitive (for example, scope in createScope must use an uppercase S).

Enter the name of the secret scope. Secret scope names are case insensitive.

In Manage Principal, select Creator or All workspace users to specify which users have the MANAGE permission on the secret scope.

The MANAGE permission allows users to read, write, and grant permissions on the scope. Your account must have the Premium plan to choose Creator.

Enter the DNS Name (for example, https://databrickskv.vault.azure.net/) and Resource ID (for example, /subscriptions/xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx/resourcegroups/databricks-rg/providers/Microsoft.KeyVault/vaults/databricksKV). These properties are available from the Settings > Properties tab of an Azure Key Vault in your Azure portal.

Click Create.

Use the Databricks CLI databricks secrets list-scopes command to verify that the scope was created successfully.
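If you script workspace setup, the three strings used in the steps above (the createScope URL, the DNS Name, and the Resource ID) can be assembled from their parts. The following is a minimal, hypothetical Python sketch: the function names are illustrative, it only builds strings in the formats shown above, and it assumes the public Azure cloud DNS suffix vault.azure.net. The values on the key vault's Settings > Properties tab remain the authoritative source.

```python
# Hypothetical helpers: they only assemble strings in the formats shown above
# and do not call any Azure or Databricks API.


def create_scope_url(databricks_instance: str) -> str:
    """Return the createScope page URL for a workspace host name.

    The fragment is case sensitive, so "createScope" keeps its uppercase S.
    """
    return f"https://{databricks_instance}#secrets/createScope"


def key_vault_dns_name(vault_name: str) -> str:
    """DNS Name of a key vault, assuming the public Azure cloud suffix."""
    return f"https://{vault_name}.vault.azure.net/"


def key_vault_resource_id(subscription_id: str, resource_group: str, vault_name: str) -> str:
    """Resource ID in the same shape as the example in the steps above."""
    return (
        f"/subscriptions/{subscription_id}/resourcegroups/{resource_group}"
        f"/providers/Microsoft.KeyVault/vaults/{vault_name}"
    )
```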

Create a Databricks-backed secret scope

This section describes how to create a secret scope using the Databricks CLI (version 0.205 and above). You can also use the Secrets API.

Secret scope names:

- Must be unique within a workspace.

- Must consist of alphanumeric characters, dashes, underscores, @, and periods, and cannot exceed 128 characters.

- Are case insensitive.

Secret scope names are considered non-sensitive and are readable by all users in the workspace.
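Checking a proposed name against these rules before calling the CLI can give a clearer error in automation scripts. The following is a minimal Python sketch, not an official validator: it assumes "alphanumeric" means ASCII letters and digits and that an empty name is invalid, and the helper names are hypothetical. The service remains the final authority on what it accepts.

```python
import re
from typing import Iterable

# Mirrors the naming rules listed above. Assumption: "alphanumeric" means
# ASCII letters and digits, and an empty name is not allowed.
_SCOPE_NAME_RE = re.compile(r"[A-Za-z0-9_.@-]{1,128}")


def is_valid_scope_name(name: str) -> bool:
    """True if name has 1-128 characters, all letters, digits, -, _, @, or ."""
    return _SCOPE_NAME_RE.fullmatch(name) is not None


def name_is_taken(name: str, existing_scopes: Iterable[str]) -> bool:
    """Scope names are case insensitive, so compare case-folded names."""
    folded = name.casefold()
    return any(scope.casefold() == folded for scope in existing_scopes)
```

Because names are case insensitive, name_is_taken treats JDBC and jdbc as the same scope; the existing names could come from databricks secrets list-scopes.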

To create a scope using the Databricks CLI:

databricks secrets create-scope <scope-name>

By default, scopes are created with MANAGE permission for the user who created the scope. After you have created a Databricks-backed secret scope, you can add secrets to it.

List secret scopes

To list the existing scopes in a workspace using the CLI:

databricks secrets list-scopes

You can also list secret scopes using the Secrets API.

Delete a secret scope

Deleting a secret scope deletes all secrets and ACLs applied to the scope. To delete a scope using the CLI, run the following:

databricks secrets delete-scope <scope-name>

You can also delete a secret scope using the Secrets API.

Manage secrets

A secret is a key-value pair that stores sensitive material using a key name that is unique within a secret scope.

This section describes how to manage secrets using the Databricks CLI (version 0.205 and above). You can also use the Secrets API. Secret names are case insensitive.

Create a secret

The method for creating a secret depends on whether you are using an Azure Key Vault-backed scope or a Databricks-backed scope.

Create a secret in an Azure Key Vault-backed scope

To create a secret in Azure Key Vault, use the Azure portal or the Azure Set Secret REST API.

Create a secret in a Databricks-backed scope

This section describes how to create a secret using the Databricks CLI (version 0.205 and above) or in a notebook using the Databricks SDK for Python. You can also use the Secrets API. Secret names are case insensitive.

Databricks CLI

When you create a secret in a Databricks-backed scope, you can specify the secret value in one of three ways:

- Specify the value as a string using the --string-value flag.

- Input the secret when prompted interactively (single-line secrets).

- Pass the secret using standard input (multi-line secrets).

For example:

databricks secrets put-secret --json '{
  "scope": "<scope-name>",
  "key": "<key-name>",
  "string_value": "<secret>"
}'
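Typing the JSON by hand inside single quotes breaks as soon as the secret itself contains a quote or backslash. The following is a minimal Python sketch, assuming you drive the CLI from a script: the hypothetical put_secret_json_argv helper builds the payload with json.dumps and returns an argument list for subprocess.run, so no shell quoting is involved. Command-line arguments can be visible to other processes on the same machine, so standard input (described next) may be preferable for highly sensitive values.

```python
import json
from typing import List


def put_secret_json_argv(scope: str, key: str, value: str) -> List[str]:
    """Build the argument list for: databricks secrets put-secret --json '<payload>'.

    json.dumps escapes quotes, backslashes, and newlines in the value, which
    is easy to get wrong when the JSON is typed by hand inside single quotes.
    """
    payload = json.dumps({"scope": scope, "key": key, "string_value": value})
    return ["databricks", "secrets", "put-secret", "--json", payload]


# Usage (not run here): pass the list to subprocess.run so no shell parsing
# is involved, for example:
#   subprocess.run(put_secret_json_argv("my-scope", "db-password", pw), check=True)
```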

If you are creating a multi-line secret, you can pass the secret using standard input. For example:

(cat << EOF
this
is
a
multi
line
secret
EOF
) | databricks secrets put-secret <scope-name> <key-name>
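The same standard-input approach works from Python, which keeps the value out of the shell history and the process argument list. This is a minimal sketch with a hypothetical helper name; the run parameter defaults to subprocess.run and exists only so the call can be replaced in tests.

```python
import subprocess
from typing import Any, Callable


def put_secret_from_stdin(
    scope: str, key: str, value: str, run: Callable[..., Any] = subprocess.run
) -> Any:
    """Send value to: databricks secrets put-secret <scope> <key>, on standard input.

    input=value passes the string exactly as given, so include or omit a
    trailing newline deliberately (the heredoc form ends with one).
    """
    argv = ["databricks", "secrets", "put-secret", scope, key]
    return run(argv, input=value, text=True, check=True)
```

Unlike the heredoc, which sends a newline after its last line, this sends only the characters in value.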

Databricks SDK for Python

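from databricks.sdk import WorkspaceClient

# WorkspaceClient() picks up credentials through Databricks unified authentication
# (in a notebook, it uses the notebook's own authentication).
w = WorkspaceClient()

# Store <secret> under <key-name> in a Databricks-backed scope.
w.secrets.put_secret("<scope-name>", "<key-name>", string_value="<secret>")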

Read a secret

This section describes how to read a secret using the Databricks CLI (version 0.205 and above) or in a notebook using Secrets utility (dbutils.secrets).

Databricks CLI

To read the value of a secret using the Databricks CLI, you must decode the base64-encoded value. You can use jq to extract the value and base64 --decode to decode it:

databricks secrets get-secret <scope-name> <key-name> | jq -r .value | base64 --decode
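Where jq is not installed, the same extraction and decoding can be done with the Python standard library. This is a minimal sketch with a hypothetical helper name; it relies only on the value field that the jq command above reads, and it assumes the secret is UTF-8 text.

```python
import base64
import json


def decode_get_secret_output(cli_output: str) -> str:
    """Decode the JSON printed by: databricks secrets get-secret <scope> <key>.

    Does the same job as: jq -r .value | base64 --decode
    Assumption: the secret is UTF-8 text (binary secrets would need the raw bytes).
    """
    encoded = json.loads(cli_output)["value"]
    return base64.b64decode(encoded).decode("utf-8")


# Usage (not run here):
#   out = subprocess.run(["databricks", "secrets", "get-secret", "my-scope", "my-key"],
#                        capture_output=True, text=True, check=True).stdout
#   value = decode_get_secret_output(out)
```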

Secrets utility (dbutils.secrets)

password = dbutils.secrets.get(scope = "<scope-name>", key = "<key-name>")

List secrets

To list secrets in a given scope:

databricks secrets list-secrets <scope-name>

The response displays metadata about the secrets, such as their key names. You can also use the Secrets utility (dbutils.secrets) in a notebook or job to list this metadata. For example:

dbutils.secrets.list('my-scope')
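A common use of this metadata is checking whether a key exists before reading it. The following is a minimal Python sketch with hypothetical helper names. It assumes each entry returned by dbutils.secrets.list() exposes the secret name as a key attribute, and it uses a local FakeMetadata stand-in so the helpers can run outside a notebook. Because secret names are case insensitive, the lookup compares case-folded names.

```python
from collections import namedtuple
from typing import Iterable, List

# Local stand-in for the metadata entries returned by dbutils.secrets.list(),
# so the helpers can run outside a notebook. Assumption: each real entry
# exposes the secret name as a .key attribute.
FakeMetadata = namedtuple("FakeMetadata", ["key"])


def secret_keys(metadata: Iterable) -> List[str]:
    """Sorted key names from secret metadata entries."""
    return sorted(m.key for m in metadata)


def has_secret(metadata: Iterable, key: str) -> bool:
    """Secret names are case insensitive, so compare case-folded names."""
    folded = key.casefold()
    return any(m.key.casefold() == folded for m in metadata)


# In a notebook (not run here):
#   metadata = dbutils.secrets.list("my-scope")
#   if has_secret(metadata, "db-password"):
#       password = dbutils.secrets.get(scope="my-scope", key="db-password")
```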

Delete a secret

To delete a secret from a scope with the Databricks CLI:

databricks secrets delete-secret <scope-name> <key-name>

You can also use the Secrets API.

To delete a secret from a scope backed by Azure Key Vault, use the Azure Delete Secret REST API or the Azure portal.

Manage secret scope permissions

By default, the user who creates a secret scope is granted the MANAGE permission on it. This allows the scope creator to read secrets in the scope, write secrets to the scope, and manage permissions on the scope.

Note

Secret ACLs are at the scope level. If you use Azure Key Vault-backed scopes, users that are granted access to the scope have access to all secrets in the Azure Key Vault. To restrict access, use separate Azure key vault instances.

This section describes how to manage secret access control using the Databricks CLI (version 0.205 and above). You can also use the Secrets API. For secret permission levels, see Secret ACLs.

Grant a user permissions on a secret scope

To grant a user permissions on a secret scope using the Databricks CLI:

databricks secrets put-acl <scope-name> <principal> <permission>

Making a put request for a principal that already has an applied permission overwrites the existing permission level.

The principal field specifies an existing Azure Databricks principal. A user is specified using their email address, a service principal using its applicationId value, and a group using its group name. For more information, see Principal.
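When permissions are granted from a script, a typo in the permission level is easier to catch before the CLI is called. The following is a minimal Python sketch with a hypothetical helper name. It uses the three secret scope permission levels (READ, WRITE, MANAGE) described in Secret ACLs, and upper-casing the input is a convenience chosen for this sketch, not documented CLI behavior.

```python
from typing import List

# Secret scope permission levels (see Secret ACLs).
PERMISSIONS = ("READ", "WRITE", "MANAGE")


def put_acl_argv(scope: str, principal: str, permission: str) -> List[str]:
    """Build: databricks secrets put-acl <scope> <principal> <permission>.

    principal is a user email, a service principal applicationId, or a group
    name. Unknown permission levels are rejected before anything is sent.
    """
    level = permission.upper()
    if level not in PERMISSIONS:
        raise ValueError(f"unknown permission {permission!r}; expected one of {PERMISSIONS}")
    return ["databricks", "secrets", "put-acl", scope, principal, level]
```

Pass the returned list to subprocess.run(..., check=True). Running it again for the same principal overwrites the earlier level, as described above.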

View secret scope permissions

To view all secret scope permissions for a given secret scope:

databricks secrets list-acls <scope-name>

To get the secret scope permissions applied to a principal for a given secret scope:

databricks secrets get-acl <scope-name> <principal>

If no ACL exists for the given principal and scope, this request fails.

Delete a secret scope permission

To delete a secret scope permission applied to a principal for a given secret scope:

databricks secrets delete-acl <scope-name> <principal>
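The behaviors described in this section (a put overwrites an existing level, get fails when no ACL exists, delete removes a principal's ACL) can be summarized with a toy in-memory model. This Python sketch is hypothetical and only illustrates those semantics for a single scope. It is not how Databricks stores or evaluates ACLs.

```python
from typing import Dict, List, Tuple


class ScopeAcls:
    """Toy, in-memory model of one scope's ACLs (illustration only)."""

    def __init__(self) -> None:
        self._acls: Dict[str, str] = {}  # principal -> permission level

    def put(self, principal: str, permission: str) -> None:
        # Like put-acl: an existing level for the principal is overwritten.
        self._acls[principal] = permission

    def get(self, principal: str) -> str:
        # Like get-acl: fails when no ACL exists for the principal.
        if principal not in self._acls:
            raise KeyError(f"no ACL for {principal!r}")
        return self._acls[principal]

    def list_all(self) -> List[Tuple[str, str]]:
        # Like list-acls: every (principal, permission) pair on the scope.
        return sorted(self._acls.items())

    def delete(self, principal: str) -> None:
        # Like delete-acl: removes the principal's ACL from the scope.
        del self._acls[principal]


# Example: the second put replaces READ with MANAGE.
acls = ScopeAcls()
acls.put("someone@example.com", "READ")
acls.put("someone@example.com", "MANAGE")
```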

Secret redaction

Storing credentials as Azure Databricks secrets makes it easy to protect your credentials when you run notebooks and jobs. However, it is easy to accidentally print a secret to standard output buffers or display the value during variable assignment.

To prevent this, Azure Databricks redacts all secret values that are read using dbutils.secrets.get() or referenced in a Spark configuration property. When displayed, the secret values are replaced with [REDACTED].

For example, if you set a variable to a secret value using dbutils.secrets.get() and then print that variable, the printed value is replaced with [REDACTED].

Warning

Redaction applies only to literal secret values. The secret redaction functionality does not prevent deliberate and arbitrary transformations of a secret literal. To ensure the proper control of secrets, you should use access control lists to limit permissions to run commands. This prevents unauthorized access to shared notebook contexts.
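To see why the warning above matters, here is a deliberately simplified Python model of literal-value redaction. It is hypothetical and is not the Databricks implementation. It only shows that exact-match replacement catches the literal value but misses a transformed copy, which is why access control, not redaction, is the real safeguard.

```python
from typing import Iterable


def redact(text: str, secret_values: Iterable[str]) -> str:
    """Toy model of literal redaction: replace exact occurrences of each secret.

    Longer values are replaced first so a secret that contains another
    secret is not partially redacted.
    """
    for value in sorted(secret_values, key=len, reverse=True):
        if value:  # skip empty strings, which would match everywhere
            text = text.replace(value, "[REDACTED]")
    return text
```

For example, redact("token=abc123", ["abc123"]) returns "token=[REDACTED]", but the same secret upper-cased to ABC123 passes through unchanged.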

Secret redaction in SQL

Azure Databricks attempts to redact all SQL DQL (Data Query Language) commands that invoke the secret function, including referenced views and user-defined functions. When the secret function is used, the output is replaced with [REDACTED] where possible. Like notebook redaction, this only applies to literal values, not to transformed or indirectly referenced secrets.

For SQL DML (Data Manipulation Language) commands, Azure Databricks allows secret lookups if the secret is considered safe, for example when it is wrapped in a cryptographic function such as sha() or aes_encrypt(), which prevents the raw value from being stored unencrypted.

Secret validation in SQL

Azure Databricks also applies validation to block SQL DML commands that could result in unencrypted secrets being saved to tables. The query analyzer attempts to identify and prevent these scenarios, which helps avoid accidental storage of sensitive information in plaintext.