In this article, learn how to securely integrate with Azure Machine Learning from Azure Synapse. This integration enables you to use Azure Machine Learning from notebooks in your Azure Synapse workspace. Communication between the two workspaces is secured using an Azure Virtual Network.

Prerequisites

An Azure subscription.

An Azure Machine Learning workspace with a private endpoint connection to a virtual network. The following workspace dependency services must also have a private endpoint connection to the virtual network:

Azure Storage Account

Tip

The storage account needs three separate private endpoints: one each for blob, file, and dfs.

Azure Key Vault

Azure Container Registry

A quick and easy way to build this configuration is to use a Bicep template or Terraform template.

An Azure Synapse workspace in a managed virtual network, using a managed private endpoint. For more information, see Azure Synapse Analytics Managed Virtual Network.

Warning

The Azure Machine Learning integration is not currently supported in Azure Synapse workspaces with data exfiltration protection. When configuring your Azure Synapse workspace, do not enable data exfiltration protection. For more information, see Azure Synapse Analytics Managed Virtual Network.

Note

The steps in this article make the following assumptions:

- The Azure Synapse workspace is in a different resource group than the Azure Machine Learning workspace.

- The Azure Synapse workspace uses a managed virtual network. The managed virtual network secures the connectivity between Azure Synapse and Azure Machine Learning. It does not restrict access to the Azure Synapse workspace. You will access the workspace over the public internet.
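The private endpoint requirements in the prerequisites can be tracked with a small checklist. The following is a minimal sketch, not an official tool: the `missing_endpoints` helper and the `inventory` data are hypothetical, and the sub-resource names (`amlworkspace`, `vault`, `registry`) are assumed to match the Private Link group IDs used in your deployment.

```python
# Private endpoint sub-resources required by the prerequisites above.
# Assumption: these strings match the Private Link group IDs in your deployment.
REQUIRED_ENDPOINTS = {
    "Azure Machine Learning workspace": {"amlworkspace"},
    "Azure Storage Account": {"blob", "file", "dfs"},
    "Azure Key Vault": {"vault"},
    "Azure Container Registry": {"registry"},
}


def missing_endpoints(existing):
    """Return {service: sorted missing sub-resources} for an inventory.

    `existing` maps a service name to the sub-resources that already
    have a private endpoint in the virtual network.
    """
    gaps = {}
    for service, required in REQUIRED_ENDPOINTS.items():
        missing = required - set(existing.get(service, ()))
        if missing:
            gaps[service] = sorted(missing)
    return gaps


# Hypothetical inventory: the storage account has no dfs endpoint yet.
inventory = {
    "Azure Machine Learning workspace": {"amlworkspace"},
    "Azure Storage Account": {"blob", "file"},
    "Azure Key Vault": {"vault"},
    "Azure Container Registry": {"registry"},
}
print(missing_endpoints(inventory))  # {'Azure Storage Account': ['dfs']}
```

An empty result means every service in the checklist has the private endpoints that the prerequisites call for.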

Understanding the network communication

In this configuration, Azure Synapse uses a managed private endpoint and virtual network. The managed virtual network and private endpoint secure the internal communications from Azure Synapse to Azure Machine Learning by restricting network traffic to the virtual network. They do not restrict communication between your client and the Azure Synapse workspace.

Azure Machine Learning doesn't provide managed private endpoints or virtual networks, and instead uses a user-managed private endpoint and virtual network. In this configuration, both internal and client/service communication is restricted to the virtual network. For example, if you wanted to directly access the Azure Machine Learning studio from outside the virtual network, you would use one of the following options:

- Create an Azure Virtual Machine inside the virtual network and use Azure Bastion to connect to it. Then connect to Azure Machine Learning from the VM.

- Create a VPN gateway or use ExpressRoute to connect clients to the virtual network.

Since the Azure Synapse workspace is publicly accessible, you can connect to it without having to create things like a VPN gateway. The Synapse workspace securely connects to Azure Machine Learning over the virtual network. Azure Machine Learning and its resources are secured within the virtual network.
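One way to confirm that a client really is inside the virtual network is to check that the Azure Machine Learning hostname resolves to a private IP address, which is how private endpoints are normally reached. The following is a minimal sketch using only the Python standard library. The helper names are hypothetical, and the hostname you pass to `resolves_privately` is an assumption you must supply from your own workspace.

```python
import ipaddress
import socket


def is_private_ip(address):
    """Return True if the IP address string is in a private range."""
    return ipaddress.ip_address(address).is_private


def resolves_privately(hostname):
    """Resolve hostname over IPv4 and report whether all addresses are private.

    Returns (all_private, sorted_addresses). Run this from a machine
    inside the virtual network, such as the VM reached through Azure Bastion.
    """
    infos = socket.getaddrinfo(
        hostname, 443, family=socket.AF_INET, proto=socket.IPPROTO_TCP
    )
    addresses = sorted({info[4][0] for info in infos})
    return all(is_private_ip(a) for a in addresses), addresses


# The address check itself needs no network access:
print(is_private_ip("10.0.0.4"))  # True
print(is_private_ip("8.8.8.8"))   # False
```

If `resolves_privately` reports a public address for your workspace hostname, the client is probably resolving the name outside the virtual network or without the private DNS configuration.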

When adding data sources, you can also secure those behind the virtual network. For example, you can securely connect to an Azure Storage Account or Azure Data Lake Storage Gen 2 through the virtual network.

For more information, see the following articles:

- Azure Synapse Analytics Managed Virtual Network

- Secure Azure Machine Learning workspace resources using virtual networks

- Connect to a secure Azure storage account from your Synapse workspace

Configure Azure Synapse

Important

Before following these steps, you need an Azure Synapse workspace that is configured to use a managed virtual network. For more information, see Azure Synapse Analytics Managed Virtual Network.

From Azure Synapse Studio, create a new Azure Machine Learning linked service.

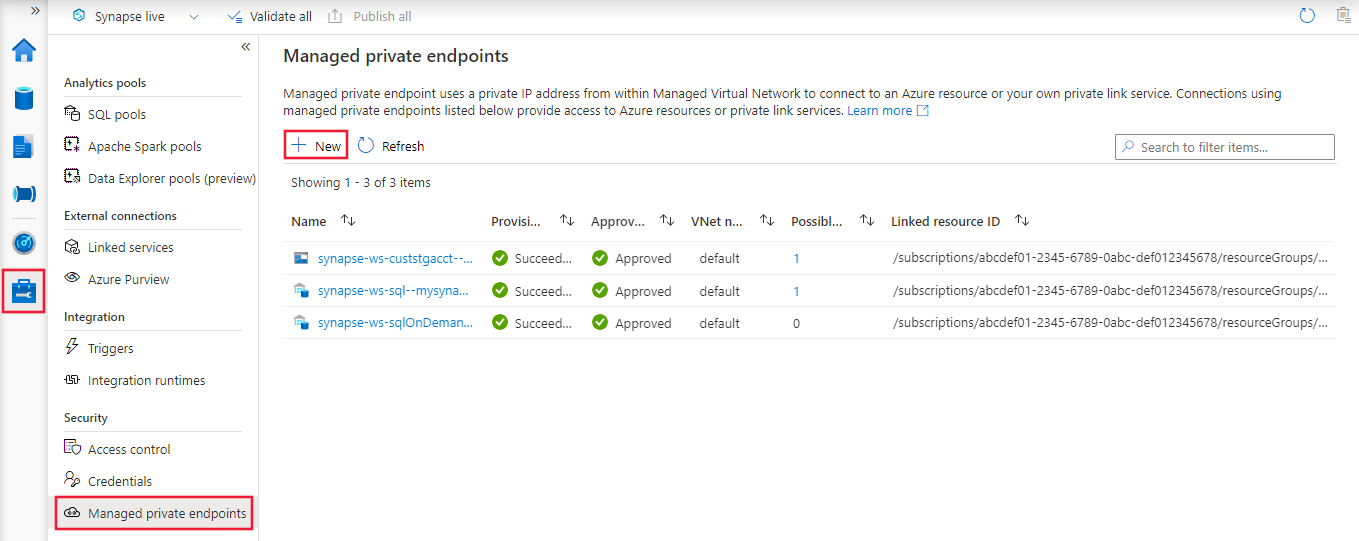

After creating and publishing the linked service, select Manage, Managed private endpoints, and then + New in Azure Synapse Studio.

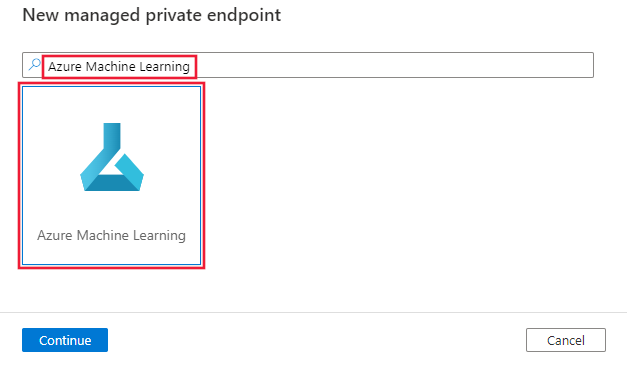

From the New managed private endpoint page, search for Azure Machine Learning and select the tile.

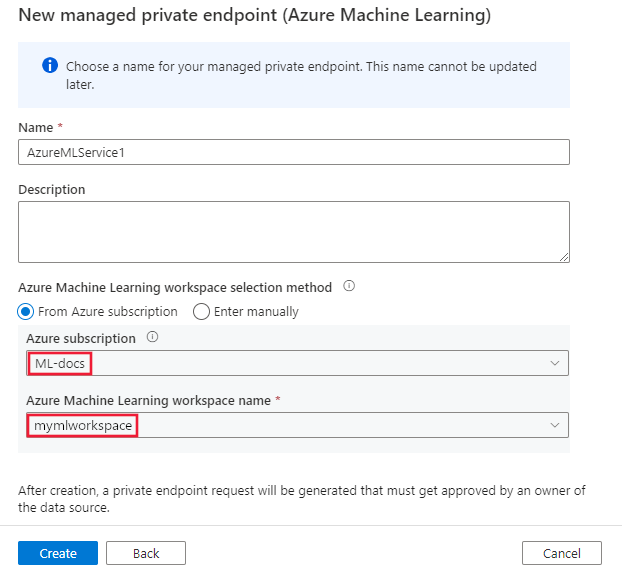

When prompted to select the Azure Machine Learning workspace, use the Azure subscription and Azure Machine Learning workspace you added previously as a linked service. Select Create to create the endpoint.

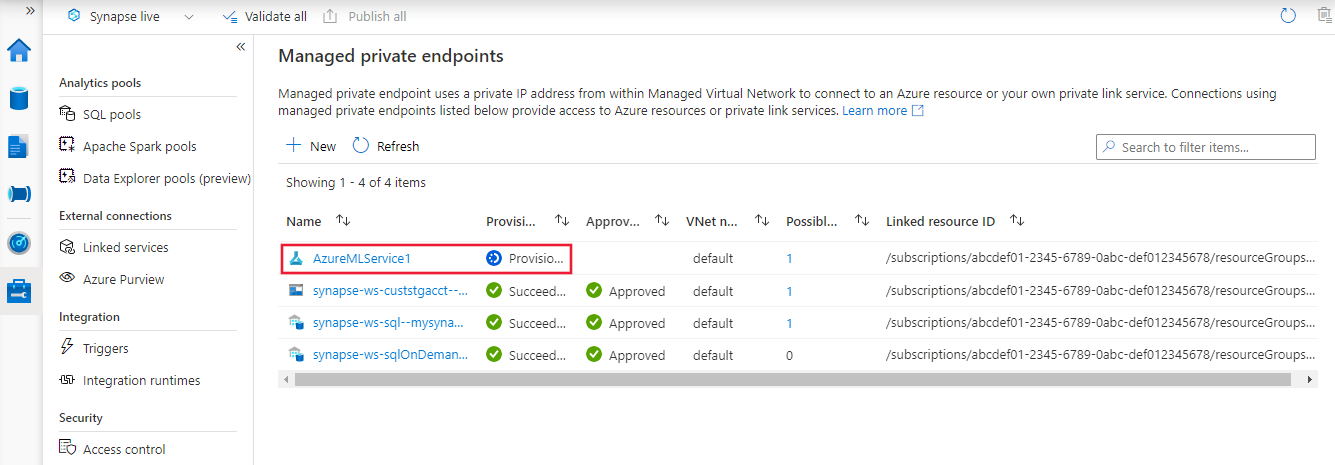

The endpoint will be listed as Provisioning until it has been created. Once created, the Approval column will list a status of Pending. You'll approve the endpoint in the Configure Azure Machine Learning section.

Note

In the following screenshot, a managed private endpoint has been created for the Azure Data Lake Storage Gen 2 associated with this Synapse workspace. For information on how to create an Azure Data Lake Storage Gen 2 and enable a private endpoint for it, see Provision and secure a linked service with Managed VNet.

Create a Spark pool

To verify that the integration between Azure Synapse and Azure Machine Learning is working, you'll use an Apache Spark pool. For information on creating one, see Create a Spark pool.

Configure Azure Machine Learning

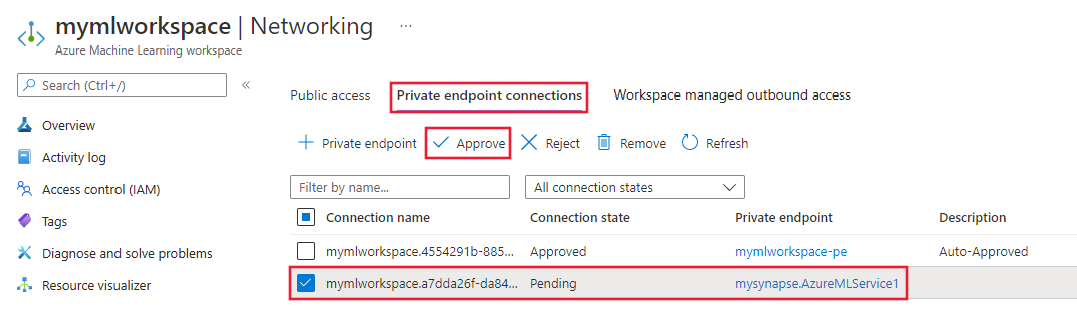

From the Azure portal, select your Azure Machine Learning workspace, and then select Networking.

Select Private endpoints, and then select the endpoint you created in the previous steps. It should have a status of Pending. Select Approve to approve the endpoint connection.

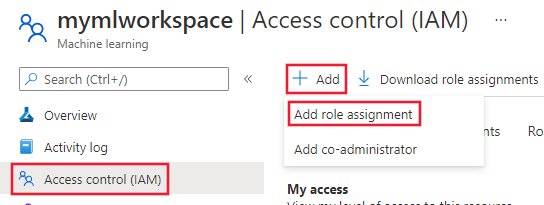

From the left of the page, select Access control (IAM). Select + Add, and then select Role assignment.

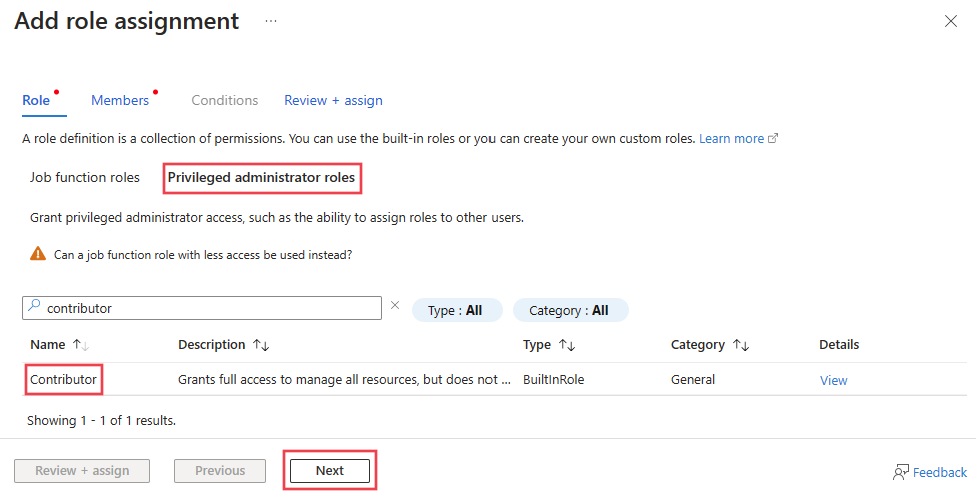

Select Privileged administrator roles, Contributor, and then select Next.

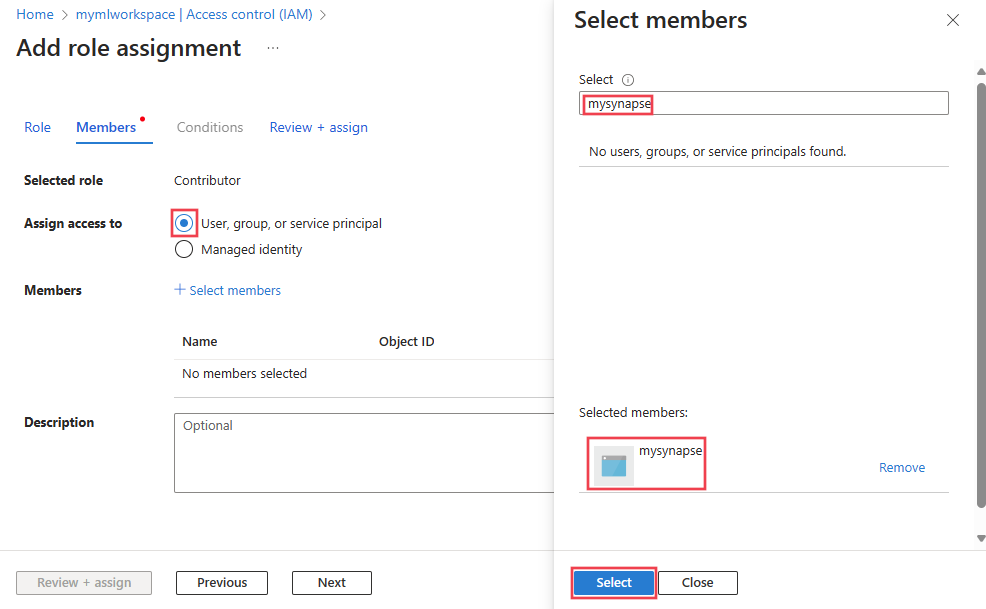

Select User, group, or service principal, and then + Select members. Enter the name of the identity created earlier, select it, and then use the Select button.

Select Review + assign, verify the information, and then select the Review + assign button.

Tip

It may take several minutes for the Azure Machine Learning workspace to update the credentials cache. Until it has been updated, you may receive errors when trying to access the Azure Machine Learning workspace from Synapse.

Verify connectivity

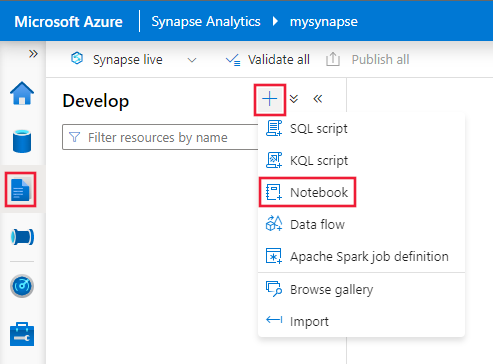

From Azure Synapse Studio, select Develop, and then + Notebook.

In the Attach to field, select the Apache Spark pool for your Azure Synapse workspace, and enter the following code in the first cell:

    from notebookutils.mssparkutils import azureML

    # getWorkspace() takes the linked service name,
    # not the Azure Machine Learning workspace name.
    ws = azureML.getWorkspace("AzureMLService1")
    print(ws.name)

Important

This code snippet connects to the linked workspace using SDK v1, and then prints the workspace info. In the printed output, the value displayed is the name of the Azure Machine Learning workspace, not the linked service name that was used in the getWorkspace() call. For more information on using the ws object, see the Workspace class reference.
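Because the credentials cache can take several minutes to update after the role assignment, the first connection attempt may fail. The following is a minimal retry sketch, not part of the Synapse or Azure Machine Learning APIs. The `call_with_retry` helper and its defaults are hypothetical, and it catches all exceptions because this article does not state which error type is raised.

```python
import time


def call_with_retry(action, attempts=5, delay_seconds=60, sleep=time.sleep):
    """Call action() until it succeeds or the attempts run out.

    Re-raises the last exception if every attempt fails.
    """
    for attempt in range(1, attempts + 1):
        try:
            return action()
        except Exception as error:  # broad on purpose: error type is not documented here
            if attempt == attempts:
                raise
            print(f"Attempt {attempt} failed ({error}); retrying in {delay_seconds}s")
            sleep(delay_seconds)


# In a Synapse notebook, wrap the call from the snippet above
# (assumes the same linked service name, "AzureMLService1"):
# from notebookutils.mssparkutils import azureML
# ws = call_with_retry(lambda: azureML.getWorkspace("AzureMLService1"))
# print(ws.name)
```

If the call still fails after the final attempt, check that the private endpoint is approved and that the role assignment targets the correct identity.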