Note

Access to this page requires authorization. You can try signing in or changing directories.

Access to this page requires authorization. You can try changing directories.

This article shows you how to use NVIDIA GPU workloads with Azure Red Hat OpenShift.

Prerequisites

- OpenShift CLI

- jq, moreutils, and gettext package

- Azure Red Hat OpenShift 4.10

If you need to install a cluster, see Tutorial: Create an Azure Red Hat OpenShift 4 cluster. clusters must be version 4.10.x or higher.

Note

As of 4.10, it is no longer necessary to set up entitlements to use the NVIDIA Operator. This has greatly simplified the setup of the cluster for GPU workloads.

Linux:

sudo dnf install jq moreutils gettext

macOS

brew install jq moreutils gettext

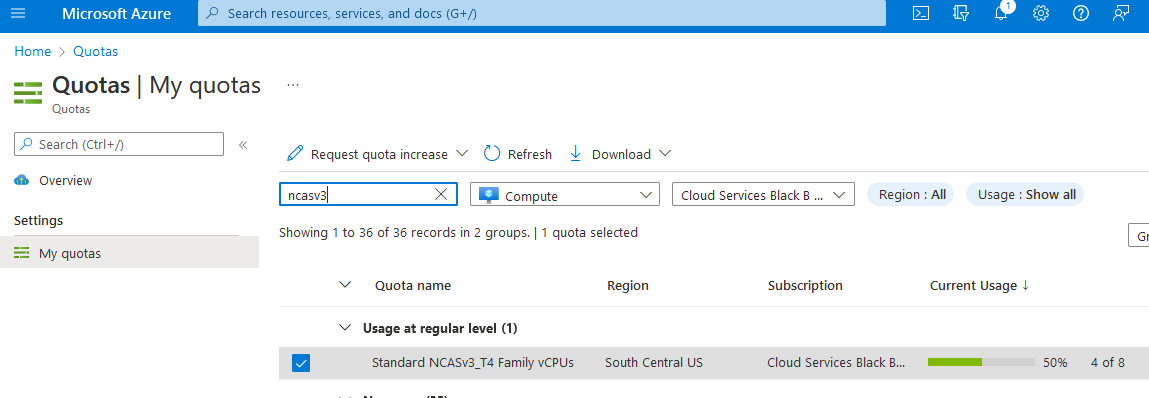

Request GPU quota

All GPU quotas in Azure are 0 by default. You will need to sign in to the Azure portal and request GPU quota. Due to competition for GPU workers, you may have to provision a cluster in a region where you can actually reserve GPU.

supports the following GPU workers:

- NC4as T4 v3

- NC6s v3

- NC8as T4 v3

- NC12s v3

- NC16as T4 v3

- NC24s v3

- NC24rs v3

- NC64as T4 v3

The following instances are also supported in additional MachineSets:

- Standard_ND96asr_v4

- NC24ads_A100_v4

- NC48ads_A100_v4

- NC96ads_A100_v4

- ND96amsr_A100_v4

Note

When requesting quota, remember that Azure is per core. To request a single NC4as T4 v3 node, you will need to request quota in groups of 4. If you wish to request an NC16as T4 v3, you will need to request quota of 16.

Sign in to Azure portal.

Enter quotas in the search box, then select Compute.

In the search box, enter NCAsv3_T4, check the box for the region your cluster is in, and then select Request quota increase.

Configure quota.

Sign in to your cluster

Sign in to OpenShift with a user account with cluster-admin privileges. The example below uses an account named kubadmin:

oc login <apiserver> -u kubeadmin -p <kubeadminpass>

Pull secret (conditional)

Update your pull secret to make sure you can install operators and connect to cloud.redhat.com.

Note

Skip this step if you have already recreated a full pull secret with cloud.redhat.com enabled.

Log into to cloud.redhat.com.

Browse to https://cloud.redhat.com/openshift/install/azure/aro-provisioned.

Select Download pull secret and save the pull secret as

pull-secret.txt.Important

The remaining steps in this section must be run in the same working directory as

pull-secret.txt.Export the existing pull secret.

oc get secret pull-secret -n openshift-config -o json | jq -r '.data.".dockerconfigjson"' | base64 --decode > export-pull.jsonMerge the downloaded pull secret with the system pull secret to add

cloud.redhat.com.jq -s '.[0] * .[1]' export-pull.json pull-secret.txt | tr -d "\n\r" > new-pull-secret.jsonUpload the new secret file.

oc set data secret/pull-secret -n openshift-config --from-file=.dockerconfigjson=new-pull-secret.jsonYou may need to wait about 1 hour for everything to sync up with cloud.redhat.com.

Delete secrets.

rm pull-secret.txt export-pull.json new-pull-secret.json

GPU machine set

uses Kubernetes MachineSet to create machine sets. The procedure below explains how to export the first machine set in a cluster and use that as a template to build a single GPU machine.

View existing machine sets.

For ease of setup, this example uses the first machine set as the one to clone to create a new GPU machine set.

MACHINESET=$(oc get machineset -n openshift-machine-api -o=jsonpath='{.items[0]}' | jq -r '[.metadata.name] | @tsv')Save a copy of the example machine set.

oc get machineset -n openshift-machine-api $MACHINESET -o json > gpu_machineset.jsonChange the

.metadata.namefield to a new unique name.jq '.metadata.name = "nvidia-worker-<region><az>"' gpu_machineset.json| sponge gpu_machineset.jsonEnsure

spec.replicasmatches the desired replica count for the machine set.jq '.spec.replicas = 1' gpu_machineset.json| sponge gpu_machineset.jsonChange the

.spec.selector.matchLabels.machine.openshift.io/cluster-api-machinesetfield to match the.metadata.namefield.jq '.spec.selector.matchLabels."machine.openshift.io/cluster-api-machineset" = "nvidia-worker-<region><az>"' gpu_machineset.json| sponge gpu_machineset.jsonChange the

.spec.template.metadata.labels.machine.openshift.io/cluster-api-machinesetto match the.metadata.namefield.jq '.spec.template.metadata.labels."machine.openshift.io/cluster-api-machineset" = "nvidia-worker-<region><az>"' gpu_machineset.json| sponge gpu_machineset.jsonChange the

spec.template.spec.providerSpec.value.vmSizeto match the desired GPU instance type from Azure.The machine used in this example is Standard_NC4as_T4_v3.

jq '.spec.template.spec.providerSpec.value.vmSize = "Standard_NC4as_T4_v3"' gpu_machineset.json | sponge gpu_machineset.jsonChange the

spec.template.spec.providerSpec.value.zoneto match the desired zone from Azure.jq '.spec.template.spec.providerSpec.value.zone = "1"' gpu_machineset.json | sponge gpu_machineset.jsonDelete the

.statussection of the yaml file.jq 'del(.status)' gpu_machineset.json | sponge gpu_machineset.jsonVerify the other data in the yaml file.

Ensure the correct SKU is set

Depending on the image used for the machine set, both values for image.sku and image.version must be set accordingly. This is to ensure if generation 1 or 2 virtual machine for Hyper-V will be used. See here for more information.

Example:

If using Standard_NC4as_T4_v3, both versions are supported. As mentioned in Feature support. In this case, no changes are required.

If using Standard_NC24ads_A100_v4, only Generation 2 VM is supported.

In this case, the image.sku value must follow the equivalent v2 version of the image that corresponds to the cluster's original image.sku. For this example, the value will be v410-v2.

This can be found using the following command:

az vm image list --architecture x64 -o table --all --offer aro4 --publisher azureopenshift

Filtered output:

SKU VERSION

------- ---------------

v410-v2 410.84.20220125

aro_410 410.84.20220125

If the cluster was created with the base SKU image aro_410, and the same value is kept in the machine set, it will fail with the following error:

failure sending request for machine myworkernode: cannot create vm: compute.VirtualMachinesClient#CreateOrUpdate: Failure sending request: StatusCode=400 -- Original Error: Code="BadRequest" Message="The selected VM size 'Standard_NC24ads_A100_v4' cannot boot Hypervisor Generation '1'.

Create GPU machine set

Use the following steps to create the new GPU machine. It may take 10-15 minutes to provision a new GPU machine. If this step fails, sign in to Azure portal and ensure there are no availability issues. To do so, go to Virtual Machines and search for the worker name you created previously to see the status of VMs.

Create the GPU Machine set.

oc create -f gpu_machineset.jsonThis command will take a few minutes to complete.

Verify GPU machine set.

Machines should be deploying. You can view the status of the machine set with the following commands:

oc get machineset -n openshift-machine-api oc get machine -n openshift-machine-apiOnce the machines are provisioned (which could take 5-15 minutes), machines will show as nodes in the node list:

oc get nodesYou should see a node with the

nvidia-worker-southcentralus1name that was created previously.

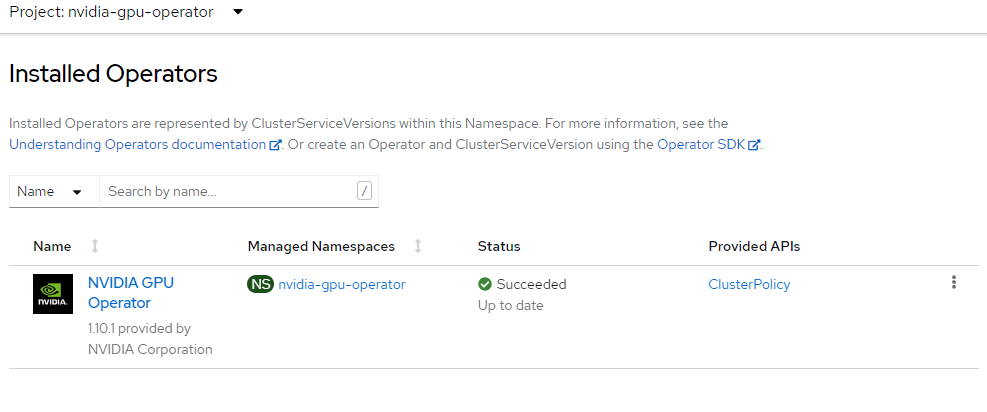

Install NVIDIA GPU Operator

This section explains how to create the nvidia-gpu-operator namespace, set up the operator group, and install the NVIDIA GPU operator.

Create NVIDIA namespace.

cat <<EOF | oc apply -f - apiVersion: v1 kind: Namespace metadata: name: nvidia-gpu-operator EOFCreate Operator Group.

cat <<EOF | oc apply -f - apiVersion: operators.coreos.com/v1 kind: OperatorGroup metadata: name: nvidia-gpu-operator-group namespace: nvidia-gpu-operator spec: targetNamespaces: - nvidia-gpu-operator EOFGet the latest NVIDIA channel using the following command:

CHANNEL=$(oc get packagemanifest gpu-operator-certified -n openshift-marketplace -o jsonpath='{.status.defaultChannel}')

Note

If your cluster was created without providing the pull secret, the cluster won't include samples or operators from Red Hat or from certified partners. This will result in the following error message:

Error from server (NotFound): packagemanifests.packages.operators.coreos.com "gpu-operator-certified" not found.

To add your Red Hat pull secret on an Azure Red Hat OpenShift cluster, follow this guidance.

Get latest NVIDIA package using the following command:

PACKAGE=$(oc get packagemanifests/gpu-operator-certified -n openshift-marketplace -ojson | jq -r '.status.channels[] | select(.name == "'$CHANNEL'") | .currentCSV')Create Subscription.

envsubst <<EOF | oc apply -f - apiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: gpu-operator-certified namespace: nvidia-gpu-operator spec: channel: "$CHANNEL" installPlanApproval: Automatic name: gpu-operator-certified source: certified-operators sourceNamespace: openshift-marketplace startingCSV: "$PACKAGE" EOFWait for Operator to finish installing.

Don't proceed until you have verified that the operator has finished installing. Also, ensure that your GPU worker is online.

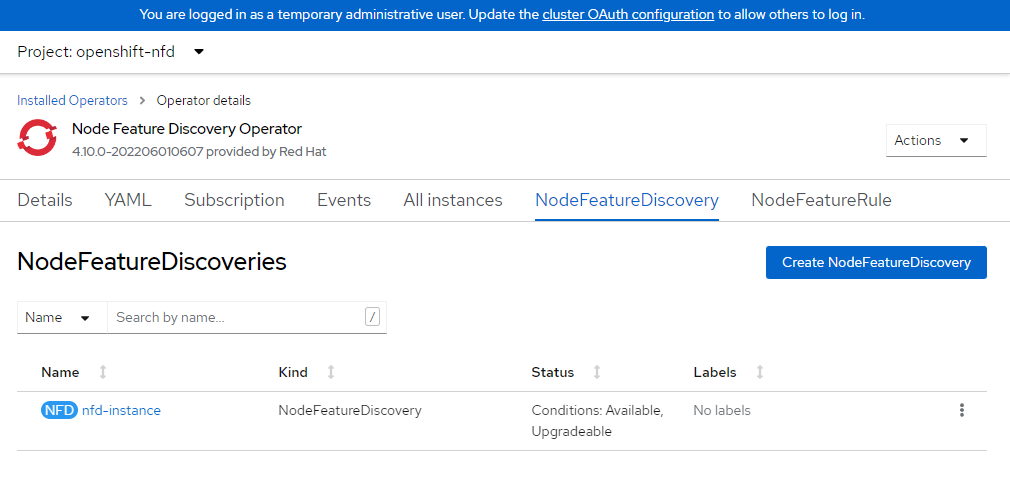

Install node feature discovery operator

The node feature discovery operator will discover the GPU on your nodes and appropriately label the nodes so you can target them for workloads.

This example installs the NFD operator into the openshift-ndf namespace and creates the "subscription" which is the configuration for NFD.

Official Documentation for Installing Node Feature Discovery Operator.

Set up

Namespace.cat <<EOF | oc apply -f - apiVersion: v1 kind: Namespace metadata: name: openshift-nfd EOFCreate

OperatorGroup.cat <<EOF | oc apply -f - apiVersion: operators.coreos.com/v1 kind: OperatorGroup metadata: generateName: openshift-nfd- name: openshift-nfd namespace: openshift-nfd EOFCreate

Subscription.cat <<EOF | oc apply -f - apiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: nfd namespace: openshift-nfd spec: channel: "stable" installPlanApproval: Automatic name: nfd source: redhat-operators sourceNamespace: openshift-marketplace EOFWait for Node Feature discovery to complete installation.

You can log in to your OpenShift console to view operators or simply wait a few minutes. Failure to wait for the operator to install will result in an error in the next step.

Create NFD Instance.

cat <<EOF | oc apply -f - kind: NodeFeatureDiscovery apiVersion: nfd.openshift.io/v1 metadata: name: nfd-instance namespace: openshift-nfd spec: customConfig: configData: | # - name: "more.kernel.features" # matchOn: # - loadedKMod: ["example_kmod3"] # - name: "more.features.by.nodename" # value: customValue # matchOn: # - nodename: ["special-.*-node-.*"] operand: image: >- registry.redhat.io/openshift4/ose-node-feature-discovery@sha256:07658ef3df4b264b02396e67af813a52ba416b47ab6e1d2d08025a350ccd2b7b servicePort: 12000 workerConfig: configData: | core: # labelWhiteList: # noPublish: false sleepInterval: 60s # sources: [all] # klog: # addDirHeader: false # alsologtostderr: false # logBacktraceAt: # logtostderr: true # skipHeaders: false # stderrthreshold: 2 # v: 0 # vmodule: ## NOTE: the following options are not dynamically run-time ## configurable and require a nfd-worker restart to take effect ## after being changed # logDir: # logFile: # logFileMaxSize: 1800 # skipLogHeaders: false sources: # cpu: # cpuid: ## NOTE: attributeWhitelist has priority over attributeBlacklist # attributeBlacklist: # - "BMI1" # - "BMI2" # - "CLMUL" # - "CMOV" # - "CX16" # - "ERMS" # - "F16C" # - "HTT" # - "LZCNT" # - "MMX" # - "MMXEXT" # - "NX" # - "POPCNT" # - "RDRAND" # - "RDSEED" # - "RDTSCP" # - "SGX" # - "SSE" # - "SSE2" # - "SSE3" # - "SSE4.1" # - "SSE4.2" # - "SSSE3" # attributeWhitelist: # kernel: # kconfigFile: "/path/to/kconfig" # configOpts: # - "NO_HZ" # - "X86" # - "DMI" pci: deviceClassWhitelist: - "0200" - "03" - "12" deviceLabelFields: # - "class" - "vendor" # - "device" # - "subsystem_vendor" # - "subsystem_device" # usb: # deviceClassWhitelist: # - "0e" # - "ef" # - "fe" # - "ff" # deviceLabelFields: # - "class" # - "vendor" # - "device" # custom: # - name: "my.kernel.feature" # matchOn: # - loadedKMod: ["example_kmod1", "example_kmod2"] # - name: "my.pci.feature" # matchOn: # - pciId: # class: ["0200"] # vendor: ["15b3"] # device: ["1014", "1017"] # - pciId : # vendor: ["8086"] # device: ["1000", "1100"] # - name: "my.usb.feature" # matchOn: # - usbId: # class: ["ff"] # vendor: ["03e7"] # device: ["2485"] # - usbId: # class: ["fe"] # vendor: ["1a6e"] # device: ["089a"] # - name: "my.combined.feature" # matchOn: # - pciId: # vendor: ["15b3"] # device: ["1014", "1017"] # loadedKMod : ["vendor_kmod1", "vendor_kmod2"] EOFVerify that NFD is ready.

The status of this operator should show as Available.

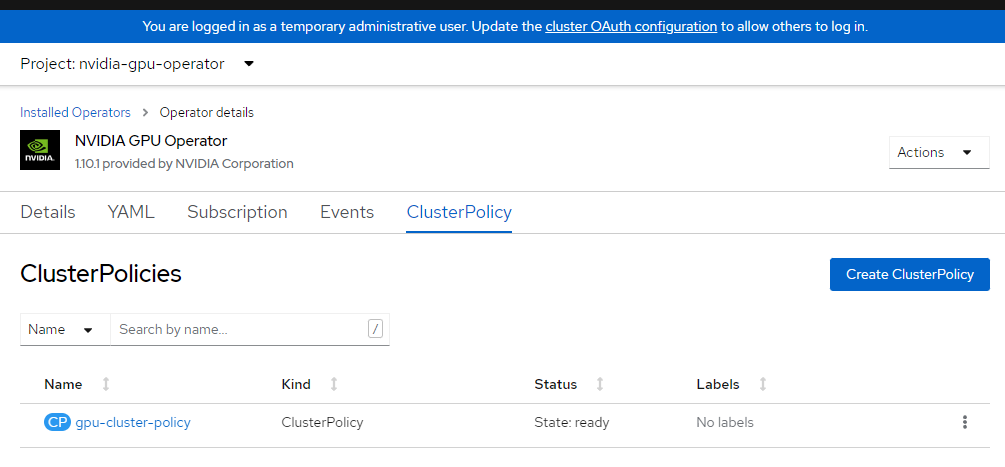

Apply NVIDIA Cluster Config

This section explains how to apply the NVIDIA cluster config. Please read the NVIDIA documentation on customizing this if you have your own private repos or specific settings. This process may take several minutes to complete.

Apply cluster config.

cat <<EOF | oc apply -f - apiVersion: nvidia.com/v1 kind: ClusterPolicy metadata: name: gpu-cluster-policy spec: migManager: enabled: true operator: defaultRuntime: crio initContainer: {} runtimeClass: nvidia deployGFD: true dcgm: enabled: true gfd: {} dcgmExporter: config: name: '' driver: licensingConfig: nlsEnabled: false configMapName: '' certConfig: name: '' kernelModuleConfig: name: '' repoConfig: configMapName: '' virtualTopology: config: '' enabled: true use_ocp_driver_toolkit: true devicePlugin: {} mig: strategy: single validator: plugin: env: - name: WITH_WORKLOAD value: 'true' nodeStatusExporter: enabled: true daemonsets: {} toolkit: enabled: true EOFVerify cluster policy.

Log in to OpenShift console and browse to operators. Ensure sure you're in the

nvidia-gpu-operatornamespace. It should sayState: Ready once everything is complete.

Validate GPU

It may take some time for the NVIDIA Operator and NFD to completely install and self-identify the machines. Run the following commands to validate that everything is running as expected:

Verify that NFD can see your GPU(s).

oc describe node | egrep 'Roles|pci-10de' | grep -v masterThe output should appear similar to the following:

Roles: worker feature.node.kubernetes.io/pci-10de.present=trueVerify node labels.

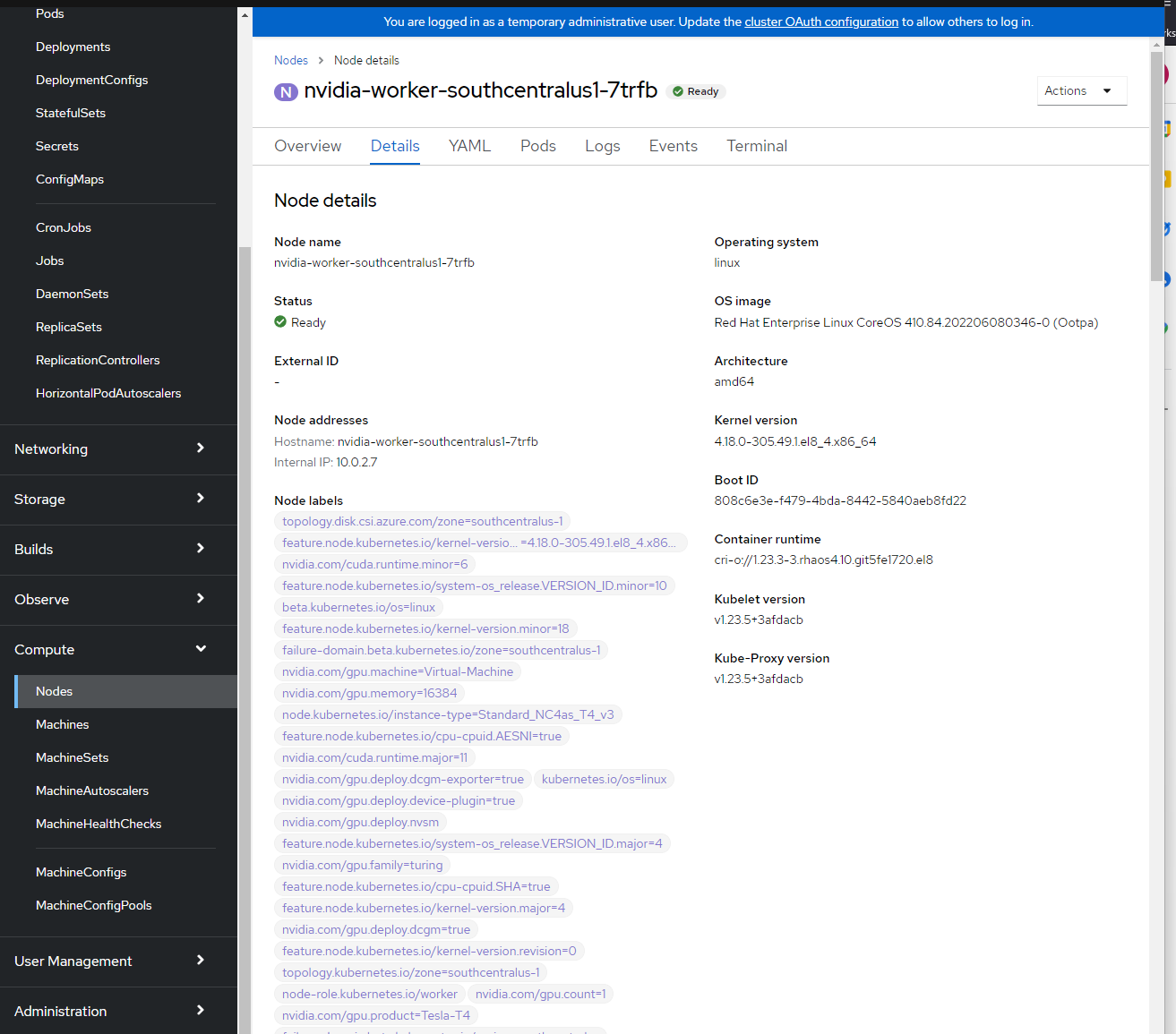

You can see the node labels by logging into the OpenShift console -> Compute -> Nodes -> nvidia-worker-southcentralus1-. You should see multiple NVIDIA GPU labels and the pci-10de device from above.

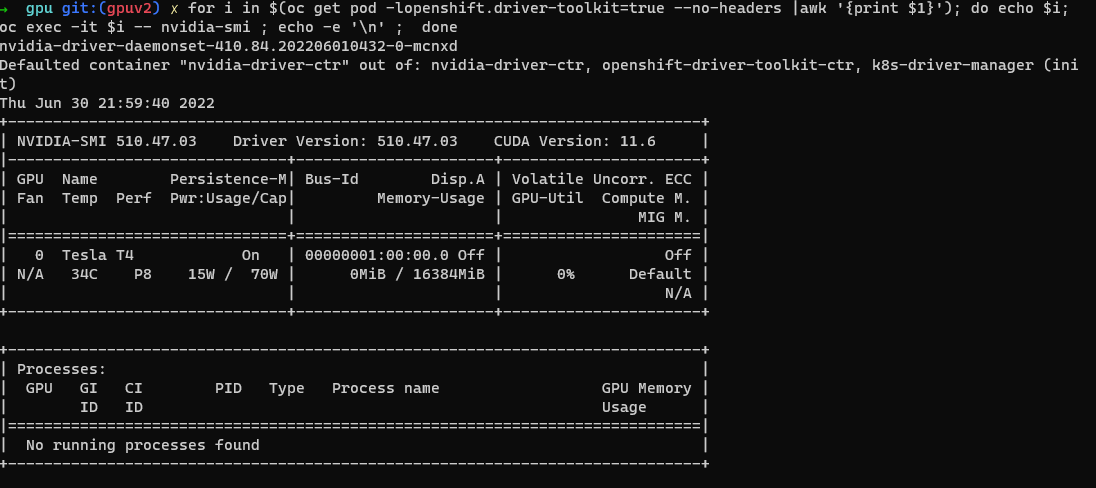

NVIDIA SMI tool verification.

oc project nvidia-gpu-operator for i in $(oc get pod -lopenshift.driver-toolkit=true --no-headers |awk '{print $1}'); do echo $i; oc exec -it $i -- nvidia-smi ; echo -e '\n' ; doneYou should see output that shows the GPUs available on the host such as this example screenshot. (Varies depending on GPU worker type)

Create Pod to run a GPU workload

oc project nvidia-gpu-operator cat <<EOF | oc apply -f - apiVersion: v1 kind: Pod metadata: name: cuda-vector-add spec: restartPolicy: OnFailure containers: - name: cuda-vector-add image: "quay.io/giantswarm/nvidia-gpu-demo:latest" resources: limits: nvidia.com/gpu: 1 nodeSelector: nvidia.com/gpu.present: true EOFView logs.

oc logs cuda-vector-add --tail=-1

Note

If you get an error Error from server (BadRequest): container "cuda-vector-add" in pod "cuda-vector-add" is waiting to start: ContainerCreating, try running oc delete pod cuda-vector-add and then re-run the create statement above.

The output should be similar to the following (depending on GPU):

[Vector addition of 5000 elements]

Copy input data from the host memory to the CUDA device

CUDA kernel launch with 196 blocks of 256 threads

Copy output data from the CUDA device to the host memory

Test PASSED

Done

If successful, the pod can be deleted:

oc delete pod cuda-vector-add