This article explains how to use the no-code editor to automatically capture streaming data from Event Hubs in an Azure Data Lake Storage Gen2 account in Delta Lake format.

Prerequisites

- Your Azure Event Hubs and Azure Data Lake Storage Gen2 resources must be publicly accessible and can't be behind a firewall or secured in an Azure Virtual Network.

- The data in your Event Hubs must be serialized in JSON, CSV, or Avro format.

Configure a job to capture data

Use the following steps to configure a Stream Analytics job to capture data in Azure Data Lake Storage Gen2.

In the Azure portal, navigate to your event hub.

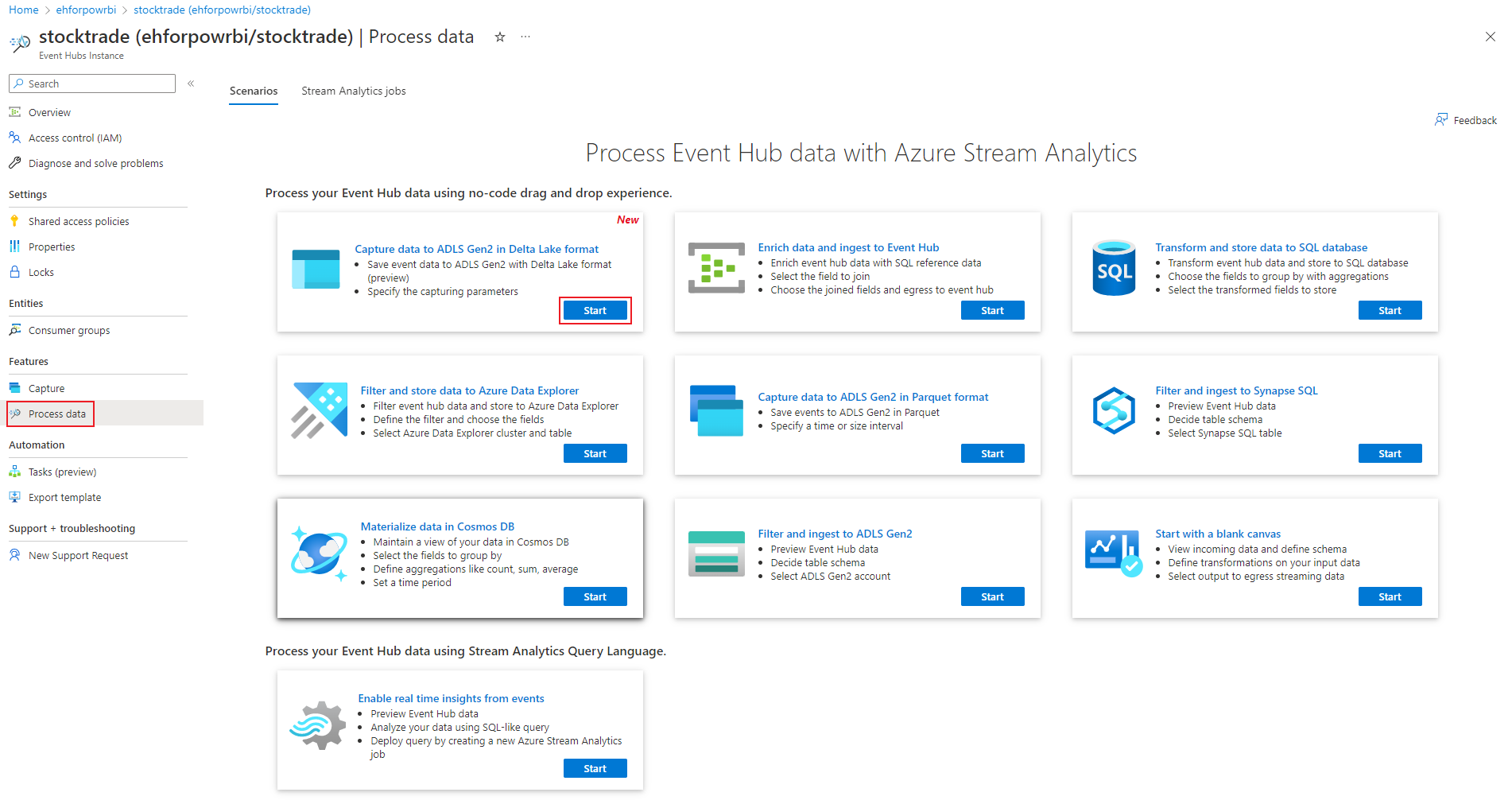

Select Features > Process Data, and select Start on the Capture data to ADLS Gen2 in Delta Lake format card.

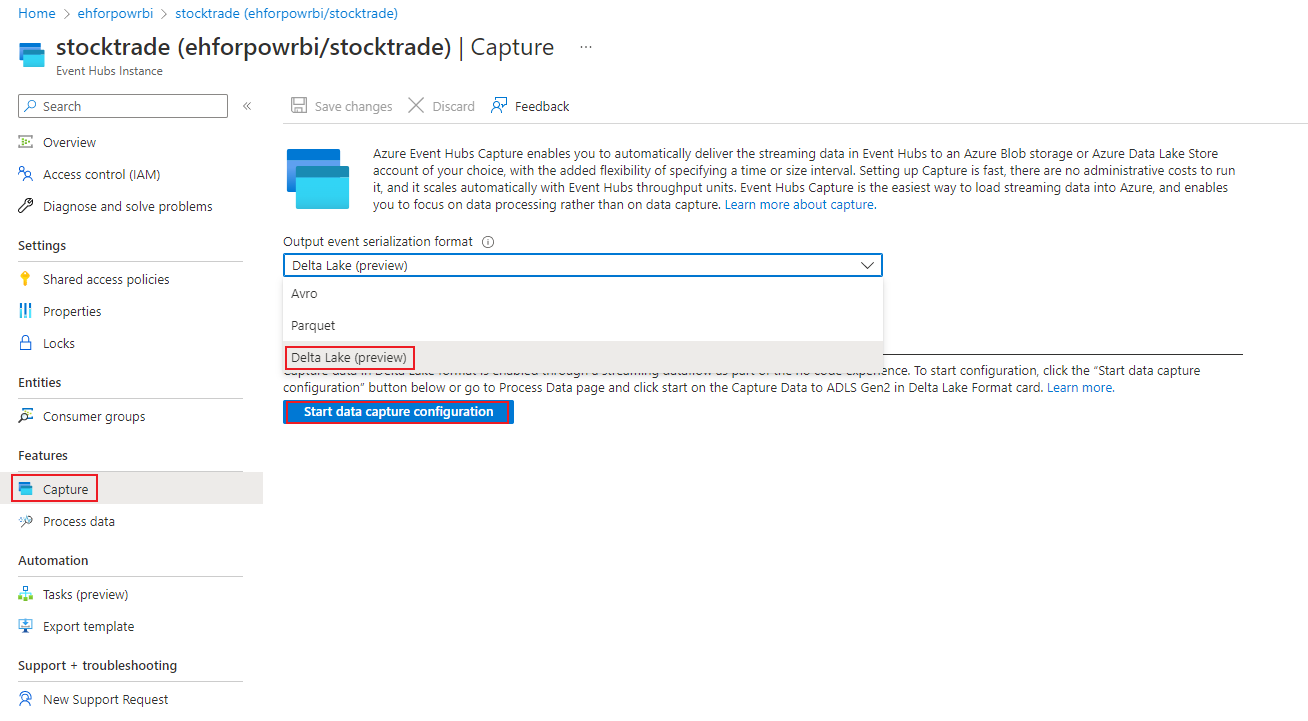

Alternatively, select Features > Capture, select the Delta Lake option under "Output event serialization format", and then select Start data capture configuration.

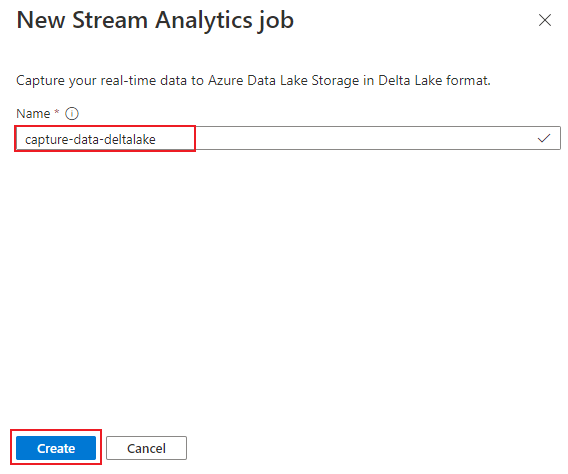

Enter a name to identify your Stream Analytics job. Select Create.

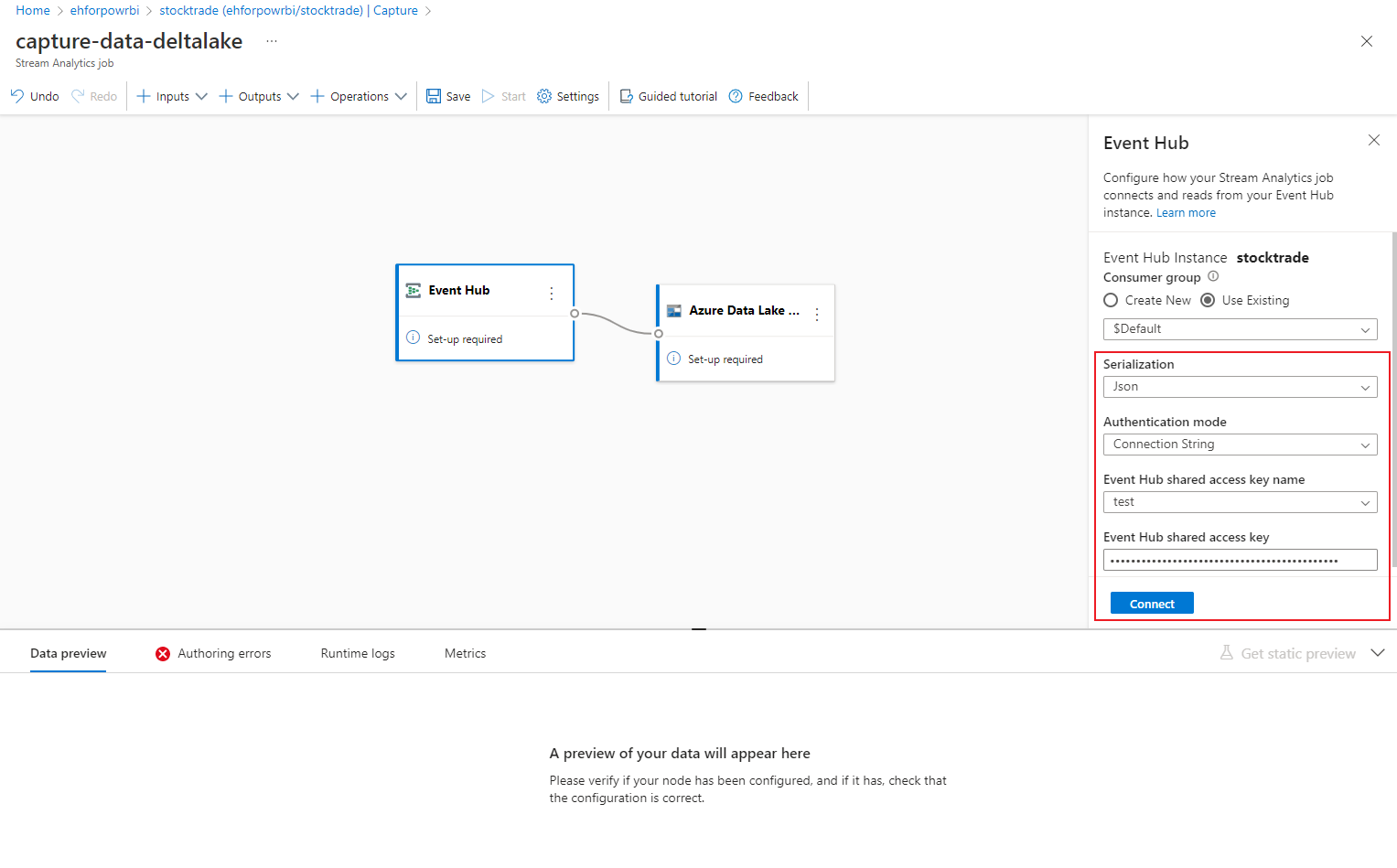

Specify the Serialization type of the data in your event hub and the Authentication method that the job uses to connect to Event Hubs. Then select Connect.

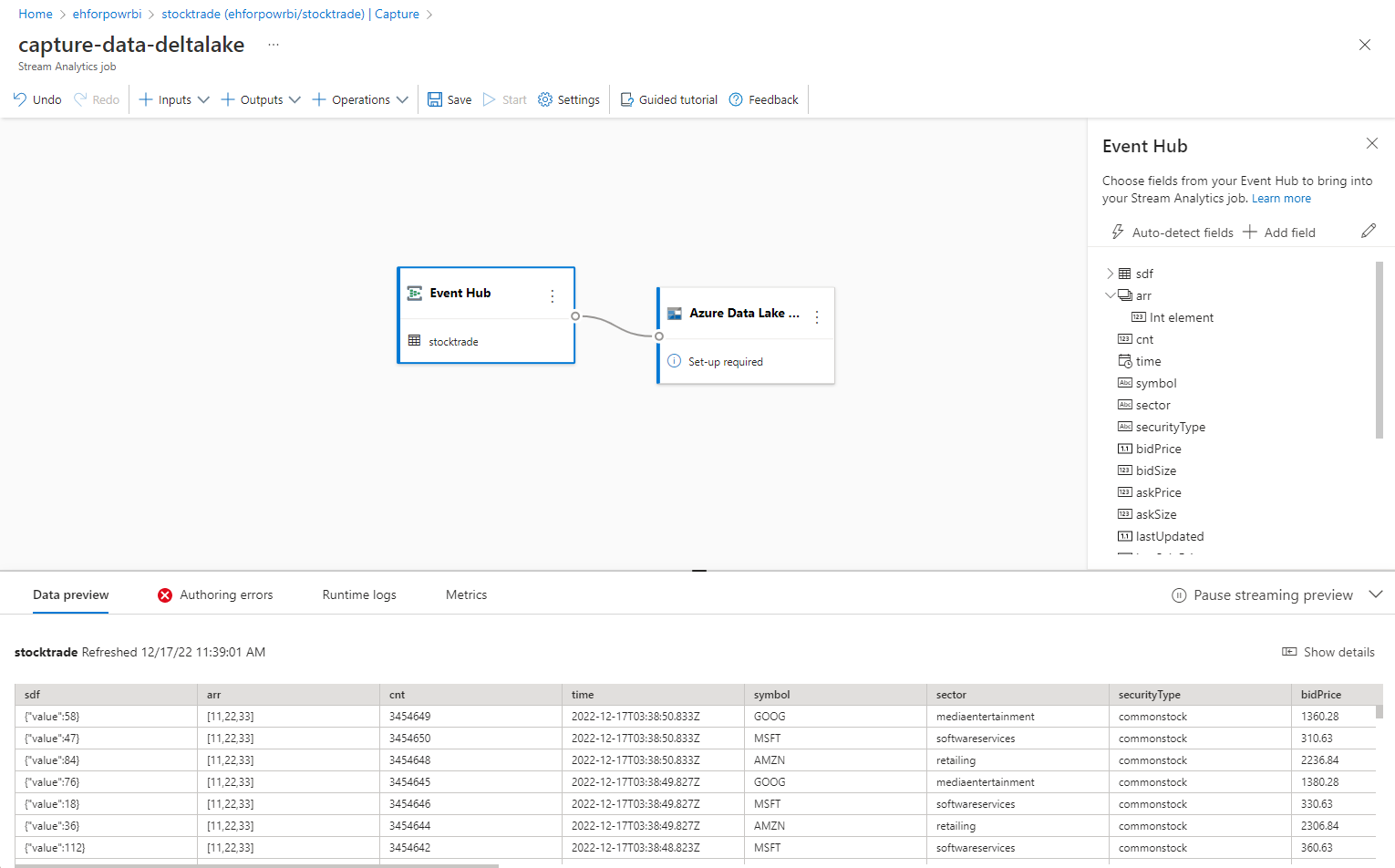

When the connection is established successfully, you see:

- Fields that are present in the input data. You can choose Add field, or you can select the three dot symbol next to a field to optionally remove or rename it.

- A live sample of incoming data in the Data preview table under the diagram view. It refreshes periodically. You can select Pause streaming preview to view a static view of the sample input.

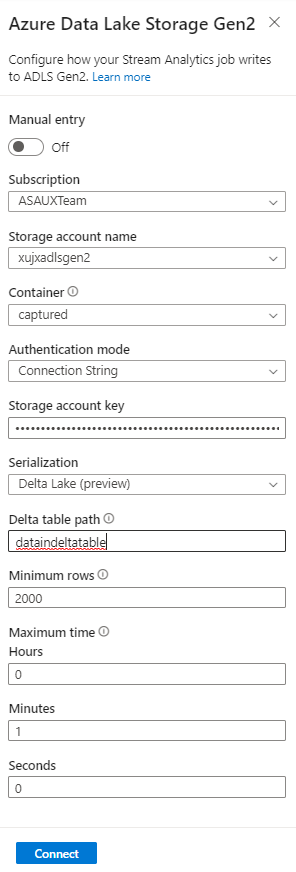

Select the Azure Data Lake Storage Gen2 tile to edit the configuration.

On the Azure Data Lake Storage Gen2 configuration page, follow these steps:

Select the subscription, storage account name, and container from the drop-down menu.

Once the subscription is selected, the authentication method and storage account key should be automatically filled in.

The Delta table path specifies the location and name of your Delta Lake table stored in Azure Data Lake Storage Gen2. You can use one or more path segments to define the path to the Delta table and the Delta table name. To learn more, see Write to Delta Lake table.

Select Connect.

When the connection is established, you see fields that are present in the output data.
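To make the Delta table path described above concrete, the following Python sketch shows how a path made of several segments maps to a table location and a table name. It is illustrative only and not part of the portal workflow. The function names, the storage account `mystorage`, the container `capture`, and the path `sales/orders` are hypothetical, and the rule that segments contain only letters, digits, spaces, hyphens, and underscores is an assumption made for this example.

```python
import re

# Assumption for this sketch: each path segment contains only letters,
# digits, spaces, hyphens, and underscores.
_SEGMENT = re.compile(r"^[A-Za-z0-9 _-]+$")


def split_delta_table_path(table_path: str) -> list[str]:
    """Split a Delta table path into segments and reject empty or invalid ones."""
    segments = table_path.strip("/").split("/")
    if any(not _SEGMENT.match(segment) for segment in segments):
        raise ValueError(f"invalid Delta table path: {table_path!r}")
    return segments


def delta_table_name(table_path: str) -> str:
    """Return the last path segment, which names the Delta table."""
    return split_delta_table_path(table_path)[-1]


def delta_table_uri(account: str, container: str, table_path: str) -> str:
    """Build the abfss:// URI of the table folder in Azure Data Lake Storage Gen2."""
    segments = split_delta_table_path(table_path)
    return f"abfss://{container}@{account}.dfs.core.windows.net/" + "/".join(segments)


if __name__ == "__main__":
    # Hypothetical values, used only for illustration.
    print(delta_table_name("sales/orders"))
    print(delta_table_uri("mystorage", "capture", "sales/orders"))
```

With these hypothetical values, the table is named `orders` and lives in the `sales/orders` folder of the `capture` container.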

Select Save on the command bar to save your configuration.

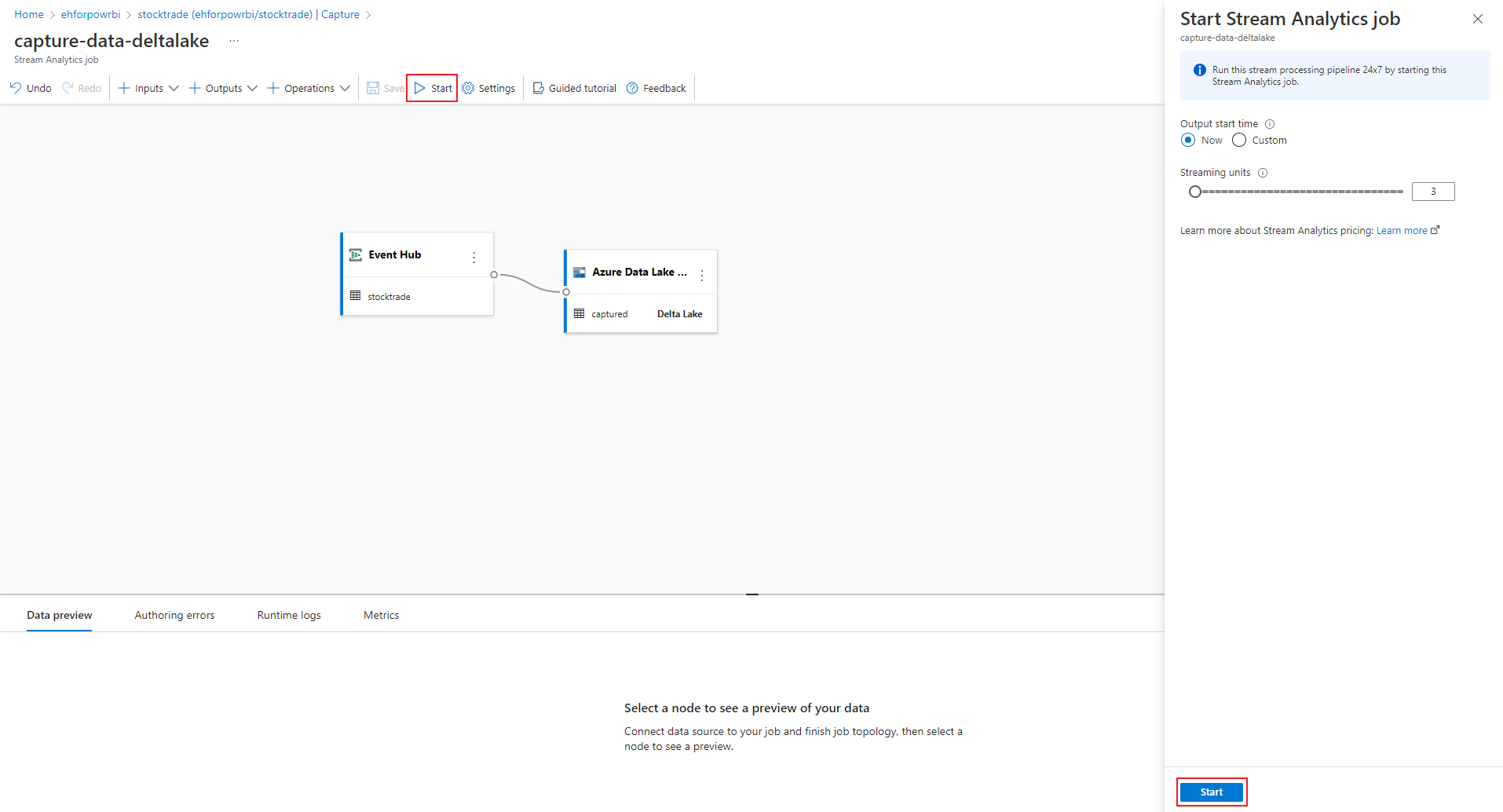

Select Start on the command bar to start the streaming flow to capture data. Then in the Start Stream Analytics job window:

- Choose the output start time.

- Select the number of Streaming Units (SUs) that the job runs with. SUs represent the computing resources that are allocated to execute a Stream Analytics job. For more information, see Streaming Units in Azure Stream Analytics.

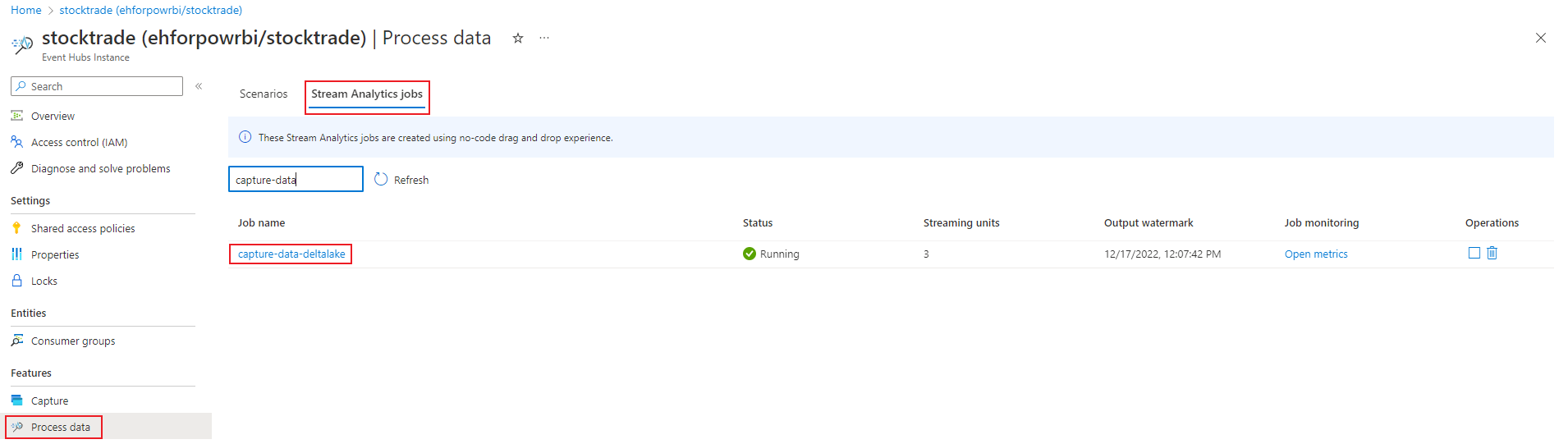

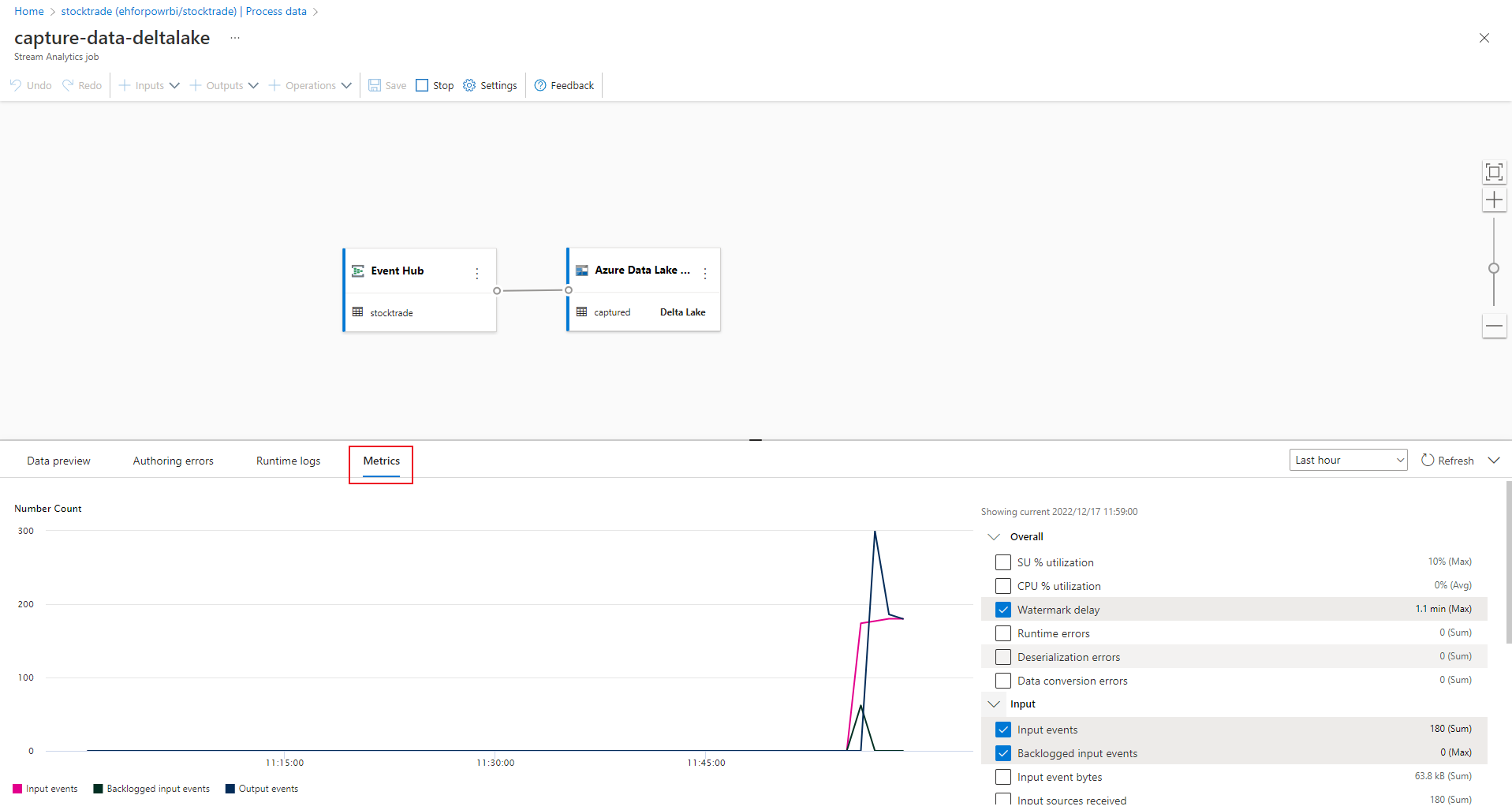

After you select Start, the job starts running within two minutes, and the metrics open in the tab section as shown in the following image.

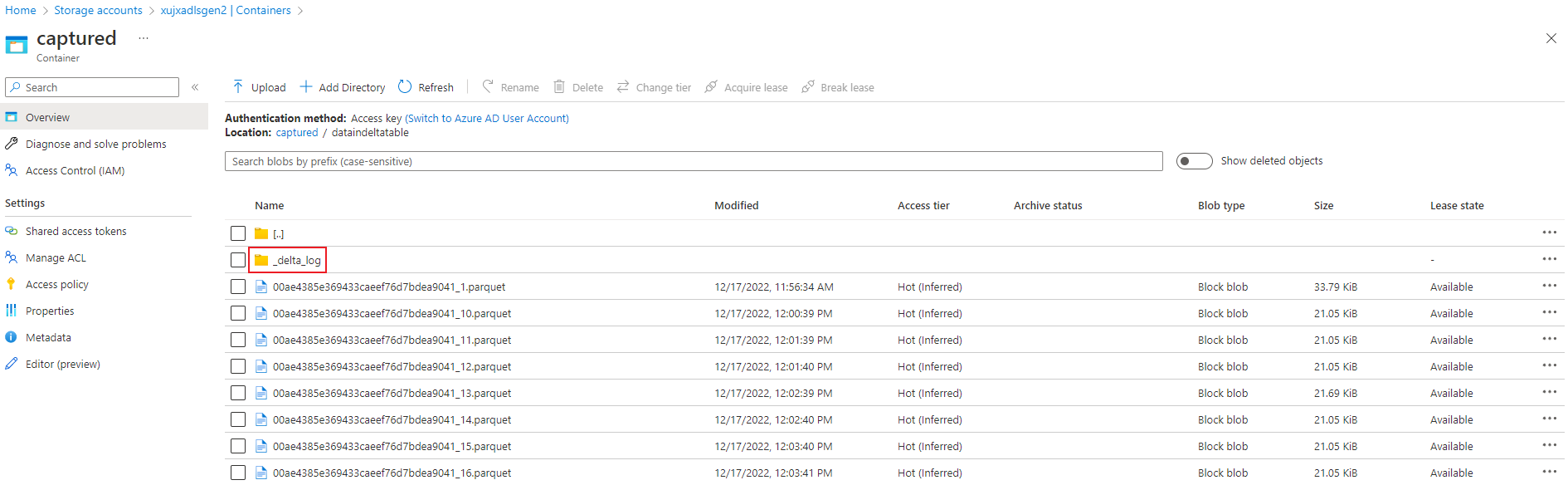

Verify output

Verify that Parquet files in Delta Lake format are generated in the Azure Data Lake Storage container.
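If you prefer to script this check, the following Python sketch inspects a list of file paths from the container and reports whether the table folder looks like a Delta table. It relies on the Delta Lake convention that a table folder holds Parquet data files plus a `_delta_log` folder whose commit files are named with a zero-padded 20-digit version number. The function name and the sample paths are hypothetical, and how you obtain the listing (a storage browser, a CLI, or an SDK) is left open.

```python
import re

# Delta Lake commit files live in _delta_log and are named with a
# zero-padded 20-digit version number, for example 00000000000000000000.json.
_COMMIT = re.compile(r"^_delta_log/\d{20}\.json$")


def summarize_delta_listing(table_path: str, file_paths: list[str]) -> dict:
    """Count commit files and Parquet data files found under table_path."""
    prefix = table_path.strip("/") + "/"
    normalized = (path.lstrip("/") for path in file_paths)
    relative = [path[len(prefix):] for path in normalized if path.startswith(prefix)]

    commits = [path for path in relative if _COMMIT.match(path)]
    # Parquet files inside _delta_log are checkpoints, not data files.
    data_files = [
        path for path in relative
        if path.endswith(".parquet") and not path.startswith("_delta_log/")
    ]
    return {
        "commits": len(commits),
        "data_files": len(data_files),
        "looks_like_delta": bool(commits) and bool(data_files),
    }


if __name__ == "__main__":
    # Hypothetical listing, used only for illustration.
    listing = [
        "sales/orders/_delta_log/00000000000000000000.json",
        "sales/orders/part-00000.snappy.parquet",
    ]
    print(summarize_delta_listing("sales/orders", listing))
```

A folder that contains Parquet files but no `_delta_log` commits is a sign that the output isn't being written in Delta Lake format, or that the job hasn't produced its first commit yet.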

Considerations when using the Event Hubs Geo-replication feature

Azure Event Hubs recently launched the Geo-replication feature in public preview. This feature is different from the Geo Disaster Recovery feature of Azure Event Hubs.

When the failover type is Forced and replication consistency is Asynchronous, a Stream Analytics job doesn't guarantee exactly-once output to an Azure Event Hubs output.

Azure Stream Analytics, as a producer with an event hub as an output, might observe watermark delay on the job during the failover and during throttling by Event Hubs if the replication lag between primary and secondary reaches the maximum configured lag.

Azure Stream Analytics, as a consumer with Event Hubs as an input, might observe watermark delay on the job during the failover and might skip data or find duplicate data after the failover is complete.

Because of these caveats, we recommend that you restart the Stream Analytics job with an appropriate start time right after the Event Hubs failover is complete. Also, because the Event Hubs Geo-replication feature is in public preview, we don't recommend using this pattern for production Stream Analytics jobs at this point. The current Stream Analytics behavior will improve before the Event Hubs Geo-replication feature is generally available and can be used in Stream Analytics production jobs.

Next steps

Now you know how to use the Stream Analytics no-code editor to create a job that captures Event Hubs data to Azure Data Lake Storage Gen2 in Delta Lake format. Next, you can learn more about Azure Stream Analytics and how to monitor the job that you created.