Testing helps ensure that code performs as expected, but building tests takes time and effort away from other tasks such as feature development. Given this cost, it's important to extract maximum value from testing. This article discusses DevOps test principles, focusing on the value of unit testing and a shift-left test strategy.

Dedicated testers used to write most tests, and many product developers didn't learn to write unit tests. Writing tests can seem too difficult or like too much work. There can be skepticism about whether a unit test strategy works, bad experiences with poorly written unit tests, or fear that unit tests will replace functional tests.

To implement a DevOps test strategy, be pragmatic and focus on building momentum. Although you can insist on unit tests for new code or existing code that can be cleanly refactored, it might make sense to allow some dependencies in a legacy codebase. If significant parts of product code use SQL, allowing unit tests to take a dependency on the SQL resource provider instead of mocking that layer could be a short-term way to make progress.

As DevOps organizations mature, it becomes easier for leadership to improve processes. While there might be some resistance to change, Agile organizations value changes that clearly pay dividends. It should be easy to sell the vision of faster test runs with fewer failures, because it means more time to invest in generating new value through feature development.

DevOps test taxonomy

Defining a test taxonomy is an important aspect of the DevOps testing process. A DevOps test taxonomy classifies individual tests by their dependencies and the time they take to run. Developers should understand the right types of tests to use in different scenarios, and which tests different parts of the process require. Most organizations categorize tests across four levels:

- L0 and L1 tests are unit tests, or tests that depend on code in the assembly under test and nothing else. L0 is a broad class of fast, in-memory unit tests.

- L2 are functional tests that might require the assembly plus other dependencies, like SQL or the file system.

- L3 functional tests run against testable service deployments. This test category requires a service deployment, but might use stubs for key service dependencies.

- L4 tests are a restricted class of integration tests that run against production. L4 tests require a full product deployment.

While it would be ideal for all tests to run at all times, it's not feasible. Teams can select where in the DevOps process to run each test, and use shift-left or shift-right strategies to move different test types earlier or later in the process.

For example, the expectation might be that developers always run through L2 tests before committing, a pull request automatically fails if the L3 test run fails, and the deployment might be blocked if L4 tests fail. The specific rules may vary from organization to organization, but enforcing the expectations for all teams within an organization moves everyone toward the same quality vision goals.
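The example rules above can be sketched as a small gating policy. This is a minimal illustration, not a prescribed implementation: the `Level` enum, the stage names, and the assumption that each stage requires every level up to its limit are all hypothetical choices made for this sketch.

```python
from enum import IntEnum
from typing import Dict, List


class Level(IntEnum):
    L0 = 0  # fast, in-memory unit tests
    L1 = 1  # unit tests that depend only on the assembly under test
    L2 = 2  # functional tests that might need SQL or the file system
    L3 = 3  # functional tests against a testable service deployment
    L4 = 4  # restricted integration tests against production


# Hypothetical policy: the highest level that must pass at each stage.
REQUIRED_THROUGH = {
    "pre_commit": Level.L2,
    "pull_request": Level.L3,
    "deployment": Level.L4,
}


def levels_to_run(stage: str) -> List[Level]:
    """Return every level up to and including the stage's limit."""
    limit = REQUIRED_THROUGH[stage]
    return [level for level in Level if level <= limit]


def stage_passes(stage: str, results: Dict[Level, bool]) -> bool:
    """A stage passes only if all required levels ran and passed."""
    return all(results.get(level, False) for level in levels_to_run(stage))
```

For instance, `stage_passes("pull_request", results)` is false if the L3 run failed or is missing from `results`, which mirrors the rule that a pull request fails when the L3 test run fails.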

Unit test guidelines

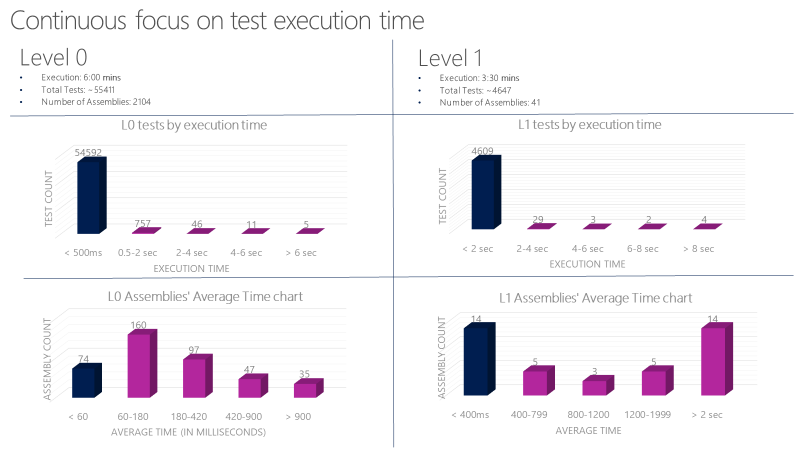

Set strict guidelines for L0 and L1 unit tests. These tests need to be very fast and reliable. For example, average execution time per L0 test in an assembly should be less than 60 milliseconds. The average execution time per L1 test in an assembly should be less than 400 milliseconds. No test at this level should exceed 2 seconds.
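As a rough sketch, the time budgets above could be enforced by a check like the following. The function name `check_budgets` and the input shape (a mapping from test name to duration in milliseconds) are assumptions made for illustration. Only the thresholds come from the guidelines.

```python
from statistics import mean

# Budgets from the guidelines above, in milliseconds.
AVERAGE_BUDGET_MS = {"L0": 60, "L1": 400}
MAX_SINGLE_TEST_MS = 2000


def check_budgets(level, durations_ms):
    """Return a list of violation messages for one assembly's tests.

    level: "L0" or "L1".
    durations_ms: dict mapping test name to duration in milliseconds.
    """
    violations = []
    if durations_ms:
        average = mean(durations_ms.values())
        # The average should be strictly less than the budget.
        if average >= AVERAGE_BUDGET_MS[level]:
            violations.append(
                f"{level} average {average:.1f} ms is not under "
                f"{AVERAGE_BUDGET_MS[level]} ms"
            )
    for name, duration in sorted(durations_ms.items()):
        # No single test should exceed 2 seconds.
        if duration > MAX_SINGLE_TEST_MS:
            violations.append(f"{name} took {duration} ms (limit {MAX_SINGLE_TEST_MS} ms)")
    return violations
```

A build step could call this once per assembly and fail, or file a bug, whenever the returned list isn't empty.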

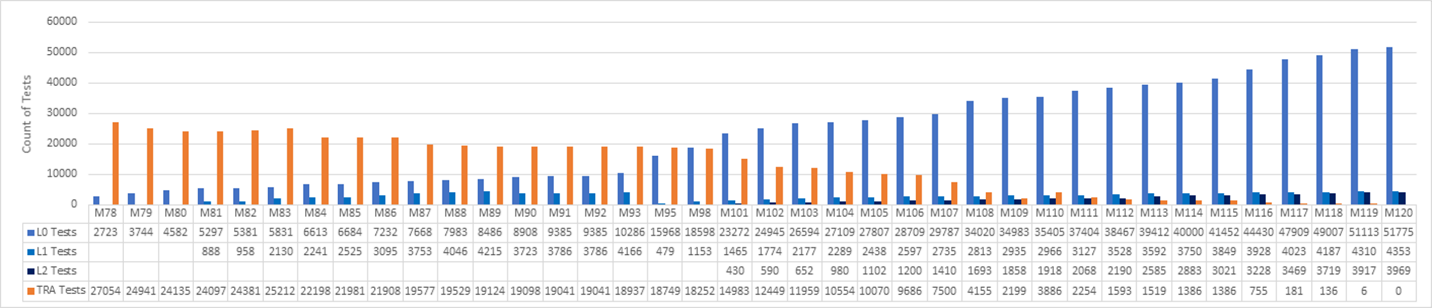

One Microsoft team runs over 60,000 unit tests in parallel in less than six minutes. Their goal is to reduce this time to less than a minute. The team tracks unit test execution time with tools like the following chart, and files bugs against tests that exceed the allowed time.

Functional test guidelines

Functional tests must be independent. The key concept for L2 tests is isolation. Properly isolated tests can run reliably in any sequence, because they have complete control over the environment they run in. The state must be known at the beginning of the test. If one test created data and left it in the database, it could corrupt the run of another test that relies on a different database state.
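One way to give each test a known starting state is to create the data store fresh for every test. The following sketch uses Python's built-in `unittest` and an in-memory SQLite database as a stand-in for a real SQL dependency. The `orders` table and the test names are hypothetical.

```python
import sqlite3
import unittest


class OrderStoreTests(unittest.TestCase):
    def setUp(self):
        # A fresh in-memory database per test: the state is known at the start.
        self.db = sqlite3.connect(":memory:")
        self.db.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, item TEXT)")

    def tearDown(self):
        # Closing the connection discards everything the test created.
        self.db.close()

    def row_count(self):
        return self.db.execute("SELECT COUNT(*) FROM orders").fetchone()[0]

    def test_insert_adds_one_row(self):
        self.db.execute("INSERT INTO orders (item) VALUES ('widget')")
        self.assertEqual(self.row_count(), 1)

    def test_table_starts_empty(self):
        # Passes regardless of whether the insert test ran first.
        self.assertEqual(self.row_count(), 0)


def run_suite():
    """Run both tests and return the unittest result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(OrderStoreTests)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Because no test depends on rows left behind by another, the tests give the same result in any order.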

Legacy tests that need a user identity might have called external authentication providers to get the identity. This practice introduces several challenges. The external dependency could be unreliable or unavailable momentarily, breaking the test. This practice also violates the test isolation principle, because a test could change the state of an identity, such as permission, resulting in an unexpected default state for other tests. Consider preventing these issues by investing in identity support within the test framework.
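Identity support in a test framework might look something like the following sketch, where each test creates its own immutable identity in process instead of calling an external provider. All names here (`FakeIdentity`, `FakeIdentityProvider`, `can_delete`) are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class FakeIdentity:
    """Immutable identity, so one test can't change state seen by another."""
    name: str
    permissions: FrozenSet[str] = frozenset()


class FakeIdentityProvider:
    """In-process stand-in for an external authentication provider."""

    def create_identity(self, name, permissions=()):
        return FakeIdentity(name, frozenset(permissions))


def can_delete(identity):
    # Hypothetical product rule, used only to show the identity in use.
    return "delete" in identity.permissions
```

A test that needs an administrator can request one with `FakeIdentityProvider().create_identity("admin", ["delete"])`, with no network call and no shared state.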

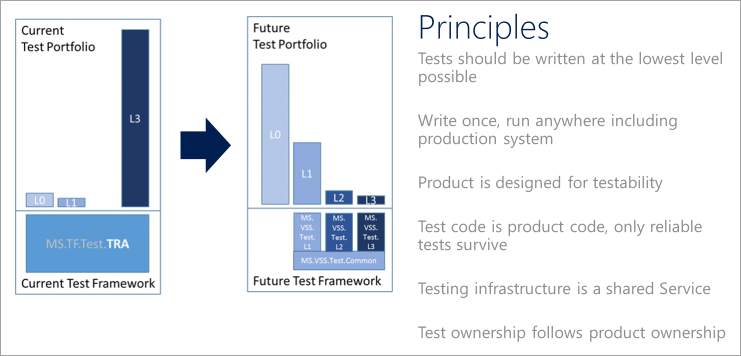

DevOps test principles

To help transition a test portfolio to modern DevOps processes, articulate a quality vision. Teams should adhere to the following test principles when defining and implementing a DevOps testing strategy.

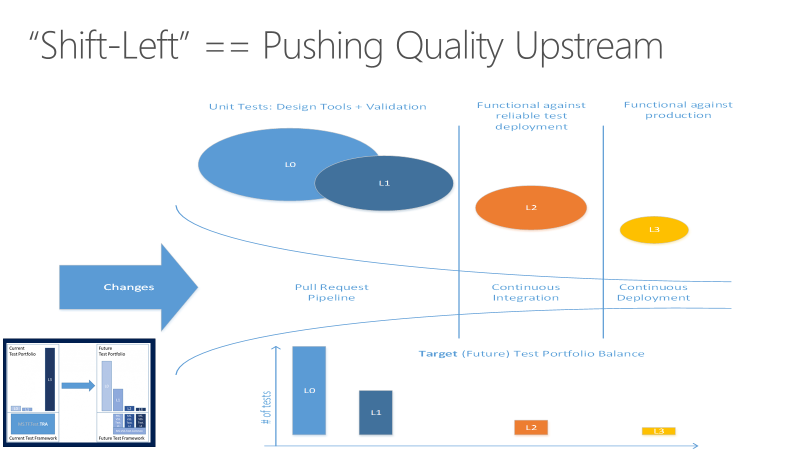

Shift left to test earlier

Tests can take a long time to run. As projects scale, test numbers and types grow substantially. When test suites grow to take hours or days to complete, they get pushed farther out until they run at the last moment. The code quality benefits of testing aren't realized until long after the code is committed.

Long-running tests might also produce failures that are time-consuming to investigate. Teams can build a tolerance for failures, especially early in sprints. This tolerance undermines the value of testing as insight into codebase quality. Long-running, last-minute tests also add unpredictability to end-of-sprint expectations, because an unknown amount of technical debt must be paid to get the code shippable.

The goal for shifting testing left is to move quality upstream by performing testing tasks earlier in the pipeline. Through a combination of test and process improvements, shifting left reduces both the time it takes for tests to run, and the impact of failures later in the cycle. Shifting left ensures that most testing is completed before a change merges into the main branch.

In addition to shifting certain testing responsibilities left to improve code quality, teams can shift other test aspects right, or later in the DevOps cycle, to improve the final product. For more information, see Shift right to test in production.

Write tests at the lowest possible level

Write more unit tests. Favor tests with the fewest external dependencies, and focus on running most tests as part of the build. Consider a parallel build system that can run unit tests for an assembly as soon as the assembly and associated tests drop. It's not feasible to test every aspect of a service at this level, but the principle is to use lighter unit tests if they can produce the same results as heavier functional tests.

Aim for test reliability

An unreliable test is organizationally expensive to maintain. Such a test works directly against the engineering efficiency goal by making it hard to make changes with confidence. Developers should be able to make changes anywhere and quickly gain confidence that nothing has been broken. Maintain a high bar for reliability. Discourage the use of UI tests, because they tend to be unreliable.

Write functional tests that can run anywhere

Tests might use specialized integration points designed specifically to enable testing. One reason for this practice is a lack of testability in the product itself. Unfortunately, tests like these often depend on internal knowledge and use implementation details that don't matter from a functional test perspective. These tests are limited to environments that have the secrets and configuration necessary to run the tests, which generally excludes production deployments. Functional tests should use only the public API of the product.

Design products for testability

Organizations in a maturing DevOps process take a complete view of what it means to deliver a quality product on a cloud cadence. Shifting the balance strongly in favor of unit testing over functional testing requires teams to make design and implementation choices that support testability. There are different ideas about what constitutes well-designed and well-implemented code for testability, just as there are different coding styles. The principle is that designing for testability must become a primary part of the discussion about design and code quality.
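One common design choice that supports testability is to inject dependencies such as the system clock, so a fast unit test can control them. The following is a minimal sketch of that idea. The `TrialPolicy` class and its 30-day default are hypothetical, not taken from any product.

```python
from datetime import datetime, timedelta, timezone


class TrialPolicy:
    """Hypothetical product class. The clock is injected so tests control time."""

    def __init__(self, trial_length=timedelta(days=30), clock=None):
        self.trial_length = trial_length
        # Use the real clock by default; tests pass a fixed one.
        self._clock = clock or (lambda: datetime.now(timezone.utc))

    def is_active(self, started_at):
        return self._clock() - started_at < self.trial_length
```

With a fixed clock, a unit test can check the trial boundary in memory, without waiting for real time to pass or deploying a service.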

Treat test code as product code

Explicitly stating that test code is product code makes it clear that the quality of test code is as important to shipping as that of product code. Teams should treat test code the same way they treat product code, and apply the same level of care to the design and implementation of tests and test frameworks. This effort is similar to managing configuration and infrastructure as code. To be complete, a code review should consider the test code and hold it to the same quality bar as the product code.

Use shared test infrastructure

Lower the bar for using test infrastructure to generate trusted quality signals. View testing as a shared service for the entire team. Store unit test code alongside product code and build it with the product. Tests that run as part of the build process must also run under development tools such as Azure DevOps. If tests can run in every environment from local development through production, they have the same reliability as the product code.

Make code owners responsible for testing

Test code should reside next to the product code in a repo. For code to be tested at a component boundary, push accountability for testing to the person writing the component code. Don't rely on others to test the component.

Case study: Shift left with unit tests

A Microsoft team decided to replace their legacy test suites with modern DevOps unit tests and a shift-left process. The team tracked progress across three-week sprints, as shown in the following graph. The graph covers sprints 78-120, which represents 42 sprints over 126 weeks, or about two and a half years of effort.

The team started at 27,000 legacy tests in sprint 78, and reached zero legacy tests at sprint 120. A set of L0 and L1 unit tests replaced most of the old functional tests. New L2 tests replaced some of the tests, and many of the old tests were deleted.

In a software journey that takes over two years to complete, there's a lot to learn from the process itself. Overall, the effort to completely redo the test system over two years was a massive investment. Not every feature team did the work at the same time. Many teams across the organization invested time in every sprint, and in some sprints it was most of what the team did. Although it's difficult to measure the cost of the shift, it was a non-negotiable requirement for the team's quality and performance goals.

Getting started

At the beginning, the team left the old functional tests, called TRA tests, alone. The team wanted developers to buy into the idea of writing unit tests, particularly for new features. The focus was on making it as easy as possible to author L0 and L1 tests. The team needed to develop that capability first, and build momentum.

The preceding graph shows unit test count starting to increase early, as the team saw the benefit of authoring unit tests. Unit tests were easier to maintain, faster to run, and had fewer failures. It was easy to gain support for running all unit tests in the pull request flow.

The team didn't focus on writing new L2 tests until sprint 101. In the meantime, the TRA test count went down from 27,000 to 14,000 between sprint 78 and sprint 101. New unit tests replaced some of the TRA tests, but many were simply deleted, based on team analysis of their usefulness.

The TRA test count jumped from 2,100 to 3,800 in sprint 110 because more tests were discovered in the source tree and added to the graph. It turned out that the tests had always been running, but weren't being tracked properly. This wasn't a crisis, but it was important to be honest and reassess as needed.

Getting faster

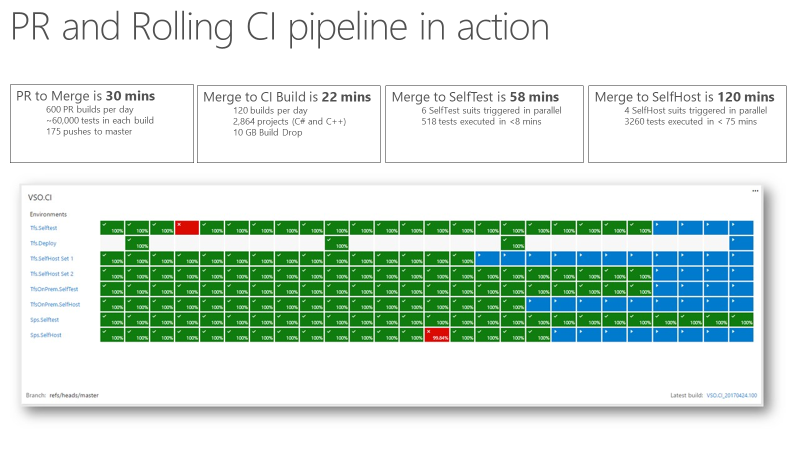

Once the team had a continuous integration (CI) signal that was extremely fast and reliable, it became a trusted indicator for product quality. The following screenshot shows the pull request and CI pipeline in action, and the time it takes to go through various phases.

It takes around 30 minutes to go from pull request to merge, which includes running 60,000 unit tests. From code merge to CI build is about 22 minutes. The first quality signal from CI, SelfTest, comes after about an hour. Then, most of the product is tested with the proposed change. Within two hours from Merge to SelfHost, the entire product is tested and the change is ready to go into production.

Using metrics

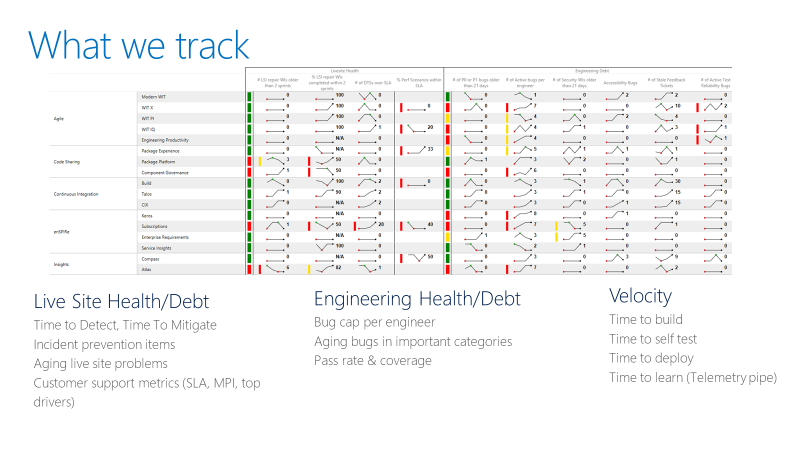

The team tracks a scorecard like the following example. At a high level, the scorecard tracks two types of metrics: health or debt, and velocity.

For live site health metrics, the team tracks the time to detect, time to mitigate, and how many repair items a team is carrying. A repair item is work the team identifies in a live site retrospective to prevent similar incidents from recurring. The scorecard also tracks whether teams are closing the repair items within a reasonable timeframe.

For engineering health metrics, the team tracks active bugs per developer. If a team has more than five bugs per developer, the team must prioritize fixing those bugs before new feature development. The team also tracks aging bugs in special categories like security.
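The bug cap described above amounts to a simple threshold check. In this sketch, the function name and inputs are assumptions. Only the limit of five bugs per developer comes from the text.

```python
BUG_CAP_PER_DEVELOPER = 5  # from the scorecard rule above


def over_bug_cap(active_bugs, developers):
    """Return True if the team has more than five active bugs per developer."""
    if developers <= 0:
        raise ValueError("developers must be positive")
    return active_bugs / developers > BUG_CAP_PER_DEVELOPER
```

Under this reading, a team of 10 developers with 50 active bugs is at the cap but not over it, while 51 bugs would trigger the requirement to fix bugs before building new features.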

Engineering velocity metrics measure speed in different parts of the continuous integration and continuous delivery (CI/CD) pipeline. The overall goal is to increase the velocity of the DevOps pipeline: starting from an idea, getting the code into production, and receiving data back from customers.