This topic describes security considerations for deploying and running jobs on Azure worker role instances that have been added to a Windows HPC cluster (an Azure “burst” scenario).

For background information about Azure platform security, see Azure security overview.

User accounts

The domain-joined user accounts that are used to submit jobs and tasks to an on-premises HPC cluster are not used to run jobs and tasks on Azure nodes. Instead, a local user account and password are created for each job that runs on an Azure node. This helps ensure separation of job processes in Azure.

Note

If a cluster user's domain credentials change or expire, jobs that the user has already queued do not fail on Azure nodes, although the same queued jobs will fail on on-premises nodes.

Firewall ports and protocols

The firewall ports and protocols that are used to deploy Azure nodes and to run jobs are summarized in Firewall ports used for communication with Azure nodes. For information about the configuration of Windows Firewall in the on-premises cluster that enables internal services to run, see Appendix 1: HPC Cluster Networking.

Note

Starting with HPC Pack 2008 R2 with SP3, port 443 is used for all Azure node deployment and job scheduling operations. This simplifies the set of ports that are required to use Azure nodes, compared with earlier versions of HPC Pack.
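Because a single outbound port is involved, a quick connectivity check from the head node can help rule out firewall problems before a deployment. The following is a minimal sketch that only tests whether a TCP connection on port 443 can be opened; it does not validate certificates or any HPC Pack service. The function name `can_reach` and the host name in the usage comment are assumptions made for illustration and are not part of HPC Pack.

```python
import socket


def can_reach(host: str, port: int = 443, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port opens within the timeout."""
    try:
        # create_connection resolves the name and attempts a TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


# Hypothetical usage; substitute the endpoint of your own cloud service:
# print(can_reach("contoso-hpc.cloudapp.net"))
```

A result of False indicates only that the TCP connection could not be opened; the cause may be a firewall rule, a proxy requirement, or a name that does not resolve.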

Certificates

X.509 v3 certificates are used to help secure the communication between on-premises cluster nodes and Azure nodes. They can be signed by another trusted certificate or they can be self-signed. To deploy Azure nodes to an Azure hosted service and to run jobs on them, both a management certificate and service certificates must be configured. The management certificate generally requires manual configuration. The service certificates are automatically configured by HPC Pack.

Management certificate

An Azure management certificate must be configured in the Azure subscription, on the head node, and on any client computer that is used to connect to the Azure cloud service. The client that connects to the Azure subscription has the private key. For procedures to configure the management certificate, see Options to Configure the Azure Management Certificate for Azure Burst Deployments.

The management certificate is used to help secure operations including the following:

Create an Azure node template that can be used to deploy Azure nodes

Provision Azure nodes

Upload files to Azure storage (for example, by using the hpcpack command)

Caution

A self-signed management certificate can be used for testing purposes or proof-of-concept deployments. However, it is not recommended for production deployments.

Service certificates

HPC Pack automatically uploads the following two public-key service certificates to the Azure cloud service when Azure nodes are provisioned. They permit mutual authentication between the on-premises head node and the Azure proxy nodes that are automatically provisioned in every deployment.

Microsoft HPC Azure Service

Microsoft HPC Azure Client

These certificates are configured by HPC Pack as follows:

The on-premises head node is configured with the Microsoft HPC Azure Client certificate (with the private key) and the Microsoft HPC Azure Service certificate

The proxy nodes in Azure are configured with the Microsoft HPC Azure Service certificate (with the private key) and the Microsoft HPC Azure Client certificate
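The certificate placement described above can be modeled as a small data structure to show why it permits mutual authentication: each side holds the private key for its own certificate and only the public certificate of the other side. The following is an illustrative sketch, not HPC Pack code; the type `InstalledCert` and the functions `can_authenticate_to` and `mutual_auth_possible` are hypothetical names introduced for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstalledCert:
    name: str
    has_private_key: bool


SERVICE = "Microsoft HPC Azure Service"
CLIENT = "Microsoft HPC Azure Client"

# Certificate stores as described in the list above.
HEAD_NODE = {
    CLIENT: InstalledCert(CLIENT, has_private_key=True),
    SERVICE: InstalledCert(SERVICE, has_private_key=False),
}
PROXY_NODE = {
    SERVICE: InstalledCert(SERVICE, has_private_key=True),
    CLIENT: InstalledCert(CLIENT, has_private_key=False),
}


def can_authenticate_to(prover: dict, verifier: dict, cert_name: str) -> bool:
    """The prover needs the private key; the verifier needs the certificate."""
    cert = prover.get(cert_name)
    return cert is not None and cert.has_private_key and cert_name in verifier


def mutual_auth_possible(head: dict, proxy: dict) -> bool:
    """Both directions must succeed for mutual authentication."""
    return (can_authenticate_to(head, proxy, CLIENT)
            and can_authenticate_to(proxy, head, SERVICE))


print(mutual_auth_possible(HEAD_NODE, PROXY_NODE))
```

In this model, the head node cannot present itself as the service because it lacks that private key, and the proxy nodes cannot present themselves as the client for the same reason.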

Storage

Operations on an Azure storage account require an account key, which is automatically configured when the account is created. HPC Pack automatically retrieves this key to perform storage operations during the provisioning of Azure nodes.
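To illustrate why the account key must be protected, the following sketch shows the general way an Azure storage account key is used in Shared Key authorization: the Base64-encoded key is decoded and used to compute an HMAC-SHA256 signature over a request-specific string. This article does not state how HPC Pack signs its storage requests, so treat this as background on the key rather than a description of HPC Pack internals. The function name `sign_with_account_key` and the sample values are assumptions for illustration, and the exact string-to-sign format is defined by the storage service and is not reproduced here.

```python
import base64
import hashlib
import hmac


def sign_with_account_key(account_key_b64: str, string_to_sign: str) -> str:
    """Return the Base64-encoded HMAC-SHA256 of string_to_sign."""
    # Storage account keys are distributed as Base64 text; decode to raw bytes.
    key = base64.b64decode(account_key_b64)
    digest = hmac.new(key, string_to_sign.encode("utf-8"), hashlib.sha256).digest()
    return base64.b64encode(digest).decode("ascii")


# Placeholder key for demonstration only; never hard-code a real account key.
demo_key = base64.b64encode(b"0" * 64).decode("ascii")
signature = sign_with_account_key(demo_key, "GET\n...placeholder string to sign...")
```

Anyone who holds the key can produce valid signatures for the account, which is why the key should be treated like a password.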

Security model for interaction of on-premises and Azure components

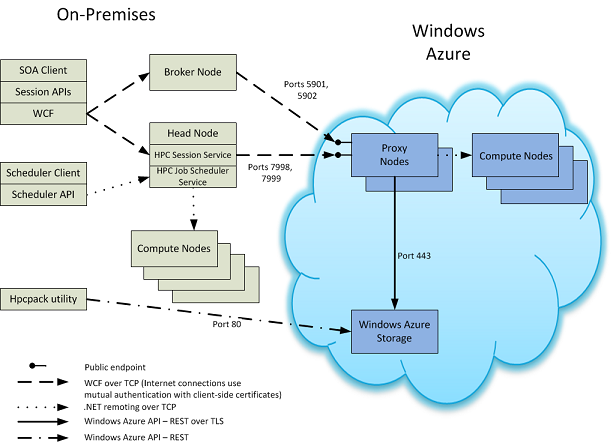

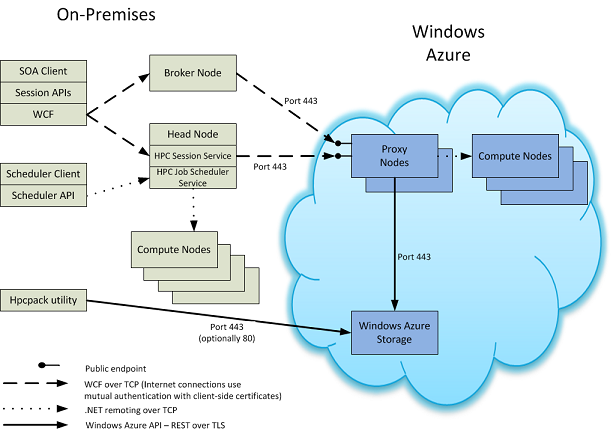

The following figures provide details on the interactions of on-premises HPC Pack components and those in Azure that are used for running cluster jobs. The figures indicate the ports, protocols, and endpoints that are used for communication in HPC Pack (starting with HPC Pack 2008 R2 with SP3), as well as in HPC Pack 2008 R2 with SP1 or SP2. The on-premises components that are present depend on the configuration of the HPC cluster.

HPC Pack 2008 R2 with at least SP3, or a later version of HPC Pack

HPC Pack 2008 R2 with SP1 or SP2