Important

This content is being retired and may not be updated in the future. The support for Machine Learning Server will end on July 1, 2022. For more information, see What's happening to Machine Learning Server?

Applies to: Machine Learning Server, Microsoft R Server 9.x

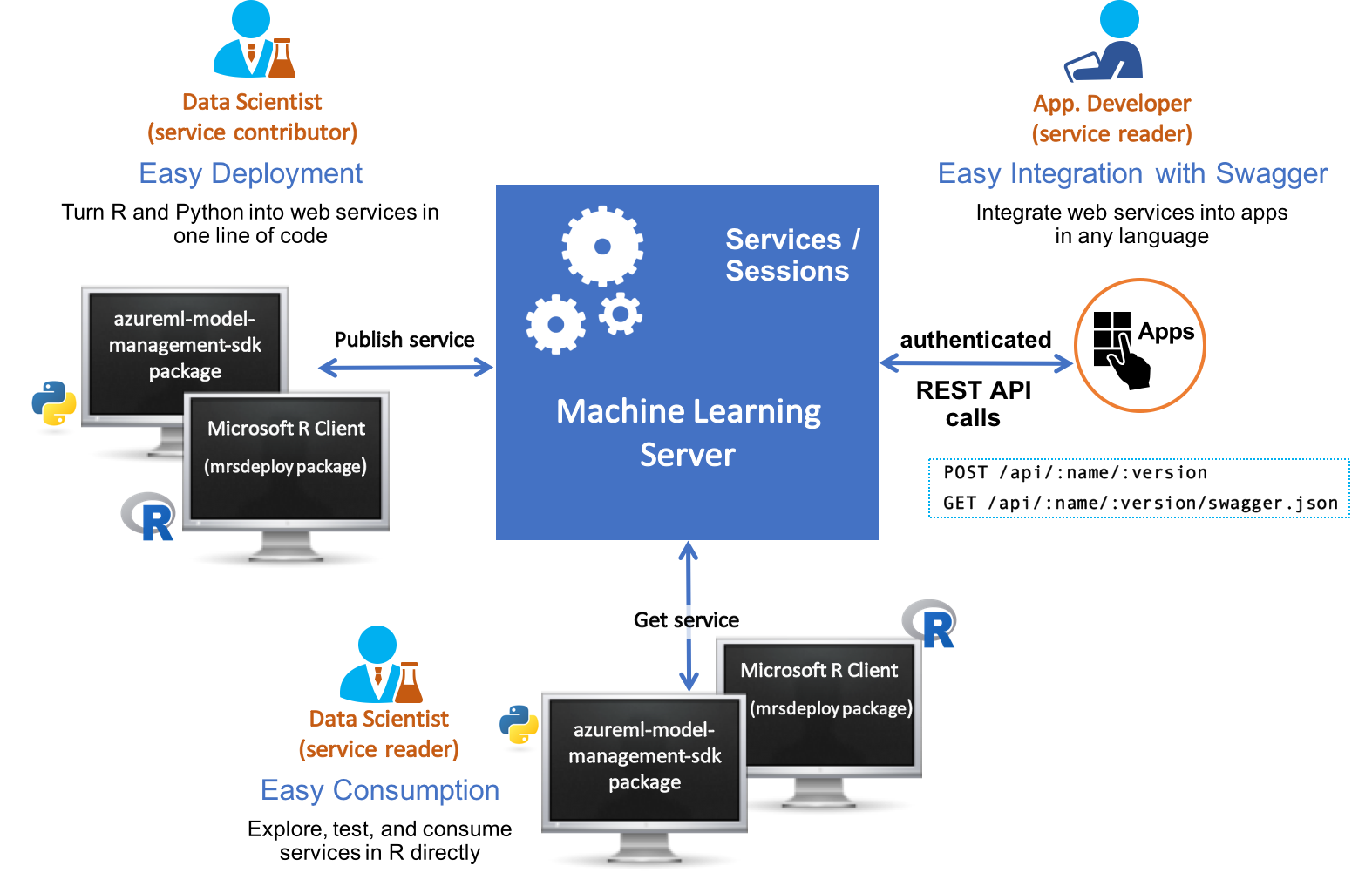

Operationalization refers to the process of deploying R and Python models and code to Machine Learning Server in the form of web services and the subsequent consumption of these services within client applications to affect business results.

Today, more businesses are adopting advanced analytics for mission-critical decision making. Typically, data scientists first build the predictive models, and only then can businesses deploy those models in a production environment and consume them for predictive actions.

Being able to operationalize your analytics is a central capability in Machine Learning Server. After installing Machine Learning Server on select platforms, you'll have everything you need to configure the server to securely host R and Python analytics web services. For details on which platforms, see Supported platforms.

Data scientists work locally with Microsoft R Client, with Machine Learning Server, or with any other program in their preferred IDE and favorite version control tools to build scripts and models using open-source algorithms and functions, our proprietary ones, or both. Using the mrsdeploy R package or the azureml-model-management-sdk Python package that ships with the products, the data scientist can develop, test, and ultimately deploy these R and Python analytics as web services in their production environment.

Once deployed, the analytic web service is available to a broader audience within the organization who can then, in turn, consume the analytics. Machine Learning Server provides the operationalizing tools to deploy R and Python analytics inside web, desktop, mobile, and dashboard applications and backend systems. Machine Learning Server turns your scripts into analytics web services, so R and Python code can be easily executed by applications running on a secure server.
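As a rough illustration of what consuming a deployed service from an application can look like, the following sketch builds the two HTTP requests a client would typically send: one to sign in and one to call the service. It assumes a `/login` endpoint that accepts a JSON username and password, a `/api/{name}/{version}` consumption endpoint, bearer-token authentication, and a host such as `http://localhost:12800`. The service name, version, and input names are hypothetical, and the requests are only constructed here, not sent.

```python
import json
import urllib.request


def build_login_request(host, username, password):
    """Build (but do not send) a sign-in request.

    Assumption: the server exposes POST /login and accepts JSON credentials.
    """
    body = json.dumps({"username": username, "password": password}).encode("utf-8")
    return urllib.request.Request(
        host.rstrip("/") + "/login",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def build_consume_request(host, token, name, version, inputs):
    """Build (but do not send) a request that calls a deployed web service.

    Assumption: services are consumed at POST /api/{name}/{version} and the
    access token is passed as a bearer token.
    """
    body = json.dumps(inputs).encode("utf-8")
    return urllib.request.Request(
        f"{host.rstrip('/')}/api/{name}/{version}",
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": "Bearer " + token,
        },
        method="POST",
    )


# Hypothetical values for illustration only.
login_req = build_login_request("http://localhost:12800", "admin", "example-password")
consume_req = build_consume_request(
    "http://localhost:12800", "example-token", "add-numbers-example", "v1.0.0",
    {"x": 2.0, "y": 3.0},
)
```

An application would pass each request to `urllib.request.urlopen` (or an equivalent HTTP client) and read the access token from the sign-in response before calling the service.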

Learn more about the new additions in the "What's New in Machine Learning Server" article.

What you'll need

You'll develop your R and Python analytics locally, deploy them to Machine Learning Server as web services, and then consume or share them.

On the local client, you'll need to install:

- Microsoft R Client if working with R code. You'll also need to configure the R IDE of your choice to run Microsoft R Client. After you have this set up, you can develop your R analytics in your local R IDE using the functions in the mrsdeploy package that was installed with Microsoft R Client (and R Server).

- Local Python interpreter if working with Python code. After you have this set up, you can develop your Python analytics in your local interpreter using the functions in the azureml-model-management-sdk Python package.

On the remote server, you'll need the connection details and access to an instance of Machine Learning Server with its operationalization feature configured. After Machine Learning Server is configured for operationalization, you'll be able to connect to it from your local machine, deploy your models and other analytics to Machine Learning Server as web services, and finally consume or share those services. Please contact your administrator for any missing connection details.
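The connect-and-deploy step described above might look roughly like the following sketch for Python users. It assumes the azureml-model-management-sdk package is installed on the client and exposes `DeployClient` and `MLServer` with the fluent `service(...).version(...).code_fn(...).inputs(...).outputs(...).deploy()` chain. The function being published, the service name, and the version string are hypothetical placeholders, and the host and credentials must come from your administrator.

```python
def add_numbers(x, y):
    """Hypothetical analytic to publish; a stand-in for a real model call."""
    return x + y


def deploy_add_service(host, username, password, name="add-numbers-example"):
    """Connect to the server and publish add_numbers as a web service.

    The SDK imports are deferred so this module still loads on machines
    where azureml-model-management-sdk is not installed.
    """
    # Assumption: these import paths match the installed SDK version.
    from azureml.deploy import DeployClient
    from azureml.deploy.server import MLServer

    # Authenticate against a server configured for operationalization.
    client = DeployClient(host, use=MLServer, auth=(username, password))

    # Describe the service and deploy it; names and types are illustrative.
    return (
        client.service(name)
        .version("v1.0.0")
        .code_fn(add_numbers)
        .inputs(x=float, y=float)
        .outputs(answer=float)
        .description("Adds two numbers")
        .deploy()
    )
```

Calling `deploy_add_service("http://localhost:12800", "admin", "...")` would only succeed against a reachable, configured server; the function is not invoked here.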

Configuration

To benefit from Machine Learning Server’s web service deployment and remote execution features, you must first configure the server after installation to act as a deployment server and host analytic web services.

Learn how to configure Machine Learning Server to operationalize analytics.

Learn more

This section provides a quick summary of useful links for data scientists operationalizing R and Python analytics with Machine Learning Server.

Key Documents

For R users:

For Python users:

- Quickstart: Deploying a Python model as a web service

- Functions in azureml-model-management-sdk package

- Connecting to Machine Learning Server in Python

- Working with web services in Python

- How to consume web services in Python synchronously (request/response)

- How to consume web services in Python asynchronously (batch)

The differences between DeployR and R Server 9.x Operationalization.

How to integrate web services and authentication into your application