Direct Manipulation

The Direct Manipulation APIs let you create great pan, zoom, and drag user experiences. To do this, Direct Manipulation processes touch input on a region or object, generates output transforms, and applies the transforms to UI elements. You can use Direct Manipulation to optimize responsiveness and reduce latency through off-thread input processing, optional off-thread input hit testing, and input/output prediction.

Any application that uses Direct Manipulation to process touch interactions displays the fluid Windows 8 animations and interaction feedback behaviors that conform to the Guidelines for common user interactions.

The Direct Manipulation API is for experienced developers who know C/C++, have a solid understanding of the Component Object Model (COM), and are familiar with Windows programming concepts.

Direct Manipulation was introduced in Windows 8. It is included in both 32-bit and 64-bit versions.

Direct Manipulation works by pre-declaring the behaviors and interactions for a region or object. For example, a web page is often configured for pan and zoom. At runtime, input is associated with this region or object through a single API call. From that point forward, Direct Manipulation processes the input, applies constraints and personality, and generates the output transforms.
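The idea of pre-declaring behaviors can be sketched with a small, portable C++ model. This is not the Direct Manipulation API: the `Behavior` flags, the `Region` class, and the `Manipulate` method are hypothetical names used only for illustration. The sketch shows a region that is configured once and then applies only the manipulations it was configured for.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical flags modelling pre-declared behaviors (not the real enumeration).
enum Behavior : std::uint32_t {
    kNone = 0,
    kPanX = 1u << 0,
    kPanY = 1u << 1,
    kZoom = 1u << 2,
};

// Accumulated output transform for a region.
struct Transform {
    float scale = 1.0f;
    float dx = 0.0f;
    float dy = 0.0f;
};

// Hypothetical region whose allowed interactions are fixed at construction.
class Region {
public:
    explicit Region(std::uint32_t behaviors) : behaviors_(behaviors) {}

    // Applies only the parts of the requested manipulation that were declared.
    void Manipulate(float dx, float dy, float scaleFactor) {
        assert(scaleFactor > 0.0f);
        if (behaviors_ & kPanX) t_.dx += dx;
        if (behaviors_ & kPanY) t_.dy += dy;
        if (behaviors_ & kZoom) t_.scale *= scaleFactor;
    }

    const Transform& transform() const { return t_; }

private:
    std::uint32_t behaviors_;
    Transform t_;
};
```

For example, a region constructed with `kPanY | kZoom` ignores the horizontal component of every manipulation, much as a web page configured for vertical pan and zoom would.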

To optimize responsiveness and minimize latency, Direct Manipulation processing occurs on a separate, independent thread from the UI thread. As a result, output transforms can run in parallel to activity on the UI thread. The UI thread activity may include application logic, rendering, layout, and anything else that consumes cycles on the processor.
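The threading arrangement can be illustrated with a minimal standard C++ sketch. It assumes nothing about how Direct Manipulation is implemented internally: `SharedTransform` and `ProcessOffThread` are hypothetical names, and the worker thread simply stands in for the independent input-processing thread while the calling thread plays the role of the UI thread.

```cpp
#include <cassert>
#include <mutex>
#include <thread>
#include <vector>

struct Offset { float x; float y; };

// Output transform shared between the worker and the calling thread.
class SharedTransform {
public:
    void Add(float dx, float dy) {
        std::lock_guard<std::mutex> lock(m_);
        o_.x += dx;
        o_.y += dy;
    }
    Offset Get() const {
        std::lock_guard<std::mutex> lock(m_);
        return o_;
    }

private:
    mutable std::mutex m_;
    Offset o_{0.0f, 0.0f};
};

// Accumulates pan deltas on a worker thread while the caller keeps working.
Offset ProcessOffThread(const std::vector<Offset>& deltas) {
    SharedTransform shared;
    std::thread worker([&shared, &deltas] {
        for (const Offset& d : deltas) shared.Add(d.x, d.y);
    });

    // Stand-in for UI-thread activity (application logic, layout, rendering).
    long uiWork = 0;
    for (int i = 0; i < 1000; ++i) uiWork += i;
    (void)uiWork;

    worker.join();  // The model waits here only so it can return a final value.
    return shared.Get();
}
```

The point of the design is that the accumulation loop never waits on the simulated UI work, which is why input can stay responsive when the UI thread is busy.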

The interfaces included with Direct Manipulation provide comprehensive support for input handling, interaction recognition, feedback notifications, and UI updates. The interfaces also incorporate system services such as DirectComposition.

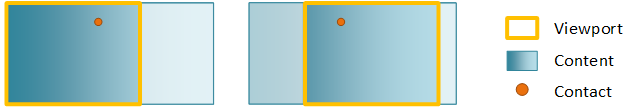

The most basic Direct Manipulation implementation consists of a viewport, content, and interactions. The viewport is a region that is able to receive and process input from user interactions. It is also the region of the content that is visible to the end-user. The content is the actual object that end-users can see and is what moves or scales in response to a user interaction. The primary user interactions (also known as manipulations) supported by Direct Manipulation are panning and zooming. These interactions apply a translate or scale transform, respectively, to the content within the viewport. Multiple viewports (each with its own content) can be configured in a single window to create a rich UI experience.
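The translate and scale transforms described above can be written out as a small portable C++ model. `ContentTransform`, `Pan`, and `ZoomAbout` are hypothetical names, not Direct Manipulation types; the sketch assumes a uniform scale and maps a content point `p` to the viewport point `scale * p + offset`.

```cpp
#include <cassert>

struct Point { float x; float y; };

// Hypothetical model of the transform applied to content inside a viewport.
struct ContentTransform {
    float scale = 1.0f;
    float offsetX = 0.0f;
    float offsetY = 0.0f;

    // Maps a point in content coordinates to viewport coordinates.
    Point Apply(Point p) const {
        return Point{scale * p.x + offsetX, scale * p.y + offsetY};
    }

    // Panning applies a translation.
    void Pan(float dx, float dy) {
        offsetX += dx;
        offsetY += dy;
    }

    // Zooming scales about a viewport-space center so that the content
    // currently under the center stays under it.
    void ZoomAbout(Point center, float factor) {
        assert(factor > 0.0f);
        offsetX = center.x - factor * (center.x - offsetX);
        offsetY = center.y - factor * (center.y - offsetY);
        scale *= factor;
    }
};
```

Adjusting the offset during a zoom keeps the content under the zoom center fixed, which is what makes a pinch feel anchored to the fingers rather than to the content origin.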

This figure shows a basic Direct Manipulation implementation before and after panning.

During initialization of Direct Manipulation, a DCompDirectManipulationCompositor object is instantiated and associated with Direct Manipulation. This object is a wrapper around DirectComposition, which is the system compositor. The object is responsible for applying the output transforms and driving visual updates.

A contact represents a touch point identified by the pointerId provided in the WM_POINTERDOWN message. When a WM_POINTERDOWN message is received, the application calls SetContact. With this call, the application notifies Direct Manipulation about the contacts that should be handled and the viewport(s) that should react to those contacts. Keyboard and mouse input have special pointerId values so they can be handled appropriately by Direct Manipulation.
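The routing of a contact to a viewport can be sketched as follows. This is a portable model, not Win32 code: `ViewportModel`, `Rect`, and `OnPointerDown` are hypothetical, and the `SetContact` and `ReleaseContact` methods here only mimic the role of the viewport methods by recording pointer IDs. The sketch assumes the application does its own hit test when a pointer-down arrives.

```cpp
#include <cassert>
#include <cstdint>
#include <set>
#include <vector>

struct Rect {
    float left, top, right, bottom;
    bool Contains(float x, float y) const {
        return x >= left && x < right && y >= top && y < bottom;
    }
};

// Hypothetical stand-in for a viewport that tracks its active contacts.
class ViewportModel {
public:
    explicit ViewportModel(Rect bounds) : bounds_(bounds) {
        assert(bounds.left < bounds.right && bounds.top < bounds.bottom);
    }
    const Rect& bounds() const { return bounds_; }
    void SetContact(std::uint32_t pointerId) { contacts_.insert(pointerId); }
    void ReleaseContact(std::uint32_t pointerId) { contacts_.erase(pointerId); }
    bool HasContact(std::uint32_t pointerId) const {
        return contacts_.count(pointerId) != 0;
    }

private:
    Rect bounds_;
    std::set<std::uint32_t> contacts_;
};

// Models pointer-down handling: hit test, then associate the contact with
// the first viewport under the pointer. Returns nullptr when nothing is hit.
ViewportModel* OnPointerDown(std::vector<ViewportModel>& viewports,
                             std::uint32_t pointerId, float x, float y) {
    for (ViewportModel& v : viewports) {
        if (v.bounds().Contains(x, y)) {
            v.SetContact(pointerId);
            return &v;
        }
    }
    return nullptr;
}
```

In this model a contact belongs to at most one viewport; an application with nested viewports might instead associate the same pointer ID with several of them.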

In the basic case above, when SetContact is called, a few things happen:

- When the user performs a pan, a WM_POINTERCAPTURECHANGED message is sent to the application to notify that the contact has been consumed by Direct Manipulation.

- When the user moves the contact, the viewport fires update events which are used by the DirectComposition wrapper to drive visual updates to the screen. To a user panning in a viewport, the content will appear to move smoothly under the contact.

- When the user lifts the contact, the user sees the content continue to move as it transitions into an inertia animation, gradually decelerating until it reaches its final resting place.
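The inertia phase in the last step can be approximated with a simple decay model. The sketch below is an assumption made for illustration only: `SimulateInertia`, its per-frame decay factor, and its stop threshold are hypothetical and do not reproduce the deceleration curve that Direct Manipulation actually uses.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Returns the content position for each frame after the contact is lifted.
// velocity is in units per frame; decayPerFrame in (0, 1) shrinks it each
// frame; motion stops once the speed falls below stopSpeed.
std::vector<float> SimulateInertia(float position, float velocity,
                                   float decayPerFrame, float stopSpeed) {
    assert(decayPerFrame > 0.0f && decayPerFrame < 1.0f);
    assert(stopSpeed > 0.0f);
    std::vector<float> frames;
    while (std::fabs(velocity) >= stopSpeed) {
        position += velocity;       // Content keeps moving after lift-off.
        velocity *= decayPerFrame;  // Each frame moves less than the last.
        frames.push_back(position);
    }
    return frames;
}
```

Because the step size shrinks geometrically, the content covers most of its remaining distance in the first few frames and then settles gradually at its resting position.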

Direct Manipulation allows keyboard and mouse messages to be forwarded manually from the application UI thread via the ProcessInput API such that they can be handled appropriately by Direct Manipulation.

Direct Manipulation is associated with a Win32 HWND in order to receive and process pointer input messages for that window. Direct Manipulation is activated on the specified HWND by calling Activate. As Direct Manipulation computes output values, it makes asynchronous callbacks to the Direct Manipulation Component Object Model (COM) objects that are implemented in the application. These callbacks inform the application about the transform that was applied to the objects.
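The callback relationship can be sketched as a plain observer pattern. The real callbacks are COM interfaces implemented by the application and are invoked asynchronously; the model below is synchronous, uses no COM, and every name in it (`ContentUpdateHandler`, `RecordingHandler`, `EngineModel`) is hypothetical.

```cpp
#include <cassert>
#include <vector>

struct AppliedTransform { float scale; float dx; float dy; };

// Hypothetical callback interface that the application implements.
class ContentUpdateHandler {
public:
    virtual ~ContentUpdateHandler() {}
    virtual void OnContentUpdated(const AppliedTransform& t) = 0;
};

// Example application-side handler that records every reported transform.
class RecordingHandler : public ContentUpdateHandler {
public:
    void OnContentUpdated(const AppliedTransform& t) override {
        seen.push_back(t);
    }
    std::vector<AppliedTransform> seen;
};

// Hypothetical engine that computes output values and reports each one.
class EngineModel {
public:
    explicit EngineModel(ContentUpdateHandler* handler) : handler_(handler) {
        assert(handler != nullptr);
    }
    void Pan(float dx, float dy) {
        current_.dx += dx;
        current_.dy += dy;
        handler_->OnContentUpdated(current_);  // Tell the app what was applied.
    }

private:
    ContentUpdateHandler* handler_;
    AppliedTransform current_{1.0f, 0.0f, 0.0f};
};
```

The handler receives the transform that has already been applied rather than a request to apply one, which matches the description above: the callbacks are notifications, not commands.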

- Viewports and content

- Using multiple viewports in DirectManipulation

- Processing input with DirectManipulation

- Composition engine

- Direct Manipulation Reference

- BUILD 2013: WCL-022: Make your desktop app great with touch, mouse, and pen

- Process touch input with Direct Manipulation sample