Important

Microsoft Purview Communication Compliance provides the tools to help organizations detect regulatory compliance violations (for example, SEC or FINRA) and business conduct violations, such as sharing sensitive or confidential information, using harassing or threatening language, and sharing adult content. Communication Compliance is built with privacy by design. Usernames are pseudonymized by default, role-based access controls are built in, investigators are opted in by an admin, and audit logs are in place to help ensure user-level privacy.

Microsoft Purview Communication Compliance is an insider risk solution in Microsoft 365 that helps minimize communication risks by helping you detect, capture, and act on inappropriate messages in your organization.

For Microsoft Teams, Communication Compliance helps identify the following types of inappropriate content in Teams channels, Private Teams channels, or in 1:1 and group chats:

- Offensive, profane, and harassing language

- Adult, racy, and gory images

- Sharing of sensitive information

You can also detect offensive communications included in Microsoft Teams transcripts (preview).

For more information on Communication Compliance and how to configure policies for your organization, see Learn about Communication Compliance.

Tip

Get started with Microsoft Security Copilot to explore new ways to work smarter and faster using the power of AI. Learn more about Microsoft Security Copilot in Microsoft Purview.

How to use Communication Compliance in Microsoft Teams

Communication Compliance and Microsoft Teams are tightly integrated and can help minimize communication risks in your organization. After you've configured your first Communication Compliance policies, you can actively manage inappropriate Microsoft Teams messages and content that is automatically flagged in alerts.

Getting started

Getting started with Communication Compliance in Microsoft Teams begins with planning and creating predefined or custom policies to identify inappropriate user activities in Teams channels or in 1:1 and group chats. Keep in mind that you need to configure some permissions and basic prerequisites as part of the configuration process.

Teams administrators can configure Communication Compliance policies at the following levels:

- User level: Policies at this level apply to an individual Teams user or can be applied to all Teams users in your organization. These policies cover messages that these users send in 1:1 or group chats. Chat communications for the users are automatically detected across all teams in which the users are members.

- Teams level: Policies at this level apply to a Microsoft Teams channel, including a Private channel. These policies cover messages sent in the Teams channel only.
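The difference between the two levels can be sketched in a few lines of Python. This is a simplified model of the scoping rules described above, not how the service evaluates policies. The class names, the `kind` field, and the convention that an empty user set means "all users" are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class Message:
    sender: str
    kind: str                      # "chat" for 1:1 or group chats, "channel" for channel posts
    channel: Optional[str] = None  # channel name, set only when kind == "channel"


@dataclass(frozen=True)
class UserLevelPolicy:
    # Hypothetical convention: an empty set means "all users in the organization".
    users: FrozenSet[str] = frozenset()

    def covers(self, msg: Message) -> bool:
        # User-level policies follow the scoped users into their 1:1 and group chats.
        user_in_scope = not self.users or msg.sender in self.users
        return msg.kind == "chat" and user_in_scope


@dataclass(frozen=True)
class TeamsLevelPolicy:
    channel: str

    def covers(self, msg: Message) -> bool:
        # Teams-level policies cover messages sent in the named channel only.
        return msg.kind == "channel" and msg.channel == self.channel


# Illustrative data; the user and channel names are made up.
chat = Message(sender="adele", kind="chat")
post = Message(sender="adele", kind="channel", channel="Finance")
```

In this model, a user-level policy scoped to `adele` covers `chat` but not `post`, while a Teams-level policy for the `Finance` channel covers `post` but not `chat`.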

Report a message in Microsoft Teams

Note

The User-reported messages policy is implemented for your organization after you create your first Communication Compliance policy. It can take up to 30 days for this feature to be available after you create your first policy.

The Report inappropriate content option for Teams personal and group chat messages is enabled by default and can be controlled through Teams messaging policies in the Teams admin center. This option lets users in your organization submit inappropriate internal chat messages for review by the Communication Compliance reviewers assigned to the policy. For more information about user-reported messages in Communication Compliance, see Communication Compliance policies.

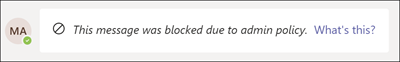

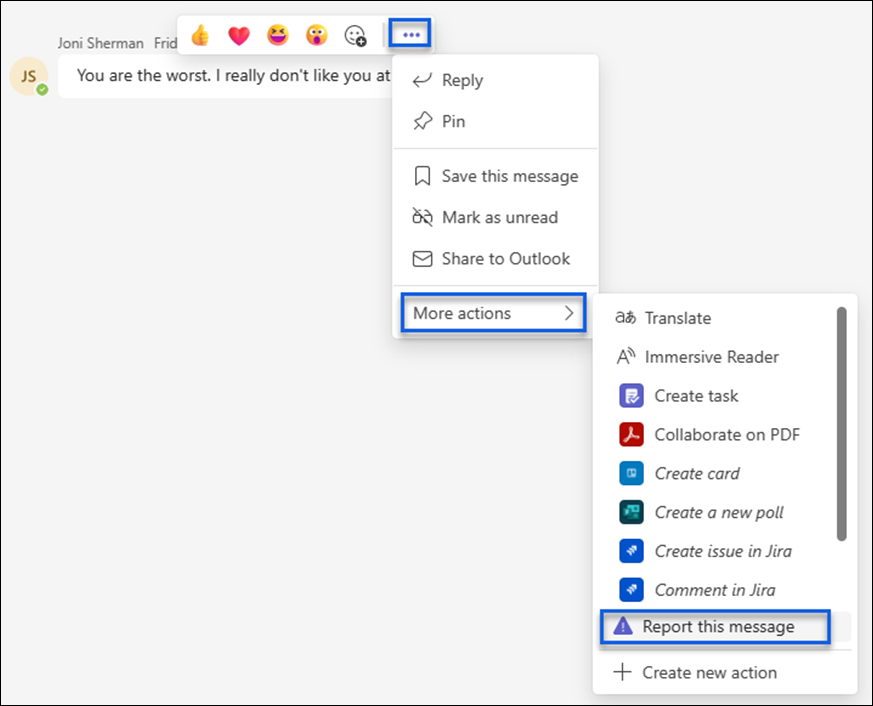

To access the feature, from a Teams chat, a user selects More options (...) > More actions > Report this message.

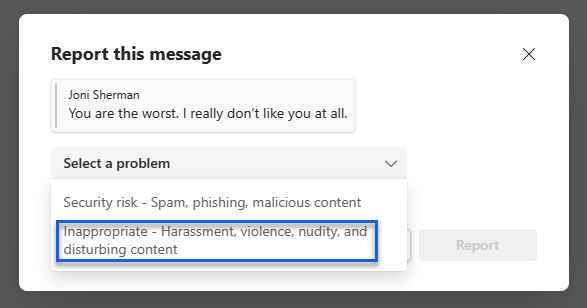

In the next dialog box, the user selects the Inappropriate - Harassment, violence, nudity, and disturbing content option from the Select a problem list.

Note

The other choice in the list (Security risk - Spam, phishing, malicious content), if available, is managed by Microsoft Defender for Office 365. The user might also be presented with just the Inappropriate - Harassment, violence, nudity, and disturbing content option, depending on which policy options are turned on in the Microsoft Teams admin center. Learn more about the Microsoft Defender for Office 365 setting.

After submitting the message for review, the user receives a confirmation of the submission in Microsoft Teams. Other participants in the chat don't see this notification.

Users in your organization automatically get the global policy unless you create and assign a custom policy. Edit the settings in the global policy or create and assign one or more custom policies to turn on or turn off this feature. For more information, see Manage messaging policies in Teams.

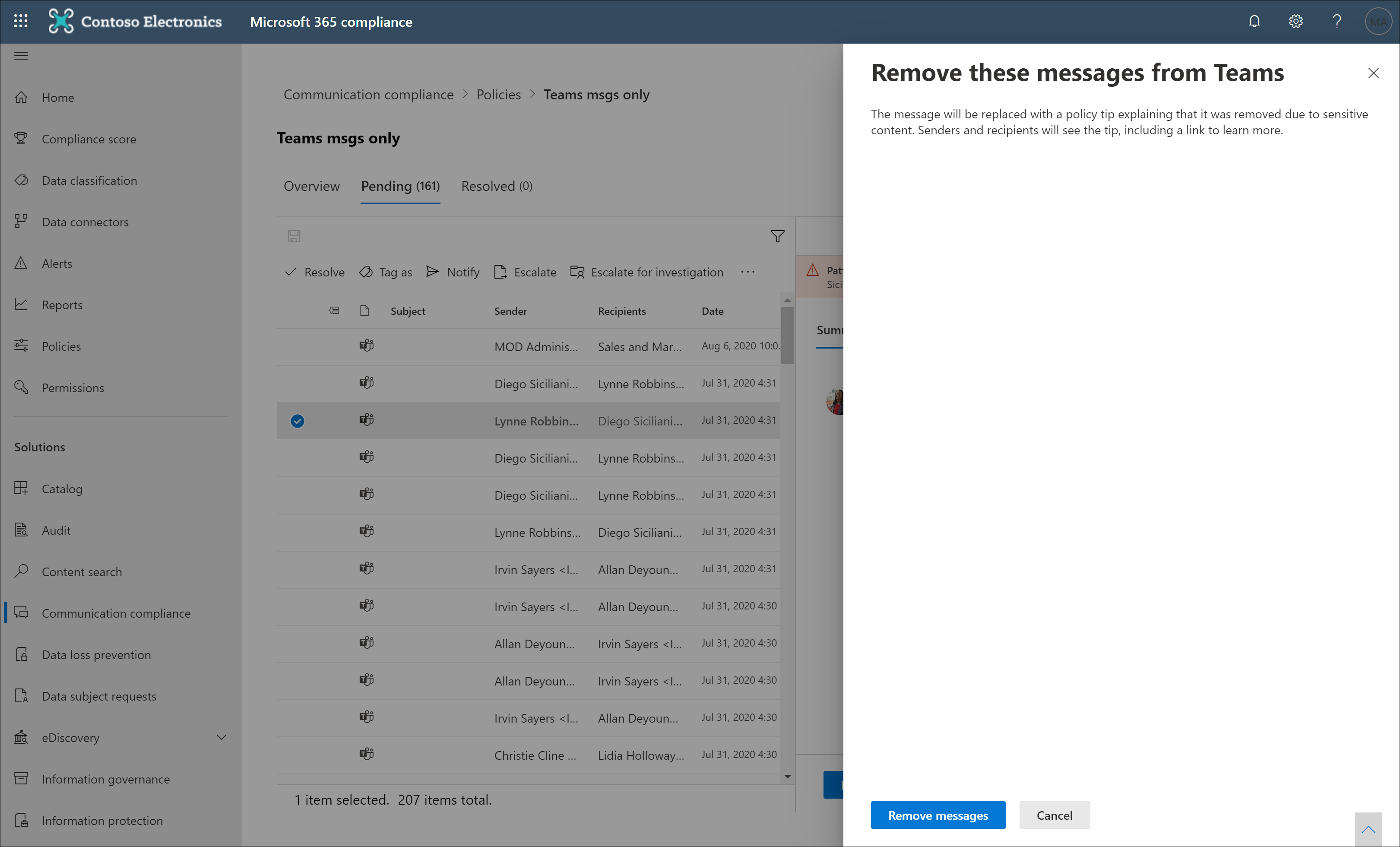
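As a rough illustration of the default described above, the following Python sketch resolves which messaging policy applies to a user: a custom policy if one is assigned, otherwise the global policy. The `MessagingPolicy` class, its `report_inappropriate_content` field, and the `effective_policy` helper are hypothetical names used only for this example. They don't correspond to a Teams API.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class MessagingPolicy:
    name: str
    report_inappropriate_content: bool  # hypothetical field name for the reporting toggle


# The reporting option is on by default, so the global policy is modeled as enabled.
GLOBAL_POLICY = MessagingPolicy(name="Global", report_inappropriate_content=True)


def effective_policy(user: str, assignments: Dict[str, MessagingPolicy]) -> MessagingPolicy:
    """Return the user's assigned custom policy, or the global policy if none is assigned."""
    return assignments.get(user, GLOBAL_POLICY)


# Illustrative data: one user has a custom policy that turns reporting off.
no_reporting = MessagingPolicy(name="NoReporting", report_inappropriate_content=False)
assignments = {"megan": no_reporting}
```

Here `effective_policy("adele", assignments)` falls back to the global policy, so reporting stays available for that user, while `effective_policy("megan", assignments)` returns the custom policy with reporting turned off.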

Act on inappropriate messages in Microsoft Teams

After you've configured your policies and received Communication Compliance alerts for Microsoft Teams messages, compliance reviewers in your organization can act on these messages. These alerts also include user-reported messages if that feature is enabled for your organization. Reviewers can help safeguard your organization by reviewing Communication Compliance alerts and removing flagged messages from view in Microsoft Teams.

Important

If a user reports a message that was sent before they were added to a chat, Teams message remediation isn't supported; the Teams message can't be removed.
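The limitation above amounts to a timestamp comparison. The following Python sketch expresses it under stated assumptions: the function name and both parameters are hypothetical, and treating a message sent at exactly the moment the reporter joined as removable is a choice made for this example, not documented behavior.

```python
from datetime import datetime, timezone


def can_remove_reported_message(sent_at: datetime, reporter_joined_at: datetime) -> bool:
    """Model the rule: removal isn't supported when the message predates the reporter joining."""
    return sent_at >= reporter_joined_at


# Illustrative timestamps (UTC).
joined = datetime(2024, 5, 10, 9, 0, tzinfo=timezone.utc)
sent_before_join = datetime(2024, 5, 9, 17, 30, tzinfo=timezone.utc)
sent_after_join = datetime(2024, 5, 10, 9, 15, tzinfo=timezone.utc)
```

With these values, `can_remove_reported_message(sent_before_join, joined)` is `False` and `can_remove_reported_message(sent_after_join, joined)` is `True`.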

Removed messages and content are replaced with notifications for viewers explaining that the message or content was removed and which policy applies to the removal. The sender of the removed message or content is also notified of the removal status and provided with the original message content for context relating to its removal. The sender can also view the specific policy condition that applies to the message removal.

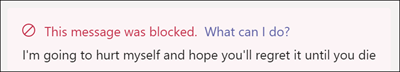

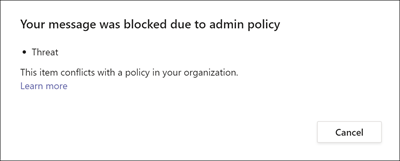

Example of policy tip seen by sender:

Example of policy notification seen by the sender:

Example of policy tip seen by recipient: