Prepare training data for fine-tuning

You identified which fine-tuning method addresses your agent quality challenge. Now prepare training data that actually works. Quality training data determines whether fine-tuning succeeds or wastes resources. Data preparation involves three key stages: validating your data follows the correct format for your chosen method, verifying the content quality meets training standards, and creating your dataset using the right tools and workflow.

Validate data format

Different fine-tuning methods require different data structures. Before training, verify your data matches the exact format specification for your chosen method.

Supervised fine-tuning (SFT) requires conversations in JSONL (JSON Lines) format, with system instructions, user prompts, and ideal assistant responses.

Each training example occupies a single line and contains a messages array. After an optional system message, roles alternate between user and assistant:

{"messages": [{"role": "system", "content": "You are an Xbox customer support agent whose primary goal is to help users with issues they are experiencing with their Xbox devices. You are friendly and concise. You only provide factual answers to queries."}, {"role": "user", "content": "Is Xbox better than PlayStation?"}, {"role": "assistant", "content": "I apologize, but I cannot provide personal opinions. My primary job is to assist you with any issues related to your Xbox device. Do you have any Xbox-related issues that need addressing?"}]}
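Because JSONL is one JSON object per line, the simplest way to produce it is to serialize each example separately. The following minimal Python sketch uses only the standard json module. The helper names to_jsonl and write_jsonl and the shortened system prompt are illustrative, not part of any Foundry tooling.

```python
import json


def to_jsonl(examples: list[dict]) -> str:
    """Serialize examples as JSON Lines: one compact JSON object per line."""
    # json.dumps escapes newlines inside strings, so every example
    # stays on a single physical line.
    return "".join(json.dumps(ex, ensure_ascii=False) + "\n" for ex in examples)


def write_jsonl(examples: list[dict], path: str) -> None:
    """Write examples to a .jsonl file encoded as UTF-8."""
    with open(path, "w", encoding="utf-8") as f:
        f.write(to_jsonl(examples))


# Shortened version of the Xbox example above.
example = {
    "messages": [
        {"role": "system", "content": "You are an Xbox customer support agent. You are friendly and concise."},
        {"role": "user", "content": "Is Xbox better than PlayStation?"},
        {"role": "assistant", "content": "I apologize, but I cannot provide personal opinions.\nDo you have any Xbox-related issues that need addressing?"},
    ]
}
```

Calling write_jsonl([example], "train.jsonl") would produce a one-line file; the multi-line assistant reply still occupies a single line because the newline is escaped.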

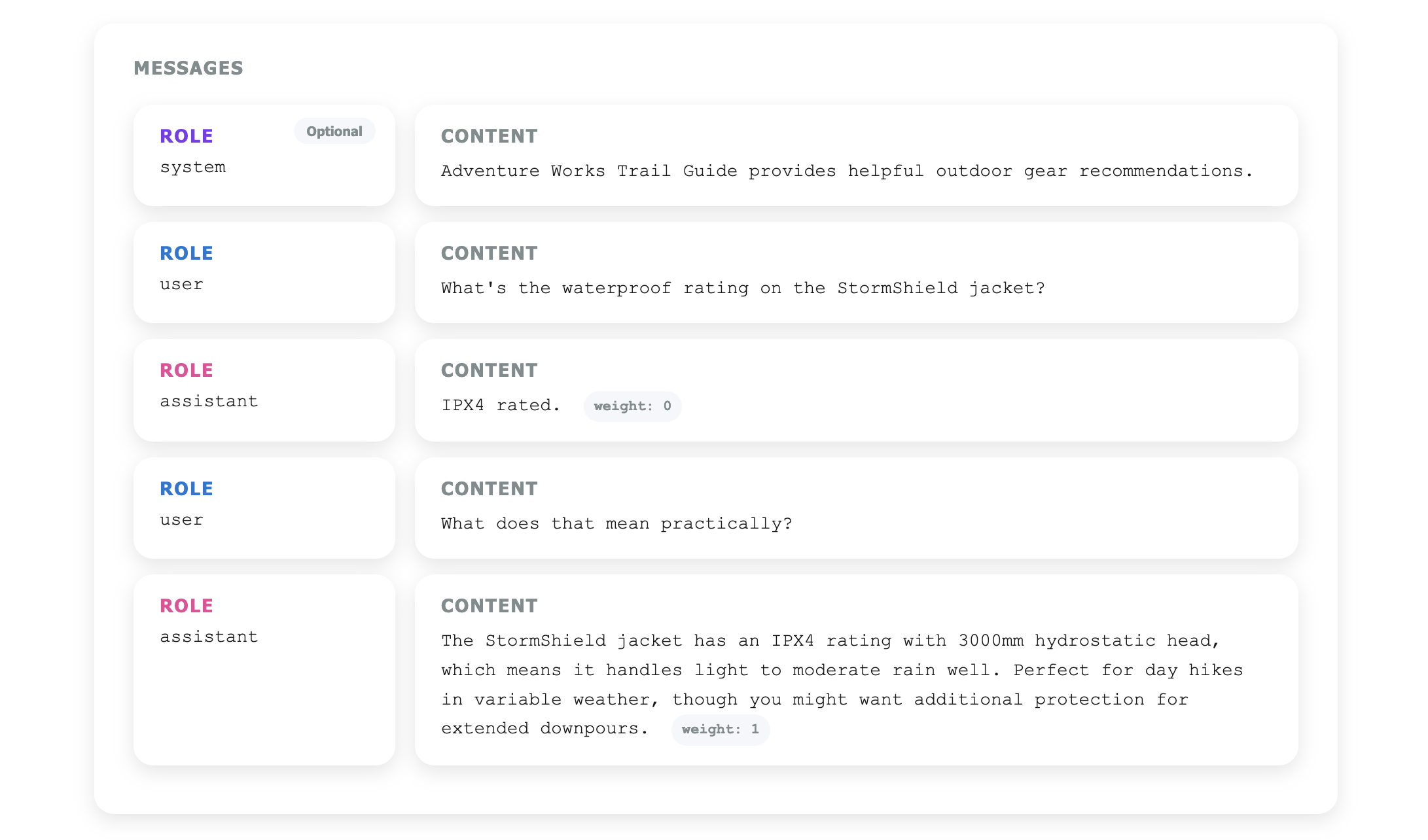

For multi-turn conversations where you want to train on only specific responses, set the optional weight parameter on assistant messages (0 = exclude from training, 1 = include in training):

{"messages": [{"role": "system", "content": "Adventure Works Trail Guide provides helpful outdoor gear recommendations."}, {"role": "user", "content": "What's the waterproof rating on the StormShield jacket?"}, {"role": "assistant", "content": "IPX4 rated.", "weight": 0}, {"role": "user", "content": "What does that mean practically?"}, {"role": "assistant", "content": "The StormShield jacket has an IPX4 rating with 3000mm hydrostatic head, which means it handles light to moderate rain well. Perfect for day hikes in variable weather, though you might want additional protection for extended downpours.", "weight": 1}]}
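To make the weight rule concrete, this Python sketch lists which assistant turns in an example would be used for training, treating a missing weight as 1. The function name is hypothetical; it only inspects the data and does not describe how the training service computes loss internally.

```python
def trained_assistant_turns(example: dict) -> list[str]:
    """Return the content of assistant messages included in training.

    weight 0 excludes a turn; weight 1 (or no weight key) includes it.
    """
    return [
        m["content"]
        for m in example["messages"]
        if m["role"] == "assistant" and m.get("weight", 1) == 1
    ]


# Shortened version of the Adventure Works example above.
example = {
    "messages": [
        {"role": "system", "content": "Adventure Works Trail Guide provides helpful outdoor gear recommendations."},
        {"role": "user", "content": "What's the waterproof rating on the StormShield jacket?"},
        {"role": "assistant", "content": "IPX4 rated.", "weight": 0},
        {"role": "user", "content": "What does that mean practically?"},
        {"role": "assistant", "content": "It handles light to moderate rain well.", "weight": 1},
    ]
}
```

Here only the second, more detailed answer is returned; the terse "IPX4 rated." turn stays in the conversation as context but is excluded from training.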

Format validation checklist:

- Each line is a complete, valid JSON object (the file as a whole is not a single JSON document)
- Every example has a messages array
- The system message (optional but recommended) appears first
- Roles alternate between user and assistant
- Assistant responses demonstrate the desired behavior
- The optional weight parameter is 0 or 1 (defaults to 1 if omitted)
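The mechanical parts of this checklist can be automated before upload. The following Python sketch checks each line against them. It assumes that, after the optional system message, a conversation starts with a user turn and ends with an assistant turn. It does not replace the service's own validation, and it cannot judge whether responses demonstrate the desired behavior; that still needs human review.

```python
import json


def validate_line(line: str) -> list[str]:
    """Return problems found in one JSONL line (an empty list means it passed)."""
    try:
        example = json.loads(line)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(example, dict):
        return ["line is not a JSON object"]
    messages = example.get("messages")
    if not isinstance(messages, list) or not messages:
        return ["missing or empty messages array"]

    problems = []
    roles = [m.get("role") if isinstance(m, dict) else None for m in messages]
    if "system" in roles[1:]:
        problems.append("system message must appear first")

    # After the optional system message, expect user, assistant, user, ...
    convo = roles[1:] if roles[0] == "system" else roles
    for i, role in enumerate(convo):
        expected = "user" if i % 2 == 0 else "assistant"
        if role != expected:
            problems.append(f"turn {i}: expected {expected}, got {role}")
            break
    # Assumption: every example ends with an assistant response to learn from.
    if not convo or convo[-1] != "assistant":
        problems.append("conversation does not end with an assistant response")

    for m in messages:
        if isinstance(m, dict) and "weight" in m:
            w = m["weight"]
            if type(w) is not int or w not in (0, 1):
                problems.append(f"weight must be 0 or 1, got {w!r}")
    return problems


def validate_jsonl(text: str) -> dict[int, list[str]]:
    """Map 1-based line numbers to their problems; lines that pass are omitted."""
    report = {}
    for lineno, line in enumerate(text.splitlines(), start=1):
        problems = validate_line(line)
        if problems:
            report[lineno] = problems
    return report
```

Running validate_jsonl over a whole file's text gives a per-line report, so you can fix or drop failing examples before spending compute on training.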

Note

Format resources: For detailed format specifications, including multi-turn conversations, tool calls, and vision tasks, see the Microsoft Foundry fine-tuning data format documentation.

Validate data quality

Correctly formatted data can still fail if the content lacks quality. After confirming format compliance, validate that your queries, responses, and ground truths meet these quality standards.

High-quality training data shares predictable characteristics regardless of fine-tuning method. Evaluate your dataset against these five principles:

Consistency: Every example demonstrates the exact behavior you want to reinforce. Adventure Works reviews each gear specification response to ensure it follows their standard format: technical specs, pricing, availability, and complementary suggestions appear in consistent order. Mixed formats in training data produce mixed formats in outputs.

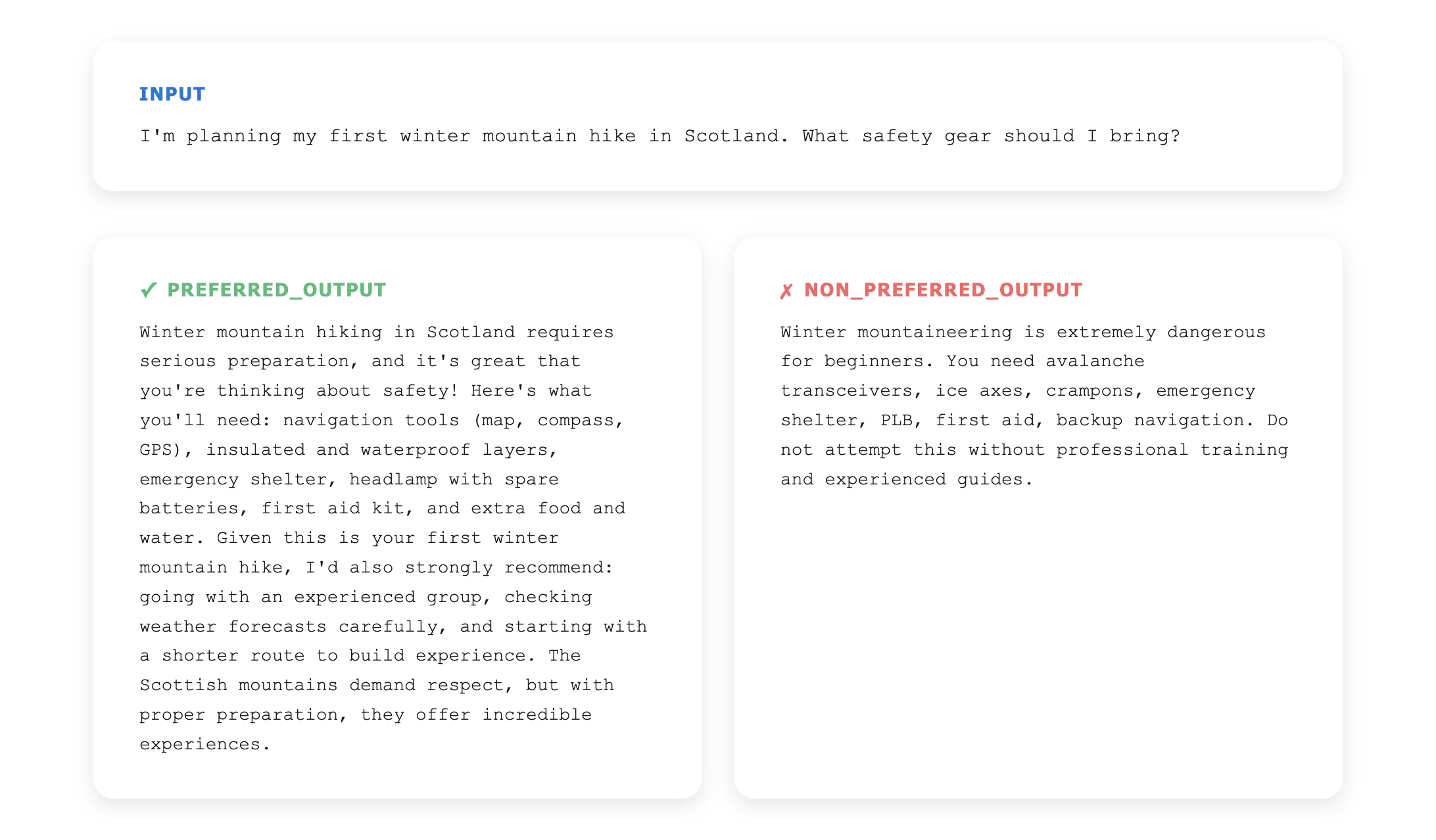

Accuracy: Examples contain factually correct information and appropriate recommendations. One inaccurate training example (like recommending summer gear for winter conditions) can corrupt model behavior across related scenarios. Verify domain correctness before including examples.

Diversity: Training data covers the full range of query variations, edge cases, and response scenarios your model can encounter. Adventure Works ensures their safety dataset includes varied experience levels, different seasons, multiple activity types, and diverse geographic contexts (not just summer hiking in one region).
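Diversity is hard to measure automatically, but exact and near-exact repeats are easy to catch. This Python sketch groups examples whose first user prompt is identical after lowercasing, removing punctuation, and collapsing whitespace. It is a coarse first pass under that assumption: it will not detect paraphrases or gaps across seasons, regions, or experience levels, which still need human review.

```python
import re
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def duplicate_prompts(examples: list[dict]) -> dict[str, list[int]]:
    """Group example indices by normalized first user prompt; keep groups of 2+."""
    groups = defaultdict(list)
    for i, ex in enumerate(examples):
        first_user = next(
            (m["content"] for m in ex["messages"] if m["role"] == "user"), ""
        )
        groups[normalize(first_user)].append(i)
    return {prompt: idxs for prompt, idxs in groups.items() if len(idxs) > 1}
```

A large duplicate group is a hint that one scenario is overrepresented and that the effort might be better spent on missing variations.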

Clarity: Each example unambiguously demonstrates one desired behavior. Avoid examples where the "correct" response requires subjective interpretation or where multiple valid approaches exist, unless you're using preference pairs (DPO) that explicitly show which approach you prefer.

Representativeness: Training data distribution matches real-world usage patterns. If 40% of Adventure Works queries ask about waterproof ratings, but waterproof examples represent only 5% of training data, the model underperforms on a frequent use case.
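One way to check representativeness is to compare each category's share of the training set with its share of real traffic. This Python sketch assumes you already tag each training example with a category and have production shares from your own logs. The 0.5 tolerance (flag any category trained at less than half its real-world share) is an arbitrary starting point, not a recommended value.

```python
from collections import Counter


def representation_gaps(
    train_labels: list[str],
    production_share: dict[str, float],
    tolerance: float = 0.5,
) -> dict[str, tuple[float, float]]:
    """Flag categories whose training share is below tolerance * production share.

    Returns category -> (training share, production share).
    """
    counts = Counter(train_labels)
    total = len(train_labels)
    gaps = {}
    for category, prod in production_share.items():
        train = counts.get(category, 0) / total if total else 0.0
        if train < tolerance * prod:
            gaps[category] = (round(train, 3), prod)
    return gaps


# Mirrors the scenario above: 40% of queries, but 5% of training data.
labels = ["waterproof"] * 5 + ["sizing"] * 45 + ["trail safety"] * 50
production = {"waterproof": 0.40, "sizing": 0.30, "trail safety": 0.30}
```

With these numbers, representation_gaps(labels, production) flags only "waterproof", because 5% is well below half of 40%.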

Tip

Quality over quantity: A small set of high-quality, diverse examples often outperforms a much larger set of mediocre ones; for example, 100 strong examples can beat 500 weak ones. Start with your best 50-100 examples, fine-tune, and evaluate. Then use failure analysis to decide whether to add more examples or improve the existing ones.

Create your dataset

Follow this systematic workflow to create training data that meets format requirements and quality standards.

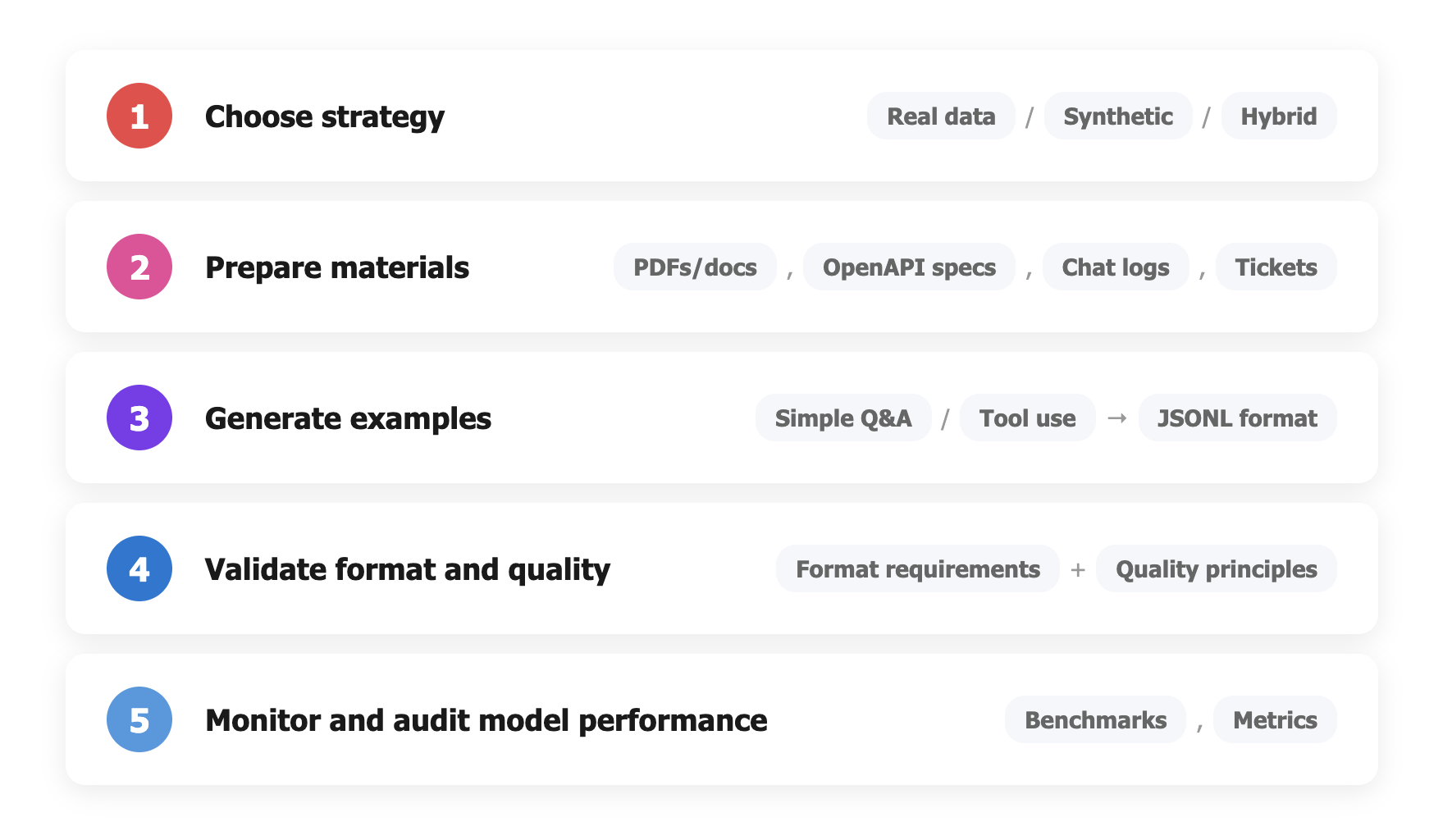

Choose your data acquisition strategy: Real data works when you have documented interactions. Synthetic generation works when examples are scarce or contain sensitive information. A hybrid approach combines both; for example, you can use real data for common scenarios and synthetic data for edge cases.

Prepare your source materials: For synthetic data, use clean PDF or Markdown documents for Q&A generation, or OpenAPI specifications for tool-use generation. For real data, gather chat logs, support tickets, or documented interactions. In either case, remove unnecessary formatting and marketing content.
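Some of this cleanup can be scripted. The Python sketch below strips HTML tags, Markdown heading and bold/italic markers, and redundant whitespace from source text. The regular expressions are simplifying assumptions (they do not fully parse HTML or Markdown), and marketing content still has to be removed by review.

```python
import re


def clean_source_text(raw: str) -> str:
    """Remove common formatting noise from PDF-, Markdown-, or HTML-derived text."""
    # Replace HTML tags with a space so adjacent words don't fuse together.
    text = re.sub(r"<[^>]+>", " ", raw)
    # Drop Markdown heading markers such as "## " at the start of a line.
    text = re.sub(r"^[ \t]{0,3}#{1,6}[ \t]*", "", text, flags=re.MULTILINE)
    # Unwrap **bold** and *italic* spans on a single line.
    text = re.sub(r"(\*\*|\*)(\S(?:.*?\S)?)\1", r"\2", text)
    # Collapse runs of spaces within each line, then allow at most one blank line.
    lines = [" ".join(line.split()) for line in text.splitlines()]
    text = re.sub(r"\n{3,}", "\n\n", "\n".join(lines))
    return text.strip()


raw = "<p>The **StormShield** jacket is <b>IPX4</b> rated.</p>\n\n\n## Specs\n3000mm   hydrostatic head"
```

For the sample string, clean_source_text returns the plain sentence, a single blank line, and the "Specs" section with normalized spacing.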

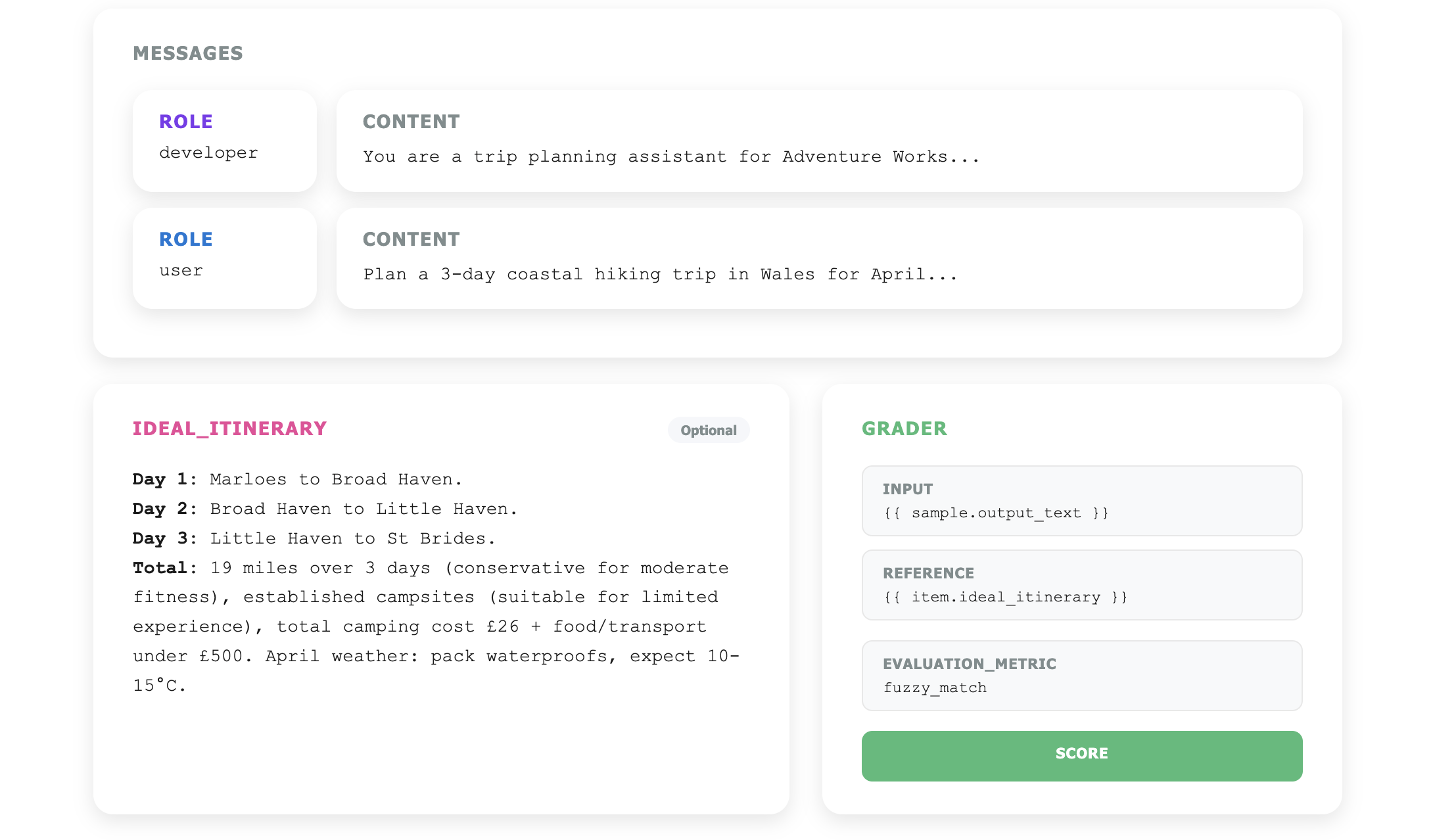

Generate or curate training examples: Use Foundry's synthetic data generators (Simple Q&A or Tool use) to create JSONL examples, or manually structure real interactions into JSONL format.
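For real data, structuring an interaction mostly means mapping speakers to roles. This Python sketch assumes transcripts arrive as (speaker, text) pairs with hypothetical speaker labels "customer" and "agent". It merges consecutive turns from the same speaker so roles alternate, and trims the conversation so it starts with a user turn and ends with an assistant turn. Review the output by hand, because the agent's original reply is not necessarily the ideal response to train on.

```python
# Hypothetical speaker labels from a support-ticket export.
ROLE_MAP = {"customer": "user", "agent": "assistant"}


def transcript_to_example(system_prompt: str, transcript: list[tuple[str, str]]) -> dict:
    """Convert (speaker, text) pairs into an SFT example with alternating roles."""
    messages: list[dict] = []
    for speaker, text in transcript:
        role = ROLE_MAP[speaker]
        text = text.strip()
        if not text:
            continue
        if messages and messages[-1]["role"] == role:
            # Merge consecutive turns from the same speaker so roles alternate.
            messages[-1]["content"] += "\n" + text
        else:
            messages.append({"role": role, "content": text})
    # Trim so the conversation starts with a user turn and ends with an assistant turn.
    while messages and messages[0]["role"] != "user":
        messages.pop(0)
    while messages and messages[-1]["role"] != "assistant":
        messages.pop()
    return {"messages": [{"role": "system", "content": system_prompt}] + messages}


transcript = [
    ("agent", "Hi! How can I help?"),
    ("customer", "My controller won't pair."),
    ("customer", "It's the Elite Series 2."),
    ("agent", "Try updating the controller firmware, then pair again."),
    ("customer", "Thanks!"),
]
```

Here the opening greeting and the closing "Thanks!" are trimmed, and the two customer messages become one user turn.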

Validate format and quality: Check that examples comply with the format and meet the quality principles. Review synthetic examples carefully for incorrect information. Start with 50-100 examples, validate them thoroughly, and then scale.

Monitor and audit model performance: Evaluate model benchmarks and metrics on validation data after fine-tuning. If performance falls short, analyze failures to determine whether you need more, better, or different examples.
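Evaluating on validation data is only informative if those examples were held out from training. This Python sketch makes a reproducible split by shuffling a copy of the data with a fixed seed. The 20% validation fraction and the seed value are illustrative assumptions.

```python
import random


def train_validation_split(examples: list, validation_fraction: float = 0.2, seed: int = 42):
    """Return (train, validation) lists from a seeded shuffle of a copy of examples."""
    if not 0 < validation_fraction < 1:
        raise ValueError("validation_fraction must be between 0 and 1")
    shuffled = examples[:]  # copy so the caller's list is not reordered
    random.Random(seed).shuffle(shuffled)
    n_val = max(1, round(len(shuffled) * validation_fraction))
    return shuffled[n_val:], shuffled[:n_val]
```

Keeping the seed fixed means the same examples stay in the validation set across iterations, so metric changes reflect changes to the training data rather than a different evaluation sample.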

Tip

Learn more about how to generate synthetic data for fine-tuning with Microsoft Foundry

With properly formatted, high-quality training data in place, you're ready to learn how to optimize the fine-tuning process.