Introduction

Language models are powerful tools for building generative AI applications, but a base model on its own might not meet all of your requirements. The quality, accuracy, and consistency of the responses a model generates depend on how you configure and augment it.

Imagine you're a developer working for a travel agency. You're building a chat application to help customers with their travel-related questions. The base model gives decent responses, but your team has specific needs: the responses should follow the company's tone of voice, include accurate information about your hotel catalog, and maintain a consistent format across interactions. How do you get the model to perform at this level?

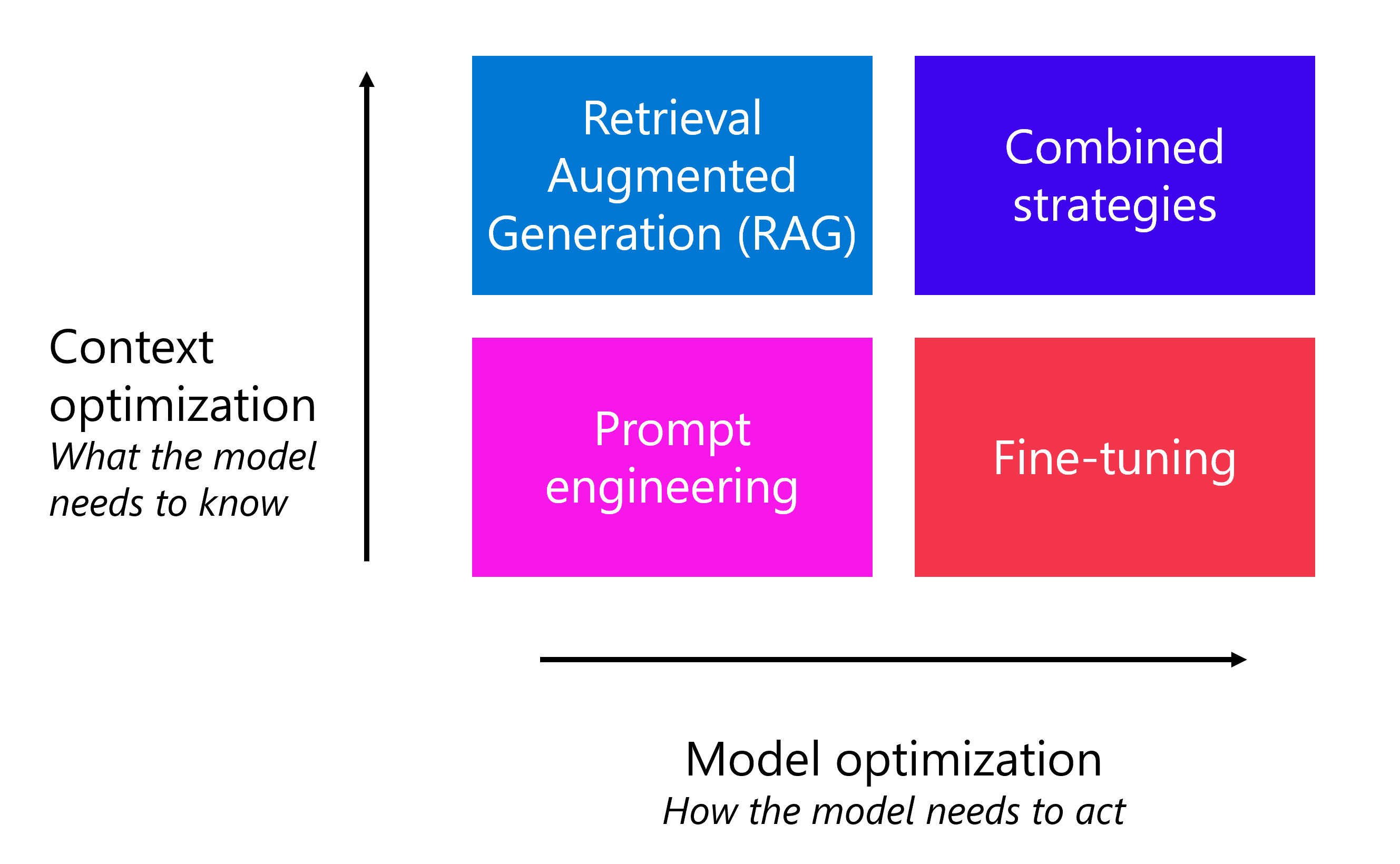

There are several complementary strategies you can use to optimize a generative AI model's performance, including prompt engineering, grounding the model in your own data, and fine-tuning. These strategies range from quick, low-cost adjustments to more involved techniques that require additional time and resources.

Throughout this module, you explore each of these strategies and learn when and how to apply them individually or in combination.

In this module, you learn how to:

- Apply prompt engineering techniques, including system messages, few-shot learning, and model parameters, to optimize model output.

- Understand when and how to ground a language model using Retrieval-Augmented Generation (RAG).

- Identify when fine-tuning a model improves behavioral consistency.

- Compare optimization strategies and determine when to combine them.

Prerequisites

- Familiarity with fundamental AI concepts and services in Azure.

- A basic understanding of generative AI models and how they generate responses.