Use speech recognition to provide input, specify an action or command, and accomplish tasks.

Important APIs: Windows.Media.SpeechRecognition

Speech recognition is made up of a speech runtime, recognition APIs for programming the runtime, ready-to-use grammars for dictation and web search, and a default system UI that helps users discover and use speech recognition features.

Configure speech recognition

To support speech recognition with your app, the user must connect and enable a microphone on their device, and accept the Microsoft Privacy Policy granting permission for your app to use it.

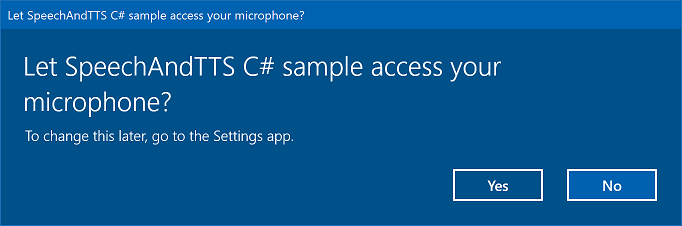

To automatically prompt the user with a system dialog requesting permission to access and use the microphone's audio feed (example from the Speech recognition and speech synthesis sample shown below), just set the Microphone device capability in the App package manifest. For more detail, see App capability declarations.

If the user clicks Yes to grant access to the microphone, your app is added to the list of approved applications on the Settings -> Privacy -> Microphone page. However, because the user can turn this setting off at any time, confirm that your app has access to the microphone before attempting to use it.

If you also want to support dictation, Cortana, or other speech recognition services (such as a predefined grammar defined in a topic constraint), you must also confirm that Online speech recognition (Settings -> Privacy -> Speech) is enabled.

This snippet shows how your app can check whether a microphone is present and whether the app has permission to use it. The same check is shown in C#, C++/CX, and JavaScript (WinJS), in that order.

using System;

using System.Threading.Tasks;

using Windows.Media.Capture;

public class AudioCapturePermissions

{

// If no microphone is present, an exception is thrown with the following HResult value.

private static int NoCaptureDevicesHResult = -1072845856;

/// <summary>

/// Note that this method only checks the Settings->Privacy->Microphone setting, it does not handle

/// the Cortana/Dictation privacy check.

///

/// You should perform this check every time the app gets focus, in case the user has changed

/// the setting while the app was suspended or not in focus.

/// </summary>

/// <returns>True, if the microphone is available.</returns>

public async static Task<bool> RequestMicrophonePermission()

{

try

{

// Request access to the audio capture device.

MediaCaptureInitializationSettings settings = new MediaCaptureInitializationSettings();

settings.StreamingCaptureMode = StreamingCaptureMode.Audio;

settings.MediaCategory = MediaCategory.Speech;

MediaCapture capture = new MediaCapture();

await capture.InitializeAsync(settings);

}

catch (TypeLoadException)

{

// Thrown when a media player is not available.

var messageDialog = new Windows.UI.Popups.MessageDialog("Media player components are unavailable.");

await messageDialog.ShowAsync();

return false;

}

catch (UnauthorizedAccessException)

{

// Thrown when permission to use the audio capture device is denied.

// If this occurs, show an error or disable recognition functionality.

return false;

}

catch (Exception exception)

{

// Thrown when an audio capture device is not present.

if (exception.HResult == NoCaptureDevicesHResult)

{

var messageDialog = new Windows.UI.Popups.MessageDialog("No Audio Capture devices are present on this system.");

await messageDialog.ShowAsync();

return false;

}

else

{

throw;

}

}

return true;

}

}
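
As a usage sketch, the C# check above might gate a speech button each time a page is shown or the app regains focus. The `MicrophoneGate` class and the `speechButton` parameter are hypothetical names introduced for illustration; only `AudioCapturePermissions.RequestMicrophonePermission` comes from the snippet above.

```csharp
using System.Threading.Tasks;
using Windows.UI.Xaml.Controls;

// Hypothetical helper: enables or disables a speech button based on microphone access.
public static class MicrophoneGate
{
    public static async Task<bool> UpdateSpeechButtonAsync(Button speechButton)
    {
        // Re-run the permission check, because the user can change the
        // Settings -> Privacy -> Microphone setting at any time.
        bool hasAccess = await AudioCapturePermissions.RequestMicrophonePermission();

        // Keep recognition features unavailable while access is missing.
        speechButton.IsEnabled = hasAccess;
        return hasAccess;
    }
}
```

Calling a helper like this from a page's navigation or activation handler is one way to follow the advice to repeat the check whenever the app gets focus.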

/// <summary>

/// Note that this method only checks the Settings->Privacy->Microphone setting, it does not handle

/// the Cortana/Dictation privacy check.

///

/// You should perform this check every time the app gets focus, in case the user has changed

/// the setting while the app was suspended or not in focus.

/// </summary>

/// <returns>True, if the microphone is available.</returns>

IAsyncOperation<bool>^ AudioCapturePermissions::RequestMicrophonePermissionAsync()

{

return create_async([]()

{

try

{

// Request access to the audio capture device.

MediaCaptureInitializationSettings^ settings = ref new MediaCaptureInitializationSettings();

settings->StreamingCaptureMode = StreamingCaptureMode::Audio;

settings->MediaCategory = MediaCategory::Speech;

MediaCapture^ capture = ref new MediaCapture();

return create_task(capture->InitializeAsync(settings))

.then([](task<void> previousTask) -> bool

{

try

{

previousTask.get();

}

catch (AccessDeniedException^)

{

// Thrown when permission to use the audio capture device is denied.

// If this occurs, show an error or disable recognition functionality.

return false;

}

catch (Exception^ exception)

{

// Thrown when an audio capture device is not present.

if (exception->HResult == AudioCapturePermissions::NoCaptureDevicesHResult)

{

auto messageDialog = ref new Windows::UI::Popups::MessageDialog("No Audio Capture devices are present on this system.");

create_task(messageDialog->ShowAsync());

return false;

}

throw;

}

return true;

});

}

catch (Platform::ClassNotRegisteredException^ ex)

{

// Thrown when a media player is not available.

auto messageDialog = ref new Windows::UI::Popups::MessageDialog("Media Player Components unavailable.");

create_task(messageDialog->ShowAsync());

return create_task([] {return false; });

}

});

}

var AudioCapturePermissions = WinJS.Class.define(

function () { }, {},

{

requestMicrophonePermission: function () {

/// <summary>

/// Note that this method only checks the Settings->Privacy->Microphone setting, it does not handle

/// the Cortana/Dictation privacy check.

///

/// You should perform this check every time the app gets focus, in case the user has changed

/// the setting while the app was suspended or not in focus.

/// </summary>

/// <returns>True, if the microphone is available.</returns>

return new WinJS.Promise(function (completed, error) {

try {

// Request access to the audio capture device.

var captureSettings = new Windows.Media.Capture.MediaCaptureInitializationSettings();

captureSettings.streamingCaptureMode = Windows.Media.Capture.StreamingCaptureMode.audio;

captureSettings.mediaCategory = Windows.Media.Capture.MediaCategory.speech;

var capture = new Windows.Media.Capture.MediaCapture();

capture.initializeAsync(captureSettings).then(function () {

completed(true);

},

function (err) {

// Audio Capture can fail to initialize if there are no audio devices on the system, or if

// the user has disabled permission to access the microphone in the Privacy settings.

if (err.number == -2147024891) { // Access denied (microphone disabled in settings)

completed(false);

} else if (err.number == -1072845856) { // No recording device present.

var messageDialog = new Windows.UI.Popups.MessageDialog("No Audio Capture devices are present on this system.");

messageDialog.showAsync();

completed(false);

} else {

// Pass unexpected failures to the promise's error callback (not shadowed by the parameter name).

error(err);

}

});

} catch (exception) {

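if (exception.number == -2147221164) { // REGDB_E_CLASSNOTREG

var messageDialog = new Windows.UI.Popups.MessageDialog("Media Player components not available on this system.");

messageDialog.showAsync();

// Complete the promise; a value returned from the promise initializer is ignored.

completed(false);

} else {

error(exception);

}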

}

});

}

})

Recognize speech input

A constraint defines the words and phrases (vocabulary) that an app recognizes in speech input. Constraints are at the core of speech recognition and give your app greater control over the accuracy of speech recognition.

You can use the following types of constraints for recognizing speech input.

Predefined grammars

Predefined dictation and web-search grammars provide speech recognition for your app without requiring you to author a grammar. When using these grammars, speech recognition is performed by a remote web service and the results are returned to the device.

The default free-text dictation grammar can recognize most words and phrases that a user can say in a particular language, and is optimized to recognize short phrases. The predefined dictation grammar is used if you don't specify any constraints for your SpeechRecognizer object. Free-text dictation is useful when you don't want to limit the kinds of things a user can say. Typical uses include creating notes or dictating the content for a message.

The web-search grammar, like a dictation grammar, contains a large number of words and phrases that a user might say. However, it is optimized to recognize terms that people typically use when searching the web.

Note

Because predefined dictation and web-search grammars can be large, and because they are online (not on the device), performance might not be as fast as with a custom grammar installed on the device.

These predefined grammars can be used to recognize up to 10 seconds of speech input and require no authoring effort on your part. However, they do require a connection to a network.

To use web-service constraints, speech input and dictation support must be enabled in Settings by turning on the "Get to know me" option in Settings -> Privacy -> Speech, inking, and typing.

Here, we show how to test whether speech input is enabled and open the Settings -> Privacy -> Speech, inking, and typing page, if not.

First, we declare a static field (HResultPrivacyStatementDeclined) set to the HResult value 0x80045509. See Exception handling in C# or Visual Basic.

private static uint HResultPrivacyStatementDeclined = 0x80045509;

We then catch any standard exceptions during recognition and test whether the HResult value (cast to uint, because Exception.HResult is a signed int) equals the value of the HResultPrivacyStatementDeclined variable. If so, we display a warning and call await Windows.System.Launcher.LaunchUriAsync(new Uri("ms-settings:privacy-accounts")); to open the Settings page.

catch (Exception exception)

{

// Handle the speech privacy policy error.

if ((uint)exception.HResult == HResultPrivacyStatementDeclined)

{

resultTextBlock.Visibility = Visibility.Visible;

resultTextBlock.Text = "The privacy statement was declined. " +

"Go to Settings -> Privacy -> Speech, inking and typing, and ensure you " +

"have viewed the privacy policy, and 'Get to know me' is enabled.";

// Open the privacy/speech, inking, and typing settings page.

await Windows.System.Launcher.LaunchUriAsync(new Uri("ms-settings:privacy-accounts"));

}

else

{

var messageDialog = new Windows.UI.Popups.MessageDialog(exception.Message, "Exception");

await messageDialog.ShowAsync();

}

}
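
The comparison in the catch block above can be isolated into a small helper, which makes the signed-to-unsigned cast explicit. This is a minimal sketch: the `SpeechPrivacyCheck` class and `IsPrivacyStatementDeclined` method are hypothetical names, and only the 0x80045509 value comes from the text above.

```csharp
using System;

// Hypothetical helper that isolates the HResult comparison used in the catch block.
public static class SpeechPrivacyCheck
{
    // HResult reported when the speech privacy statement was declined (value from the text above).
    private const uint HResultPrivacyStatementDeclined = 0x80045509;

    public static bool IsPrivacyStatementDeclined(Exception exception)
    {
        // Exception.HResult is a signed int, so failure codes appear as negative numbers.
        // Reinterpreting the bits as uint lets us compare against the hexadecimal constant.
        return unchecked((uint)exception.HResult) == HResultPrivacyStatementDeclined;
    }
}
```

For example, passing `new System.Runtime.InteropServices.COMException("declined", unchecked((int)0x80045509))` to the helper exercises the matching branch without running a recognition session.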

See SpeechRecognitionTopicConstraint.

Programmatic list constraints

Programmatic list constraints provide a lightweight approach to creating simple grammars using a list of words or phrases. A list constraint works well for recognizing short, distinct phrases. Explicitly specifying all words in a grammar also improves recognition accuracy, because the speech recognition engine only needs to process speech to confirm a match. The list can also be programmatically updated.

A list constraint consists of an array of strings that represents speech input that your app will accept for a recognition operation. You can create a list constraint in your app by creating a speech-recognition list-constraint object and passing an array of strings. Then, add that object to the constraints collection of the recognizer. Recognition is successful when the speech recognizer recognizes any one of the strings in the array.

See SpeechRecognitionListConstraint.
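
The steps described above might look like the following in C#. This is a sketch rather than a definitive implementation: the `ListConstraintSketch` class, the phrases, and the "playbackCommands" tag are hypothetical, and the code assumes it runs inside a UWP app that has already been granted microphone access.

```csharp
using System;
using System.Threading.Tasks;
using Windows.Media.SpeechRecognition;

public static class ListConstraintSketch
{
    // Returns the recognized phrase, or null if compilation or recognition did not succeed.
    public static async Task<string> RecognizeCommandAsync()
    {
        using (var speechRecognizer = new SpeechRecognizer())
        {
            // Hypothetical phrases the app is willing to accept.
            var commands = new[] { "play", "pause", "stop" };

            // Create the list constraint and add it to the recognizer's constraints collection.
            var listConstraint = new SpeechRecognitionListConstraint(commands, "playbackCommands");
            speechRecognizer.Constraints.Add(listConstraint);

            // Constraints must be compiled before recognition starts.
            SpeechRecognitionCompilationResult compilation =
                await speechRecognizer.CompileConstraintsAsync();
            if (compilation.Status != SpeechRecognitionResultStatus.Success)
            {
                return null;
            }

            // Recognize without the system UI; succeeds when any one phrase is matched.
            SpeechRecognitionResult result = await speechRecognizer.RecognizeAsync();
            return result.Status == SpeechRecognitionResultStatus.Success ? result.Text : null;
        }
    }
}
```

To update the list later, one option is to modify the constraint's `Commands` collection and compile the constraints again before the next recognition.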

SRGS grammars

A Speech Recognition Grammar Specification (SRGS) grammar is a static document that, unlike a programmatic list constraint, uses the XML format defined by SRGS Version 1.0. An SRGS grammar provides the greatest control over the speech recognition experience by letting you capture multiple semantic meanings in a single recognition.

See SpeechRecognitionGrammarFileConstraint.
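
As a rough sketch, loading an SRGS file from the app package and reading a semantic value could look like this. The file path `Grammars/Colors.grxml`, the "colors" tag, and the "color" semantic property are all assumptions made for illustration; your grammar defines its own rules and property names.

```csharp
using System;
using System.Threading.Tasks;
using Windows.Media.SpeechRecognition;
using Windows.Storage;

public static class GrammarFileSketch
{
    // Returns the first value of the hypothetical "color" semantic property, or null.
    public static async Task<string> RecognizeColorAsync()
    {
        // Hypothetical grammar file shipped in the app package.
        StorageFile grammarFile = await StorageFile.GetFileFromApplicationUriAsync(
            new Uri("ms-appx:///Grammars/Colors.grxml"));

        using (var speechRecognizer = new SpeechRecognizer())
        {
            // Wrap the file in a grammar-file constraint and register it with the recognizer.
            var grammarConstraint = new SpeechRecognitionGrammarFileConstraint(grammarFile, "colors");
            speechRecognizer.Constraints.Add(grammarConstraint);

            SpeechRecognitionCompilationResult compilation =
                await speechRecognizer.CompileConstraintsAsync();
            if (compilation.Status != SpeechRecognitionResultStatus.Success)
            {
                return null;
            }

            SpeechRecognitionResult result = await speechRecognizer.RecognizeAsync();
            if (result.Status != SpeechRecognitionResultStatus.Success)
            {
                return null;
            }

            // Semantic values produced by the grammar are exposed as lists of strings keyed by name.
            var properties = result.SemanticInterpretation.Properties;
            return properties.ContainsKey("color") && properties["color"].Count > 0
                ? properties["color"][0]
                : null;
        }
    }
}
```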

Voice command constraints

Use a Voice Command Definition (VCD) XML file to define the commands that the user can say to initiate actions when activating your app. See SpeechRecognitionVoiceCommandDefinitionConstraint.

Note: The type of constraint you use depends on the complexity of the recognition experience you want to create. Any of them could be the best choice for a specific recognition task, and you might find uses for all types of constraints in your app.

To get started with constraints, see Define custom recognition constraints.

The predefined Universal Windows app dictation grammar recognizes most words and short phrases in a language. It is activated by default when a speech recognizer object is instantiated without custom constraints.

In this example, we show how to:

- Create a speech recognizer.

- Compile the default Universal Windows app constraints (no grammars have been added to the speech recognizer's grammar set).

- Start listening for speech by using the basic recognition UI and TTS feedback provided by the RecognizeWithUIAsync method. Use the RecognizeAsync method if the default UI is not required.

private async void StartRecognizing_Click(object sender, RoutedEventArgs e)

{

// Create an instance of SpeechRecognizer.

var speechRecognizer = new Windows.Media.SpeechRecognition.SpeechRecognizer();

// Compile the dictation grammar by default.

await speechRecognizer.CompileConstraintsAsync();

// Start recognition.

Windows.Media.SpeechRecognition.SpeechRecognitionResult speechRecognitionResult = await speechRecognizer.RecognizeWithUIAsync();

// Do something with the recognition result.

var messageDialog = new Windows.UI.Popups.MessageDialog(speechRecognitionResult.Text, "Text spoken");

await messageDialog.ShowAsync();

}
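
When the default UI is not required, a variant of the handler above can call RecognizeAsync and inspect the result before using it. This is a sketch under stated assumptions: the `DictationSketch` class is a hypothetical name, and treating only rejected results as unusable is a design choice for this example, not a requirement of the API.

```csharp
using System;
using System.Threading.Tasks;
using Windows.Media.SpeechRecognition;

public static class DictationSketch
{
    // Returns the dictated text, or null if recognition failed or the result was rejected.
    public static async Task<string> DictateAsync()
    {
        using (var speechRecognizer = new SpeechRecognizer())
        {
            // With no constraints added, the predefined dictation grammar is compiled.
            await speechRecognizer.CompileConstraintsAsync();

            // Recognize without showing the system recognition UI.
            SpeechRecognitionResult result = await speechRecognizer.RecognizeAsync();

            // Check both the overall status and the engine's confidence in the result.
            bool usable = result.Status == SpeechRecognitionResultStatus.Success
                && result.Confidence != SpeechRecognitionConfidence.Rejected;

            return usable ? result.Text : null;
        }
    }
}
```

An app that needs stricter matching could also discard results whose confidence is `SpeechRecognitionConfidence.Low`.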

Customize the recognition UI

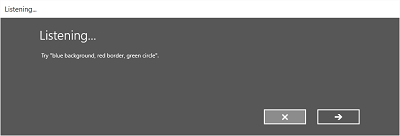

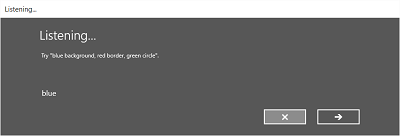

When your app attempts speech recognition by calling SpeechRecognizer.RecognizeWithUIAsync, several screens are shown in the following order.

If you're using a constraint based on a predefined grammar (dictation or web search):

- The Listening screen.

- The Thinking screen.

- The Heard you say screen or the error screen.

If you're using a constraint based on a list of words or phrases, or a constraint based on an SRGS grammar file:

- The Listening screen.

- The Did you say screen, if what the user said could be interpreted as more than one potential result.

- The Heard you say screen or the error screen.

The following image shows an example of the flow between screens for a speech recognizer that uses a constraint based on an SRGS grammar file. In this example, speech recognition was successful.

The Listening screen can provide examples of words or phrases that the app can recognize. Here, we show how to use the properties of the SpeechRecognizerUIOptions class (obtained from the SpeechRecognizer.UIOptions property) to customize content on the Listening screen.

private async void WeatherSearch_Click(object sender, RoutedEventArgs e)

{

// Create an instance of SpeechRecognizer.

var speechRecognizer = new Windows.Media.SpeechRecognition.SpeechRecognizer();

// Listen for audio input issues.

speechRecognizer.RecognitionQualityDegrading += speechRecognizer_RecognitionQualityDegrading;

// Add a web search grammar to the recognizer.

var webSearchGrammar = new Windows.Media.SpeechRecognition.SpeechRecognitionTopicConstraint(Windows.Media.SpeechRecognition.SpeechRecognitionScenario.WebSearch, "webSearch");

speechRecognizer.UIOptions.AudiblePrompt = "Say what you want to search for...";

speechRecognizer.UIOptions.ExampleText = @"Ex. 'weather for London'";

speechRecognizer.Constraints.Add(webSearchGrammar);

// Compile the constraint.

await speechRecognizer.CompileConstraintsAsync();

// Start recognition.

Windows.Media.SpeechRecognition.SpeechRecognitionResult speechRecognitionResult = await speechRecognizer.RecognizeWithUIAsync();

// Do something with the recognition result.

var messageDialog = new Windows.UI.Popups.MessageDialog(speechRecognitionResult.Text, "Text spoken");

await messageDialog.ShowAsync();

}
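
The example above subscribes to RecognitionQualityDegrading without showing the handler. A minimal sketch of such a handler follows, together with two further SpeechRecognizerUIOptions properties that affect the Heard you say screen. The `RecognitionUiSketch` class and `Configure` method are hypothetical names, and logging to the debug output is only one possible response to an audio problem.

```csharp
using System.Diagnostics;
using Windows.Media.SpeechRecognition;

public static class RecognitionUiSketch
{
    public static void Configure(SpeechRecognizer speechRecognizer)
    {
        // Do not speak the recognized text back to the user.
        speechRecognizer.UIOptions.IsReadBackEnabled = false;

        // Skip the confirmation ("Heard you say") screen after a successful recognition.
        speechRecognizer.UIOptions.ShowConfirmation = false;

        // Listen for audio input issues, as in the example above.
        speechRecognizer.RecognitionQualityDegrading += OnRecognitionQualityDegrading;
    }

    private static void OnRecognitionQualityDegrading(
        SpeechRecognizer sender,
        SpeechRecognitionQualityDegradingEventArgs args)
    {
        // args.Problem identifies the audio issue, for example too much background noise.
        Debug.WriteLine("Speech recognition audio problem: " + args.Problem);
    }
}
```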
