Nota:

El acceso a esta página requiere autorización. Puede intentar iniciar sesión o cambiar directorios.

El acceso a esta página requiere autorización. Puede intentar cambiar los directorios.

In the previous blog https://blogs.msdn.microsoft.com/sqlserverstorageengine/2016/02/29/real-time-operational-analytics-simple-example-using-nonclustered-clustered-columnstore-index-ncci/ , I showed you how you can create NCCI on a regular rowstore table and speed up analytics query performance significantly. Now, let us shift our focus to the overhead of maintaining NCCI on transactional workload.

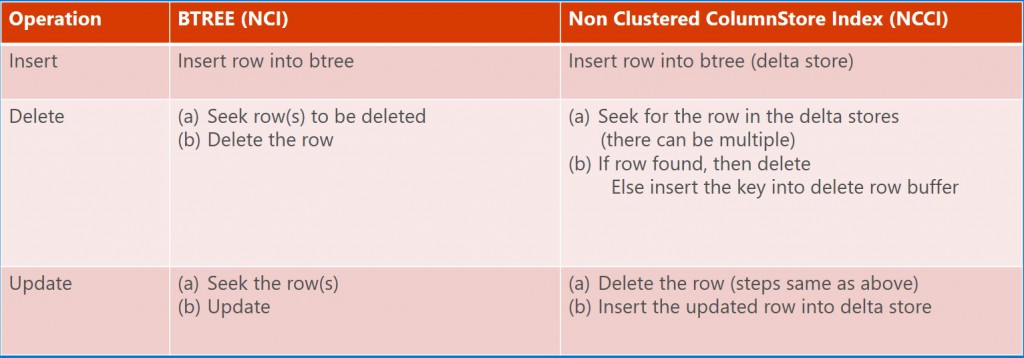

Any additional index that you create on a table will have additional overheard as part of index maintenance. For example, any new row inserted into the table, it needs to be inserted into the index. Similarly, for updates/deletes operations. The first question to be asked is how expensive it is to maintain a columnstore index compared to regular btree index. Here is the table comparing NCCI and regular btree nonclustered index (NCI).

Key points for each of the operations are

- Insert Operation: the row inserted into NCCI lands into a delta rowgroup which is physically structured as a rowstore. To make this insert more efficient, the rows in delta rowgroup are stored uncompressed. In SQL Server 2014, the delta rowgroup was PAGE compressed. In SQL Server 2016, the delta rowgroups for CCI and NCCI are uncompressed.

- Delete Operation: This operation is a bit more complicate and needs explanation. When a row is deleted, it is first removed from the rowstore table. To delete the row from NCCI, we need to somehow find this row in the NCCI efficiently. Since NCCI does not really have any key columns, how do we find it? Well, the trick is to use clustered index key column(s) including uniqueifier if needed or using RID for heap (i.e. the table with clustered index). These column(s) are included in NCCI automatically even if you did not select them as part of NCCI definition. Each delta rowgroup is organized as clustered index using these column(s). Now, to find the row to be deleted, SQL Server searches for the row in each of the delta rowgroups. If the row is found, it is deleted like any other rowstore. If the row is not found, then the the row exists in the compressed rowgroups. Since there is no efficient way to search compressed rowgroups, the row identifier is inserted into an internal btree called ‘delete buffer’. Periodically this ‘delete buffer’ is merged into the ‘delete bitmap’. When accessing NCCI for queries, these rows are filtered our automatically. The Delete operation can get more expensive if there are large number of delta rowgroups. To minimize the number of delta rowgroups for NCCI, we grow delta rowgroups exponentially. For example, the first delta rowgroup is closed when number of rows reaches 1 million. The second delta rowgroup reaches 1 million mark but the first one has not yet been compressed, we internally increase the threshold to 2 million. The third delta rowgroup is closed at 4 million, next one at 8 million with max to 32 million. Even though the delta rowgroup may have 2 million rows, when it is compressed it is broken into chunks of 1 million or less when compressing. Another point to note that when a row is deleted from compressed rowgroups, it causes index fragmentation. SQL Server 2016 provides an ONLINE operation to remove the deleted rows.

- Update Operation: Update to NCCI is done as delete/insert. There is no in place update even when the row is found in delta rowgroup. This is something we may look into in future

I realize this explanation touched upon many internal details that you may not care about. The key takeaway is that NCCI is more expensive to maintain than a regular btree index. If your workload has one or more btree indexes primarily created for range queries, you may be able to drop and replace them by a single NCCI. This may reduce the total number of indexes on the table and it may infact speed up the transactional workload. Not suggesting this is a common case but definitely one of the possibilities.

In the next blogs, we will discuss filtered NCCI and compression delay to minimize the overhead of NCCI maintenance.

Thanks

Sunil