AlwaysON - HADRON Learning Series: Worker Pool Usage for HADRON Enabled Databases

I am on several e-mail aliases related to Always On databases (reference Availability Group, AG, HADRON) and the question of worker thread usage is a hot topic this week. I developed some training around this during the beta so I decided to pull out the relevant details and share them with you all. Hopefully this will provide you with a 10,000 foot view around the basic worker thread consumption related to HADRON enabled databases.

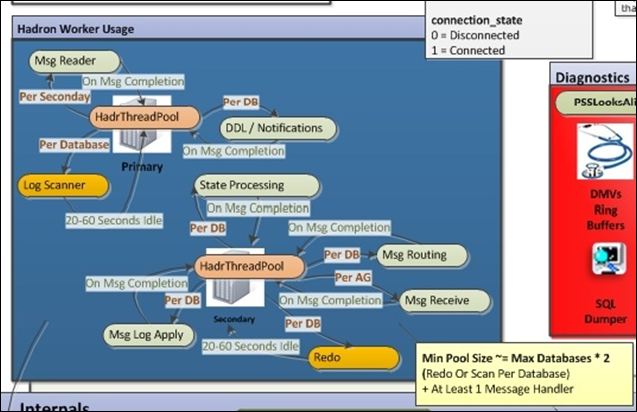

The following is a drawing I created for the training, showing the HadrThreadPool on a primary (upper left) and a secondary (lower right).

Always On is different than database mirroring. Database mirroring used dedicated threads per database and Always On uses a request queue and worker pool to handle the requests. The HadrThreadPool is shared among all HADRON enabled databases.

Primary

On the primary messages the active log scanner is the log pole. When a secondary is ready to receive log blocks a message is sent to the primary to start the log scanning. This message is handled by a worker in the HadrThreadPool. The startup and tearing down of a log scan operation can be expensive so the request will retain the worker thread, waiting on new log record flushes, until it has been idle for at least 20 seconds, usually 60 seconds before returning the message to the pool for reuse. All other messages acquire a worker, perform the operation and return the worker to the pool.

Secondary

The expensive path on the secondary is the redo work. Similar to how the primary waits for idle log scan activity the secondary will wait for idle redo activity for at least 20 seconds before returning the worker to the pool.

Messages/Task Types

There is wide set of messages exchanged between the primary and secondary as depicted in the following diagram.

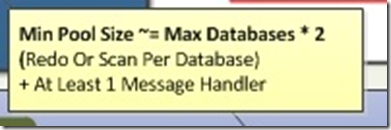

HadrThreadPool - High Level Formula for HADRON Worker Needs

The formula is as follows but I have to temper this with 'ACTIVE.'

To keep the pool of workers fully utilized you have to have activity in the database. If I have 100 databases in 25 different AGs but only 10 active databases (at any point in time) the (Max Databases) I would pick a max databases value around 15 for the calculation as to the relative size of the HadrThreadPool used on my system. If all 100 database are active then account for 100 Max Databases in your calculation.

Note: Be sure to add the number of DB Replicas to your calculation.

How Do I See The Pool Workers?

The common tasks assigned to HADRON activities can be observed using the following query.

select

* from sys.dm_exec_requests

where command like '%HADR%'

or command like '%DB%'

or command like '%BRKR%' -- Not HadrThreadPool but Service Broker transport threads neededRelevant Command Types

HADR_BACKUP_LOCK_HOLDER

HADR_AR_MGR_STARTUP

HADR_AR_MGR_RESTART

HADR_AR_MGR_NOTIFICATION_WORKER

DB_MIRRORXEvents

There are many new XEvents associated with HADRON. The XeSqlPkg::hadr_worker_pool_task allows you to watch which HADRON tasks are executing and completing on your system so you can establish a specific baseline for concurrent task execution levels.

Backup and File Streams Impacts

A backup activity on a secondary requires a worker from the pool on the primary to maintain the proper locking and backup sequence capabilities. This could be a longer term thread and scheduling of backups can impact the worker pool.

The file stream data is not part of the physical LDF file so the actual file stream data needs to be streamed to the secondary. On the primary the log block is cracked (find all File Stream records and send proper requests to secondary) and the file stream data is sent in parallel with the log blocks. The more file stream activity the database has the more likely additional threads are necessary to handle the parallel file stream shipping activities on the primary and secondary (receive and save).

Max Usage

The formula uses a 2x factor calculation. For a database that is under heavy activity, backups frequently active and file stream activity a 5x factor would be max use case calculation at full utilization. Again, the database activity is key to the worker use and reuse.

File Steam Worker - Per database worker that is held long term

Backup - Per database worker that is held long term (duration of backup)

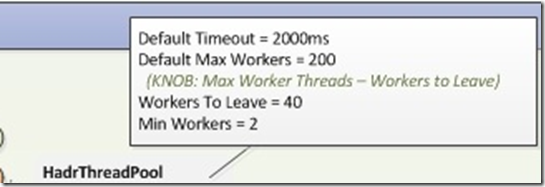

Cap

The HardThreadPool is capped at the sp_configure 'max worker threads' minus 40 level. To increase the size of the HadrThreadPool increase the max worker thread setting. Note: increasing the max worker thread setting can reduce the buffer pool size.

Idle Workers in HadrThreadPool

A worker in the HadrThreadPool, in an idle state for more than 2 seconds can be returned to the SQL system pool.

Bob Dorr - Principal SQL Server Escalation Engineer