Monitor performance of models deployed to production

APPLIES TO:

Azure CLI ml extension v2 (current)

Azure CLI ml extension v2 (current)

Python SDK azure-ai-ml v2 (current)

Python SDK azure-ai-ml v2 (current)

Learn to use Azure Machine Learning's model monitoring to continuously track the performance of machine learning models in production. Model monitoring provides you with a broad view of monitoring signals and alerts you to potential issues. When you monitor signals and performance metrics of models in production, you can critically evaluate the inherent risks associated with them and identify blind spots that could adversely affect your business.

In this article you, learn to perform the following tasks:

- Set up out-of box and advanced monitoring for models that are deployed to Azure Machine Learning online endpoints

- Monitor performance metrics for models in production

- Monitor models that are deployed outside Azure Machine Learning or deployed to Azure Machine Learning batch endpoints

- Set up model monitoring with custom signals and metrics

- Interpret monitoring results

- Integrate Azure Machine Learning model monitoring with Azure Event Grid

Prerequisites

Before following the steps in this article, make sure you have the following prerequisites:

The Azure CLI and the

mlextension to the Azure CLI. For more information, see Install, set up, and use the CLI (v2).Important

The CLI examples in this article assume that you are using the Bash (or compatible) shell. For example, from a Linux system or Windows Subsystem for Linux.

An Azure Machine Learning workspace. If you don't have one, use the steps in the Install, set up, and use the CLI (v2) to create one.

Azure role-based access controls (Azure RBAC) are used to grant access to operations in Azure Machine Learning. To perform the steps in this article, your user account must be assigned the owner or contributor role for the Azure Machine Learning workspace, or a custom role allowing

Microsoft.MachineLearningServices/workspaces/onlineEndpoints/*. For more information, see Manage access to an Azure Machine Learning workspace.For monitoring a model that is deployed to an Azure Machine Learning online endpoint (managed online endpoint or Kubernetes online endpoint), be sure to:

Have a model already deployed to an Azure Machine Learning online endpoint. Both managed online endpoint and Kubernetes online endpoint are supported. If you don't have a model deployed to an Azure Machine Learning online endpoint, see Deploy and score a machine learning model by using an online endpoint.

Enable data collection for your model deployment. You can enable data collection during the deployment step for Azure Machine Learning online endpoints. For more information, see Collect production data from models deployed to a real-time endpoint.

For monitoring a model that is deployed to an Azure Machine Learning batch endpoint or deployed outside of Azure Machine Learning, be sure to:

- Have a means to collect production data and register it as an Azure Machine Learning data asset.

- Update the registered data asset continuously for model monitoring.

- (Recommended) Register the model in an Azure Machine Learning workspace, for lineage tracking.

Important

Model monitoring jobs are scheduled to run on serverless Spark compute pools with support for the following VM instance types: Standard_E4s_v3, Standard_E8s_v3, Standard_E16s_v3, Standard_E32s_v3, and Standard_E64s_v3. You can select the VM instance type with the create_monitor.compute.instance_type property in your YAML configuration or from the dropdown in the Azure Machine Learning studio.

Set up out-of-box model monitoring

Suppose you deploy your model to production in an Azure Machine Learning online endpoint and enable data collection at deployment time. In this scenario, Azure Machine Learning collects production inference data, and automatically stores it in Microsoft Azure Blob Storage. You can then use Azure Machine Learning model monitoring to continuously monitor this production inference data.

You can use the Azure CLI, the Python SDK, or the studio for an out-of-box setup of model monitoring. The out-of-box model monitoring configuration provides the following monitoring capabilities:

- Azure Machine Learning automatically detects the production inference dataset associated with an Azure Machine Learning online deployment and uses the dataset for model monitoring.

- The comparison reference dataset is set as the recent, past production inference dataset.

- Monitoring setup automatically includes and tracks the built-in monitoring signals: data drift, prediction drift, and data quality. For each monitoring signal, Azure Machine Learning uses:

- the recent, past production inference dataset as the comparison reference dataset.

- smart defaults for metrics and thresholds.

- A monitoring job is scheduled to run daily at 3:15am (for this example) to acquire monitoring signals and evaluate each metric result against its corresponding threshold. By default, when any threshold is exceeded, Azure Machine Learning sends an alert email to the user that set up the monitor.

Azure Machine Learning model monitoring uses az ml schedule to schedule a monitoring job. You can create the out-of-box model monitor with the following CLI command and YAML definition:

az ml schedule create -f ./out-of-box-monitoring.yaml

The following YAML contains the definition for the out-of-box model monitoring.

# out-of-box-monitoring.yaml

$schema: http://azureml/sdk-2-0/Schedule.json

name: credit_default_model_monitoring

display_name: Credit default model monitoring

description: Credit default model monitoring setup with minimal configurations

trigger:

# perform model monitoring activity daily at 3:15am

type: recurrence

frequency: day #can be minute, hour, day, week, month

interval: 1 # #every day

schedule:

hours: 3 # at 3am

minutes: 15 # at 15 mins after 3am

create_monitor:

compute: # specify a spark compute for monitoring job

instance_type: standard_e4s_v3

runtime_version: "3.3"

monitoring_target:

ml_task: classification # model task type: [classification, regression, question_answering]

endpoint_deployment_id: azureml:credit-default:main # azureml endpoint deployment id

alert_notification: # emails to get alerts

emails:

- abc@example.com

- def@example.com

Set up advanced model monitoring

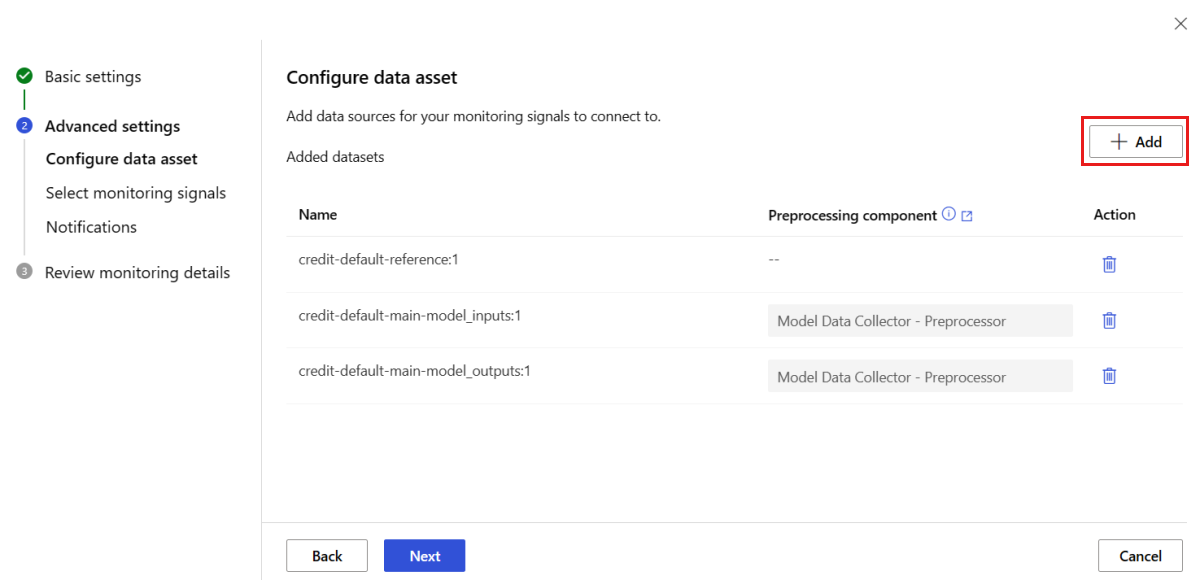

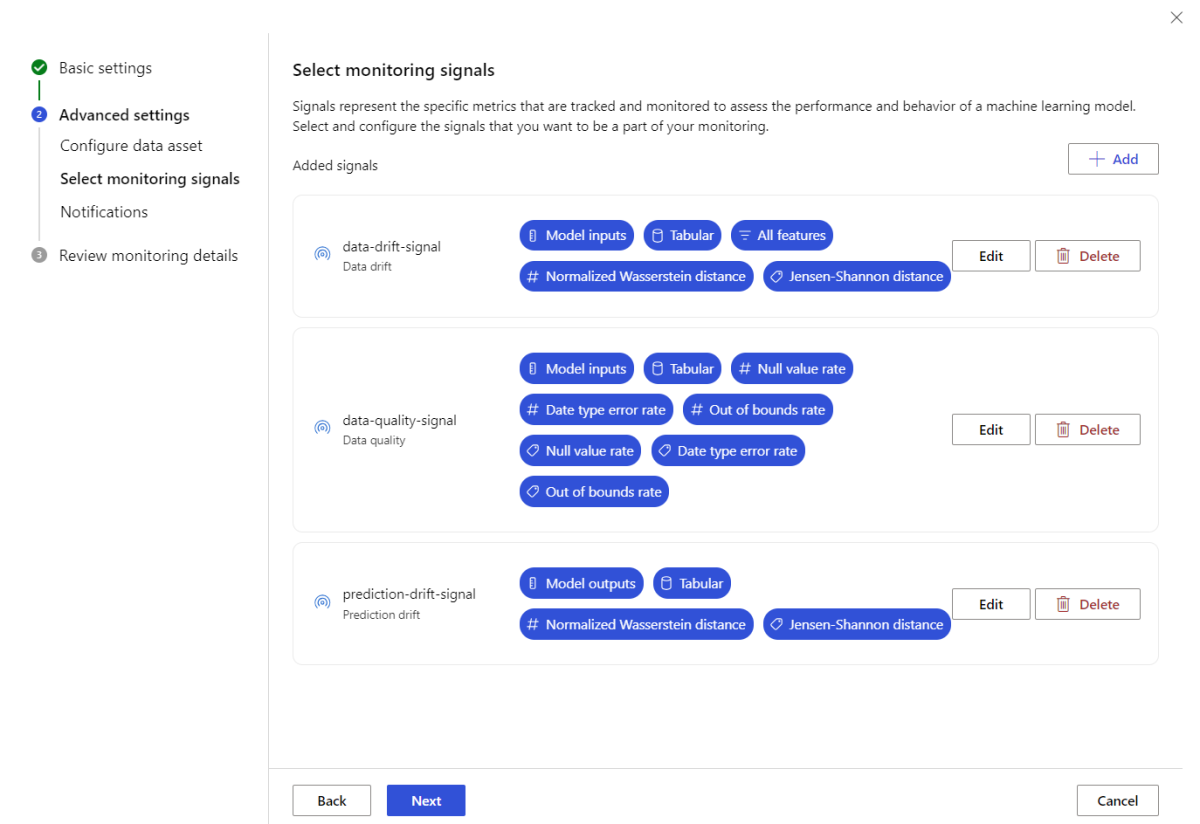

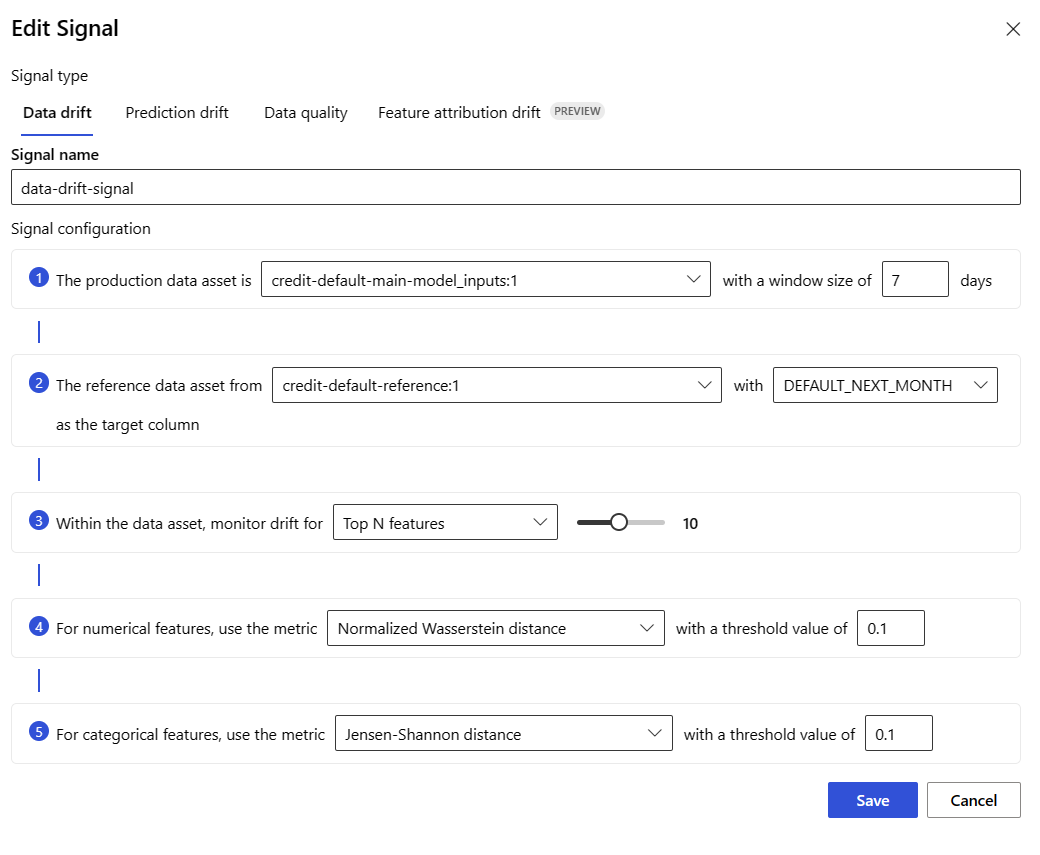

Azure Machine Learning provides many capabilities for continuous model monitoring. See Capabilities of model monitoring for a comprehensive list of these capabilities. In many cases, you need to set up model monitoring with advanced monitoring capabilities. In the following sections, you set up model monitoring with these capabilities:

- Use of multiple monitoring signals for a broad view.

- Use of historical model training data or validation data as the comparison reference dataset.

- Monitoring of top N most important features and individual features.

Configure feature importance

Feature importance represents the relative importance of each input feature to a model's output. For example, temperature might be more important to a model's prediction compared to elevation. Enabling feature importance can give you visibility into which features you don't want drifting or having data quality issues in production.

To enable feature importance with any of your signals (such as data drift or data quality), you need to provide:

- Your training dataset as the

reference_datadataset. - The

reference_data.data_column_names.target_columnproperty, which is the name of your model's output/prediction column.

After enabling feature importance, you'll see a feature importance for each feature you're monitoring in the Azure Machine Learning model monitoring studio UI.

You can enable/disable alerts for each signal by setting alert_enabled property while using SDK or CLI.

You can use Azure CLI, the Python SDK, or the studio for advanced setup of model monitoring.

Create advanced model monitoring setup with the following CLI command and YAML definition:

az ml schedule create -f ./advanced-model-monitoring.yaml

The following YAML contains the definition for advanced model monitoring.

# advanced-model-monitoring.yaml

$schema: http://azureml/sdk-2-0/Schedule.json

name: fraud_detection_model_monitoring

display_name: Fraud detection model monitoring

description: Fraud detection model monitoring with advanced configurations

trigger:

# perform model monitoring activity daily at 3:15am

type: recurrence

frequency: day #can be minute, hour, day, week, month

interval: 1 # #every day

schedule:

hours: 3 # at 3am

minutes: 15 # at 15 mins after 3am

create_monitor:

compute:

instance_type: standard_e4s_v3

runtime_version: "3.3"

monitoring_target:

ml_task: classification

endpoint_deployment_id: azureml:credit-default:main

monitoring_signals:

advanced_data_drift: # monitoring signal name, any user defined name works

type: data_drift

# reference_dataset is optional. By default referece_dataset is the production inference data associated with Azure Machine Learning online endpoint

reference_data:

input_data:

path: azureml:credit-reference:1 # use training data as comparison reference dataset

type: mltable

data_context: training

data_column_names:

target_column: DEFAULT_NEXT_MONTH

features:

top_n_feature_importance: 10 # monitor drift for top 10 features

alert_enabled: true

metric_thresholds:

numerical:

jensen_shannon_distance: 0.01

categorical:

pearsons_chi_squared_test: 0.02

advanced_data_quality:

type: data_quality

# reference_dataset is optional. By default reference_dataset is the production inference data associated with Azure Machine Learning online endpoint

reference_data:

input_data:

path: azureml:credit-reference:1

type: mltable

data_context: training

features: # monitor data quality for 3 individual features only

- SEX

- EDUCATION

alert_enabled: true

metric_thresholds:

numerical:

null_value_rate: 0.05

categorical:

out_of_bounds_rate: 0.03

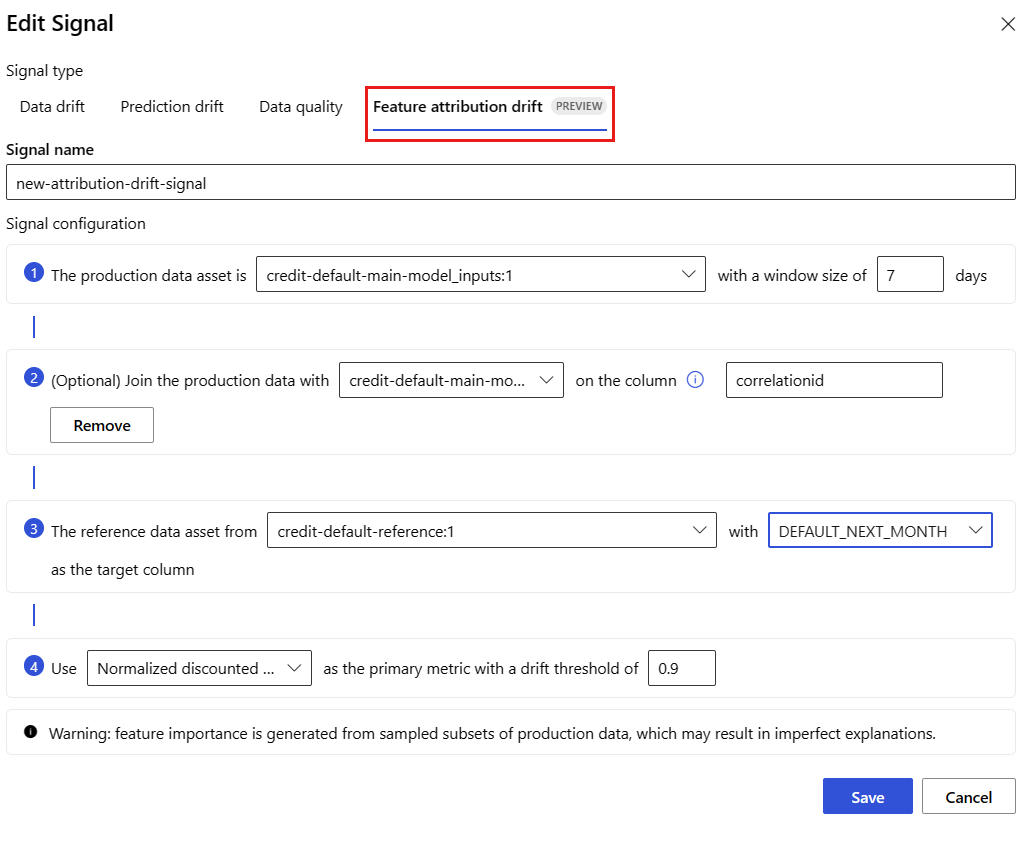

feature_attribution_drift_signal:

type: feature_attribution_drift

# production_data: is not required input here

# Please ensure Azure Machine Learning online endpoint is enabled to collected both model_inputs and model_outputs data

# Azure Machine Learning model monitoring will automatically join both model_inputs and model_outputs data and used it for computation

reference_data:

input_data:

path: azureml:credit-reference:1

type: mltable

data_context: training

data_column_names:

target_column: DEFAULT_NEXT_MONTH

alert_enabled: true

metric_thresholds:

normalized_discounted_cumulative_gain: 0.9

alert_notification:

emails:

- abc@example.com

- def@example.com

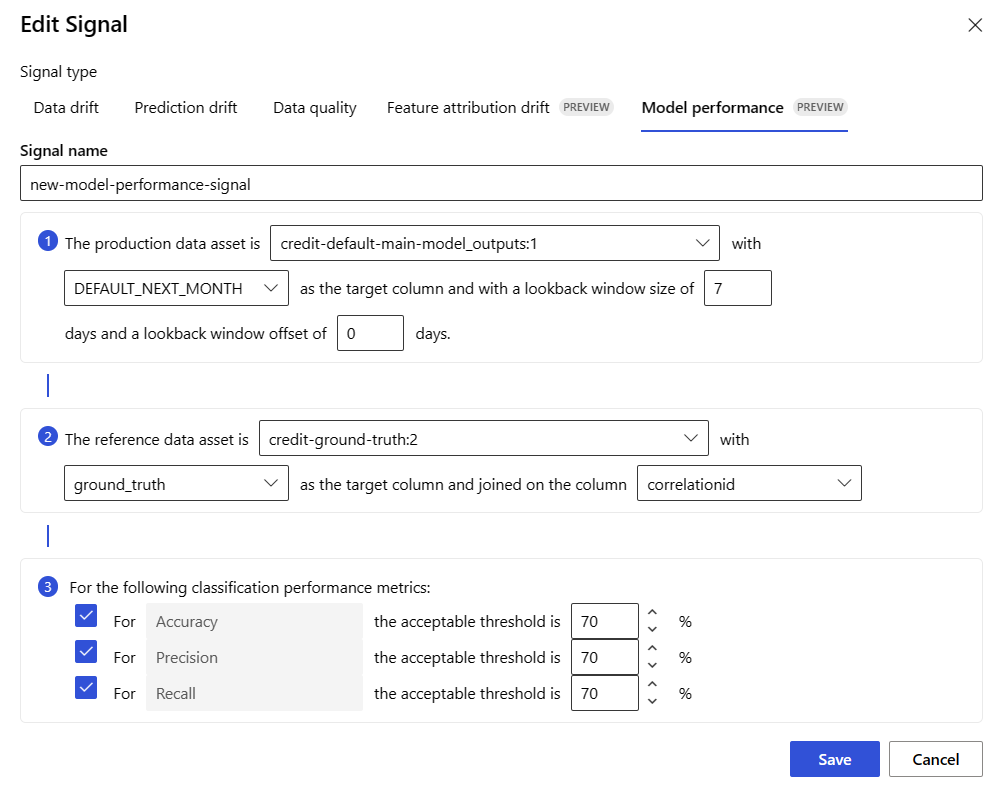

Set up model performance monitoring

Azure Machine Learning model monitoring enables you to track the performance of your models in production by calculating their performance metrics. The following model performance metrics are currently supported:

For classification models:

- Precision

- Accuracy

- Recall

For regression models:

- Mean Absolute Error (MAE)

- Mean Squared Error (MSE)

- Root Mean Squared Error (RMSE)

More prerequisites for model performance monitoring

You must satisfy the following requirements for you to configure your model performance signal:

Have output data for the production model (the model's predictions) with a unique ID for each row. If you collect production data with the Azure Machine Learning data collector, a

correlation_idis provided for each inference request for you. With the data collector, you also have the option to log your own unique ID from your application.Note

For Azure Machine Learning model performance monitoring, we recommend that you log your unique ID in its own column, using the Azure Machine Learning data collector.

Have ground truth data (actuals) with a unique ID for each row. The unique ID for a given row should match the unique ID for the model outputs for that particular inference request. This unique ID is used to join your ground truth dataset with the model outputs.

Without having ground truth data, you can't perform model performance monitoring. Since ground truth data is encountered at the application level, it's your responsibility to collect it as it becomes available. You should also maintain a data asset in Azure Machine Learning that contains this ground truth data.

(Optional) Have a pre-joined tabular dataset with model outputs and ground truth data already joined together.

Monitor model performance requirements when using data collector

If you use the Azure Machine Learning data collector to collect production inference data without supplying your own unique ID for each row as a separate column, a correlationid will be autogenerated for you and included in the logged JSON object. However, the data collector will batch rows that are sent within short time intervals of each other. Batched rows will fall within the same JSON object and will thus have the same correlationid.

In order to differentiate between the rows in the same JSON object, Azure Machine Learning model performance monitoring uses indexing to determine the order of the rows in the JSON object. For example, if three rows are batched together, and the correlationid is test, row one will have an ID of test_0, row two will have an ID of test_1, and row three will have an ID of test_2. To ensure that your ground truth dataset contains unique IDs that match to the collected production inference model outputs, ensure that you index each correlationid appropriately. If your logged JSON object only has one row, then the correlationid would be correlationid_0.

To avoid using this indexing, we recommend that you log your unique ID in its own column within the pandas DataFrame that you're logging with the Azure Machine Learning data collector. Then, in your model monitoring configuration, you specify the name of this column to join your model output data with your ground truth data. As long as the IDs for each row in both datasets are the same, Azure Machine Learning model monitoring can perform model performance monitoring.

Example workflow for monitoring model performance

To understand the concepts associated with model performance monitoring, consider this example workflow. Suppose you're deploying a model to predict whether credit card transactions are fraudulent or not, you can follow these steps to monitor the model's performance:

- Configure your deployment to use the data collector to collect the model's production inference data (input and output data). Let's say that the output data is stored in a column

is_fraud. - For each row of the collected inference data, log a unique ID. The unique ID can come from your application, or you can use the

correlationidthat Azure Machine Learning uniquely generates for each logged JSON object. - Later, when the ground truth (or actual)

is_frauddata becomes available, it also gets logged and mapped to the same unique ID that was logged with the model's outputs. - This ground truth

is_frauddata is also collected, maintained, and registered to Azure Machine Learning as a data asset. - Create a model performance monitoring signal that joins the model's production inference and ground truth data assets, using the unique ID columns.

- Finally, compute the model performance metrics.

Once you've satisfied the prerequisites for model performance monitoring, you can set up model monitoring with the following CLI command and YAML definition:

az ml schedule create -f ./model-performance-monitoring.yaml

The following YAML contains the definition for model monitoring with production inference data that you've collected.

$schema: http://azureml/sdk-2-0/Schedule.json

name: model_performance_monitoring

display_name: Credit card fraud model performance

description: Credit card fraud model performance

trigger:

type: recurrence

frequency: day

interval: 7

schedule:

hours: 10

minutes: 15

create_monitor:

compute:

instance_type: standard_e8s_v3

runtime_version: "3.3"

monitoring_target:

ml_task: classification

endpoint_deployment_id: azureml:loan-approval-endpoint:loan-approval-deployment

monitoring_signals:

fraud_detection_model_performance:

type: model_performance

production_data:

data_column_names:

prediction: is_fraud

correlation_id: correlation_id

reference_data:

input_data:

path: azureml:my_model_ground_truth_data:1

type: mltable

data_column_names:

actual: is_fraud

correlation_id: correlation_id

data_context: actuals

alert_enabled: true

metric_thresholds:

tabular_classification:

accuracy: 0.95

precision: 0.8

alert_notification:

emails:

- abc@example.com

Set up model monitoring by bringing in your production data to Azure Machine Learning

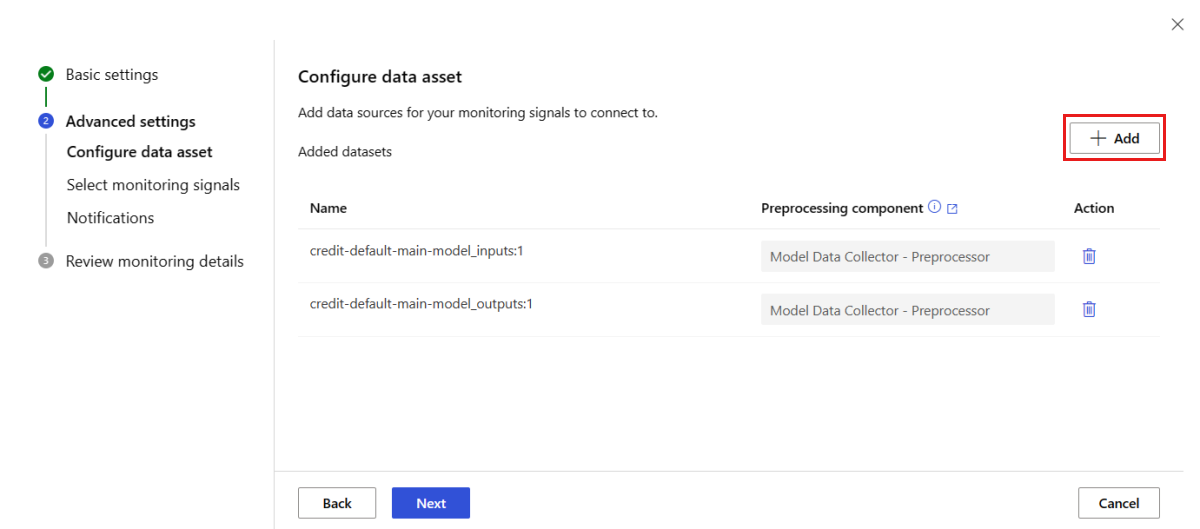

You can also set up model monitoring for models deployed to Azure Machine Learning batch endpoints or deployed outside of Azure Machine Learning. If you don't have a deployment, but you have production data, you can use the data to perform continuous model monitoring. To monitor these models, you must be able to:

- Collect production inference data from models deployed in production.

- Register the production inference data as an Azure Machine Learning data asset, and ensure continuous updates of the data.

- Provide a custom data preprocessing component and register it as an Azure Machine Learning component.

You must provide a custom data preprocessing component if your data isn't collected with the data collector. Without this custom data preprocessing component, the Azure Machine Learning model monitoring system won't know how to process your data into tabular form with support for time windowing.

Your custom preprocessing component must have these input and output signatures:

| Input/Output | Signature name | Type | Description | Example value |

|---|---|---|---|---|

| input | data_window_start |

literal, string | data window start-time in ISO8601 format. | 2023-05-01T04:31:57.012Z |

| input | data_window_end |

literal, string | data window end-time in ISO8601 format. | 2023-05-01T04:31:57.012Z |

| input | input_data |

uri_folder | The collected production inference data, which is registered as an Azure Machine Learning data asset. | azureml:myproduction_inference_data:1 |

| output | preprocessed_data |

mltable | A tabular dataset, which matches a subset of the reference data schema. |

For an example of a custom data preprocessing component, see custom_preprocessing in the azuremml-examples GitHub repo.

Once you've satisfied the previous requirements, you can set up model monitoring with the following CLI command and YAML definition:

az ml schedule create -f ./model-monitoring-with-collected-data.yaml

The following YAML contains the definition for model monitoring with production inference data that you've collected.

# model-monitoring-with-collected-data.yaml

$schema: http://azureml/sdk-2-0/Schedule.json

name: fraud_detection_model_monitoring

display_name: Fraud detection model monitoring

description: Fraud detection model monitoring with your own production data

trigger:

# perform model monitoring activity daily at 3:15am

type: recurrence

frequency: day #can be minute, hour, day, week, month

interval: 1 # #every day

schedule:

hours: 3 # at 3am

minutes: 15 # at 15 mins after 3am

create_monitor:

compute:

instance_type: standard_e4s_v3

runtime_version: "3.3"

monitoring_target:

ml_task: classification

endpoint_deployment_id: azureml:fraud-detection-endpoint:fraud-detection-deployment

monitoring_signals:

advanced_data_drift: # monitoring signal name, any user defined name works

type: data_drift

# define production dataset with your collected data

production_data:

input_data:

path: azureml:my_production_inference_data_model_inputs:1 # your collected data is registered as Azure Machine Learning asset

type: uri_folder

data_context: model_inputs

pre_processing_component: azureml:production_data_preprocessing:1

reference_data:

input_data:

path: azureml:my_model_training_data:1 # use training data as comparison baseline

type: mltable

data_context: training

data_column_names:

target_column: is_fraud

features:

top_n_feature_importance: 20 # monitor drift for top 20 features

alert_enabled: true

metric_thresholds:

numberical:

jensen_shannon_distance: 0.01

categorical:

pearsons_chi_squared_test: 0.02

advanced_prediction_drift: # monitoring signal name, any user defined name works

type: prediction_drift

# define production dataset with your collected data

production_data:

input_data:

path: azureml:my_production_inference_data_model_outputs:1 # your collected data is registered as Azure Machine Learning asset

type: uri_folder

data_context: model_outputs

pre_processing_component: azureml:production_data_preprocessing:1

reference_data:

input_data:

path: azureml:my_model_validation_data:1 # use training data as comparison reference dataset

type: mltable

data_context: validation

alert_enabled: true

metric_thresholds:

categorical:

pearsons_chi_squared_test: 0.02

alert_notification:

emails:

- abc@example.com

- def@example.com

Set up model monitoring with custom signals and metrics

With Azure Machine Learning model monitoring, you can define a custom signal and implement any metric of your choice to monitor your model. You can register this custom signal as an Azure Machine Learning component. When your Azure Machine Learning model monitoring job runs on the specified schedule, it computes the metric(s) you've defined within your custom signal, just as it does for the prebuilt signals (data drift, prediction drift, and data quality).

To set up a custom signal to use for model monitoring, you must first define the custom signal and register it as an Azure Machine Learning component. The Azure Machine Learning component must have these input and output signatures:

Component input signature

The component input DataFrame should contain the following items:

- An

mltablewith the processed data from the preprocessing component - Any number of literals, each representing an implemented metric as part of the custom signal component. For example, if you've implemented the metric,

std_deviation, then you'll need an input forstd_deviation_threshold. Generally, there should be one input per metric with the name<metric_name>_threshold.

| Signature name | Type | Description | Example value |

|---|---|---|---|

| production_data | mltable | A tabular dataset that matches a subset of the reference data schema. | |

| std_deviation_threshold | literal, string | Respective threshold for the implemented metric. | 2 |

Component output signature

The component output port should have the following signature.

| Signature name | Type | Description |

|---|---|---|

| signal_metrics | mltable | The mltable that contains the computed metrics. The schema is defined in the next section signal_metrics schema. |

signal_metrics schema

The component output DataFrame should contain four columns: group, metric_name, metric_value, and threshold_value.

| Signature name | Type | Description | Example value |

|---|---|---|---|

| group | literal, string | Top-level logical grouping to be applied to this custom metric. | TRANSACTIONAMOUNT |

| metric_name | literal, string | The name of the custom metric. | std_deviation |

| metric_value | numerical | The value of the custom metric. | 44,896.082 |

| threshold_value | numerical | The threshold for the custom metric. | 2 |

The following table shows an example output from a custom signal component that computes the std_deviation metric:

| group | metric_value | metric_name | threshold_value |

|---|---|---|---|

| TRANSACTIONAMOUNT | 44,896.082 | std_deviation | 2 |

| LOCALHOUR | 3.983 | std_deviation | 2 |

| TRANSACTIONAMOUNTUSD | 54,004.902 | std_deviation | 2 |

| DIGITALITEMCOUNT | 7.238 | std_deviation | 2 |

| PHYSICALITEMCOUNT | 5.509 | std_deviation | 2 |

To see an example custom signal component definition and metric computation code, see custom_signal in the azureml-examples repo.

Once you've satisfied the requirements for using custom signals and metrics, you can set up model monitoring with the following CLI command and YAML definition:

az ml schedule create -f ./custom-monitoring.yaml

The following YAML contains the definition for model monitoring with a custom signal. Some things to notice about the code:

- It assumes that you've already created and registered your component with the custom signal definition in Azure Machine Learning.

- The

component_idof the registered custom signal component isazureml:my_custom_signal:1.0.0. - If you've collected your data with the data collector, you can omit the

pre_processing_componentproperty. If you wish to use a preprocessing component to preprocess production data not collected by the data collector, you can specify it.

# custom-monitoring.yaml

$schema: http://azureml/sdk-2-0/Schedule.json

name: my-custom-signal

trigger:

type: recurrence

frequency: day # can be minute, hour, day, week, month

interval: 7 # #every day

create_monitor:

compute:

instance_type: "standard_e4s_v3"

runtime_version: "3.3"

monitoring_signals:

customSignal:

type: custom

component_id: azureml:my_custom_signal:1.0.0

input_data:

production_data:

input_data:

type: uri_folder

path: azureml:my_production_data:1

data_context: test

data_window:

lookback_window_size: P30D

lookback_window_offset: P7D

pre_processing_component: azureml:custom_preprocessor:1.0.0

metric_thresholds:

- metric_name: std_deviation

threshold: 2

alert_notification:

emails:

- abc@example.com

Interpret monitoring results

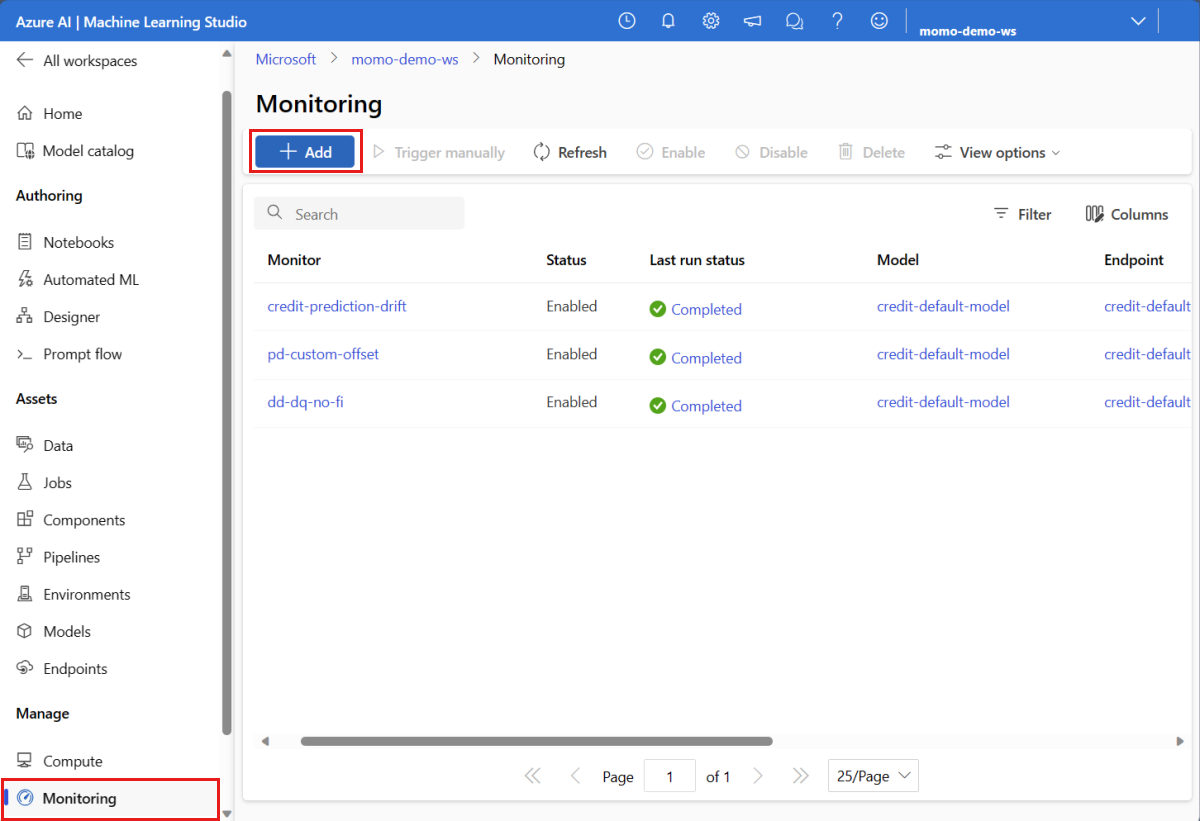

After you've configured your model monitor and the first run has completed, you can navigate back to the Monitoring tab in Azure Machine Learning studio to view the results.

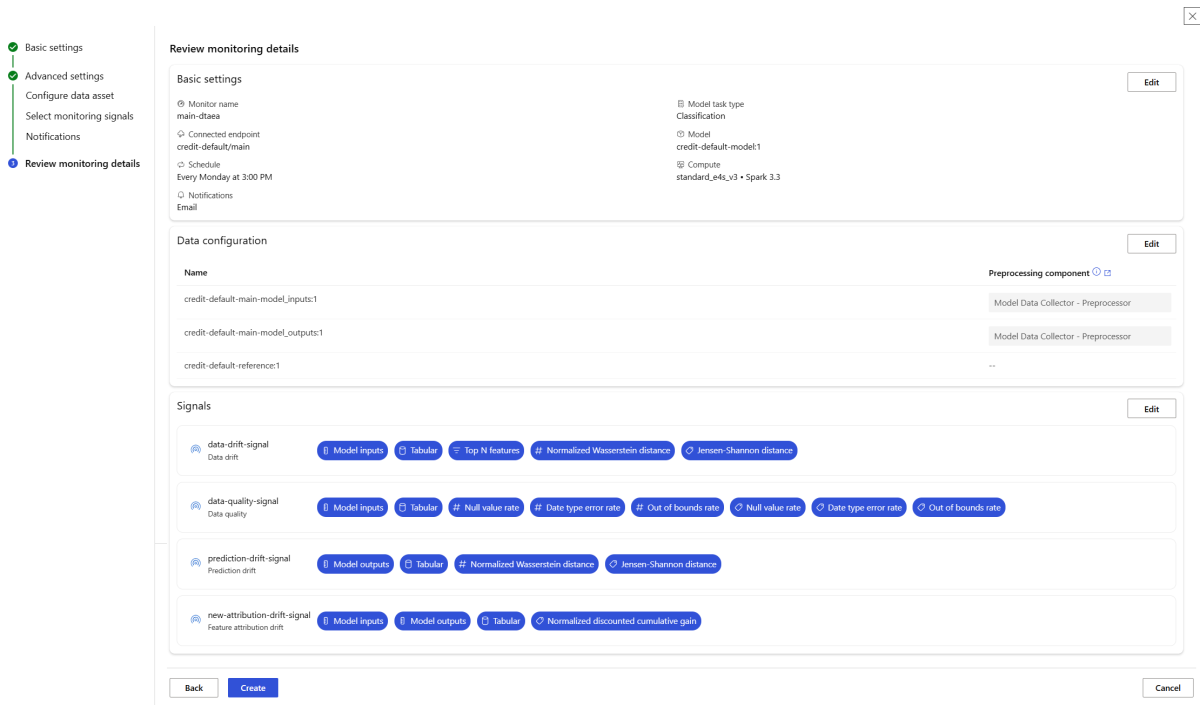

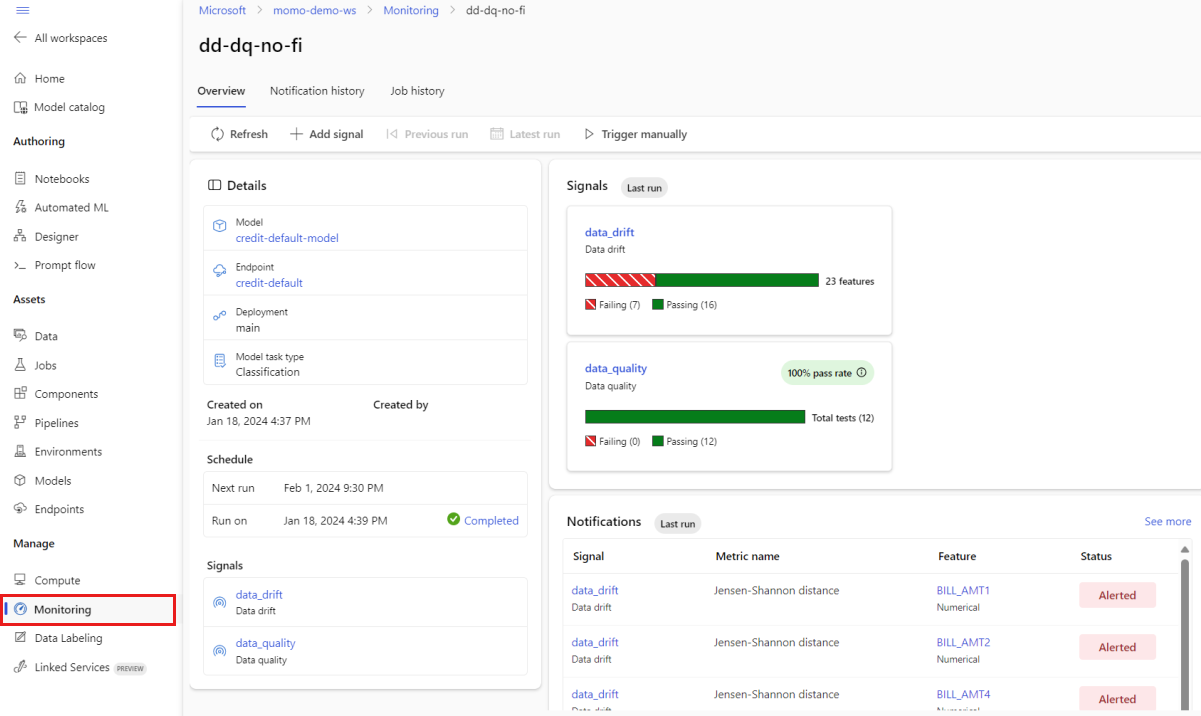

From the main Monitoring view, select the name of your model monitor to see the Monitor overview page. This page shows the corresponding model, endpoint, and deployment, along with details regarding the signals you configured. The next image shows a monitoring dashboard that includes data drift and data quality signals. Depending on the monitoring signals you configured, your dashboard might look different.

Look in the Notifications section of the dashboard to see, for each signal, which features breached the configured threshold for their respective metrics:

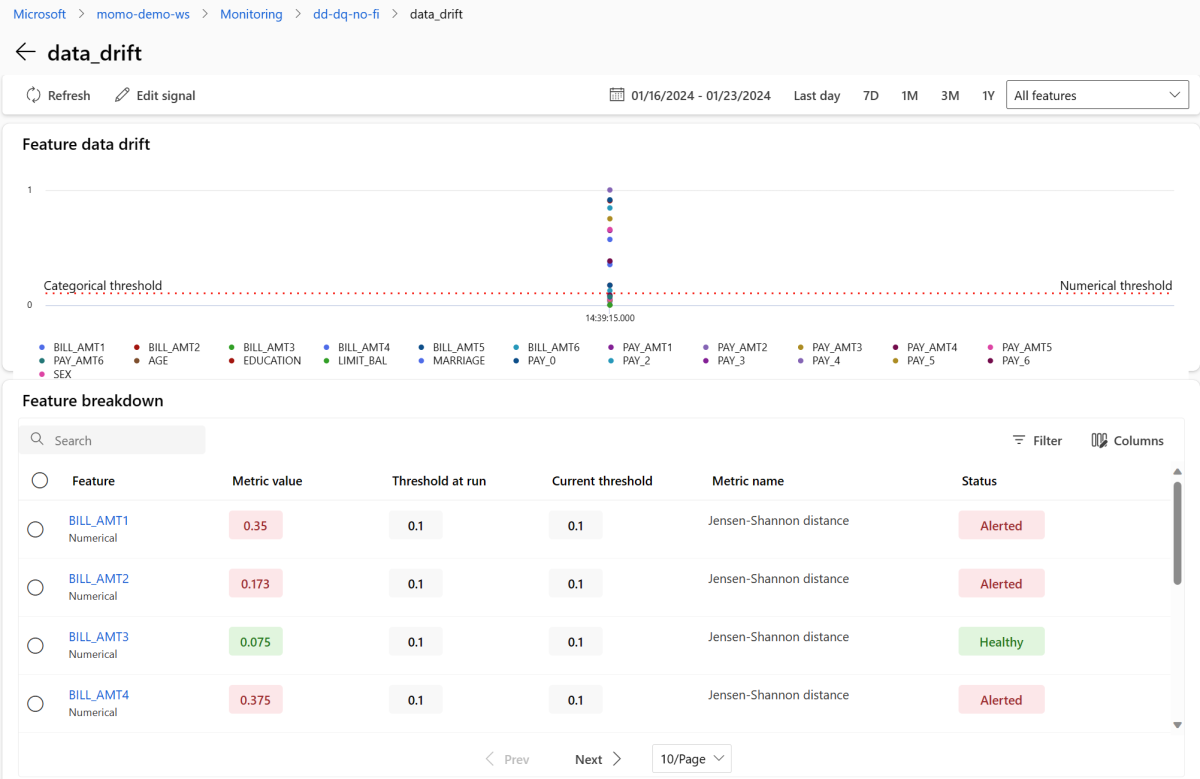

Select the data_drift to go to the data drift details page. On the details page, you can see the data drift metric value for each numerical and categorical feature that you included in your monitoring configuration. When your monitor has more than one run, you'll see a trendline for each feature.

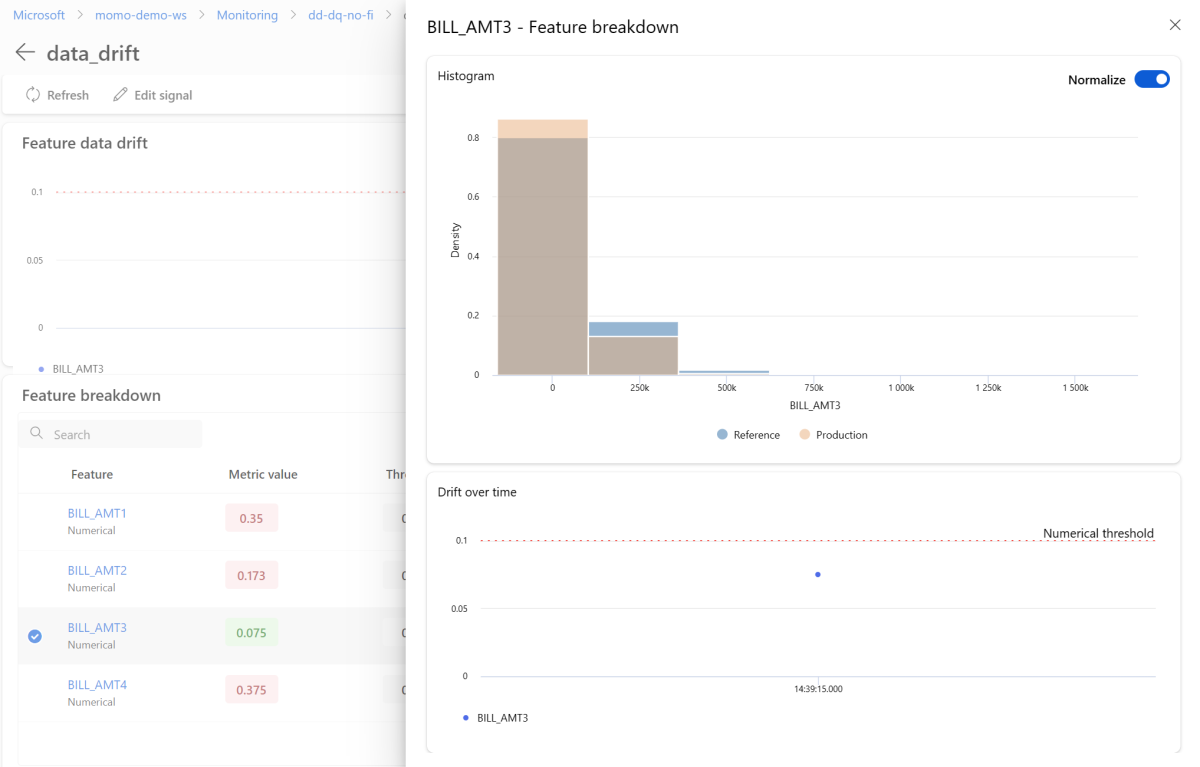

To view an individual feature in detail, select the name of the feature to view the production distribution compared to the reference distribution. This view also allows you to track drift over time for that specific feature.

Return to the monitoring dashboard and select data_quality to view the data quality signal page. On this page, you can see the null value rates, out-of-bounds rates, and data type error rates for each feature you're monitoring.

Model monitoring is a continuous process. With Azure Machine Learning model monitoring, you can configure multiple monitoring signals to obtain a broad view into the performance of your models in production.

Integrate Azure Machine Learning model monitoring with Azure Event Grid

You can use events generated by Azure Machine Learning model monitoring to set up event-driven applications, processes, or CI/CD workflows with Azure Event Grid. You can consume events through various event handlers, such as Azure Event Hubs, Azure functions, and logic apps. Based on the drift detected by your monitors, you can take action programmatically, such as by setting up a machine learning pipeline to re-train a model and re-deploy it.

To get started with integrating Azure Machine Learning model monitoring with Event Grid:

Follow the steps in see Set up in Azure portal. Give your Event Subscription a name, such as MonitoringEvent, and select only the Run status changed box under Event Types.

Warning

Be sure to select Run status changed for the event type. Don't select Dataset drift detected, as it applies to data drift v1, rather than Azure Machine Learning model monitoring.

Follow the steps in Filter & subscribe to events to set up event filtering for your scenario. Navigate to the Filters tab and add the following Key, Operator, and Value under Advanced Filters:

- Key:

data.RunTags.azureml_modelmonitor_threshold_breached - Value: has failed due to one or more features violating metric thresholds

- Operator: String contains

With this filter, events are generated when the run status changes (from Completed to Failed, or from Failed to Completed) for any monitor within your Azure Machine Learning workspace.

- Key:

To filter at the monitoring level, use the following Key, Operator, and Value under Advanced Filters:

- Key:

data.RunTags.azureml_modelmonitor_threshold_breached - Value:

your_monitor_name_signal_name - Operator: String contains

Ensure that

your_monitor_name_signal_nameis the name of a signal in the specific monitor you want to filter events for. For example,credit_card_fraud_monitor_data_drift. For this filter to work, this string must match the name of your monitoring signal. You should name your signal with both the monitor name and the signal name for this case.- Key:

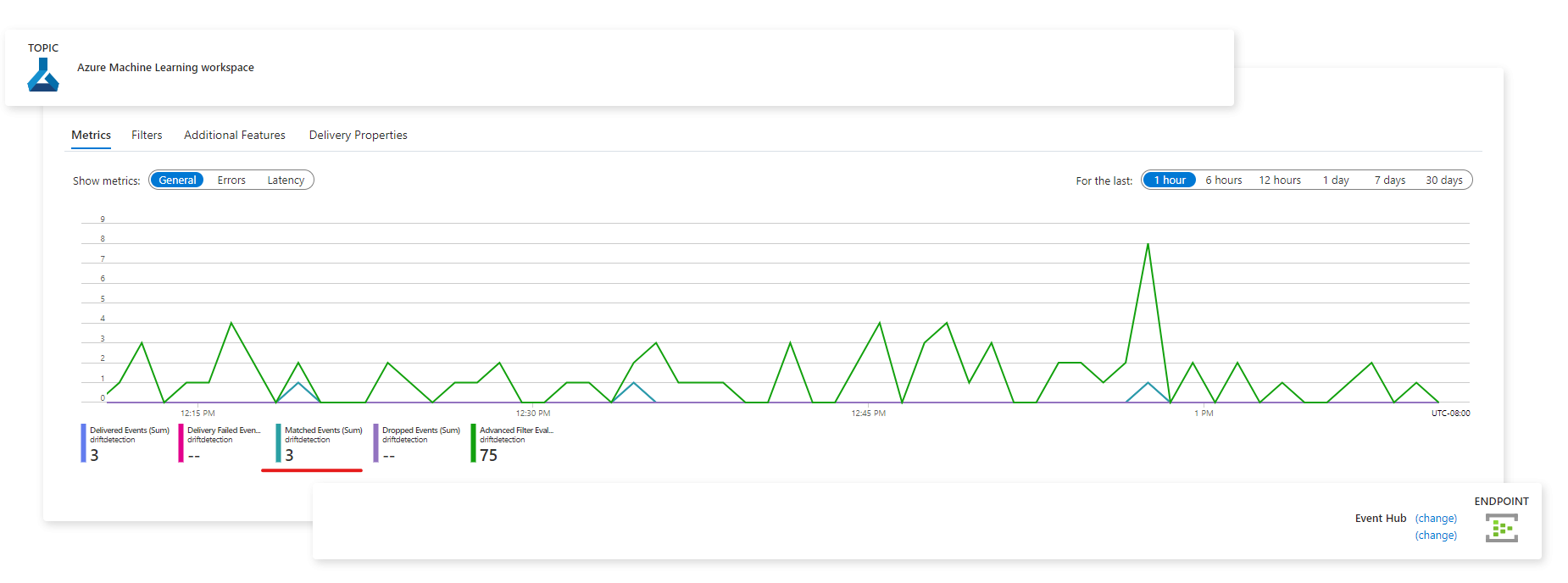

When you've completed your Event Subscription configuration, select the desired endpoint to serve as your event handler, such as Azure Event Hubs.

After events have been captured, you can view them from the endpoint page:

You can also view events in the Azure Monitor Metrics tab: