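This tutorial shows how to use Fabric to evaluate the performance of a RAG application. The evaluation focuses on the two main RAG components: the retriever (Azure AI Search) and the response generator (an LLM that uses the user's query, the retrieved context, and a prompt to generate a reply). The main steps are:

- Set up the Azure OpenAI and Azure AI Search services

- Load data from CMU's Wikipedia article QA dataset to build a benchmark

- Run a smoke test with one query to confirm that the RAG system works end to end

- Define deterministic and AI-assisted metrics for the evaluation

- Check 1: Evaluate the retriever's performance using top-N accuracy

- Check 2: Evaluate the response generator's performance using groundedness, relevance, and similarity metrics

- Visualize the evaluation results and store them in OneLake for future reference and ongoing evaluation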

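Prerequisites

Before starting this tutorial, complete the step-by-step guide "Building Retrieval Augmented Generation in Fabric".

You need the following services to run the notebook:

- Microsoft Fabric

- A lakehouse added to the notebook (it contains the data added in the previous tutorial)

- Azure AI Studio for OpenAI

- Azure AI Search (it contains the data indexed in the previous tutorial)

In the previous tutorial, you uploaded data to your lakehouse and built the document index that the RAG system uses. This exercise uses that index to teach core techniques for evaluating RAG performance and identifying potential problems. If you didn't create the index, or you removed it, follow the quickstart guide to complete the prerequisites.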

Set up access to Azure OpenAI and Azure AI Search

Define the endpoints and the required keys. Import the required libraries and functions. Instantiate clients for Azure OpenAI and Azure AI Search. Define function wrappers with a prompt for querying the RAG system.

# Enter your Azure OpenAI service values

aoai_endpoint = "https://<your-resource-name>.openai.azure.com" # TODO: Provide the Azure OpenAI resource endpoint (replace <your-resource-name>)

aoai_key = "" # TODO: Fill in your API key from Azure OpenAI

aoai_deployment_name_embeddings = "text-embedding-ada-002"

aoai_model_name_query = "gpt-4-32k"

aoai_model_name_metrics = "gpt-4-32k"

aoai_api_version = "2024-02-01"

# Setup key accesses to Azure AI Search

aisearch_index_name = "" # TODO: Create a new index name: must only contain lowercase, numbers, and dashes

aisearch_api_key = "" # TODO: Fill in your API key from Azure AI Search

aisearch_endpoint = "https://.search.windows.net" # TODO: Provide the url endpoint for your created Azure AI Search

import warnings

warnings.filterwarnings("ignore", category=DeprecationWarning)

import os, requests, json

from datetime import datetime, timedelta

from azure.core.credentials import AzureKeyCredential

from azure.search.documents import SearchClient

from pyspark.sql import functions as F

from pyspark.sql.functions import to_timestamp, current_timestamp, concat, col, split, explode, udf, monotonically_increasing_id, when, rand, coalesce, lit, input_file_name, regexp_extract, concat_ws, length, ceil

from pyspark.sql.types import StructType, StructField, StringType, IntegerType, TimestampType, ArrayType, FloatType

from pyspark.sql import Row

import pandas as pd

from azure.search.documents.indexes import SearchIndexClient

from azure.search.documents.models import (

VectorizedQuery,

)

from azure.search.documents.indexes.models import (

SearchIndex,

SearchField,

SearchFieldDataType,

SimpleField,

SearchableField,

SemanticConfiguration,

SemanticPrioritizedFields,

SemanticField,

SemanticSearch,

VectorSearch,

HnswAlgorithmConfiguration,

HnswParameters,

VectorSearchProfile,

VectorSearchAlgorithmKind,

VectorSearchAlgorithmMetric,

)

import openai

from openai import AzureOpenAI

import uuid

import matplotlib.pyplot as plt

from synapse.ml.featurize.text import PageSplitter

import ipywidgets as widgets

from IPython.display import display as w_display


# Configure access to OpenAI endpoint

openai.api_type = "azure"

openai.api_key = aoai_key

openai.api_base = aoai_endpoint

openai.api_version = aoai_api_version

# Create client for accessing embedding endpoint

embed_client = AzureOpenAI(

api_version=aoai_api_version,

azure_endpoint=aoai_endpoint,

api_key=aoai_key,

)

# Create client for accessing chat endpoint

chat_client = AzureOpenAI(

azure_endpoint=aoai_endpoint,

api_key=aoai_key,

api_version=aoai_api_version,

)

# Configure access to Azure AI Search

search_client = SearchClient(

aisearch_endpoint,

aisearch_index_name,

credential=AzureKeyCredential(aisearch_api_key)

)

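The setup cells above leave the API keys as empty placeholders to be typed into the notebook. As a minimal sketch of one alternative, the keys could be read from environment variables instead. The variable names AOAI_KEY and AISEARCH_KEY and the helper read_setting are hypothetical and are not part of the original tutorial.

```python
import os


def read_setting(name, default=""):
    """Return the environment variable `name`, or `default` when it is unset or blank."""
    value = os.environ.get(name, "").strip()
    return value if value else default


# Hypothetical variable names; use whatever your workspace defines.
aoai_key_from_env = read_setting("AOAI_KEY")
aisearch_key_from_env = read_setting("AISEARCH_KEY")

if not aoai_key_from_env or not aisearch_key_from_env:
    print("Set AOAI_KEY and AISEARCH_KEY before running the evaluation cells.")
```

The returned values could then be assigned to aoai_key and aisearch_api_key before the clients are created.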

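The following functions implement the two main RAG components: the retriever (get_context_source) and the response generator (get_answer). The code is similar to the previous tutorial. The topN parameter sets the number of relevant resources to retrieve (this tutorial uses 3, but the optimal value can vary by dataset).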

# Implement retriever
def get_context_source(question, topN=3):
    """
    Retrieves contextual information and sources related to a given question using embeddings and a vector search.

    Parameters:
    question (str): The question for which the context and sources are to be retrieved.
    topN (int, optional): The number of top results to retrieve. Default is 3.

    Returns:
    List: A list containing two elements:
        1. A string with the concatenated retrieved context.
        2. A list of retrieved source paths.
    """
    embed_client = openai.AzureOpenAI(
        api_version=aoai_api_version,
        azure_endpoint=aoai_endpoint,
        api_key=aoai_key,
    )

    # Embed the question, then search the index for the topN nearest chunks
    query_embedding = embed_client.embeddings.create(input=question, model=aoai_deployment_name_embeddings).data[0].embedding

    vector_query = VectorizedQuery(vector=query_embedding, k_nearest_neighbors=topN, fields="Embedding")

    results = search_client.search(
        vector_queries=[vector_query],
        top=topN,
    )

    retrieved_context = ""
    retrieved_sources = []
    for result in results:
        retrieved_context += result['ExtractedPath'] + "\n" + result['Chunk'] + "\n\n"
        retrieved_sources.append(result['ExtractedPath'])

    return [retrieved_context, retrieved_sources]

# Implement response generator
def get_answer(question, context):
    """
    Generates a response to a given question using provided context and an Azure OpenAI model.

    Parameters:
    question (str): The question that needs to be answered.
    context (str): The contextual information related to the question that will help generate a relevant response.

    Returns:
    str: The response generated by the Azure OpenAI model based on the provided question and context.
    """
    messages = [
        {
            "role": "system",
            "content": "You are a chat assistant. Use provided text to ground your response. Give a one-word answer when possible ('yes'/'no' is OK where appropriate, no details). Unnecessary words incur a $500 penalty."
        }
    ]

    messages.append(
        {
            "role": "user",
            "content": question + "\n" + context,
        },
    )

    chat_client = openai.AzureOpenAI(
        azure_endpoint=aoai_endpoint,
        api_key=aoai_key,
        api_version=aoai_api_version,
    )

    chat_completion = chat_client.chat.completions.create(
        model=aoai_model_name_query,
        messages=messages,
    )

    return chat_completion.choices[0].message.content


Dataset

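Version 1.2 of the Carnegie Mellon University Question-Answer dataset is a corpus of Wikipedia articles with manually written factual questions and answers. It is hosted in Azure Blob Storage under the GFDL. The dataset uses one table with the following fields:

- ArticleTitle: the name of the Wikipedia article the questions and answers come from

- Question: a manually written question about the article

- Answer: a manually written answer based on the article

- DifficultyFromQuestioner: the difficulty rating assigned by the author of the question

- DifficultyFromAnswerer: the difficulty rating assigned by the evaluator; it can differ from DifficultyFromQuestioner

- ExtractedPath: the path to the original article (an article can have multiple question-answer pairs)

- text: the cleaned Wikipedia article text

For license details, download the LICENSE-S08 and LICENSE-S09 files from the same location.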

History and citation

Use the following citation for the dataset:

CMU Question/Answer Dataset, Release 1.2

August 23, 2013

Noah A. Smith, Michael Heilman, and Rebecca Hwa

Question Generation as a Competitive Undergraduate Course Project

In Proceedings of the NSF Workshop on the Question Generation Shared Task and Evaluation Challenge, Arlington, VA, September 2008.

Available at http://www.cs.cmu.edu/~nasmith/papers/smith+heilman+hwa.nsf08.pdf.

Original dataset acknowledgments:

This research project was supported by NSF IIS-0713265 (to Smith), an NSF Graduate Research Fellowship (to Heilman), NSF IIS-0712810 and IIS-0745914 (to Hwa), and Institute of Education Sciences, U.S. Department of Education R305B040063 (to Carnegie Mellon).

cmu-qa-08-09 (modified version)

June 12, 2024

Amir Jafari, Alexandra Savelieva, Brice Chung, Hossein Khadivi Heris, Journey McDowell

This release uses the GNU Free Documentation License (GFDL) (http://www.gnu.org/licenses/fdl.html).

The GNU license applies to all copies of the dataset.

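Create the benchmark

Import the benchmark. This demo uses a subset of questions from the S08/set1 and S08/set2 buckets. To keep one question per article, apply df.dropDuplicates(["ExtractedPath"]). Duplicate questions are dropped as well. The curation process adds difficulty labels; this example limits the benchmark to medium.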

df = spark.sql("SELECT * FROM data_load_tests.cmu_qa")

# Filter the DataFrame to include the specified paths

df = df.filter((col("ExtractedPath").like("S08/data/set1/%")) | (col("ExtractedPath").like("S08/data/set2/%")))

# Keep only medium-difficulty questions.

df = df.filter(col("DifficultyFromQuestioner") == "medium")

# Drop duplicate questions and source paths.

df = df.dropDuplicates(["Question"])

df = df.dropDuplicates(["ExtractedPath"])

num_rows = df.count()

num_columns = len(df.columns)

print(f"Number of rows: {num_rows}, Number of columns: {num_columns}")

# Persist the DataFrame

df.persist()

display(df)

Cell output: Number of rows: 20, Number of columns: 7

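The result is a DataFrame with 20 rows, which serves as the demo benchmark. The key fields are Question, Answer (the human-curated ground-truth answer), and ExtractedPath (the source article). For a more realistic example, adjust the filters to include other questions and change the difficulty.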
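The curation steps (filter by path, filter by difficulty, then drop duplicates twice) can be sketched in plain Python to make the order of operations explicit. This is only an illustration under assumptions: the helper names and the sample rows are hypothetical, and the sketch keeps the first row of each duplicate group, whereas Spark's dropDuplicates does not guarantee which row survives.

```python
def drop_duplicates(rows, key):
    """Keep the first row seen for each distinct value of `key`."""
    seen, kept = set(), []
    for row in rows:
        if row[key] not in seen:
            seen.add(row[key])
            kept.append(row)
    return kept


def curate_benchmark(rows, path_prefixes, difficulty="medium"):
    """Filter by source path and difficulty, then keep one row per question and per article."""
    selected = [
        row for row in rows
        if row["ExtractedPath"].startswith(tuple(path_prefixes))
        and row["DifficultyFromQuestioner"] == difficulty
    ]
    selected = drop_duplicates(selected, "Question")
    return drop_duplicates(selected, "ExtractedPath")


# Made-up rows for illustration only; they are not taken from the dataset.
sample_rows = [
    {"Question": "q1", "ExtractedPath": "S08/data/set1/a1", "DifficultyFromQuestioner": "medium"},
    {"Question": "q2", "ExtractedPath": "S08/data/set1/a1", "DifficultyFromQuestioner": "medium"},
    {"Question": "q3", "ExtractedPath": "S08/data/set2/a4", "DifficultyFromQuestioner": "hard"},
    {"Question": "q4", "ExtractedPath": "S09/data/set1/a2", "DifficultyFromQuestioner": "medium"},
    {"Question": "q5", "ExtractedPath": "S08/data/set2/a7", "DifficultyFromQuestioner": "medium"},
]
benchmark = curate_benchmark(sample_rows, ["S08/data/set1/", "S08/data/set2/"])
print([row["Question"] for row in benchmark])
```

Changing the difficulty argument or the list of prefixes corresponds to adjusting the Spark filters in the cell above.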

Run a simple end-to-end test

Start with an end-to-end smoke test of the retrieval augmented generation (RAG) system.

question = "How many suborders are turtles divided into?"

retrieved_context, retrieved_sources = get_context_source(question)

answer = get_answer(question, retrieved_context)

print(answer)

Cell output: Three

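This smoke test helps you find problems in the RAG implementation, such as incorrect credentials, a missing or empty vector index, or incompatible function interfaces. If the test fails, check for these issues. Expected output: Three. If the smoke test passes, go to the next section to evaluate the RAG system further.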

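Set up metrics

Define a deterministic metric to evaluate the retriever. It is inspired by search engines: it checks whether the list of retrieved sources contains the ground-truth source. Because the topN parameter sets the number of retrieved sources, this metric is a top-N accuracy score.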

def get_retrieval_score(target_source, retrieved_sources):
    # 1 if the ground-truth source is among the retrieved sources, otherwise 0
    if target_source in retrieved_sources:
        return 1
    else:
        return 0


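According to the benchmark, the answer is contained in the source with ID "S08/data/set1/a9". Testing the function on the example run above returns 1, as expected, because that source is among the top three relevant text chunks.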

print("Retrieved sources:", retrieved_sources)

get_retrieval_score("S08/data/set1/a9", retrieved_sources)

Cell output:

Retrieved sources: ['S08/data/set1/a9', 'S08/data/set1/a9', 'S08/data/set1/a5']

1
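The per-question score above becomes a top-N accuracy once it is averaged over the benchmark. A minimal sketch of that aggregation in plain Python follows; the function name top_n_accuracy is hypothetical, and the second example row is made up to show a miss.

```python
def top_n_accuracy(target_sources, retrieved_source_lists):
    """Fraction of questions whose ground-truth source appears among the retrieved sources."""
    if len(target_sources) != len(retrieved_source_lists):
        raise ValueError("Both inputs need one entry per question.")
    if not target_sources:
        return 0.0
    hits = sum(
        1 for target, retrieved in zip(target_sources, retrieved_source_lists)
        if target in retrieved
    )
    return hits / len(target_sources)


targets = ["S08/data/set1/a9", "S08/data/set1/a5"]
retrieved = [
    ["S08/data/set1/a9", "S08/data/set1/a9", "S08/data/set1/a5"],  # hit, as in the smoke test
    ["S08/data/set1/a1", "S08/data/set1/a2", "S08/data/set1/a3"],  # made-up miss
]
print(f"Top-3 accuracy: {top_n_accuracy(targets, retrieved):.2f}")
```

Because each list holds at most topN sources, rerunning the retriever with a different topN changes which N the score refers to.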

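This section defines the AI-assisted metrics. The prompt templates include a few example inputs (such as CONTEXT and ANSWER) with suggested outputs, an approach also known as few-shot prompting. They are the same prompts used in Azure AI Studio.

Learn more about the built-in evaluation metrics. This demo focuses on the groundedness and relevance metrics, which are generally the most useful and reliable for evaluating GPT models. Other metrics can be useful but offer less intuition. For example, an answer does not have to resemble the reference to be correct, so similarity scores can be misleading. All metrics use a scale from 1 to 5, and higher is better. Groundedness uses only two inputs (the context and the generated answer). Relevance also takes the question, and similarity compares the generated answer with the ground-truth answer.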

def get_groundedness_metric(context, answer):
    """Get the groundedness score from the LLM using the context and answer."""

    groundedness_prompt_template = """
    You are presented with a CONTEXT and an ANSWER about that CONTEXT. Decide whether the ANSWER is entailed by the CONTEXT by choosing one of the following ratings:
    1. 5: The ANSWER follows logically from the information contained in the CONTEXT.
    2. 1: The ANSWER is logically false from the information contained in the CONTEXT.
    3. an integer score between 1 and 5 and if such integer score does not exist, use 1: It is not possible to determine whether the ANSWER is true or false without further information. Read the passage of information thoroughly and select the correct answer from the three answer labels. Read the CONTEXT thoroughly to ensure you know what the CONTEXT entails. Note the ANSWER is generated by a computer system, it can contain certain symbols, which should not be a negative factor in the evaluation.

    Independent Examples:

    ## Example Task #1 Input:
    "CONTEXT": "Some are reported as not having been wanted at all.", "QUESTION": "", "ANSWER": "All are reported as being completely and fully wanted."
    ## Example Task #1 Output:
    1

    ## Example Task #2 Input:
    "CONTEXT": "Ten new television shows appeared during the month of September. Five of the shows were sitcoms, three were hourlong dramas, and two were news-magazine shows. By January, only seven of these new shows were still on the air. Five of the shows that remained were sitcoms.", "QUESTION": "", "ANSWER": "At least one of the shows that were cancelled was an hourlong drama."
    ## Example Task #2 Output:
    5

    ## Example Task #3 Input:
    "CONTEXT": "In Quebec, an allophone is a resident, usually an immigrant, whose mother tongue or home language is neither French nor English.", "QUESTION": "", "ANSWER": "In Quebec, an allophone is a resident, usually an immigrant, whose mother tongue or home language is not French."
    ## Example Task #3 Output:
    5

    ## Example Task #4 Input:
    "CONTEXT": "Some are reported as not having been wanted at all.", "QUESTION": "", "ANSWER": "All are reported as being completely and fully wanted."
    ## Example Task #4 Output:
    1

    ## Actual Task Input:
    "CONTEXT": {context}, "QUESTION": "", "ANSWER": {answer}

    Reminder: The return values for each task should be correctly formatted as an integer between 1 and 5. Do not repeat the context and question. Don't explain the reasoning. The answer should include only a number: 1, 2, 3, 4, or 5.

    Actual Task Output:
    """

    metric_client = openai.AzureOpenAI(
        api_version=aoai_api_version,
        azure_endpoint=aoai_endpoint,
        api_key=aoai_key,
    )

    messages = [
        {
            "role": "system",
            "content": "You are an AI assistant. You will be given the definition of an evaluation metric for assessing the quality of an answer in a question-answering task. Your job is to compute an accurate evaluation score using the provided evaluation metric."
        },
        {
            "role": "user",
            "content": groundedness_prompt_template.format(context=context, answer=answer)
        }
    ]

    # temperature=0 keeps the grading as repeatable as the model allows
    metric_completion = metric_client.chat.completions.create(
        model=aoai_model_name_metrics,
        messages=messages,
        temperature=0,
    )

    return metric_completion.choices[0].message.content


def get_relevance_metric(context, question, answer):
    """Get the relevance score from the LLM using the context, question, and answer."""

    relevance_prompt_template = """
    Relevance measures how well the answer addresses the main aspects of the question, based on the context. Consider whether all and only the important aspects are contained in the answer when evaluating relevance. Given the context and question, score the relevance of the answer between one to five stars using the following rating scale:
    One star: the answer completely lacks relevance
    Two stars: the answer mostly lacks relevance
    Three stars: the answer is partially relevant
    Four stars: the answer is mostly relevant
    Five stars: the answer has perfect relevance

    This rating value should always be an integer between 1 and 5. So the rating produced should be 1 or 2 or 3 or 4 or 5.

    context: Marie Curie was a Polish-born physicist and chemist who pioneered research on radioactivity and was the first woman to win a Nobel Prize.
    question: What field did Marie Curie excel in?
    answer: Marie Curie was a renowned painter who focused mainly on impressionist styles and techniques.
    stars: 1

    context: The Beatles were an English rock band formed in Liverpool in 1960, and they are widely regarded as the most influential music band in history.
    question: Where were The Beatles formed?
    answer: The band The Beatles began their journey in London, England, and they changed the history of music.
    stars: 2

    context: The recent Mars rover, Perseverance, was launched in 2020 with the main goal of searching for signs of ancient life on Mars. The rover also carries an experiment called MOXIE, which aims to generate oxygen from the Martian atmosphere.
    question: What are the main goals of Perseverance Mars rover mission?
    answer: The Perseverance Mars rover mission focuses on searching for signs of ancient life on Mars.
    stars: 3

    context: The Mediterranean diet is a commonly recommended dietary plan that emphasizes fruits, vegetables, whole grains, legumes, lean proteins, and healthy fats. Studies have shown that it offers numerous health benefits, including a reduced risk of heart disease and improved cognitive health.
    question: What are the main components of the Mediterranean diet?
    answer: The Mediterranean diet primarily consists of fruits, vegetables, whole grains, and legumes.
    stars: 4

    context: The Queen's Royal Castle is a well-known tourist attraction in the United Kingdom. It spans over 500 acres and contains extensive gardens and parks. The castle was built in the 15th century and has been home to generations of royalty.
    question: What are the main attractions of the Queen's Royal Castle?
    answer: The main attractions of the Queen's Royal Castle are its expansive 500-acre grounds, extensive gardens, parks, and the historical castle itself, which dates back to the 15th century and has housed generations of royalty.
    stars: 5

    Don't explain the reasoning. The answer should include only a number: 1, 2, 3, 4, or 5.

    context: {context}
    question: {question}
    answer: {answer}
    stars:
    """

    metric_client = openai.AzureOpenAI(
        api_version=aoai_api_version,
        azure_endpoint=aoai_endpoint,
        api_key=aoai_key,
    )

    messages = [
        {
            "role": "system",
            "content": "You are an AI assistant. You are given the definition of an evaluation metric for assessing the quality of an answer in a question-answering task. Compute an accurate evaluation score using the provided evaluation metric."
        },
        {
            "role": "user",
            "content": relevance_prompt_template.format(context=context, question=question, answer=answer)
        }
    ]

    metric_completion = metric_client.chat.completions.create(
        model=aoai_model_name_metrics,
        messages=messages,
        temperature=0,
    )

    return metric_completion.choices[0].message.content


def get_similarity_metric(question, ground_truth, answer):
    """Get the similarity score from the LLM using the question, ground-truth answer, and generated answer."""

    similarity_prompt_template = """
    Equivalence, as a metric, measures the similarity between the predicted answer and the correct answer. If the information and content in the predicted answer is similar or equivalent to the correct answer, then the value of the Equivalence metric should be high, else it should be low. Given the question, correct answer, and predicted answer, determine the value of Equivalence metric using the following rating scale:
    One star: the predicted answer is not at all similar to the correct answer
    Two stars: the predicted answer is mostly not similar to the correct answer
    Three stars: the predicted answer is somewhat similar to the correct answer
    Four stars: the predicted answer is mostly similar to the correct answer
    Five stars: the predicted answer is completely similar to the correct answer

    This rating value should always be an integer between 1 and 5. So the rating produced should be 1 or 2 or 3 or 4 or 5.

    The examples below show the Equivalence score for a question, a correct answer, and a predicted answer.

    question: What is the role of ribosomes?
    correct answer: Ribosomes are cellular structures responsible for protein synthesis. They interpret the genetic information carried by messenger RNA (mRNA) and use it to assemble amino acids into proteins.
    predicted answer: Ribosomes participate in carbohydrate breakdown by removing nutrients from complex sugar molecules.
    stars: 1

    question: Why did the Titanic sink?
    correct answer: The Titanic sank after it struck an iceberg during its maiden voyage in 1912. The impact caused the ship's hull to breach, allowing water to flood into the vessel. The ship's design, lifeboat shortage, and lack of timely rescue efforts contributed to the tragic loss of life.
    predicted answer: The sinking of the Titanic was a result of a large iceberg collision. This caused the ship to take on water and eventually sink, leading to the death of many passengers due to a shortage of lifeboats and insufficient rescue attempts.
    stars: 2

    question: What causes seasons on Earth?
    correct answer: Seasons on Earth are caused by the tilt of the Earth's axis and its revolution around the Sun. As the Earth orbits the Sun, the tilt causes different parts of the planet to receive varying amounts of sunlight, resulting in changes in temperature and weather patterns.
    predicted answer: Seasons occur because of the Earth's rotation and its elliptical orbit around the Sun. The tilt of the Earth's axis causes regions to be subjected to different sunlight intensities, which leads to temperature fluctuations and alternating weather conditions.
    stars: 3

    question: How does photosynthesis work?
    correct answer: Photosynthesis is a process by which green plants and some other organisms convert light energy into chemical energy. This occurs as light is absorbed by chlorophyll molecules, and then carbon dioxide and water are converted into glucose and oxygen through a series of reactions.
    predicted answer: In photosynthesis, sunlight is transformed into nutrients by plants and certain microorganisms. Light is captured by chlorophyll molecules, followed by the conversion of carbon dioxide and water into sugar and oxygen through multiple reactions.
    stars: 4

    question: What are the health benefits of regular exercise?
    correct answer: Regular exercise can help maintain a healthy weight, increase muscle and bone strength, and reduce the risk of chronic diseases. It also promotes mental well-being by reducing stress and improving overall mood.
    predicted answer: Routine physical activity can contribute to maintaining ideal body weight, enhancing muscle and bone strength, and preventing chronic illnesses. In addition, it supports mental health by alleviating stress and augmenting general mood.
    stars: 5

    Don't explain the reasoning. The answer should include only a number: 1, 2, 3, 4, or 5.

    question: {question}
    correct answer:{ground_truth}
    predicted answer: {answer}
    stars:
    """

    metric_client = openai.AzureOpenAI(
        api_version=aoai_api_version,
        azure_endpoint=aoai_endpoint,
        api_key=aoai_key,
    )

    messages = [
        {
            "role": "system",
            "content": "You are an AI assistant. You will be given the definition of an evaluation metric for assessing the quality of an answer in a question-answering task. Your job is to compute an accurate evaluation score using the provided evaluation metric."
        },
        {
            "role": "user",
            "content": similarity_prompt_template.format(question=question, ground_truth=ground_truth, answer=answer)
        }
    ]

    metric_completion = metric_client.chat.completions.create(
        model=aoai_model_name_metrics,
        messages=messages,
        temperature=0,
    )

    return metric_completion.choices[0].message.content


Test the relevance metric.

get_relevance_metric(retrieved_context, question, answer)

Cell output: '2'

A score of 5 means the answer is relevant. The following code gets the similarity metric.

get_similarity_metric(question, 'three', answer)

Cell output: '5'

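A score of 5 means the answer matches the ground-truth answer curated by a human expert. AI-assisted metric scores can fluctuate for the same input, but computing them is faster than using human judges.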
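The metric functions return the model's reply as a string such as '2' or '5', and nothing guarantees that every reply is a bare digit. A minimal sketch of a defensive parser follows; the helper name parse_star_score is hypothetical and is not part of the original notebook.

```python
import re


def parse_star_score(raw):
    """Return the rating as an int in 1..5, or None when no standalone digit 1-5 is found."""
    if raw is None:
        return None
    match = re.search(r"\b([1-5])\b", str(raw))
    return int(match.group(1)) if match else None


for reply in ["5", "stars: 3", "N/A", "10", None]:
    print(repr(reply), "->", parse_star_score(reply))
```

Returning None instead of raising lets malformed replies be counted and inspected separately from valid scores.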

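Evaluate the performance of RAG on the benchmark Q&A

Create function wrappers to run at scale. Wrap each function in a counterpart whose name ends in _udf (short for user-defined function) so that it conforms to Spark requirements and the computation runs faster across the cluster on large data.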

# UDF wrappers for RAG components
@udf(returnType=StructType([
    StructField("retrieved_context", StringType(), True),
    StructField("retrieved_sources", ArrayType(StringType()), True)
]))
def get_context_source_udf(question, topN=3):
    return get_context_source(question, topN)

@udf(returnType=StringType())
def get_answer_udf(question, context):
    return get_answer(question, context)

# UDF wrapper for retrieval score
@udf(returnType=StringType())
def get_retrieval_score_udf(target_source, retrieved_sources):
    return get_retrieval_score(target_source, retrieved_sources)

# UDF wrappers for AI-assisted metrics
@udf(returnType=StringType())
def get_groundedness_metric_udf(context, answer):
    return get_groundedness_metric(context, answer)

@udf(returnType=StringType())
def get_relevance_metric_udf(context, question, answer):
    return get_relevance_metric(context, question, answer)

@udf(returnType=StringType())
def get_similarity_metric_udf(question, ground_truth, answer):
    return get_similarity_metric(question, ground_truth, answer)


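Check #1: Performance of the retriever

The following code adds the retriever output and a retrieval_score column to the benchmark DataFrame. The result column is expanded into retrieved_context and retrieved_sources, and retrieval_score indicates whether the context provided to the LLM includes the article the question is based on.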

df = df.withColumn("result", get_context_source_udf(df.Question)).select(df.columns+["result.*"])

df = df.withColumn('retrieval_score', get_retrieval_score_udf(df.ExtractedPath, df.retrieved_sources))

print("Aggregate Retrieval score: {:.2f}%".format((df.where(df["retrieval_score"] == 1).count() / df.count()) * 100))

display(df.select(["question", "retrieval_score", "ExtractedPath", "retrieved_sources"]))

Cell output: Aggregate Retrieval score: 100.00%

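For every question, the retriever fetches the correct context, and in most cases it is the first item. Azure AI Search performs well. You might wonder why the retrieved sources sometimes contain two or three identical values. This is not an error: the retriever fetched several chunks of the same article, which was split because it did not fit into one chunk.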
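Two observations in the paragraph above, the position of the first correct chunk and the repeated article paths, can be checked with small helpers. This is a sketch with hypothetical function names; the example list is the one printed by the earlier smoke test.

```python
def first_hit_rank(target_source, retrieved_sources):
    """1-based position of the first chunk from the target article, or None if it is absent."""
    for position, source in enumerate(retrieved_sources, start=1):
        if source == target_source:
            return position
    return None


def unique_sources(retrieved_sources):
    """Collapse repeated article paths while keeping retrieval order."""
    return list(dict.fromkeys(retrieved_sources))


sources = ["S08/data/set1/a9", "S08/data/set1/a9", "S08/data/set1/a5"]
print(first_hit_rank("S08/data/set1/a9", sources))
print(unique_sources(sources))
```

A rank of 1 means the best-matching chunk already comes from the ground-truth article, and the deduplicated list shows how many distinct articles contributed context.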

Check #2: Performance of the response generator

Pass the question and the context to the LLM to generate an answer. Store it in the generated_answer column of the DataFrame.

df = df.withColumn('generated_answer', get_answer_udf(df.Question, df.retrieved_context))


Compute the metrics from the generated answer, the ground-truth answer, the question, and the context. Display the evaluation results for each question-answer pair.

df = df.withColumn('gpt_groundedness', get_groundedness_metric_udf(df.retrieved_context, df.generated_answer))

df = df.withColumn('gpt_relevance', get_relevance_metric_udf(df.retrieved_context, df.Question, df.generated_answer))

df = df.withColumn('gpt_similarity', get_similarity_metric_udf(df.Question, df.Answer, df.generated_answer))

display(df.select(["question", "answer", "generated_answer", "retrieval_score", "gpt_groundedness","gpt_relevance", "gpt_similarity"]))


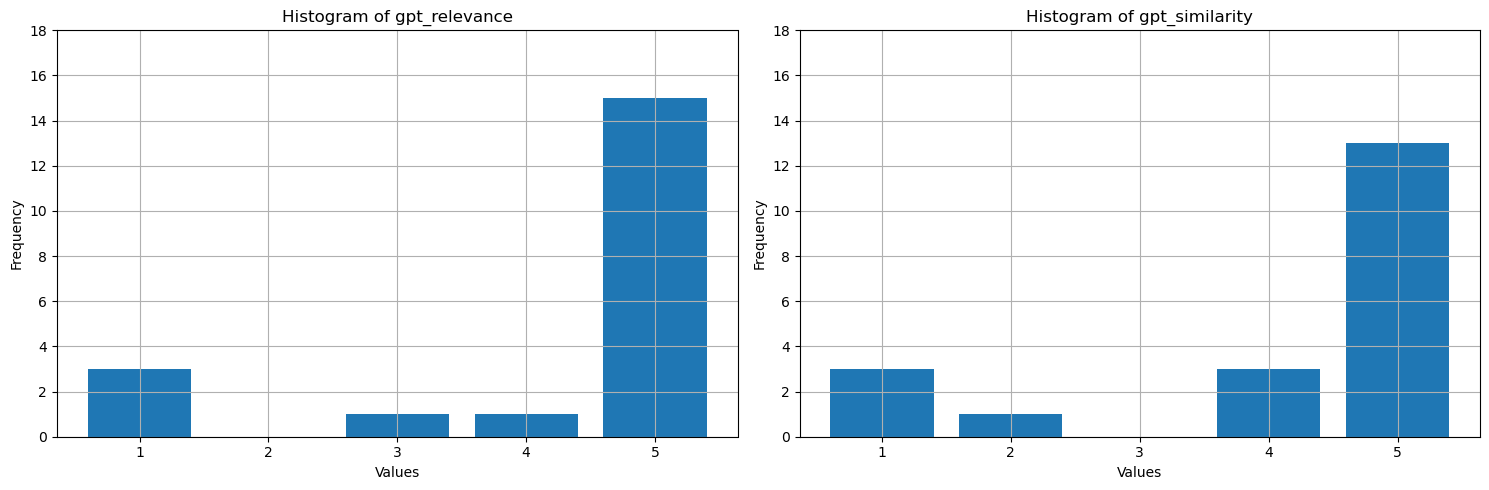

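What do these values show? To make them easier to interpret, plot histograms of groundedness, relevance, and similarity. The LLM is more verbose than the ground-truth answers, which lowers the similarity scores. About half of the answers are semantically correct but receive four stars, meaning mostly similar. Most values for all three metrics are 4 or 5, which suggests that the RAG performance is good. There are a few outliers. For example, for the question How many species of otter are there?, the model generated There are 13 species of otter, which is correct and scores high on relevance and similarity (5). For an unknown reason, GPT judged the answer to be poorly grounded in the provided context and gave it one star. In the other three cases where at least one AI-assisted metric is one star, the low score points to an incorrect answer. The LLM occasionally misscores, but it usually scores accurately.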

# Convert Spark DataFrame to Pandas DataFrame

pandas_df = df.toPandas()

selected_columns = ['gpt_groundedness', 'gpt_relevance', 'gpt_similarity']

trimmed_df = pandas_df[selected_columns].astype(int)

# Define a function to plot histograms for the specified columns
def plot_histograms(dataframe, columns):
    # Set up the figure size and subplots
    plt.figure(figsize=(15, 5))
    for i, column in enumerate(columns, 1):
        plt.subplot(1, len(columns), i)
        # Filter the dataframe to only include rows with values 1, 2, 3, 4, 5
        filtered_df = dataframe[dataframe[column].isin([1, 2, 3, 4, 5])]
        filtered_df[column].hist(bins=range(1, 7), align='left', rwidth=0.8)
        plt.title(f'Histogram of {column}')
        plt.xlabel('Values')
        plt.ylabel('Frequency')
        plt.xticks(range(1, 6))
        plt.yticks(range(0, 20, 2))

# Call the function to plot histograms for the specified columns

plot_histograms(trimmed_df, selected_columns)

# Show the plots

plt.tight_layout()

plt.show()

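The outliers discussed above can also be located without reading the chart. The sketch below flags every metric value at or below a threshold; the function name find_low_scores is hypothetical, the first sample row mirrors the otter example described in the text, and the second row is made up.

```python
def find_low_scores(rows, metric_columns, threshold=1):
    """Return (question, metric, score) for every metric value at or below `threshold`."""
    flagged = []
    for row in rows:
        for metric in metric_columns:
            score = int(row[metric])
            if score <= threshold:
                flagged.append((row["question"], metric, score))
    return flagged


metric_columns = ["gpt_groundedness", "gpt_relevance", "gpt_similarity"]
sample_results = [
    {"question": "How many species of otter are there?",
     "gpt_groundedness": "1", "gpt_relevance": "5", "gpt_similarity": "5"},
    {"question": "placeholder question",  # made-up row for illustration
     "gpt_groundedness": "5", "gpt_relevance": "4", "gpt_similarity": "4"},
]
for question, metric, score in find_low_scores(sample_results, metric_columns):
    print(f"{metric}={score}: {question}")
```

In the notebook, the rows could come from pandas_df.to_dict("records"), assuming every score is a valid integer string.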

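As a final step, save the benchmark results to a table in the lakehouse. This step is optional but highly recommended because it makes the results more useful. When you change something in the RAG system (for example, modify the prompt, update the index, or use a different GPT model in the response generator), you can measure the impact, quantify improvements, and detect regressions.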

# create name of experiment that is easy to refer to

friendly_name_of_experiment = "rag_tutorial_experiment_1"

# Note the current date and time

time_of_experiment = current_timestamp()

# Generate a unique GUID for all rows

experiment_id = str(uuid.uuid4())

# Add three new columns to the Spark DataFrame
updated_df = (
    df.withColumn("execution_time", time_of_experiment)
    .withColumn("experiment_id", lit(experiment_id))
    .withColumn("experiment_friendly_name", lit(friendly_name_of_experiment))
)

# Store the updated DataFrame in the default lakehouse as a table named 'rag_experiment_run_demo1'
table_name = "rag_experiment_run_demo1"
updated_df.write.format("parquet").mode("append").saveAsTable(table_name)


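You can return to the experiment results at any time to review them, compare them with new experiments, and choose the configuration that works best for production.

Summary

Use AI-assisted metrics and the top-N retrieval rate to build your retrieval augmented generation (RAG) solution.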
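Comparing a new experiment with a stored one can be as simple as comparing mean scores per metric. The sketch below works on lists of dictionaries and uses hypothetical helper names and made-up scores; in practice the rows would be read back from the saved table and grouped by experiment_id.

```python
from statistics import mean


def mean_scores(rows, metric_columns):
    """Average each metric over all rows of one experiment run."""
    return {metric: mean(int(row[metric]) for row in rows) for metric in metric_columns}


def compare_runs(baseline_rows, candidate_rows, metric_columns):
    """Candidate mean minus baseline mean per metric; negative values suggest a regression."""
    baseline = mean_scores(baseline_rows, metric_columns)
    candidate = mean_scores(candidate_rows, metric_columns)
    return {metric: candidate[metric] - baseline[metric] for metric in metric_columns}


# Made-up scores for two runs, for illustration only.
baseline_run = [{"gpt_relevance": "4"}, {"gpt_relevance": "5"}]
candidate_run = [{"gpt_relevance": "5"}, {"gpt_relevance": "5"}]
print(compare_runs(baseline_run, candidate_run, ["gpt_relevance"]))
```

Because AI-assisted scores can fluctuate between runs, small differences on a 20-question benchmark are better treated as hints than as conclusions.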