Creating AI models is more than training and validating a model. This article summarizes key requirements for production-ready models.

💡Model development checklist:

The following sections describe more elements of developing high-quality production models.

Much of the complexity in developing ML systems is in data preparation and data normalization for feature engineering workflows. Feature management enables accessing features by name, model version, lineage, etc., in a uniform way across environments. Using feature management provides consistency to help improve developer productivity. It also promotes discoverability and reusability of features across teams.
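To make "accessing features by name, version, and lineage" concrete, here is a minimal in-memory sketch of a feature registry. The names `FeatureRegistry` and `FeatureDefinition`, the lineage field, and the `trip_minutes` feature are hypothetical illustrations, not the API of any particular feature store. A real feature store would also persist definitions and serve feature values across environments.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass(frozen=True)
class FeatureDefinition:
    """A named, versioned feature: how to compute it and where it comes from."""
    name: str
    version: int
    transform: Callable[[dict], float]
    sources: Tuple[str, ...] = ()  # lineage: upstream datasets/columns


class FeatureRegistry:
    """Hypothetical in-memory registry resolving features by name and version."""

    def __init__(self) -> None:
        self._features: Dict[Tuple[str, int], FeatureDefinition] = {}

    def register(self, feature: FeatureDefinition) -> None:
        key = (feature.name, feature.version)
        if key in self._features:
            raise ValueError(f"{feature.name} v{feature.version} already registered")
        self._features[key] = feature

    def get(self, name: str, version: Optional[int] = None) -> FeatureDefinition:
        """Return a specific version, or the latest one if version is None."""
        versions = [v for (n, v) in self._features if n == name]
        if not versions:
            raise KeyError(name)
        return self._features[(name, max(versions) if version is None else version)]

    def compute(self, names: List[str], row: dict) -> Dict[str, float]:
        """Compute the latest version of each named feature for one record."""
        return {n: self.get(n).transform(row) for n in names}


# Usage: two versions of the same feature, resolved by name.
registry = FeatureRegistry()
registry.register(FeatureDefinition(
    "trip_minutes", 1, lambda r: r["duration_s"] / 60, ("trips.duration_s",)))
registry.register(FeatureDefinition(
    "trip_minutes", 2, lambda r: r["duration_s"] // 60, ("trips.duration_s",)))
print(registry.compute(["trip_minutes"], {"duration_s": 150}))  # {'trip_minutes': 2}
print(registry.get("trip_minutes", version=1).transform({"duration_s": 150}))  # 2.5
```

Because every environment computes features through the same registered definitions, training and scoring code cannot silently drift apart in how a feature is calculated.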

For more information, see Feature Management.

A machine learning pipeline is an automated process that generates an AI model. More generally, it can be any process that uses machine learning patterns and practices, or a part of a bigger ML process. It’s common to see data preprocessing pipelines, scoring pipelines for batch scenarios, and even pipelines that orchestrate training based on Cognitive Services. Therefore, an ML pipeline should be understood as a set of steps that run sequentially or in parallel, where each step is executed on a single node or on multiple nodes (to speed up some tasks). The pipeline itself defines the steps and configures the compute resources needed to execute them and to access the related datasets and data stores. To keep things simple, a pipeline is typically built with a well-defined set of technologies and/or services.
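As a rough illustration of "a set of steps that run sequentially or in parallel", the sketch below models a pipeline as a dependency graph. Every step whose inputs are ready runs concurrently. It uses the standard-library `graphlib.TopologicalSorter` (Python 3.9+), with a thread pool standing in for compute nodes. The step names are hypothetical. An orchestrator such as Azure ML, Kubeflow, or Databricks would replace the thread pool with real compute targets.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter  # Python 3.9+
from typing import Callable, Dict, List, Set


def run_pipeline(steps: Dict[str, Callable[[], None]],
                 depends_on: Dict[str, Set[str]],
                 max_workers: int = 4) -> List[List[str]]:
    """Run steps in dependency order; steps whose inputs are ready run in parallel.

    Returns the "waves" of steps that were started together.
    """
    graph = {name: depends_on.get(name, set()) for name in steps}
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # also raises CycleError if the graph has a cycle
    waves: List[List[str]] = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while sorter.is_active():
            ready = sorted(sorter.get_ready())
            waves.append(ready)
            # Submit the whole wave at once: these steps don't depend on each other.
            futures = {name: pool.submit(steps[name]) for name in ready}
            for name, future in futures.items():
                future.result()  # re-raises a failure from the step
                sorter.done(name)
    return waves


# Usage: one prep step, two independent feature steps, then training.
log: List[str] = []
steps = {n: (lambda n=n: log.append(n)) for n in ["prep", "features_a", "features_b", "train"]}
deps = {"features_a": {"prep"}, "features_b": {"prep"}, "train": {"features_a", "features_b"}}
print(run_pipeline(steps, deps))  # [['prep'], ['features_a', 'features_b'], ['train']]
```

Declaring dependencies instead of a fixed order lets the runner discover on its own which steps can execute side by side.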

If a pipeline is properly configured, regenerating a model is simple and reliable. If the same training dataset is used each time the pipeline runs, the quality of the model should be similar. The importance of a given model artifact is thus reduced, as it can be regenerated. (We are not yet discussing model deployment.)
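One way to see why a well-configured pipeline makes any single model artifact less precious: if the training step is deterministic given the dataset and a fixed random seed, re-running it yields the same artifact. The sketch below is a toy under that assumption. The `train` function is hypothetical and performs seeded stochastic gradient descent in pure Python; `fingerprint` hashes the resulting weights. Real frameworks, and GPU kernels in particular, may need extra settings to be fully deterministic.

```python
import hashlib
import json
import random
from typing import Dict, List, Tuple


def train(data: List[Tuple[float, float]], seed: int,
          epochs: int = 500, lr: float = 0.01) -> Dict[str, float]:
    """Fit y = w*x + b with seeded SGD; same data + same seed -> same weights."""
    rng = random.Random(seed)  # local RNG so nothing else can disturb the sequence
    w, b = rng.uniform(-1, 1), 0.0
    order = list(range(len(data)))
    for _ in range(epochs):
        rng.shuffle(order)  # shuffling is random, but reproducible
        for i in order:
            x, y = data[i]
            err = (w * x + b) - y
            w -= lr * err * x
            b -= lr * err
    return {"w": w, "b": b}


def fingerprint(model: Dict[str, float]) -> str:
    """Stable hash of the model artifact, e.g. to compare two pipeline runs."""
    return hashlib.sha256(json.dumps(model, sort_keys=True).encode()).hexdigest()


dataset = [(x / 10, 2 * (x / 10) + 1) for x in range(20)]
run_1 = train(dataset, seed=42)
run_2 = train(dataset, seed=42)
print(fingerprint(run_1) == fingerprint(run_2))  # True
```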

Datasets are important from many different perspectives, including traceability, data labeling, and data drift detection. While each of these topics is complex, we'll make some simplified assumptions for this article.

Consider the following example: we need to train a model to find bad pixels in raw video frames. In most real-world scenarios, raw data must be preprocessed before it can be used to train models. In this example, raw video files first need to be decomposed into raw video frames, and each frame is likely to need further preprocessing. Other processing includes selecting statistically relevant training data, extracting features, and so on. Only when all data curation and preparation steps are finished can model training begin.

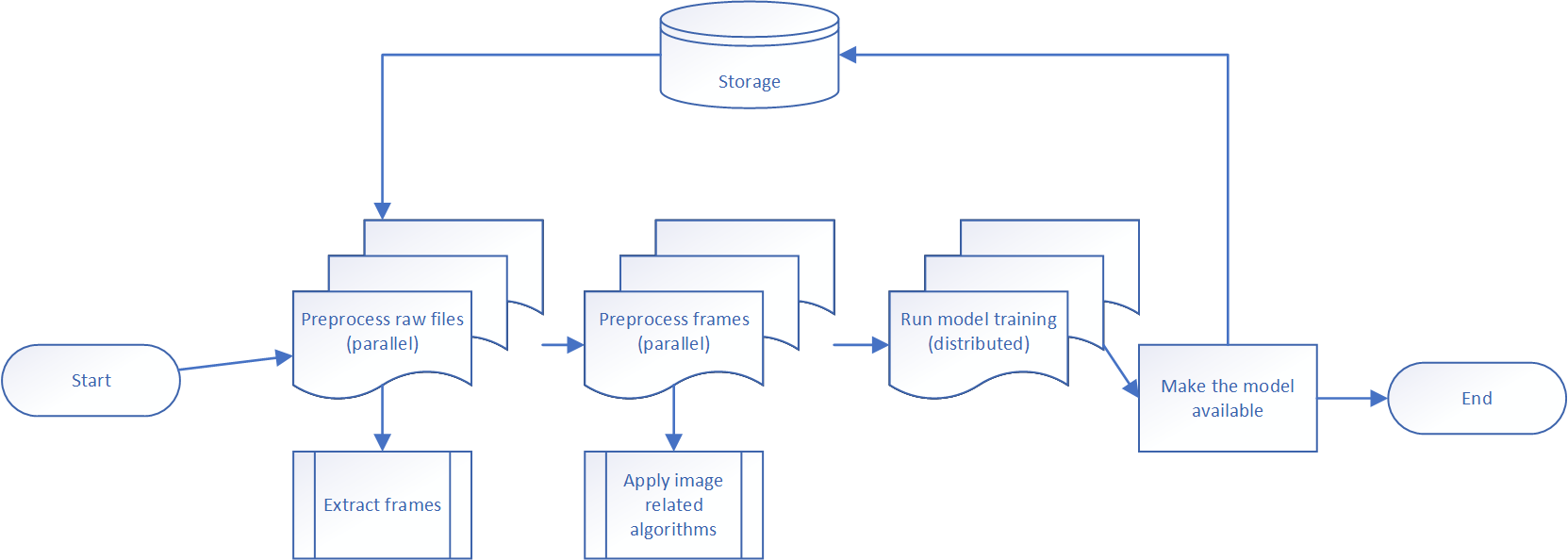

It is not uncommon for pre-processing to take more time than training itself. We can reduce this time by running pre-processing on several compute nodes at the same time. This requires updating the pipeline to split processing into multiple steps that execute in different environments, for example on multi-node or single-node clusters, or on GPU nodes versus CPU nodes. If we use a diagram to design our pipeline, it might look like this:

In this pipeline, there are four sequential steps:

This example illustrates why a robust ML pipeline is required rather than a simple build script or manually run process. Let’s discuss technologies.

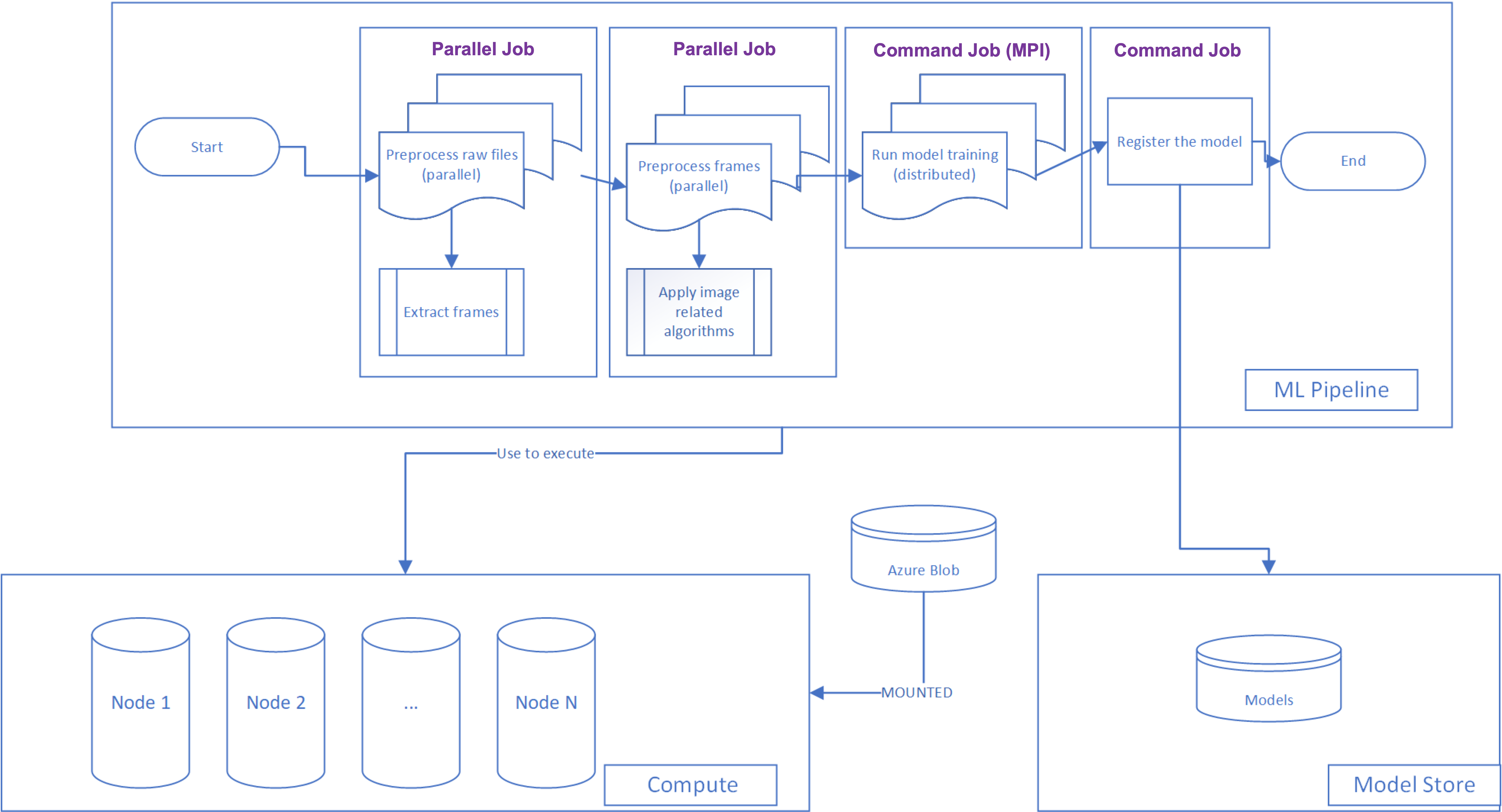
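Running pre-processing on several compute nodes at the same time boils down to three moves: shard the work, process each shard independently, and merge the results. In this hypothetical sketch, integer frame IDs stand in for decoded video frames, and `preprocess_frame` is a placeholder for real per-frame work. A thread pool stands in for separate compute nodes; on a real cluster each shard would be handled by its own node or worker process.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def make_shards(items: List[int], num_nodes: int) -> List[List[int]]:
    """Split the work into num_nodes roughly equal shards (round-robin)."""
    return [items[i::num_nodes] for i in range(num_nodes)]


def preprocess_frame(frame_id: int) -> Dict[str, int]:
    # Hypothetical placeholder for real per-frame work (decode, normalize, ...).
    return {"frame_id": frame_id, "pixel_sum": frame_id * 3}


def preprocess_shard(shard: List[int]) -> List[Dict[str, int]]:
    """What one node would run: process every frame in its shard."""
    return [preprocess_frame(f) for f in shard]


def preprocess_parallel(frame_ids: List[int], num_nodes: int) -> List[Dict[str, int]]:
    shards = make_shards(frame_ids, num_nodes)
    # Threads stand in for compute nodes; each shard is processed independently.
    with ThreadPoolExecutor(max_workers=num_nodes) as pool:
        shard_results = list(pool.map(preprocess_shard, shards))
    # Merge step: combine shard outputs in a deterministic order for training.
    merged = [row for rows in shard_results for row in rows]
    return sorted(merged, key=lambda row: row["frame_id"])


print(make_shards(list(range(7)), 3))  # [[0, 3, 6], [1, 4], [2, 5]]
```

The explicit merge step matters. Shards can finish in any order, so sorting gives the training step the same input on every run.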

There are many different technologies for implementing a pipeline. For example, it's possible to use Kubeflow as a framework for pipeline development and Kubernetes as a compute cluster. Databricks and MLflow can be used as well. Microsoft also offers a service to develop and manage machine learning pipelines (jobs): the Azure Machine Learning service. Let's take Azure ML as an example of a technology to implement our generalized pipeline.

Azure ML has several important components that are useful for pipeline implementations:

Let’s show how our generalized pipeline will look from a technologies perspective:

Data scientists generally prefer using Jupyter Notebooks for Exploratory Data Analysis and initial experiments.

Requiring the use of pipelines at this stage may be premature, as it could limit the data scientists' technology choices and overall creativity. Not every Jupyter notebook will create a need for a production pipeline, because data scientists need to test many different approaches and ideas before converging on one. Until that work is done, it is hard to design a pipeline.

While the initial experimentation can be done in a notebook, there are some good signals that indicate when it is time to start moving experiments from a notebook to an ML pipeline:

When these things begin happening, it’s time to wrap up experimentation and implement a pipeline with technology such as the Azure ML Pipeline SDK.

We recommend following a three-step approach:

train.py. At this stage, you will see how the pipeline works and be able to define all datasets/datastores and parameters as needed. From this stage on, you can run several experiments with the pipeline using different parameters.

Here is an example of an Azure ML pipeline definition: https://github.com/microsoft/mlops-basic-template-for-azureml-cli-v2/blob/main/mlops/nyc-taxi/pipeline.yml. This YAML file defines a series of steps (called "jobs" in the YAML) consisting of the Python scripts that execute a training pipeline. The example follows this sequence of steps (you may not need all of them):

- prep_job, which processes and cleans the raw data
- transform_job, which shapes the cleansed data to match the training job's expected inputs
- train_job, which trains the model
- predict_job, which uses a test set of data to run a set of predictions through the trained model
- score_job, which takes the predictions and scores them against the ground truth labels

Each of these steps references a Python script, which was likely once a set of cells in a notebook. Now, they consist of modular components that can be reused by multiple pipelines.
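To illustrate how notebook cells become modular steps, here is a plain-Python sketch of the same prep → transform → train → predict → score chain. Each function communicates only through its inputs and outputs. The column names, the tiny dataset, and the "average fare per distance" model are hypothetical stand-ins. In the template, each step is a separate Python script, and the YAML file wires the outputs of one job to the inputs of the next.

```python
from statistics import mean
from typing import Dict, List, Optional, Tuple

Row = Dict[str, Optional[float]]


def prep(raw: List[Row]) -> List[Row]:
    """Clean raw data: drop rows with missing or non-positive values."""
    return [r for r in raw if all(v is not None and v > 0 for v in r.values())]


def transform(clean: List[Row]) -> List[Tuple[float, float]]:
    """Shape data into (feature, label) pairs expected by the train step."""
    return [(r["distance"], r["fare"]) for r in clean]


def train(pairs: List[Tuple[float, float]]) -> float:
    """Deliberately tiny 'model': average fare per unit of distance."""
    return sum(y for _, y in pairs) / sum(x for x, _ in pairs)


def predict(rate: float, features: List[float]) -> List[float]:
    return [rate * x for x in features]


def score(predictions: List[float], labels: List[float]) -> float:
    """Mean absolute error against the ground-truth labels."""
    return mean(abs(p - y) for p, y in zip(predictions, labels))


raw_train = [{"distance": 2.0, "fare": 10.0}, {"distance": None, "fare": 7.0},
             {"distance": 4.0, "fare": 20.0}]
raw_test = [{"distance": 1.0, "fare": 6.0}, {"distance": 3.0, "fare": 14.0}]
rate = train(transform(prep(raw_train)))
test_pairs = transform(prep(raw_test))
mae = score(predict(rate, [x for x, _ in test_pairs]), [y for _, y in test_pairs])
print(rate, mae)  # 5.0 1.0
```

Because each function only depends on its arguments, any step can be tested on its own or reused in another pipeline.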

The full template is available in the MLOps Basic Template for Azure ML CLI v2 repo:

Here are other similar templates:

From a DevOps perspective, the development process can be divided into three different stages:

Here are a few pieces of advice:

.amlignore file.

The biggest challenge of the ML pipeline development process is how to modify and test the same pipeline from different branches. If we use fixed names for all experiments, models, and pipelines, it will be hard to tell your own artifacts apart from everyone else's when working in a large team. To make sure we can locate all experiments related to a feature branch, we recommend using the feature branch name to mark all pipelines, and also using it in experiment and artifact names. This makes it possible to differentiate pipelines from different branches and helps data scientists log various feature-branch runs under the same name.

These scripts can be used to publish pipelines and execute them from a local computer or from a DevOps system. Below is an example of how you can define Python variables based on environment variables and on the branch name:

```python
import os

# PIPELINE_TYPE distinguishes pipelines (e.g. training vs. scoring);
# BUILD_SOURCEBRANCHNAME is set by Azure Pipelines for the current build.
pipeline_type = os.environ.get('PIPELINE_TYPE')
source_branch = os.environ.get('BUILD_SOURCEBRANCHNAME')

model_name = f"{pipeline_type}_{os.environ.get('MODEL_BASE_NAME')}_{source_branch}"
pipeline_name = f"{pipeline_type}_{os.environ.get('PIPELINE_BASE_NAME')}_{source_branch}"
experiment_name = f"{pipeline_type}_{os.environ.get('EXPERIMENT_BASE_NAME')}_{source_branch}"
```

The code above uses both the branch name and the pipeline type; the pipeline type is useful if you have several pipelines. To get the branch name on a local computer, you can use the following code:

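```python
import subprocess

# Ask git for the name of the currently checked-out branch;
# strip() removes the trailing newline from the command output.
git_branch = subprocess.check_output(
    ["git", "rev-parse", "--abbrev-ref", "HEAD"],
    universal_newlines=True,
).strip()
```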
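The two snippets can be combined into a single helper that prefers the CI variable and falls back to git on a developer machine. This is a sketch: `resolve_branch_name`, `sanitize_name`, and `artifact_names` are hypothetical helpers. Azure Pipelines sets BUILD_SOURCEBRANCHNAME to the last segment of the branch path (for example `new-loss`), while git returns the full name (for example `feature/new-loss`). Replacing unsupported characters with `-` is therefore an assumption about what your naming scheme tolerates.

```python
import os
import re
import subprocess
from typing import Dict, Mapping, Optional


def sanitize_name(branch: str) -> str:
    # Assumption: artifact names should only contain letters, digits, '-' and '_'.
    return re.sub(r"[^A-Za-z0-9_-]", "-", branch)


def resolve_branch_name(env: Optional[Mapping[str, str]] = None) -> str:
    """Prefer the branch name provided by the CI system, else ask git."""
    env = os.environ if env is None else env
    branch = env.get("BUILD_SOURCEBRANCHNAME")  # set by Azure Pipelines
    if not branch:
        branch = subprocess.check_output(
            ["git", "rev-parse", "--abbrev-ref", "HEAD"], universal_newlines=True
        ).strip()
    return sanitize_name(branch)


def artifact_names(pipeline_type: str, base_names: Dict[str, str],
                   branch: str) -> Dict[str, str]:
    """Build branch-scoped names, e.g. for the model, pipeline, and experiment."""
    return {kind: f"{pipeline_type}_{base}_{branch}" for kind, base in base_names.items()}


print(sanitize_name("feature/new-loss"))  # feature-new-loss
branch = resolve_branch_name({"BUILD_SOURCEBRANCHNAME": "new-loss"})
print(artifact_names("train", {"model": "taxi"}, branch))  # {'model': 'train_taxi_new-loss'}
```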

Let’s start by introducing the two branch types that we use in this process:

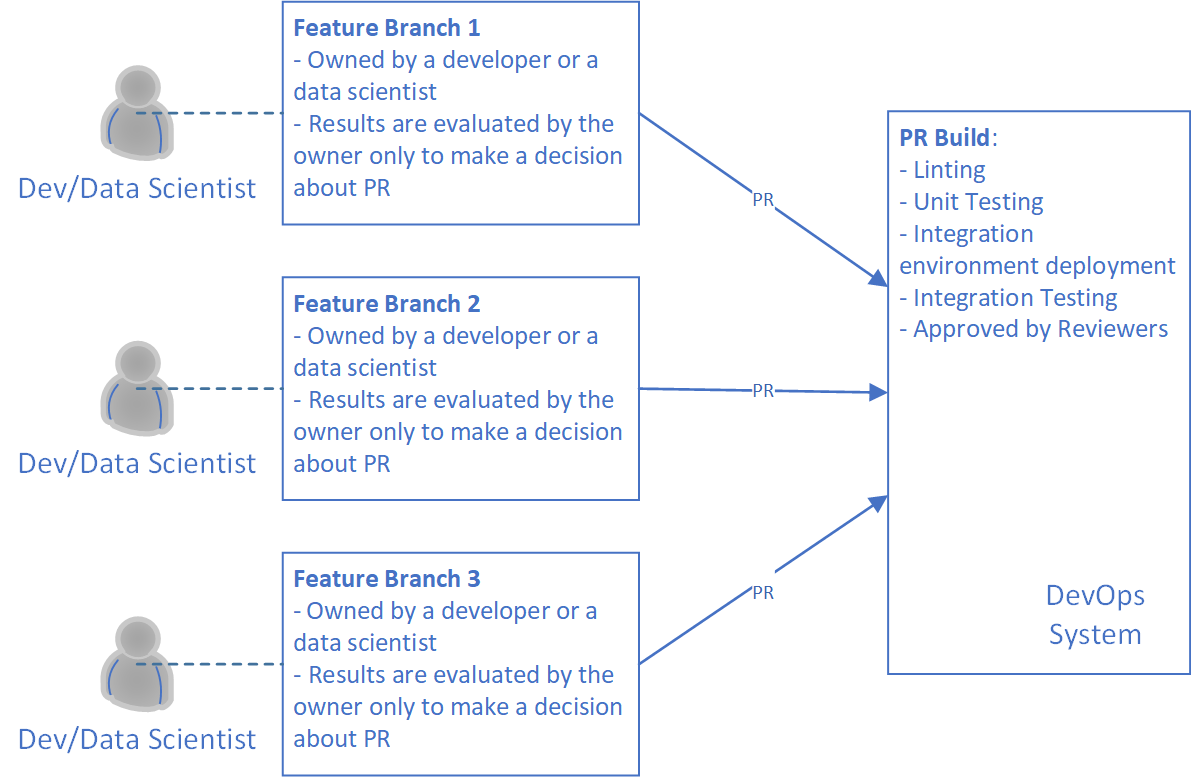

So, we need to start with a feature branch. It's important to guarantee that all code merged into the development branch represents working ML training pipelines. The only way to do that is to publish the pipelines and execute them on a toy dataset. The toy dataset should be prepared in advance and be small enough that the pipelines don't spend much time on execution. Therefore, if our ML training pipeline changes, we have to publish it and execute it on the toy dataset.
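A toy dataset can be produced once, ahead of time, by taking a small, reproducible sample of the full data. The sketch below assumes the data fits in memory as a list of records. `make_toy_dataset`, the sample size, and the optional per-label (stratified) sampling are illustrative choices, not part of any specific tool.

```python
import random
from collections import defaultdict
from typing import Dict, List, Optional


def make_toy_dataset(rows: List[dict], max_rows: int, seed: int = 0,
                     label_key: Optional[str] = None) -> List[dict]:
    """Return a small, reproducible sample of rows for fast pipeline checks.

    If label_key is given, sample each label separately so every class is
    represented (at least one row per class, which can exceed max_rows when
    there are more classes than max_rows).
    """
    if not rows:
        return []
    rng = random.Random(seed)  # fixed seed -> the same toy dataset every time
    if label_key is None:
        return rng.sample(rows, min(max_rows, len(rows)))
    by_label: Dict[object, List[dict]] = defaultdict(list)
    for row in rows:
        by_label[row[label_key]].append(row)
    per_label = max(1, max_rows // len(by_label))
    sample: List[dict] = []
    for label in sorted(by_label, key=str):  # stable order across runs
        group = by_label[label]
        sample.extend(rng.sample(group, min(per_label, len(group))))
    return sample


data = [{"id": i, "label": "bad" if i % 10 == 0 else "ok"} for i in range(1000)]
toy = make_toy_dataset(data, max_rows=20, seed=1, label_key="label")
print(len(toy))  # 20
```

Sampling per label keeps rare classes (here, the "bad" frames) in the toy data, so the pipeline's handling of them is still exercised.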

The diagram above shows the process described in this section. It also includes a build for linting and unit testing, which can serve as an additional policy to guarantee code quality.

Note that if you have more than one pipeline in the project, you might need to implement several PR builds: ideally one build per pipeline, if your DevOps system supports it. Our general recommendation is to have one build for each pipeline, because developers then don't have to wait for all experiments to finish, which speeds up development. In some cases, however, this isn't possible.
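CI systems such as Azure Pipelines and GitHub Actions support path filters in their triggers for exactly this purpose. The logic behind "one build per pipeline" can be sketched as mapping changed files to the pipelines they affect. The directory layout below (`mlops/<pipeline>/`, `src/<pipeline>/`, and a shared `src/common/`) is a hypothetical convention, not one required by any tool.

```python
from fnmatch import fnmatch
from typing import Dict, List, Set

# Hypothetical layout: each pipeline owns some paths; shared code affects all.
# Note: fnmatch's '*' also matches '/', so patterns cover nested files.
PIPELINE_PATHS: Dict[str, List[str]] = {
    "training": ["mlops/training/*", "src/training/*"],
    "scoring": ["mlops/scoring/*", "src/scoring/*"],
}
SHARED_PATHS = ["src/common/*"]


def pipelines_to_validate(changed_files: List[str]) -> Set[str]:
    """Return the pipelines whose PR build should run for these changes."""
    selected: Set[str] = set()
    for path in changed_files:
        if any(fnmatch(path, pattern) for pattern in SHARED_PATHS):
            return set(PIPELINE_PATHS)  # shared code changed: validate everything
        for pipeline, patterns in PIPELINE_PATHS.items():
            if any(fnmatch(path, p) for p in patterns):
                selected.add(pipeline)
    return selected


print(pipelines_to_validate(["src/training/train.py", "docs/README.md"]))  # {'training'}
```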

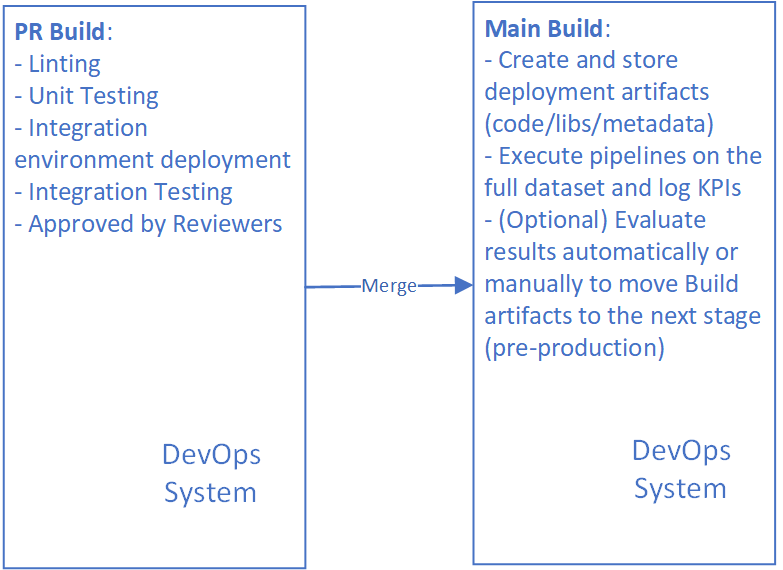

Once the code is in our development branch, we need to publish the modified pipelines to our stable environment and execute them to produce all required artifacts (models, updated scoring infrastructure, etc.).

Note that the output artifact at this stage is not always a model. It can be a library, or even the pipeline itself if we plan to run it in production (a scoring pipeline, for example).

See the Model Release section for details about the next steps.

When unit testing, we should mock model loading and model predictions, just as we mock file access.
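A minimal sketch with the standard-library `unittest.mock` follows. The code under test receives its model loader as a parameter, so the test can substitute a fake model with canned predictions and never touch a real artifact. `load_model`, `classify`, and the 0.5 threshold are hypothetical. `mock.patch` works equally well when the loader is imported from another module.

```python
from typing import Callable, List
from unittest import mock


def load_model(path: str):
    """Real loader (e.g. deserializing a large artifact); too slow for unit tests."""
    raise RuntimeError("should not be called in unit tests")


def classify(features: List[float], model_path: str = "model.pkl",
             loader: Callable = load_model) -> List[str]:
    """Code under test: load a model and turn its scores into labels."""
    model = loader(model_path)
    scores = model.predict(features)
    return ["bad_pixel" if s >= 0.5 else "ok" for s in scores]


def test_classify_uses_threshold() -> None:
    fake_model = mock.Mock()
    fake_model.predict.return_value = [0.9, 0.1]  # canned predictions
    fake_loader = mock.Mock(return_value=fake_model)

    assert classify([1.0, 2.0], loader=fake_loader) == ["bad_pixel", "ok"]
    fake_loader.assert_called_once_with("model.pkl")
    fake_model.predict.assert_called_once_with([1.0, 2.0])
```

pytest collects `test_classify_uses_threshold` automatically. The test checks the code's own logic (the threshold and the wiring), not the quality of any real model.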

There may be cases when you want to load your model to run smoke tests or integration tests.

Because these tests often take longer to run, it's important to be able to separate them from unit tests. That way, developers on the team can still run the fast unit tests as part of their test-driven development.

One approach is to use pytest marks:

```python
import pytest


@pytest.mark.longrunning
def test_integration_between_two_systems():
    # this might take a while
    ...
```

Run all tests that are not marked long-running:

```bash
pytest -v -m "not longrunning"
```
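Custom marks such as `longrunning` should be registered. Otherwise pytest emits a `PytestUnknownMarkWarning`, and under `--strict-markers` the run fails. One way to register a mark is a `pytest_configure` hook in `conftest.py`; the description text below is just an example.

```python
# conftest.py
def pytest_configure(config):
    """Register the custom 'longrunning' mark used to tag slow tests."""
    config.addinivalue_line(
        "markers", "longrunning: slow smoke/integration tests that load real models"
    )
```

With the mark registered, `pytest -m "not longrunning"` runs the fast suite, and `pytest -m longrunning` runs only the slow tests (for example, in a separate CI stage).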

ML unit tests are not intended to check the accuracy or performance of a model. Unit tests for an ML model are for code quality checks - for example:

fit?

The ML model tests do not strictly follow all of the best practices of standard unit tests; not all outside calls are mocked. These tests are much closer to a narrow integration test. However, having simple tests for the ML model helps stop a poorly configured model from spending hours in training only to produce poor results.
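As an illustration of such a check, the sketch below trains a tiny logistic-regression model in pure Python. It asserts two cheap properties before any long run: the outputs have the expected shape and range, and the loss decreases on a small fixed batch. A model that cannot fit even a few examples is likely misconfigured. The model, data, and step counts are hypothetical. With a deep learning framework, the same idea applies to a real network and a single mini-batch.

```python
import math
import random
from typing import List, Tuple


def predict_proba(w: List[float], b: float, xs: List[List[float]]) -> List[float]:
    """Logistic-regression probabilities, one per input row."""
    return [1 / (1 + math.exp(-(sum(wi * xi for wi, xi in zip(w, x)) + b))) for x in xs]


def log_loss(probs: List[float], ys: List[int]) -> float:
    eps = 1e-12  # avoid log(0)
    return -sum(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
                for p, y in zip(probs, ys)) / len(ys)


def train_steps(xs: List[List[float]], ys: List[int], steps: int,
                lr: float = 0.5, seed: int = 0) -> Tuple[List[float], float]:
    """Full-batch gradient descent; steps=0 returns the initial weights."""
    rng = random.Random(seed)
    w, b = [rng.uniform(-0.1, 0.1) for _ in xs[0]], 0.0
    for _ in range(steps):
        probs = predict_proba(w, b, xs)
        # Gradient of the mean log loss: mean((p - y) * x) for w, mean(p - y) for b.
        grads = [(p - y) / len(ys) for p, y in zip(probs, ys)]
        w = [wi - lr * sum(g * x[j] for g, x in zip(grads, xs)) for j, wi in enumerate(w)]
        b -= lr * sum(grads)
    return w, b


def test_model_sanity() -> None:
    xs = [[0.0, 1.0], [1.0, 0.0], [1.0, 1.0], [0.0, 0.0]]
    ys = [1, 0, 1, 0]  # tiny, separable batch: the label equals the second feature
    w0, b0 = train_steps(xs, ys, steps=0)
    w1, b1 = train_steps(xs, ys, steps=100)
    probs = predict_proba(w1, b1, xs)
    assert len(probs) == len(xs)                  # one output per input
    assert all(0.0 < p < 1.0 for p in probs)      # valid probabilities
    assert log_loss(probs, ys) < log_loss(predict_proba(w0, b0, xs), ys)  # it learns
```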

Examples of how to implement these tests (for Deep Learning models) include:

An important part of the unit test is to include test cases for data validation.

For example:
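A minimal sketch of data-validation test cases follows. It assumes records arrive as dictionaries and that the expected schema and value ranges are known in advance. `SCHEMA`, the column names, and `validate_rows` are hypothetical.

```python
from typing import Dict, List, Tuple, Type

# Hypothetical expected schema: column -> (type, min, max)
SCHEMA: Dict[str, Tuple[Type, float, float]] = {
    "frame_width": (int, 1, 8192),
    "frame_height": (int, 1, 8192),
    "brightness": (float, 0.0, 1.0),
}


def validate_rows(rows: List[dict]) -> List[str]:
    """Return human-readable problems; an empty list means the data is valid."""
    problems = []
    for i, row in enumerate(rows):
        missing = SCHEMA.keys() - row.keys()
        if missing:
            problems.append(f"row {i}: missing columns {sorted(missing)}")
            continue
        for col, (typ, lo, hi) in SCHEMA.items():
            value = row[col]
            # bool is a subclass of int, so reject it explicitly.
            if not isinstance(value, typ) or isinstance(value, bool):
                problems.append(f"row {i}: {col} should be {typ.__name__}")
            elif not lo <= value <= hi:
                problems.append(f"row {i}: {col}={value} outside [{lo}, {hi}]")
    return problems


def test_valid_rows_pass() -> None:
    assert validate_rows([{"frame_width": 640, "frame_height": 480, "brightness": 0.5}]) == []


def test_invalid_rows_are_reported() -> None:
    problems = validate_rows([
        {"frame_width": 640, "frame_height": 480},                       # missing column
        {"frame_width": 640, "frame_height": -1, "brightness": 0.5},     # out of range
        {"frame_width": "640", "frame_height": 480, "brightness": 0.5},  # wrong type
    ])
    assert len(problems) == 3
```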

Apart from unit testing code, we can also test, debug, and validate our models in different ways during the training process.

Some options to consider at this stage:

Running AI/ML systems in production exposes companies to a brand-new class of attacks. The following attacks should be considered:

These attacks should be carefully considered. For information on adversarial attacks and how to include them in the threat modeling process, follow the links below:

Failure Modes in ML | Microsoft Docs

Threat Modeling AI/ML Documentation | Microsoft Docs

As mentioned above, many technologies can be used to implement a generalized pipeline. In this example, we show a pipeline implementation based on Microsoft Azure AI Services.

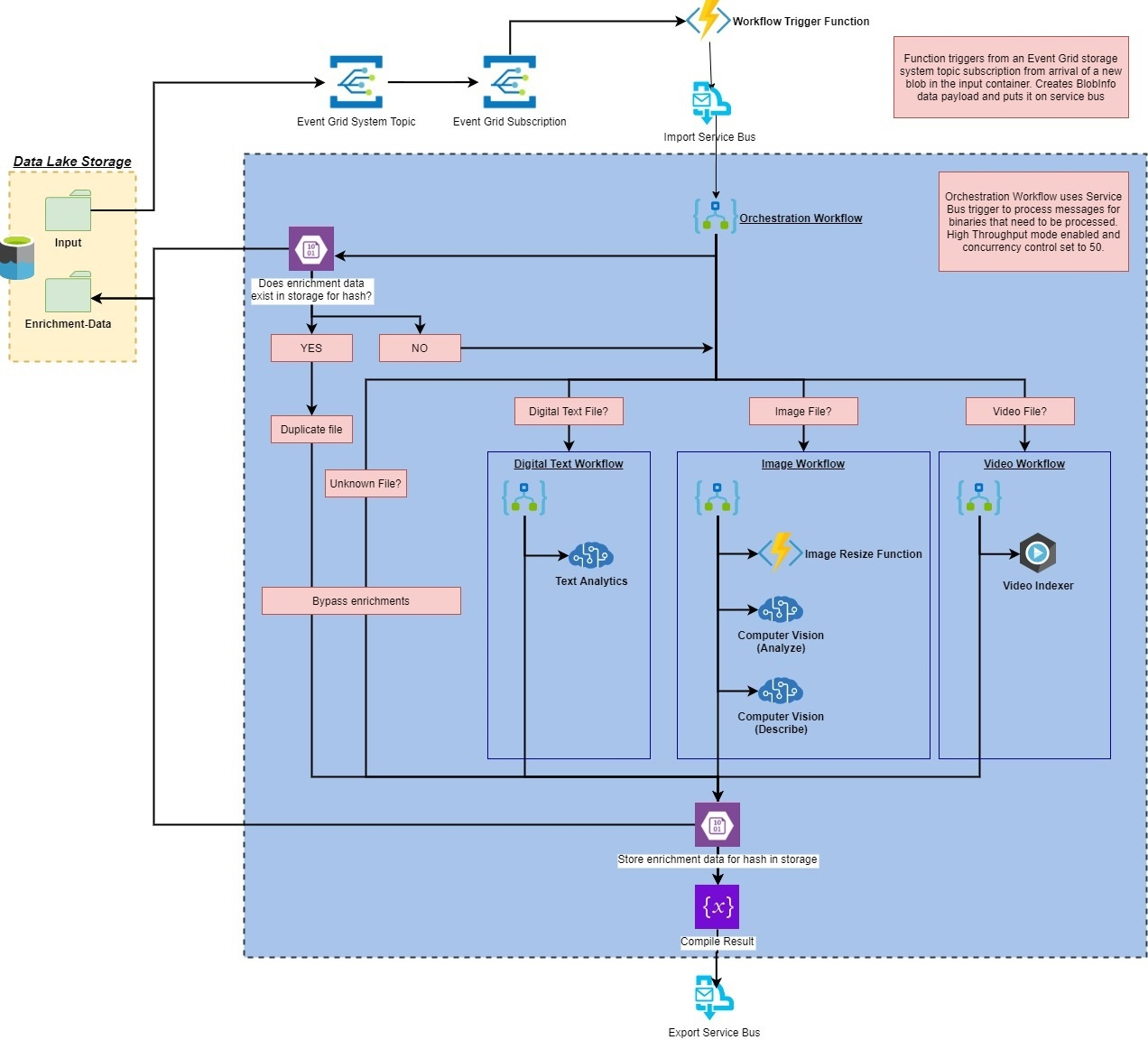

This example contains an AI enrichment pipeline that is triggered by the upload of a binary file to an Azure Storage account. The pipeline "enriches" the file with insights from various Azure AI Services, custom code, and Video Indexer before submitting it to a Service Bus queue for further processing.
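The control flow of this architecture can be sketched locally. An upload event triggers a chain of enrichers, each enricher adds insights to a shared document, and the result goes on a queue for downstream processing. Everything here is a stand-in and no Azure SDK is used. The enricher functions are hypothetical placeholders for calls to Azure AI Services, custom code, and Video Indexer, and `queue.Queue` stands in for the Service Bus queue.

```python
import json
import queue
from typing import Callable, Dict, List

Enricher = Callable[[Dict], Dict]


def detect_language(doc: Dict) -> Dict:
    # Hypothetical placeholder for a call to a language-detection service.
    return {"language": "en"}


def extract_tags(doc: Dict) -> Dict:
    # Hypothetical placeholder for a tagging service: here, tags from the file name.
    return {"tags": sorted(doc["name"].rsplit(".", 1)[0].split("_"))}


def on_blob_uploaded(name: str, size: int, enrichers: List[Enricher],
                     out_queue: "queue.Queue[str]") -> Dict:
    """Triggered by an upload: run each enricher, then enqueue the result."""
    doc: Dict = {"name": name, "size": size, "insights": {}}
    for enrich in enrichers:
        doc["insights"].update(enrich(doc))  # each step adds its own insights
    out_queue.put(json.dumps(doc))  # serialized message for downstream consumers
    return doc


q: "queue.Queue[str]" = queue.Queue()
doc = on_blob_uploaded("factory_line_cam.mp4", 1024, [detect_language, extract_tags], q)
print(doc["insights"])  # {'language': 'en', 'tags': ['cam', 'factory', 'line']}
```

Keeping each enricher a small function with a uniform signature makes it straightforward to add, remove, or reorder enrichment steps.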

The end-to-end pipeline illustrates the flow of data, from ingestion, to enrichment, to training, to inference, for unstructured data such as videos, documents, and images.

The image below illustrates the high-level system architecture:

The full example and source code can be found in the Official Azure Media Services | Video Indexer Samples repository.
