I have the same problem. Has anyone found a root cause for this yet?

We're running a 16-node failover cluster with storage hosted on an SMB3 share with RDMA on a Windows Server 2019 machine.

Most of our VMs use a differencing VHDX with the same parent VHDX, and when we need another batch of VMs I create 8 at a time.
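To make that disk layout concrete for anyone trying to reproduce this, here is a minimal sketch that only builds the `New-VHD` command lines for a batch of 8 differencing disks sharing one parent. The share path, folder names and `vmNN.vhdx` naming are hypothetical placeholders, not the real paths on this cluster, and the sketch does not run anything against Hyper-V.

```python
# Hypothetical share and file names, for illustration only.
PARENT = r"\\fileserver\vms\base\parent.vhdx"
CHILD_DIR = r"\\fileserver\vms\batch01"


def new_vhd_commands(parent, child_dir, count=8):
    """Return one New-VHD command line per differencing disk in the batch."""
    commands = []
    for i in range(1, count + 1):
        # Each child gets its own file but points at the same parent VHDX.
        child = rf"{child_dir}\vm{i:02d}.vhdx"
        commands.append(
            f'New-VHD -Path "{child}" -ParentPath "{parent}" -Differencing'
        )
    return commands


if __name__ == "__main__":
    for line in new_vhd_commands(PARENT, CHILD_DIR):
        print(line)
```

Every child in the batch references the same parent file, so all 8 VMs open that parent when they start together.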

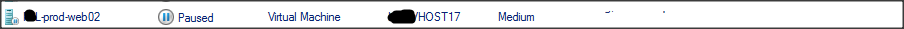

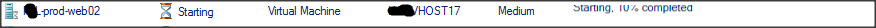

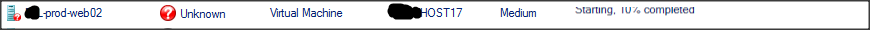

When I create a batch and start them all, often one or two of the VMs will get stuck in a loop as described above, which is a nightmare to resolve without affecting the in-use VMs.

Occasionally this also happens to an established VM when bringing the cluster back up from maintenance, but mostly it's the new ones, which install updates and so on after booting and so rapidly expand their VHDXs.

If I kill the VM process on the hosting Hyper-V server and then try to start the VM again, I usually get one of these two errors:

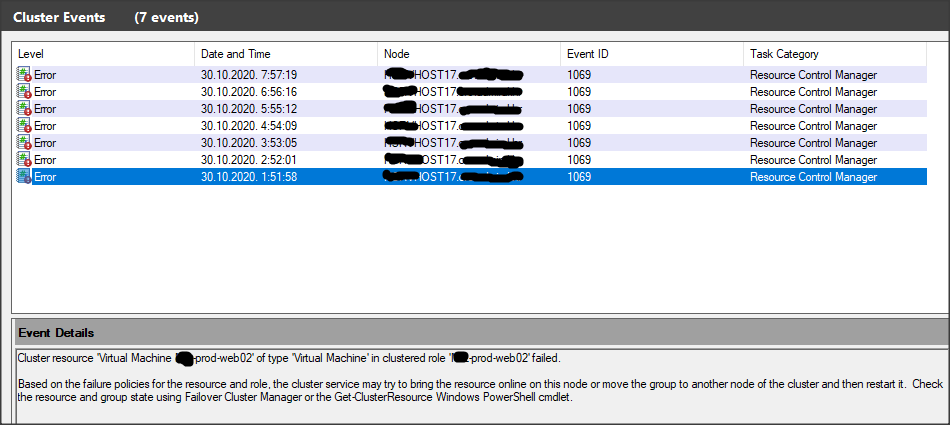

"Cluster resource 'Virtual Machine XXXX' of type 'Virtual Machine' in clustered role 'YYYY' failed. The error code was '0x26' ('Reached the end of the file.')."

or

"Cluster resource 'Virtual Machine XXXX' of type 'Virtual Machine' in clustered role 'YYYY' failed. The error code was '0xc03a0016' ('The chain of virtual hard disks is inaccessible. The process has not been granted access rights to the parent virtual hard disk for the differencing disk.')."
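In case it helps when comparing notes or searching, here is a small sketch that splits the two codes above into their bit fields. It assumes the usual layout for structured Windows status codes (top two bits severity, 12-bit facility, 16-bit code); the function name `split_code` is made up for this example. On that assumption, `0x26` is just the plain error 38, while `0xc03a0016` carries severity 3, facility 0x3A (which I believe is the virtual disk facility) and code 0x16.

```python
def split_code(value):
    """Split a 32-bit Windows status value into severity, facility and code.

    Assumes the common layout: bits 31-30 severity, bits 27-16 facility,
    bits 15-0 code. Plain Win32 errors have severity and facility of 0.
    """
    return {
        "severity": (value >> 30) & 0x3,
        "facility": (value >> 16) & 0xFFF,
        "code": value & 0xFFFF,
    }


if __name__ == "__main__":
    for raw in (0x26, 0xC03A0016):
        fields = split_code(raw)
        print(
            f"{raw:#010x}: severity={fields['severity']} "
            f"facility={fields['facility']:#x} code={fields['code']}"
        )
```

The two messages come from different layers: the first is a generic end-of-file error, and the second is specific to the VHD chain.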

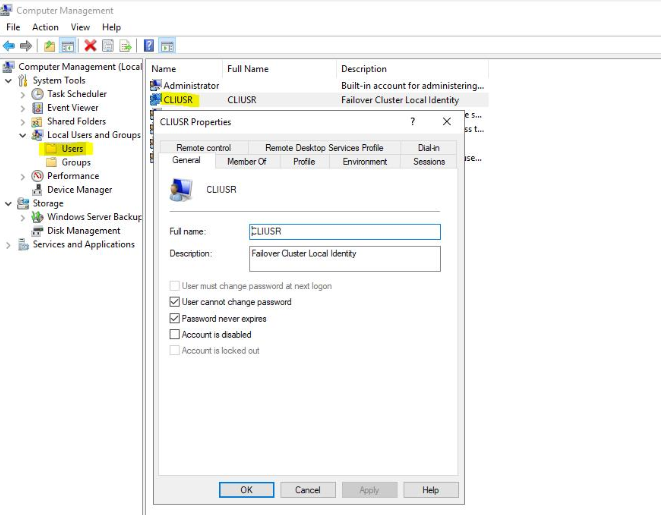

Permissions don't seem to be the problem though: granting the Everyone group access to the VHDX file makes no difference to the outcome.

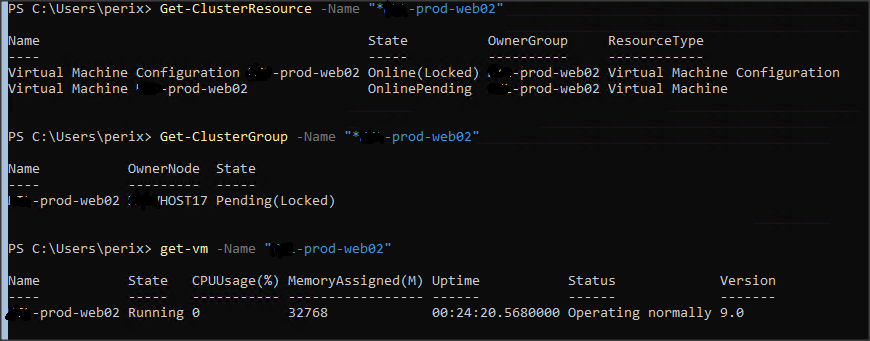

Once the VM process is killed, the VM status viewed from the individual Hyper-V host is 'Off', but the failover cluster still shows it looping through starting, paused, etc. If you click on the VM in Failover Cluster Manager, it crashes mmc.

If I restart the VMMS service on that host I can usually get the VM running again, though that doesn't fix the state of the VM in the cluster (which keeps looping). If I can get the other VMs migrated off the host (temperamental while the cluster is seeing the rapid status looping on a VM) and then restart the host, everything is reset and I can carry on.

Often when I resolve one of these looping VMs though, I find another one starts...

There are no storage issues as far as I can see - the established VMs are all happy and I can save/resume 10 or more at a time fairly rapidly.

All very odd, and the above is the only mention of this issue I can find on the web.

Any further info from others' experiences would be appreciated. This problem makes me nervous about touching the cluster in working hours...