Hi @Kakehi Shunya (筧 隼弥),

Thank you for sharing more details on your requirement. Additionally, you can try the following approach to achieve it:

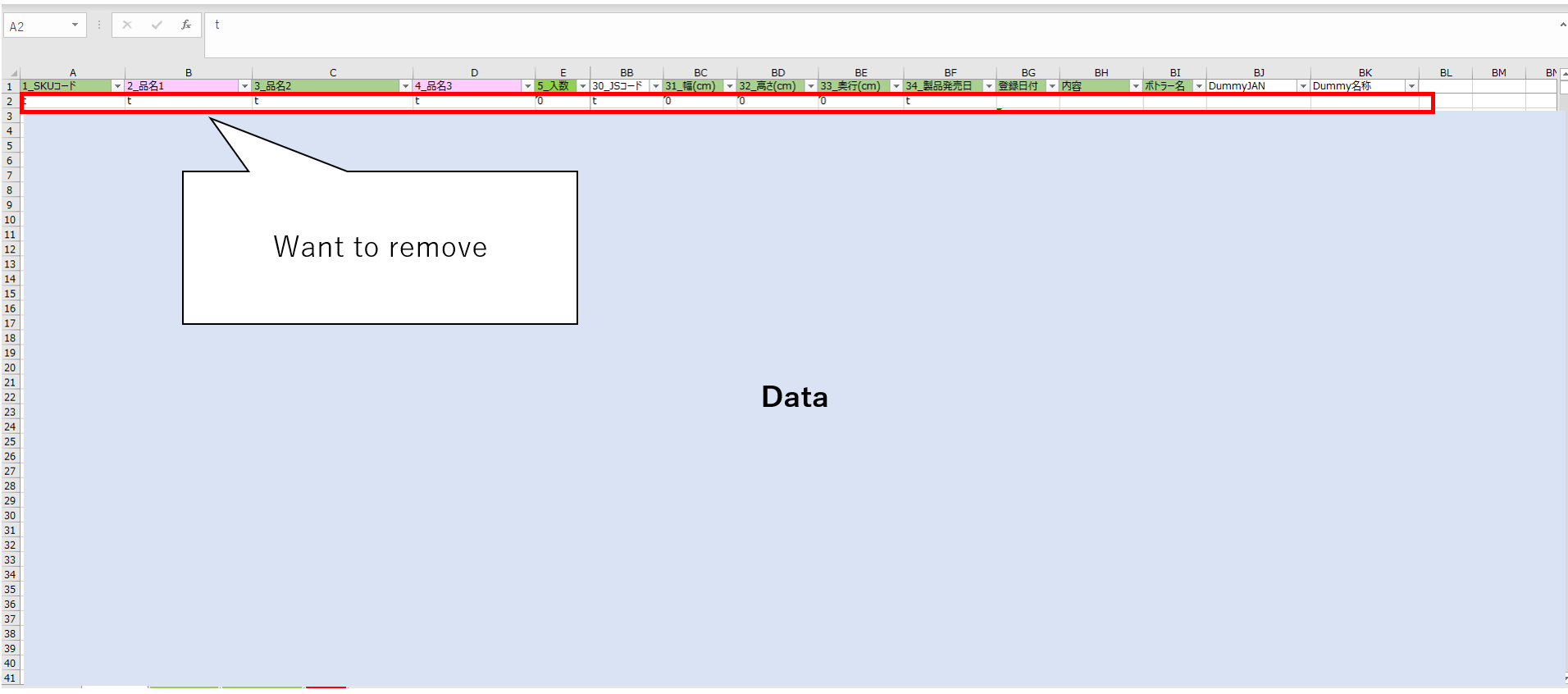

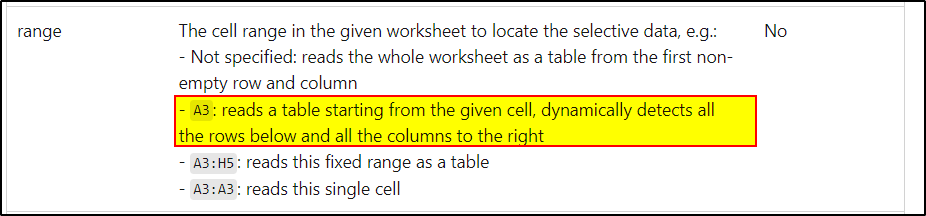

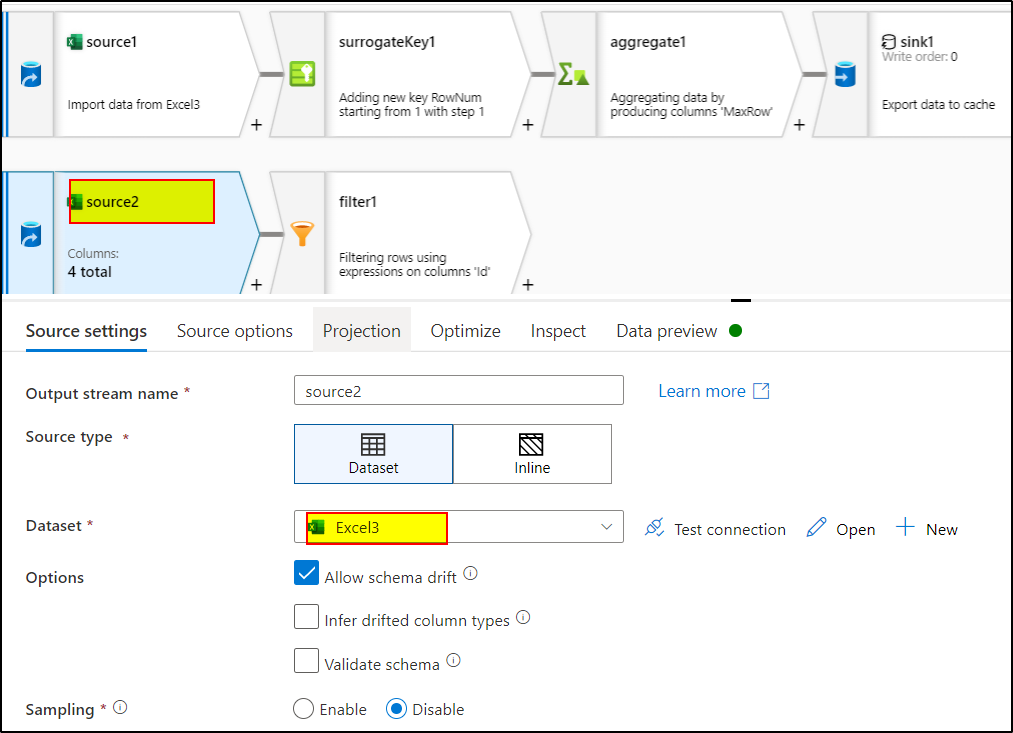

1. Create a dataset pointing to your Excel file. Make sure to import the schema and select the First row as header option.

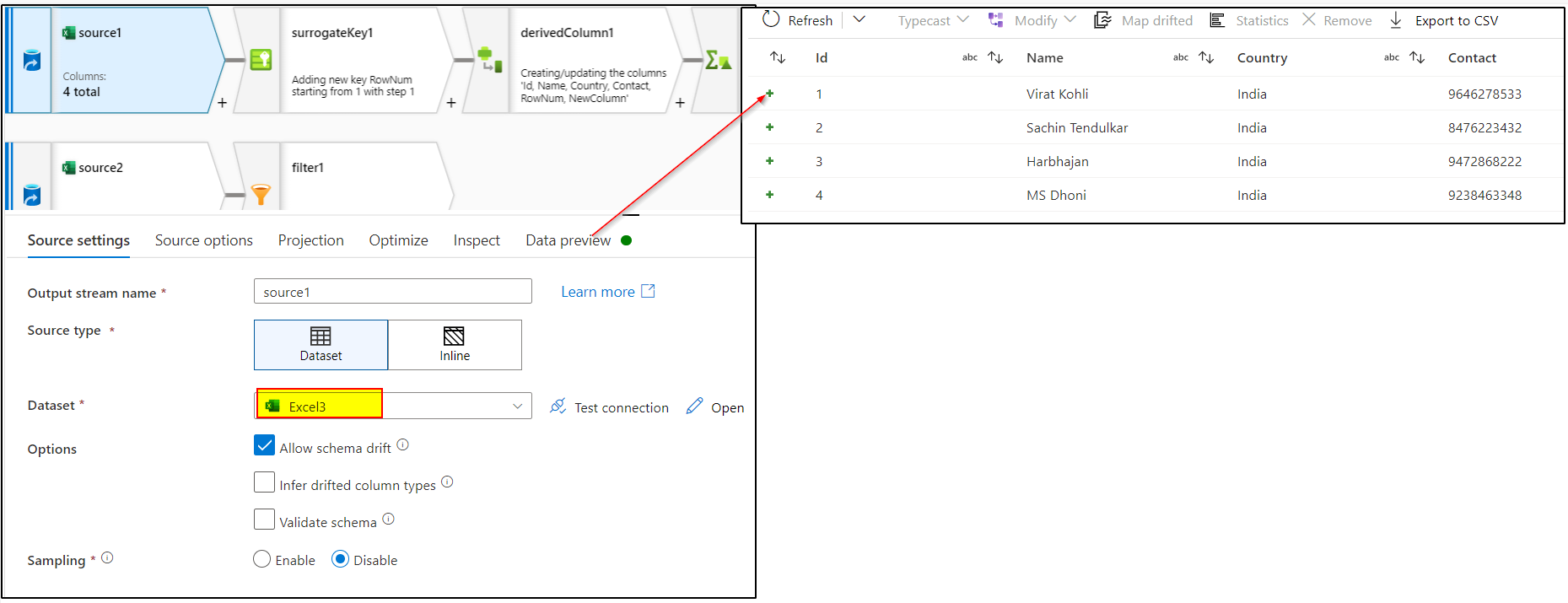

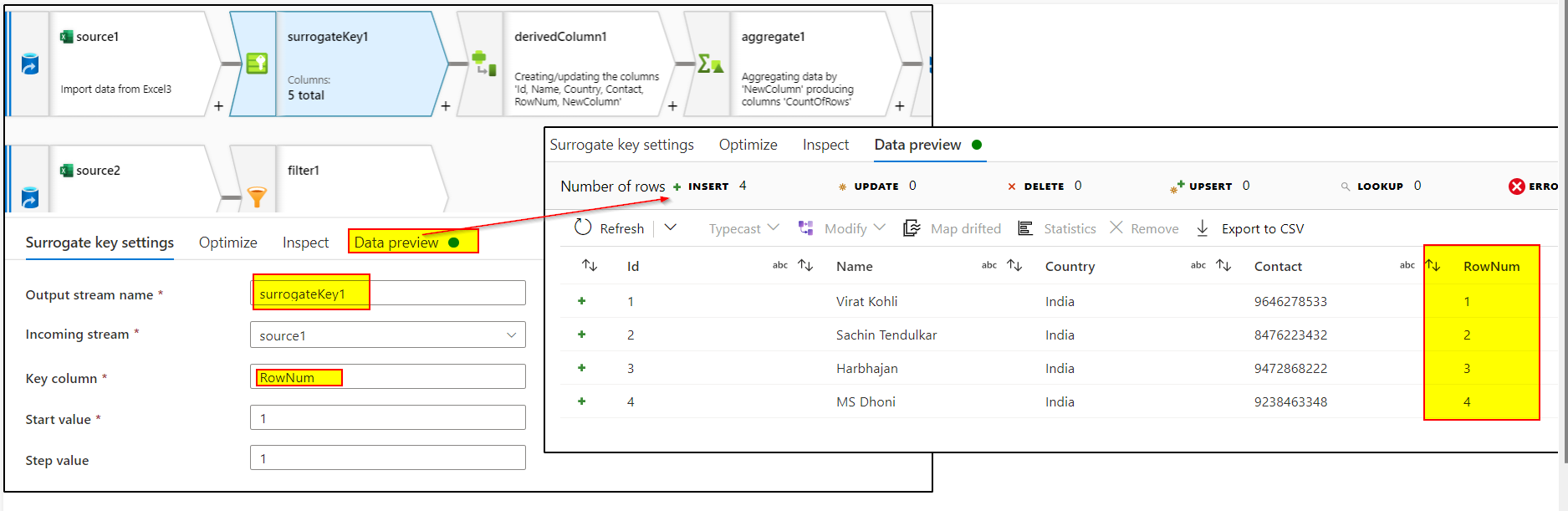

2. In the source transformation of the data flow, select the dataset created above and preview the data.

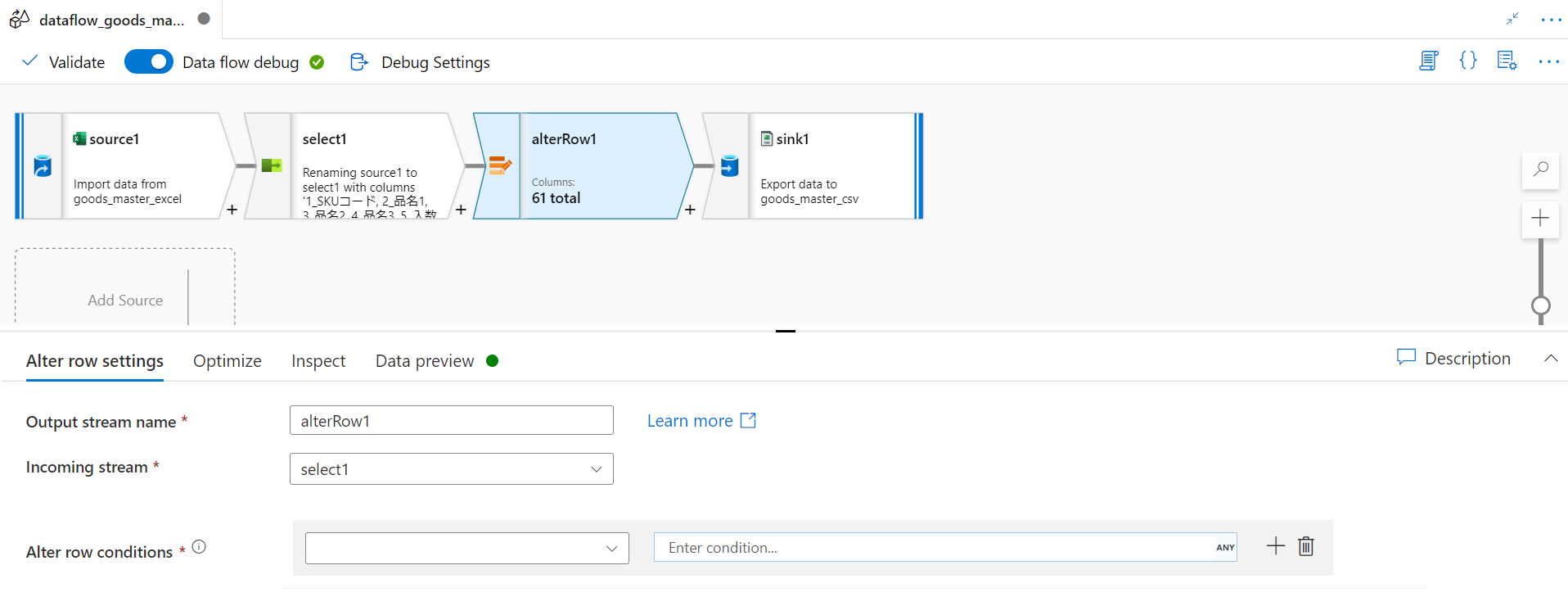

3. Use a Surrogate key transformation to generate a column, for example RowNum, which adds an incrementing key value to each row of data.

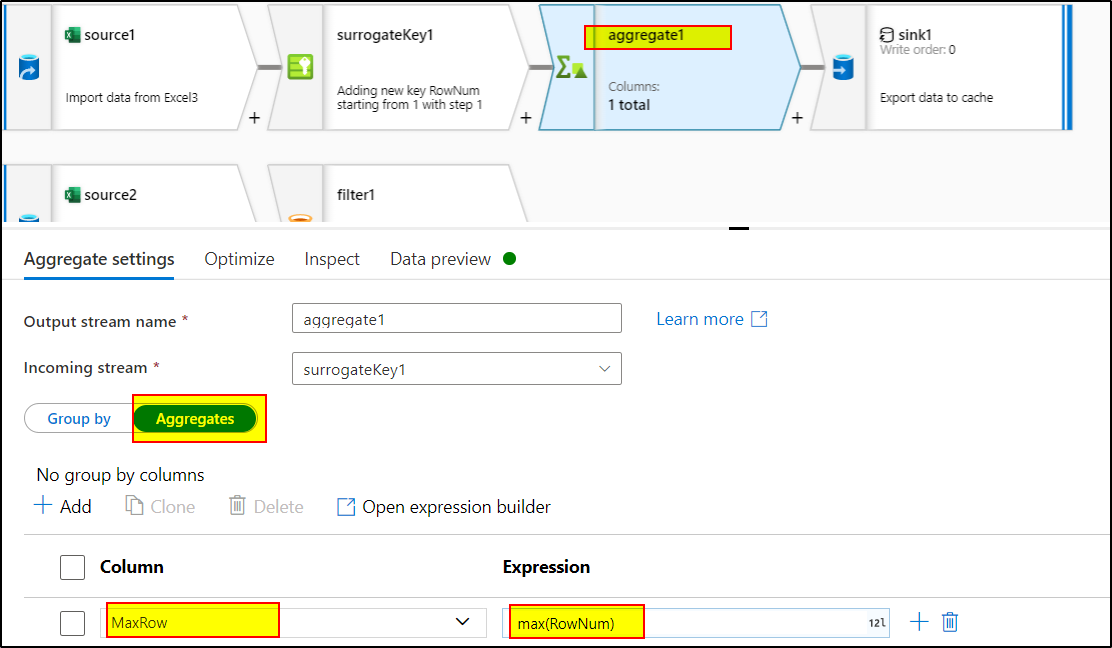

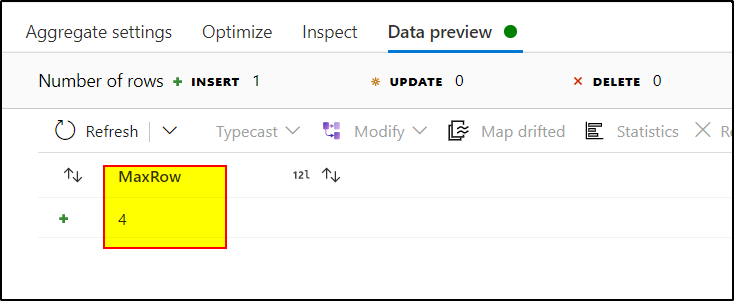

4. Use an Aggregate transformation to find the maximum RowNum with the expression max(RowNum) in the Aggregates tab, and name the output column MaxRow so that it matches the reference in step 8. The Group by tab is optional, so you can skip it.

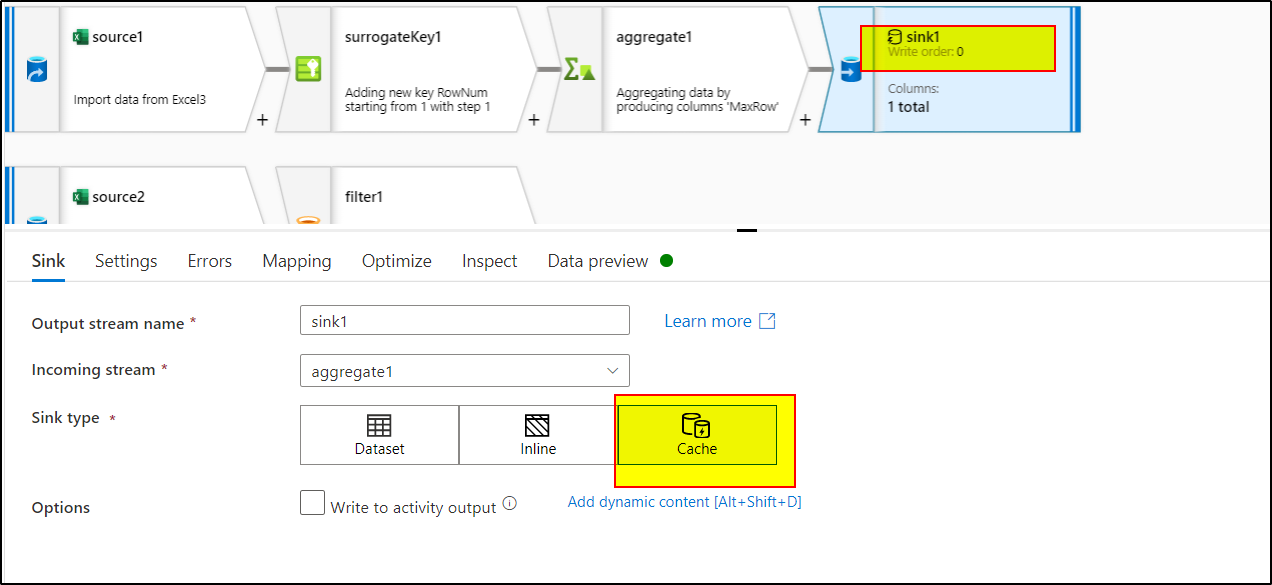

5. Add a Sink transformation and select Cache as the sink type. This writes the data into the Spark cache, where other transformations can reference it.

6. Add another source transformation that refers to the same source dataset.

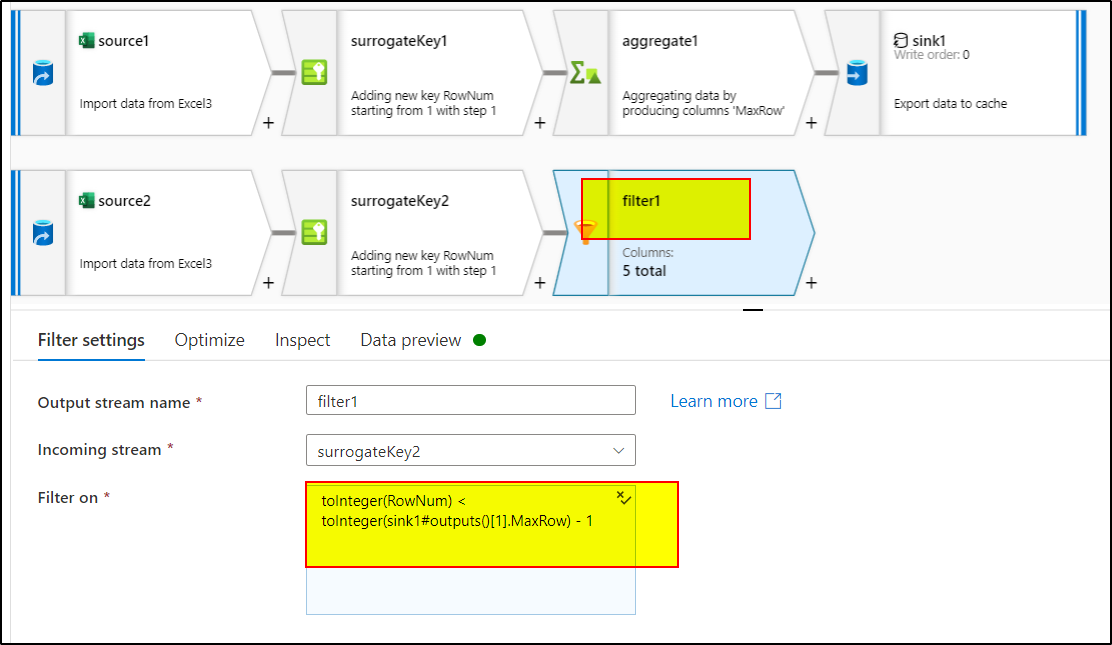

7. Use a Surrogate key transformation again to generate RowNum, as in step 3.

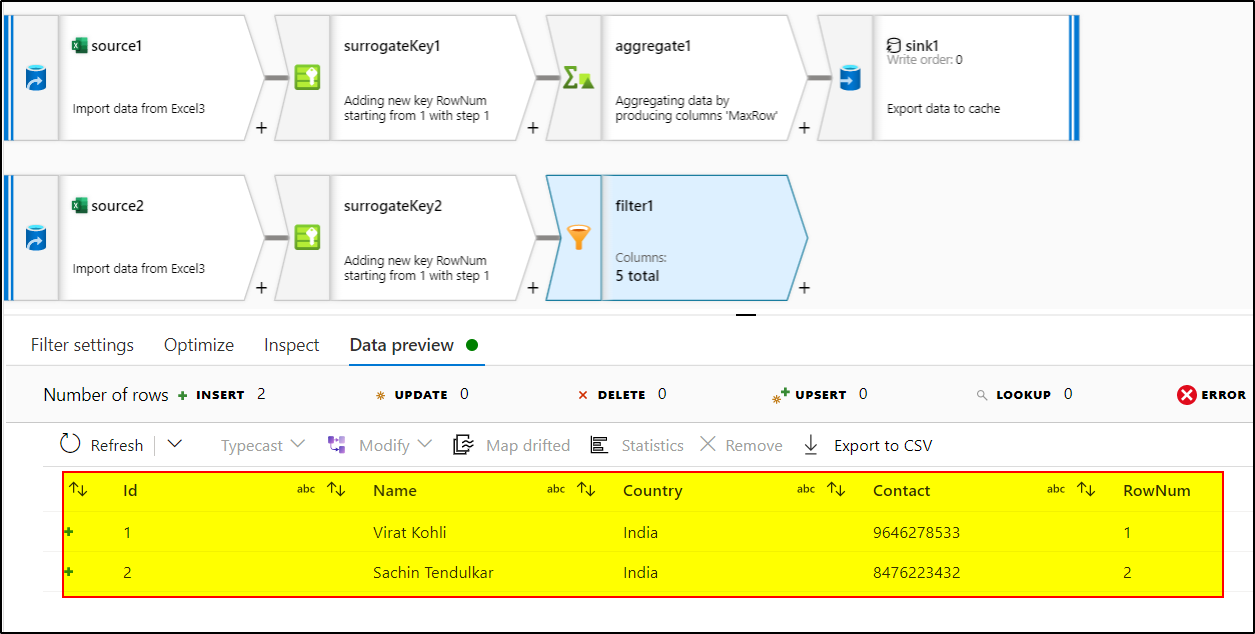

8. Use a Filter transformation with this expression, where sink1 is the cache sink from step 5: toInteger(RowNum) < toInteger(sink1#outputs()[1].MaxRow) - 1

9. Use a Sink transformation to load this data into the target database.
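To check what the filter in step 8 keeps before running the data flow, the logic of steps 3 to 8 can be sketched in plain Python. This is only an illustration, not data flow code: the helper names `add_row_num` and `drop_trailing_rows` and the sample rows are hypothetical, and it assumes the surrogate key starts at 1 and increments by 1.

```python
from typing import Any, Dict, List

Row = Dict[str, Any]


def add_row_num(rows: List[Row]) -> List[Row]:
    """Mimic the Surrogate key step: add RowNum = 1, 2, 3, ... (assumed start value 1)."""
    return [{**row, "RowNum": i} for i, row in enumerate(rows, start=1)]


def drop_trailing_rows(rows: List[Row]) -> List[Row]:
    """Mimic steps 3 to 8: compute max(RowNum), then apply the filter condition."""
    numbered = add_row_num(rows)
    if not numbered:
        return []
    # Step 4: the aggregate that the cache sink exposes as MaxRow.
    max_row = max(row["RowNum"] for row in numbered)
    # Step 8: same comparison as RowNum < MaxRow - 1 in the Filter transformation.
    return [row for row in numbered if row["RowNum"] < max_row - 1]


# Hypothetical sample data: four rows read from the Excel dataset.
rows = [{"Name": "a"}, {"Name": "b"}, {"Name": "c"}, {"Name": "d"}]
kept = drop_trailing_rows(rows)
print([row["Name"] for row in kept])
```

With the comparison as written, rows whose RowNum equals MaxRow - 1 or MaxRow are both excluded. Comparing against MaxRow without the - 1 would exclude only the final row.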

You can use a similar approach to filter out the first row as well: compute min(RowNum) in the Aggregate transformation as MinRow, and use this expression in the Filter transformation: toInteger(RowNum) > toInteger(sink1#outputs()[1].MinRow)

Hope this helps. Please let us know if you have any further queries.
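The first-row variant can be checked the same way. The sketch below is plain Python rather than data flow code; `drop_first_row` and the sample rows are hypothetical, and the surrogate key is again assumed to start at 1.

```python
from typing import Any, Dict, List

Row = Dict[str, Any]


def drop_first_row(rows: List[Row]) -> List[Row]:
    """Number the rows, find min(RowNum), and keep rows with RowNum > MinRow."""
    # Surrogate key step (assumed start value 1, step 1).
    numbered = [{**row, "RowNum": i} for i, row in enumerate(rows, start=1)]
    if not numbered:
        return []
    # Aggregate step: the value the cache sink would expose as MinRow.
    min_row = min(row["RowNum"] for row in numbered)
    # Filter step: same comparison as RowNum > MinRow.
    return [row for row in numbered if row["RowNum"] > min_row]


# Hypothetical sample data: three rows read from the Excel dataset.
sample = [{"Name": "a"}, {"Name": "b"}, {"Name": "c"}]
remaining = drop_first_row(sample)
print([row["Name"] for row in remaining])
```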
