Copilot for Security in Defender for Cloud (Preview)

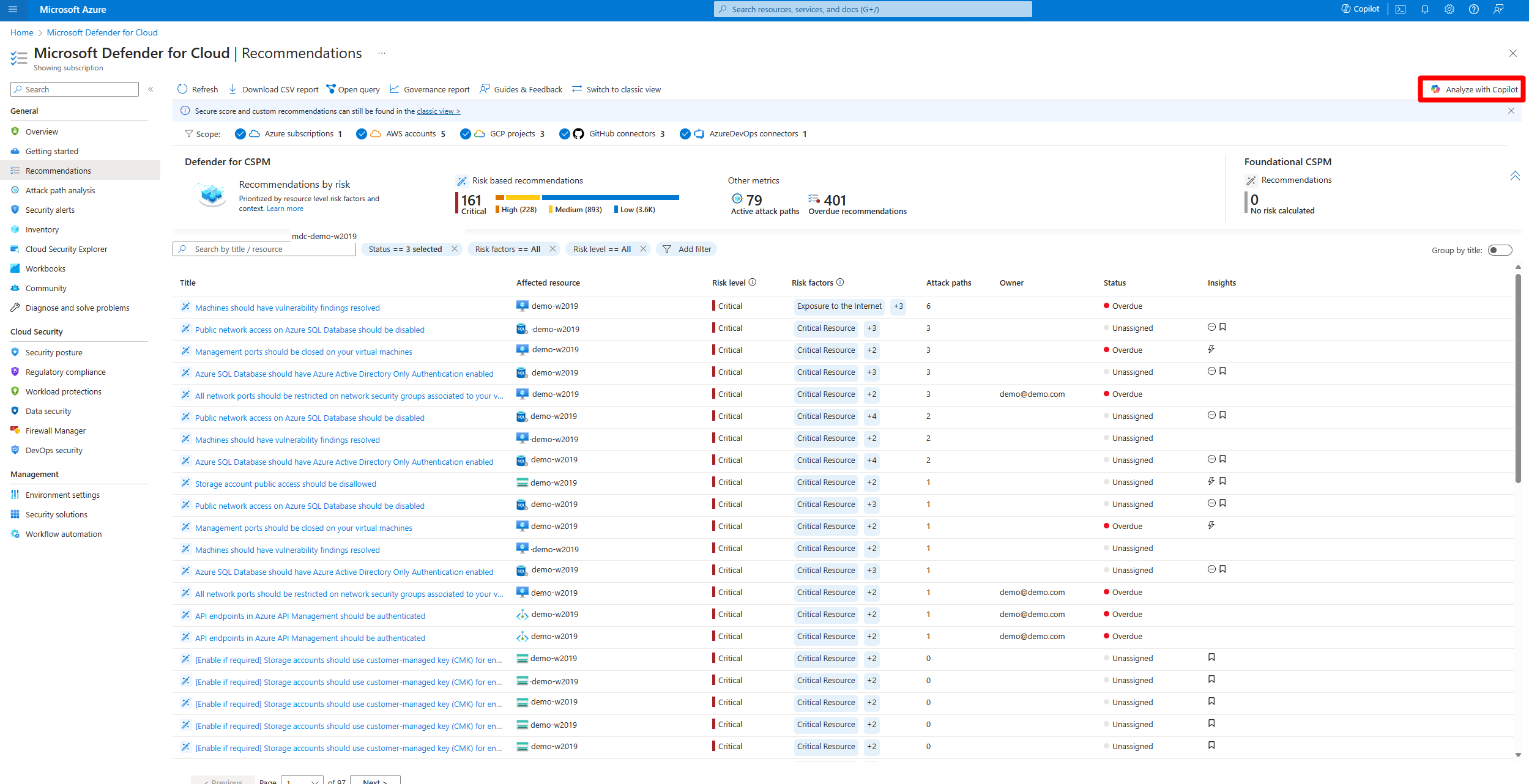

Microsoft Defender for Cloud integrates both Microsoft Copilot for Security and Microsoft Copilot for Azure into its experience. With these integrations, you can use natural language prompts to ask security-related questions, receive answers, and automatically trigger the skills needed to analyze, summarize, remediate, and delegate recommendations.

Both Copilot for Security and Copilot for Azure are cloud-based AI platforms that provide a natural language copilot experience. They assist security professionals in understanding the context and effect of recommendations, remediating or delegating tasks, and addressing misconfigurations in code.

On the recommendations page, Defender for Cloud's integration with Copilot for Security and Copilot for Azure helps you strengthen your security posture and reduce risk across your environments. It streamlines understanding and implementing recommendations, which makes your security management more efficient and effective.

How Copilot works in Defender for Cloud

Defender for Cloud integrates Copilot directly into its experience, so you can analyze, summarize, remediate, and delegate your recommendations with natural language prompts.

When you open Copilot, you can use natural language prompts to ask questions about the recommendations. Copilot provides you with a response in natural language that helps you understand the context of the recommendation. It also explains the effect of implementing the recommendation and provides steps to take for implementation.

Some sample prompts include:

- Show critical risks for publicly exposed resources

- Show critical risks to sensitive data

- Show resources with high severity vulnerabilities

Copilot can help you refine recommendations, summarize them, provide remediation steps, and delegate them, so you can analyze and act on recommendations more effectively.

Data processing workflow

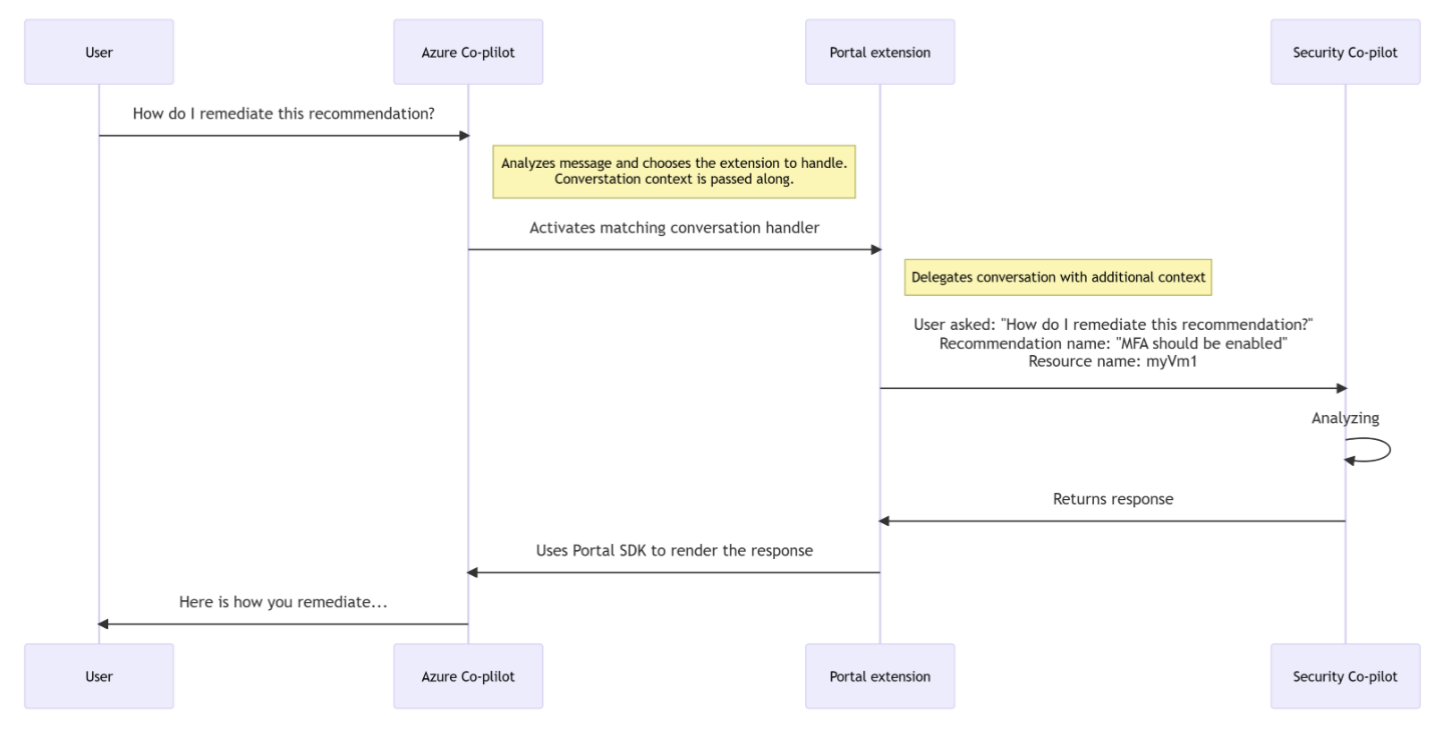

When you use Copilot for Security in Defender for Cloud, the following data processing workflow occurs:

- A user enters a prompt in the Copilot interface.

- Copilot for Azure receives the prompt.

- Copilot for Azure evaluates the prompt and the active page to determine which skills are needed to resolve the prompt.

- If the prompt is security related and the skill is available, Copilot for Security executes the skill and sends the response back to Copilot for Azure for presentation.

- If the prompt is security related but the skill is unavailable, Copilot for Azure searches all of its available skills for the ones most relevant to the prompt, and then sends a response to the user.
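The routing decision in these steps can be pictured as a small dispatcher. This is a conceptual sketch only, not how Copilot is implemented and not any Copilot API: `route_prompt`, `is_security_related`, `relevance`, the keyword list, and the choice to key security skills by the active page are all hypothetical stand-ins for evaluation logic that isn't documented here.

```python
from typing import Callable, Dict, Tuple

# Hypothetical model: a "skill" is any function that maps a prompt to a response.
Skill = Callable[[str], str]

# Placeholder keywords; the real prompt evaluation isn't documented.
SECURITY_KEYWORDS = ("risk", "vulnerab", "exposed", "sensitive", "recommendation")


def is_security_related(prompt: str) -> bool:
    """Crude stand-in for prompt evaluation: a keyword match."""
    text = prompt.lower()
    return any(keyword in text for keyword in SECURITY_KEYWORDS)


def relevance(prompt: str, skill_name: str) -> int:
    """Stand-in relevance score: number of words shared by the prompt and the skill name."""
    return len(set(prompt.lower().split()) & set(skill_name.lower().split()))


def route_prompt(prompt: str, active_page: str,
                 security_skills: Dict[str, Skill],
                 azure_skills: Dict[str, Skill]) -> Tuple[str, str]:
    """Return (component that ran the skill, response that Copilot for Azure presents)."""
    # Evaluate the prompt together with the active page (here, security skills are keyed by page).
    if is_security_related(prompt) and active_page in security_skills:
        # Copilot for Security executes the skill and hands the result back for presentation.
        return "Copilot for Security", security_skills[active_page](prompt)
    # No matching security skill: search Copilot for Azure's own skills for the best match.
    # Assumption: non-security prompts also take this path; the workflow doesn't say.
    if not azure_skills:
        return "Copilot for Azure", "No relevant skill found."
    best = max(azure_skills, key=lambda name: relevance(prompt, name))
    return "Copilot for Azure", azure_skills[best](prompt)
```

For example, with a hypothetical security skill registered for a `"recommendations"` page, the prompt "Show critical risks for publicly exposed resources" is handled by Copilot for Security on that page, but falls back to the closest-matching Copilot for Azure skill on any other page.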

Check out the Copilot for Security FAQs.

Copilot's capabilities in Defender for Cloud

Copilot for Security in Defender for Cloud doesn't require any Defender for Cloud plan. It's available to all users when you:

- Enable Defender for Cloud on your environment.

- Have access to Copilot for Azure.

- Have Security Compute Units assigned for Copilot for Security.

However, to use the full range of Copilot for Security's capabilities in Defender for Cloud, we recommend enabling the Defender for Cloud Security Posture Management (DCSPM) plan on your environments. The DCSPM plan includes many extra security features, such as attack path analysis and risk prioritization, all of which you can navigate and manage by using Copilot for Security. Without the DCSPM plan, you can still use Copilot for Security in Defender for Cloud, but with limited capabilities.
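The availability rules above reduce to a simple decision table. The sketch below is hypothetical: the `Environment` fields and the `copilot_capability` function are illustrative names, not part of any Azure SDK, and the `"limited"` result simply stands for the reduced feature set you get without DCSPM.

```python
from dataclasses import dataclass


@dataclass
class Environment:
    # Hypothetical fields that mirror the prerequisites listed above.
    defender_for_cloud_enabled: bool
    has_copilot_for_azure_access: bool
    scus_assigned: int
    dcspm_enabled: bool


def copilot_capability(env: Environment) -> str:
    """Return 'unavailable', 'limited', or 'full' for Copilot for Security in Defender for Cloud."""
    prerequisites_met = (
        env.defender_for_cloud_enabled
        and env.has_copilot_for_azure_access
        and env.scus_assigned > 0
    )
    if not prerequisites_met:
        return "unavailable"
    # DCSPM isn't required, but it adds features such as attack path analysis.
    return "full" if env.dcspm_enabled else "limited"
```

Note that the plan check comes last: DCSPM only changes how much Copilot can do, never whether it's available at all.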

Monitor your usage

Copilot for Security has a usage limit. When your organization's usage nears that limit, you receive a notification when you submit a prompt. To avoid a service disruption, contact the Azure capacity owner or contributor to increase the number of Security Compute Units (SCUs), or reduce the number of prompts.

Learn more about usage limits.