@Miclaud Welcome to the Microsoft Q&A Forum, and thank you for posting your query here!

FSRM (File Server Resource Manager) is a Windows Server feature and is separate from Azure File Sync (AFS). If you are asking specifically whether FSRM can be changed to apply a quota based on "size", that question is for the Windows on-premises team.

Additional information: there are a couple of AFS documents covering cloud tiering with FSRM:

https://learn.microsoft.com/en-us/azure/storage/file-sync/file-sync-how-to-manage-tiered-files

https://learn.microsoft.com/en-us/azure/storage/file-sync/file-sync-choose-cloud-tiering-policies

From the second document:

> You can still enable cloud tiering if you have a volume-level FSRM quota. Once an FSRM quota is set, the free space query APIs that get called automatically report the free space on the volume as per the quota setting.

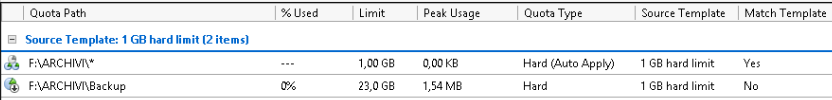

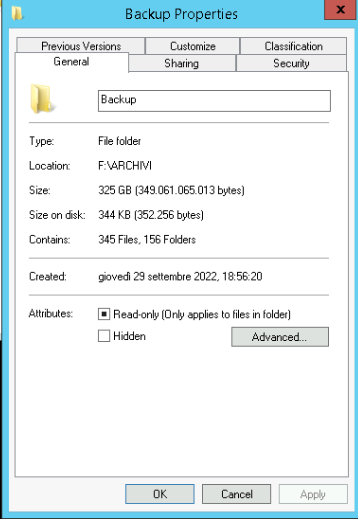

• When an FSRM quota is configured, it uses the logical size of each file to determine whether the quota has been reached.

o For example, if a 1 GB file is tiered, the FSRM quota still counts it as 1 GB rather than 0.

• When an FSRM quota is configured, the volume free space policy uses the free space reported by the FSRM quota. It does not look at the actual free space percentage of the volume.

o For example, if a 5 GB quota is set on a 10 GB volume, the volume is reported to tiering as 5 GB.

• Cloud tiering does not recall files to meet the policies.

o For example, if the cloud tiering policy was set to 20% and tiering freed up space, and the volume was later doubled in size, tiering does not recall files to bring free space back down to 20%.

• Tiering can only tier files that have already been synced.

• Tiering runs every hour and tends to tier approximately 5% more than the policy requires.
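To make the arithmetic in the points above concrete, here is a minimal Python sketch. It is a simplified, hypothetical model and not Azure File Sync's actual implementation: the names `TrackedFile`, `quota_free_space_gb`, and `free_space_target_gb` are illustrative only, and it assumes the free space target is simply the quota limit multiplied by the free space percent. It shows two of the behaviors described: the target is measured against the quota limit (5 GB in the example) rather than the 10 GB volume, and tiering a file does not change the free space the quota reports, because FSRM counts the logical size.

```python
from dataclasses import dataclass


@dataclass
class TrackedFile:
    """Hypothetical model of a file under the server endpoint."""
    logical_size_gb: float
    tiered: bool = False  # tiering does not change the logical size


def quota_free_space_gb(quota_limit_gb: float, files: list[TrackedFile]) -> float:
    """Free space as the FSRM quota reports it in this simplified model.

    FSRM counts each file's logical size, so a tiered file still counts
    at its full size.
    """
    used_gb = sum(f.logical_size_gb for f in files)
    return quota_limit_gb - used_gb


def free_space_target_gb(quota_limit_gb: float, free_space_percent: float) -> float:
    """Free space the volume free space policy aims for (assumed formula).

    Measured against the quota limit, not the physical volume size.
    """
    return quota_limit_gb * free_space_percent / 100


if __name__ == "__main__":
    volume_size_gb = 10  # physical volume size; ignored once a quota is set
    quota_limit_gb = 5
    files = [TrackedFile(logical_size_gb=1.0)]

    print(free_space_target_gb(quota_limit_gb, 20))    # 1.0, not 2.0
    print(quota_free_space_gb(quota_limit_gb, files))  # 4.0
    files[0].tiered = True
    print(quota_free_space_gb(quota_limit_gb, files))  # still 4.0
```

In this model, tiering the 1 GB file leaves the quota-reported free space unchanged, which mirrors the point that FSRM looks at the logical size rather than the space actually used on disk.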

For example, consider this configuration:

• Volume: 10 GB

• Quota configured on the root of the volume, which is also the root of the server endpoint

• Quota: 20 GB

o Note: By default, the UI does not allow this (it allows a maximum of 10 GB), but the cmdlet allows 20 GB.

• Free space percent: 20%

If you have any additional questions or need further clarification, please let me know.

----------

Please do not forget to "Accept the answer" and "up-vote" wherever the information provided helps you, as this can benefit other community members.