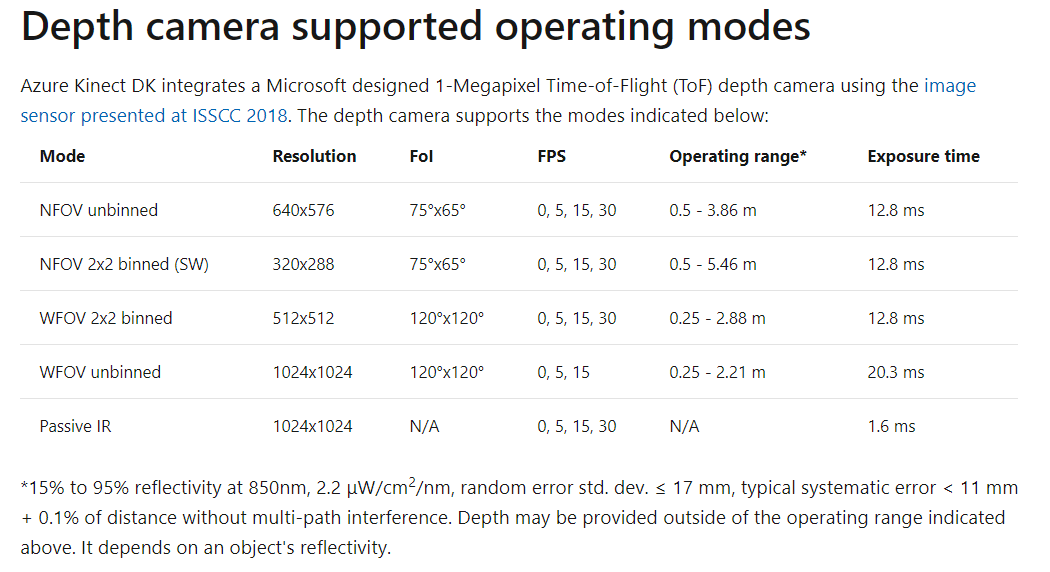

@Chhay Lyhour Azure Kinect DK will not meet your requirements. The minimum distance is 25 cm in WFOV mode, and the Z resolution is ~1 cm. This is a limitation of the camera design, not of the technology. It would be possible to build a bespoke depth camera using Kinect technology that meets your requirements. That said, the use case would have to justify the cost of developing such a camera.

Can the Kinect depth camera work at macro scale?

I want to know whether a Kinect RGB-D camera can estimate the depth of small objects (0.5 mm to 5 mm deep). I want to capture the aggregate (small rocks) in the pavement surface texture. The distance from the object is 15 cm. I want to combine the depth image with the RGB image to improve object detection (aggregate detection and counting). I am concerned that depth information cannot be obtained at such a small scale. Is there any type of Kinect camera that addresses this problem? Thank you very much.

Azure Kinect DK

5 answers

JAMES MORGENSTERN 196 Reputation points

2021-04-21T23:10:58.583+00:00

@Chhay Lyhour I will respond with my own experience: imaging at a 40-60 cm standoff, I was able to detect depth differences on the order of 1 to 2 mm. Much of the error came from multiple reflections at the edge/background boundaries. I was able to minimize this problem by using a sheet of plexiglass as the background for my objects. I would encourage you to get a unit and try some experiments.

JAMES MORGENSTERN 196 Reputation points

2021-04-23T16:03:42.48+00:00

@Chhay Lyhour I cannot advise you directly, as I do not understand your problem in its entirety. I would offer this guidance: do not treat any sensor system simply as a recipe or tool that you mindlessly employ. You need to start with the physical problem you have and model it. What is the object/target you are interested in? What characteristic of this object are you trying to measure? How will you differentiate the object of interest from the background: by color? Texture? 2D shape? 3D shape? What physical characteristic(s) of the object will you exploit?

Model it: write out the equations that describe the sensing problem and the physics of data acquisition. Why are you limited to a 15 cm distance? What other parts of the imaging problem can you exploit? For example, is the object moving, so that there is information in the differences from image to image? Or is the scene static, so that you can accumulate a set of images and use the oversampling in time to your advantage by converting it into oversampling in space?

Can you fill out your profile to indicate your professional presence?
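One simple way to act on the "oversampling in time" idea for a static scene is to average many depth frames pixel by pixel. The sketch below is a minimal Python/NumPy illustration, not the poster's method. It assumes each frame is a 2-D array of depth values in millimetres where 0 marks an invalid pixel (the convention used by Azure Kinect depth images); `average_depth_frames` is a hypothetical helper name. If the per-frame noise is independent and zero-mean with standard deviation σ, the mean of N frames has standard deviation σ/√N, so a tenfold noise reduction needs roughly 100 frames. Averaging does not remove systematic errors, such as the edge reflection effects described in the first answer.

```python
import numpy as np


def average_depth_frames(frames):
    """Average a stack of depth frames pixel-wise, ignoring invalid (zero) pixels.

    Assumption: each frame is a 2-D array of depth in millimetres and 0 means
    "no valid measurement". Returns (mean_depth, valid_sample_count).
    """
    stack = np.stack([np.asarray(f, dtype=np.float64) for f in frames])
    valid = stack > 0                      # mask of usable samples per frame
    counts = valid.sum(axis=0)             # how many frames contributed per pixel
    sums = np.where(valid, stack, 0.0).sum(axis=0)
    # Pixels with no valid sample stay at 0, matching the input convention.
    mean = np.divide(sums, counts, out=np.zeros_like(sums), where=counts > 0)
    return mean, counts


if __name__ == "__main__":
    # Synthetic check: a flat scene 400 mm away with 5 mm Gaussian noise per frame.
    rng = np.random.default_rng(0)
    true_depth = np.full((4, 4), 400.0)
    frames = [true_depth + rng.normal(0.0, 5.0, true_depth.shape) for _ in range(100)]
    mean, counts = average_depth_frames(frames)
    # The worst-case error is typically well under the 5 mm per-frame noise.
    print(np.abs(mean - true_depth).max())
```

Skipping zero pixels matters because a single dropped sample would otherwise pull the average toward 0 and create a false depth step.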

JAMES MORGENSTERN 196 Reputation points

2021-04-24T14:58:03.803+00:00

@Chhay Lyhour I have some suggestions for you, but I think we have moved beyond the scope of this forum. Please contact me directly: you can find my contact info on my profile, or email me at jm at ImageMining.net.

JAMES MORGENSTERN 196 Reputation points

2021-04-25T14:16:27.64+00:00

@Chhay Lyhour Yes to your question.

Here is a thought you might try: mount the Kinect on a tripod, but instead of looking vertically down at the pavement, place it to the side and rotate it so that it views the surface at a shallow grazing angle to the horizontal, 10 or maybe 20 degrees. Measure the inclination from the horizontal and collect your image(s). This gets you around both the problem of being far enough from the aggregate and the Kinect's limited range resolution: the height of the aggregate now obscures some portion of the surface "behind" it, and you can work out the geometry to solve for the actual height of the aggregate.
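The geometry of the grazing-angle setup can be sketched under two simplifying assumptions that are not part of the original post: the pavement is flat, and the camera is far enough away that its viewing rays reach the surface at roughly the same elevation angle θ (an orthographic approximation). A stone of height h then hides a strip of surface of horizontal length L = h / tan θ behind it, so h = L · tan θ. At θ = 10°, 1/tan θ ≈ 5.67, so the hidden strip is several times longer than the stone is tall, turning a small height into a larger lateral distance. The function names below are hypothetical, and measuring L in millimetres from the image still requires a ground-plane scale, which this sketch does not cover.

```python
import math


def occlusion_from_height(height_mm, elevation_deg):
    """Horizontal length of surface hidden behind an object of the given height.

    Assumes a flat surface and parallel viewing rays at elevation_deg above
    the horizontal.
    """
    if not 0 < elevation_deg < 90:
        raise ValueError("elevation_deg must be strictly between 0 and 90")
    return height_mm / math.tan(math.radians(elevation_deg))


def height_from_occlusion(occluded_length_mm, elevation_deg):
    """Invert the relation above: h = L * tan(theta)."""
    if not 0 < elevation_deg < 90:
        raise ValueError("elevation_deg must be strictly between 0 and 90")
    return occluded_length_mm * math.tan(math.radians(elevation_deg))


if __name__ == "__main__":
    for angle in (10.0, 20.0):
        hidden = occlusion_from_height(2.0, angle)
        print(f"2 mm stone at {angle:.0f} deg hides {hidden:.2f} mm of surface")
```

The trade-off is visible in the two angles: a shallower angle gives a longer hidden strip per millimetre of height (better sensitivity), but neighbouring stones are also more likely to hide each other.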

For @Anonymous: this is another example where it would be very helpful to get the range measurement from the Kinect rather than the processed depth.