Hello @Samy Abdul,

Thanks for the question and for using the Microsoft Q&A platform.

The question doesn't include details about your business requirements, so I'm sharing a solution based on best practices.

Event Hubs is a fully managed Platform-as-a-Service (PaaS) with little configuration or management overhead, so you can focus on your business solutions.

Event Hubs for Apache Kafka ecosystems gives you the PaaS Kafka experience without having to manage, configure, or run your clusters.
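To illustrate the Kafka point, here is a minimal sketch of the client settings an existing Kafka application would typically need in order to talk to an Event Hubs namespace. The function name is hypothetical, the key names assume a librdkafka-style client (for example `confluent-kafka`), and the namespace and connection string are placeholders you would replace with your own values.

```python
def kafka_config_for_event_hubs(namespace: str, connection_string: str) -> dict:
    """Build Kafka client settings that point at an Event Hubs namespace.

    Hypothetical helper for illustration; key names assume a
    librdkafka-style client such as confluent-kafka.
    """
    return {
        # Event Hubs exposes its Kafka endpoint on port 9093.
        "bootstrap.servers": f"{namespace}.servicebus.windows.net:9093",
        # The Kafka endpoint requires TLS with SASL PLAIN authentication.
        "security.protocol": "SASL_SSL",
        "sasl.mechanism": "PLAIN",
        # The user name is the literal string "$ConnectionString";
        # the password is the namespace (or event hub) connection string.
        "sasl.username": "$ConnectionString",
        "sasl.password": connection_string,
    }
```

The resulting dictionary could be passed to a Kafka producer or consumer constructor; the topic name then corresponds to the event hub name.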

Are you looking to stream data from Event Hubs and then store the data in ADLS?

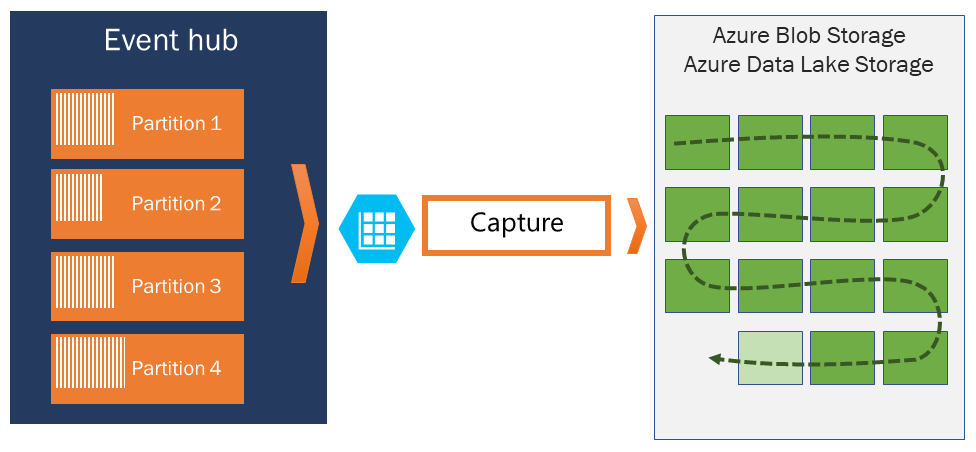

Azure Event Hubs enables you to automatically capture the streaming data in Event Hubs in an Azure Blob storage or Azure Data Lake Storage Gen 1 or Gen 2 account of your choice, with the added flexibility of specifying a time or size interval. Setting up Capture is fast, there are no administrative costs to run it, and it scales automatically with Event Hubs throughput units in the standard tier or processing units in the premium tier. Event Hubs Capture is the easiest way to load streaming data into Azure, and enables you to focus on data processing rather than on data capture.

For more details, refer to Capture events through Azure Event Hubs in Azure Blob Storage or Azure Data Lake Storage
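To make the "time or size interval" idea concrete, here is a toy model of a capture window in which whichever limit is reached first closes the current file. This is only a sketch for intuition, not how the service is implemented: the `Event` class, the `capture_windows` function, and the numbers used are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Event:
    arrival_s: float  # seconds since the start of the stream
    size_bytes: int   # payload size of the event


def capture_windows(events, max_seconds, max_bytes):
    """Group events into capture files; a file closes when either limit is hit first."""
    windows, current, start, total = [], [], None, 0
    for ev in events:
        if start is None:
            start = ev.arrival_s
        # Time limit: close the open window before adding a late event.
        if current and ev.arrival_s - start >= max_seconds:
            windows.append(current)
            current, start, total = [], ev.arrival_s, 0
        current.append(ev)
        total += ev.size_bytes
        # Size limit: close the window as soon as enough bytes have accumulated.
        if total >= max_bytes:
            windows.append(current)
            current, start, total = [], None, 0
    if current:
        windows.append(current)  # flush the last partially filled window
    return windows


if __name__ == "__main__":
    stream = [Event(0, 100), Event(10, 100), Event(70, 100), Event(75, 900), Event(80, 100)]
    for i, window in enumerate(capture_windows(stream, max_seconds=60, max_bytes=1000)):
        print(i, [(e.arrival_s, e.size_bytes) for e in window])
```

In this model, a smaller window gives you fresher files in storage at the cost of more, smaller files to process downstream.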

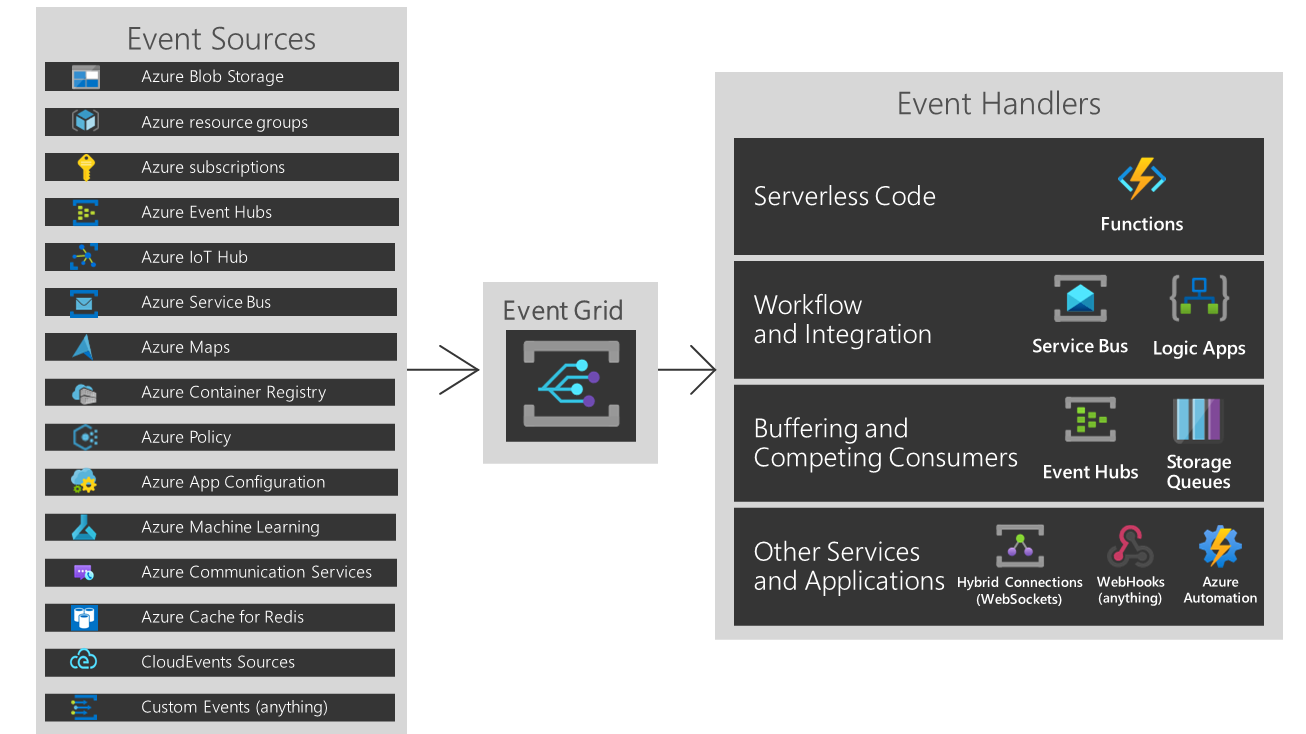

Azure Event Grid allows you to easily build applications with event-based architectures. First, select the Azure resource you would like to subscribe to, and then give the event handler or WebHook endpoint to send the event to. Event Grid has built-in support for events coming from Azure services, like storage blobs and resource groups. Event Grid also has support for your own events, using custom topics.

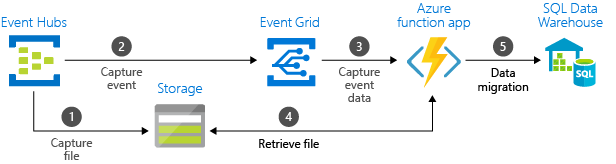

Azure Event Grid is an intelligent event routing service that enables you to react to notifications or events from apps and services. For example, it can trigger an Azure Function to process Event Hubs data that's captured to Blob storage or Data Lake Storage. The image shows how to use Event Grid and Azure Functions to migrate captured Event Hubs data from Blob storage to Azure Synapse Analytics, specifically a dedicated SQL pool.

For more details, refer to Tutorial: Stream big data into a data warehouse
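As a rough sketch of the function side of that flow, the snippet below picks out the captured files worth loading from a batch of Event Grid events. The field names (`eventType`, `data.fileUrl`, `data.eventCount`) follow the Event Grid schema for Event Hubs capture events as I understand it, so please verify them against the schema documentation. The function name and the sample URLs are hypothetical, and the actual load into the dedicated SQL pool is left out.

```python
CAPTURE_EVENT_TYPE = "Microsoft.EventHub.CaptureFileCreated"


def capture_files_to_load(events):
    """Return the URLs of captured files that actually contain events.

    `events` is a list of Event Grid event dictionaries, as a function
    would receive them after JSON decoding.
    """
    urls = []
    for event in events:
        # Ignore anything that is not a capture notification.
        if event.get("eventType") != CAPTURE_EVENT_TYPE:
            continue
        data = event.get("data", {})
        # A capture file can be empty when no events arrived in the window.
        if data.get("eventCount", 0) > 0:
            urls.append(data["fileUrl"])
    return urls


if __name__ == "__main__":
    sample = [
        {"eventType": CAPTURE_EVENT_TYPE,
         "data": {"fileUrl": "https://example.invalid/capture/0/a.avro", "eventCount": 42}},
        {"eventType": CAPTURE_EVENT_TYPE,
         "data": {"fileUrl": "https://example.invalid/capture/0/b.avro", "eventCount": 0}},
    ]
    print(capture_files_to_load(sample))
```

Filtering on the event count before downloading avoids spending function executions on empty Avro files.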

What are the key considerations to make ingestion of streaming data faster?

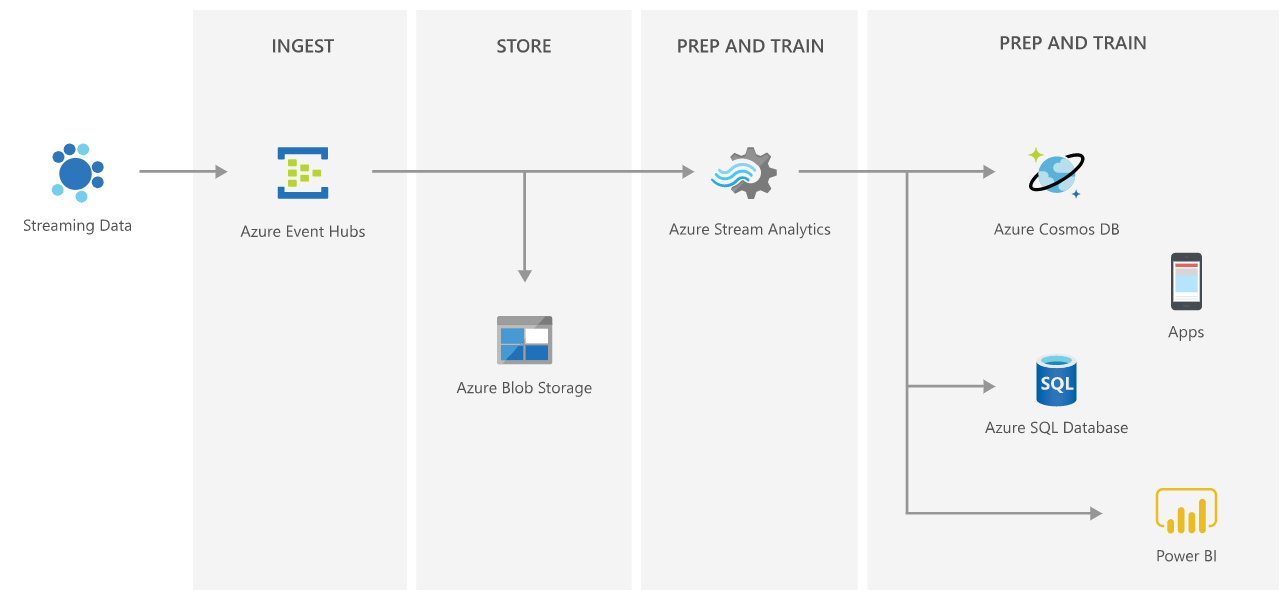

Build an end-to-end serverless streaming platform with Event Hubs and Stream Analytics

This reference architecture shows a serverless, event-driven architecture that ingests a stream of data, processes the data, and writes the results to a back-end database.

Additional streaming tutorials:

- Visualize data anomalies on Event Hubs data streams

- Analyze fraudulent call data with Stream Analytics

- Store captured data in Azure Synapse Analytics

- Process Apache Kafka for Event Hubs events using Stream Analytics

- Stream data into Azure Databricks using Event Hubs

Hope this helps. Do let us know if you have any further queries.

---------------------------------------------------------------------------

Please "Accept the answer" if the information helped you. This will help us and others in the community as well.