Hello @Ruben Dario Reyes Monsalve

Welcome to the Microsoft Q&A platform.

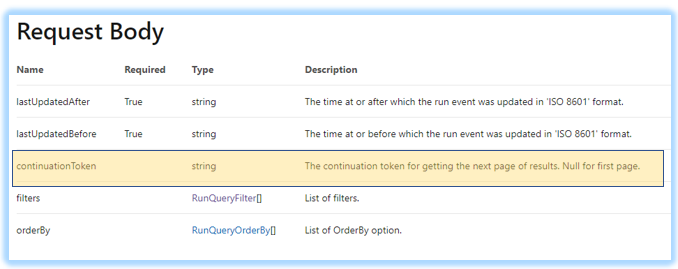

Currently, the Pipeline Runs - Query By Factory endpoint supports pagination only through the request body.

The continuation token is read from the request body, not from query parameters or headers.

That is why, in the scenario above, the same data (the first page) kept repeating: the continuation token was passed as a header, so it was not honored.
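To make the difference concrete, here is a minimal Python sketch of the request body for this endpoint. It assumes the body field names `lastUpdatedAfter`, `lastUpdatedBefore`, and `continuationToken` from the REST API's run filter parameters; the helper `build_query_body` and the placeholder token are hypothetical. The point it shows is that the token travels inside the JSON body, next to the time window, rather than in a header.

```python
import json
from typing import Optional


def build_query_body(last_updated_after: str,
                     last_updated_before: str,
                     continuation_token: Optional[str] = None) -> str:
    """Build a JSON request body for Pipeline Runs - Query By Factory.

    Hypothetical helper: the continuation token is placed inside the body,
    alongside the mandatory time window, not in a header or query parameter.
    """
    body = {
        "lastUpdatedAfter": last_updated_after,
        "lastUpdatedBefore": last_updated_before,
    }
    if continuation_token:  # only sent when requesting a page after the first
        body["continuationToken"] = continuation_token
    return json.dumps(body)


# First page: no token. Next page: token copied from the previous response.
first = build_query_body("2023-01-01T00:00:00Z", "2023-01-02T00:00:00Z")
second = build_query_body("2023-01-01T00:00:00Z", "2023-01-02T00:00:00Z",
                          continuation_token="<token-from-previous-response>")
print(first)
print(second)
```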

Unfortunately, pagination for this endpoint (Pipeline Runs - Query By Factory) cannot currently be achieved via headers, query parameters, or an absolute URI, which are the only forms of pagination the Copy activity supports.

Having said that, here is one workaround I can think of:

**Step 1:** Initialize a variable `ContinuationToken` as blank.

**Step 2:** Run a Copy activity that passes the `ContinuationToken` value in the request body (with merge behavior).

**Step 3:** Run a Web activity against the same endpoint to read the `continuationToken` from the response.

**Step 4:** Set the variable `ContinuationToken` to the `continuationToken` returned by the Web activity.

**Step 5:** Repeat steps 2-4 using an Until activity, until no `continuationToken` is returned.
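The loop in steps 1-5 can be sketched in Python as follows. This only illustrates the control flow and is not Data Factory code: `fetch_page` is a hypothetical stand-in that folds the Copy activity (step 2) and the Web activity (step 3) into one call returning a response shaped like `{"value": [...], "continuationToken": ...}`, and `fake_fetch` serves three made-up pages so the example runs offline.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical stand-in for the Copy + Web activity pair: takes a request body
# dict and returns {"value": [...], "continuationToken": ... (optional)}.
FetchPage = Callable[[Dict], Dict]


def copy_all_pages(fetch_page: FetchPage, after: str, before: str) -> List[Dict]:
    sink: List[Dict] = []          # plays the role of the merged copy output
    token: Optional[str] = ""      # Step 1: ContinuationToken starts blank
    while True:                    # Step 5: the Until loop
        body = {"lastUpdatedAfter": after, "lastUpdatedBefore": before}
        if token:
            body["continuationToken"] = token   # token goes in the body
        response = fetch_page(body)             # Steps 2-3: copy page, read token
        sink.extend(response.get("value", []))
        token = response.get("continuationToken") or ""  # Step 4: set variable
        if not token:              # stop once no token is returned
            return sink


# Made-up pages keyed by the token that requests them.
PAGES = {
    "": {"value": [{"runId": "a"}, {"runId": "b"}], "continuationToken": "t1"},
    "t1": {"value": [{"runId": "c"}], "continuationToken": "t2"},
    "t2": {"value": [{"runId": "d"}]},
}


def fake_fetch(body: Dict) -> Dict:
    return PAGES[body.get("continuationToken", "")]


runs = copy_all_pages(fake_fetch, "2023-01-01T00:00:00Z", "2023-01-02T00:00:00Z")
print([r["runId"] for r in runs])  # ['a', 'b', 'c', 'd']
```

In the pipeline itself, the same roles are played by the `ContinuationToken` variable, a Set Variable activity, and the Until condition; the sketch just makes the termination rule explicit: stop as soon as a response carries no token.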

The end goal is to run the Copy activity iteratively, each time with a different request body, to fetch the next page of data until the continuation token comes back blank. The above is just for demonstration; you can optimize the logic as needed.

Note:

When you pass the continuation token in the request body, make sure you also pass `lastUpdatedAfter` and `lastUpdatedBefore`. These are mandatory parameters of the request body; without them, the data is returned blank.
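A small guard like the one below, a hypothetical helper rather than anything Data Factory provides, can catch a missing time window before the request is sent. It assumes UTC timestamps in ISO 8601 form (`YYYY-MM-DDTHH:MM:SSZ`); `time_window` and `check_body` are illustrative names.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical helpers for illustration; not part of any Azure SDK.
ISO_UTC = "%Y-%m-%dT%H:%M:%SZ"
MANDATORY = ("lastUpdatedAfter", "lastUpdatedBefore")


def time_window(days_back: int, now: datetime) -> dict:
    """Return the mandatory time window ending at `now`, formatted as UTC."""
    end = now.astimezone(timezone.utc)
    start = end - timedelta(days=days_back)
    return {"lastUpdatedAfter": start.strftime(ISO_UTC),
            "lastUpdatedBefore": end.strftime(ISO_UTC)}


def check_body(body: dict) -> None:
    """Raise if a mandatory field is absent or empty."""
    missing = [k for k in MANDATORY if not body.get(k)]
    if missing:
        raise ValueError(f"request body is missing mandatory fields: {missing}")


now = datetime(2023, 1, 8, 12, 0, tzinfo=timezone.utc)
body = time_window(7, now)
body["continuationToken"] = "<token-from-previous-response>"
check_body(body)  # passes: both mandatory fields are present
print(body)
```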

Hope this helps. Please let us know if you have any further queries.
