Hello @kuljeet panag,

Thanks for the question and for using the Microsoft Q&A platform.

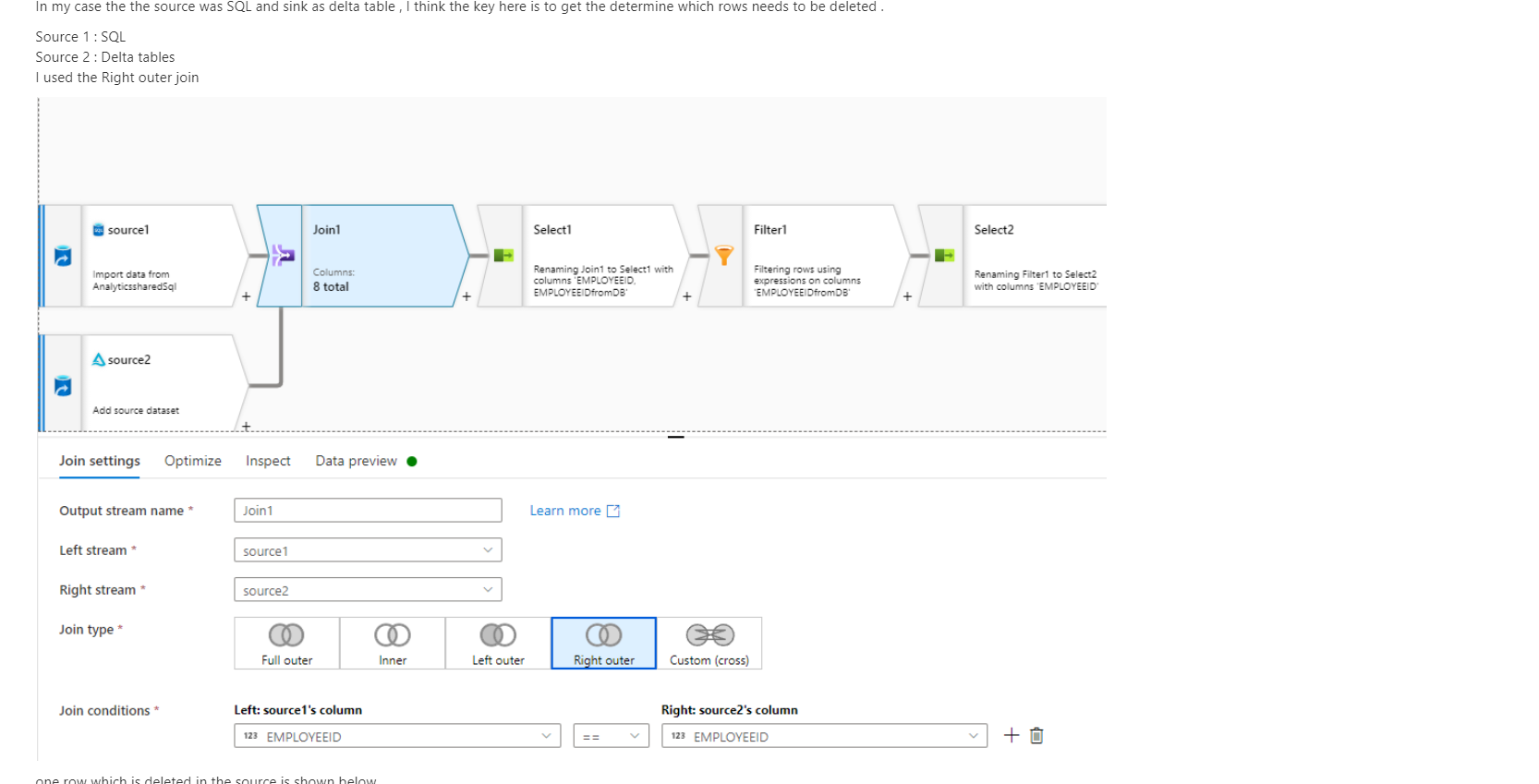

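I think one way to go is to use a mapping data flow. In a mapping data flow, you can create two sources and then combine them with a NOT EXISTS style join (in the data flow designer this is the Exists transformation with its "Doesn't exist" option), which keeps only the rows from the first source that have no match in the second.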
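To make the idea concrete, here is a minimal Python sketch of what a NOT EXISTS join does. This is not data flow code, just an illustration of the logic; the function name `not_exists`, the sample rows, and the key column `id` are all made up for the example.

```python
def not_exists(left_rows, right_rows, key):
    """Return the rows of left_rows whose key value has no match in right_rows."""
    # Collect the key values present on the right side once, for fast lookups.
    right_keys = {row[key] for row in right_rows}
    # Keep only the left rows whose key is absent from the right side.
    return [row for row in left_rows if row[key] not in right_keys]


# Hypothetical sample data standing in for the two data flow sources.
source_rows = [
    {"id": 1, "name": "a"},
    {"id": 2, "name": "b"},
    {"id": 3, "name": "c"},
]
target_rows = [{"id": 2, "name": "b"}]

missing = not_exists(source_rows, target_rows, "id")
print(missing)  # rows with id 1 and 3, since only id 2 exists in target_rows
```

In the data flow, the two sources play the role of `source_rows` and `target_rows`, and the join condition plays the role of `key`.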

If you review this thread: https://learn.microsoft.com/en-us/answers/questions/578219/data-flow-34delete-if34-setting-in-alter-row.html?childToView=580571#answer-580571, it uses the join, and it should give you some idea of how to set it up.

Please let me know how it goes.

Thanks

Himanshu
