anonymous user

Thank you for your patience with us on this thread. We tested this and would like to share our findings.

You asked how to find out what changes were made to NSG rules and who created or updated a network security group. All activity on Azure Resource Manager (ARM) resources is recorded in the Activity Log. You can retrieve this information by querying the platform logs in a Log Analytics workspace. Platform logs provide detailed diagnostic and auditing information for Azure resources. The data needed for this scenario is in the AzureActivity logs, in a table of the same name. The data first needs to be ingested into a Log Analytics workspace, and then it can be analyzed.

Azure Activity logs are retained for 90 days and are then deleted from the backend. To keep data older than 90 days, you have to create a separate Log Analytics workspace and ingest the Activity Logs into it. The first 90 days are not charged; after that, the logs are charged as per the standard Azure Monitor pricing.

As mentioned earlier, the Log Analytics workspace needs to be configured to collect all AzureActivity logs for the subscriptions that you would like to monitor. I tested this in my lab, step by step as described below, by enabling diagnostic settings on the subscription.

- Open the Azure portal.

- Go to the Azure subscription where your users will be creating the network security groups.

- Create a new Log Analytics workspace in your subscription.

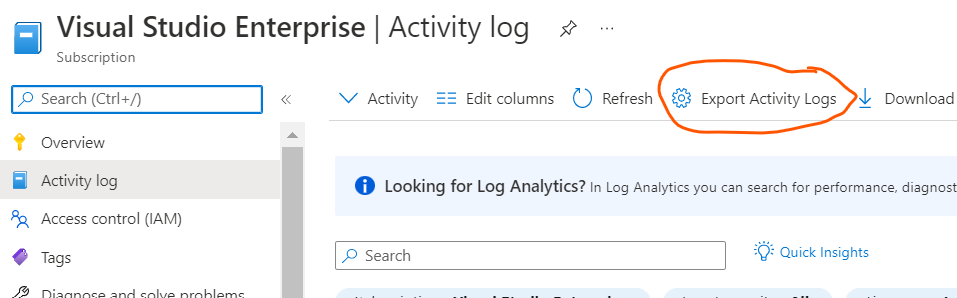

- Click on Subscription > [Your Subscription Name] > Activity Log > Export Activity Log.

- If you have multiple subscriptions, you may have to repeat this for every subscription. All of these subscriptions need to be associated with the same Azure AD instance. For cross-workspace queries, please see this article.

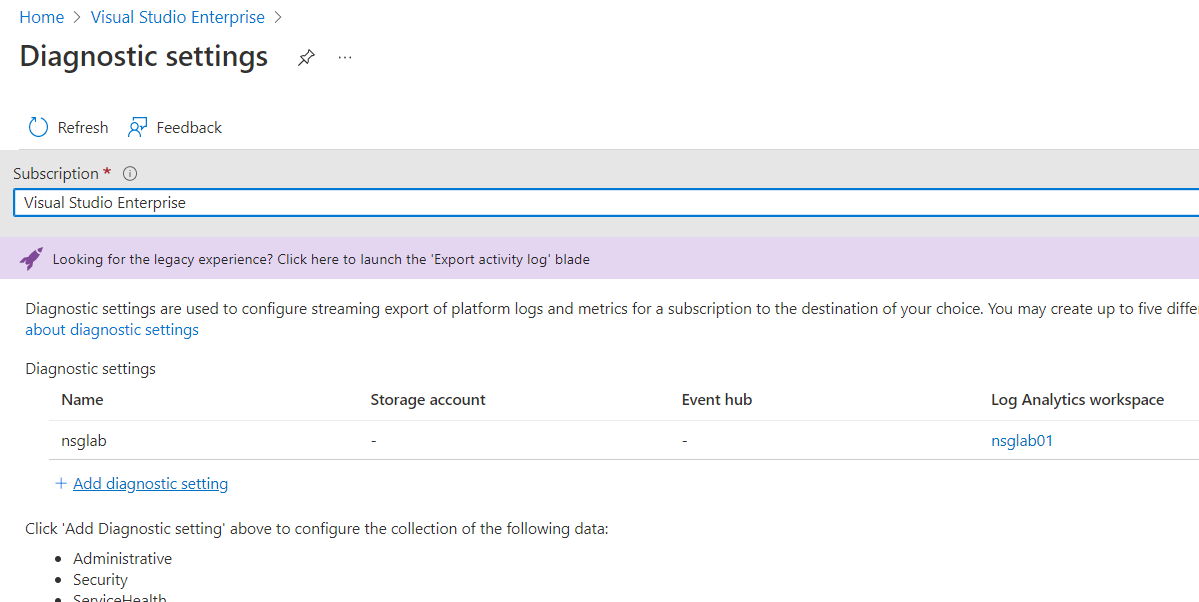

- Once you click Export Activity Log, it shows the diagnostic settings that need to be configured for the Log Analytics workspace to receive the platform logs we need in this case.

- Click on **Add diagnostic setting** to add a diagnostic setting. This is like subscribing to the platform logs, so that the logs generated for this Azure subscription are also copied to the Log Analytics workspace you created.

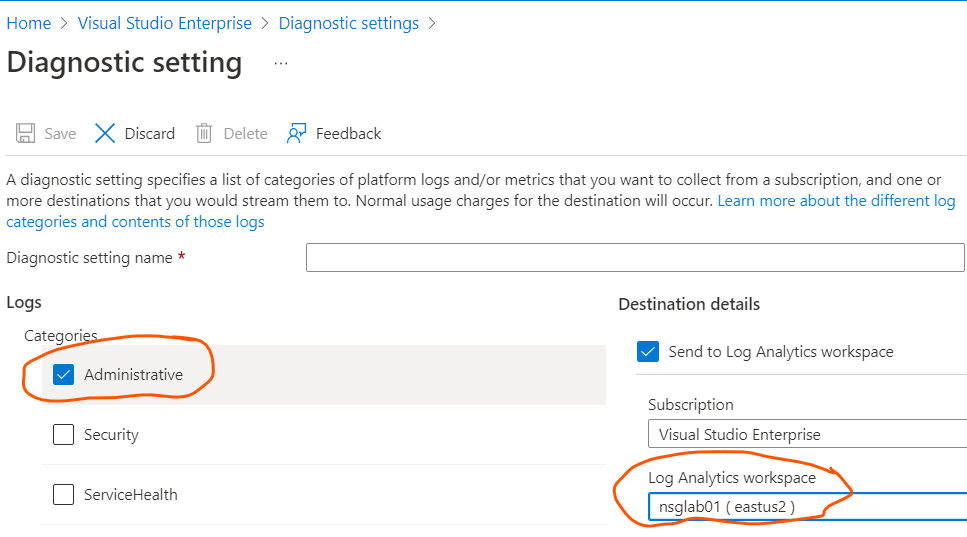

- Azure generates several different categories of logs that you could export to the Log Analytics workspace.

- In this case, choosing only the Administrative logs should be enough, since ingesting all of the data would also increase the cost.

- Once this was set up, the logs started appearing in the Log Analytics workspace in my subscription within about 10 minutes.

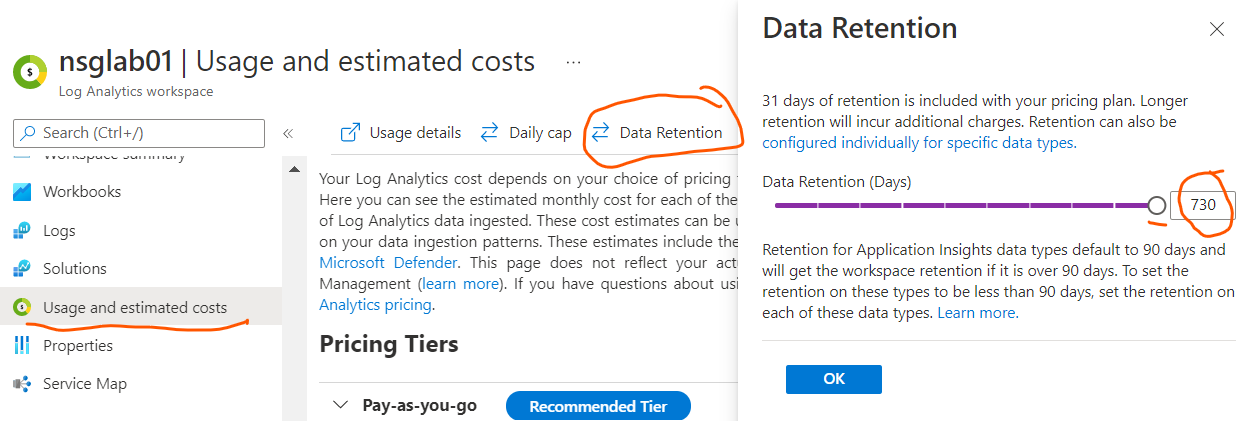

- Data retention for Log Analytics is a maximum of 2 years, as shown below. You can further export the data to a storage account for long-term storage.

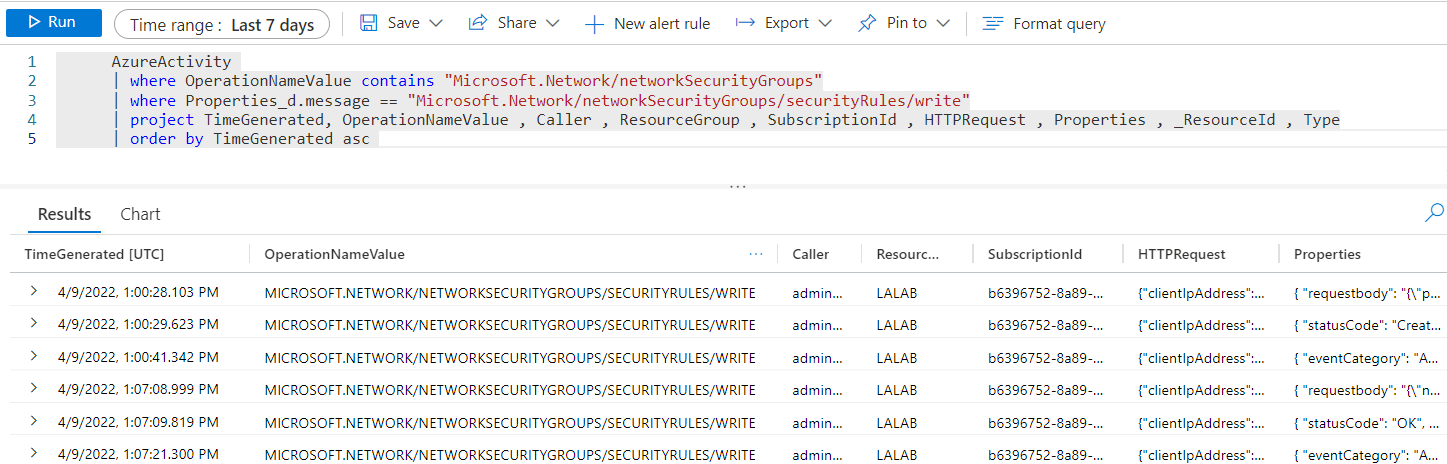

- You can use the following Kusto query to query the Log Analytics workspace. This query searches specifically for network security group rule create and modify operations.

AzureActivity
| where OperationNameValue contains "Microsoft.Network/networkSecurityGroups"
| where Properties_d.message == "Microsoft.Network/networkSecurityGroups/securityRules/write"
| project TimeGenerated, OperationNameValue, Caller, ResourceGroup, SubscriptionId, HTTPRequest, Properties, _ResourceId, Type
| order by TimeGenerated asc

- The following is how the result would look.

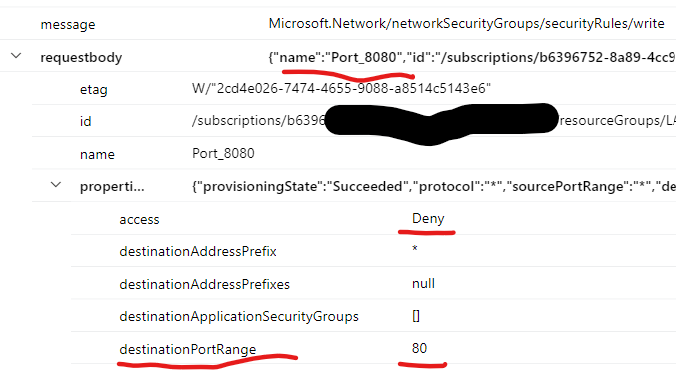

- If you are looking for the exact rule change, you have to expand each of the securityRules/write events and check the details in the request body.

- If you expand the event > Properties > requestbody, you can find the change.

- Here you can see who the caller for this event is and which rules were updated.
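If you export the query results and want to pull out the caller and the rule settings without expanding every event by hand, something along these lines may help. This is only a rough sketch: the `summarize_rule_change` helper, the `RULE_FIELDS` list and the sample record are hypothetical and made up for illustration, and it assumes each exported row keeps the `Caller`, `TimeGenerated`, `_ResourceId` and `Properties` columns from the query above, with `requestbody` stored as a JSON string inside `Properties`.

```python
import json

# Security rule settings that are usually worth surfacing in an audit.
# The names follow the ARM security rule schema; trim or extend as needed.
RULE_FIELDS = (
    "access", "direction", "priority", "protocol",
    "sourceAddressPrefix", "sourcePortRange",
    "destinationAddressPrefix", "destinationPortRange",
)


def summarize_rule_change(record):
    """Summarize one exported AzureActivity row (hypothetical helper)."""
    props = record.get("Properties") or {}
    if isinstance(props, str):
        # Properties is often exported as a JSON string rather than an object.
        props = json.loads(props)
    body = props.get("requestbody")
    if isinstance(body, str) and body:
        # requestbody is assumed to be a JSON string nested inside Properties.
        body = json.loads(body)
    rule = (body or {}).get("properties", {})
    return {
        "caller": record.get("Caller"),
        "time": record.get("TimeGenerated"),
        "rule_id": record.get("_ResourceId"),
        "settings": {k: rule[k] for k in RULE_FIELDS if k in rule},
    }


# Made-up sample row, shaped like the projected columns of the query above.
sample = {
    "TimeGenerated": "2021-01-01T10:00:00Z",
    "Caller": "user@example.com",
    "_ResourceId": "/subscriptions/<subscription-id>/resourcegroups/rg-demo"
                   "/providers/microsoft.network/networksecuritygroups/nsg-demo"
                   "/securityrules/allow-ssh",
    "Properties": json.dumps({
        "requestbody": json.dumps({
            "properties": {
                "access": "Allow",
                "direction": "Inbound",
                "priority": 100,
                "protocol": "Tcp",
                "destinationPortRange": "22",
            }
        })
    }),
}

summary = summarize_rule_change(sample)
print(json.dumps(summary, indent=2))
```

Rows without a request body simply come back with an empty `settings` dictionary, so the same helper can be run over the whole export.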

Note that this setup does not show any old logs; it only ingests logs from the day you enable it. The data can be retained for a maximum of 2 years. However, you should consider the cost before going ahead with this.

Exporting and storing these logs in an Azure storage account would be more cost effective; however, in that case you would have to download the logs, and you would not get the flexibility of running KQL queries from the portal. You can enable a diagnostic setting that sends the logs to a storage account, similar to what is shown in this article. I strongly suggest understanding how logs are stored in the storage account by reading this doc. You can download these logs using Storage Explorer whenever you need to audit them. This can reduce the overall cost of auditing NSGs in your scenario.
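If you have many subscriptions and would rather not click through the portal for each one, the same diagnostic setting can in principle be created through the Azure Resource Manager REST API. The sketch below only builds the request URL and body and does not send anything. Treat it as an assumption-laden illustration: the `build_diagnostic_setting` helper, the setting name and the placeholder IDs are made up, and the API version shown is one I believe exists for subscription-level diagnostic settings, so please check the current REST reference before relying on it.

```python
import json

# Assumption: an API version for subscription diagnostic settings.
# Verify against the current Azure Monitor REST reference.
API_VERSION = "2021-05-01-preview"


def build_diagnostic_setting(subscription_id, setting_name,
                             workspace_id=None, storage_account_id=None,
                             categories=("Administrative",)):
    """Build the PUT URL and JSON body for a subscription diagnostic setting.

    Hypothetical helper: it prepares the request but does not call Azure.
    """
    if not (workspace_id or storage_account_id):
        raise ValueError("Provide a workspace ID, a storage account ID, or both")

    url = (
        f"https://management.azure.com/subscriptions/{subscription_id}"
        f"/providers/Microsoft.Insights/diagnosticSettings/{setting_name}"
        f"?api-version={API_VERSION}"
    )
    # Only the listed Activity Log categories are exported, which keeps cost down.
    properties = {
        "logs": [{"category": c, "enabled": True} for c in categories],
    }
    if workspace_id:
        properties["workspaceId"] = workspace_id
    if storage_account_id:
        properties["storageAccountId"] = storage_account_id
    return url, {"properties": properties}


# Placeholder values for illustration only.
url, body = build_diagnostic_setting(
    subscription_id="<subscription-id>",
    setting_name="nsg-audit",
    storage_account_id="<storage-account-resource-id>",
)
print("PUT", url)
print(json.dumps(body, indent=2))
```

The request would still need to be sent with a valid bearer token by a caller that has permission to manage diagnostic settings on the subscription. Passing `workspace_id` instead of (or together with) `storage_account_id` covers the Log Analytics case described in the steps above.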

Hope this helps answer your query. I have included multiple links from our documentation, and I suggest you go through them, as they will help you understand more about the options for log storage. If the information provided is helpful, please accept the post as the answer to improve the relevancy of this answer for the community and other readers. If you have any other questions on this, feel free to let us know and we will be happy to help you further.

Thank you.
