Hello @Samyak,

Thanks for the question and for using the MS Q&A platform.

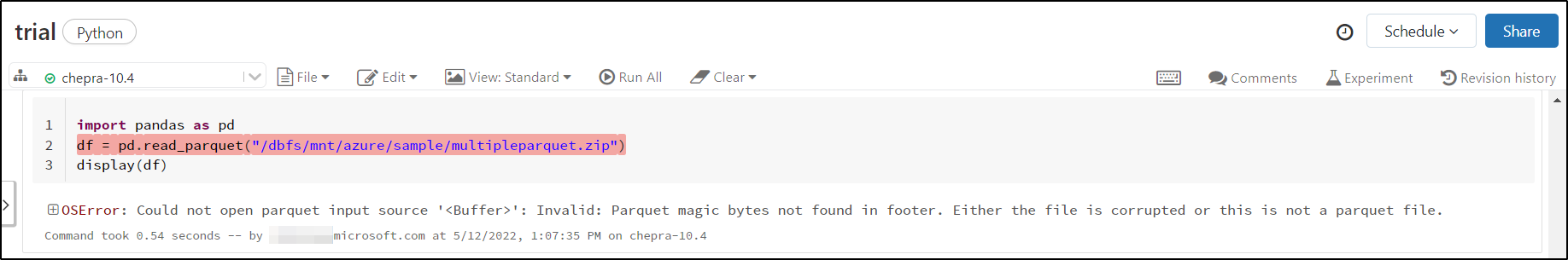

When I tried to read multiple parquet.gzip files using pandas, I got this error message:

OSError: Could not open parquet input source '<Buffer>': Invalid: Parquet magic bytes not found in footer. Either the file is corrupted or this is not a parquet file.

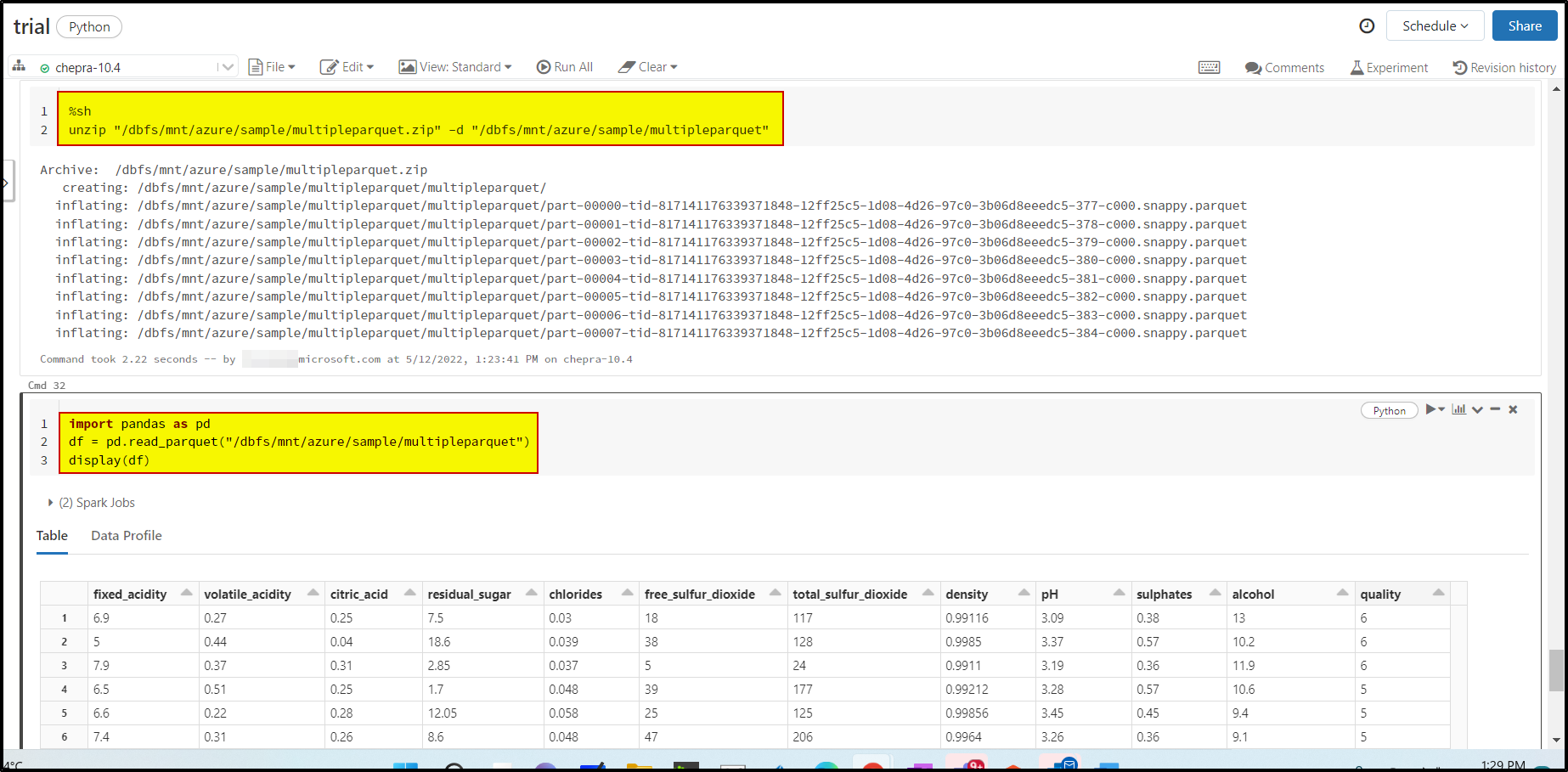
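The error refers to the 4-byte marker `PAR1` that a Parquet file carries at both its start and its end, whereas a gzip stream starts with the bytes `1f 8b`. As a rough sketch (the function name `sniff_format` is my own, not part of pandas or Databricks), you could check which of the two a file actually is before trying to read it:

```python
import os

PARQUET_MAGIC = b"PAR1"   # a Parquet file begins and ends with these 4 bytes
GZIP_MAGIC = b"\x1f\x8b"  # a gzip stream begins with these 2 bytes


def sniff_format(path):
    """Return 'parquet', 'gzip', or 'unknown' based on the file's magic bytes."""
    with open(path, "rb") as f:
        head = f.read(4)
        f.seek(0, os.SEEK_END)
        size = f.tell()
        tail = b""
        # Only read the footer when header and footer cannot overlap.
        if size >= 8:
            f.seek(-4, os.SEEK_END)
            tail = f.read(4)
    if head == PARQUET_MAGIC and tail == PARQUET_MAGIC:
        return "parquet"
    if head[:2] == GZIP_MAGIC:
        return "gzip"
    return "unknown"
```

If this returns `'gzip'`, the file is most likely a compressed wrapper around the Parquet data and would need to be decompressed first. If it returns `'parquet'`, the file should be readable as-is and the problem probably lies elsewhere.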

After a bit of research, I found this document, Azure Databricks - Zip Files, which explains how to unzip the files first and then load them directly.

You can invoke the Azure Databricks `%sh` magic command to unzip the file, and then read the unzipped file using pandas.
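If you prefer to stay in Python rather than shell out, here is a minimal sketch of the same unzip-then-read idea. It assumes the file is gzip-wrapped (a `.zip` archive would need `zipfile` or `unzip` instead) and that pandas is installed with a Parquet engine such as pyarrow. The helper names and the paths in the usage note are hypothetical.

```python
import gzip
import shutil


def gunzip_file(src_path, dest_path):
    """Decompress a gzip-wrapped file to dest_path and return dest_path."""
    with gzip.open(src_path, "rb") as src, open(dest_path, "wb") as dest:
        shutil.copyfileobj(src, dest)  # streams in chunks, so large files are fine
    return dest_path


def read_gzipped_parquet(src_path, dest_path):
    """Decompress src_path, then load the resulting Parquet file with pandas."""
    # Imported here so gunzip_file can be used without pandas installed.
    import pandas as pd
    return pd.read_parquet(gunzip_file(src_path, dest_path))
```

On Databricks you would typically point this at a local-style path, for example `read_gzipped_parquet("/dbfs/tmp/data.parquet.gzip", "/dbfs/tmp/data.parquet")`, where both paths are placeholders for your own locations.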

Hope this helps. Please let us know if you have any further queries.

------------------------------

- Please don't forget to accept the answer or upvote whenever the information provided helps you. Original posters help the community find answers faster by identifying the correct answer. Here is how

- Want a reminder to come back and check responses? Here is how to subscribe to a notification

- If you are interested in joining the VM program and helping shape the future of Q&A: Here is how you can be part of Q&A Volunteer Moderators