Hello @Chris and welcome to Microsoft Q&A.

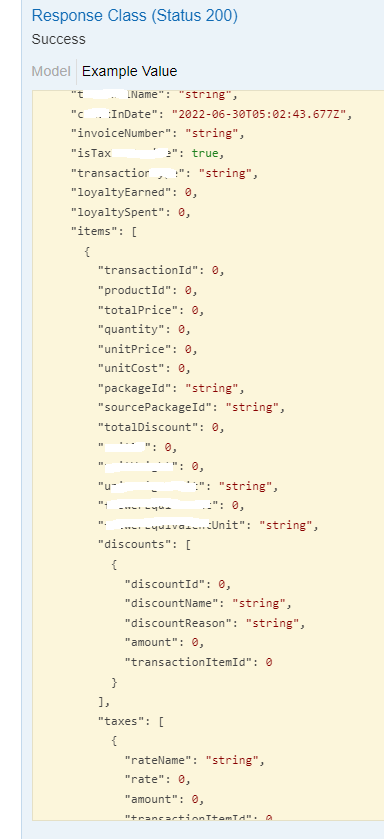

As I understand, you are looking for advice on both Data Flow and Copy activity for HTTP(JSON) -> Azure SQL DB, or REST -> Azure SQL DB. The schema is not expected to change, and is likely to contain empty or null data, possible the entire thing. The form these will take is another question. First, I will provide some baseline info.

First of all, the Copy Activity can only flatten one level of nesting. For more than one level, you need to either use Data Flow, multiple Copy Activities, or do it SQL side.

Copy Activity supports HTTP data set type, but Data Flow does not support HTTP data set type. Both support REST connector. REST connector expects JSON, or in the case of Data Flow, JSON or XML.

If you need to do pagination, REST is better than HTTP usually.

Data Flow provides more flexibility on null or missing fields than Copy Activity does.

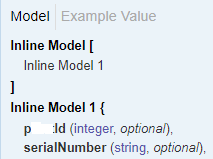

If you want all columns (even if missing from source data) to be present in your dataflow, then the easiest way is to specify them in the Dataset schema.

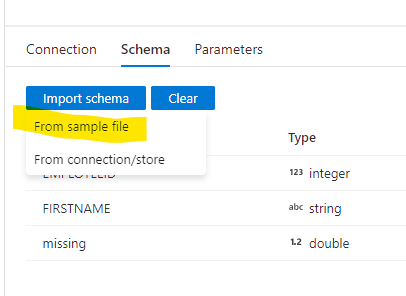

What you want to do, is when you make the dataset (or afterwards) import the schema from that template file. Screenshot below on a JSON dataset.

Unfortunately, the REST connector doesn't have a schema to work with. This is probably what brought you here. You mentioned you are using both Data Flow and Copy Activity. Are you perhaps doing a 2-step process?

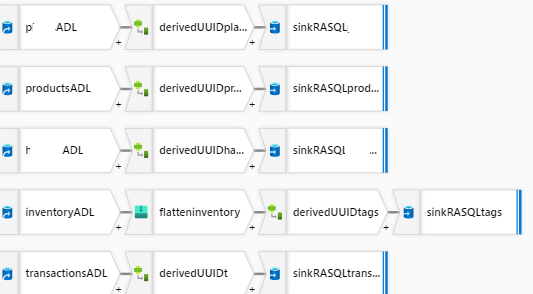

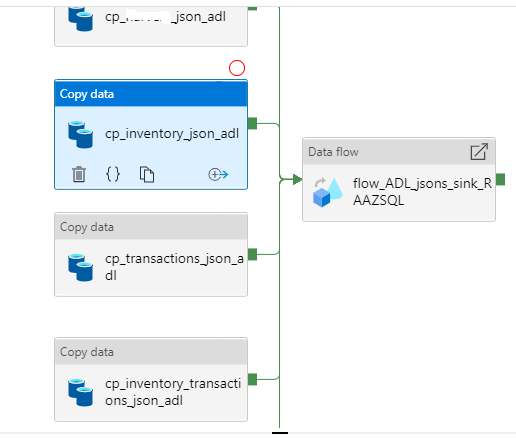

HTTP --copy_activity--> Blob (JSON) then Blob (JSON) --Data_Flow--> SQL

This would allow us to make use of the schema in the JSON dataset as demonstrated below:

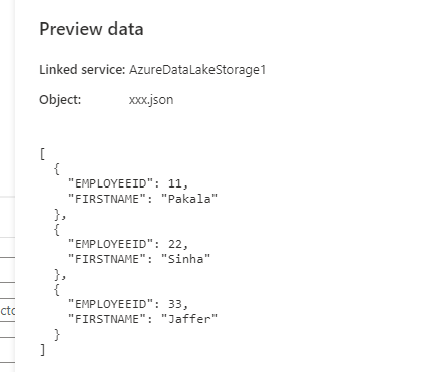

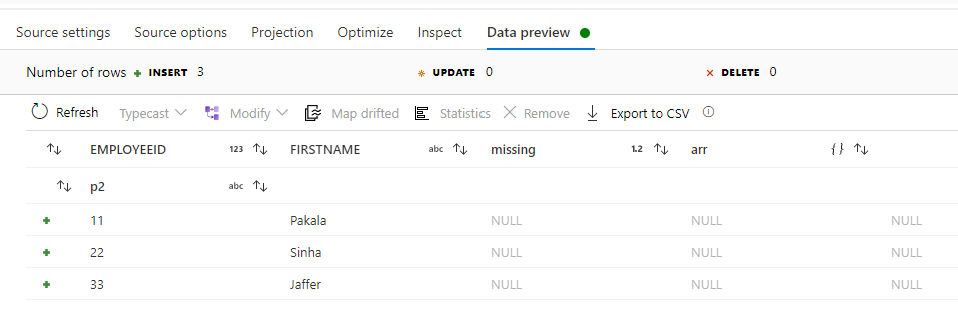

Here I have taken a JSON file (in my blob storage) which only has a couple basic properties. (Data is made up)

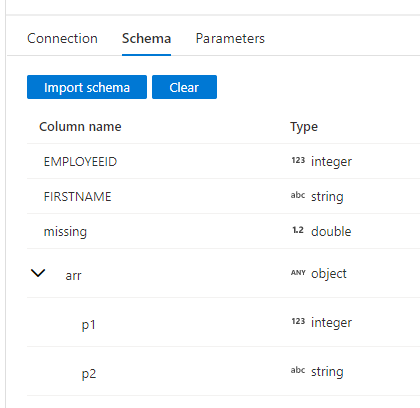

Then I altered its schema (manually) to add in complex properties which do not exist in the data.

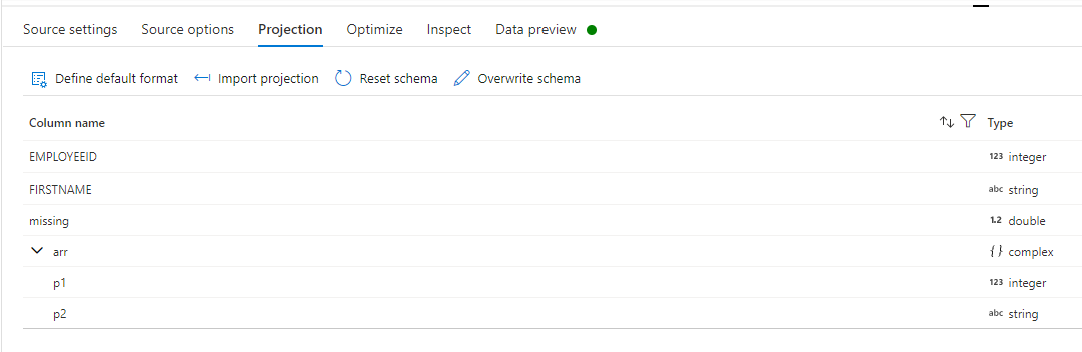

This is then reflected in the Dataflow, and shows up as nulls.

Please do let me if you have any queries.

Thanks

Martin

- Please don't forget to click on

or upvote

or upvote  button whenever the information provided helps you. Original posters help the community find answers faster by identifying the correct answer. Here is how

button whenever the information provided helps you. Original posters help the community find answers faster by identifying the correct answer. Here is how - Want a reminder to come back and check responses? Here is how to subscribe to a notification

- If you are interested in joining the VM program and help shape the future of Q&A: Here is how you can be part of Q&A Volunteer Moderators