Hello @eugenia apostolopoulou,

Thanks for the question and for using the MS Q&A platform.

As we understand it, the ask here is how to partition data in Spark. Please do let us know if that's not accurate.

> I read that the PySpark method `partitionBy()` is not recommended

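It would be great if you could point me to the document where you read that. I do know that both too few and too many partitions can hurt performance: too few limits parallelism and produces very large files, while too many adds overhead and produces lots of small files and folders.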
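To make the "too few vs. too many" point a bit more concrete, here is a rough sizing sketch in plain Python. It assumes a target of about 128 MB per partition, which is a common rule of thumb (it is also the default of `spark.sql.files.maxPartitionBytes` on the read side), not a value taken from your data; `suggest_num_partitions` is a hypothetical helper, not a Spark API.

```python
import math

# Assumed target size per partition/output file (a rule of thumb, tune for your data)
TARGET_PARTITION_BYTES = 128 * 1024 * 1024


def suggest_num_partitions(total_bytes, target_bytes=TARGET_PARTITION_BYTES):
    """Hypothetical helper: pick a partition count that keeps each one near target_bytes.

    Too few partitions -> little parallelism and very large tasks/files.
    Too many partitions -> scheduling overhead and lots of tiny files.
    """
    if total_bytes <= 0:
        return 1
    return max(1, math.ceil(total_bytes / target_bytes))


print(suggest_num_partitions(10 * 1024**3))  # 10 GiB of data -> 80
```

If the estimate looks reasonable, the number could be passed to `jdbc_df.repartition(n)` before writing; treat it as a starting point to tune rather than a fixed rule.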

Looking at the folder structure, I think you can go with the code below.

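```python
from pyspark.sql.functions import to_date

# Derive a date-only column from DateOpened to use as the partition key
jdbc_df1 = jdbc_df.withColumn("NewDate", to_date("DateOpened"))
jdbc_df1.show()
```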
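One reason to partition on the derived `NewDate` rather than on `DateOpened` directly: if `DateOpened` holds timestamps, `partitionBy` creates one folder per distinct value, which can mean a very large number of tiny folders. The plain-Python sketch below uses made-up sample timestamps and simply counts how many folders each choice would produce; `.date()` stands in for what `to_date()` roughly does to a timestamp column.

```python
from collections import Counter
from datetime import datetime

# Made-up DateOpened timestamps, for illustration only
date_opened = [
    datetime(2021, 3, 1, 9, 15),
    datetime(2021, 3, 1, 14, 2),
    datetime(2021, 3, 2, 8, 45),
    datetime(2021, 3, 2, 17, 30),
    datetime(2021, 3, 3, 11, 0),
]

# Rows per folder if we partitioned on the raw timestamp
by_timestamp = Counter(date_opened)
# Rows per folder if we partitioned on the date part only
by_date = Counter(ts.date() for ts in date_opened)

print(len(by_timestamp), "folders when partitioning on DateOpened")  # 5
print(len(by_date), "folders when partitioning on NewDate")  # 3
```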

Writing with `partitionBy()`:

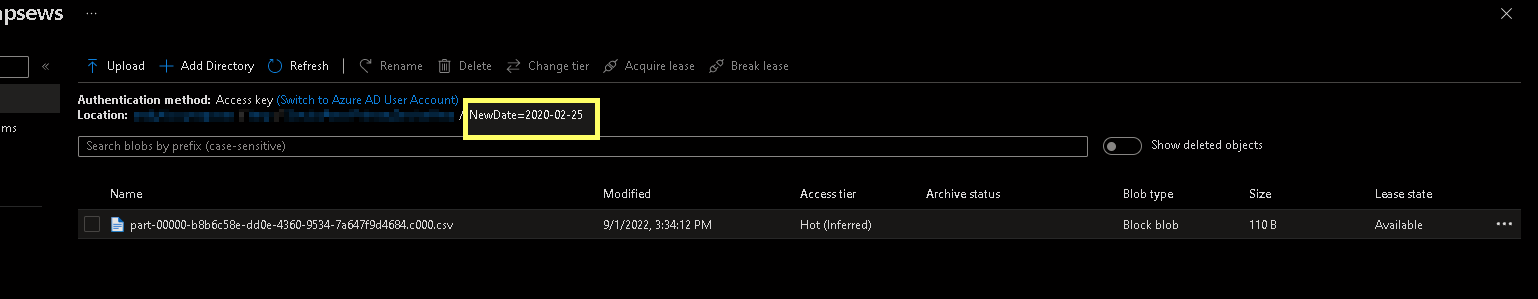

```python
# One sub-folder per distinct NewDate value, e.g. /tmp/SomeFolder/NewDate=<date>/
jdbc_df1.write.option("header", True) \
    .partitionBy("NewDate") \
    .mode("overwrite") \
    .csv("/tmp/SomeFolder")
```
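For reference, `partitionBy("NewDate")` writes Hive-style sub-folders named `NewDate=<value>` under `/tmp/SomeFolder`, and the partition column is encoded in the folder name rather than stored inside the CSV files. The sketch below mimics that layout in plain Python so you can see what to expect; `layout_partitions` and the `AccountId` column are hypothetical, and Spark's actual part-file names are not modelled.

```python
from collections import defaultdict
from datetime import date


def layout_partitions(rows, base_path, partition_col):
    """Hypothetical helper: group rows into Hive-style folders like partitionBy() does.

    The partition column is dropped from each row because Spark encodes it
    in the folder name instead of writing it into the data files.
    """
    folders = defaultdict(list)
    for row in rows:
        value = row[partition_col]
        remaining = {k: v for k, v in row.items() if k != partition_col}
        folders[f"{base_path}/{partition_col}={value}"].append(remaining)
    return dict(folders)


# Made-up rows; AccountId is a hypothetical column
rows = [
    {"AccountId": 1, "NewDate": date(2021, 3, 1)},
    {"AccountId": 2, "NewDate": date(2021, 3, 1)},
    {"AccountId": 3, "NewDate": date(2021, 3, 2)},
]
result = layout_partitions(rows, "/tmp/SomeFolder", "NewDate")
for folder, contents in result.items():
    print(folder, contents)
```

When the folder is read back with `spark.read.option("header", True).csv("/tmp/SomeFolder")`, Spark's partition discovery adds `NewDate` back as a column, and filters on it can skip folders that don't match.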

Please do let me know if you have any queries.

Thanks

Himanshu
