In this example scenario, you integrate Azure Digital Twins into line-of-business (LOB) systems by synchronizing or updating your Azure Digital Twins graph with data.

Architecture

Download a Visio file of this architecture.

Dataflow

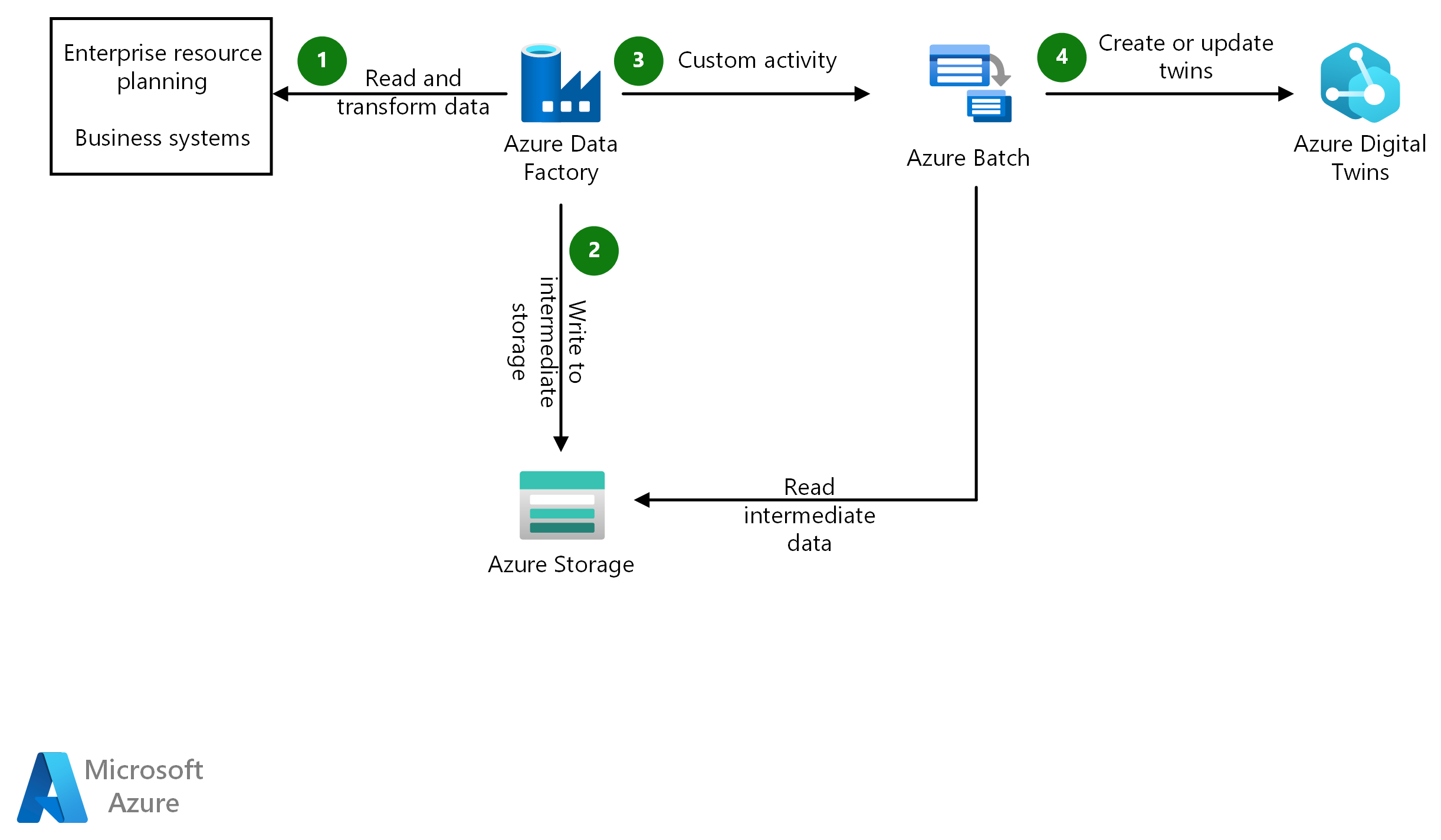

Azure Data Factory uses either a Copy activity or a mapping data flow to connect to the business system and copy the data to a temporary location.

A mapping data flow handles any transformations and outputs a file for each twin that must be processed.

A Get Metadata activity retrieves the list of files, loops through them, and calls a Custom activity.

Azure Batch creates a task for each file that runs the custom code to interface with Azure Digital Twins.
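The custom code that each Batch task runs isn't shown in this article. As a rough illustration of that last step, the following Python sketch reads one twin file and turns it into JSON Patch operations. The file layout (`twinId`, `properties`), the function names, and the injected `update_twin` callable are assumptions made for this example. In a real task, the callable would wrap a call to the Azure Digital Twins API, and the reference implementation might be structured differently.

```python
import json
from typing import Any, Callable

# Signature of the (hypothetical) function that sends a patch to Azure Digital Twins.
UpdateTwin = Callable[[str, list[dict[str, Any]]], None]


def build_json_patch(properties: dict[str, Any]) -> list[dict[str, Any]]:
    """Turn a flat property map into JSON Patch (RFC 6902) operations.

    "add" is used because, for an object member, it sets the value whether or
    not the member already exists.
    """
    operations = []
    for name, value in sorted(properties.items()):
        # Escape "~" and "/" as required by JSON Pointer (RFC 6901).
        pointer = "/" + name.replace("~", "~0").replace("/", "~1")
        operations.append({"op": "add", "path": pointer, "value": value})
    return operations


def process_twin_file(text: str, update_twin: UpdateTwin) -> str:
    """Parse one twin file and hand the resulting patch to update_twin.

    Assumed file layout: {"twinId": "...", "properties": {"name": value, ...}}
    """
    record = json.loads(text)
    twin_id = record["twinId"]
    update_twin(twin_id, build_json_patch(record["properties"]))
    return twin_id


if __name__ == "__main__":
    # Stand-in for the real service call, so the sketch runs locally.
    sent = []
    sample = json.dumps(
        {"twinId": "forklift-7", "properties": {"currentOrder": "SO-1001"}}
    )
    process_twin_file(sample, lambda twin_id, patch: sent.append((twin_id, patch)))
    print(sent)
```

Passing the service call in as a parameter keeps the parsing logic testable on a developer machine without a connection to Azure Digital Twins.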

Components

Azure Digital Twins is the foundation for the metaverse and holds the digital representation of the physical assets.

Azure Data Factory handles the connectivity and orchestration between the source system and Azure Digital Twins.

Azure Storage stores the code for the Custom activity and the data files that need to be processed.

Azure Batch runs the Custom activity code.

Microsoft Entra ID provides managed identities for securely connecting from the Custom activity to Azure Digital Twins.

Alternatives

An alternative to this approach is to use Azure Functions instead of Azure Batch. We chose not to use Azure Functions for this architecture because Functions has a timeout for execution. A timeout could be a problem if the update to Azure Digital Twins requires complex logic, or if the API gets throttled. In such a case, the function could time out before execution is complete. Azure Batch doesn't have this restriction. Also, when using Batch, you can configure the number of virtual machines that are active to process the files. This flexibility helps you to find a balance between the scale and the speed of updates.
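Because the task isn't bound by a short execution timeout, the custom code can afford to wait and retry when the API throttles requests. The following Python sketch shows one common way to do that, retrying with exponential backoff. The `ThrottledError` exception, the function name, and the default values are hypothetical and stand in for whatever throttling signal (for example, an HTTP 429 response) the real client surfaces.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class ThrottledError(Exception):
    """Hypothetical stand-in for a throttling response from the service."""


def call_with_backoff(
    operation: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run operation, retrying on ThrottledError with doubling delays."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except ThrottledError:
            if attempt == max_attempts - 1:
                raise  # Out of attempts: surface the error to the caller.
            # Wait base_delay, then 2x, 4x, ... before the next attempt.
            sleep(base_delay * 2 ** attempt)
    raise ValueError("max_attempts must be at least 1")


if __name__ == "__main__":
    attempts = {"count": 0}

    def flaky_update() -> str:
        # Simulates an update that is throttled twice before it succeeds.
        attempts["count"] += 1
        if attempts["count"] < 3:
            raise ThrottledError()
        return "updated"

    # A no-op sleep keeps the demonstration fast.
    print(call_with_backoff(flaky_update, sleep=lambda seconds: None))
```

The `sleep` parameter is injected only so that the waiting behavior can be observed or skipped in tests.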

Scenario details

Azure Digital Twins can help you build virtual representations of your systems and your enterprise that are regularly updated with data from the people, places, and things in your enterprise. Figuring out how to get relevant data into Azure Digital Twins can seem like a challenge. If that data is from systems that require traditional extract, transform, and load (ETL) techniques, this article can help.

In this example scenario, you integrate Azure Digital Twins into line-of-business (LOB) systems by synchronizing or updating your Azure Digital Twins graph with data. With your model and the data pipelines established, you can have a 360-degree view of your environment and system. You determine the frequency of synchronization based on your source systems and the requirements of your solution.

Potential use cases

This solution is ideal for the manufacturing, automotive, and transportation industries. These other use cases have similar design patterns:

- You have a graph in Azure Digital Twins of moving assets in a warehouse (for example, forklifts). You might want to receive data about the order that's currently being processed for each asset. To do so, you could integrate data from the warehouse management system or the sales LOB application every 10 minutes. The same graph in Azure Digital Twins can be synchronized with asset management solutions every day to receive inventory of assets that are available that day for use in the warehouse.

- You have a fleet of vehicles that belong to a hierarchy that contains data that doesn't change often. You could use this solution to keep that data updated as needed.

Considerations

These considerations implement the pillars of the Azure Well-Architected Framework, which is a set of guiding tenets that can be used to improve the quality of a workload. For more information, see Microsoft Azure Well-Architected Framework.

Custom activities are essentially console applications. To make the code easy to run and debug locally, we built on some of the best practices that are outlined in Creating an Azure Data Factory v2 Custom Activity, a blog post by Paul Andrew.

Consider archiving the files after they've been processed, so that you keep a historical record.

Consider implementing a change-data-capture pattern so that you only update the twins that are necessary.
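If the source system doesn't expose change data directly, one simple substitute is to compare a fingerprint of each twin's data with the fingerprint from the previous run. The following Python sketch illustrates that idea. It's a snapshot comparison rather than true source-side change data capture, and the function names and data shapes are assumptions made for this example.

```python
import hashlib
import json
from typing import Any


def fingerprint(properties: dict[str, Any]) -> str:
    """Return a stable SHA-256 hash of a twin's properties."""
    # Sorting keys makes the hash independent of property order.
    canonical = json.dumps(properties, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_twins(
    current: dict[str, dict[str, Any]],
    previous_fingerprints: dict[str, str],
) -> tuple[list[str], dict[str, str]]:
    """Return the IDs of new or modified twins and the fingerprints to keep.

    current maps twin ID to its properties for this run.
    previous_fingerprints maps twin ID to the hash stored by the last run.
    """
    new_fingerprints = {
        twin_id: fingerprint(props) for twin_id, props in current.items()
    }
    changed = sorted(
        twin_id
        for twin_id, value in new_fingerprints.items()
        if previous_fingerprints.get(twin_id) != value
    )
    return changed, new_fingerprints


if __name__ == "__main__":
    last_run = {"truck-1": fingerprint({"depot": "North"})}
    this_run = {
        "truck-1": {"depot": "North"},  # unchanged, so skipped
        "truck-2": {"depot": "South"},  # new, so processed
    }
    to_update, _ = changed_twins(this_run, last_run)
    print(to_update)
```

Only the twins in the returned list need a file written for them, which reduces the number of Batch tasks and API calls per run. This sketch doesn't detect twins that were deleted from the source.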

Availability

For information about monitoring Data Factory pipelines, see the following resources:

- Monitor and Alert Data Factory by using Azure Monitor

- Data Factory metrics and alerts

- Monitoring data flows

- Using Azure Monitor Effectively

For information about monitoring Azure Batch, see the following resources:

Operations

For information related to operations, see the following resources:

- Deliver service level agreement for data pipelines

- Understanding pipeline failure

- Send an email with an Azure Data Factory or Azure Synapse pipeline

- Send notifications to a Microsoft Teams channel from an Azure Data Factory or Synapse Analytics pipeline

Performance

Performance could be a problem if you need to integrate Azure Digital Twins with large datasets. Consider how to scale Azure Batch appropriately to find the balance you need. For help with scaling, see the following resources:

- Auto-scaling of Azure Batch

- Azure Batch and performance efficiency

- Create Generic SCD Pattern in ADF Mapping Data Flows

- Data integration at scale with Azure Data Factory or Azure Synapse Pipeline

Depending on the complexity and size of data in the source system, consider the scale of your mapping data flow. For help with addressing performance, see Mapping data flows performance and tuning guide.
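To reason about the balance between Batch pool size and update speed, a back-of-the-envelope estimate can help. The following Python sketch assumes that every file takes about the same time to process and that tasks run in evenly filled waves. It ignores node startup time, throttling, and retries, so treat the result as a lower bound. The function and parameter names are hypothetical.

```python
import math


def estimate_run_seconds(
    file_count: int,
    seconds_per_file: float,
    node_count: int,
    tasks_per_node: int = 1,
) -> float:
    """Estimate the wall-clock time to process all files on a Batch pool."""
    if node_count < 1 or tasks_per_node < 1:
        raise ValueError("node_count and tasks_per_node must be at least 1")
    # Number of files that can be processed at the same time.
    parallel_slots = node_count * tasks_per_node
    # Each wave processes up to parallel_slots files at once.
    waves = math.ceil(file_count / parallel_slots)
    return waves * seconds_per_file


if __name__ == "__main__":
    # 10,000 twin files at roughly 2 seconds each.
    for nodes in (4, 20):
        seconds = estimate_run_seconds(10_000, 2.0, node_count=nodes)
        print(f"{nodes} nodes: about {seconds / 60:.0f} minutes")
```

With these example numbers, 4 nodes need about 5,000 seconds and 20 nodes need about 1,000 seconds, which shows how the pool size trades cost against synchronization time.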

Security

Security provides assurances against deliberate attacks and the abuse of your valuable data and systems. For more information, see Overview of the security pillar.

This scenario relies on managed identities to help secure access to the data. Data Factory requires the storage account key to generate shared access signatures. To help protect that key, store it in Azure Key Vault, and grant the data factory access to the key vault through its managed identity.

DevOps

- The Custom activity code is contained in a .zip file that's placed in Azure Storage. A DevOps pipeline can manage the deployment of that code.

- Data Factory supports an end-to-end DevOps lifecycle.

Cost optimization

Cost optimization is about looking at ways to reduce unnecessary expenses and improve operational efficiencies. For more information, see Overview of the cost optimization pillar.

Use the Azure pricing calculator to estimate the cost of Azure Digital Twins, Data Factory, and Azure Batch for your workload.

Deploy this scenario

You can find a reference implementation on GitHub: Azure Digital Twins Batch Update Prototype.

Contributors

This article is maintained by Microsoft. It was originally written by the following contributors.

Principal author:

- Howard Ginsburg | Senior Cloud Solution Architect

Other contributors:

- Mike Downs | Senior Cloud Solution Architect

- Gary Moore | Programmer/Writer

- Onder Yildirim | Senior Cloud Solution Architect

Next steps

- Explore Azure Digital Twins implementation

- Examine the components of an Azure Digital Twins solution

- Examine the Azure Digital Twins solution development tools and processes

- Integrate data with Azure Data Factory or Azure Synapse Pipeline

- Introduction to Azure Data Factory

- Azure Data Factory documentation

- Azure Digital Twins documentation