Note

Access to this page requires authorization. You can try signing in or changing directories.

Access to this page requires authorization. You can try changing directories.

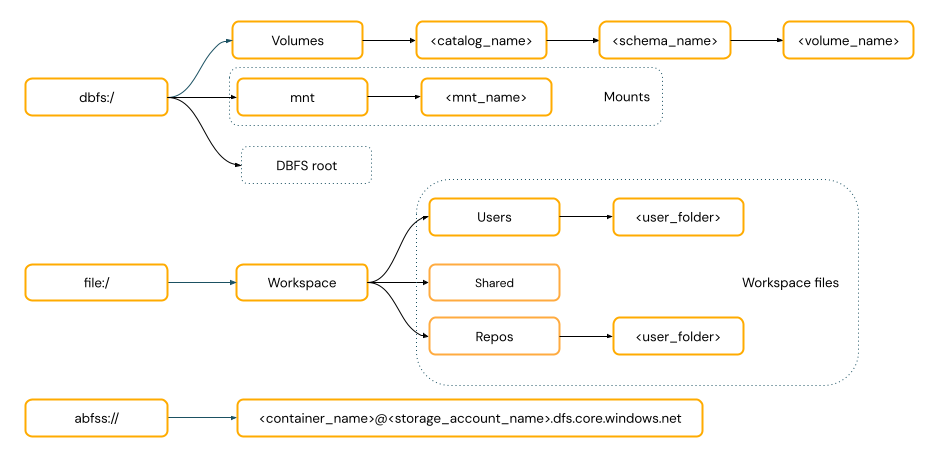

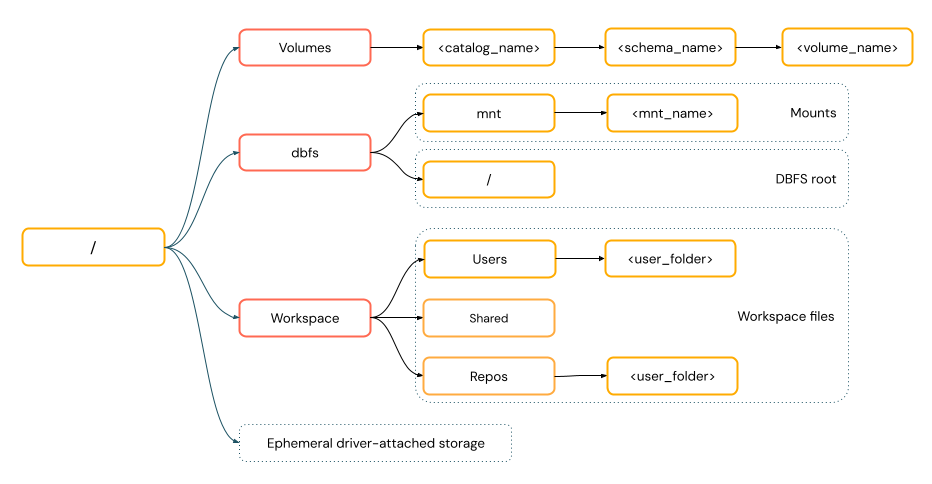

Azure Databricks has multiple utilities and APIs for interacting with files in the following locations:

- Unity Catalog volumes

- Workspace files

- Cloud object storage

- DBFS mounts and DBFS root

- Ephemeral storage attached to the driver node of the cluster

This article has examples for interacting with files in these locations for the following tools:

- Apache Spark

- Spark SQL and Databricks SQL

- Databricks file system utilities (`dbutils.fs` or `%fs`)

- Databricks CLI

- Databricks REST API

- Bash shell commands (`%sh`)

- Notebook-scoped library installs using `%pip`

- pandas

- OSS Python file management and processing utilities

Important

Some operations in Databricks, especially those using Java or Scala libraries, run as JVM processes, for example:

- Specifying a JAR file dependency using `--jars` in Spark configurations

- Calling `cat` or `java.io.File` in Scala notebooks

- Custom data sources, such as `spark.read.format("com.mycompany.datasource")`

- Libraries that load files using Java's `FileInputStream` or `Paths.get()`

These operations do not support reading from or writing to Unity Catalog volumes or workspace files using standard file paths, such as `/Volumes/my-catalog/my-schema/my-volume/my-file.csv`. If you need to access volume files or workspace files from JAR dependencies or JVM-based libraries, first copy the files to compute local storage using Python or `%sh` commands, such as `%sh mv`. Do not use `%fs` or `dbutils.fs`, because both use the JVM. To access files that are already copied locally, use language-specific commands such as Python `shutil`, or use `%sh` commands. If a file must be present during cluster start, use an init script to move the file first. See What are init scripts?.
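As a rough illustration of the copy-first workaround described above, the following Python sketch copies a file to driver-local storage with `shutil` and returns the local path that a JVM-based library could then read. The function name `copy_to_local` and its `local_dir` parameter are hypothetical, not part of any Databricks API, and the volume path in the usage comment is only an example.

```python
import shutil
import tempfile
from pathlib import Path
from typing import Optional


def copy_to_local(source_path: str, local_dir: Optional[str] = None) -> str:
    """Copy a file to local storage and return the path of the local copy.

    source_path: on Databricks this would be a volume or workspace file path.
    local_dir:   hypothetical target directory; a new temp directory if omitted.
    """
    target_dir = Path(local_dir) if local_dir else Path(tempfile.mkdtemp())
    target_dir.mkdir(parents=True, exist_ok=True)
    # shutil runs inside the Python process, so no JVM file APIs are involved.
    return str(shutil.copy(source_path, target_dir))


# Example call (assumed path, only meaningful on Databricks compute):
# local_csv = copy_to_local("/Volumes/my-catalog/my-schema/my-volume/my-file.csv")
```

The returned local path, not the original volume path, is what you would pass to the JVM-based library.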

Do I need to provide a URI scheme to access data?

Data access paths in Azure Databricks follow one of the following standards:

- URI-style paths include a URI scheme. For Databricks-native data access solutions, URI schemes are optional for most use cases. When directly accessing data in cloud object storage, you must provide the correct URI scheme for the storage type.

- POSIX-style paths provide data access relative to the driver root (`/`). POSIX-style paths never require a scheme. You can use Unity Catalog volumes or DBFS mounts to provide POSIX-style access to data in cloud object storage. Many ML frameworks and other OSS Python modules require FUSE and can only use POSIX-style paths.

Note

File operations requiring FUSE data access cannot directly access cloud object storage using URIs. Databricks recommends using Unity Catalog volumes to configure access to these locations for FUSE.

On compute configured with dedicated access mode (formerly single user access mode) and Databricks Runtime 14.3 and above, Scala supports FUSE for Unity Catalog volumes and workspace files, except for subprocesses that originate from Scala, such as the Scala command `"cat /Volumes/path/to/file".!!`.
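The two path styles described in this section can be told apart by checking whether a path carries a URI scheme. The sketch below uses only the Python standard library. The helper name `path_style` is hypothetical, and the check is purely syntactic: it does not verify that the path exists or that a given tool accepts it.

```python
from urllib.parse import urlparse


def path_style(path: str) -> str:
    """Return "uri" if the path starts with a URI scheme, otherwise "posix"."""
    return "uri" if urlparse(path).scheme else "posix"


# Paths taken from the styles discussed in this article.
examples = [
    "abfss://container-name@storage-account-name.dfs.core.windows.net/path/file.json",
    "dbfs:/mnt/path/to/data.json",
    "file:/Workspace/Users/<user-folder>/data.json",
    "/Volumes/my_catalog/my_schema/my_volume/data.csv",
]
for example in examples:
    print(f"{path_style(example):5}  {example}")
```

A check like this can help guard code that relies on FUSE, which can only use POSIX-style paths.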

Work with files in Unity Catalog volumes

Databricks recommends using Unity Catalog volumes to configure access to non-tabular data files stored in cloud object storage. For complete documentation about managing files in volumes, including detailed instructions and best practices, see Work with files in Unity Catalog volumes.

The following examples show common operations using different tools and interfaces:

| Tool | Example |
|---|---|
| Apache Spark | `spark.read.format("json").load("/Volumes/my_catalog/my_schema/my_volume/data.json").show()` |
| Spark SQL and Databricks SQL | ``SELECT * FROM csv.`/Volumes/my_catalog/my_schema/my_volume/data.csv`;``<br>`LIST '/Volumes/my_catalog/my_schema/my_volume/';` |
| Databricks file system utilities | `dbutils.fs.ls("/Volumes/my_catalog/my_schema/my_volume/")`<br>`%fs ls /Volumes/my_catalog/my_schema/my_volume/` |
| Databricks CLI | `databricks fs cp /path/to/local/file dbfs:/Volumes/my_catalog/my_schema/my_volume/` |
| Databricks REST API | `POST https://<databricks-instance>/api/2.1/jobs/create`<br>`{"name": "A multitask job", "tasks": [{..."libraries": [{"jar": "/Volumes/dev/environment/libraries/logging/Logging.jar"}],},...]}` |
| Bash shell commands | `%sh curl http://<address>/text.zip -o /Volumes/my_catalog/my_schema/my_volume/tmp/text.zip` |
| Library installs | `%pip install /Volumes/my_catalog/my_schema/my_volume/my_library.whl` |
| pandas | `df = pd.read_csv('/Volumes/my_catalog/my_schema/my_volume/data.csv')` |
| OSS Python | `os.listdir('/Volumes/my_catalog/my_schema/my_volume/path/to/directory')` |

For information about volumes limitations and workarounds, see Limitations of working with files in volumes.
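Because volume paths follow the fixed pattern `/Volumes/<catalog>/<schema>/<volume>/...`, OSS Python code can build and browse them with ordinary path utilities. The following is a minimal sketch. The helper names `volume_path` and `list_csv_files` are hypothetical, and the catalog, schema, and volume names are the placeholders used in the table above.

```python
import os
import posixpath


def volume_path(catalog: str, schema: str, volume: str, *parts: str) -> str:
    """Build a POSIX-style path under /Volumes/<catalog>/<schema>/<volume>/."""
    return posixpath.join("/Volumes", catalog, schema, volume, *parts)


def list_csv_files(directory: str) -> list:
    """Return the sorted names of the .csv files directly inside a directory."""
    return sorted(name for name in os.listdir(directory) if name.endswith(".csv"))


data_file = volume_path("my_catalog", "my_schema", "my_volume", "data.csv")
print(data_file)

# On Databricks compute with access to the volume, you could then run, for example:
# csv_names = list_csv_files(volume_path("my_catalog", "my_schema", "my_volume"))
```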

Work with workspace files

Databricks workspace files are the files in a workspace, stored in the workspace storage account. You can use workspace files to store and access files such as notebooks, source code files, data files, and other workspace assets.

Important

Because workspace files have size restrictions, Databricks recommends storing only small data files here, primarily for development and testing. For recommendations on where to store other file types, see File types.

| Tool | Example |
|---|---|
| Apache Spark | `spark.read.format("json").load("file:/Workspace/Users/<user-folder>/data.json").show()` |
| Spark SQL and Databricks SQL | ``SELECT * FROM json.`file:/Workspace/Users/<user-folder>/file.json`;`` |
| Databricks file system utilities | `dbutils.fs.ls("file:/Workspace/Users/<user-folder>/")`<br>`%fs ls file:/Workspace/Users/<user-folder>/` |
| Databricks CLI | `databricks workspace list` |
| Databricks REST API | `POST https://<databricks-instance>/api/2.0/workspace/delete`<br>`{"path": "/Workspace/Shared/code.py", "recursive": "false"}` |
| Bash shell commands | `%sh curl http://<address>/text.zip -o /Workspace/Users/<user-folder>/text.zip` |
| Library installs | `%pip install /Workspace/Users/<user-folder>/my_library.whl` |
| pandas | `df = pd.read_csv('/Workspace/Users/<user-folder>/data.csv')` |
| OSS Python | `os.listdir('/Workspace/Users/<user-folder>/path/to/directory')` |

Note

The `file:/` scheme is required when working with Databricks Utilities, Apache Spark, or SQL.

In workspaces where DBFS root and mounts are disabled, you can also use dbfs:/Workspace to access workspace files with Databricks utilities. This requires Databricks Runtime 13.3 LTS or above. See Disable access to DBFS root and mounts in your existing Azure Databricks workspace.

For the limitations in working with workspace files, see Limitations.
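The scheme rule for workspace files can be captured in a small helper that adds the `file:` prefix only for the tools that need it. This is an illustrative sketch: the function name `workspace_path` and the tool labels (`"spark"`, `"sql"`, `"dbutils"`, `"pandas"`) are hypothetical and are not identifiers from any Databricks library.

```python
# Tools that need the file:/ scheme for workspace files (labels are illustrative).
SCHEME_REQUIRED = {"spark", "sql", "dbutils"}


def workspace_path(tool: str, posix_path: str) -> str:
    """Return the workspace file path in the form the given tool expects."""
    if not posix_path.startswith("/Workspace/"):
        raise ValueError(f"not a workspace file path: {posix_path!r}")
    if tool.lower() in SCHEME_REQUIRED:
        return "file:" + posix_path
    # pandas, OSS Python, %sh, and %pip take the plain POSIX-style path.
    return posix_path


print(workspace_path("spark", "/Workspace/Users/<user-folder>/data.json"))
print(workspace_path("pandas", "/Workspace/Users/<user-folder>/data.csv"))
```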

Where do deleted workspace files go?

Deleting a workspace file sends it to the trash. You can recover or permanently delete files from the trash using the UI.

See Delete an object.

Work with files in cloud object storage

Databricks recommends using Unity Catalog volumes to configure secure access to files in cloud object storage. You must configure permissions if you choose to access data directly in cloud object storage using URIs. See Managed and external volumes.

The following examples use URIs to access data in cloud object storage:

| Tool | Example |
|---|---|
| Apache Spark | `spark.read.format("json").load("abfss://container-name@storage-account-name.dfs.core.windows.net/path/file.json").show()` |
| Spark SQL and Databricks SQL | ``SELECT * FROM json.`abfss://container-name@storage-account-name.dfs.core.windows.net/path/file.json`;``<br>`LIST 'abfss://container-name@storage-account-name.dfs.core.windows.net/path';` |
| Databricks file system utilities | `dbutils.fs.ls("abfss://container-name@storage-account-name.dfs.core.windows.net/path/")`<br>`%fs ls abfss://container-name@storage-account-name.dfs.core.windows.net/path/` |
| Databricks CLI | Not supported |
| Databricks REST API | Not supported |
| Bash shell commands | Not supported |
| Library installs | `%pip install abfss://container-name@storage-account-name.dfs.core.windows.net/path/to/library.whl` |
| pandas | Not supported |
| OSS Python | Not supported |
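The URIs in the table above all follow the pattern `abfss://<container>@<storage-account>.dfs.core.windows.net/<path>`. The sketch below builds and splits such URIs with the Python standard library. The helper names `build_abfss_uri` and `split_abfss_uri` are hypothetical, and the code only manipulates strings: it does not check permissions or contact the storage account.

```python
from urllib.parse import urlparse

ABFSS_HOST_SUFFIX = ".dfs.core.windows.net"


def build_abfss_uri(container: str, storage_account: str, path: str = "") -> str:
    """Assemble an abfss:// URI from its container, account, and path parts."""
    return f"abfss://{container}@{storage_account}{ABFSS_HOST_SUFFIX}/{path.lstrip('/')}"


def split_abfss_uri(uri: str) -> tuple:
    """Split an abfss:// URI into (container, storage_account, path)."""
    parsed = urlparse(uri)
    if parsed.scheme != "abfss" or "@" not in parsed.netloc:
        raise ValueError(f"not an abfss URI: {uri!r}")
    container, host = parsed.netloc.split("@", 1)
    if not host.endswith(ABFSS_HOST_SUFFIX):
        raise ValueError(f"unexpected host in URI: {uri!r}")
    return container, host[: -len(ABFSS_HOST_SUFFIX)], parsed.path.lstrip("/")


uri = build_abfss_uri("container-name", "storage-account-name", "path/file.json")
print(uri)
print(split_abfss_uri(uri))
```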

Work with files in DBFS mounts and DBFS root

Important

Both DBFS root and DBFS mounts are deprecated and not recommended by Databricks. New accounts are provisioned without access to these features. Databricks recommends using Unity Catalog volumes, external locations, or workspace files instead.

| Tool | Example |
|---|---|
| Apache Spark | `spark.read.format("json").load("/mnt/path/to/data.json").show()` |
| Spark SQL and Databricks SQL | ``SELECT * FROM json.`/mnt/path/to/data.json`;`` |
| Databricks file system utilities | `dbutils.fs.ls("/mnt/path")`<br>`%fs ls /mnt/path` |
| Databricks CLI | `databricks fs cp dbfs:/mnt/path/to/remote/file /path/to/local/file` |
| Databricks REST API | `POST https://<host>/api/2.0/dbfs/delete --data '{ "path": "/tmp/HelloWorld.txt" }'` |
| Bash shell commands | `%sh curl http://<address>/text.zip > /dbfs/mnt/tmp/text.zip` |
| Library installs | `%pip install /dbfs/mnt/path/to/my_library.whl` |
| pandas | `df = pd.read_csv('/dbfs/mnt/path/to/data.csv')` |
| OSS Python | `os.listdir('/dbfs/mnt/path/to/directory')` |

Note

The dbfs:/ scheme is required when working with the Databricks CLI.
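The table above shows the same DBFS location written two ways: with the `dbfs:/` scheme for the Databricks CLI, and under the `/dbfs` prefix for Bash, `%pip`, pandas, and OSS Python. The following sketch converts between the two forms. The function names are hypothetical, and the conversion is plain string manipulation that does not check whether the path exists.

```python
def dbfs_uri_to_fuse_path(uri: str) -> str:
    """Convert dbfs:/mnt/... to the /dbfs/mnt/... form used by local file APIs."""
    if not uri.startswith("dbfs:/"):
        raise ValueError(f"expected a dbfs:/ URI, got {uri!r}")
    return "/dbfs/" + uri[len("dbfs:/"):].lstrip("/")


def fuse_path_to_dbfs_uri(path: str) -> str:
    """Convert /dbfs/mnt/... to the dbfs:/mnt/... form used by the Databricks CLI."""
    if not path.startswith("/dbfs/"):
        raise ValueError(f"expected a path under /dbfs/, got {path!r}")
    return "dbfs:/" + path[len("/dbfs/"):]


print(dbfs_uri_to_fuse_path("dbfs:/mnt/path/to/data.csv"))
print(fuse_path_to_dbfs_uri("/dbfs/mnt/path/to/data.csv"))
```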

Work with files in ephemeral storage attached to the driver node

The ephemeral storage attached to the driver node is block storage with built-in POSIX-based path access. Any data stored in this location disappears when a cluster terminates or restarts.

| Tool | Example |
|---|---|
| Apache Spark | Not supported |
| Spark SQL and Databricks SQL | Not supported |
| Databricks file system utilities | `dbutils.fs.ls("file:/path")`<br>`%fs ls file:/path` |
| Databricks CLI | Not supported |
| Databricks REST API | Not supported |
| Bash shell commands | `%sh curl http://<address>/text.zip > /tmp/text.zip` |
| Library installs | Not supported |
| pandas | `df = pd.read_csv('/path/to/data.csv')` |
| OSS Python | `os.listdir('/path/to/directory')` |

Note

The `file:/` scheme is required when working with Databricks Utilities.

Move data from ephemeral storage to volumes

You might want to use Apache Spark to access data downloaded or saved to ephemeral storage. Because ephemeral storage is attached to the driver and Spark is a distributed processing engine, not all operations can directly access data here. If you must move data from the driver filesystem to Unity Catalog volumes, you can copy files using magic commands or the Databricks utilities, as in the following examples:

dbutils.fs.cp("file:/<path>", "/Volumes/<catalog>/<schema>/<volume>/<path>")

%sh cp /<path> /Volumes/<catalog>/<schema>/<volume>/<path>

%fs cp file:/<path> /Volumes/<catalog>/<schema>/<volume>/<path>
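The same copy can also be written in plain Python, which is roughly what the `%sh cp` example does. The sketch below is an assumption-laden illustration, not a Databricks API: the function name `stage_file` is hypothetical, and it assumes the destination (for example, a directory under `/Volumes/<catalog>/<schema>/<volume>/`) is writable through POSIX-style paths from the compute you are using.

```python
import shutil
from pathlib import Path


def stage_file(local_path: str, destination_dir: str) -> str:
    """Copy a driver-local file into destination_dir and return the new path."""
    source = Path(local_path)
    target_dir = Path(destination_dir)
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / source.name
    # copyfile copies file contents only, without permission bits or metadata.
    shutil.copyfile(source, target)
    # Compare sizes as a cheap sanity check that the copy completed.
    if target.stat().st_size != source.stat().st_size:
        raise OSError(f"size mismatch after copying {source} to {target}")
    return str(target)


# Example call (placeholder paths, only meaningful on Databricks compute):
# stage_file("/tmp/text.zip", "/Volumes/<catalog>/<schema>/<volume>/<path>")
```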