Building your Agent on Azure

Overview

Once a startup decides to move beyond prototypes and build production-grade AI agents, the focus shifts from experimentation to architecture. Building an agent for enterprise customers requires security, reliability, and adaptability across multiple customers. Startups must also balance thoughtful design with speed and simplicity.

When building agents on Azure, there are four core design areas every startup must address:

- Multitenancy: how to securely and efficiently serve multiple customers while isolating data, context, and compute.

- Application Layer: how users interact with the agent through APIs, Teams apps, or web experiences, and how those interfaces map to tenant-specific logic and security.

- Orchestration Layer: how reasoning, tool use, and action coordination are managed to produce reliable, auditable outcomes across diverse tasks and models.

- Context Layer: how the agent retrieves, structures, and reasons over relevant knowledge using vector search, memory stores, and live data integration.

These four areas form the backbone of a scalable agentic architecture. They determine not just how the agent performs, but how it evolves, supporting continuous improvement, customization per tenant, and deeper integration into customer ecosystems.

Multitenancy

For startups, multitenancy is the cornerstone of building a sustainable, scalable agent platform. It defines how your system serves multiple customers, each with their own data, models, and context while maintaining security, performance, and cost efficiency. In the world of AI agents, where context and personalization are central to value creation, multitenancy also governs how intelligence is partitioned, shared, and evolved across tenants.

Azure provides several native patterns and services that make multitenancy both flexible and secure. The right approach depends on your product model, data sensitivity, and scale requirements.

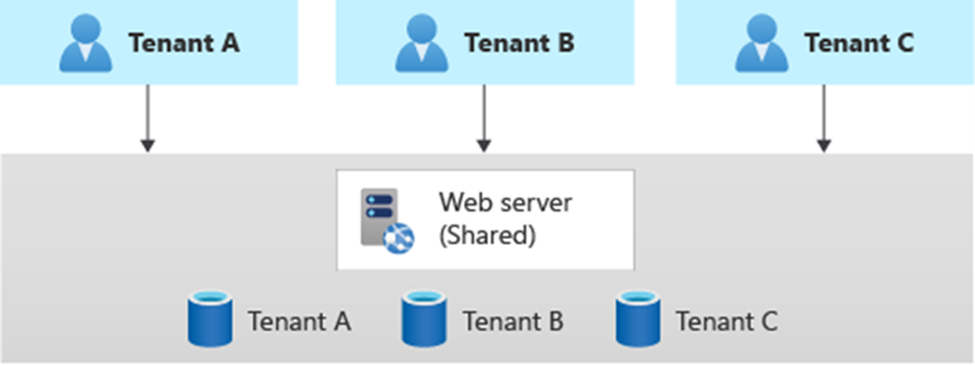

Logical vs. Physical Multitenancy

- Logical multitenancy is achieved by isolating customer data and configurations within shared resources (for example, a single Cosmos DB instance with tenant-specific partitions or collections, or a single Azure AI Search service with per-tenant indexes). This model offers high efficiency and simpler operations, making it ideal for early-stage startups.

- Physical multitenancy provides stronger isolation by provisioning dedicated resources per tenant, such as separate databases, storage accounts, or entire deployments using Azure Application offers. This approach is common for regulated industries or enterprise customers that require data residency guarantees.

Most startups adopt a hybrid model: logical isolation for the majority of tenants, and physical isolation for high-value or compliance-driven customers. This is often referred to as a horizontally partitioned deployment. Horizontally partitioned deployments suit early-stage startups because they keep application infrastructure minimal while still isolating tenant data for B2B clients. This reduces the need for complex data partitioning and the cost of redundant infrastructure.
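
As a minimal sketch of the hybrid model, the following Python decides where a tenant's data lives. The `TenantPlacement` type, the `DEDICATED_TENANTS` set, and the database naming scheme are hypothetical and only illustrate the routing decision; they are not Azure conventions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TenantPlacement:
    database: str        # database that holds the tenant's data
    partition_key: str   # partition used inside that database
    dedicated: bool      # True when the tenant has its own resources


# Hypothetical list of tenants that require physical isolation.
DEDICATED_TENANTS = {"contoso-bank"}


def resolve_placement(tenant_id: str) -> TenantPlacement:
    """Map a tenant to shared (logical) or dedicated (physical) resources."""
    if tenant_id in DEDICATED_TENANTS:
        # Physical isolation: one database per tenant.
        return TenantPlacement(f"agent-db-{tenant_id}", tenant_id, True)
    # Logical isolation: shared database, tenant-specific partition.
    return TenantPlacement("agent-db-shared", tenant_id, False)
```

Keeping this decision in one function means a tenant can later be moved from shared to dedicated resources without touching the calling code.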

Identity and Access Control

At the heart of multitenancy is identity. Microsoft Entra ID (formerly Azure Active Directory) provides the foundation for secure, tenant-aware access control.

- Each tenant can be associated with an Entra organization, allowing fine-grained role assignments, API access, and integration with enterprise SSO.

- Managed identities simplify service-to-service authentication without storing credentials.

- Conditional Access and Custom App Roles ensure that only authorized users and services can access tenant-specific data and context.

Many multitenant solutions operate as software as a service (SaaS). Whether you use Microsoft Entra ID or Microsoft Entra External ID depends partly on how you define your tenants or customer base.

- If your tenants or customers are organizations, they might already use Microsoft Entra ID for services like Microsoft 365, Microsoft Teams, or for their own Azure environments. You can create a multitenant application in your own Microsoft Entra ID directory to make your solution available to other Microsoft Entra ID directories. You can also list your solution in Azure Marketplace and make it accessible to organizations that use Microsoft Entra ID.

- If your tenants or customers do not use Microsoft Entra ID, or if they are individuals rather than organizations, consider using External ID. External ID provides features to control how users sign up and sign in. For example, you can restrict access to your solution to only the users that you invite, or you can enable self-service sign-up. You can use custom branding. To enable your own staff to sign in, you can invite users from your Microsoft Entra ID tenant as guests into External ID via guest access. External ID also enables federation with other IdPs.

- Some multitenant solutions are intended for both scenarios. Some tenants might have their own Microsoft Entra ID tenants, and other tenants might not. You can use External ID for this scenario and use federation to allow user sign-in from a tenant's Microsoft Entra ID directory.

To enable a multitenant application with Entra ID, follow the Microsoft Learn guide "Convert single-tenant app to multitenant on Microsoft Entra ID" (Microsoft identity platform documentation).
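
Once a multitenant app accepts tokens from many directories, the application still has to decide which of them are its customers. The sketch below assumes the token's signature, issuer, and audience have already been validated by a library and that the claims are available as a dictionary. `tid` (tenant ID) and `roles` (app roles) are claims that Entra ID places in access tokens; the `ONBOARDED_TENANTS` mapping, the tenant IDs, and the role name are hypothetical.

```python
ONBOARDED_TENANTS = {
    # Hypothetical mapping: Entra tenant ID (tid claim) -> internal tenant key.
    "11111111-1111-1111-1111-111111111111": "contoso",
    "22222222-2222-2222-2222-222222222222": "fabrikam",
}


class AccessDenied(Exception):
    """Raised when the caller's claims do not grant access."""


def tenant_from_claims(claims: dict, required_role: str) -> str:
    """Return the internal tenant key for already-validated token claims."""
    tenant_key = ONBOARDED_TENANTS.get(claims.get("tid", ""))
    if tenant_key is None:
        raise AccessDenied("tenant is not onboarded")
    if required_role not in claims.get("roles", []):
        raise AccessDenied(f"missing app role: {required_role}")
    return tenant_key
```

Every downstream lookup (data, configuration, tools) can then be keyed on the returned tenant key rather than on anything the client supplies in the request body.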

Data and Context Isolation

Because agents rely heavily on contextual knowledge, isolating data retrieval and embeddings per tenant is critical. Azure Cache for Redis, Cosmos DB, and Azure Storage can each be partitioned per tenant (for example, with key prefixes, partition keys, or separate containers), while Azure confidential computing and private endpoints help protect sensitive data in use and keep traffic off the public internet.

When using vector databases for retrieval-augmented generation (RAG), startups should implement per-tenant vector namespaces or separate collections to prevent data leakage between customers. This also simplifies per-tenant scaling and billing.
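
To make the namespace idea concrete, here is a small in-memory stand-in for a vector database, written as an illustration rather than a production store. The `TenantVectorStore` class and its methods are hypothetical; a real deployment would map each namespace to a per-tenant index or collection in the chosen service.

```python
import math
from collections import defaultdict


class TenantVectorStore:
    """In-memory stand-in for a vector database with one namespace per tenant."""

    def __init__(self) -> None:
        # tenant_id -> list of (doc_id, embedding)
        self._namespaces = defaultdict(list)

    def add(self, tenant_id: str, doc_id: str, vector: list) -> None:
        self._namespaces[tenant_id].append((doc_id, vector))

    def search(self, tenant_id: str, query: list, top_k: int = 3) -> list:
        # Only the caller's namespace is scanned, so other tenants'
        # documents can never appear in the results.
        scored = [
            (_cosine(query, vector), doc_id)
            for doc_id, vector in self._namespaces.get(tenant_id, [])
        ]
        scored.sort(reverse=True)
        return [doc_id for _, doc_id in scored[:top_k]]


def _cosine(a: list, b: list) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0
```

Because the tenant ID is a required argument of `search`, there is no code path that queries across tenants by accident.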

Observability, Cost, and Scale

Operational visibility is key in a multitenant agent platform.

- Azure Monitor and Application Insights can be extended to log usage per tenant, helping with troubleshooting, performance tuning, and usage-based billing.

- Azure Container Apps and AKS allow autoscaling based on tenant load, maintaining cost efficiency.

- When monetizing through the Microsoft commercial marketplace, tenant usage data can feed directly into metering APIs for automated billing and reporting.
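
The per-tenant usage tracking described above can be sketched as a simple aggregation step. This is an assumption-laden illustration: the `UsageEvent` shape and the dimension names are hypothetical, and the step that submits totals to a metering API is deliberately left out.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageEvent:
    tenant_id: str
    dimension: str   # hypothetical billing dimension, e.g. "agent_requests"
    quantity: int


def aggregate_usage(events: list) -> dict:
    """Sum quantities per (tenant, dimension) for one reporting window."""
    totals = Counter()
    for event in events:
        totals[(event.tenant_id, event.dimension)] += event.quantity
    return dict(totals)
```

The resulting totals can feed dashboards, invoices, or a metering submission job, all from the same tenant-tagged event stream.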

Why It Matters

Getting multitenancy right early on allows startups to:

- Serve many customers without duplicating infrastructure.

- Enforce strong data boundaries and compliance controls.

- Support both small and enterprise tenants with tailored isolation.

- Simplify future marketplace monetization and co-sell readiness.

In short, multitenancy transforms an agent from a standalone prototype into a platform business, capable of serving hundreds of organizations through a single, secure, and elastic Azure backbone.

Application Layer

The application layer is where users interact with your agent through chat interfaces, APIs, or embedded copilots in tools like Microsoft Teams. For startups, this layer is where customer value becomes tangible. It translates orchestration logic and contextual intelligence into a user experience that feels responsive, personalized, and secure across tenants.

On Azure, the application layer serves two critical roles:

- It acts as the gateway for tenant-specific requests and identity validation.

- It defines the experience layer that users, developers, and external systems interact with.

Tenant-Aware Application Boundaries

The application layer must be fully aware of which tenant is making the request and what data or capabilities they are entitled to access. Azure provides several services to enable this:

- Azure Front Door or API Management (APIM) can act as the global entry point, routing requests to tenant-specific environments or functions.

- Entra ID handles authentication and authorization, ensuring that user and service tokens map to the correct tenant context.

- Azure App Configuration and Key Vault manage tenant-specific configurations, API keys, and environment secrets.

These boundaries ensure that each tenant experiences the same agent platform but within their own secure logical sandbox, which is a critical step in preventing data crossover and maintaining enterprise-grade compliance.
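
One way to picture the tenant-aware boundary is a request context that is built once per request and passed to everything downstream. The sketch below is illustrative only: the settings, override table, and backend URLs are hypothetical values of the kind that might be loaded from a configuration store.

```python
from dataclasses import dataclass, field

DEFAULT_SETTINGS = {"model": "default-model", "max_tokens": 1024}

# Hypothetical per-tenant overrides, as might be loaded from a configuration store.
TENANT_OVERRIDES = {"contoso": {"max_tokens": 4096}}

# Hypothetical backends; tenants without an entry use the shared pool.
TENANT_BACKENDS = {"contoso": "https://contoso.internal.example"}
SHARED_BACKEND = "https://shared.internal.example"


@dataclass(frozen=True)
class RequestContext:
    tenant_id: str
    backend_url: str
    settings: dict = field(default_factory=dict)


def build_context(tenant_id: str) -> RequestContext:
    """Resolve routing and configuration for an authenticated tenant."""
    # Tenant overrides win over platform defaults.
    settings = {**DEFAULT_SETTINGS, **TENANT_OVERRIDES.get(tenant_id, {})}
    backend = TENANT_BACKENDS.get(tenant_id, SHARED_BACKEND)
    return RequestContext(tenant_id, backend, settings)
```

The `tenant_id` passed in should come from the validated token, not from a header or query parameter that the caller controls.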

Multi-Channel Delivery

The modern agent experience extends beyond a single chat UI. Startups can expose their agent through multiple delivery channels:

- Teams Copilots and Message Extensions for workplace collaboration and conversational workflows.

- Web and Mobile Apps built using frameworks like React or React Native, hosted in Azure App Service or Static Web Apps.

- API endpoints secured by Entra ID or APIM, allowing programmatic integration with customer systems. These are often built using Azure Functions.

Azure’s identity layer ensures that all these interfaces share a unified authentication and authorization model, even if they connect to different backend services. This consistency allows startups to maintain one agent core while delivering customized front-ends per customer or use case.

State Management and Session Context

In agentic applications, sessions often bridge multiple interactions and modalities. For example, a user may start a conversation in Teams, continue via API, and review insights in a web dashboard.

To maintain coherence:

- Azure Cosmos DB or Azure Cache for Redis can persist session state and conversation context per tenant.

- Durable Functions enable long-running workflows that keep track of agent reasoning steps, even across distributed components.

- Event Grid or Service Bus can propagate context and signals between modules when users or systems trigger updates.

This session-aware design allows agents to feel continuous and contextually intelligent, without hardcoding workflows for each interaction mode.
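
The sketch below shows the shape of a per-tenant session store with a sliding expiry, using a dictionary in place of Cosmos DB or Redis. The `SessionStore` class, its method names, and the 30-minute default are assumptions made for illustration.

```python
import time
from typing import Optional


class SessionStore:
    """In-memory stand-in for a per-tenant session store with expiry."""

    def __init__(self, ttl_seconds: float = 1800.0) -> None:
        self._ttl = ttl_seconds
        # (tenant_id, session_id) -> (expiry timestamp, messages)
        self._items = {}

    def history(self, tenant_id: str, session_id: str, now: Optional[float] = None) -> list:
        now = time.time() if now is None else now
        entry = self._items.get((tenant_id, session_id))
        if entry is None or entry[0] <= now:
            return []  # unknown or expired session
        return list(entry[1])

    def append(self, tenant_id: str, session_id: str, message: dict,
               now: Optional[float] = None) -> None:
        now = time.time() if now is None else now
        messages = self.history(tenant_id, session_id, now) + [message]
        # Each write renews the sliding expiry window.
        self._items[(tenant_id, session_id)] = (now + self._ttl, messages)
```

Because the key includes the tenant ID, a session ID that leaks or collides across tenants still cannot surface another tenant's conversation.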

Telemetry and Experience Insights

The application layer is also where startups gain insight into how customers engage with their agents:

- Application Insights captures interaction metrics, latency, and user satisfaction signals.

- Custom logging can track intent success rates, completion times, or feedback loops to continuously improve orchestration quality.

- Startups can aggregate telemetry by tenant to drive usage-based pricing or SLA reporting. This data also feeds marketplace metering for monetization.

Why It Matters

The application layer defines the customer experience surface of your agent platform. By designing it to be tenant-aware, channel-flexible, and data-secure from the start, startups can:

- Deliver consistent, trusted interactions across Teams, web, and APIs.

- Support enterprise-grade identity, audit, and compliance requirements.

- Collect valuable insights that improve agent reasoning and performance.

- Enable future marketplace monetization through usage telemetry and metering.

In essence, the application layer is the front door to your agent’s intelligence, where product design, security, and user experience converge.

Integrating User Interfaces for Agentic Workflows

While the application layer defines how your agent exposes APIs and manages access, the user interface integration defines how end users experience the agent. For startups, this is a powerful lever. Embedding agents into existing collaboration and workflow surfaces like Microsoft Teams, Outlook, and Microsoft 365 apps can shorten adoption cycles and increase stickiness.

Building in Microsoft Teams

Teams is a natural interface for enterprise-grade agents. Through Teams Apps, startups can embed their agents directly into chat, meetings, and channels, allowing users to interact with the agent where they already work.

- Bots and Message Extensions enable conversational interactions or quick actions that connect directly to your agent’s orchestration layer via a secure API.

- Adaptive Cards and Task Modules can present structured output, enabling guided workflows and approvals.

- SaaS or Azure App Offer linking within Teams allows for monetized experiences tied to Azure Marketplace listings.

- Entra ID integration ensures single sign-on and tenant-level access control, simplifying multitenant deployments across organizations.

Teams acts as both a delivery channel and a trust layer, bridging your AI system with enterprise workflows under Microsoft’s security model. The Microsoft 365 Agents Toolkit, a Visual Studio Code extension and CLI, streamlines building, debugging, and deploying custom agents for Microsoft 365 platforms such as Copilot and Teams. It automates tasks such as manifest management, sideloading, and Azure resource provisioning, enabling developers to create declarative or pro-code agents with integrated identity and data access.

Embedding in Microsoft 365 Experiences

Beyond Teams, startups can extend their agents across the broader M365 ecosystem:

- Outlook add-ins allow proactive or reactive assistance in emails (for example, summarizing threads or generating follow-up actions).

- Graph Connectors can feed structured data into M365 Search and Copilot experiences, extending the agent’s reach into corporate knowledge.

By integrating with M365 surfaces, startups can leverage the Microsoft Graph API to unify context, bringing together messages, calendar events, documents, and tasks, and making their agent contextually aware of a user’s work environment.

Other Interface Options

For external or hybrid scenarios, startups can also integrate:

- Web apps or portals built with Azure App Service or Static Web Apps, often serving as management consoles or dashboards.

- Mobile apps powered by React Native or .NET MAUI, authenticated via Entra ID and connected through API Management.

- Third-party integrations using REST or Microsoft Graph APIs for Slack, Salesforce, or ServiceNow, ensuring your agent can interact across ecosystems.

Designing for Experience and Security

Regardless of interface, startups should design for:

- Contextual grounding that lets the agent pull relevant tenant or user data from Microsoft Graph or internal APIs.

- Low-friction authentication using Entra single sign-on or delegated tokens for seamless user experience.

- Consistent UX and branding to ensure agent interactions feel natural within each host environment.

Integrating agents into the Microsoft 365 ecosystem is not just about convenience. It is about meeting users where they work and making your AI solution a natural extension of their productivity tools rather than another siloed app.

Orchestration Layer

If the application layer is the front door to your agent platform, the orchestration layer is the brains, coordinating reasoning, tools, and workflows to deliver coherent, contextually aware outcomes. This is where intelligence meets action.

The orchestration layer connects user intent (from the app layer) to domain logic, data, and external systems. For agentic startups, it is the most strategic part of the architecture, balancing flexibility, scalability, and observability while abstracting complexity from the front end.

Core Functions of the Orchestration Layer

The orchestration layer typically performs five key responsibilities:

- Intent interpretation: translating user prompts or API calls into structured actions or goals.

- Context assembly: retrieving relevant data, memory, or tools before invoking reasoning models.

- Tool invocation: executing API calls, workflows, or integrations on behalf of the agent.

- Response synthesis: combining reasoning output with domain logic to generate meaningful replies.

- Observation and learning: logging outcomes, errors, and metrics for continuous improvement.

For enterprises, these functions can be modeled as a pipeline of micro-orchestrations rather than a single monolith. Startups, however, tend to leverage more monolithic design patterns at earlier stages to optimize for speed and simplicity.
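
The five responsibilities can be sketched as a single function that runs them in order, which is roughly the monolithic shape an early-stage startup might begin with. Everything here is hypothetical: the `Turn` type, the `run_turn` function, and the injected callables stand in for a real model, retriever, and tools.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Turn:
    tenant_id: str
    user_input: str
    intent: str = ""
    context: list = field(default_factory=list)
    tool_result: str = ""
    reply: str = ""
    log: list = field(default_factory=list)


def run_turn(
    turn: Turn,
    interpret: Callable[[str], str],
    retrieve: Callable[[str, str], list],
    tools: dict,
    synthesize: Callable[[Turn], str],
) -> Turn:
    turn.intent = interpret(turn.user_input)               # 1. intent interpretation
    turn.context = retrieve(turn.tenant_id, turn.intent)   # 2. context assembly
    tool = tools.get(turn.intent)
    turn.tool_result = tool(turn) if tool else ""          # 3. tool invocation
    turn.reply = synthesize(turn)                          # 4. response synthesis
    turn.log.append(f"{turn.tenant_id}:{turn.intent}:ok")  # 5. observation
    return turn
```

Since each step is an injected callable, any one of them can later be moved into its own function or service without changing the others.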

Implementing on Azure

Azure provides a native foundation for building and scaling orchestration logic:

- Azure Functions serve as stateless compute nodes that execute specific reasoning or task flows. Each function can be bound to a particular tenant, topic, or event type.

- Durable Functions enable long-running or multi-step orchestration patterns, which are well suited to reasoning loops, agent collaboration, or multi-turn workflows.

- Azure Service Bus provides reliable message delivery between orchestration components, with ordered (first-in, first-out) delivery when message sessions are used. This is essential for deterministic execution across distributed services.

These serverless primitives allow startups to evolve from simple request-response agents to reactive, event-driven AI systems that adapt to user and system context dynamically.

AI Reasoning and Tool Use

At the heart of the orchestration layer lies reasoning, powered by Azure OpenAI models like GPT-5 or other Azure-Direct Model offerings.

These models are best used not as monolithic brains, but as reasoning nodes within a structured pipeline:

- Use system prompts and function calling to guide reasoning models in a controlled way.

- Store tool definitions and endpoint metadata in a central tool registry (for example, Cosmos DB or Azure App Configuration) that each agent instance can query dynamically.

- Execute high-privilege actions via Managed Identities, so agents invoke Azure or external APIs securely without embedding credentials.

By separating what the model decides from how execution happens, you gain both security isolation and observability into the reasoning process.
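
The separation between deciding and executing can be illustrated with a small tool registry. This sketch assumes the model returns a tool name plus a JSON string of arguments, which is the general shape of function-calling output. The registry, the `execute_tool_call` function, and the `get_order_status` tool are hypothetical.

```python
import json
from typing import Callable

# Hypothetical registry: tool name -> (JSON-schema-style parameters, handler).
TOOL_REGISTRY = {}


def register_tool(name: str, parameters: dict, handler: Callable[..., str]) -> None:
    TOOL_REGISTRY[name] = (parameters, handler)


def execute_tool_call(name: str, arguments_json: str, allowed_tools: set) -> str:
    """Run a tool the model asked for, after checking that it is permitted."""
    if name not in allowed_tools or name not in TOOL_REGISTRY:
        raise PermissionError(f"tool not allowed: {name}")
    parameters, handler = TOOL_REGISTRY[name]
    arguments = json.loads(arguments_json)
    missing = [key for key in parameters.get("required", []) if key not in arguments]
    if missing:
        raise ValueError(f"missing arguments: {missing}")
    return handler(**arguments)


register_tool(
    "get_order_status",
    {"type": "object", "properties": {"order_id": {"type": "string"}}, "required": ["order_id"]},
    lambda order_id: f"order {order_id} is shipped",
)
```

The model only ever proposes a call; the `allowed_tools` set, which can differ per tenant, decides whether the call runs.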

Context Assembly and Memory Coordination

Reasoning is only as good as the context provided. The orchestration layer is responsible for assembling this context from multiple sources before model invocation:

- Query Azure AI Search or Cosmos DB to fetch tenant-specific knowledge.

- Retrieve user history or preferences from Redis or PostgreSQL.

- Pull semantic memories from vector stores (for example, vector search with the pgvector extension on Azure Database for PostgreSQL).

This approach enables context-aware reasoning, a hallmark of advanced agentic systems.

Observability and Feedback Loops

To ensure agents remain reliable and debuggable at scale, the orchestration layer should emit rich telemetry:

- Azure Application Insights can trace every reasoning step, model call, and API execution.

- Azure Monitor Logs can track agent performance by tenant, intent, or tool usage.

- Feedback signals (for example, user corrections or success rates) can feed into fine-tuning or prompt optimization pipelines in the AI layer.
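
As a rough illustration of step-level tracing, the context manager below records the duration and outcome of each orchestration step. The `TRACE` list is a stand-in for a real telemetry sink, and the record fields are hypothetical rather than an Application Insights schema.

```python
import time
from contextlib import contextmanager

TRACE = []  # stand-in for a telemetry sink


@contextmanager
def traced_step(tenant_id: str, step: str):
    """Record the duration and outcome of one orchestration step."""
    start = time.perf_counter()
    record = {"tenant_id": tenant_id, "step": step, "ok": True}
    try:
        yield record  # callers may attach extra fields, e.g. token counts
    except Exception as exc:
        record["ok"] = False
        record["error"] = type(exc).__name__
        raise
    finally:
        record["duration_ms"] = (time.perf_counter() - start) * 1000
        TRACE.append(record)
```

Wrapping each model call and tool call in `with traced_step(...)` yields one record per step, tagged by tenant, which is the granularity needed for per-tenant dashboards.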

Why It Matters

The orchestration layer is what makes an agent agentic, able to plan, decide, and act autonomously.

By implementing this layer using Azure’s event-driven and serverless infrastructure, startups can:

- Scale orchestration dynamically per tenant or workload.

- Enable fine-grained control over tool access and reasoning context.

- Maintain a traceable chain of thought for compliance and debugging.

- Rapidly extend their agent with new tools, channels, or behaviors.

In short, the orchestration layer transforms Azure from a cloud platform into an execution fabric for intelligent agents, where reasoning, tools, and context seamlessly converge.

Context Layer

The context layer is where your agent gains understanding. It connects reasoning with real-world knowledge, ensuring responses are accurate, relevant, and tenant-specific. Without a well-designed context layer, even the most advanced reasoning models risk becoming unreliable or generic.

For startups, this layer is a competitive differentiator. It is where proprietary data, customer insights, and system integrations converge to make an agent truly useful. The challenge lies in designing it to be secure, multitenant, and dynamically composable across use cases and customers.

The Role of Context in Agentic Systems

An AI agent’s intelligence depends not only on its model but on what it knows at the time of reasoning. Context serves three essential purposes:

- Knowledge grounding: enriching model responses with facts, data, and structured business logic.

- Memory: maintaining continuity across conversations, workflows, or sessions.

- Retrieval and synthesis: fetching, filtering, and summarizing relevant data in real time.

Together, these functions turn a stateless model into a stateful reasoning system that learns and adapts with every interaction.

Context Composition in Azure

Azure provides multiple services that can be composed into a robust, multi-layered context stack:

- Azure AI Search: the foundation for retrieval-augmented generation (RAG). It indexes structured and unstructured data, enabling agents to pull tenant-specific knowledge at query time.

- Cosmos DB: ideal for storing semi-structured domain knowledge, tool metadata, and configuration per tenant.

- Azure Storage or Data Lake: used for long-term document storage and batch indexing pipelines.

- Azure Cache for Redis or Azure Database for PostgreSQL: support short-term and session memory, enabling context continuity across conversations.

- Azure OpenAI Embeddings: enable semantic vectorization of tenant data, powering similarity search for context retrieval.

When orchestrated together, these services form a hierarchical memory system, combining fast-access caches with deeper retrieval layers for long-term grounding.
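
The cache-then-retrieve pattern behind a hierarchical memory system can be sketched in a few lines. The `LayeredContext` class is hypothetical; `deep_lookup` stands in for a slower layer such as a search index or database query.

```python
from typing import Callable, Optional


class LayeredContext:
    """Check a fast cache first, then fall back to a slower retrieval layer."""

    def __init__(self, deep_lookup: Callable[[str, str], Optional[str]]) -> None:
        self._cache = {}          # (tenant_id, key) -> value
        self._deep_lookup = deep_lookup
        self.deep_calls = 0       # how often the slow layer was hit

    def get(self, tenant_id: str, key: str) -> Optional[str]:
        cache_key = (tenant_id, key)
        if cache_key in self._cache:
            return self._cache[cache_key]
        self.deep_calls += 1
        value = self._deep_lookup(tenant_id, key)
        if value is not None:
            self._cache[cache_key] = value  # promote to the fast layer
        return value
```

A real cache would also need expiry and invalidation when the underlying documents change; those are omitted here to keep the layering visible.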

Multitenant Data Isolation

Startups must design context systems that separate knowledge boundaries cleanly:

- Use per-tenant indexes or partitions in Azure AI Search and Cosmos DB to isolate embeddings and documents.

- Enforce Managed Identity-based access control so that agents can only retrieve data for their current tenant.

- Consider a metadata tagging system that scopes retrieval by tenant, role, and content type.

This architecture supports compliance and helps prevent cross-tenant data leakage, which is critical for enterprise trust.
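
When tenants share an index, metadata scoping usually takes the form of a filter applied to every query. Azure AI Search accepts filters in OData syntax, and the sketch below builds such a filter string. The field names `tenant_id`, `allowed_roles`, and `content_type` are hypothetical and would need to exist as filterable fields in the index.

```python
from typing import Optional


def _quote(value: str) -> str:
    # OData string literals escape a single quote by doubling it.
    return "'" + value.replace("'", "''") + "'"


def build_filter(tenant_id: str, role: str, content_type: Optional[str] = None) -> str:
    """Build an OData filter that scopes retrieval by tenant, role, and type."""
    clauses = [
        f"tenant_id eq {_quote(tenant_id)}",
        # allowed_roles is assumed to be a collection-of-strings field.
        f"allowed_roles/any(r: r eq {_quote(role)})",
    ]
    if content_type is not None:
        clauses.append(f"content_type eq {_quote(content_type)}")
    return " and ".join(clauses)
```

The filter should be built on the server from the authenticated tenant and role, never from values the client sends, so a caller cannot widen its own scope.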

Retrieval-Augmented Reasoning

At runtime, the context layer enriches prompts with dynamic knowledge using RAG pipelines. A typical flow might look like:

- Receive a user query or intent from the orchestration layer.

- Run semantic search in Azure AI Search for relevant documents.

- Retrieve supporting facts or tool definitions.

- Construct a composite prompt with retrieved context.

- Send the enriched prompt to the reasoning model (for example, GPT-4 Turbo).

By externalizing knowledge retrieval, startups can keep model prompts lightweight while ensuring up-to-date, tenant-specific grounding.
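
The prompt-construction step of this flow can be sketched as follows. The `build_prompt` function, the passage shape (`source` and `text` keys), and the character budget are assumptions for illustration; a real pipeline would more likely budget by tokens.

```python
def build_prompt(question: str, passages: list, max_chars: int = 2000) -> list:
    """Build chat messages that ground the model in retrieved passages."""
    context_lines = []
    used = 0
    for index, passage in enumerate(passages, start=1):
        line = f"[{index}] ({passage['source']}) {passage['text']}"
        if used + len(line) > max_chars:
            break  # keep the prompt within a rough size budget
        context_lines.append(line)
        used += len(line)
    system = (
        "Answer using only the numbered context below. "
        "If the context is insufficient, say so.\n\n" + "\n".join(context_lines)
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]
```

Numbering the passages lets the model cite its sources, which in turn makes answers easier to audit.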

Memory Systems for Adaptive Behavior

Beyond retrieval, context includes short-term and long-term memory, the mechanisms that let an agent evolve:

- Knowledge: static data that grounds agent behavior (think RAG).

- Long-term memory: semantic memory accumulated by agents through experience and interaction. This supports personalization and improved user experience over time.

- Short-term memory: working memory for context management within a session. This is critical for session persistence and multi-agent solutions.

This layered memory approach allows agents to adapt behavior over time without retraining the model.
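
A toy version of the short-term and long-term tiers is shown below. The `AgentMemory` class and its method names are hypothetical; short-term memory is modeled as a sliding window of recent turns and long-term memory as a dictionary of remembered facts.

```python
from collections import deque


class AgentMemory:
    """Toy two-tier memory: a sliding window plus durable facts."""

    def __init__(self, window: int = 4) -> None:
        self.short_term = deque(maxlen=window)  # recent turns only
        self.long_term = {}                     # facts learned over time

    def observe(self, message: str) -> None:
        # Older turns fall out of the window automatically.
        self.short_term.append(message)

    def remember(self, key: str, fact: str) -> None:
        self.long_term[key] = fact

    def context(self) -> list:
        """Durable facts first, then the most recent turns."""
        facts = [f"{key}: {fact}" for key, fact in sorted(self.long_term.items())]
        return facts + list(self.short_term)
```

Behavior changes when `remember` is called, not when the model is retrained, which is the sense in which layered memory lets an agent adapt over time.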

Observability and Cost Management

Context retrieval and vector search can become costly at scale, especially with large tenant datasets. Azure helps manage this through:

- Search tiers and scaling in Azure AI Search to align with data size and query load.

- Incremental indexing to reprocess only new or changed documents, combined with archiving cold data to lower-cost storage tiers.

- Application Insights telemetry to monitor latency, recall quality, and cost per query.

Startups can further optimize costs by caching high-frequency retrievals, compressing embeddings, or batching document ingestion.

Why the Context Layer Matters

The context layer is the foundation of trustworthy intelligence. It helps your agent avoid hallucination, stay grounded in customer data, and evolve with real-world usage. By implementing it with Azure-native services, startups gain:

- Secure, tenant-isolated knowledge access.

- Scalable retrieval and memory management.

- Consistent, factually accurate reasoning across users and contexts.

When designed correctly, this layer transforms your agent from a conversational system into a knowledgeable assistant, capable of understanding each tenant’s business as if it were its own.