
ALM is a term used to describe the lifecycle management of software applications, which includes development, maintenance, and governance. More information: Application lifecycle management (ALM) with Microsoft Power Platform.

This article describes considerations and strategies for working with specific aspects of lifecycle management from the perspective of code components in Microsoft Dataverse.

Development and debugging ALM considerations

When developing code components, you typically follow these steps:

- Create a code component project (pcfproj) from a template using pac pcf init. More information: Create and build a code component.

- Implement the code component logic. More information: Component implementation.

- Debug the code component using the local test harness. More information: Debug code components.

- Create a solution project (cdsproj) and add the code component project as a reference. More information: Package a code component.

- Build the code component in release mode for distribution and deployment.

When your code component is ready for testing inside a model-driven app, canvas app, or portal, there are two ways to deploy a code component to Dataverse:

pac pcf push: This deploys a single code component at a time to a solution specified by the

--solution-unique-nameparameter, or a temporary PowerAppsTools solution when no solution is specified.Using pac solution init and

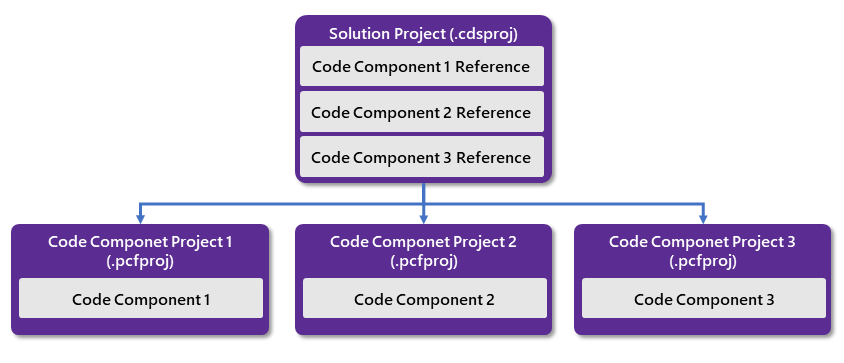

msbuildto build acdsprojsolution project that has references to one or more code components. Each code component is added to thecdsprojusing pac solution add-reference. A solution project can contain references to multiple code components, whereas code component projects may only contain a single code component.The following diagram shows the one-to-many relationship between

cdsprojandpcfprojprojects:

More information: Package a code component.

Building pcfproj code component projects

When building pcfproj projects, the generated JavaScript depends on the command used to build and the PcfBuildMode in the pcfproj file.

You don't normally deploy a code component built in development mode into Microsoft Dataverse, because it's often too large to import and may result in slower runtime performance. More information: Debugging after deploying into Microsoft Dataverse.

To make pac pcf push produce a release build, set PcfBuildMode inside the pcfproj by adding a new element after the OutputPath element, as follows:

<PropertyGroup>
  <Name>my-control</Name>
  <ProjectGuid>6aaf0d27-ec8b-471e-9ed4-7b3bbc35bbab</ProjectGuid>
  <OutputPath>$(MSBuildThisFileDirectory)out\controls</OutputPath>
  <PcfBuildMode>production</PcfBuildMode>
</PropertyGroup>
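You might prefer to script this change, for example in a build step, instead of editing the file by hand. The following TypeScript sketch shows the idea.

- It treats the pcfproj as plain text.
- It assumes a single OutputPath element, as in the excerpt above.
- The function name setPcfBuildMode is hypothetical and isn't part of the PAC CLI or pcf-scripts.
- A production-quality tool would use a real XML parser rather than regular expressions.

```typescript
type PcfBuildMode = "development" | "production";

// Hypothetical helper: sets <PcfBuildMode> in pcfproj XML text.
// Assumes exactly one <OutputPath> element; uses regexes, not an XML parser.
function setPcfBuildMode(pcfprojXml: string, mode: PcfBuildMode): string {
  const existing = /<PcfBuildMode>[^<]*<\/PcfBuildMode>/;
  if (existing.test(pcfprojXml)) {
    // Already present: update the value in place.
    return pcfprojXml.replace(existing, () => `<PcfBuildMode>${mode}</PcfBuildMode>`);
  }
  // Not present: add it on the line after </OutputPath>, with the same indentation.
  const outputPath = /^([ \t]*)(<OutputPath>[^<]*<\/OutputPath>)[ \t]*$/m;
  const match = outputPath.exec(pcfprojXml);
  if (!match) {
    throw new Error("No <OutputPath> element found in pcfproj");
  }
  const indent = match[1];
  // A replacer function avoids "$" in $(MSBuildThisFileDirectory) being
  // interpreted as a replacement pattern.
  return pcfprojXml.replace(
    outputPath,
    () => `${indent}${match[2]}\n${indent}<PcfBuildMode>${mode}</PcfBuildMode>`
  );
}
```

Calling it a second time is safe. The second call updates the existing PcfBuildMode element instead of adding another one.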

The following table shows which commands result in development vs. release builds:

| Build command used on pcfproj | Development build (debug purposes only) | Release build |
|---|---|---|
| npm start watch | Always | |
| pac pcf push | Default behavior or when PcfBuildMode is set to development in the pcfproj file | PcfBuildMode is set to production in the pcfproj file |
| npm run build | Default behavior | npm run build -- --buildMode production |
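To make the table concrete, here's a small TypeScript sketch that encodes it as a function.

- The type and function names are hypothetical.
- The function reflects only what the table states, for the commands listed there.
- It doesn't inspect real project files.

```typescript
// Hypothetical encoding of the pcfproj build table above.
type PcfBuildCommand =
  | "npm start watch"
  | "pac pcf push"
  | "npm run build"
  | "npm run build -- --buildMode production";

type PcfBuildKind = "development" | "release";

function pcfBuildKind(
  command: PcfBuildCommand,
  pcfBuildMode?: "development" | "production" // PcfBuildMode in the pcfproj, if set
): PcfBuildKind {
  switch (command) {
    case "npm start watch":
      return "development"; // always a development build
    case "pac pcf push":
      // Default behavior (or PcfBuildMode=development) is a development build.
      return pcfBuildMode === "production" ? "release" : "development";
    case "npm run build":
      return "development"; // default behavior
    case "npm run build -- --buildMode production":
      return "release";
  }
}
```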

More information: Package a code component.

Building .cdsproj solution projects

When building a solution project (.cdsproj), you have the option to generate the output as a managed or unmanaged solution. Managed solutions are used to deploy to any environment that isn't a development environment for that solution. This includes test, UAT, SIT, and production environments. More information: Managed and unmanaged solutions.

The SolutionPackageType property is included in the .cdsproj file created by pac solution init, but it's initially commented out. Uncomment the section and set the value to Managed, Unmanaged, or Both.

<!-- Solution Packager overrides, un-comment to use: SolutionPackagerType (Managed, Unmanaged, Both) -->
<PropertyGroup>
  <SolutionPackageType>Managed</SolutionPackageType>
</PropertyGroup>

The following table shows which command and configuration result in development vs. release builds:

| Build command used on cdsproj | SolutionPackageType | Output |
|---|---|---|
| msbuild | Managed | Development build inside Managed Solution |
| msbuild /p:configuration=Release | Managed | Release build inside Managed Solution |
| msbuild | Unmanaged | Development build inside Unmanaged Solution |
| msbuild /p:configuration=Release | Unmanaged | Release build inside Unmanaged Solution |
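The same table can be expressed as a lookup. This TypeScript sketch is hypothetical and doesn't run msbuild. It rests on two assumptions:

- The configuration comparison is case-insensitive, as MSBuild property comparisons are.
- A SolutionPackageType of Both produces one managed and one unmanaged solution.

```typescript
type SolutionPackageType = "Managed" | "Unmanaged" | "Both";

// Hypothetical helper mirroring the cdsproj table above.
// `configuration` is the value passed as /p:configuration=..., or undefined
// when msbuild is run without it.
function cdsprojBuildOutputs(packageType: SolutionPackageType, configuration?: string): string[] {
  // Release configuration => release build of the code components;
  // anything else (including no /p:configuration) => development build.
  const build = configuration?.toLowerCase() === "release" ? "Release" : "Development";
  // Assumption: "Both" yields a managed and an unmanaged solution.
  const kinds: string[] = packageType === "Both" ? ["Managed", "Unmanaged"] : [packageType];
  return kinds.map((kind) => `${build} build inside ${kind} Solution`);
}
```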

More information: Package a code component.

Source code control with code components

When developing code components, it's recommended that you use a source control provider such as Azure DevOps or GitHub. When you commit changes using git, the .gitignore file provided by the pac pcf init template ensures that some files aren't added to source control, because they're either restored by npm or generated as part of the build process:

# dependencies
/node_modules

# generated directory
**/generated

# output directory
/out

# msbuild output directories
/bin
/obj
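As a rough illustration of which paths these rules keep out of source control, the following TypeScript sketch implements just the two kinds of pattern used in this file:

- Root-anchored names: /node_modules, /out, /bin and /obj.
- The any-depth pattern **/generated.

It isn't a general .gitignore implementation: it handles no negation, no wildcards inside names and no nested .gitignore files. The function name and example paths are hypothetical.

```typescript
// Simplified matcher for the patterns in the template .gitignore above.
// Paths are relative to the project root and use forward slashes.
function isIgnoredByPcfTemplate(relativePath: string): boolean {
  const rootOnly = ["node_modules", "out", "bin", "obj"]; // /node_modules, /out, /bin, /obj
  const segments = relativePath.split("/").filter((segment) => segment.length > 0);
  if (segments.length === 0) {
    return false;
  }
  // A leading slash in the pattern anchors it to the project root.
  if (rootOnly.includes(segments[0])) {
    return true;
  }
  // **/generated matches a file or folder named "generated" at any depth.
  return segments.includes("generated");
}
```

For example, out/controls/ControlName/bundle.js is ignored, while ControlName/index.ts is kept.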

Because the /out folder is excluded, the built bundle.js file (and related resources) isn't added to source control. Whether your code components are built manually or as part of an automated build pipeline, bundle.js is built from the latest code, which ensures that all changes are included.

Additionally, when a solution is built, any associated solution zip files aren't committed to source control. Instead, the output is published as binary release artifacts.

Using SolutionPackager with code components

In addition to source controlling the pcfproj and cdsproj, you can use SolutionPackager to incrementally unpack a solution into its respective parts as a series of XML files that can be committed to source control. This gives you a complete picture of your metadata in a human-readable format, so you can track changes using pull requests or similar. Each time a change is made to the environment's solution metadata, SolutionPackager is used to unpack it, and the changes can be viewed as a change set.

Note

At this time, SolutionPackager differs from using pac solution clone in that it can be used incrementally to export changes from a Dataverse solution.

If you're combining code components with other solution elements like tables, model-driven apps, and canvas apps, you normally don't need to build a cdsproj project. Instead, you move the built artifacts from the pcfproj into the solution package folder before repacking it for import.

Once a solution that contains a code component is unpacked using SolutionPackager /action: Extract, it will look similar to:

.
├── Controls
│   └── prefix_namespace.ControlName
│       ├── bundle.js *
│       ├── css
│       │   └── ControlName.css *
│       ├── ControlManifest.xml *
│       └── ControlManifest.xml.data.xml
├── Entities
│   └── Contact
│       ├── FormXml
│       │   └── main
│       │       └── {3d60f361-84c5-eb11-bacc-000d3a9d0f1d}.xml
│       ├── Entity.xml
│       └── RibbonDiff.xml
└── Other
    ├── Customizations.xml
    └── Solution.xml

Under the Controls folder, there's a subfolder for each code component included in the solution. The other folders contain the additional solution components added to the solution.

When committing this folder structure to source control, exclude the files marked with an asterisk (*) above, because they're output when the pcfproj project for the corresponding component is built.

The only files that are required are the *.data.xml files since they contain metadata that describes the resources required by the packaging process. After each code component is built, the files in the out folder are copied over into the respective control folder in the SolutionPackager folders. Once the build outputs have been added, the package folders contain all the data required to repack into a Dataverse solution using SolutionPackager /action: Pack.
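The copy step can be sketched as a path mapping. This hypothetical TypeScript example makes two assumptions:

- The built files for a control are under out/controls/<ControlName>/, matching the OutputPath in the pcfproj excerpt earlier.
- The target folder follows the prefix_namespace.ControlName pattern from the tree above.

The function only plans the copy. Performing it is left to your build script or pipeline task.

```typescript
// Hypothetical: returns source -> destination pairs, using forward slashes.
function planControlCopy(
  builtFiles: string[], // paths relative to out/controls/<ControlName>/
  publisherPrefix: string,
  controlNamespace: string,
  controlName: string
): Map<string, string> {
  const sourceDir = `out/controls/${controlName}`;
  const targetDir = `Controls/${publisherPrefix}_${controlNamespace}.${controlName}`;
  const plan = new Map<string, string>();
  for (const file of builtFiles) {
    // Keep the relative layout, e.g. css/ControlName.css stays under css/.
    plan.set(`${sourceDir}/${file}`, `${targetDir}/${file}`);
  }
  return plan;
}
```

The ControlManifest.xml.data.xml file stays in source control. It isn't part of the build output, so a copy planned this way never overwrites it.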

More information: SolutionPackager command-line arguments.

Code component solution strategies

Code components are deployed to downstream environments using Dataverse solutions. Once deployed to your development environment, they can be deployed in the same way as other solution components. More information: Solution concepts - Power Platform.

There are two strategies for deploying code components inside solutions:

Segmented solutions - A solution project is created using pac solution init, and then pac solution add-reference is used to add one or more code components. This solution can then be exported and imported into downstream environments. Other segmented solutions take a dependency on the code component solution, so it must be deployed into that environment first. This is referred to as solution segmenting because the overall functionality is split between different solution segments and layers, with interdependencies being tracked by the solution framework. If you use this approach, you'll likely need multiple development environments, one for each segmented solution. More information: Use segmented solutions in Power Apps.

Single solution - A single solution is created inside a Dataverse environment and then code components are added along with other solution components (such as tables, model-driven apps, or canvas apps) that in turn reference those code components. This solution can be exported and imported into downstream environments without any inter-solution dependencies. With this approach, code and environment branching become important so that you can deploy an update to one part of the solution without taking changes that are being made in another area. More information: Branch and merging strategy with Microsoft Power Platform.

Reasons for adopting a segmented solution approach over a mixed single solution approach might be:

Versioning lifecycle - You want to develop, deploy, and version-control your code components on a separate lifecycle from the other parts of your solution. This is a common scenario when you have a 'fusion team,' where code components built by developers are consumed by app makers. Typically, this would also mean that your code components exist in a different code repository from other solution components.

Shared use - You want to share your code components between multiple environments and therefore don't want to couple your code components with any other solution components. For example, you might be an ISV, or you might be developing a code component for use by different parts of your organization that each have their own environment.

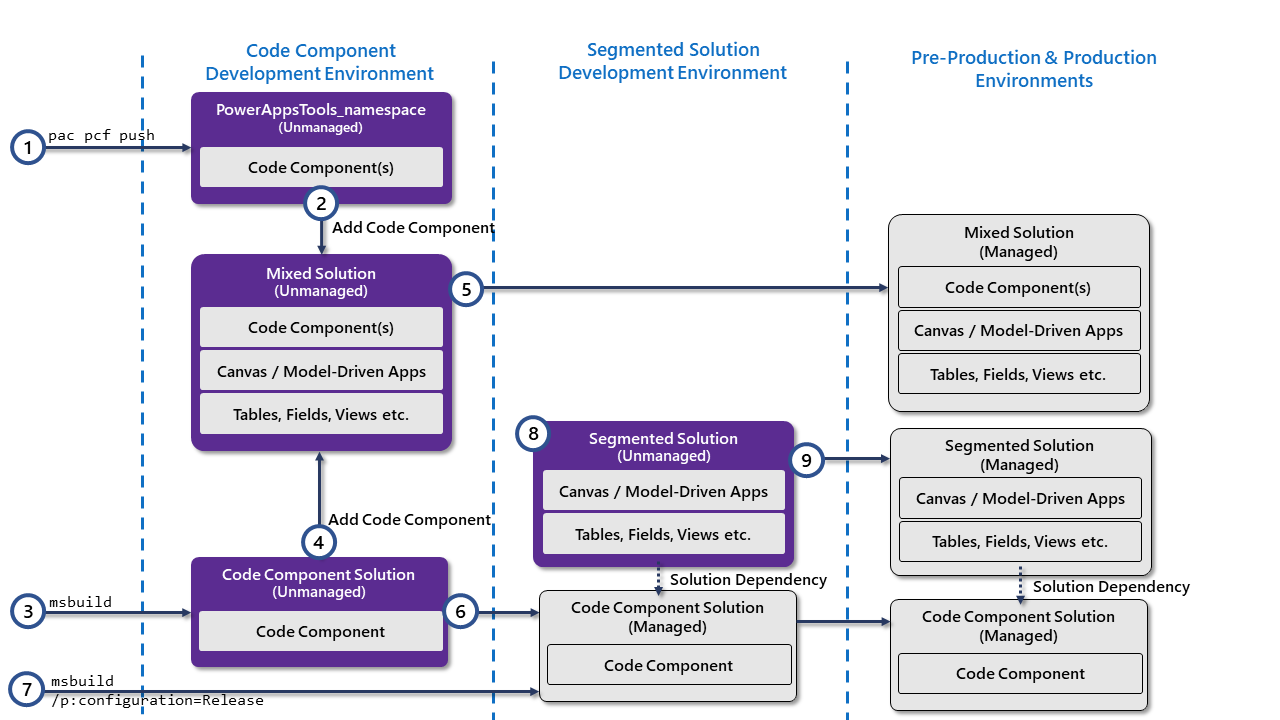

The following diagram shows an overview of the solution lifecycle for these two approaches:

The diagram describes the following points:

Push using PAC CLI - When the code component is ready for testing inside Dataverse, pac pcf push is used to deploy it to a development environment. This creates an unmanaged solution named PowerAppsTools_namespace, where namespace is the prefix of the solution publisher under which you want to deploy your code component. The solution publisher should already exist in the target environment and must have the same prefix as the one you want to use for downstream environments. After deployment, you can add your code component to model-driven or canvas apps for testing.

Note

As described above, it's important to configure the code component

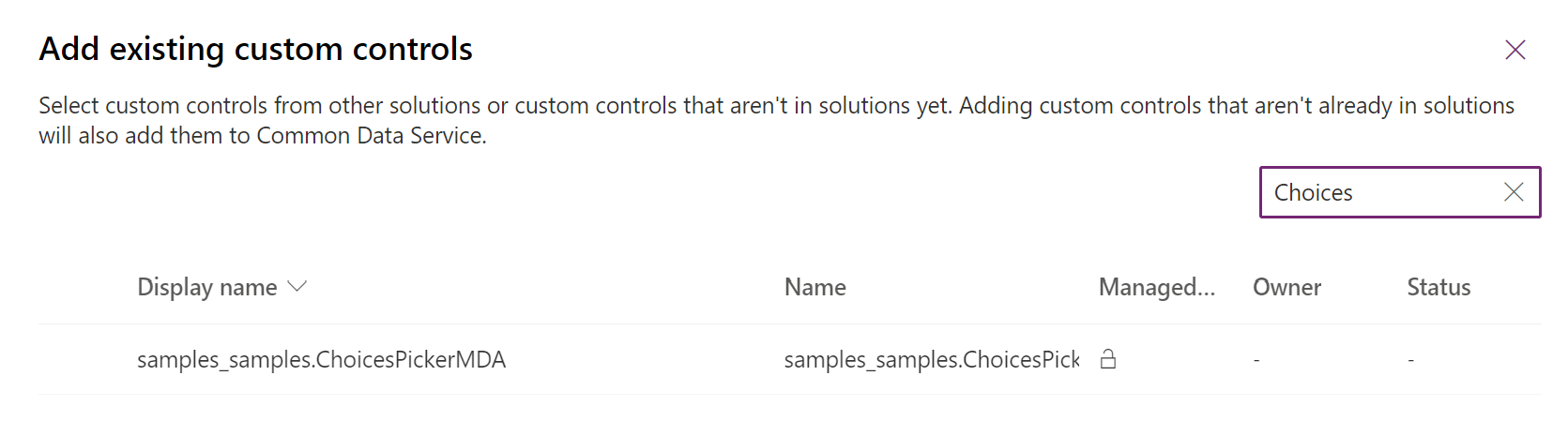

cdsprojfor production build so that you deploy the code optimized for production rather than development.Add existing code components (after PAC CLI deployment) - If you're using the single solution approach, once deployed, the code component can be added to another solution. (That solution must share the same solution publisher as used by the PowerAppsTools solution.)

Build unmanaged solution project - If you're using solution cdsproj projects, then an unmanaged solution can be built using msbuild, and then imported into your development environment.

Add existing code components (after solution project deployment) - Much in the same way as after pac pcf push, code components that are imported from a solution project build may be added to a mixed solution, provided that the same solution publisher prefix was used.

Export single solution as managed - The single mixed component solution can then be exported as managed and imported into the downstream environments. Since the code components and other solution components are deployed in the same solution, they all share the same solution and versioning strategy.

Export code component segmented solution as managed - If you're using the segmented solution approach, you can export your code component solution project as managed into downstream environments.

Build code component segmented solution as managed - If you're using segmented solutions and have no need for an unmanaged solution, you can build the solution project directly as a managed solution (with SolutionPackageType set to Managed) using msbuild /p:configuration=Release. This can then be imported into environments that need to take a dependency on its code components.

Consume segmented code component solution - Once code components are deployed via a managed segmented solution, other solutions can be built that take a dependency on the code component solution. The dependencies on code components are listed in the solution's MissingDependencies section with type 66. More information: Dependency tracking for solution components.

Deploy code component segmented solution before solutions that use it - When importing solutions that take dependencies on a segmented code component solution, the code component solution must be installed in the target environment before the dependent solutions can be imported.
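The ordering rule in the last point is a topological sort over solution dependencies. The TypeScript sketch below uses Kahn's algorithm to compute an import order in which each solution comes after the solutions it depends on.

- The solution names are hypothetical.
- In practice you'd derive the dependency map from each solution's MissingDependencies section rather than typing it in.

```typescript
// Sketch: import order via Kahn's algorithm.
// dependsOn maps a solution name to the solutions it depends on.
function importOrder(dependsOn: Record<string, string[]>): string[] {
  const unmet = new Map<string, number>();        // dependencies not yet imported
  const dependents = new Map<string, string[]>(); // reverse edges
  const register = (solution: string): void => {
    if (!unmet.has(solution)) {
      unmet.set(solution, 0);
      dependents.set(solution, []);
    }
  };
  for (const [solution, deps] of Object.entries(dependsOn)) {
    register(solution);
    for (const dep of new Set(deps)) {
      register(dep);
      unmet.set(solution, (unmet.get(solution) ?? 0) + 1);
      dependents.get(dep)!.push(solution);
    }
  }
  // Start with solutions that have no dependencies (sorted for stable output).
  const ready = [...unmet.keys()].filter((s) => unmet.get(s) === 0).sort();
  const order: string[] = [];
  while (ready.length > 0) {
    const next = ready.shift()!;
    order.push(next);
    for (const dependent of dependents.get(next)!) {
      const left = unmet.get(dependent)! - 1;
      unmet.set(dependent, left);
      if (left === 0) {
        ready.push(dependent);
      }
    }
  }
  if (order.length !== unmet.size) {
    throw new Error("Circular dependency between solutions");
  }
  return order;
}
```

For example, importOrder({ SalesApp: ["ContosoControls"], ServiceApp: ["ContosoControls", "SharedTables"] }) returns ["ContosoControls", "SharedTables", "SalesApp", "ServiceApp"]. The code component solution comes first.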

More information: Package and distribute extensions using solutions.

Code components and automated build pipelines

In addition to manually building and deploying your code component solutions, you can also build and package your code components using automated build pipelines.

- If you're using Azure DevOps, you can use the Microsoft Power Platform Build Tool for Azure DevOps.

- If you're using GitHub, you can use the Power Platform GitHub Actions.

Some advantages to using automated build pipelines are:

- They're time-efficient - Removing manual tasks makes building and packaging your component quicker, so it can be done more regularly, such as every time a change is checked in.

- They're repeatable - Once the build process is automated, it's performed the same way every time and therefore doesn't depend on which member of the team performs the build.

- They offer versioning consistency - When the build pipeline automatically sets the version of your code component and the solution, you can have confidence that when a new build is created, it's versioned consistently relative to previous versions. This facilitates tracking of features, bug fixes, and deployments.

- They're maintainable - Since everything that's needed to build your solution is contained in source control, you can always create new branches and development environments by checking out the code. When you're ready to deploy an update, pull requests can be merged into downstream branches. More information: Branch and merging strategy with Microsoft Power Platform.

As described above, there are two approaches to solution management of code components: segmented solution projects, or a single mixed solution containing other artifacts such as model-driven apps, canvas apps, and tables to which the code components are added.

If you're using the segmented code component solution project, you can build the project inside an Azure DevOps pipeline (using Microsoft Power Platform Build Tools) or a GitHub workflow (using GitHub Actions for Microsoft Power Platform). Each code component pcfproj folder is added to source control (excluding the generated, out, and node_modules folders), a cdsproj project is created, and each code component is referenced using pac solution add-reference before the cdsproj is also added to source control. The pipeline would then perform the following:

- Update the solution version in the Solution.xml file to match the version of your build. The solution version is held in the Version element under ImportExportXml/SolutionManifest. See the versioning section below for details on versioning strategies.

- Update the code component version in the ControlManifest.Input.xml file. The version is stored in the manifest/control/version attribute. This should be done for all of the pcfproj projects that are built.

- Run an MSBuild task with the argument /restore /p:configuration=Release using a wildcard *.cdsproj. This builds all of the cdsproj projects and their referenced pcfproj projects.

- Collect the built solution zip into the pipeline release artifacts. This solution contains all code components included as references in the cdsproj.
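The first two steps amount to rewriting one value in each file. The following TypeScript sketch does this on the file contents as strings. It assumes two things:

- Solution.xml contains a single Version element, the one under ImportExportXml/SolutionManifest.
- The version is an attribute of the control start tag, as in the ControlManifest.Input.xml excerpt in the versioning section.

The function names are hypothetical. Reading and writing the files is left to the pipeline step that calls them.

```typescript
// Hypothetical helpers for the two version-stamping steps. They edit file
// contents as strings; reading and writing the files is up to the caller.

// Solution.xml: <ImportExportXml ...><SolutionManifest>...<Version>1.0.0.0</Version>
// Assumes the SolutionManifest Version element is the only <Version> element.
function setSolutionVersion(solutionXml: string, version: string): string {
  if (!/^\d+\.\d+\.\d+\.\d+$/.test(version)) {
    throw new Error(`Solution version must be MAJOR.MINOR.BUILD.REVISION: ${version}`);
  }
  const versionElement = /<Version>[^<]*<\/Version>/;
  if (!versionElement.test(solutionXml)) {
    throw new Error("No <Version> element found in Solution.xml");
  }
  return solutionXml.replace(versionElement, () => `<Version>${version}</Version>`);
}

// ControlManifest.Input.xml: <manifest><control ... version="1.0.0" ...>
function setControlVersion(manifestXml: string, version: string): string {
  if (!/^\d+\.\d+\.\d+$/.test(version)) {
    throw new Error(`Control version must be MAJOR.MINOR.PATCH: ${version}`);
  }
  // Only touch the version attribute inside the <control> start tag.
  const controlVersion = /(<control\b[^>]*?\sversion=")[^"]*(")/;
  if (!controlVersion.test(manifestXml)) {
    throw new Error("No version attribute found on <control>");
  }
  return manifestXml.replace(
    controlVersion,
    (_match: string, before: string, after: string) => `${before}${version}${after}`
  );
}
```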

If you're using a mixed solution that contains other components in addition to code components, then this would be extracted into source control using SolutionPackager as described above. Your pipeline would then perform the following:

- Update the solution and code component versions in the same way as described in the first two steps above.

- Install Microsoft Power Platform Build Tools into the build pipeline using the PowerPlatformToolInstaller task.

- Restore node_modules using an Npm task with the command ci.

- Build code components in production release mode using an Npm task with a customCommand parameter of run build -- --buildMode production.

- Copy the output of the build into the SolutionPackager folder for the corresponding control.

- Package the solution using the Power Platform Build Tools PowerPlatformPackSolution task.

- Collect the built solution zip into the pipeline release artifacts.

It's recommended that you commit your unpacked solution metadata to source control in its unmanaged form, so that a development environment can be created at any later stage. (If only managed solution metadata is committed, it's hard to create new development environments.) You have two options to do this:

- Commit both managed and unmanaged using the /packagetype:Both option of SolutionPackager. This allows packing in either managed or unmanaged mode, but has the disadvantage of duplication in source control: changes often appear in multiple XML files, in both the managed and unmanaged versions.

- Only commit unmanaged to source control (resulting in cleaner change sets) and then, inside the build pipeline, import the packaged solution into a build environment so that it can be exported as managed, converting it into a managed deployment artifact.

Versioning and deploying updates

When deploying and updating your code components, it's important to have a consistent versioning strategy so that you can:

- Track which version is deployed against the features/fixes it contains.

- Ensure canvas apps can detect that they need to update to the latest version.

- Ensure that model-driven apps will invalidate their cache and load the new version.

A common versioning strategy is semantic versioning, which has the format: MAJOR.MINOR.PATCH.

Incrementing the PATCH version

The ControlManifest.Input.xml stores the code component version in the control element:

<control namespace="..." constructor="..." version="1.0.0" display-name-key="..." description-key="..." control-type="...">

When deploying an update to a code component, the version in the ControlManifest.Input.xml must at minimum have its PATCH (the last part of the version) incremented for the change to be detected. This can be done either manually by editing the version attribute directly or by using the following command to advance the PATCH version by one:

pac pcf version --strategy manifest

Alternatively, to specify an exact value for the PATCH part (for example, as part of an automated build pipeline) use:

pac pcf version --patchversion <PATCH VERSION>

More information: pac pcf version.
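To illustrate what these two commands do to the version attribute, here's a TypeScript sketch of the same arithmetic. It shows the effect only and isn't the PAC CLI's implementation. The function names are hypothetical.

```typescript
// Hypothetical helpers mimicking the effect on a MAJOR.MINOR.PATCH string.
function parseControlVersion(version: string): [number, number, number] {
  const match = /^(\d+)\.(\d+)\.(\d+)$/.exec(version);
  if (!match) {
    throw new Error(`Expected MAJOR.MINOR.PATCH, got "${version}"`);
  }
  return [Number(match[1]), Number(match[2]), Number(match[3])];
}

// Like `pac pcf version --strategy manifest`: advance PATCH by one.
function bumpPatch(version: string): string {
  const [major, minor, patch] = parseControlVersion(version);
  return `${major}.${minor}.${patch + 1}`;
}

// Like `pac pcf version --patchversion <PATCH VERSION>`: set PATCH exactly.
function withPatch(version: string, patch: number): string {
  if (!Number.isInteger(patch) || patch < 0) {
    throw new Error(`Invalid PATCH value: ${patch}`);
  }
  const [major, minor] = parseControlVersion(version);
  return `${major}.${minor}.${patch}`;
}
```

The parts are parsed as numbers, so bumpPatch("1.2.9") gives "1.2.10" rather than a string concatenation.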

When to increment the MAJOR and MINOR version

It's recommended that the MAJOR and MINOR parts of the code component's version are kept in sync with those of the Dataverse solution that's distributed. For example:

- Your deployed code component version is 1.0.0 and your solution version is 1.0.0.0.

- You make a small update to your code component and increment the PATCH version on the code component to 1.0.1 using pac pcf version --strategy manifest.

- When packaging the code component for deployment, the solution version in Solution.xml is updated to 1.0.0.1, or the version is incremented automatically when you export the solution manually.

- You make significant changes to your solution, such that you want to increase the MAJOR.MINOR version to 1.1 (solution version 1.1.0.0).

- In this case, the code component version can also be updated to 1.1.0.
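If you want a pipeline to enforce this recommendation, a check can compare the first two parts of each version. This TypeScript sketch is hypothetical. It assumes a three-part control version and a four-part solution version, as in the example above.

```typescript
// Hypothetical check: MAJOR.MINOR of the control matches MAJOR.MINOR of the solution.
function majorMinorInSync(controlVersion: string, solutionVersion: string): boolean {
  const control = /^(\d+)\.(\d+)\.\d+$/.exec(controlVersion);
  const solution = /^(\d+)\.(\d+)\.\d+\.\d+$/.exec(solutionVersion);
  if (!control || !solution) {
    throw new Error("Expected MAJOR.MINOR.PATCH and MAJOR.MINOR.BUILD.REVISION");
  }
  // Compare numerically so that "01" and "1" are treated as equal.
  return Number(control[1]) === Number(solution[1]) && Number(control[2]) === Number(solution[2]);
}
```

With the versions from the example, majorMinorInSync("1.0.1", "1.0.0.1") is true. After the solution moves to 1.1.0.0, it stays false until the code component is also updated to 1.1.0.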

A Dataverse solution version has four parts and can be thought of as having the following structure: MAJOR.MINOR.BUILD.REVISION.

If you're using Azure DevOps, you can set your build pipeline versioning using the Build and Rev environment variables (Run (build) number - Azure Pipelines), and use a PowerShell script similar to the approach described in the article Use PowerShell scripts to customize pipelines.

| Semantic version part | ControlManifest.Input.xml version part (MAJOR.MINOR.PATCH) | Solution.xml version part (MAJOR.MINOR.BUILD.REVISION) | Azure DevOps build version |
|---|---|---|---|
| MAJOR | MAJOR | MAJOR | Set using pipeline variable $(majorVersion) or use the value last committed to source control. |
| MINOR | MINOR | MINOR | Set using pipeline variable $(minorVersion) or use the value last committed to source control. |
| Not applicable | Not applicable | BUILD | $(Build.BuildId) |
| PATCH | PATCH | REVISION | $(Rev:r) |
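The table can be read as a recipe for composing both version strings. In the TypeScript sketch below:

- The input fields stand in for $(majorVersion), $(minorVersion), $(Build.BuildId) and $(Rev:r).
- How those values are passed to the script is up to your pipeline.
- The interface and function names are hypothetical.

```typescript
// Hypothetical inputs standing in for the pipeline values in the table.
interface PipelineVersionInputs {
  major: number;    // $(majorVersion) or last committed value
  minor: number;    // $(minorVersion) or last committed value
  buildId: number;  // $(Build.BuildId)
  revision: number; // $(Rev:r)
}

interface StampedVersions {
  controlVersion: string;  // ControlManifest.Input.xml: MAJOR.MINOR.PATCH
  solutionVersion: string; // Solution.xml: MAJOR.MINOR.BUILD.REVISION
}

function versionsFromPipeline(inputs: PipelineVersionInputs): StampedVersions {
  const parts = [inputs.major, inputs.minor, inputs.buildId, inputs.revision];
  if (!parts.every((n) => Number.isInteger(n) && n >= 0)) {
    throw new Error("Version parts must be non-negative integers");
  }
  return {
    // PATCH in the manifest maps to REVISION in the solution.
    controlVersion: `${inputs.major}.${inputs.minor}.${inputs.revision}`,
    solutionVersion: `${inputs.major}.${inputs.minor}.${inputs.buildId}.${inputs.revision}`,
  };
}
```

For example, { major: 1, minor: 1, buildId: 1234, revision: 3 } produces a control version of 1.1.3 and a solution version of 1.1.1234.3.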

Canvas apps ALM considerations

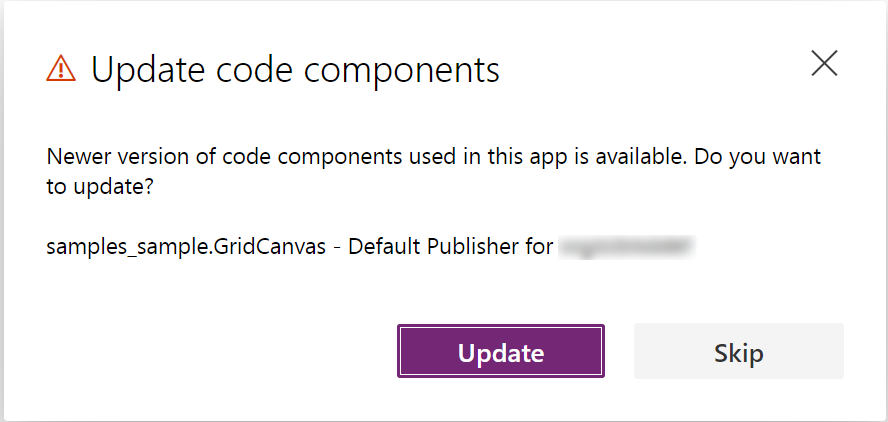

Consuming code components in canvas apps is different from doing so in model-driven apps. Code components must be explicitly added to the app by selecting Get more components on the Insert panel. Once the code component is added to the canvas app, it's included in the app definition itself. To update to a new version of the code component after it's deployed (and the control version incremented), the app maker must first open the app in Power Apps Studio and select Update when prompted on the Update code components dialog. The app must then be saved and published for the new version to be used when the app is played by users.

If the app isn't updated or Skip is selected, the app continues to use the older version of the code component, even though that version no longer exists in the environment because it's been overwritten by the newer version.

Because the app contains a copy of the code component, it's possible to have different versions of a code component running side by side in a single environment, in different canvas apps. However, you can't have different versions of a code component running side by side in the same app. App makers are encouraged to update their apps to the latest version of code components when a new version is deployed.

Note

Although, at this time, you can import a canvas app without the matching code component being deployed to that environment, it's recommended that you always ensure apps are updated to use the latest version of the code components and that the same version is deployed to that environment first or as part of the same solution.

Related articles

Application lifecycle management (ALM) with Microsoft Power Platform

Power Apps component framework API reference

Create your first component

Debug code components