Deploy a custom speech model

In this article, you learn how to deploy an endpoint for a custom speech model. Except for batch transcription, you must deploy a custom endpoint to use a custom speech model.

Tip

A hosted deployment endpoint isn't required to use custom speech with the Batch transcription API. You can conserve resources if the custom speech model is only used for batch transcription. For more information, see Speech service pricing.

You can deploy an endpoint for a base or custom model, and then update the endpoint later to use a better trained model.

Note

Endpoints used by F0 Speech resources are deleted after seven days.

Add a deployment endpoint

To create a custom endpoint, follow these steps:

Sign in to the Speech Studio.

Select Custom speech > Your project name > Deploy models.

If this is your first endpoint, the table doesn't list any endpoints yet. After you create an endpoint, you use this page to track each deployed endpoint.

Select Deploy model to start the new endpoint wizard.

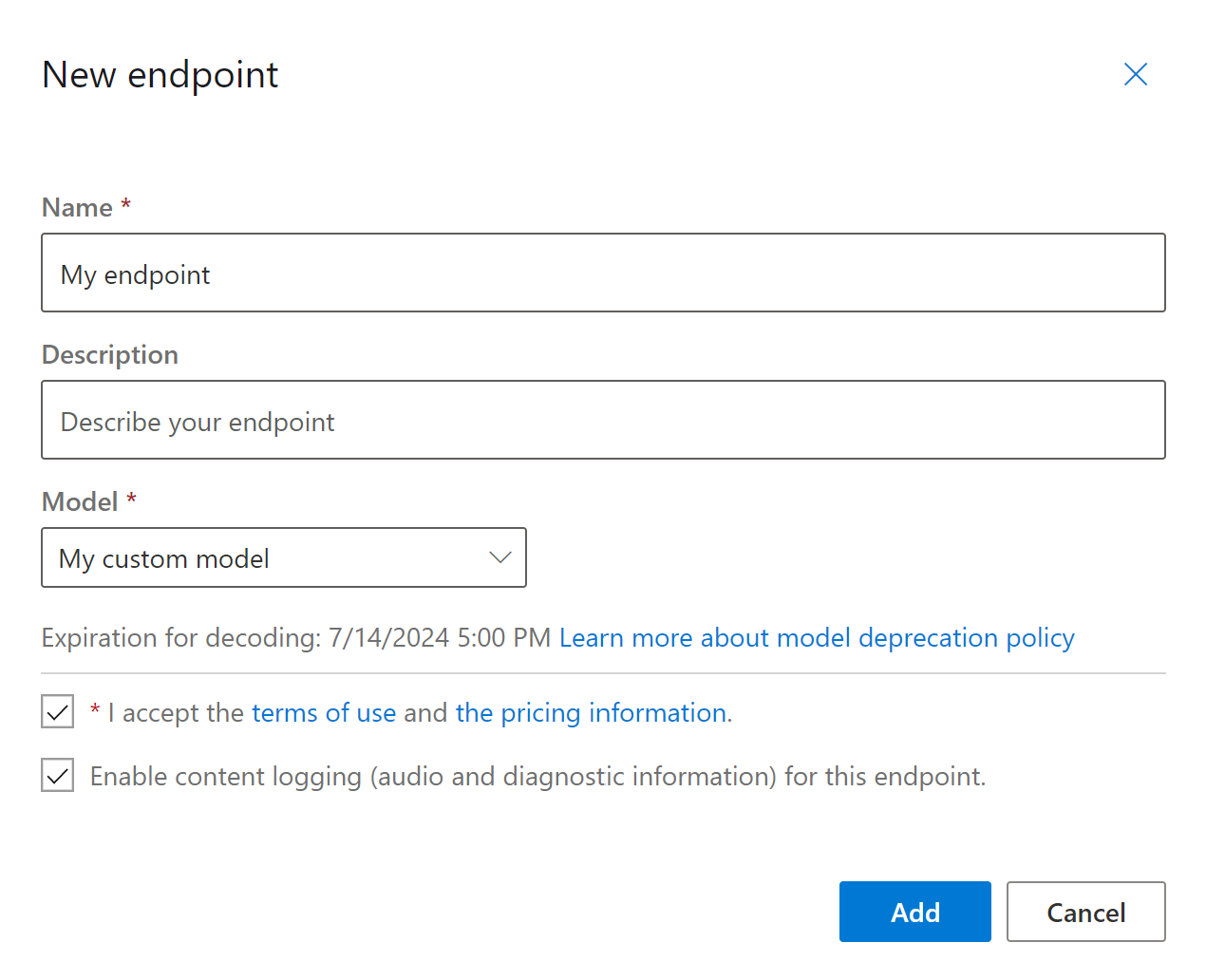

On the New endpoint page, enter a name and description for your custom endpoint.

Select the custom model that you want to associate with the endpoint.

Optionally, you can check the box to enable audio and diagnostic logging of the endpoint's traffic.

Select Add to save and deploy the endpoint.

On the main Deploy models page, details about the new endpoint are displayed in a table, such as name, description, status, and expiration date. It can take up to 30 minutes to instantiate a new endpoint that uses your custom models. When the status of the deployment changes to Succeeded, the endpoint is ready to use.

Important

Take note of the model expiration date. This is the last date that you can use your custom model for speech recognition. For more information, see Model and endpoint lifecycle.

Select the endpoint link to view information specific to it, such as the endpoint key, endpoint URL, and sample code.

To create an endpoint and deploy a model, use the spx csr endpoint create command. Construct the request parameters according to the following instructions:

- Set the project parameter to the ID of an existing project. This is recommended so that you can also view and manage the endpoint in Speech Studio. You can run the spx csr project list command to get available projects.

- Set the required model parameter to the ID of the model that you want deployed to the endpoint.

- Set the required language parameter. The endpoint locale must match the locale of the model. The locale can't be changed later. The Speech CLI language parameter corresponds to the locale property in the JSON request and response.

- Set the required name parameter. This is the name that is displayed in the Speech Studio. The Speech CLI name parameter corresponds to the displayName property in the JSON request and response.

- Optionally, set the logging parameter. Set it to enabled to turn on audio and diagnostic logging of the endpoint's traffic. Logging is disabled by default.
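The parameter rules above can be collected in a small shell function. This is a minimal sketch, not part of the Speech CLI: the create_endpoint name and its argument order are hypothetical, it passes only the flags named in the list above (adding --logging enabled when requested), and it assumes spx is on your PATH.

```shell
# Hypothetical wrapper (not part of the Speech CLI) around
# "spx csr endpoint create" that applies the parameter rules above.
# Usage: create_endpoint PROJECT_ID MODEL_ID LOCALE NAME [enabled]
create_endpoint() {
  local project="$1" model="$2" locale="$3" name="$4" logging="${5:-}"

  # model, language, and name are required. Double-check the locale:
  # it must match the model's locale and can't be changed later.
  if [ -z "$model" ] || [ -z "$locale" ] || [ -z "$name" ]; then
    echo "model, locale, and name are required" >&2
    return 1
  fi

  # Reuse the positional parameters as a portable argument list.
  set -- csr endpoint create --api-version v3.2 \
    --model "$model" --language "$locale" --name "$name"

  # project is optional but recommended, so the endpoint shows up in Speech Studio.
  if [ -n "$project" ]; then
    set -- "$@" --project "$project"
  fi

  # Audio and diagnostic logging is opt-in.
  if [ "$logging" = "enabled" ]; then
    set -- "$@" --logging enabled
  fi

  spx "$@"
}

# Example:
# create_endpoint YourProjectId YourModelId en-US "My Endpoint" enabled
```

Building the argument list with set -- keeps values that contain spaces, such as "My Endpoint", intact without relying on Bash arrays.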

Here's an example Speech CLI command to create an endpoint and deploy a model:

spx csr endpoint create --api-version v3.2 --project YourProjectId --model YourModelId --name "My Endpoint" --description "My Endpoint Description" --language "en-US"

You should receive a response body in the following format:

{

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"model": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/models/9e240dc1-3d2d-4ac9-98ec-1be05ba0e9dd"

},

"links": {

"logs": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/a07164e8-22d1-4eb7-aa31-bf6bb1097f37/files/logs",

"restInteractive": "https://eastus.stt.speech.microsoft.com/speech/recognition/interactive/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"restConversation": "https://eastus.stt.speech.microsoft.com/speech/recognition/conversation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"restDictation": "https://eastus.stt.speech.microsoft.com/speech/recognition/dictation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketInteractive": "wss://eastus.stt.speech.microsoft.com/speech/recognition/interactive/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketConversation": "wss://eastus.stt.speech.microsoft.com/speech/recognition/conversation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketDictation": "wss://eastus.stt.speech.microsoft.com/speech/recognition/dictation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37"

},

"project": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/projects/0198f569-cc11-4099-a0e8-9d55bc3d0c52"

},

"properties": {

"loggingEnabled": true

},

"lastActionDateTime": "2024-07-15T16:29:36Z",

"status": "NotStarted",

"createdDateTime": "2024-07-15T16:29:36Z",

"locale": "en-US",

"displayName": "My Endpoint",

"description": "My Endpoint Description"

}

The top-level self property in the response body is the endpoint's URI. Use this URI to get details about the endpoint's project, model, and logs. You also use this URI to update the endpoint.
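Speech CLI commands such as spx csr endpoint update take the endpoint ID rather than its full URI. As a sketch, assuming the ID is always the last path segment of the self URI (as in the example response above), a hypothetical helper can extract it with plain parameter expansion:

```shell
# Hypothetical helper: print the endpoint ID, that is, the last path segment
# of the endpoint's top-level "self" URI, for options such as --endpoint.
endpoint_id_from_uri() {
  local uri="${1%%[?]*}"   # drop a query string, if any
  uri="${uri%/}"           # drop a trailing slash, if any
  printf '%s\n' "${uri##*/}"
}

# Example (replace the URI with the "self" value from your response):
# spx csr endpoint update --api-version v3.2 \
#   --endpoint "$(endpoint_id_from_uri "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/a07164e8-22d1-4eb7-aa31-bf6bb1097f37")" \
#   --model YourModelId
```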

For Speech CLI help with endpoints, run the following command:

spx help csr endpoint

To create an endpoint and deploy a model, use the Endpoints_Create operation of the Speech to text REST API. Construct the request body according to the following instructions:

- Set the project property to the URI of an existing project. This is recommended so that you can also view and manage the endpoint in Speech Studio. You can make a Projects_List request to get available projects.

- Set the required model property to the URI of the model that you want deployed to the endpoint.

- Set the required locale property. The endpoint locale must match the locale of the model. The locale can't be changed later.

- Set the required displayName property. This is the name that is displayed in the Speech Studio.

- Optionally, set the loggingEnabled property within properties. Set it to true to enable audio and diagnostic logging of the endpoint's traffic. The default is false.
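If you script this request, a small function can assemble the body from variables. This is a sketch under stated assumptions: build_create_body is a hypothetical name, it always includes the recommended project property, and it inserts values without JSON escaping, so names and descriptions must not contain double quotes or backslashes. SPEECH_KEY and SPEECH_REGION in the commented example are placeholder environment variables.

```shell
# Hypothetical helper: print an Endpoints_Create request body built from the
# properties described above. Values are inserted without JSON escaping, so
# this assumes they contain no double quotes or backslashes.
# Usage: build_create_body PROJECT_URI MODEL_URI LOCALE DISPLAY_NAME DESCRIPTION [true|false]
build_create_body() {
  local project_uri="$1" model_uri="$2" locale="$3" name="$4" description="$5" logging="${6:-false}"

  # loggingEnabled must be a JSON boolean, so reject anything else.
  case "$logging" in
    true | false) ;;
    *) echo "logging must be true or false" >&2; return 1 ;;
  esac

  cat <<EOF
{
  "project": { "self": "${project_uri}" },
  "model": { "self": "${model_uri}" },
  "locale": "${locale}",
  "displayName": "${name}",
  "description": "${description}",
  "properties": { "loggingEnabled": ${logging} }
}
EOF
}

# Example: pipe the body into the Endpoints_Create request.
# build_create_body \
#   "https://${SPEECH_REGION}.api.cognitive.microsoft.com/speechtotext/v3.2/projects/YourProjectId" \
#   "https://${SPEECH_REGION}.api.cognitive.microsoft.com/speechtotext/v3.2/models/YourModelId" \
#   en-US "My Endpoint" "My Endpoint Description" true |
#   curl -s -X POST -H "Ocp-Apim-Subscription-Key: ${SPEECH_KEY}" \
#     -H "Content-Type: application/json" --data-binary @- \
#     "https://${SPEECH_REGION}.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints"
```

curl's --data-binary @- option reads the request body from standard input, which keeps the JSON out of the command line.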

Make an HTTP POST request using the URI as shown in the following Endpoints_Create example. Replace YourSubscriptionKey with your Speech resource key, replace YourServiceRegion with your Speech resource region, and set the request body properties as previously described.

curl -v -X POST -H "Ocp-Apim-Subscription-Key: YourSubscriptionKey" -H "Content-Type: application/json" -d '{

"project": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/projects/0198f569-cc11-4099-a0e8-9d55bc3d0c52"

},

"properties": {

"loggingEnabled": true

},

"displayName": "My Endpoint",

"description": "My Endpoint Description",

"model": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/models/base/ae8d1643-53e4-4554-be4c-221dcfb471c5"

},

"locale": "en-US"

}' "https://YourServiceRegion.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints"

You should receive a response body in the following format:

{

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"model": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/models/9e240dc1-3d2d-4ac9-98ec-1be05ba0e9dd"

},

"links": {

"logs": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/a07164e8-22d1-4eb7-aa31-bf6bb1097f37/files/logs",

"restInteractive": "https://eastus.stt.speech.microsoft.com/speech/recognition/interactive/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"restConversation": "https://eastus.stt.speech.microsoft.com/speech/recognition/conversation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"restDictation": "https://eastus.stt.speech.microsoft.com/speech/recognition/dictation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketInteractive": "wss://eastus.stt.speech.microsoft.com/speech/recognition/interactive/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketConversation": "wss://eastus.stt.speech.microsoft.com/speech/recognition/conversation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketDictation": "wss://eastus.stt.speech.microsoft.com/speech/recognition/dictation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37"

},

"project": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/projects/0198f569-cc11-4099-a0e8-9d55bc3d0c52"

},

"properties": {

"loggingEnabled": true

},

"lastActionDateTime": "2024-07-15T16:29:36Z",

"status": "NotStarted",

"createdDateTime": "2024-07-15T16:29:36Z",

"locale": "en-US",

"displayName": "My Endpoint",

"description": "My Endpoint Description"

}

The top-level self property in the response body is the endpoint's URI. Use this URI to get details about the endpoint's project, model, and logs. You also use this URI to update or delete the endpoint.
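Because a new endpoint starts in the NotStarted status and can take up to 30 minutes to become ready, a script might poll the endpoint's self URI with GET until the status reaches Succeeded. The following is a minimal sketch, assuming curl is installed, the SPEECH_KEY environment variable holds your Speech resource key, and the status values NotStarted, Running, Succeeded, and Failed. The function names are hypothetical, and the sed-based parsing only targets the response format shown here.

```shell
# Hypothetical helpers for polling a new endpoint until it's ready.
# Assumes curl is installed and SPEECH_KEY holds your Speech resource key.

# GET the endpoint resource (its top-level "self" URI).
get_endpoint() {
  curl -s -H "Ocp-Apim-Subscription-Key: ${SPEECH_KEY}" "$1"
}

# Print the first "status" value found on stdin. This is plain text matching
# against the response format shown above; a JSON parser such as jq is sturdier.
extract_status() {
  sed -n 's/.*"status"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' | head -n 1
}

# Usage: wait_for_endpoint SELF_URI [INTERVAL_SECONDS] [MAX_ATTEMPTS]
# Returns 0 when the status is Succeeded, 1 when it's Failed, and 2 if the
# attempts run out. The defaults (60 seconds x 40) cover the up-to-30-minute
# deployment time.
wait_for_endpoint() {
  local uri="$1" interval="${2:-60}" max_attempts="${3:-40}" attempt=1 status
  while [ "$attempt" -le "$max_attempts" ]; do
    status="$(get_endpoint "$uri" | extract_status)"
    echo "attempt ${attempt}: ${status:-unknown}" >&2
    case "$status" in
      Succeeded) return 0 ;;
      Failed) return 1 ;;
    esac
    attempt=$((attempt + 1))
    sleep "$interval"
  done
  return 2
}

# Example:
# wait_for_endpoint "https://YourServiceRegion.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/YourEndpointId"
```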

Change model and redeploy endpoint

An endpoint can be updated to use another model that was created by the same Speech resource. As previously mentioned, you must update the endpoint's model before the model expires.

To use a new model and redeploy the custom endpoint:

- Sign in to the Speech Studio.

- Select Custom speech > Your project name > Deploy models.

- Select the link to an endpoint by name, and then select Change model.

- Select the new model that you want the endpoint to use.

- Select Done to save and redeploy the endpoint.

To redeploy the custom endpoint with a new model, use the spx csr endpoint update command. Construct the request parameters according to the following instructions:

- Set the required endpoint parameter to the ID of the endpoint that you want to redeploy.

- Set the required model parameter to the ID of the model that you want deployed to the endpoint.

Here's an example Speech CLI command that redeploys the custom endpoint with a new model:

spx csr endpoint update --api-version v3.2 --endpoint YourEndpointId --model YourModelId

You should receive a response body in the following format:

{

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"model": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/models/9e240dc1-3d2d-4ac9-98ec-1be05ba0e9dd"

},

"links": {

"logs": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/a07164e8-22d1-4eb7-aa31-bf6bb1097f37/files/logs",

"restInteractive": "https://eastus.stt.speech.microsoft.com/speech/recognition/interactive/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"restConversation": "https://eastus.stt.speech.microsoft.com/speech/recognition/conversation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"restDictation": "https://eastus.stt.speech.microsoft.com/speech/recognition/dictation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketInteractive": "wss://eastus.stt.speech.microsoft.com/speech/recognition/interactive/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketConversation": "wss://eastus.stt.speech.microsoft.com/speech/recognition/conversation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketDictation": "wss://eastus.stt.speech.microsoft.com/speech/recognition/dictation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37"

},

"project": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/projects/0198f569-cc11-4099-a0e8-9d55bc3d0c52"

},

"properties": {

"loggingEnabled": true

},

"lastActionDateTime": "2024-07-15T16:30:12Z",

"status": "Succeeded",

"createdDateTime": "2024-07-15T16:29:36Z",

"locale": "en-US",

"displayName": "My Endpoint",

"description": "My Endpoint Description"

}

For Speech CLI help with endpoints, run the following command:

spx help csr endpoint

To redeploy the custom endpoint with a new model, use the Endpoints_Update operation of the Speech to text REST API. Construct the request body according to the following instructions:

- Set the model property to the URI of the model that you want deployed to the endpoint.

Make an HTTP PATCH request using the URI as shown in the following example. Replace YourSubscriptionKey with your Speech resource key, replace YourServiceRegion with your Speech resource region, replace YourEndpointId with your endpoint ID, and set the request body properties as previously described.

curl -v -X PATCH -H "Ocp-Apim-Subscription-Key: YourSubscriptionKey" -H "Content-Type: application/json" -d '{

"model": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/models/9e240dc1-3d2d-4ac9-98ec-1be05ba0e9dd"

}

}' "https://YourServiceRegion.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/YourEndpointId"

You should receive a response body in the following format:

{

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"model": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/models/9e240dc1-3d2d-4ac9-98ec-1be05ba0e9dd"

},

"links": {

"logs": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/a07164e8-22d1-4eb7-aa31-bf6bb1097f37/files/logs",

"restInteractive": "https://eastus.stt.speech.microsoft.com/speech/recognition/interactive/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"restConversation": "https://eastus.stt.speech.microsoft.com/speech/recognition/conversation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"restDictation": "https://eastus.stt.speech.microsoft.com/speech/recognition/dictation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketInteractive": "wss://eastus.stt.speech.microsoft.com/speech/recognition/interactive/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketConversation": "wss://eastus.stt.speech.microsoft.com/speech/recognition/conversation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketDictation": "wss://eastus.stt.speech.microsoft.com/speech/recognition/dictation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37"

},

"project": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/projects/0198f569-cc11-4099-a0e8-9d55bc3d0c52"

},

"properties": {

"loggingEnabled": true

},

"lastActionDateTime": "2024-07-15T16:30:12Z",

"status": "Succeeded",

"createdDateTime": "2024-07-15T16:29:36Z",

"locale": "en-US",

"displayName": "My Endpoint",

"description": "My Endpoint Description"

}

The redeployment takes several minutes to complete. In the meantime, your endpoint uses the previous model without interruption of service.
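When scripting the Endpoints_Update request, note that the REST body takes the model's URI while the Speech CLI takes its ID. The following sketch builds a model URI from a region and an ID, following the URI shapes in this article's examples (custom models under /models/{id}, base models under /models/base/{id}), and prints the PATCH body. The function names and the SPEECH_KEY variable are hypothetical.

```shell
# Hypothetical helper: build a model URI in the shapes used by this article's
# examples: custom models under /models/{id}, base models under /models/base/{id}.
# Usage: model_uri REGION MODEL_ID [custom|base]
model_uri() {
  local region="$1" model_id="$2" kind="${3:-custom}"
  local base="https://${region}.api.cognitive.microsoft.com/speechtotext/v3.2/models"
  if [ "$kind" = "base" ]; then
    printf '%s/base/%s\n' "$base" "$model_id"
  else
    printf '%s/%s\n' "$base" "$model_id"
  fi
}

# Print an Endpoints_Update (PATCH) body that switches the endpoint's model.
build_update_body() {
  printf '{ "model": { "self": "%s" } }\n' "$1"
}

# Example (SPEECH_KEY is a placeholder environment variable):
# build_update_body "$(model_uri eastus YourModelId)" |
#   curl -s -X PATCH -H "Ocp-Apim-Subscription-Key: ${SPEECH_KEY}" \
#     -H "Content-Type: application/json" --data-binary @- \
#     "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/YourEndpointId"
```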

View logging data

Logging data is available for export if you configured it while creating the endpoint.

To download the endpoint logs:

- Sign in to the Speech Studio.

- Select Custom speech > Your project name > Deploy models.

- Select the link by endpoint name.

- Under Content logging, select Download log.

To get logs for an endpoint, use the spx csr endpoint list command. Construct the request parameters according to the following instructions:

- Set the required endpoint parameter to the ID of the endpoint whose logs you want to get.

Here's an example Speech CLI command that gets logs for an endpoint:

spx csr endpoint list --api-version v3.2 --endpoint YourEndpointId

The response body contains the location of each log file, along with more details.

To get logs for an endpoint, start by using the Endpoints_Get operation of the Speech to text REST API.

Make an HTTP GET request using the URI as shown in the following example. Replace YourEndpointId with your endpoint ID, replace YourSubscriptionKey with your Speech resource key, and replace YourServiceRegion with your Speech resource region.

curl -v -X GET "https://YourServiceRegion.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/YourEndpointId" -H "Ocp-Apim-Subscription-Key: YourSubscriptionKey"

You should receive a response body in the following format:

{

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"model": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/models/9e240dc1-3d2d-4ac9-98ec-1be05ba0e9dd"

},

"links": {

"logs": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/a07164e8-22d1-4eb7-aa31-bf6bb1097f37/files/logs",

"restInteractive": "https://eastus.stt.speech.microsoft.com/speech/recognition/interactive/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"restConversation": "https://eastus.stt.speech.microsoft.com/speech/recognition/conversation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"restDictation": "https://eastus.stt.speech.microsoft.com/speech/recognition/dictation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketInteractive": "wss://eastus.stt.speech.microsoft.com/speech/recognition/interactive/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketConversation": "wss://eastus.stt.speech.microsoft.com/speech/recognition/conversation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37",

"webSocketDictation": "wss://eastus.stt.speech.microsoft.com/speech/recognition/dictation/cognitiveservices/v1?cid=a07164e8-22d1-4eb7-aa31-bf6bb1097f37"

},

"project": {

"self": "https://eastus.api.cognitive.microsoft.com/speechtotext/v3.2/projects/0198f569-cc11-4099-a0e8-9d55bc3d0c52"

},

"properties": {

"loggingEnabled": true

},

"lastActionDateTime": "2024-07-15T16:30:12Z",

"status": "Succeeded",

"createdDateTime": "2024-07-15T16:29:36Z",

"locale": "en-US",

"displayName": "My Endpoint",

"description": "My Endpoint Description"

}

Make an HTTP GET request using the "logs" URI from the previous response body. Replace YourEndpointId with your endpoint ID, replace YourSubscriptionKey with your Speech resource key, and replace YourServiceRegion with your Speech resource region.

curl -v -X GET "https://YourServiceRegion.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/YourEndpointId/files/logs" -H "Ocp-Apim-Subscription-Key: YourSubscriptionKey"

The response body contains the location of each log file, along with more details.
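The two GET requests above can be chained in a script. This is a minimal sketch, assuming curl is installed and the SPEECH_KEY environment variable holds your Speech resource key. The function names are hypothetical, and the sed-based extraction of the "logs" link only targets the response format shown above (a JSON parser such as jq is more robust).

```shell
# Hypothetical helpers for listing endpoint log files with curl.
# Assumes SPEECH_KEY holds your Speech resource key.

# Print the "logs" link from an Endpoints_Get response read from stdin.
# Plain text matching on the response format shown above; jq is sturdier.
extract_logs_uri() {
  sed -n 's/.*"logs"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' | head -n 1
}

# GET the endpoint, follow its "logs" link, and print the log file listing.
get_endpoint_logs() {
  local endpoint_uri="$1" logs_uri
  logs_uri="$(curl -s -H "Ocp-Apim-Subscription-Key: ${SPEECH_KEY}" "$endpoint_uri" | extract_logs_uri)"
  if [ -z "$logs_uri" ]; then
    echo "no logs link found in the endpoint response" >&2
    return 1
  fi
  curl -s -H "Ocp-Apim-Subscription-Key: ${SPEECH_KEY}" "$logs_uri"
}

# Example:
# get_endpoint_logs "https://YourServiceRegion.api.cognitive.microsoft.com/speechtotext/v3.2/endpoints/YourEndpointId"
```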

Logging data is available on Microsoft-owned storage for 30 days, and then it's removed. If your own storage account is linked to the Azure AI services subscription, the logging data isn't automatically deleted.