Use a custom container to deploy a model to an online endpoint

APPLIES TO:

Azure CLI ml extension v2 (current)

Python SDK azure-ai-ml v2 (current)

Learn how to use a custom container to deploy a model to an online endpoint in Azure Machine Learning.

Custom container deployments can use web servers other than the default Python Flask server used by Azure Machine Learning. Users of these deployments can still take advantage of Azure Machine Learning's built-in monitoring, scaling, alerting, and authentication.

The following table lists various deployment examples that use custom containers such as TensorFlow Serving, TorchServe, Triton Inference Server, Plumber R package, and Azure Machine Learning Inference Minimal image.

| Example | Script (CLI) | Description |
|---|---|---|
| minimal/multimodel | deploy-custom-container-minimal-multimodel | Deploy multiple models to a single deployment by extending the Azure Machine Learning Inference Minimal image. |
| minimal/single-model | deploy-custom-container-minimal-single-model | Deploy a single model by extending the Azure Machine Learning Inference Minimal image. |
| mlflow/multideployment-scikit | deploy-custom-container-mlflow-multideployment-scikit | Deploy two MLflow models with different Python requirements to two separate deployments behind a single endpoint using the Azure Machine Learning Inference Minimal image. |
| r/multimodel-plumber | deploy-custom-container-r-multimodel-plumber | Deploy three regression models to one endpoint using the Plumber R package. |
| tfserving/half-plus-two | deploy-custom-container-tfserving-half-plus-two | Deploy a Half Plus Two model using a TensorFlow Serving custom container and the standard model registration process. |
| tfserving/half-plus-two-integrated | deploy-custom-container-tfserving-half-plus-two-integrated | Deploy a Half Plus Two model using a TensorFlow Serving custom container with the model integrated into the image. |
| torchserve/densenet | deploy-custom-container-torchserve-densenet | Deploy a single model using a TorchServe custom container. |
| triton/single-model | deploy-custom-container-triton-single-model | Deploy a Triton model using a custom container. |

This article focuses on serving a TensorFlow model with TensorFlow (TF) Serving.

Warning

Microsoft might not be able to help troubleshoot problems caused by a custom image. If you encounter problems, you might be asked to use the default image or one of the images Microsoft provides to see if the problem is specific to your image.

Prerequisites

Before following the steps in this article, make sure you have the following prerequisites:

An Azure Machine Learning workspace. If you don't have one, use the steps in the Quickstart: Create workspace resources article to create one.

The Azure CLI and the ml extension, or the Azure Machine Learning Python SDK v2:

To install the Azure CLI and extension, see Install, set up, and use the CLI (v2).

Important

The CLI examples in this article assume that you're using the Bash (or a compatible) shell, for example, on a Linux system or in Windows Subsystem for Linux.

To install the Python SDK v2, use the following command:

pip install azure-ai-ml azure-identity

To update an existing installation of the SDK to the latest version, use the following command:

pip install --upgrade azure-ai-ml azure-identity

For more information, see Install the Python SDK v2 for Azure Machine Learning.

You, or the service principal you use, must have Contributor access to the Azure resource group that contains your workspace. You have such a resource group if you configured your workspace using the quickstart article.

To deploy locally, you must have Docker Engine running on your machine. Deploying locally first is highly recommended because it helps you debug issues.

Download source code

To follow along with this tutorial, clone the source code from GitHub.

git clone https://github.com/Azure/azureml-examples --depth 1

cd azureml-examples/cli

Initialize environment variables

Define environment variables:

BASE_PATH=endpoints/online/custom-container/tfserving/half-plus-two

AML_MODEL_NAME=tfserving-mounted

MODEL_NAME=half_plus_two

MODEL_BASE_PATH=/var/azureml-app/azureml-models/$AML_MODEL_NAME/1

Download a TensorFlow model

Download and unzip a model that divides an input by two and adds 2 to the result:

wget https://aka.ms/half_plus_two-model -O $BASE_PATH/half_plus_two.tar.gz

tar -xvf $BASE_PATH/half_plus_two.tar.gz -C $BASE_PATH
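To make the model's behavior concrete, the following minimal Python sketch reproduces the arithmetic described above (divide by two, then add 2). The function name half_plus_two and the sample inputs are illustrative assumptions; they are not part of the downloaded model or the example repository.

```python
def half_plus_two(values):
    """Reference arithmetic for the model: x / 2 + 2 for each input x."""
    return [x / 2 + 2 for x in values]


# Hypothetical sample inputs, chosen only for illustration.
print(half_plus_two([1.0, 2.0, 5.0]))  # [2.5, 3.0, 4.5]
```

You can use a helper like this to sanity-check the predictions that the served model returns later in the article.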

Run a TF Serving image locally to test that it works

Use docker to run your image locally for testing:

docker run --rm -d -v $PWD/$BASE_PATH:$MODEL_BASE_PATH -p 8501:8501 \
 -e MODEL_BASE_PATH=$MODEL_BASE_PATH -e MODEL_NAME=$MODEL_NAME \
 --name="tfserving-test" docker.io/tensorflow/serving:latest

sleep 10

Check that you can send liveness and scoring requests to the image

First, check that the container is alive, meaning that the process inside the container is still running. You should get a 200 (OK) response.

curl -v http://localhost:8501/v1/models/$MODEL_NAME

Then, check that you can get predictions about unlabeled data:

curl --header "Content-Type: application/json" \
 --request POST \
 --data @$BASE_PATH/sample_request.json \
 http://localhost:8501/v1/models/$MODEL_NAME:predict
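If you prefer to script these checks, the following is a rough Python equivalent of the two curl calls, using only the standard library. The helper names are hypothetical, and the payload shape {"instances": [...]} is an assumption based on the TF Serving REST predict format; the article doesn't show the contents of sample_request.json.

```python
import json
import urllib.request


def build_liveness_request(model_name, host="localhost", port=8501):
    """GET request for the TF Serving model status route."""
    url = f"http://{host}:{port}/v1/models/{model_name}"
    return urllib.request.Request(url, method="GET")


def build_predict_request(model_name, instances, host="localhost", port=8501):
    """POST request for the predict route, with an assumed 'instances' payload."""
    url = f"http://{host}:{port}/v1/models/{model_name}:predict"
    body = json.dumps({"instances": instances}).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def send(request, timeout=10):
    """Send a prepared request; returns (HTTP status code, response body text)."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status, response.read().decode("utf-8")


# Usage while the local container is running:
#   send(build_liveness_request("half_plus_two"))
#   send(build_predict_request("half_plus_two", [1.0, 2.0, 5.0]))
```

Building the request separately from sending it lets you inspect the URL and body without a running container.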

Stop the image

Now that you've tested locally, stop the image:

docker stop tfserving-test

Deploy your online endpoint to Azure

Next, deploy your online endpoint to Azure.

Create a YAML file for your endpoint and deployment

You can configure your cloud deployment using YAML. Take a look at the sample YAML for this example:

tfserving-endpoint.yml

$schema: https://azuremlsdk2.blob.core.windows.net/latest/managedOnlineEndpoint.schema.json

name: tfserving-endpoint

auth_mode: aml_token

tfserving-deployment.yml

$schema: https://azuremlschemas.azureedge.net/latest/managedOnlineDeployment.schema.json
name: tfserving-deployment
endpoint_name: tfserving-endpoint
model:
  name: tfserving-mounted
  version: {{MODEL_VERSION}}
  path: ./half_plus_two
environment_variables:
  MODEL_BASE_PATH: /var/azureml-app/azureml-models/tfserving-mounted/{{MODEL_VERSION}}
  MODEL_NAME: half_plus_two
environment:
  #name: tfserving
  #version: 1
  image: docker.io/tensorflow/serving:latest
  inference_config:
    liveness_route:
      port: 8501
      path: /v1/models/half_plus_two
    readiness_route:
      port: 8501
      path: /v1/models/half_plus_two
    scoring_route:
      port: 8501
      path: /v1/models/half_plus_two:predict
instance_type: Standard_DS3_v2
instance_count: 1

There are a few important concepts to note in this YAML configuration:

Base image

The base image is specified as a parameter in environment; this example uses docker.io/tensorflow/serving:latest. If you inspect the container, you can see that this server uses ENTRYPOINT to start an entry point script, which reads environment variables such as MODEL_BASE_PATH and MODEL_NAME and exposes ports such as 8501. These details are specific to the chosen server. You can use this understanding of the server to determine how to define the deployment. For example, if you set the MODEL_BASE_PATH and MODEL_NAME environment variables in the deployment definition, the server (in this case, TF Serving) uses those values when it starts. Likewise, if you set the port for the routes to 8501 in the deployment definition, user requests to those routes are correctly routed to the TF Serving server.

This example is based on TF Serving, but you can use any container that stays up and responds to requests sent to the liveness, readiness, and scoring routes. Refer to the other examples to see how the Dockerfile is written (for example, using CMD instead of ENTRYPOINT) to create such containers.
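As a rough illustration of how the two environment variables relate to the routes in the deployment definition, the sketch below derives the model directory and REST paths from them. It assumes the stock TF Serving image loads the model from MODEL_BASE_PATH/MODEL_NAME and serves REST on port 8501; the function name tfserving_layout is hypothetical and not part of TF Serving or Azure Machine Learning.

```python
import posixpath


def tfserving_layout(env, rest_port=8501):
    """Derive the model directory and REST routes from TF Serving env vars.

    Assumption: the image's entry point script loads the model from
    MODEL_BASE_PATH/MODEL_NAME and exposes /v1/models/<MODEL_NAME>.
    """
    model_name = env["MODEL_NAME"]
    status_path = f"/v1/models/{model_name}"
    return {
        "model_dir": posixpath.join(env["MODEL_BASE_PATH"], model_name),
        "port": rest_port,
        "status_path": status_path,
        "predict_path": f"{status_path}:predict",
    }


layout = tfserving_layout({
    "MODEL_BASE_PATH": "/var/azureml-app/azureml-models/tfserving-mounted/1",
    "MODEL_NAME": "half_plus_two",
})
print(layout["model_dir"])
print(layout["predict_path"])
```

The derived paths match the liveness, readiness, and scoring paths used in the sample YAML, which is why those values can't be chosen independently of MODEL_NAME.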

Inference config

Inference config is a parameter in environment. It specifies the port and path for three types of routes: liveness, readiness, and scoring. Inference config is required if you want to run your own container with a managed online endpoint.
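Before you submit a deployment, a small local check can catch a missing route or a malformed path. The following sketch is a hypothetical helper, not part of the Azure CLI or SDK, and the rules it applies (integer port, absolute path) are assumptions about what a sensible configuration looks like rather than the service's own validation.

```python
REQUIRED_ROUTES = ("liveness_route", "readiness_route", "scoring_route")


def validate_inference_config(config):
    """Return a list of problems found; an empty list means the check passed."""
    problems = []
    for route in REQUIRED_ROUTES:
        entry = config.get(route)
        if not isinstance(entry, dict):
            problems.append(f"{route}: missing")
            continue
        port = entry.get("port")
        # bool is a subclass of int, so reject it explicitly.
        if not isinstance(port, int) or isinstance(port, bool) or not 1 <= port <= 65535:
            problems.append(f"{route}: port must be an integer between 1 and 65535")
        path = entry.get("path")
        if not isinstance(path, str) or not path.startswith("/"):
            problems.append(f"{route}: path must start with '/'")
    return problems


# Values mirror the sample deployment YAML in this article.
sample = {
    "liveness_route": {"port": 8501, "path": "/v1/models/half_plus_two"},
    "readiness_route": {"port": 8501, "path": "/v1/models/half_plus_two"},
    "scoring_route": {"port": 8501, "path": "/v1/models/half_plus_two:predict"},
}
print(validate_inference_config(sample))  # []
```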

Readiness route vs liveness route

The API server you choose might provide a way to check the status of the server. You can specify two types of status routes: liveness and readiness. A liveness route checks whether the server is running. A readiness route checks whether the server is ready to do work. In machine learning inferencing, a server could respond 200 OK to a liveness request before loading a model, but respond 200 OK to a readiness request only after the model is loaded into memory.

For more information about liveness and readiness probes in general, see the Kubernetes documentation.

The API server you choose determines the liveness and readiness routes, which you would have identified when you tested the container locally in the earlier step. The example deployment in this article uses the same path for both liveness and readiness, because TF Serving defines only a liveness route. Refer to the other examples for different patterns for defining the routes.
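For a server that you write yourself, the distinction between the two probes might look like the following minimal sketch, built on Python's standard http.server module. The /live and /ready paths, the ModelState class, and the choice of 503 before the model is loaded are all illustrative assumptions; they are not routes defined by TF Serving or required by Azure Machine Learning.

```python
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class ModelState:
    """Tracks whether the (hypothetical) model has finished loading."""

    def __init__(self):
        self._loaded = threading.Event()

    def mark_loaded(self):
        self._loaded.set()

    def is_loaded(self):
        return self._loaded.is_set()


def probe_status(path, state):
    """Liveness is 200 whenever the process runs; readiness waits for the model."""
    if path == "/live":
        return 200
    if path == "/ready":
        return 200 if state.is_loaded() else 503
    return 404


def make_handler(state):
    class ProbeHandler(BaseHTTPRequestHandler):
        def do_GET(self):
            self.send_response(probe_status(self.path, state))
            self.end_headers()

        def log_message(self, format, *args):
            pass  # keep the sketch quiet

    return ProbeHandler


def start_server(state, port=0):
    """Start the probe server on a background thread; port 0 picks a free port."""
    server = ThreadingHTTPServer(("127.0.0.1", port), make_handler(state))
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server
```

With a server like this, you would point liveness_route at /live and readiness_route at /ready, so that traffic is held back until mark_loaded() has been called.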

Scoring route

The API server you choose provides a way to receive the payload to work on. In machine learning inferencing, a server receives the input data through a specific route. Identify this route for your API server when you test the container locally in the earlier step, and specify it when you define the deployment.

Successfully creating the deployment also updates the scoring_uri parameter of the endpoint, which you can verify with az ml online-endpoint show -n <name> --query scoring_uri.

Locating the mounted model

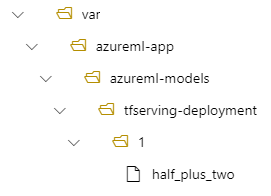

When you deploy a model as an online endpoint, Azure Machine Learning mounts your model to your endpoint. Model mounting allows you to deploy new versions of the model without having to create a new Docker image. By default, a model registered with the name foo and version 1 would be located at the following path inside of your deployed container: /var/azureml-app/azureml-models/foo/1
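The default location follows directly from the registered model name and version, which the short sketch below makes explicit. The helper name default_model_path is hypothetical; the root directory is the one stated in the paragraph above.

```python
import posixpath

# Default root under which registered models are mounted, per this article.
DEFAULT_MODEL_ROOT = "/var/azureml-app/azureml-models"


def default_model_path(name, version):
    """Build the default in-container path for a registered model."""
    return posixpath.join(DEFAULT_MODEL_ROOT, name, str(version))


print(default_model_path("foo", 1))
# /var/azureml-app/azureml-models/foo/1
print(default_model_path("tfserving-mounted", 1))
# /var/azureml-app/azureml-models/tfserving-mounted/1
```

The second result matches the MODEL_BASE_PATH value set in the sample deployment YAML when the model version is 1.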

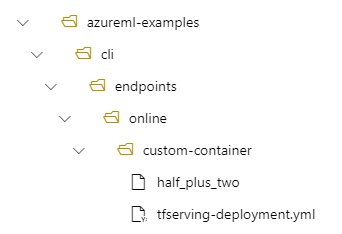

For example, if you have a directory structure of /azureml-examples/cli/endpoints/online/custom-container on your local machine, where the model is named half_plus_two:

And tfserving-deployment.yml contains:

model:
  name: tfserving-mounted
  version: 1
  path: ./half_plus_two

Then your model is located under /var/azureml-app/azureml-models/tfserving-mounted/1 in your deployment, following the registered model name and version:

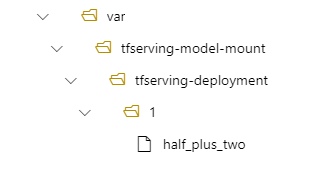

You can optionally configure your model_mount_path. It lets you change the path where the model is mounted.

Important

The model_mount_path must be a valid absolute path in Linux (the OS of the container image).

For example, you can set the model_mount_path parameter in your tfserving-deployment.yml:

name: tfserving-deployment
endpoint_name: tfserving-endpoint
model:
  name: tfserving-mounted
  version: 1
  path: ./half_plus_two
model_mount_path: /var/tfserving-model-mount
.....

Then your model is located at /var/tfserving-model-mount/tfserving-deployment/1 in your deployment. It's no longer under azureml-app/azureml-models, but under the mount path you specified.

Create your endpoint and deployment

Now that you understand how the YAML was constructed, create your endpoint.

az ml online-endpoint create --name tfserving-endpoint -f endpoints/online/custom-container/tfserving-endpoint.yml

Creating a deployment might take a few minutes.

az ml online-deployment create --name tfserving-deployment -f endpoints/online/custom-container/tfserving-deployment.yml --all-traffic

Invoke the endpoint

Once your deployment completes, see if you can make a scoring request to the deployed endpoint.

RESPONSE=$(az ml online-endpoint invoke -n tfserving-endpoint --request-file $BASE_PATH/sample_request.json)
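You can also call the scoring URI directly over HTTPS instead of going through the CLI. The following standard-library sketch builds such a request. It assumes you already retrieved the scoring URI (for example, with az ml online-endpoint show --query scoring_uri) and a token (for example, with az ml online-endpoint get-credentials). The helper name, the placeholder values, and the {"instances": [...]} payload shape are illustrative assumptions.

```python
import json
import urllib.request


def build_scoring_request(scoring_uri, token, instances):
    """Build an authenticated POST request for a deployed endpoint."""
    body = json.dumps({"instances": instances}).encode("utf-8")
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {token}",
    }
    return urllib.request.Request(scoring_uri, data=body, headers=headers, method="POST")


# Placeholder values; replace them with your endpoint's scoring URI and token.
request = build_scoring_request(
    "https://example.invalid/v1/models/half_plus_two:predict",
    "<token>",
    [1.0, 2.0, 5.0],
)

# To send the request against a real endpoint:
#   with urllib.request.urlopen(request, timeout=30) as response:
#       print(response.read().decode("utf-8"))
```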

Delete the endpoint

Now that you've successfully scored with your endpoint, you can delete it:

az ml online-endpoint delete --name tfserving-endpoint