Microsoft Purview data security and compliance protections for generative AI apps

Use Microsoft Purview to mitigate and manage the risks associated with AI usage, and implement corresponding protection and governance controls.

Microsoft Purview Data Security Posture Management (DSPM) for AI provides easy-to-use graphical tools and reports to quickly gain insights into AI use within your organization. One-click policies help you protect your data and comply with regulatory requirements.

Use Data Security Posture Management for AI in conjunction with other Microsoft Purview capabilities to strengthen your data security and compliance for Microsoft 365 Copilot and Microsoft 365 Copilot Chat:

- Sensitivity labels and content encrypted by Microsoft Purview Information Protection

- Data classification

- Customer Key

- Communication compliance

- Auditing

- Content search

- eDiscovery

- Retention and deletion

- Customer Lockbox

Note

To check whether your organization's licensing plans support these capabilities, see the licensing guidance link at the top of the page. For licensing information for Microsoft 365 Copilot itself, see the service description for Microsoft 365 Copilot.

Use the following sections to learn more about Data Security Posture Management for AI and the Microsoft Purview capabilities that provide additional data security and compliance controls to accelerate your organization's adoption of Microsoft 365 Copilot and other generative AI apps. If you're new to Microsoft Purview, you might also find an overview of the product helpful: Learn about Microsoft Purview.

For more general information about security and compliance requirements for Microsoft 365 Copilot, see Data, Privacy, and Security for Microsoft 365 Copilot. For Microsoft 365 Copilot Chat, see Copilot Privacy and protections.

Data Security Posture Management for AI provides insights, policies, and controls for AI apps

Microsoft Purview Data Security Posture Management (DSPM) for AI from the Microsoft Purview portal or the Microsoft Purview compliance portal provides a central management location to help you quickly secure data for AI apps and proactively monitor AI use. These apps include Microsoft 365 Copilot, other copilots from Microsoft, and AI apps from third-party large language models (LLMs).

Data Security Posture Management for AI offers a set of capabilities so you can safely adopt AI without having to choose between productivity and protection:

Insights and analytics into AI activity in your organization

Ready-to-use policies to protect data and prevent data loss in AI prompts

Data assessments to identify, remediate, and monitor potential oversharing of data

Compliance controls to apply optimal data handling and storing policies

For a list of supported third-party AI sites, such as those used for Gemini and ChatGPT, see Supported AI sites by Microsoft Purview for data security and compliance protections.

How to use Data Security Posture Management for AI

To help you more quickly gain insights into AI usage and protect your data, Data Security Posture Management for AI provides some recommended preconfigured policies that you can activate with a single click. Allow at least 24 hours for these new policies to collect data and display the results in Data Security Posture Management for AI, or to reflect any changes that you make to the default settings.

No activation needed and now in preview, Data Security Posture Management for AI automatically runs a weekly data assessment for all your SharePoint sites used by Copilot. You can supplement this with your own custom data assessments. These assessments are designed specifically to help you identify, remediate, and monitor potential oversharing of data, so you can be more confident about your organization using Microsoft 365 Copilot and Microsoft 365 Copilot Chat.

To get started with Data Security Posture Management for AI, use the Microsoft Purview portal or, if it's still available to you, the older Microsoft Purview compliance portal. You need an account that has appropriate permissions for compliance management, such as an account that's a member of the Microsoft Entra Compliance Administrator group role.

Depending on the portal you're using, navigate to one of the following locations:

Sign in to the Microsoft Purview portal > Solutions > DSPM for AI.

Sign in to the Microsoft Purview compliance portal > DSPM for AI.

From Overview, review the Get started section to learn more about Data Security Posture Management for AI, and the immediate actions you can take. Select each one to display the flyout pane to learn more, take actions, and verify your current status.

| Action | More information |
|---|---|
| Turn on Microsoft Purview Audit | Auditing is on by default for new tenants, so you might already meet this prerequisite. If you do, and users are already assigned licenses for Copilot, you start to see insights about Copilot activities from the Reports section further down the page. |
| Install Microsoft Purview browser extension | A prerequisite for third-party AI sites. |
| Onboard devices to Microsoft Purview | Also a prerequisite for third-party AI sites. |
| Extend your insights for data discovery | One-click policies for collecting information about users visiting third-party generative AI sites and sending sensitive information to them. The option is the same as the Extend your insights button in the AI data analytics section further down the page. |

For more information about the prerequisites, see Prerequisites for Data Security Posture Management for AI.

For more information about the preconfigured policies that you can activate, see One-click policies from Data Security Posture Management for AI.

Then, review the Recommendations section and decide whether to implement any options that are relevant to your tenant. View each recommendation to understand how it's relevant to your data and to learn more.

These options include running a data assessment across SharePoint sites, creating sensitivity labels and policies to protect your data, and creating some default policies to immediately help you detect and protect sensitive data sent to generative AI sites. Examples of recommendations:

- Protect your data from potential oversharing risks by viewing the results of your default data assessment to identify and fix issues to help you more confidently deploy Microsoft 365 Copilot.

- Protect sensitive data referenced in Microsoft 365 Copilot by creating a DLP policy that selects sensitivity labels to prevent Microsoft 365 Copilot summarizing the labeled data. For more information, see Learn about the Microsoft 365 Copilot policy location.

- Detect risky interactions in AI apps to calculate user risk by detecting risky prompts and responses in Microsoft 365 Copilot and other generative AI apps. For more information, see Risky AI usage (preview).

- Discover and govern interactions with ChatGPT Enterprise AI by registering ChatGPT Enterprise workspaces. You can then identify potential data exposure risks by detecting sensitive information that's shared with ChatGPT Enterprise.

- Get guided assistance to AI regulations, which uses control-mapping regulatory templates from Compliance Manager.

You can use the View all recommendations link, or Recommendations from the navigation pane to see all the available recommendations for your tenant, and their status. When a recommendation is complete or dismissed, you no longer see it on the Overview page.

Use the Reports section or the Reports page from the navigation pane to view the results of the default policies created. You need to wait at least a day for the reports to be populated. Select the categories of Microsoft Copilot Experiences and Enterprise AI apps to help you identify the specific generative AI app.

Note

Separate Teams, Copilot, and AI app locations are rolling out and may not yet be visible in your Purview tenant at this time.

Use the Policies page to monitor the status of the default one-click policies created and AI-related policies from other Microsoft Purview solutions. To edit the policies, use the corresponding management solution in the portal. For example, for DSPM for AI - Unethical behavior in Copilot, you can review and remediate the matches from the Communication Compliance solution.

Note

If you have the older retention policies for the location Teams chats and Copilot interactions, they aren't included on this page. Learn how to create separate retention policies for Microsoft Copilot Experiences and/or Enterprise AI Apps that will be included on this Policies page.

Select Activity explorer to see details of the data collected from your policies.

This more detailed information includes activity type and user, date and time, AI app category and app, app accessed in, any sensitive information types, files referenced, and sensitive files referenced.

Examples of activities include AI interaction, Sensitive info types, and AI website visit. Copilot prompts and responses are included in the AI interaction events when you have the right permissions. For more information about the events, see Activity explorer events.

Similar to the report categories, workloads include Microsoft Copilot Experiences and Enterprise AI apps. Examples of an app ID and app host for Microsoft Copilot Experiences include Microsoft 365 Copilots and Copilot Studio. Enterprise AI apps include ChatGPT Enterprise.

Select Data assessments (preview) to identify and fix potential data oversharing risks in your organization. A default data assessment automatically runs weekly for all your SharePoint sites used by Copilot in your organization, and you might have already run a custom assessment as one of the recommendations. Come back regularly to this option to check the latest weekly results of the default assessment, and run custom assessments when you want to check for different users or specific sites. After an assessment has run, wait at least 48 hours to see the results. The results of a completed assessment don't update again, so you need a new assessment to see any changes.

Because of the power and speed with which AI can proactively surface content that might be obsolete, over-permissioned, or lacking governance controls, generative AI amplifies the problem of oversharing data. Use data assessments to both identify and remediate issues.

To view the automatically created data assessment for your tenant, from the Default assessments category, select Oversharing Assessment for the week of <month, year> from the list. From the columns for each site reported, you see information such as the total number of items found, how many were accessed and how often, and how many sensitive information types were found and accessed.

From the list, select each site to access the flyout pane that has tabs for Overview, Protect, and Monitor. Use the information on each tab to learn more, and take recommended actions. For example:

Use the Protect tab to select options to remediate oversharing, which include:

- Restrict access by label: Use Microsoft Purview Data Loss Prevention to create a DLP policy that prevents Microsoft 365 Copilot from summarizing data when it has sensitivity labels that you select. For more information about how this works and supported scenarios, see Learn about the Microsoft 365 Copilot policy location.

- Restrict all items: Use SharePoint Restricted Content Discoverability to list the SharePoint sites to be exempt from Microsoft 365 Copilot. For more information, see Restricted Content Discoverability for SharePoint Sites.

- Create an auto-labeling policy: When sensitive information is found for unlabeled files, use Microsoft Purview Information Protection to create an auto-labeling policy to automatically apply a sensitivity label for sensitive data. For more information about how to create this policy, see How to configure auto-labeling policies for SharePoint, OneDrive, and Exchange.

- Create retention policies: When content hasn't been accessed for at least 3 years, use Microsoft Purview Data Lifecycle Management to automatically delete it. For more information about how to create the retention policy, see Create and configure retention policies.

Use the Monitor tab to view the number of items in the site shared with anyone, shared with everyone in the organization, shared with specific people, and shared externally. Select Start a SharePoint site access review for information about how to use the SharePoint data access governance reports.

Example screenshot, after a data assessment has been run, showing the Protect tab with resolutions for problems found:

To create your own custom data assessment, select Create assessment to identify potential oversharing issues for all or selected users, the data sources to scan (currently supported for SharePoint only), and run the assessment.

If you select all sites, you don't need to be a member of the sites, but you must be a member to select specific sites.

This data assessment is created in the Custom assessments category. Wait for the status of your assessment to display Scan completed, and select it to view details. To rerun a custom data assessment, use the duplicate option to create a new assessment, starting with the same selections.

Data assessments currently support a maximum of 200,000 items per location.

For Microsoft 365 Copilot and Microsoft 365 Copilot Chat, use these policies, tools, and insights in conjunction with additional protections and compliance capabilities from Microsoft Purview.

Microsoft Purview strengthens information protection for Copilot

Microsoft 365 Copilot and Microsoft 365 Copilot Chat use existing controls to ensure that data stored in your tenant is never returned to the user or used by a large language model (LLM) if the user doesn't have access to that data. When the data has sensitivity labels from your organization applied to the content, there's an extra layer of protection:

When a file is open in Word, Excel, PowerPoint, or similarly an email or calendar event is open in Outlook, the sensitivity of the data is displayed to users in the app with the label name and content markings (such as header or footer text) that have been configured for the label. Loop components and pages also support the same sensitivity labels.

When the sensitivity label applies encryption, users must have the EXTRACT usage right, as well as VIEW, for Copilot to return the data.

This protection extends to data stored outside your Microsoft 365 tenant when it's open in an Office app (data in use). For example, local storage, network shares, and cloud storage.
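
The usage-rights condition described above can be sketched in a few lines of Python. This is only an illustrative model, not how Copilot is implemented: the `Item` class, its field names, and the sample file names are hypothetical, and the existing access controls that apply to all data are not modeled.

```python
from dataclasses import dataclass, field


@dataclass
class Item:
    """Hypothetical stand-in for a file or email that Copilot might reference."""
    name: str
    encrypted: bool = False
    # Usage rights granted to the requesting user; only relevant when encrypted.
    usage_rights: frozenset = field(default_factory=frozenset)


def copilot_can_return(item: Item) -> bool:
    """Encrypted items need both VIEW and EXTRACT for the requesting user."""
    if not item.encrypted:
        # Unencrypted items rely on the tenant's existing access controls,
        # which this sketch does not model.
        return True
    return {"VIEW", "EXTRACT"} <= item.usage_rights


plan = Item("plan.docx", encrypted=True, usage_rights=frozenset({"VIEW", "EXTRACT"}))
memo = Item("memo.docx", encrypted=True, usage_rights=frozenset({"VIEW"}))
notes = Item("notes.docx")

for item in (plan, memo, notes):
    print(item.name, copilot_can_return(item))
```

In this model, `memo.docx` is withheld because the user can view it but lacks the EXTRACT usage right.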

Tip

If you haven't already, we recommend you enable sensitivity labels for SharePoint and OneDrive and also familiarize yourself with the file types and label configurations that these services can process. When sensitivity labels aren't enabled for these services, the encrypted files that Copilot can access are limited to data in use from Office apps on Windows.

For instructions, see Enable sensitivity labels for Office files in SharePoint and OneDrive.

Additionally, when you use Microsoft 365 Copilot Chat (previously known as Business Chat, Graph-grounded chat, and Microsoft 365 Chat) that can access data from a broad range of content, the sensitivity of labeled data returned by Copilot is made visible to users with the sensitivity label displayed for citations and the items listed in the response. Using the sensitivity labels' priority number that's defined in the Microsoft Purview portal or the Microsoft Purview compliance portal, the latest response in Copilot displays the highest priority sensitivity label from the data used for that Copilot chat.

Although compliance admins define a sensitivity label's priority, a higher priority number usually denotes higher sensitivity of the content, with more restrictive permissions. As a result, Copilot responses are labeled with the most restrictive sensitivity label.
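
As a rough illustration of the rule above, the following Python sketch picks the label to display for a response from the labels of the items used. The `Label` type, the priority numbers, and the "Highly Confidential" label name are assumptions made for the example; unlabeled items are represented as `None`.

```python
from typing import NamedTuple, Optional


class Label(NamedTuple):
    name: str
    priority: int  # assumed: a higher number means a higher priority


def response_label(source_labels: list[Optional[Label]]) -> Optional[Label]:
    """Return the highest-priority label among the items used, if any are labeled."""
    labeled = [label for label in source_labels if label is not None]
    return max(labeled, key=lambda label: label.priority, default=None)


general = Label("General", 0)
confidential = Label("Confidential", 2)
highly_confidential = Label("Highly Confidential", 3)

# One unlabeled item and two labeled items were used for the response.
chosen = response_label([general, None, confidential])
print(chosen.name if chosen else "No label")
```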

Note

If items are encrypted by Microsoft Purview Information Protection but don't have a sensitivity label, Microsoft 365 Copilot and Microsoft 365 Copilot Chat also won't return these items to users if the encryption doesn't include the EXTRACT or VIEW usage rights for the user.

If you're not already using sensitivity labels, see Get started with sensitivity labels.

Although DLP policies don't support data classification for Copilot interactions, sensitive info types and trainable classifiers are supported with communication compliance policies to identify sensitive data in user prompts to Copilot, and responses.

Copilot protection with sensitivity label inheritance

If you use Copilot to create new content based on an item that has a sensitivity label applied, the sensitivity label from the source file is automatically inherited, with the label's protection settings.

For example, a user selects Draft with Copilot in Word and then Reference a file. Or a user selects Create presentation from file in PowerPoint, or Edit in Pages from Microsoft 365 Copilot Chat. The source content has the sensitivity label Confidential\Anyone (unrestricted) applied and that label is configured to apply a footer that displays "Confidential". The new content is automatically labeled Confidential\Anyone (unrestricted) with the same footer.

To see an example of this in action, watch the following demo from the Ignite 2023 session, "Getting your enterprise ready for Microsoft 365 Copilot". The demo shows how the default sensitivity label of General is replaced with a Confidential label when a user drafts with Copilot and references a labeled file. The information bar under the ribbon informs the user that content created by Copilot resulted in the new label being automatically applied:

If multiple files are used to create new content, the sensitivity label with the highest priority is used for label inheritance.

As with all automatic labeling scenarios, the user can always override and replace an inherited label (or remove, if you're not using mandatory labeling).

Microsoft Purview protection without sensitivity labels

Even if a sensitivity label isn't applied to content, services and products might use the encryption capabilities from the Azure Rights Management service. As a result, Microsoft 365 Copilot and Microsoft 365 Copilot Chat can still check for the VIEW and EXTRACT usage rights before returning data and links to a user, but there's no automatic inheritance of protection for new items.

Tip

You'll get the best user experience when you always use sensitivity labels to protect your data, and encryption is applied by a label.

Examples of products and services that can use the encryption capabilities from the Azure Rights Management service without sensitivity labels:

- Microsoft Purview Message Encryption

- Microsoft Information Rights Management (IRM)

- Microsoft Rights Management connector

- Microsoft Rights Management SDK

For other encryption methods that don't use the Azure Rights Management service:

S/MIME protected emails won't be returned by Copilot, and Copilot isn't available in Outlook when an S/MIME protected email is open.

Password-protected documents can't be accessed by Copilot unless they're already opened by the user in the same app (data in use). Passwords aren't inherited by a destination item.

As with other Microsoft 365 services, such as eDiscovery and search, items encrypted with Microsoft Purview Customer Key or your own root key (BYOK) are supported and eligible to be returned by Copilot.
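
The three cases above can be summarized as a small decision function. This is a simplified sketch for reasoning about the rules, with hypothetical names (`Protection`, `eligible_for_copilot`, `open_in_same_app`); it does not model the user's normal access permissions, which still apply.

```python
from enum import Enum, auto


class Protection(Enum):
    SMIME = auto()         # S/MIME protected email
    PASSWORD = auto()      # password-protected document
    CUSTOMER_KEY = auto()  # Customer Key or your own root key (BYOK)


def eligible_for_copilot(protection: Protection, open_in_same_app: bool = False) -> bool:
    """Return whether an item with this protection can be returned by Copilot."""
    if protection is Protection.SMIME:
        return False
    if protection is Protection.PASSWORD:
        # Only accessible when the user already has it open in the same app.
        return open_in_same_app
    # Customer Key and BYOK items are supported and eligible.
    return True


print(eligible_for_copilot(Protection.SMIME))
print(eligible_for_copilot(Protection.PASSWORD, open_in_same_app=True))
print(eligible_for_copilot(Protection.CUSTOMER_KEY))
```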

Microsoft Purview supports compliance management for Copilot

Use Microsoft Purview compliance capabilities to support your risk and compliance requirements for Microsoft 365 Copilot and Microsoft 365 Copilot Chat, and other generative AI apps.

Interactions with Copilot can be monitored for each user in your tenant. As such, you can use Purview's classification (sensitive info types and trainable classifiers), content search, communication compliance, auditing, eDiscovery, and automatic retention and deletion capabilities by using retention policies.

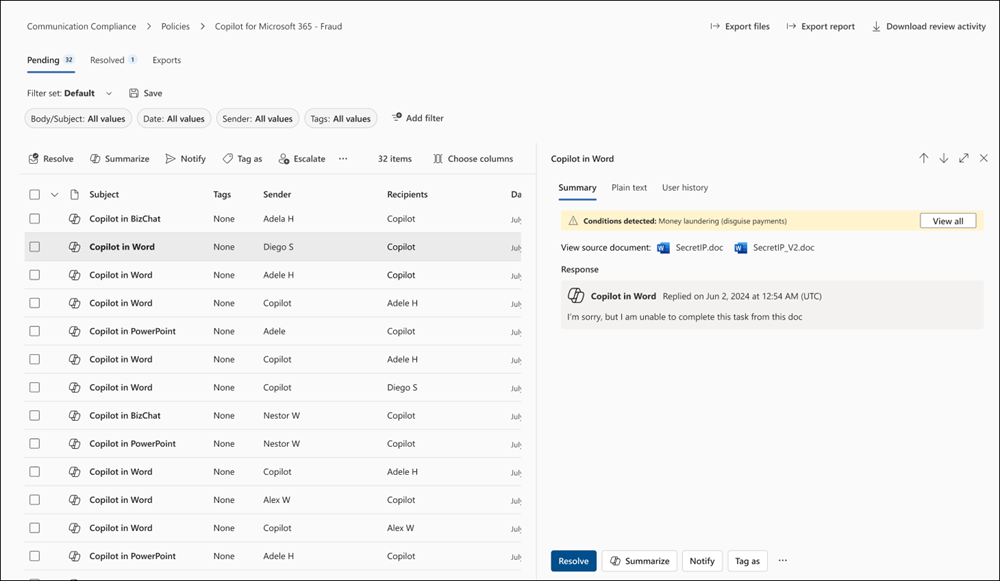

For communication compliance, you can analyze user prompts and Copilot responses to detect inappropriate or risky interactions or sharing of confidential information. For more information, see Configure a communication compliance policy to detect for Copilot interactions.

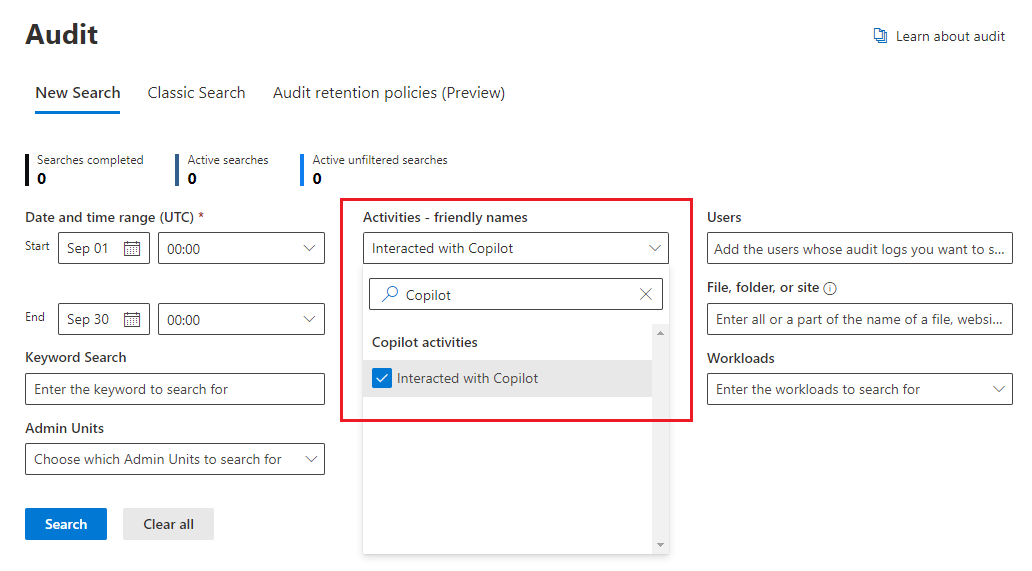

For auditing, details are captured in the unified audit log when users interact with Copilot. Events include how and when users interact with Copilot, in which Microsoft 365 service the activity took place, and references to the files stored in Microsoft 365 that were accessed during the interaction. If these files have a sensitivity label applied, that's also captured. In the Audit solution from the Microsoft Purview portal or the Microsoft Purview compliance portal, select Copilot activities and Interacted with Copilot. You can also select Copilot as a workload.
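
If you export audit search results for offline review, a short script can pick out the Copilot events and the labeled files they reference. The following Python sketch runs against inline sample data. The record shape and field names (`Operation`, `Workload`, `CopilotEventData`, `AppHost`, `AccessedResources`, `SensitivityLabelId`) are assumptions about the exported format, and the users, files, and label ID are invented, so check them against your own export before relying on them.

```python
import json

# Hypothetical sample of exported audit records; real exports may differ.
SAMPLE_EXPORT = """
[
  {"Operation": "CopilotInteraction", "Workload": "Copilot",
   "UserId": "adele@contoso.com", "CreationTime": "2025-01-15T09:30:00",
   "CopilotEventData": {"AppHost": "Word", "AccessedResources": [
       {"Name": "plan.docx", "SensitivityLabelId": "label-guid-1"},
       {"Name": "notes.docx"}]}},
  {"Operation": "FileAccessed", "Workload": "SharePoint",
   "UserId": "adele@contoso.com", "CreationTime": "2025-01-15T09:31:00"}
]
"""


def copilot_interactions(records):
    """Keep only the records whose workload is Copilot."""
    return [r for r in records if r.get("Workload") == "Copilot"]


def labeled_files(record):
    """Names of referenced files that carry a sensitivity label ID."""
    resources = record.get("CopilotEventData", {}).get("AccessedResources", [])
    return [res["Name"] for res in resources if res.get("SensitivityLabelId")]


records = json.loads(SAMPLE_EXPORT)
hits = copilot_interactions(records)
for r in hits:
    app_host = r.get("CopilotEventData", {}).get("AppHost")
    print(r["UserId"], app_host, labeled_files(r))
```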

For content search, because user prompts to Copilot and responses from Copilot are stored in a user's mailbox, they can be searched and retrieved when the user's mailbox is selected as the source for a search query. Select and retrieve this data from the source mailbox by selecting from the query builder Add condition > Type > Equals any of > Add/Remove more options > Copilot interactions.

Similarly for eDiscovery, you use the same query process to select mailboxes and retrieve user prompts to Copilot and responses from Copilot. After the collection is created and sourced to the review phase in eDiscovery (Premium), this data is available for performing all the existing reviewing actions. These collections and review sets can then further be put on hold or exported. If you need to delete this data, see Search for and delete data for Copilot.

For retention policies that support automatic retention and deletion, user prompts to Copilot and responses from Copilot are identified by Microsoft 365 Copilots, included in the Microsoft Copilot Experiences policy location. These interactions were previously included in a policy location named Teams chats and Copilot interactions, and these older retention policies are being replaced with separate locations. For more information, see Separate an existing 'Teams chats and Copilot interactions' policy.

For detailed information about how this retention works, see Learn about retention for Copilot & AI apps.

As with all retention policies and holds, if more than one policy for the same location applies to a user, the principles of retention resolve any conflicts. For example, the data is retained for the longest duration of all the applied retention policies or eDiscovery holds.
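
The "longest duration" example above amounts to taking a maximum. The following Python sketch models only that one rule, under the simplifying assumption that every applicable policy or hold is expressed as a number of days from the item's creation date. The function name and sample values are hypothetical, and the full principles of retention cover more cases than this.

```python
from datetime import date, timedelta
from typing import Optional


def retained_until(created: date, retention_days: list[int]) -> Optional[date]:
    """Return the date until which an item is retained, or None if nothing applies."""
    if not retention_days:
        return None
    # The longest retention period among all applicable policies wins.
    return created + timedelta(days=max(retention_days))


# Three policies apply to the same Copilot interaction: 30, 365, and 90 days.
print(retained_until(date(2025, 1, 1), [30, 365, 90]))
```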

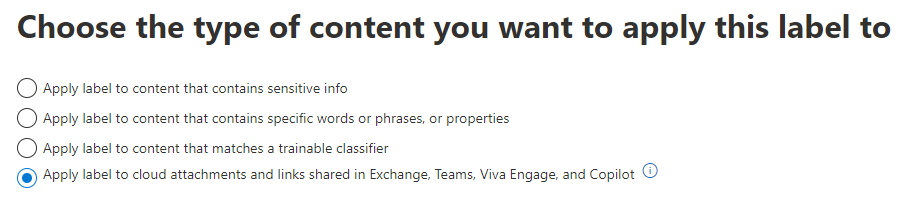

For retention labels to automatically retain files referenced in Copilot, select the option for cloud attachments with an auto-apply retention label policy: Apply label to cloud attachments and links shared in Exchange, Teams, Viva Engage, and Copilot. As with all retained cloud attachments, the file version at the time it's referenced is retained.

For detailed information about how this retention works, see How retention works with cloud attachments.

For configuration instructions:

To configure communication compliance policies for Copilot interactions, see Create and manage communication compliance policies.

To search the audit log for Copilot interactions, see Search the audit log.

To use content search to find Copilot interactions, see Search for content.

To use eDiscovery for Copilot interactions, see Microsoft Purview eDiscovery solutions.

To create or change a retention policy for Copilot interactions, see Create and configure retention policies.

To create an auto-apply retention label policy for files referenced in Copilot, see Automatically apply a retention label to retain or delete content.

Other documentation to help you secure and manage generative AI apps

Blog post announcement: Accelerate AI adoption with next-gen security and governance capabilities

For more detailed information, see Considerations for Microsoft Purview Data Security Posture Management for AI and data security and compliance protections for Copilot.
