Copy data from DB2 using Azure Data Factory or Synapse Analytics

APPLIES TO: Azure Data Factory, Azure Synapse Analytics


This article outlines how to use the Copy activity in Azure Data Factory and Synapse Analytics pipelines to copy data from a DB2 database. It builds on the Copy activity overview article, which describes the copy activity in general.

Supported capabilities

This DB2 connector is supported for the following capabilities:

| Supported capabilities | IR |
|---|---|
| Copy activity (source/-) | ① ② |
| Lookup activity | ① ② |

① Azure integration runtime ② Self-hosted integration runtime

For a list of data stores that are supported as sources or sinks by the copy activity, see the Supported data stores table.

Specifically, this DB2 connector supports the following IBM DB2 platforms and versions with Distributed Relational Database Architecture (DRDA) SQL Access Manager (SQLAM) versions 9, 10, and 11. It uses the DDM/DRDA protocol.

- IBM DB2 for z/OS 12.1

- IBM DB2 for z/OS 11.1

- IBM DB2 for i 7.3

- IBM DB2 for i 7.2

- IBM DB2 for i 7.1

- IBM DB2 for LUW 11

- IBM DB2 for LUW 10.5

- IBM DB2 for LUW 10.1

Prerequisites

If your data store is located inside an on-premises network, an Azure virtual network, or Amazon Virtual Private Cloud, you need to configure a self-hosted integration runtime to connect to it.

If your data store is a managed cloud data service, you can use the Azure Integration Runtime. If the access is restricted to IPs that are approved in the firewall rules, you can add Azure Integration Runtime IPs to the allow list.

You can also use the managed virtual network integration runtime feature in Azure Data Factory to access the on-premises network without installing and configuring a self-hosted integration runtime.

For more information about the network security mechanisms and options supported by Data Factory, see Data access strategies.

The integration runtime provides a built-in DB2 driver, so you don't need to install a driver manually when copying data from DB2.

Getting started

To perform the Copy activity with a pipeline, you can use one of the following tools or SDKs:

- The Copy Data tool

- The Azure portal

- The .NET SDK

- The Python SDK

- Azure PowerShell

- The REST API

- The Azure Resource Manager template

Create a linked service to DB2 using UI

Use the following steps to create a linked service to DB2 in the Azure portal UI.

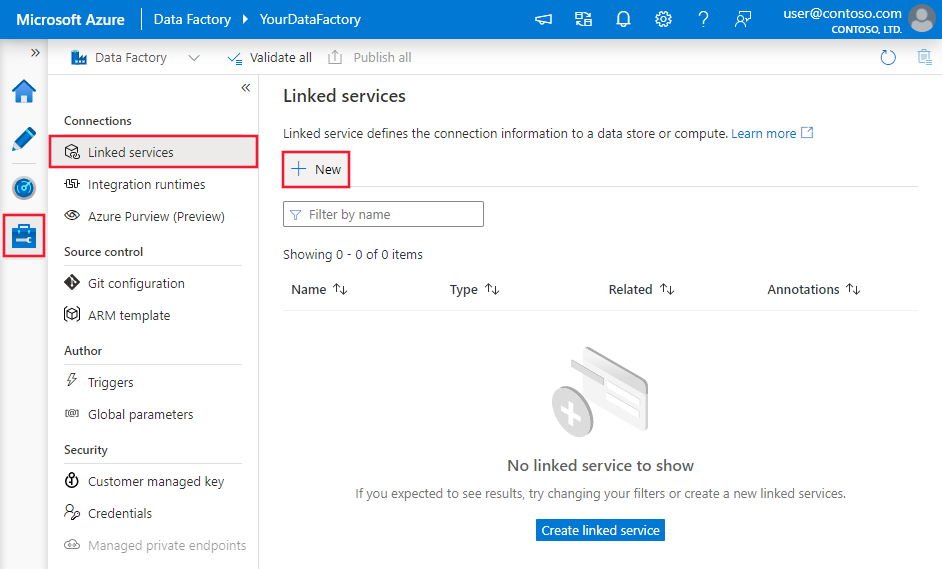

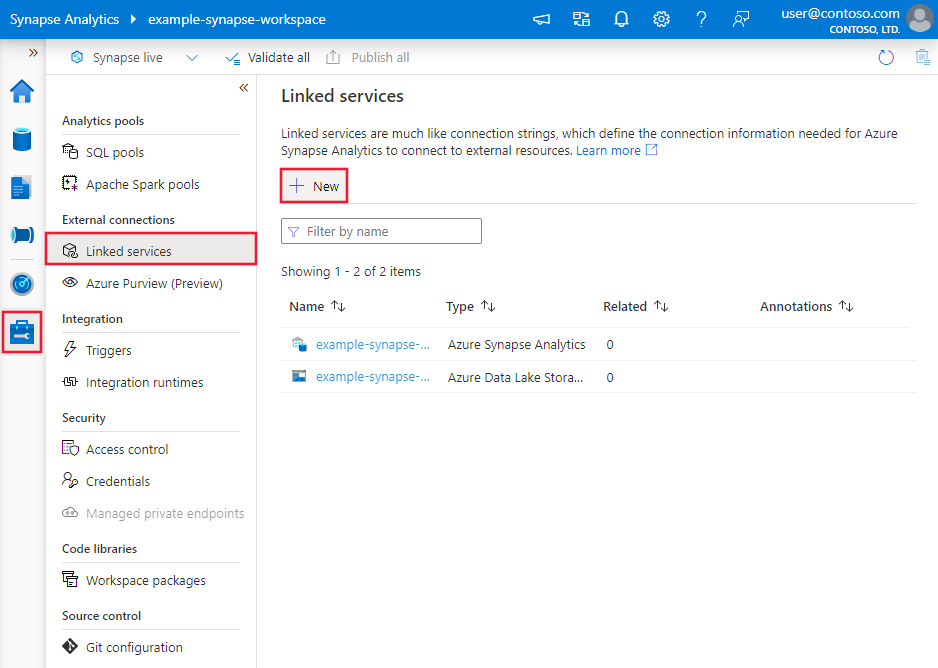

Browse to the Manage tab in your Azure Data Factory or Synapse workspace and select Linked Services, then click New:

Search for DB2 and select the DB2 connector.

Configure the service details, test the connection, and create the new linked service.

Connector configuration details

The following sections provide details about properties that are used to define Data Factory entities specific to the DB2 connector.

Linked service properties

The following properties are supported for the DB2 linked service:

| Property | Description | Required |
|---|---|---|
| type | The type property must be set to: Db2 | Yes |
| connectionString | Specify the information needed to connect to the DB2 instance. You can also store the password in Azure Key Vault and pull the password configuration out of the connection string. For more details, see the following samples and the Store credentials in Azure Key Vault article. | Yes |
| connectVia | The integration runtime to be used to connect to the data store. Learn more in the Prerequisites section. If not specified, the default Azure integration runtime is used. | No |

Typical properties inside the connection string:

| Property | Description | Required |
|---|---|---|
| server | Name of the DB2 server. You can specify the port number after the server name, delimited by a colon, for example server:port. The DB2 connector uses the DDM/DRDA protocol and connects on port 50000 by default if no port is specified. The port that your DB2 database uses might differ depending on the version and your settings. For example, the default port for DB2 LUW is 50000, and the default port for AS400 is 446, or 448 when TLS is enabled. For how the port is typically configured, see the following DB2 documents: DB2 z/OS, DB2 iSeries, and DB2 LUW. | Yes |
| database | Name of the DB2 database. | Yes |
| authenticationType | Type of authentication used to connect to the DB2 database. Allowed value is: Basic. | Yes |
| username | Specify the user name to connect to the DB2 database. | Yes |
| password | Specify the password for the user account that you specified for username. Mark this field as a SecureString to store it securely, or reference a secret stored in Azure Key Vault. | Yes |
| packageCollection | Specify where the service automatically creates the needed packages when querying the database. If this isn't set, the service uses {username} as the default value. | No |
| certificateCommonName | When you use Secure Sockets Layer (SSL) or Transport Layer Security (TLS) encryption, you must enter a value for the certificate common name. | No |
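The connectionString value is a semicolon-delimited list of key=value pairs built from the properties in the preceding table. As a minimal sketch, assuming you generate linked service definitions from Python, the hypothetical helper build_db2_connection_string assembles that value. It appends a port only when one is given (otherwise the connector falls back to its default of 50000), always sets authenticationType=Basic because that's the only allowed value, and lets you omit the password when it comes from Azure Key Vault. The host, database, and user names in the usage line are placeholders. The sketch doesn't escape special characters; it rejects any value containing a semicolon instead.

```python
from typing import Optional


def build_db2_connection_string(
    server: str,
    database: str,
    username: str,
    password: Optional[str] = None,
    port: Optional[int] = None,
    package_collection: Optional[str] = None,
    certificate_common_name: Optional[str] = None,
) -> str:
    """Hypothetical helper: assemble a DB2 connectionString value."""
    # Append ":port" only when a port is given; otherwise the connector uses 50000.
    host = f"{server}:{port}" if port is not None else server
    parts = {
        "server": host,
        "database": database,
        "authenticationType": "Basic",  # the only allowed value
        "username": username,
    }
    # Optional properties are included only when they're set.
    optional = {
        "password": password,
        "packageCollection": package_collection,
        "certificateCommonName": certificate_common_name,
    }
    parts.update({key: value for key, value in optional.items() if value is not None})
    # This sketch doesn't escape values, so refuse anything that would split a pair.
    for key, value in parts.items():
        if ";" in value:
            raise ValueError(f"{key} must not contain ';'")
    return "".join(f"{key}={value};" for key, value in parts.items())


# Placeholder values; the password is left out because it's stored in Azure Key Vault.
print(build_db2_connection_string(
    "db2.contoso.com", "SAMPLE", "db2admin", port=446, package_collection="NULLID"
))
# server=db2.contoso.com:446;database=SAMPLE;authenticationType=Basic;username=db2admin;packageCollection=NULLID;
```

Setting packageCollection=NULLID in the usage line mirrors the fallback suggested in the following tip for the SQLCODE=-805 error.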

Tip

If you receive the error message "The package corresponding to an SQL statement execution request was not found. SQLSTATE=51002 SQLCODE=-805", the reason is that a needed package wasn't created for the user. By default, the service tries to create the package under a collection named after the user that you used to connect to DB2. Specify the packageCollection property to indicate where you want the service to create the needed packages when querying the database. If you can't determine the package collection name, try setting packageCollection=NULLID.

Example:

```json
{
    "name": "Db2LinkedService",
    "properties": {
        "type": "Db2",
        "typeProperties": {
            "connectionString": "server=<server:port>;database=<database>;authenticationType=Basic;username=<username>;password=<password>;packageCollection=<packagecollection>;certificateCommonName=<certname>;"
        },
        "connectVia": {
            "referenceName": "<name of Integration Runtime>",
            "type": "IntegrationRuntimeReference"
        }
    }
}
```

Example: store password in Azure Key Vault

```json
{
    "name": "Db2LinkedService",
    "properties": {
        "type": "Db2",
        "typeProperties": {
            "connectionString": "server=<server:port>;database=<database>;authenticationType=Basic;username=<username>;packageCollection=<packagecollection>;certificateCommonName=<certname>;",
            "password": {
                "type": "AzureKeyVaultSecret",
                "store": {
                    "referenceName": "<Azure Key Vault linked service name>",
                    "type": "LinkedServiceReference"
                },
                "secretName": "<secretName>"
            }
        },
        "connectVia": {
            "referenceName": "<name of Integration Runtime>",
            "type": "IntegrationRuntimeReference"
        }
    }
}
```

If you were using a DB2 linked service with the following payload, it's still supported as-is, but we suggest that you use the new format going forward.

Previous payload:

```json
{
    "name": "Db2LinkedService",
    "properties": {
        "type": "Db2",
        "typeProperties": {
            "server": "<servername:port>",
            "database": "<dbname>",
            "authenticationType": "Basic",
            "username": "<username>",
            "password": {
                "type": "SecureString",
                "value": "<password>"
            }
        },
        "connectVia": {
            "referenceName": "<name of Integration Runtime>",
            "type": "IntegrationRuntimeReference"
        }
    }
}
```
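Moving from the previous payload to the connectionString format is a mechanical rewrite of typeProperties. The sketch below is a hypothetical helper, migrate_legacy_db2_linked_service, not part of any Azure SDK. It assumes the legacy definition is loaded as a Python dict shaped like the previous payload. If you pass a Key Vault linked service name and secret name (placeholders in the usage line), it emits the Key Vault form shown earlier; if the legacy password already references Key Vault, it carries that reference over; otherwise it inlines the SecureString value in the connection string, as in the first linked service example.

```python
import json
from typing import Any, Dict, List, Optional, Tuple


def migrate_legacy_db2_linked_service(
    legacy: Dict[str, Any],
    key_vault_linked_service: Optional[str] = None,
    secret_name: Optional[str] = None,
) -> Dict[str, Any]:
    """Hypothetical helper: rewrite a legacy DB2 payload into the connectionString form."""
    props = legacy["properties"]
    tp = props["typeProperties"]
    fields: List[Tuple[str, str]] = [
        ("server", tp["server"]),
        ("database", tp["database"]),
        ("authenticationType", tp.get("authenticationType", "Basic")),
        ("username", tp["username"]),
    ]
    password_ref: Optional[Dict[str, Any]] = None
    legacy_password = tp.get("password") or {}
    if key_vault_linked_service and secret_name:
        # Reference a Key Vault secret instead of keeping the password in the payload.
        password_ref = {
            "type": "AzureKeyVaultSecret",
            "store": {"referenceName": key_vault_linked_service, "type": "LinkedServiceReference"},
            "secretName": secret_name,
        }
    elif legacy_password.get("type") == "AzureKeyVaultSecret":
        # The legacy payload already used Key Vault; keep that reference.
        password_ref = legacy_password
    elif "value" in legacy_password:
        # Otherwise inline the SecureString value in the connection string.
        fields.append(("password", legacy_password["value"]))

    new_tp: Dict[str, Any] = {"connectionString": "".join(f"{k}={v};" for k, v in fields)}
    if password_ref is not None:
        new_tp["password"] = password_ref
    new_props: Dict[str, Any] = {"type": "Db2", "typeProperties": new_tp}
    if "connectVia" in props:
        new_props["connectVia"] = props["connectVia"]
    return {"name": legacy["name"], "properties": new_props}


# Placeholder legacy definition in the shape of the previous payload.
legacy = {
    "name": "Db2LinkedService",
    "properties": {
        "type": "Db2",
        "typeProperties": {
            "server": "db2.contoso.com:50000",
            "database": "SAMPLE",
            "authenticationType": "Basic",
            "username": "db2admin",
            "password": {"type": "SecureString", "value": "<password>"},
        },
    },
}
print(json.dumps(migrate_legacy_db2_linked_service(legacy, "MyKeyVaultLS", "db2-password"), indent=4))
```

Preferring the Key Vault branch keeps the secret out of the stored definition; the inline branch exists only so the output matches the first documented example when no vault is available.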

Dataset properties

For a full list of sections and properties available for defining datasets, see the datasets article. This section provides a list of properties supported by the DB2 dataset.

To copy data from DB2, the following properties are supported:

| Property | Description | Required |
|---|---|---|
| type | The type property of the dataset must be set to: Db2Table | Yes |
| schema | Name of the schema. | No (if "query" in activity source is specified) |
| table | Name of the table. | No (if "query" in activity source is specified) |
| tableName | Name of the table with schema. This property is supported for backward compatibility. Use schema and table for new workloads. | No (if "query" in activity source is specified) |

Example

```json
{
    "name": "DB2Dataset",
    "properties": {
        "type": "Db2Table",
        "typeProperties": {},
        "schema": [],
        "linkedServiceName": {
            "referenceName": "<DB2 linked service name>",
            "type": "LinkedServiceReference"
        }
    }
}
```

If you were using a RelationalTable typed dataset, it's still supported as-is, but we suggest that you use the new one going forward.

Copy activity properties

For a full list of sections and properties available for defining activities, see the Pipelines article. This section provides a list of properties supported by the DB2 source.

DB2 as source

To copy data from DB2, the following properties are supported in the copy activity source section:

| Property | Description | Required |
|---|---|---|
| type | The type property of the copy activity source must be set to: Db2Source | Yes |
| query | Use a custom SQL query to read data. For example: `"query": "SELECT * FROM \"DB2ADMIN\".\"Customers\""`. | No (if "tableName", or "schema" and "table", in the dataset are specified) |

Example:

```json
"activities":[
    {
        "name": "CopyFromDB2",
        "type": "Copy",
        "inputs": [
            {
                "referenceName": "<DB2 input dataset name>",
                "type": "DatasetReference"
            }
        ],
        "outputs": [
            {
                "referenceName": "<output dataset name>",
                "type": "DatasetReference"
            }
        ],
        "typeProperties": {
            "source": {
                "type": "Db2Source",
                "query": "SELECT * FROM \"DB2ADMIN\".\"Customers\""
            },
            "sink": {
                "type": "<sink type>"
            }
        }
    }
]
```

If you were using a RelationalSource typed source, it's still supported as-is, but we suggest that you use the new one going forward.
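The query in the copy activity example wraps DB2ADMIN and Customers in double quotes because DB2 folds ordinary (unquoted) identifiers to uppercase, so a mixed-case name such as Customers must be written as a delimited identifier. Inside pipeline JSON, each of those quotes then has to be escaped as \". As a minimal sketch, assuming you generate the source section from Python, the hypothetical helpers below quote each name (doubling any embedded double quote, which is how a delimited identifier represents one) and let json.dumps handle the JSON escaping.

```python
import json


def quote_identifier(name: str) -> str:
    """Wrap a name as a DB2 delimited identifier, doubling embedded double quotes."""
    return '"' + name.replace('"', '""') + '"'


def select_all_query(schema: str, table: str) -> str:
    """Hypothetical helper: SELECT * from a schema-qualified table."""
    return f"SELECT * FROM {quote_identifier(schema)}.{quote_identifier(table)}"


source = {"type": "Db2Source", "query": select_all_query("DB2ADMIN", "Customers")}
# json.dumps escapes each double quote as \" in the serialized pipeline JSON.
print(json.dumps(source))
# {"type": "Db2Source", "query": "SELECT * FROM \"DB2ADMIN\".\"Customers\""}
```

Quoting every identifier, rather than only mixed-case ones, keeps the generated query's meaning independent of how DB2 would fold an unquoted name.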

Data type mapping for DB2

When copying data from DB2, the following mappings are used from DB2 data types to interim data types used internally within the service. See Schema and data type mappings to learn about how copy activity maps the source schema and data type to the sink.

| DB2 Database type | Interim service data type |
|---|---|
| BigInt | Int64 |
| Binary | Byte[] |
| Blob | Byte[] |
| Char | String |
| Clob | String |
| Date | DateTime |
| DB2DynArray | String |
| DbClob | String |
| Decimal | Decimal |
| DecimalFloat | Decimal |
| Double | Double |
| Float | Double |
| Graphic | String |
| Integer | Int32 |
| LongVarBinary | Byte[] |
| LongVarChar | String |
| LongVarGraphic | String |
| Numeric | Decimal |
| Real | Single |
| SmallInt | Int16 |
| Time | TimeSpan |
| Timestamp | DateTime |
| VarBinary | Byte[] |
| VarChar | String |
| VarGraphic | String |
| Xml | Byte[] |
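When planning sink columns, it can help to check the mapping programmatically; for example, Xml arrives as Byte[] rather than String. The sketch below is an assumption-laden helper, not a service API: it encodes the mapping table as a dict and looks names up case-insensitively, since DB2 tools often report type names in uppercase (VARCHAR, XML). It only knows the type names exactly as spelled in the table, so a name your catalog spells differently raises an error. The column list in the usage lines is hypothetical.

```python
DB2_TO_INTERIM_TYPE = {
    "BigInt": "Int64",
    "Binary": "Byte[]",
    "Blob": "Byte[]",
    "Char": "String",
    "Clob": "String",
    "Date": "DateTime",
    "DB2DynArray": "String",
    "DbClob": "String",
    "Decimal": "Decimal",
    "DecimalFloat": "Decimal",
    "Double": "Double",
    "Float": "Double",
    "Graphic": "String",
    "Integer": "Int32",
    "LongVarBinary": "Byte[]",
    "LongVarChar": "String",
    "LongVarGraphic": "String",
    "Numeric": "Decimal",
    "Real": "Single",
    "SmallInt": "Int16",
    "Time": "TimeSpan",
    "Timestamp": "DateTime",
    "VarBinary": "Byte[]",
    "VarChar": "String",
    "VarGraphic": "String",
    "Xml": "Byte[]",
}

# Case-insensitive index so "VARCHAR" and "VarChar" resolve the same way.
_BY_LOWER = {name.lower(): interim for name, interim in DB2_TO_INTERIM_TYPE.items()}


def interim_type(db2_type: str) -> str:
    """Return the interim type for a DB2 type name listed in the mapping table."""
    try:
        return _BY_LOWER[db2_type.strip().lower()]
    except KeyError:
        raise ValueError(f"{db2_type!r} isn't in the documented mapping") from None


# Hypothetical source columns: find the ones that arrive as binary data.
columns = {"ID": "INTEGER", "NAME": "VARCHAR", "DOC": "XML"}
binary_columns = [col for col, db2_type in columns.items() if interim_type(db2_type) == "Byte[]"]
print(binary_columns)  # ['DOC']
```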

Lookup activity properties

To learn details about the properties, see Lookup activity.

Related content

For a list of data stores supported as sources and sinks by the copy activity, see supported data stores.