How to Get the Most out of Windows Azure Tables

Introduction

Windows Azure Storage is a scalable and durable cloud storage system in which applications can store data and access it from anywhere and at any time. Windows Azure Storage provides a rich set of data abstractions:

- Windows Azure Blob – provides storage for large data items such as files and lets you associate metadata with them.

- Windows Azure Drives – provides a durable NTFS volume for applications running in the Windows Azure cloud.

- Windows Azure Table – provides structured storage for maintaining service state.

- Windows Azure Queue – provides asynchronous work dispatch to enable service communication.

This post concentrates on Windows Azure Table, which supports massively scalable tables in the cloud. A table can contain billions of entities and terabytes of data, and the system scales out automatically to meet the table’s traffic needs. However, the scale you can achieve depends on the schema you choose and on the application’s access patterns. One of the goals of this post is to cover best practices, tips to follow, and pitfalls to avoid so that your application gets the most out of Table storage.

Table Data Model

For those who are new to Windows Azure Table, we start with a quick description of the data model. Since it is a non-relational storage system, a few concepts differ from those of a conventional database system.

To store data in Windows Azure Storage, you first need to get an account by signing up with your Live ID. Once you have completed registration, you can create storage and hosted services. The storage service creation process requests a storage account name, and this name becomes part of the host name you use to access Windows Azure Storage. The host name for accessing Windows Azure Table is <accountName>.table.core.windows.net.

While creating the account you also get to choose the geo location in which the data will be stored. We recommend that you collocate it with your hosted services. This is important for a couple of reasons – 1) applications will have fast network access to your data, and 2) the bandwidth usage in the same geo location is not charged.

Once you have created a storage service account, you receive two 512-bit secret keys called the primary and secondary access keys. Either of these secret keys can be used to authenticate requests to the storage system by creating an HMAC SHA256 signature for the request. The signature is passed with each request to authenticate it. The reason for having two access keys is that it lets you regenerate keys by rotating between the primary and secondary access keys in your existing live applications.

Using this storage account, you can create tables that store structured data. A Windows Azure table is analogous to a table in a conventional database system in that it is a container for storing structured data. An important difference is that it does not have a schema associated with it. If an application requires a fixed schema, the application has to enforce it at the application layer. A table is scoped by the storage account, and a single account can have multiple tables.
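As a rough illustration of the signing step, the sketch below computes an HMAC SHA256 over a string and encodes the result in Base64. It assumes the access key is handed to you as a Base64 string. The class name `RequestSigner` and the contents of `stringToSign` are placeholders: the exact string the service expects you to sign is defined in the authentication documentation and is not reproduced here.

```csharp
using System;
using System.Security.Cryptography;
using System.Text;

// Hypothetical helper; not part of the StorageClient library.
public static class RequestSigner
{
    // base64AccountKey: the primary or secondary access key (assumed Base64).
    // stringToSign: placeholder for the canonical request string.
    public static string Sign(string base64AccountKey, string stringToSign)
    {
        byte[] key = Convert.FromBase64String(base64AccountKey);
        using (var hmac = new HMACSHA256(key))
        {
            byte[] hash = hmac.ComputeHash(Encoding.UTF8.GetBytes(stringToSign));
            // The signature travels with the request as Base64 text.
            return Convert.ToBase64String(hash);
        }
    }
}
```

Because either key produces a valid signature, an application can switch to the secondary key while the primary is being regenerated.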

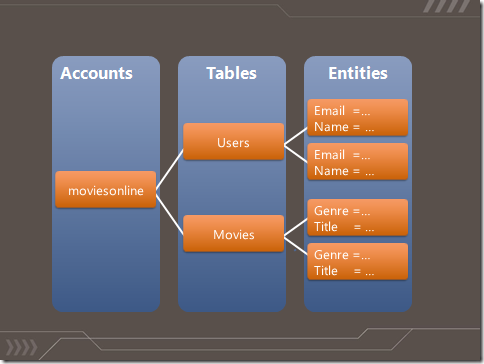

The basic data item stored in a table is called an entity. An entity is a collection of properties, which are name-value pairs. Each entity has 3 fixed properties called PartitionKey, RowKey and Timestamp. In addition to these, a user can store up to 252 additional properties in an entity. If we map this to concepts in a conventional database system, an entity is analogous to a row and a property is analogous to a column. Figure 1 shows these concepts, and more details can be found in our documentation “Understanding the Table Service Data Model”.

Figure 1 Table Storage Concepts

Every entity has 3 fixed properties:

- PartitionKey – The first key property of every table. The system uses this key to automatically distribute the table’s entities over many storage nodes.

- RowKey – A second key property for the table. This is the unique ID of the entity within the partition it belongs to. The PartitionKey combined with the RowKey uniquely identifies an entity in a table. The combination also defines the single sort order that is provided today i.e. all entities are sorted (in ascending order) by (PartitionKey, RowKey).

- Timestamp – Every entity has a version maintained by the system which is used for optimistic concurrency. Update and Delete requests by default send an ETag using the If-Match condition and the operation will fail if the timestamp sent in the If-Match header differs from the Timestamp property value on the server.

The PartitionKey and RowKey together form the clustered index for the table and by definition of a clustered index, results are sorted by <PartitionKey, RowKey>. The sort order is ascending.

Operations on Table

The following operations are supported on tables:

- Create a table or entity

- Retrieve a table or entity, with filters

- Update an entity

- Delete a table or entity

- Entity Group Transactions - These are transactions across entities in the same partition in a single table

Note: We currently do not support Upsert (Insert an entity or Update it if it already exists). We recommend that an application issue an update/insert first depending on what has the highest probability to succeed in the scenario and handle an exception (Conflict or ResourceNotFound) appropriately. Supporting Upsert is in our feature request list.

For more details on each of the operations, please refer to the MSDN documentation. Windows Azure Table uses WCF Data Services to implement the OData protocol. The wire protocol is ATOM-Pub. We also provide a StorageClient library in the Windows Azure SDK that provides some convenience in handling continuation tokens for queries (See “Continuation Tokens” below) and retries for operations.

The schema used for a table is defined as a .NET class with the additional DataServiceKey attribute, which informs WCF Data Services that the key is <PartitionKey, RowKey>. Also note that all public properties are sent over the wire as properties of the entity and stored in the table.

[DataServiceKey("PartitionKey", "RowKey")]

public class Movie {

// Parameterless constructor, needed when query results are materialized

public Movie() { }

// Convenience constructor used by the snippets in this post

public Movie(string category, string title) { PartitionKey = category; RowKey = title; }

/// Movie Category is the partition key

public string PartitionKey { get; set; }

/// Movie Title is the row key

public string RowKey { get; set; }

public DateTime Timestamp { get; set; }

public int ReleaseYear { get; set; }

public double Rating { get; set; }

public string Language { get; set; }

public bool Favorite { get; set; }

}

An example wire protocol (ATOM Pub) for inserting an entity with the above “Movie” definition is:

<?xml version="1.0" encoding="utf-8" standalone="yes"?>

<entry xmlns:d="http://schemas.microsoft.com/ado/2007/08/dataservices" xmlns:m="http://schemas.microsoft.com/ado/2007/08/dataservices/metadata" xmlns="http://www.w3.org/2005/Atom">

<title />

<author>

<name />

</author>

<updated>2010-10-16T15:48:53.0011614Z</updated>

<id />

<content type="application/xml">

<m:properties>

<d:Favorite m:type="Edm.Boolean">false</d:Favorite>

<d:Language>English</d:Language>

<d:PartitionKey>Action</d:PartitionKey>

<d:Rating m:type="Edm.Double">4.5</d:Rating>

<d:ReleaseYear m:type="Edm.Int32">2010</d:ReleaseYear>

<d:RowKey>Cop Out</d:RowKey>

<d:Timestamp m:type="Edm.DateTime">0001-01-01T00:00:00</d:Timestamp>

</m:properties>

</content>

</entry>

Creating Tables and Inserting/Updating Entities

Below is a snippet of C# code that illustrates how to create a table and insert and update entities.

// Connection string is of the format:

// "DefaultEndpointsProtocol=http;AccountName=myaccount;AccountKey=mykey"

CloudStorageAccount account = CloudStorageAccount.Parse(cxnString);

CloudTableClient tableClient = account.CreateCloudTableClient();

// Create Movie Table

string tableName = "Movies";

tableClient.CreateTableIfNotExist(tableName);

TableServiceContext context = tableClient.GetDataServiceContext();

// Add movie object to the context

context.AddObject(tableName, new Movie("Action", "White Water Rapids Survival"));

// The object is not sent to the Table service until SaveChanges is called.

// SaveChangesWithRetries wraps SaveChanges but, as the name suggests, it also provides retries.

context.SaveChangesWithRetries();

// We should use a new DataServiceContext for this operation,

// but for brevity we skip this best practice in the code snippet.

// Query for action movies that are rated > 4

var q = (from movie in context.CreateQuery<Movie>(tableName)

where movie.PartitionKey == "Action" && movie.Rating > 4.0

select movie).AsTableServiceQuery<Movie>();

// Make each movie that is returned in the result set my favorite.

// Using the AsTableServiceQuery extension above means that the iteration below

// handles continuation tokens, since this is not a single point query.

// See the Queries section for more details on query efficiency and continuation tokens.

foreach (Movie movieToUpdate in q)

{

movieToUpdate.Favorite = true;

// This marks the entity as updated in the context; no request is sent until SaveChanges is called.

// The update is issued with an optimistic concurrency check. With the above query,

// the context tracks this entity with the associated ETag value. The update

// sets the If-Match header so that the entity is updated only if the ETag

// matches the entity representation on the server.

context.UpdateObject(movieToUpdate);

}

// The batch SaveChangesOptions ensures atomic transaction for all updates

context.SaveChangesWithRetries(SaveChangesOptions.Batch);

Options for saving changes

SaveChangesOptions has the following options:

- None - This default option results in sending each pending CUD operation as an individual request to the service. If a single operation fails, an exception is thrown and remaining pending operations are not sent to the service.

- Batch - This option results in sending all CUD operations currently tracked by the context as a single atomic transaction to the Windows Azure Table service (see Entity Group Transaction for more details and rules for a batch).

- ContinueOnError - Results in sending each pending CUD operation as individual requests to the service. If an operation fails, the context records it and remaining operations continue to be sent to the service.

- ReplaceOnUpdate - By default, all updates are sent as “Merge” requests, i.e. if an update operation for an entity does not send a property, the stored value of that property is retained, since the update replaces only those property values that are sent in the request. ReplaceOnUpdate replaces the entire entity, so any properties missing from the update are no longer stored. Hence, ReplaceOnUpdate can be used to remove properties from an entity.
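To make the difference between the default merge behavior and ReplaceOnUpdate concrete, here is a minimal in-memory sketch. It models an entity as a dictionary of property names to values. The `UpdateSemantics` class and its `Merge` and `Replace` methods are hypothetical names used only for illustration and are not part of the StorageClient library or the service.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical in-memory model of the two update modes; not a library API.
public static class UpdateSemantics
{
    // Merge: properties sent in the request overwrite stored values,
    // properties that are not sent keep their stored values.
    public static Dictionary<string, object> Merge(
        Dictionary<string, object> stored, Dictionary<string, object> sent)
    {
        var result = new Dictionary<string, object>(stored);
        foreach (var pair in sent)
        {
            result[pair.Key] = pair.Value;
        }
        return result;
    }

    // Replace: the stored entity becomes exactly what was sent, so any
    // property missing from the request is dropped.
    public static Dictionary<string, object> Replace(
        Dictionary<string, object> stored, Dictionary<string, object> sent)
    {
        return new Dictionary<string, object>(sent);
    }
}
```

With a stored entity holding Rating and Language and a request that sends only Rating, `Merge` keeps Language while `Replace` drops it.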

Some of these options can be combined for a given SaveChanges. For example:

SaveChangesWithRetries(SaveChangesOptions.ContinueOnError | SaveChangesOptions.ReplaceOnUpdate) implies that each pending CUD operation is sent as an individual request to the server and all update operations result in the entity being replaced on the server end rather than merged.

However, some combinations are invalid. For example: SaveChangesWithRetries(SaveChangesOptions.ContinueOnError | SaveChangesOptions.Batch)

Note: SaveChangesWithRetries is provided in the StorageClient library as a wrapper over the WCF Data Services SaveChanges method. As the name suggests, SaveChangesWithRetries handles retries on failures.

Entity Tracking

The above example shows an update of an entity that was first retrieved via a query. Entities need to be tracked by the context before updates or deletes can be issued, so we retrieved the entity to let the context track it (it does this by default). The purpose of tracking is to send the ETag condition using the If-Match header when an update or delete is issued. The operation succeeds only if the ETag that the context tracks for an entity matches the one on the server. The ETag for an entity is its Timestamp, as explained above, and the service changes it with every update.

But what if I want to skip the Etag check i.e. unconditionally update/delete the entity? Or what if I know what the Etag is and I want to avoid issuing a query before I update or delete the entity? WCF Data Service API provides an AttachTo method for this purpose. This method takes the table name, entity and Etag to track it with and the context starts tracking the entity which will then allow update and delete to be issued on the entity. If “*” is used for the Etag value during AttachTo, an unconditional update request is sent to the service. A common mistake to be aware of here is that “AttachTo” can throw an exception if the entity is already being tracked by the context. Here is a snippet of code that shows an unconditional delete:

Movie existingEntity = new Movie("Action", "Cop Out");

// AttachTo can throw an exception if the entity is already being tracked.

// We will need to handle exceptions, which is excluded here for brevity.

context.AttachTo(tableName, existingEntity, "*");

context.DeleteObject(existingEntity);

context.SaveChangesWithRetries();

Null Values

The Windows Azure Table service ignores properties that have null values passed in for them, and they are not saved into the table stored in the Azure Table service. For example, if Language is null when using the above entity definition, OData protocol marks this property as having “null” value. The wire protocol (OData’s ATOM Pub) will have:

<d:Language m:null="true"/>

The Azure Table service ignores this “Language” property when it comes in, and the entity representation in Windows Azure Tables will not have this property. If the SaveChangesOptions value is ReplaceOnUpdate, the entity is replaced and, since all null values are ignored, the entity saved on the server side will not contain this property.

Queries

Windows Azure Table supports queries using various operators over key and non-key properties (see MSDN documentation for more details). The query results are always sorted by PartitionKey, RowKey, since Windows Azure Table supports just one key, which is (PartitionKey, RowKey), and does not currently support secondary indexes. Queries to Windows Azure Table can be categorized into the following:

- Single Entity (a.k.a. Point Queries): A point query retrieves a single entity by specifying a single PartitionKey and RowKey using equality predicates. Example:

var q = (from movie in context.CreateQuery<Movie>(tableName)

where movie.PartitionKey == "Action" && movie.RowKey == "Terminator"

select movie);

NOTE: Such queries by default throw “DataServiceQueryException” with error code “ResourceNotFound” when the entity does not exist.

- Range Queries: A range query scans a range of rows. Range queries can be further categorized into the following:

- Row Range Scan: Query results in scanning a range of rows within a single partition. Example:

var q = (from movie in context.CreateQuery<Movie>(tableName)

where movie.PartitionKey == "Action"

&& movie.RowKey.CompareTo("Alien") >= 0

&& movie.RowKey.CompareTo("Terminator") <= 0

&& movie.Favorite

select movie);

- Partition Range Scan: Query results in scanning a range of rows that may span across several partitions. Example:

var q = (from movie in context.CreateQuery<Movie>(tableName)

where movie.PartitionKey.CompareTo("Action") >= 0

&& movie.PartitionKey.CompareTo("War") < 0

&& movie.Favorite

select movie);

- Full Table Scan: Query results in scanning the entire table i.e. all rows in all partitions in a table. Four examples:

var q = (from movie in context.CreateQuery<Movie>(tableName)

select movie);

var q = (from movie in context.CreateQuery<Movie>(tableName)

where movie.PartitionKey.CompareTo("Action") != 0

select movie);

var q = (from movie in context.CreateQuery<Movie>(tableName)

where movie.Favorite

select movie);

var q = (from movie in context.CreateQuery<Movie>(tableName)

where movie.RowKey.CompareTo("Sh") >= 0

&& movie.RowKey.CompareTo("Si") < 0

select movie);

From a performance perspective, point queries are the best since they are clustered index lookups. The performance of range queries depends on the number of rows that need to be iterated through and not just on the size of the final result set.

Here are some queries, listed along with a description of their efficiency:

- PartitionKey == “SciFi” and RowKey == “Star Wars”: Single entity lookup which is the most efficient query

- PartitionKey == “SciFi” and “Sphere” ≤ RowKey ≤ “Star Wars”: Scans entities in a single partition; efficiency depends on the number of entities within this RowKey range that need to be iterated over.

- “Action” ≤ PartitionKey ≤ “Thriller”: Scans entities across multiple partitions; efficiency depends on the number of entities across these partitions that need to be iterated over.

- PartitionKey == “Action” || PartitionKey == “Thriller”: The current implementation of the LINQ OR predicate is not optimized to scan just the two partitions and results in a full table scan. We recommend executing the two queries in parallel and merging the results on the client.

- “Cars” ≤ RowKey ≤ “Star Wars”: Scans the entire table since the PartitionKey has not been specified.

We mentioned earlier that query results are always returned sorted by combination of PartitionKey, RowKey. This sort characteristic along with the fact that there is no native support for prefix operations brings out certain interesting cases for queries which we shall try to cover via examples.

Example 1

Retrieve all action movies whose name begins with “Sh”

var q = (from movie in context.CreateQuery<Movie>(tableName)

where movie.PartitionKey == "Action" && movie.RowKey.CompareTo("Sh") >= 0

&& movie.RowKey.CompareTo("Si") < 0

select movie);

In this example we use “Sh” as the inclusive lower limit and “Si” as the exclusive upper limit. This gives us all action movies whose name starts with “Sh”. This query is efficient, since it goes to a single partition and scans only the range of rows from “Sh” up to “Si”.
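The upper limit “Si” is just the prefix “Sh” with its last character incremented, and that step can be automated. The following is a minimal sketch, assuming keys are compared ordinally (character by character). `PrefixRange`, `UpperBound` and `InRange` are hypothetical names introduced here and are not part of any library.

```csharp
using System;

// Hypothetical helper for emulating a prefix match with a range filter.
public static class PrefixRange
{
    // Returns an exclusive upper bound for all strings that start with the
    // prefix, built by incrementing the prefix's last character.
    public static string UpperBound(string prefix)
    {
        if (string.IsNullOrEmpty(prefix))
            throw new ArgumentException("Prefix must be non-empty.", "prefix");
        char last = prefix[prefix.Length - 1];
        if (last == char.MaxValue)
            throw new ArgumentException("Last character cannot be incremented.", "prefix");
        return prefix.Substring(0, prefix.Length - 1) + (char)(last + 1);
    }

    // Client-side equivalent of the filter
    // RowKey >= prefix && RowKey < UpperBound(prefix), using ordinal comparison.
    public static bool InRange(string rowKey, string prefix)
    {
        return string.CompareOrdinal(rowKey, prefix) >= 0
            && string.CompareOrdinal(rowKey, UpperBound(prefix)) < 0;
    }
}
```

The value returned by `UpperBound` can be passed to the second `CompareTo` call in the query above in place of the hard-coded “Si”.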

Example 2

The table “RecentMovies” is used to store the latest movies, and the purpose is to display the movies so that the most recently released movie comes first. The PartitionKey is the release date in the format YYYY-MM-DD.

Let us take a small sample set of release dates sorted by ascending order:

| PartitionKey = ReleaseDate | Movie Title |
| --- | --- |
| 2009-01-20 | Movie1 |
| 2009-05-01 | Movie2 |
| 2010-01-31 | Movie3 |
| 2010-06-20 | Movie4 |
| 2010-10-23 | Movie5 |

Table 1 Rows sorted by ASC (Released Date)

If the release date in Table 1 were used as the PartitionKey as is, the result set would come back in ascending order and would not meet the intended query, i.e. Desc (Released Date).

To meet the query requirement from this table, we need a PartitionKey that sorts in the order in which we want results returned. One option is to use a PartitionKey that represents the movie’s release date subtracted from a max value. This restructures the partition key as YYYY’-MM’-DD’ where:

YYYY’ = MaxYear-YYYY; MaxYear = 9999

MM’ = MaxMonth-MM; MaxMonth = 12

DD’ = MaxDate-DD; MaxDate = 31

| PartitionKey = Formatted(ReleaseDate) | Movie Title |
| --- | --- |
| 7989-02-08 | Movie5 |
| 7989-06-11 | Movie4 |
| 7989-11-00 | Movie3 |
| 7990-07-30 | Movie2 |
| 7990-11-11 | Movie1 |

Table 2 Rows sorted correctly to meet query need – Sorted By Formatted (Release Date)

An interesting thing to note here is we use the month in the format of MM and not just M (similar for day) i.e. they are fixed length. This is again driven by the fact that rows are lexically sorted. Let us assume that we did not use fixed length – Table 3 shows the sort order which will be incorrect because lexically “7989-11-0” < “7989-2-8”.

| PartitionKey = Formatted(ReleaseDate) | Movie Title |
| --- | --- |
| 7989-11-0 | Movie3 |
| 7989-2-8 | Movie5 |
| 7989-6-11 | Movie4 |
| 7990-11-11 | Movie1 |
| 7990-7-30 | Movie2 |

Table 3 Rows sorted Incorrectly when Fixed length is not used for Date components
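The key transformation from Example 2 can be sketched as a small helper. This is an illustrative sketch rather than library code: `ReleaseDateKey` and `ToDescendingKey` are names made up for this example, and the method simply applies the MaxYear, MaxMonth and MaxDate subtraction with zero padding so that every component has a fixed length.

```csharp
using System;
using System.Globalization;

// Hypothetical helper that builds the descending-order PartitionKey.
public static class ReleaseDateKey
{
    // Produces (9999 - year)-(12 - month)-(31 - day), zero padded so that
    // lexical order of the keys matches newest-first order of the dates.
    public static string ToDescendingKey(DateTime releaseDate)
    {
        int year = 9999 - releaseDate.Year;
        int month = 12 - releaseDate.Month;
        int day = 31 - releaseDate.Day;
        return year.ToString("D4", CultureInfo.InvariantCulture) + "-"
            + month.ToString("D2", CultureInfo.InvariantCulture) + "-"
            + day.ToString("D2", CultureInfo.InvariantCulture);
    }
}
```

For the release date 2010-10-23 this yields 7989-02-08, which matches the first row of Table 2.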

Large result sets and Continuation Tokens

Range queries can require multiple round trips to get the entire result set because the service may return partial results with a continuation token and expect the client to resend the query with continuation token to get the next set of results. The response with a continuation includes a continuation token as custom headers. For a query over your entities, the custom headers representing a continuation token are:

- x-ms-continuation-NextPartitionKey

- x-ms-continuation-NextRowKey

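The client should pass both these values back into the next query as HTTP query options, with the rest of the query remaining the same. The client then gets the next set of entities starting at the continuation token. The next query has the following form, where the NextPartitionKey and NextRowKey query options carry the values of the two headers:

http://<accountName>.table.core.windows.net/<TableName>?<original query options>&NextPartitionKey=<value>&NextRowKey=<value>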

Note, when using AsTableServiceQuery(), the continuation tokens are hidden and are automatically passed back to the service to continue the query by the storage client library.

Continuation tokens can be returned for multiple reasons:

- A response can contain at most 1000 entities. If your query results in more than that, a continuation token is returned.

- When $top (Take(N)) is used, a continuation token is returned that allows an application to implement pagination.

- If query execution does not complete within 5 seconds, partial results are returned with continuation token.

- A Continuation token may also be returned when a partition boundary is crossed. A partition boundary is the logical barrier between two partitions in the storage service. If the next entity to return is in a different partition which is served by a different server, a continuation token is returned with partial results. It is expected that the application will resend the request with the continuation token to get the subsequent entities.

An application should expect continuation tokens and should keep reissuing the request with each received continuation token until it has the entire result set. It is normal to receive just a continuation token without any results. This can happen in the following cases:

- The filter does not match any entities in the partition range served by a server.

- The service can maintain empty Range Partitions. If the query spans more than one partition and the start of the query lands on one of these empty Range Partitions, a continuation token pointing to the next Range Partition is returned. These empty Range Partitions can exist because all of the entities of an existing Range Partition were deleted, or because the Range Partition was created to spread future load on the table across more Range Partitions. This does not occur often, but it can occur as the service load balances itself, which is why your application must handle continuation tokens appropriately. In other words, when the query filter does not use a single PartitionKey value with an equality predicate, the request can be served by a server that was assigned a partition range valid for the query but holds no data in it.
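For applications that do not use AsTableServiceQuery(), the loop they need to write can be sketched as follows. This is a simplified model, not the StorageClient implementation: the continuation token is reduced to a single string (the real token is the NextPartitionKey and NextRowKey pair), and `Page<T>`, `ContinuationLoop` and the `fetchPage` callback are hypothetical names. The point it illustrates is that the loop ends only when no token is returned, never when a page happens to be empty.

```csharp
using System;
using System.Collections.Generic;

// One page of results plus the token for the next page (null when done).
public class Page<T>
{
    public List<T> Items = new List<T>();
    public string NextToken;
}

public static class ContinuationLoop
{
    // fetchPage is a hypothetical callback that reissues the same query with
    // the given continuation token (null for the first request).
    public static List<T> ReadAll<T>(Func<string, Page<T>> fetchPage)
    {
        var results = new List<T>();
        string token = null;
        do
        {
            Page<T> page = fetchPage(token);
            // A page may be empty and still carry a token, so keep going.
            results.AddRange(page.Items);
            token = page.NextToken;
        } while (token != null);
        return results;
    }
}
```

A loop that stopped on the first empty page would silently drop every entity stored after an empty range partition.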

Best Practices and Tips for Programming Windows Azure Tables

The following are best practices and tips for programming Windows Azure Tables:

- DataServiceContext by default tracks entities that are added via AddObject or AttachTo, or returned via query results. This tracking is not thread safe, so it is highly recommended that a new DataServiceContext be used for every logical operation. This also reduces the overhead of having the context track millions of entities that may be queried or inserted over the lifecycle of an application.

Note: A logical operation may include a query for the entity followed by an update/delete operation for the queried entity. This requires two requests to the service, but it is considered one logical operation.

- Expect an InvalidOperationException (with message “Context is already tracking a different entity with the same resource Uri”) when AttachTo is called on an entity that is already being tracked, since an entity can be added for tracking only once. See this post for more details.

- A DataServiceQueryException with error code “ResourceNotFound” is thrown by default when a point query is issued for an entity that does not exist (i.e. a query of the form where PartitionKey == “foo” && RowKey == “bar”). This exception can be avoided by setting IgnoreResourceNotFoundException to ignore exceptions when an entity lookup fails. Instead, one can rely on an empty result set to indicate that the addressed entity does not exist.

- When using the same DataServiceContext instance across all inserts/updates/deletes, if there is a failure in SaveChanges, the entity that failed continues to be tracked in the DataServiceContext and is retried the next time SaveChanges is invoked. Example:

try {

// Add movie object that already exists

context.AddObject(tableName, new Movie("Action", "Cop Out"));

// Operation fails with “Conflict” error

context.SaveChangesWithRetries();

} catch (Exception e) {

// excluded exception handling for brevity...

}

context.AddObject(tableName, new Movie("Action", "Law Abiding Citizen"));

// Operation fails with “Conflict” error again since "Cop Out" is issued again

context.SaveChangesWithRetries();

The above is an example where, if your application dealt with the conflict error of “Cop Out” in the try/catch, you would want to use a new DataServiceContext for the next SaveChangesWithRetries to have a clean set of changes to apply.

- When using the StorageClient library to insert entities with SaveChangesWithRetries(), the insert is retried if there is a failure or timeout. If the operation succeeds on the Azure Table service but the client issuing the request times out due to a network error, the client retries the request. Since the entity was already inserted, this can result in a Conflict error being sent back. Therefore, SaveChangesWithRetries() can return a Conflict for the insert even though it succeeded. Similarly, if the client application deletes an entity with SaveChangesWithRetries() and the operation succeeds at the Azure Table service but a retry occurs from the client, the client may receive a ResourceNotFound. Therefore, when an application uses SaveChangesWithRetries() or does its own retries after a timeout or intermittent network error, it needs to expect and handle these potentially confusing errors. Applications may have their own specific ways to handle such errors. For example, some applications may ignore “Conflict” and “ResourceNotFound” errors, since nothing else needs to be done as long as the entity was inserted or deleted. However, certain applications may want to update the entity rather than insert on “Conflict” errors. For such applications, it may be beneficial to add a transaction-id property to the entity. The application can then retrieve the entity on Conflict and examine the transaction-id; if it matches, the prior SaveChanges was successful.

- DataServiceContext has a MergeOption property which is used to control how the context handles the tracked entities.

Example: context.MergeOption = MergeOption.NoTracking;

The possible values are:

- AppendOnly - When MergeOption on the context is set to AppendOnly and the entity is already tracked (by any previous retrieve or add operation with the same DataServiceContext object), then retrieving the entity again from the server will not update the already tracked entity in the context. Since the ETag is not updated for the tracked entity, a subsequent update may result in a PreCondition failure if there is an ETag mismatch.

- OverwriteChanges - DataServiceContext always loads the entity instance from the server hence keeping it up-to-date and overwriting the previously tracked entity. Any changes done to properties are overwritten with the values returned from the server.

- PreserveChanges - When an entity instance exists in the DataServiceContext, the properties are not overwritten with those returned from the server. So any property changes made to objects currently tracked in the DataServiceContext are preserved but the ETag is updated hence making it a good option when recovering from optimistic concurrency errors.

- NoTracking - Entity instances are not tracked by the DataServiceContext. To update an entity on a context that is not tracking will require the use of AttachTo as shown in the code snippet above. Tracking is mandatory for Insert, Update and Delete operations. Since not tracking entities in the context reduces client overhead (reduces memory footprint), this is a good option to use when the scenario is to query entities but there is no intention to update/delete the entities or insert new entities using this context.

Partitions

Our previous post on “Scalability Targets” described why the concept of a “Partition” is critical to scalability. Our system load balances partitions to meet demands of the traffic but a given partition is served only by a single server and hence a single partition has some limits. In Windows Azure table, the PartitionKey property is used as the partition key. All entities with same PartitionKey value are clustered together and they are served from a single server node. This allows the user to control entity locality by setting the PartitionKey values, and perform Entity Group Transactions over entities in that same partition. A single partition has some limitations and application owners should understand how their entities are accessed and workload requirements in order to select a PartitionKey value that allows the application to scale. Applications need to understand their workload to a given partition, and stress with the simulated peak workload during testing, to make sure they will get the desired results.

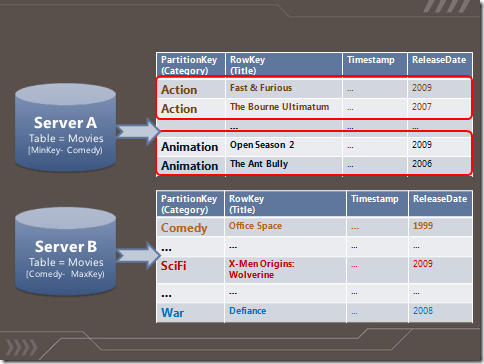

Figure 2 Concept of Partitioning

Figure 2 shows an example of a movie listing stored in a Windows Azure Table. The category is used as the PartitionKey. We see in this example that entities are sorted by <PartitionKey, RowKey> and that all entities with same PartitionKey values are stored together and served by a single server. All movies that are categorized as “Action” belong to a single partition. In addition each server has a range of partitions that it serves – Server A serves all entities with PartitionKey up to but not including “Comedy” and Server B serves all entities starting from “Comedy”.

Key Selection

There are well-documented steps for key selection in a conventional database system. However, when dealing with a NoSQL system like Windows Azure Table, the procedure may be different and challenging due to the absence of features such as secondary indexes, relationships, joins, order by, and group by. Choosing a key is important for an application to scale well, which is why we have dedicated an entire section to documenting some procedures that may help. In this section we discuss the impact some options can have and walk through some scenarios with examples.

The things to consider while selecting keys are:

- Entity Group Transactions

- Scalability

- Efficient queries

Entity Group Transactions

An Entity Group Transaction provides an atomic transaction over a group of entities in a single table that have the same PartitionKey value. Here are the rules for Entity Group Transactions:

- A single batch transaction can have up to 100 entities.

- Request size for a batch transaction should not exceed 4MB in size.

- In a single batch transaction, an entity cannot repeat itself (i.e. the RowKey property should be different).

In many applications, multiple entities have to be inserted, updated, or deleted for a single logical request in an atomic transaction. For such scenarios, it is beneficial to use the same PartitionKey value across the group of entities that need to belong to an atomic transaction. Let us walk through an example where we consider Entity Group Transactions in the key selection process. The scenario is a video rental application which should track video rentals and also maintain a total count of rentals per user. In a conventional database system, this is usually maintained in two tables: Rentals and Members. The Rentals table stores the rental information of currently checked out movies, and the Members table maintains the number of movies rented by each member. A stored procedure would then implement the transaction that inserts the rental information into the Rentals table and updates the rental count in the Members table. In Windows Azure Table, Entity Group Transactions, along with the fact that an Azure table is schema-less, can be used to store the entities that belong to Rentals and Members in a single table. The PartitionKey is the MemberId and the RowKey depends on the type of entity:

- “Member” - stores the member’s rental count and other personal member information

- “Rental_<Movie Name>” – stores information about a currently checked out movie for that member.

Now since the PartitionKey is the same for entities representing member and rental information and they are stored in a single table, Entity Group Transaction can be used to update the member information and keep the running count of number of movies that a member rents in a single request. Since Entity Group Transaction provides atomic transaction for entities in the same partition (the entities have the same PartitionKey value) in a single table, the application does not have to handle the scenario of one command succeeding while the other fails.
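The rules and the rental scenario above can be sketched in a few lines. This is a minimal, client-side illustration only: the entity dictionaries, the `validate_batch` and `rental_batch` helpers, and the sample values are hypothetical, and the size check is a rough JSON estimate rather than the real wire format.

```python
import json

MAX_BATCH_ENTITIES = 100            # rule: up to 100 entities per batch
MAX_BATCH_BYTES = 4 * 1024 * 1024   # rule: request size up to 4MB


def validate_batch(entities):
    """Raise ValueError if the batch breaks one of the rules listed above."""
    if not entities:
        raise ValueError("batch is empty")
    if len(entities) > MAX_BATCH_ENTITIES:
        raise ValueError("a batch can hold at most 100 entities")
    if len({e["PartitionKey"] for e in entities}) != 1:
        raise ValueError("all entities must share one PartitionKey")
    row_keys = [e["RowKey"] for e in entities]
    if len(set(row_keys)) != len(row_keys):
        raise ValueError("an entity may appear only once per batch")
    # Rough estimate only; the actual request encoding differs.
    if len(json.dumps(entities).encode("utf-8")) > MAX_BATCH_BYTES:
        raise ValueError("batch payload exceeds 4MB")


def rental_batch(member_id, new_rental_count, movie_name):
    """Build the two entities that change when a member rents a movie."""
    return [
        {"PartitionKey": member_id, "RowKey": "Member",
         "RentalCount": new_rental_count},
        {"PartitionKey": member_id, "RowKey": "Rental_" + movie_name},
    ]


batch = rental_batch("member-0042", 3, "MovieA")
validate_batch(batch)  # passes: one partition, distinct RowKeys
```

Because both entities share the MemberId as PartitionKey, they qualify for a single Entity Group Transaction; a batch mixing two members would be rejected by the check above.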

Scalability

As noted in our “Scalability Targets” post, a single partition can serve up to 500 entities per second, depending upon the workload. When applications have requirements that surpass that limit, they need to be designed so that requests are distributed across a range of partitions rather than a single partition. Hence, scale is important to consider while selecting keys. The choice ranges from (a) having a single partition by using the same PartitionKey value for all entities to (b) having a unique PartitionKey value for every entity (i.e. every entity is in its own partition). There are advantages and disadvantages to each choice. Let us first evaluate the two extreme choices:

Single PartitionKey Value

Advantages:

- Since all entities belong to a single partition, Entity Group Transactions (batch) can be used to insert/update/delete 100 entities at a time. Batching can considerably reduce costs, and the application can perform atomic operations across each batch of entities.

Disadvantages:

- Does not scale beyond the limits of that of a single server since a partition can be served only by a single server. This is not suitable for scenarios where large workloads are expected.

For queries, range row scans can be fast, depending upon the size of the range, and they will be processed by a single server.

New PartitionKey Value for Every Entity

Advantages:

- Scales very well since our system has the option to load balance several partitions – potentially every entity can be load balanced effectively (provided “Append only” pattern is avoided).

Disadvantages:

- A row range query is expensive since it will result in a full table scan.

- Entity Group Transaction (batch) is allowed only on the same partition in a single table - since all entities have unique PartitionKey value, batch requests cannot be issued.

For partition range queries, the partition range scans can be efficient, if the ranges are small, though more than one server may need to be visited to satisfy the query, and the query may require using continuation tokens to retrieve all of the results.

Both of the above choices are valid to have under different scenarios. Most applications have a variety of potential keys and you need to ensure that the partitioning you select is scalable. Here are some procedures that can be followed to test if the selected key allows your application to scale:

- Once a PartitionKey is selected, go over the access patterns for the data to see if this choice will result in partitions that become too hot to be served efficiently from a single server. One way to determine if this would result in a hot partition is to implement a Table partition stress test. For this test, create a sample table using your keys and then exert peak stress for your given workload on a single partition to ensure that the table partition can provide the desired throughput for your application. You may wish to leave some margin of error in order to anticipate future additional load your application may experience. This is a common best practice for scale testing your application.

NOTE: A single partition can serve up to 500 entities per second. However, this number depends on your access patterns. For this scalability target, the throughput limit is in terms of entities and not requests to the server. A single batch request (Entity Group Transaction) can contain up to 100 entities, so the number of batch requests a partition can handle per second is 500/(# of entities in batch).

- If the Table partition stress test shows that your scenario requires more than 500 entities per second from a single partition, then you should revisit your key choices to allow for more granularity. The Azure Table service throttles based on its current load. This means it is possible to run a stress test and achieve more than 500 entities per second (as seen in many of our example charts below) with little or no throttling; however, this may not always be the case, as the service may experience higher traffic volumes in the future that could result in throttling. Overall, it is considered best practice to avoid requiring more than 500 entities per second from an individual partition.

- If the Table partition stress test passes and you have not exceeded the recommended limits on each partition, then you are done. A test can be considered as successful if throttling is not experienced and the throughput was within the established limits. See “Timeouts and ServerBusy – Is it normal?” later in this post for more details.

- If the Table partition stress test does not pass, select a more fine-grained PartitionKey. This could be done either by choosing a different PartitionKey or by modifying the existing PartitionKey (for example, by concatenating it with the next key property). The purpose is to create more partitions so that no single partition becomes so hot that a large percentage of its requests are continuously throttled.
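The arithmetic behind the NOTE above can be written down directly. This is a back-of-the-envelope sketch, not a capacity guarantee: the function names are hypothetical, the 500 entities per second figure is the target quoted in this post, and the partition estimate assumes load is spread evenly across partitions.

```python
import math

PARTITION_TARGET_ENTITIES_PER_SEC = 500  # per-partition target quoted in this post


def max_batch_requests_per_sec(entities_per_batch):
    """Batch requests per second one partition can take within the target."""
    return PARTITION_TARGET_ENTITIES_PER_SEC / entities_per_batch


def min_partitions_needed(peak_entities_per_sec):
    """Fewest partitions that keep each one within the target (even load assumed)."""
    return math.ceil(peak_entities_per_sec / PARTITION_TARGET_ENTITIES_PER_SEC)


print(max_batch_requests_per_sec(100))  # 5.0
print(min_partitions_needed(1800))      # 4
```

An estimate like this is only a starting point for choosing keys; the stress test described above remains the way to confirm what a partition delivers for your access pattern.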

Let us now take an example to learn about how scale can influence our key selection process. Our example scenario consists of a distributed application consisting of various components (i.e. customer portal web roles, worker roles for data aggregation, web roles for reporting, etc.) and multiple instances of each role in a given deployment. Each component (role type) is assigned a unique name. Additionally, each instance of a given component has a unique instance id. We want to construct a logging system that allows a user to perform error and usage reporting over each instance and each component. If we were to build such a logging system for our application to record messages into a table, we have a couple of options when choosing an appropriate PartitionKey for our system:

- PartitionKey = Component Name: This seems like a good idea since an application that has many components will be using multiple partitions, one for each component. This allows the service to scale these partitions out when various components start to log heavily. However, if a single component logs a lot of messages and has many instances running, its partition will become hot and will eventually be limited by the throughput of a single partition (i.e. a single partition is limited by the scale of a single server). Though each component has a unique name, all instances of one component share the PartitionKey.

- PartitionKey = Component Name + Instance Id: Here the combination of component name and instance id allows us to scale better since each instance of a component is a single partition for logging. If the customer portal web role component has 100 instances, each instance will use its own value for PartitionKey. This allows the Windows Azure Table system to better load balance the partitions based on the traffic it sees.

- PartitionKey = ComponentName + Instance Id + Bucket Id: Let us assume that even option 2 does not scale well, since every instance of this component logs more than what a single partition can handle. Here, one option is to predetermine a number of buckets to distribute these messages into and then select these buckets randomly to insert the log statements. The number of buckets can be determined via estimating the high end of required throughput.
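The third option can be sketched as a small key builder. This is an illustration under assumptions: the `log_partition_key` helper and the `Bucket` naming and padding are hypothetical, and the `;` delimiter and `Instance0002` format simply follow the query examples in this post.

```python
import random

DELIMITER = ";"


def log_partition_key(component, instance_id, bucket_count, rng=random):
    """Build ComponentName;InstanceId;BucketId with a randomly chosen bucket.

    Zero padding keeps lexical order consistent with numeric order.
    """
    bucket = rng.randrange(bucket_count)
    return DELIMITER.join([
        component,
        "Instance%04d" % instance_id,
        "Bucket%03d" % bucket,
    ])


# Example: spread one instance's log writes over 8 partitions.
key = log_partition_key("ReportingRole", 2, bucket_count=8)
print(key)
```

The bucket count would come from the estimated peak throughput of one instance divided by what a single partition can serve.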

Note: The section titled “Queries” covers query efficiency in detail, but we wanted to quickly point out here that when using a PartitionKey made up of concatenated fields, you should choose an ordering based upon your dominant queries. In the third option above, you can efficiently query by lexical comparison for all entities with a given component name, a given component name and Instance Id, or a given component name, Instance Id and Bucket Id. However, if you were to choose PartitionKey = Bucket Id + ComponentName + Instance Id, you would be unable to efficiently query for all entities by Instance Id, since entities with that Instance Id would span multiple buckets and components. You would be forced to perform a full table scan over non-indexed fields, which would have an adverse effect on performance.

Here are some examples of queries when using ComponentName + Instance Id + BucketId:

- Query all entities that belong to component “ReportingRole”

var query = from entity in context.CreateQuery<Logs>(tableName)

where entity.PartitionKey.CompareTo("ReportingRole;") >= 0 && entity.PartitionKey.CompareTo("ReportingRole<") < 0 select entity;

- Query all entities that belong to component “ReportingRole” and Instance 2

var query = from entity in context.CreateQuery<Logs>(tableName)

where entity.PartitionKey.CompareTo("ReportingRole;Instance0002;") >= 0 && entity.PartitionKey.CompareTo("ReportingRole;Instance0002<") < 0 select entity;
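The bounds used in the two queries above follow one rule: the lower bound is the prefix plus the delimiter, and the upper bound is the prefix plus the character that sorts immediately after the delimiter. The sketch below shows that rule on an in-memory list; the helper names and sample keys are hypothetical, and plain string comparison stands in for the service's key ordering.

```python
def prefix_range(prefix, delimiter=";"):
    """Return (inclusive lower bound, exclusive upper bound) for a key prefix."""
    lower = prefix + delimiter
    upper = prefix + chr(ord(delimiter) + 1)  # ';' + 1 is '<'
    return lower, upper


def keys_with_prefix(keys, prefix, delimiter=";"):
    """Filter keys the same way the two range predicates above would."""
    lower, upper = prefix_range(prefix, delimiter)
    return [k for k in keys if lower <= k < upper]


keys = [
    "ReportingRole;Instance0001;Bucket000",
    "ReportingRole;Instance0002;Bucket001",
    "ReportingRoleV2;Instance0001;Bucket000",
    "WebPortal;Instance0001;Bucket000",
]
print(prefix_range("ReportingRole"))
print(keys_with_prefix(keys, "ReportingRole;Instance0002"))
```

Including the delimiter in the lower bound is what keeps a component such as the hypothetical "ReportingRoleV2" out of the results for "ReportingRole".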

To summarize, depending on estimated traffic, an application can be designed to use appropriate number of partitions which allows it to scale. This will be a good time to mention the “Append Only” pattern that one should avoid while designing the partitioning scheme.

Stay Away From “Append (or Prepend) Only” Pattern (if possible) For High Scale Access

Let us use an example to explain what an “Append Only” pattern is (and prepend only pattern differs in just the sort order). Assume that you have the following successive values for partition keys:

- 12:00:00

- 12:00:01

- 12:00:02

- 12:00:03

- 12:00:04

- 12:00:05

- 12:00:06

- 12:00:07

Then of course you know the next write will be 12:00:08, then 12:00:09, etc. Each of the above represents a unique entity and each entity is in its own partition. One may ask: since this follows our golden rule of scaling by having entities in different partitions, why should we avoid it for high scale scenarios?

For query efficiency, we group the entities into Range Partitions. For example, we might group them into the following 3 partitions (just as an example) based upon the load on the table:

Range Partition 1

- 12:00:00

- 12:00:01

- 12:00:02

- 12:00:03

Range Partition 2

- 12:00:04

- 12:00:05

- 12:00:06

Range Partition 3

- 12:00:07

We do not by default create a Range Partition for every partition key value, because if we did, we would not get the efficiencies of range partition key queries. If you wanted to query for rows in the time range 12:00:01 to 12:00:03, that would be efficient in the above example since we have grouped those entities into a single range partition. With range partitions, the writes for 12:00:08, then 12:00:09, all go to the same partition, which is the last partition in the table (Range Partition 3 in the example). This is what we call the “Append Only” pattern on a table. If the keys were inserted in descending order, it would be a “Prepend Only” pattern and all requests would fall in the first partition range.

The “Append Only” (or “Prepend only”) pattern is to use a lexically increasing (or decreasing) key for successive accesses. Data is stored sorted by PartitionKey (and RowKey for tables). When the entities inserted have their partition key increasing (decreasing) alphanumerically, the request will always be served by the last (or first) range partition for that table, which will be hosted by a single server. Since the keys are increasing (or decreasing), it is always the tail end (or beginning) of this partition range that gets all the requests and hence the write throughput achieved will be limited by that of a single server and load balancing will not help increase the throughput. Bottom line, it is important to know that “Append Only” (or “Prepend Only”) pattern will have limited write throughput of a single partition, in comparison to what can be achieved by writing across the whole key range.
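A small simulation makes the effect visible. It is a toy model under stated assumptions: the range boundaries are the example ones from this section, `range_partition` is a hypothetical stand-in for how the service maps a key to a range partition, and the bucket prefix reuses the bucketing idea from the logging example with a deliberately simple checksum in place of a real hash.

```python
import bisect
from collections import Counter


def range_partition(key, start_keys):
    """Index of the range partition serving key.

    start_keys holds the first key of every range partition after the first.
    """
    return bisect.bisect_right(start_keys, key)


def timestamps(first_second, count):
    return ["12:00:%02d" % s for s in range(first_second, first_second + count)]


def bucketed_key(timestamp, bucket_count=4):
    """Prefix the key with a bucket chosen by a toy checksum."""
    bucket = sum(ord(c) for c in timestamp) % bucket_count
    return "B%d;%s" % (bucket, timestamp)


new_writes = timestamps(8, 10)  # 12:00:08 .. 12:00:17

# Increasing keys: every write goes to the last range partition.
plain = Counter(range_partition(k, ["12:00:04", "12:00:07"]) for k in new_writes)

# Bucket-prefixed keys, assuming one range partition per bucket.
spread = Counter(range_partition(bucketed_key(k), ["B1", "B2", "B3"])
                 for k in new_writes)

print(plain)   # all ten writes hit range partition index 2
print(spread)  # writes are divided among several range partitions
```

The trade-off is on the read side: with a bucket prefix, a time range query has to be issued once per bucket and the results merged by the application.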

Selecting Partition Key for Efficient Queries

Windows Azure Tables provides one clustered index, and results are always sorted in ascending order of PartitionKey, RowKey. The absence of secondary indexes and custom sorting means that selecting PartitionKey values that provide efficient queries requires forethought about the queries your application needs. In the “Queries” section we discussed the efficiency of various query types, the most efficient being a point (single entity) query. We recommend that high-frequency, latency-critical queries use a point query (i.e. PartitionKey and RowKey lookup) or a PartitionKey filter on a small range of entities. Using the PartitionKey and RowKey in the query filter limits the query execution to a single entity or a subset of contiguous entities (depending upon the condition used), thereby improving query performance. The following are some rough guidelines and suggestions for how to choose a PartitionKey for your table for efficient querying:

- First determine the important properties for your table. These are the properties frequently used as query filters.

- Pick the potential keys from these important properties.

- It is important to identify the dominant query for your application workload. From your dominant query, pick the properties that are used in the query filters.

- This is your initial set of key properties.

- Order the key properties by order of importance in your query.

- Do the key properties uniquely identify the entity? If not, include a unique identifier in the set of keys.

- If you have only 1 key property, use it as the PartitionKey.

- If you have only 2 key properties, use the first as the PartitionKey, and the second as the RowKey.

- If you have more than 2 key properties, you can try to concatenate them into two groups: the first concatenated group is the PartitionKey, and the second one is the RowKey. With this approach, your application would need to understand that the PartitionKey, for example, contains two keys separated by a “-”. The key that is always known for frequently executed, latency-sensitive queries can be chosen as the prefix so that efficient prefix range queries can be executed on PartitionKey and/or RowKey.
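The last three guidelines amount to splitting an ordered list of key properties into two concatenated groups. The helper below is a minimal sketch of that step: `build_keys`, its parameters, and the `;` delimiter are assumptions for illustration, and returning an empty RowKey for a single key property is a placeholder choice, not something this post prescribes.

```python
def build_keys(key_values, partition_parts=1, delimiter=";"):
    """Split ordered key properties into (PartitionKey, RowKey).

    key_values must already be ordered by importance in the dominant query.
    The first partition_parts values form the PartitionKey, the rest the RowKey.
    """
    if not key_values:
        raise ValueError("at least one key property is required")
    values = [str(v) for v in key_values]
    for value in values:
        if delimiter in value:
            raise ValueError("key values must not contain the delimiter")
    partition_key = delimiter.join(values[:partition_parts])
    row_key = delimiter.join(values[partition_parts:])
    return partition_key, row_key


print(build_keys(["Brady", "Sports", "RandyToVikings"]))
# ('Brady', 'Sports;RandyToVikings')
```

Rejecting values that contain the delimiter keeps prefix range queries on the concatenated keys unambiguous.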

In some cases, you may have more than one dominant query which may have different set of properties that need to be queried over. For example: In a micro blogging site, the requirements for dominant queries can be that for a given author:

- Retrieve blogs authored by a user by date: RowKey is <Date Published>;<Blog Title>

- Retrieve blogs authored by a user by category: RowKey is <Category>;<Blog Title>

In this case, the PartitionKey is Author, but there are two options for RowKey. Using Entity Group Transaction, an application can easily maintain its own secondary index. There are two options here for storing data:

Option 1

Application can store the blog twice in a single transaction in the same partition – one entity with RowKey <Date Published>;<Blog Title> and second entity with RowKey <Category>;<Blog Title>.

Example:

| PartitionKey | RowKey | Date Published | Category | Text |
| --- | --- | --- | --- | --- |
| Brady | 20100920;RandyToVikings | 2010/09/20 | Sports | Randy has left the building… |
| Brady | Sports;RandyToVikings | 2010/09/20 | Sports | Randy has left the building… |

Table 4 Entities stored twice to provide fast lookup by category and date

This allows a fast and easy way to query by date range or category. For example, a query for all “Sports” blogs by Brady is easy. For this, we use the prefix search pattern we described in Queries section.

var blogs = from blog in context.CreateQuery<Blogs>("Blogs")

where blog.PartitionKey == "Brady" && blog.RowKey.CompareTo("Sports;") >= 0

&& blog.RowKey.CompareTo("Sports<") < 0

select blog;

The key is to end the upper bound with the character that sorts immediately after the delimiter (delimiter + 1). In the above example, ‘;’ was the separator, so we used ‘<’, the character that follows ‘;’, in the last predicate.

Now to get all Brady’s blogs in December, we can again do an efficient search:

var blogs = from blog in context.CreateQuery<Blogs>("Blogs")

where blog.PartitionKey == "Brady" && blog.RowKey.CompareTo("20101201;") >= 0

&& blog.RowKey.CompareTo("20101231<") < 0

select blog;

Option 2

If storing entities multiple times is not feasible because of the size of each entity, then an application can insert an additional index row that provides the required lookup to retrieve the primary row that contains all the data. We can benefit from the fact that a table is schema-less by not storing all the properties in the index row.

| PartitionKey | RowKey | Date Published | Category | Text |
| --- | --- | --- | --- | --- |
| Brady | 20100920;RandyToVikings | 2010/09/20 | Sports | Randy has left the building… |
| Brady | Sports;RandyToVikings | 2010/09/20 | | |

Table 5 Entity and Index Stored in Same table to provide fast lookup by category and date

For the above example, let us assume that the entity with RowKey <Date Published>;<Blog Title> is the primary row that contains all data. We then store a second entity with RowKey <Category>;<Blog Title> and Date Published as its only non-key property (second row in Table 5). The purpose of the second entity is to provide a lookup for the “Date Published”. When a query by category is executed, it can first retrieve the “Date Published” from the index row and then retrieve the entire entity containing the blog. Both queries are lookups and are a good alternative to scanning large partitions. This effectively stores and uses a secondary index in the same partition for this table, and the index can be easily maintained by using Entity Group Transactions.
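The two-step lookup of Option 2 can be sketched with a dictionary standing in for the table. Everything here is illustrative: the `table` dictionary keyed by (PartitionKey, RowKey), the property names, and the `blogs_in_category` helper are hypothetical, and the loop over all rows stands in for the prefix range query on RowKey shown earlier.

```python
# In-memory stand-in for Table 5: (PartitionKey, RowKey) -> properties.
table = {
    ("Brady", "20100920;RandyToVikings"): {
        "DatePublished": "2010/09/20",
        "Category": "Sports",
        "Text": "Randy has left the building…",
    },
    # Index row: carries only the property needed to find the primary row.
    ("Brady", "Sports;RandyToVikings"): {"DatePublished": "2010/09/20"},
}


def blogs_in_category(table, author, category):
    """Step 1: read index rows by category prefix. Step 2: fetch primary rows."""
    prefix = category + ";"
    results = []
    for (partition_key, row_key), index_row in sorted(table.items()):
        if partition_key == author and row_key.startswith(prefix):
            title = row_key[len(prefix):]
            date_key = index_row["DatePublished"].replace("/", "")
            # Point lookup of the primary row by its full key.
            results.append(table[(partition_key, date_key + ";" + title)])
    return results


print(blogs_in_category(table, "Brady", "Sports"))
```

Since the index row and the primary row share a PartitionKey, both can be written or deleted together in one Entity Group Transaction, which keeps the index consistent with the data.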

Performance Case Study Methodology

We are now going to examine the performance of Windows Azure Tables. Before we do that, we will briefly describe the methodology used to perform the experiments.

The following provides a description of the framework. A Silverlight application running as a Web role is used to submit parameters for a test run. The test parameters are stored as a row in a table. A worker role with multiple instances continuously monitors this table, and when it sees a test submitted, it executes the test run. Depending on the test run parameters and the number of VMs and threads to use, the appropriate number of worker roles queue up N threads that then issue requests against Windows Azure Table, Blob, or Queue, depending on what is being tested. We ran the tests against small (called small in the graphs) and extra-large VMs (called XL in the graphs) in production.

A few notes on our application used here:

- GUID was used for PartitionKey. For table tests that span across partitions, the partition key is a random GUID.

- Each thread is provided a PartitionKey to work with. The row key used is JobID_VMID_WorkerId_Index.

- JobId is an ID that uniquely identifies the test.

- VMID is the id for the VM working

- WorkerID is the id that each thread is assigned (1 through N where N is the maximum threads assigned per VM for the test)

- Index is the unique id for each entity. Each thread maintains a counter which is incremented after every insert

- Table entity has one non key property called “Payload” of string type.

- All entities are of 1024 bytes and the payload was randomly generated

- We use synchronous Table APIs.

- We do not retry a request in these tests.

- Each thread performs a series of synchronous requests, as soon as one request finishes another is sent out, except for requests that result in an exception being thrown.

- In a typical application it is recommended to utilize an exponential backoff strategy in order to respond to the throughput of the service. However in the test below we wanted to concentrate on available throughput of the service for stressing the system, as such when the test encounters an exception it will only wait for 3 seconds before submitting the next request.

- We use a new DataServiceContext for every logical operation. WCF Data Services recommends use of a new context for each logical operation since it is not thread safe and also reduces the overhead of tracking vast number of entities that a client may want to store. A logical operation may include a query for the entity followed by update/delete operation for the queried entity.

- For Queries, we perform single entity (i.e. equality filter on PartitionKey and RowKey) GETs to compare throughputs. Each thread works on an assigned GUID as the partition key and retrieves each entity sequentially since the RowKey suffix is essentially a counter.

- For multi-partition tests, each worker thread both reads and writes to its own partition, using a randomly generated GUID as the partition key. This results in a well distributed load across partitions, thus allowing our system to load balance. For single partition tests, all worker threads in all VMs use the same randomly generated GUID as the partition key.

- We ran all tests in live production environment over a period of several days. As such there is some degree of variability in the data collected that reflects the behavior of the production environment; however we believe this provides an accurate picture of what to expect when designing solutions targeting Azure Storage (Blobs/ Tables / Queues).

- We have followed the best practices posted here except for “To improve performance of ADO.NET Data Service deserialization” since this issue has been resolved in recent releases of WCF Data Services and in .NET 4.0.

- We use the following setting for ServicePointManager:

- ServicePointManager.Expect100Continue = false;

- ServicePointManager.UseNagleAlgorithm = false;

- ServicePointManager.DefaultConnectionLimit = 100;

- We turn off expect 100 so that PUT and POST requests do not send the expect header and wait for 100-continue from the server before sending the payload body.

- We turn off Nagling; this post covers in depth how Nagling impacts small packets.

- We set the default concurrent connection limit to 100.

- We monitor and track throttling errors in all tests. We observed throttling in results for only Figures 8, 9 and 15, which stressed the load on a single partition over the scalability targets.

- Our results were collected after our system reached a steady state. This warm up allowed our service to load balance enough times to meet its throughput needs, which is the same as would occur for a continuously running live hosted service. When you perform your own scalability tests, you should warm up your service to its steady state and run with that for a while. Then run your scalability tests using those storage accounts. We have seen some studies compare results when running from a cold start for just a few minutes, and these did not see as much throughput as we show here.
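The notes above mention that a typical application should use an exponential backoff strategy rather than the fixed 3 second wait used in these tests. A minimal sketch of that strategy follows; `ServerBusyError`, `call_with_backoff`, and the delay values are hypothetical choices for illustration and are not taken from any storage client library.

```python
import random
import time


class ServerBusyError(Exception):
    """Hypothetical stand-in for a throttling or timeout error."""


def call_with_backoff(operation, max_attempts=5, base_delay=0.5,
                      max_delay=30.0, sleep=time.sleep):
    """Run operation, doubling the wait after each throttled attempt."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except ServerBusyError:
            if attempt == max_attempts - 1:
                raise  # give up after the last attempt
            delay = min(max_delay, base_delay * (2 ** attempt))
            # Random jitter keeps many clients from retrying in lockstep.
            sleep(delay * random.uniform(0.5, 1.0))
```

A caller would wrap each storage request, for example `call_with_backoff(lambda: insert_entity(entity))`, where `insert_entity` is whatever function issues the request in your application.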

Performance Results and Tips

Selecting the Appropriate Request Size

A common question we get asked is how can we upload 1+ million entities into Windows Azure Tables as fast as possible from a worker role? The answer is Entity Group Transaction (aka batch). The follow up question asked is how many entities should we batch together in a single request? To gain some insight into these questions we will examine the performance differences for various sized batch requests and also compare with single entity requests.

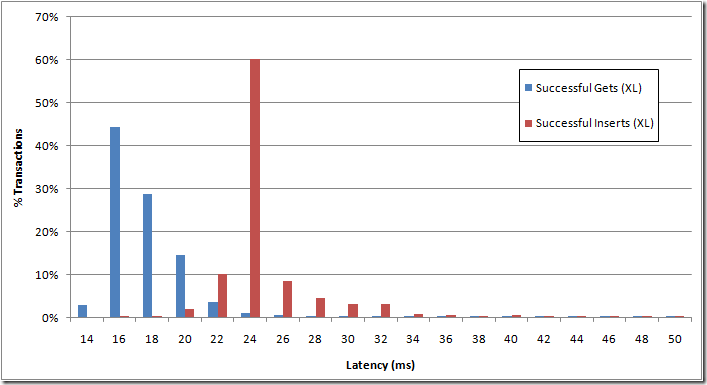

Let us start by comparing the latencies for single entity inserts against batch inserts, using a single extra-large VM as the client. Figure 3 shows the latency observed for single entity gets and inserts. The y-axis shows the number of transactions during the run, and the x-axis shows the latency in milliseconds. The results show that the majority of gets complete in under 40 milliseconds and the majority of inserts complete in under 50 milliseconds.

Figure 3 Single entity latencies for inserts and gets

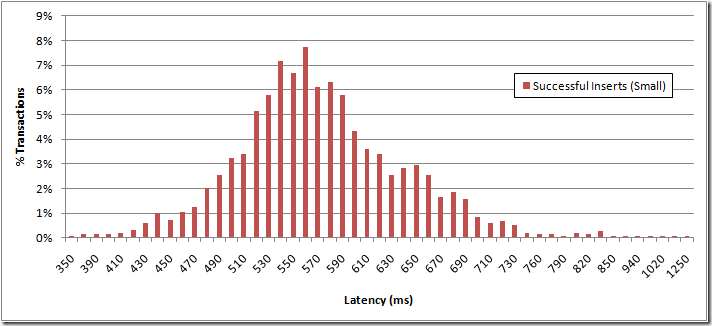

Figure 4 shows the latency for batch of 100 entities executed serially on a single thread on a small VM. The x-axis shows latency in milliseconds and y-axis shows the number of transactions during the run. We see that the majority of batch inserts complete within 1.2 seconds. For the results, we insert 100 entities into a table and all entities belong to the same partition. When comparing Figure 3 and Figure 4 the results show an expected result, which is that batching updates together reduces the latency to process a set of operations versus sending them to the server serially one at a time.

Figure 4 Insert Latency distribution for Batch of 100 Entities

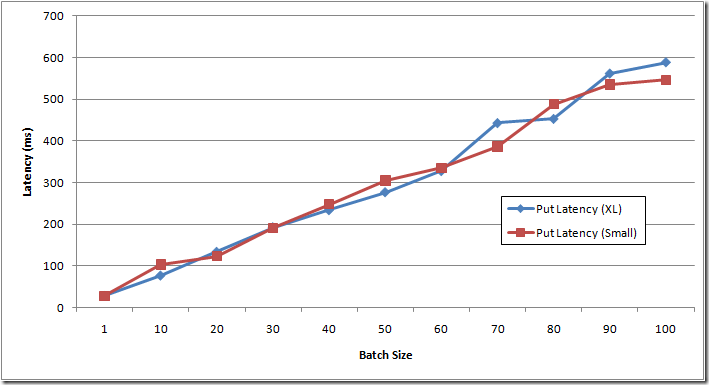

Since batching helps for bulk inserts, let us look at what the right size for batch request may be. Figure 5 shows the average latency for batch requests of various sizes when executed serially using a single thread on small and extra-large VM. The x-axis shows the various batch sizes used and y-axis shows the latency for the requests in milliseconds.

Figure 5 Compare latencies of requests with various sized batch requests to a single partition

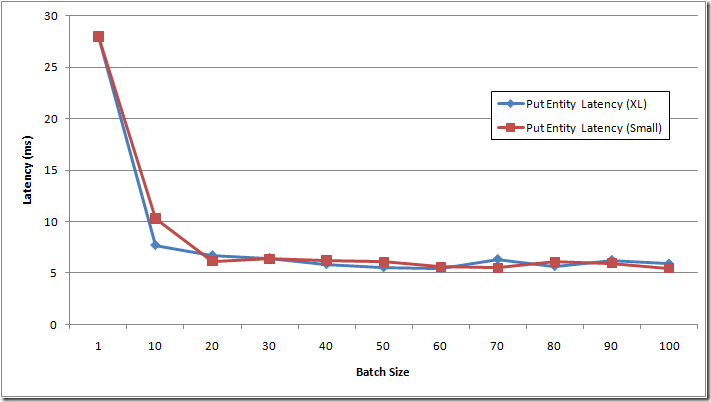

Figure 6 shows the average latency it takes for a single entity when part of a batch request. The x-axis shows the various batch sizes used and y-axis shows the latency for each entity (and not the entire batch request) in milliseconds. This data is derived from Figure 5 by dividing the batch transaction latency by number of entities in the batch transactions.

If an application is sensitive to latency, issuing multiple concurrent batch requests containing 20 inserts each will be better than issuing a single request containing 40+ entities, because the per entity latency savings peak at a batch size of about 20. However, an application may want to batch more entities together to reduce transaction costs: a batch of N entities counts as one transaction, so larger batches reduce the number of transactions against your storage account by a factor of N. For example, a batch size of 50 is twice as expensive as a batch size of 100 for the same number of aggregate entities, since it results in twice the number of transactions. Overall, consider throughput, latency and costs when evaluating the batch size to use for your scenario.

Figure 6 Compare per entity latencies of requests for various sized batch requests to a single partition
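The cost and latency trade-off described above comes down to two small calculations. The sketch below uses hypothetical helper names and the one million entity upload from the opening question of this section; it assumes every batch can be filled with entities from the same partition.

```python
def transactions_needed(total_entities, batch_size):
    """Number of batch requests needed to insert total_entities."""
    if not 1 <= batch_size <= 100:
        raise ValueError("a batch holds between 1 and 100 entities")
    return (total_entities + batch_size - 1) // batch_size  # ceiling division


def per_entity_latency_ms(batch_latency_ms, batch_size):
    """How Figure 6 is derived from Figure 5: batch latency / entities."""
    return batch_latency_ms / batch_size


print(transactions_needed(1_000_000, 50))   # 20000
print(transactions_needed(1_000_000, 100))  # 10000
```

Halving the batch size doubles the transaction count, which is the factor of two in cost mentioned above.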

In Figure 7, we compare the impact of batching on multiple partitions. The test compares different batch sizes on extra-large VM with small VM using 30 threads. The results show a similar trend as seen in the prior results; even with multiple partitions and multiple threads, the performance benefit of batching tends to peak out at around a batch size of 20 for our experimental workload.

The results also show a performance difference between using an extra-large VM and a small VM. A single extra-large VM provides a little more than 1.5 times the throughput of a small VM. The extra-large VM achieves higher throughput because it has more resources (memory, cores and network bandwidth) than a small VM to handle the 30 threads. In addition, because the small VM has a single core, .NET 3.5 uses workstation GC, which suspends all threads during collection, and the lower available memory on small VMs can trigger GC more frequently than on extra-large VMs. Even so, there are cost differences between the small and extra-large VMs, and it depends upon your application’s workload whether you will get a lower cost per transaction using several small VMs or fewer extra-large VMs.

Figure 7 Compare the performance of different sized batch requests to multiple partitions using 30 threads on 1 VM

Scalability of the table

The storage system monitors the usage patterns of the partitions. When it recognizes that there is a lot of traffic to some partitions, the system responds by automatically spreading them out across many storage servers. This results in lower latencies and higher overall throughput, as the traffic load is now distributed across many servers. However, a partition (i.e. all entities with the same partition key) will be served by a single server to provide transaction ordering and strong consistency for the operations to that partition. It is important to note that the amount of data stored within a partition is not limited by the storage capacity of one storage node (we will have more information on this in a future post that provides an overview of our storage architecture).

Since entities with the same PartitionKey are stored together and served by a single server, it allows efficient querying within a partition. Your application can also benefit from efficient caching and other performance optimizations that are provided by data locality within a partition. There is a tradeoff here between trying to benefit from entity locality, where you get efficient queries over entities in the same partition, and the scalability of your table, where the more partitions your table has the easier it is for Windows Azure Table to spread the load out over many servers.

Important: This prior posting gave the scalability targets of Windows Azure Tables. There we say that we are targeting a storage account to perform up to 5,000 operations per second (where an operation here is a single entity), and a single partition to perform up to 500 entities per second. The results we show in this section are higher than both of these. Our service allows a storage account and partition to go above these targets, based upon the excess load that the storage service can handle. But it is important to design your system within these targets, because if the storage service is under heavy load it will start to throttle the storage account and the partition for load in excess of these targets. Also, these targets are not exact, in that they depend upon the exact mix and size of operations for your service. Therefore it is important to perform stress tests to see where the limit of a single partition is and whether your expected access patterns allow you to reach the expected targets.

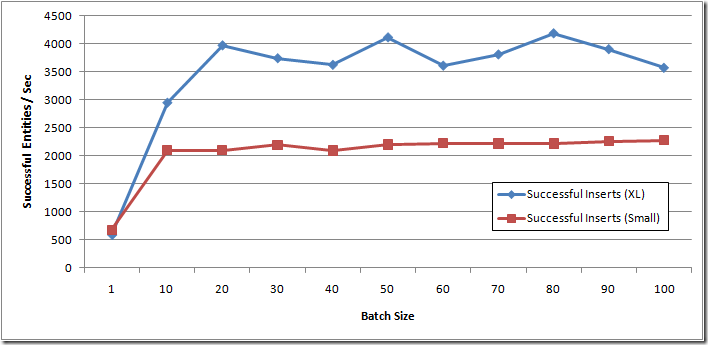

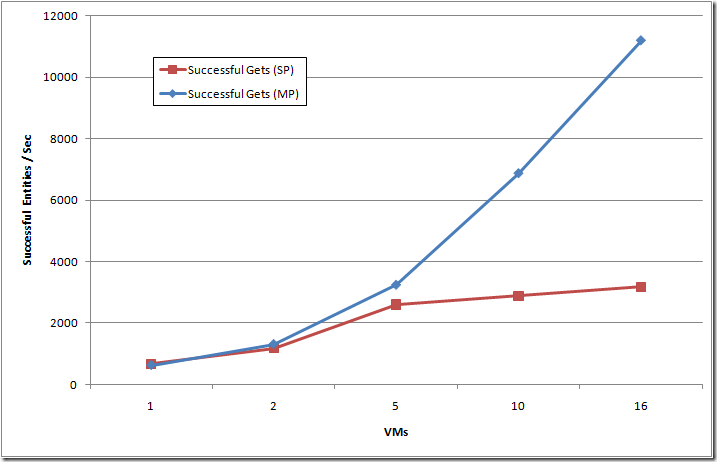

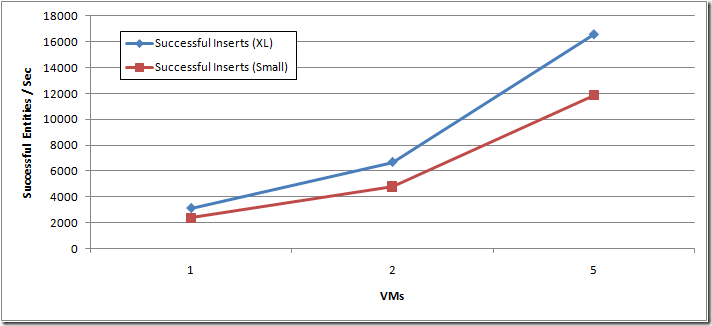

To understand the impact of the number of partitions on scalability, we compared the throughput of inserting single entities using 15 threads per extra-large VM into a single partition and into multiple partitions. The results for Inserts and Gets are shown in Figure 8 and Figure 9 respectively. The x-axis shows the number of extra-large VMs used to generate the traffic and the y-axis shows the entities inserted/retrieved per second. Figure 8 shows single entity inserts; we will look at the throughput using batch requests later in the blog post.

Figure 8 shows that a single partition in a table served around 1000 entities per second at 5 VMs, with 0.0004% of the requests throttled (no throttling occurred at 2 VMs doing 600 entities per second). Then as we increased the load on the single partition, we saw around 0.03% of the requests throttled at 10 VMs, and 0.2% of the requests throttled at 16 VMs, all for the single partition results. These results show the importance of understanding the scalability targets of a single partition, and staying within them. For the multiple partition results, in contrast, the throughput continued to scale up as we added VMs and increased the load, since the application distributed the requests across many partitions and the Table service was then able to distribute the traffic across many storage nodes to meet the additional traffic demands.

Figure 8 Compare single entity insert throughput between single and multi-partition (Using 15 threads per extra-large VM)

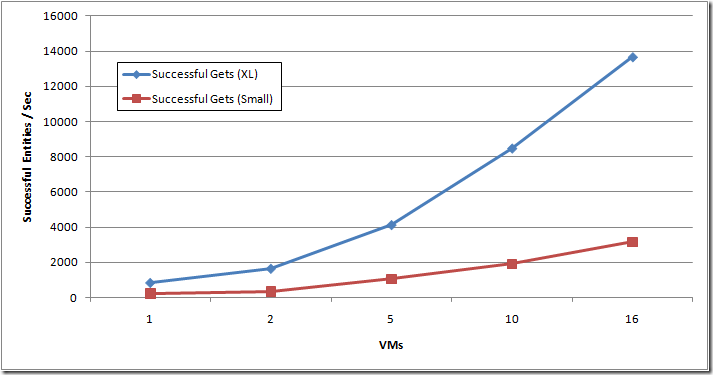

Figure 9 compares the throughput we get for single entity GETs for single and multiple partitions. The results show that distributing the requests over multiple partitions provides higher throughput than a single partition for the same reasons as described for Figure 8. Comparing Figure 8 with Figure 9, we see as expected that GETs provide higher throughput than Inserts since writes are more expensive than reads.

Figure 9 Compare single entity GET throughput between single partition and multi-partition. 15 threads per extra-large VM

Now that we have established the throughput limits of a single partition and the importance of distributing the load across multiple partitions with single entity operations, we will examine the benefits of using batch operations.

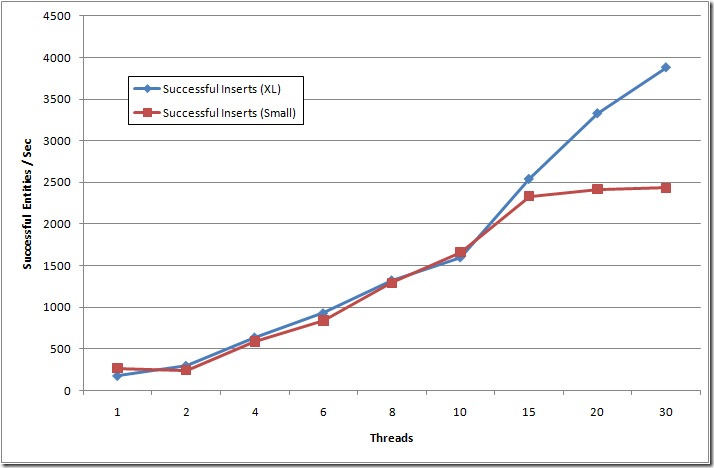

Let us see the impact that concurrency (i.e. for our scenario the number of threads) has over throughput. We compare throughput by varying the number of threads issuing batch inserts of 100 entities with each of the batch requests targeting different partitions. We issue all requests from a single VM. The x-axis in Figure 10 shows the number of threads that concurrently issue the batch insert requests and y-axis shows the throughput in terms of entities/second. As we see in Figure 10, the throughput improves as we increase the number of concurrent batch insert requests from a single VM with batch requests targeting multiple partitions.

Figure 10 shows that over a certain threshold of concurrency (after 15 threads), the improvement flattens out and increasing concurrency does not help much for a single VM. This is not due to a limit on the server end. Rather, it illustrates the point at which the available processing resources of the VM become saturated (in this case a single core VM), whereas the throughput continues to go up with the increase in threads for the XL VM size.

Figure 10 Compare impact of threads issuing batch inserts (100 entities) targeting multiple partitions on a single VM
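The shape of this experiment can be sketched as a small harness. The following Python sketch is not the code used for these measurements: `fake_batch_insert` is a hypothetical stand-in that only sleeps, and a real test would replace it with an actual batch insert against the Table service. It shows how throughput in entities/second can be measured while varying the number of threads, with each batch targeting a different partition.

```python
import time
from concurrent.futures import ThreadPoolExecutor

BATCH_SIZE = 100  # entities per batch request, as in the experiment above


def fake_batch_insert(partition_key: str) -> int:
    """Hypothetical stand-in for one batch insert; returns entities written."""
    time.sleep(0.01)  # simulated request latency (an assumption)
    return BATCH_SIZE


def measure_throughput(insert_fn, threads: int, batches_per_thread: int):
    """Issue batches from `threads` workers, one partition key per batch.
    Returns (entities inserted, entities per second)."""
    total_batches = threads * batches_per_thread
    keys = [f"partition-{i}" for i in range(total_batches)]
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=threads) as pool:
        inserted = sum(pool.map(insert_fn, keys))
    elapsed = time.perf_counter() - start
    return inserted, inserted / elapsed


if __name__ == "__main__":
    for n in (1, 5, 15):
        count, rate = measure_throughput(fake_batch_insert, n, 5)
        print(f"{n} threads: {count} entities, {rate:.0f} entities/second")
```

With a real client, the point where the measured rate stops improving as threads are added indicates where the client VM, rather than the service, has become the limit.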

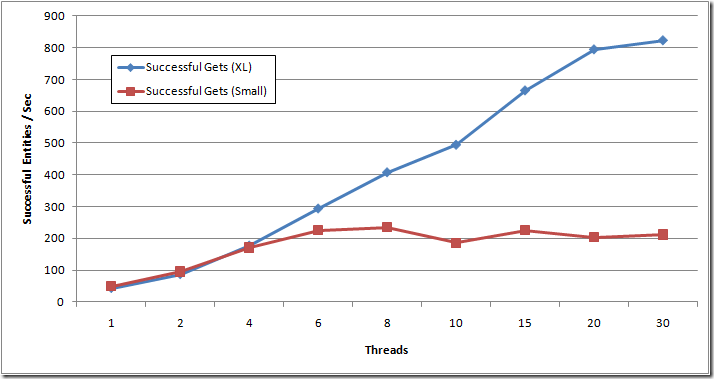

Figure 11 performs the same experiment as in Figure 10, but compares the impact of threads on GET throughput on multiple partitions from a single VM. Here we see a similar leveling off of throughput, but at a much lower number of threads (6), for the same reasons.

Figure 11 Compare impact of threads on single entity GETs targeting multiple partitions on a single VM

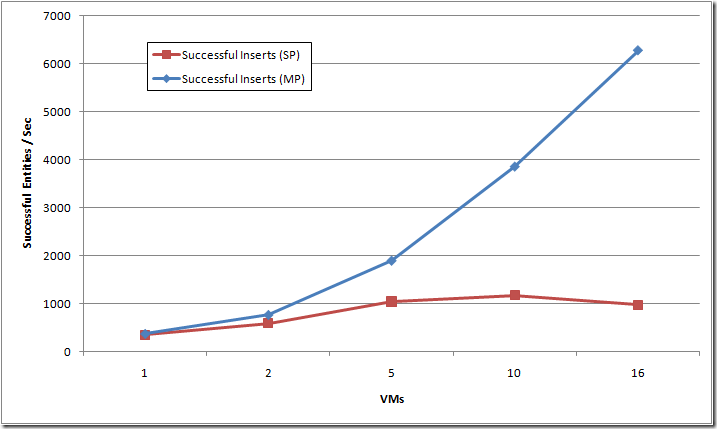

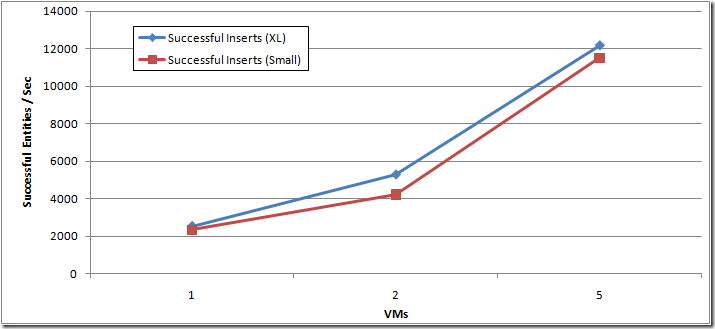

In Figure 12 and Figure 13 we want to see the impact of increasing the number of VMs on batch inserts. We execute batch requests targeting multiple partitions and these batch requests are issued concurrently from 15 threads in Figure 12 and 30 threads in Figure 13. The x-axis shows the number of VMs from which requests were issued concurrently and y-axis shows the throughput in terms of entities/second.

Figure 12 Compare 100 batch upload throughput with varying VMs on multiple partitions, 15 threads per VM

Figure 12 and Figure 13 show that using batch requests and increasing the number of VMs continues to give higher throughput. This shows that the lower throughput seen in Figure 10 is due to a client side bottleneck. Please note that the achieved throughput of 17,000 entities per second is a lot higher than the scalability targets we state for an account. As described earlier, the intention here is to show that the system can scale to higher throughputs if the excess throughput is available to be used in the system, but you should design your application within the scalability targets, since that is what is enforced for throttling when the system is under heavy load.

Figure 13 Compare 100 batch upload throughput with varying VMs on multiple partitions, 30 threads per VM

As shown in prior results, we observed high CPU usage on small VMs that limited their throughput as we increased the number of threads. In Figure 14 we compare the throughput for GETs on small and extra-large VMs with 30 threads per VM. The results show the higher throughputs achieved by using more VMs or having more processing power per VM (when using extra-large vs. small VM).

Figure 14 Single entity get throughput over multiple partitions, 30 threads per VM

A few questions these results may prompt:

- What size of VM should my application use to get the best cost per transaction?

- Is it better, in terms of cost, to use many smaller sized VMs or fewer larger VMs to achieve the same throughput?

The answer to these questions is very application dependent, since some applications may optimize their costs by using many smaller VMs, whereas others may do so by using fewer larger VMs, depending upon the amount of VM cycles needed for processing each application-level transaction, or other application factors. The only way to tell is to stress test your application while varying the type of VM, the amount of concurrency in each VM, and other factors that can influence your design (e.g., how much batching to perform). Because VMs are available in various sizes, it is possible to create a custom solution that best meets the demands of your scenario, and to do so in an economical way.

Scalability Summary

To quickly summarize, for scalability:

- Collocate entities that need to be accessed together by assigning them the same PartitionKey value

- Understand the scalability of a single partition for your application’s traffic

- Distribute entities that have high traffic demands across multiple partitions – our system will monitor and automatically load balance to meet traffic needs

- Watch for client side bottlenecks. When looking at the scalability of the application, it is important to understand whether the application is experiencing a server side bottleneck or a client side bottleneck. If the client side is the bottleneck, then increasing the number of concurrent requests, the number of VMs used or the size of the VM can increase overall throughput.

- It is important to note that in our case study we used blocking synchronous calls and increased concurrency by increasing the number of threads for simplicity, since our focus was on showing the server side throughput Windows Azure Tables provides. However, for truly scalable applications it is recommended to use asynchronous operations rather than their synchronous counterparts. Asynchronous operations provide significant benefits in terms of scale, CPU utilization, and memory utilization.
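The last point in the summary can be illustrated with a short sketch. The code below uses Python's `asyncio` purely to show the pattern of keeping many requests in flight without dedicating a thread to each one. It is not the StorageClient API, and `fake_insert` is a hypothetical placeholder that only simulates request latency.

```python
import asyncio


async def fake_insert(entity_id: int) -> int:
    """Hypothetical stand-in for an asynchronous single entity insert."""
    await asyncio.sleep(0.001)  # simulated network latency (an assumption)
    return entity_id


async def insert_all(entity_ids, max_in_flight: int = 30):
    """Insert all entities while capping the number of concurrent requests."""
    limit = asyncio.Semaphore(max_in_flight)

    async def guarded(entity_id: int) -> int:
        async with limit:
            return await fake_insert(entity_id)

    # gather returns results in the same order as the inputs
    return await asyncio.gather(*(guarded(e) for e in entity_ids))


def run(entity_ids, max_in_flight: int = 30):
    """Synchronous entry point for the sketch."""
    return asyncio.run(insert_all(entity_ids, max_in_flight))
```

The cap on in-flight requests plays the role that the thread count played in the experiments above, but idle requests no longer hold a blocked thread.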

Timeouts and ServerBusy – Is it normal?

A single server may serve many partitions. Our system monitors these partitions and when a server is heavily loaded, our system load balances these partitions to meet the traffic needs of clients. The server may take 5-10 minutes to decide to load balance in order to not overreact to very small spikes in traffic. Therefore it is possible for clients to experience “Server Busy” or “Timeout” errors when a limit is hit, and clients are expected to back off on such errors to allow the load on the server to be reduced.

Once a server recognizes that a limit is being hit, load balancing kicks in, which involves reassignment of partition(s) to other servers. It may take 10-20 seconds for a reassigned partition to become available for serving requests, during which time a client may experience “Server Busy” or “Timeout” errors. The retries and exponential back-off strategy provided by the StorageClient (for SaveChangesWithRetries and CloudTableQuery) will help in handling such errors. Once the partitions are load balanced, the system provides higher throughput, since the partitions are served by different servers and can therefore process more requests across these partitions.

However, since a single partition is always served by a single server, its limit is critical to understand for an application’s scale, as described earlier. A client that requires high throughput should design its application so that it uses multiple partitions to meet its scale needs. It is worthwhile to reiterate that “Server Busy” and “Timeout” errors can be experienced in the following cases:

- When a server reaches a limit on requests it can process. These requests can be to any/all partitions that it has been assigned to serve. Since load balancing does not kick in at the very instant the server sees spikes, a client can experience “Server busy” until the partitions are load balanced.

- During load balancing, which can take 10-20 seconds for a partition to be reassigned and made available again for processing requests

- A single partition is being asked to serve more requests than what a single partition can serve (see the target scalability limits described earlier)

The first two cases should be considered normal, and the system load balances to meet the traffic needs. However, in the third case, there is not much that the Azure Storage system can do, since a partition can be served only by a single server in order to efficiently provide strong consistency for your Windows Azure Table operations.
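For clients that issue requests without the StorageClient retry helpers, the back-off behavior described above can be sketched as follows. This is a minimal Python illustration of exponential back-off in general, not the StorageClient's actual retry policy. `ServerBusyError`, `call_with_backoff` and the delay values are assumptions made for the example.

```python
import random
import time


class ServerBusyError(Exception):
    """Hypothetical error standing in for "Server Busy" or "Timeout"."""


def call_with_backoff(operation, max_attempts=5, base_delay=0.5,
                      max_delay=30.0, sleep=time.sleep):
    """Run `operation`, retrying on ServerBusyError with exponentially
    growing, capped delays plus a little random jitter."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except ServerBusyError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            delay = min(max_delay, base_delay * (2 ** attempt))
            sleep(delay + random.uniform(0, delay * 0.1))
```

Doubling the delay on each failure gives the server time to shed load or finish reassigning a partition, and the jitter keeps many clients from retrying at the same instant.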

We recommend that clients inspect their data access patterns and schema to see under what load they experience “Server Busy” and “Timeout” errors, as well as stress test a single partition for the application to see how many entities per second it can process. When scale is a concern, designing the schema such that traffic is distributed across various partitions allows our system to load balance.

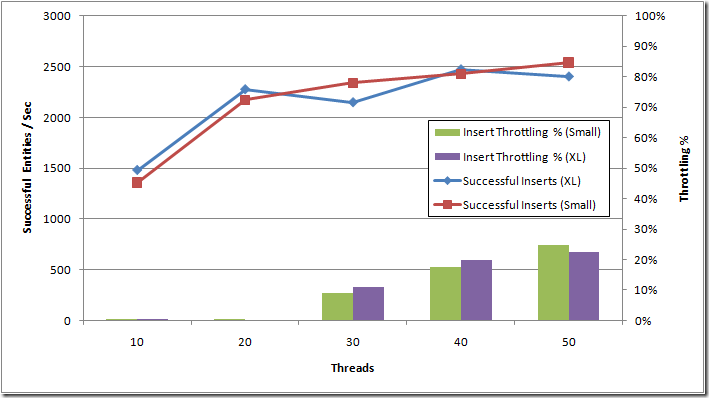

To illustrate this behavior we designed a test to purposely “saturate” a single partition with numerous requests to force the service into the throttling behavior. In the test below we ran each VM with 10 concurrent worker threads, adding additional VMs to increase the overall workload on the partition. The x-axis in Figure 15 indicates the total number of threads issuing requests across all VMs. The left y-axis is the throughput in terms of successful entities/second, and the right y-axis is the percent of the overall requests that were throttled.

Figure 15 Saturation of a single partition with 100 batch requests (10 threads per VM and using 1, 2, 3, 4 and 5 VMs)

Figure 15 shows that between 20 and 30 threads the backend begins to throttle ~11% of the incoming requests. This is after it has reached 2,300 entities per second for 20 threads with almost no throttling. The results also show that there is little performance gained by overloading a single partition, as the service responds to increased load by throttling a higher percentage of incoming requests: the percent of throttling continues to go up, whereas the successful request rate for the partition holds steady at above 2,300 entities per second. At 50 concurrent requests, we see approximately 2,500 successful entities per second, but also around 700 expected throttling failures per second (22% of the total 3,200 requests/second being sent by the client). As such, it is best to observe best practices and use an exponential backoff strategy as well as multiple partitions (if applicable) to minimize throttled requests.

Note: Another important point regarding this result is that for billing purposes the storage account includes transactions that result in expected throttling in addition to successful ones. All of these throttled requests are classified as expected and will be billed, since they are going over the scalability targets for a single partition and the client is not backing off. This provides an additional economic incentive to design an application’s tables in a scalable way, as well as to observe an exponential backoff strategy in order to achieve the most economical throughput (see “Understanding Windows Azure Storage Billing” for more on billing).
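The arithmetic behind the 50-thread data point can be worked through in a few lines. The Python sketch below uses only the figures quoted above (roughly 2,500 successful and 700 throttled per second), taken as given. The function name is hypothetical, and the billed figure simply reflects the statement that expected throttled requests are billed along with successful ones.

```python
def throttle_stats(successful_per_sec: float, throttled_per_sec: float) -> dict:
    """Summarize a saturated partition using the rates quoted in the text."""
    total = successful_per_sec + throttled_per_sec
    return {
        "total_per_sec": total,
        "throttled_pct": 100.0 * throttled_per_sec / total,
        # Throttled requests classified as expected are billed too.
        "billed_per_sec": total,
    }


stats = throttle_stats(2500, 700)
print(stats["total_per_sec"], round(stats["throttled_pct"]))
```

With these inputs the total is 3,200 per second and the throttled share is 700 / 3,200, about 22%, so roughly one in five billed requests does no useful work when the client keeps sending without backing off.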