Pricing scenario using a data pipeline to load 1 TB of Parquet data to a data warehouse

In this scenario, a Copy activity was used in a data pipeline to load 1 TB of Parquet table data stored in Azure Data Lake Storage (ADLS) Gen2 to a data warehouse in Microsoft Fabric.

The prices used in the following example are hypothetical and aren't intended to reflect exact actual pricing. They only demonstrate how you can estimate, plan, and manage cost for Data Factory projects in Microsoft Fabric. Because Fabric capacity prices vary by region, this example uses the pay-as-you-go price for a Fabric capacity in US West 2 (a typical Azure region), at $0.18 per CU per hour. See Microsoft Fabric - Pricing to explore other Fabric capacity pricing options.

Configuration

To accomplish this scenario, you need to create a pipeline with the following configuration:

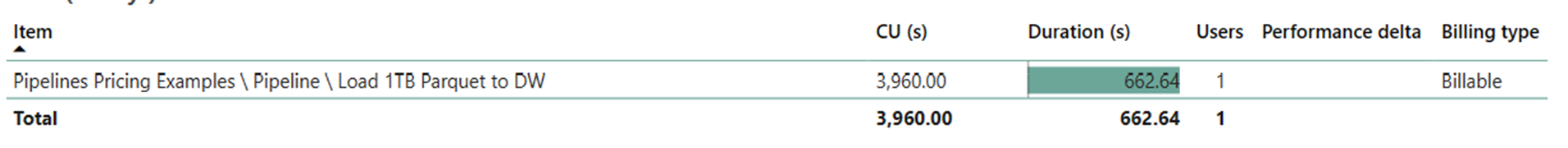

Cost estimation using the Fabric Metrics App

The data movement operation used 3,960 CU seconds over a duration of 662.64 seconds. The activity run operation reported no consumption (null) because the pipeline run contained no non-copy activities.

Note

Although the Fabric Metrics App reports the run duration, you don't need it to calculate the effective CU-hours, because the CU seconds metric that the app also reports already accounts for the duration.

| Metric | Data Movement Operation |
|---|---|
| CU seconds | 3,960 CU seconds |
| Effective CU-hours | 3,960 / (60 × 60) CU-hours = 1.1 CU-hours |

Total run cost at $0.18 per CU-hour = (1.1 CU-hours) × ($0.18 per CU-hour) = $0.198, or about $0.20
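
The same arithmetic can be sketched in a few lines of Python, which may help when you repeat the estimate for other runs. This is only an illustration under the scenario's assumptions: the function names `effective_cu_hours` and `run_cost` are hypothetical helpers, not part of any Fabric API, and the $0.18 rate is the hypothetical price used above.

```python
from decimal import Decimal

SECONDS_PER_HOUR = 60 * 60


def effective_cu_hours(cu_seconds):
    """Convert the CU seconds reported by the Fabric Metrics App to CU-hours."""
    return Decimal(str(cu_seconds)) / SECONDS_PER_HOUR


def run_cost(cu_seconds, price_per_cu_hour):
    """Estimate the cost of a run from its CU seconds and a per-CU-hour price."""
    return effective_cu_hours(cu_seconds) * Decimal(str(price_per_cu_hour))


# Values from this scenario: 3,960 CU seconds at a hypothetical $0.18 per CU-hour.
cu_hours = effective_cu_hours(3960)
cost = run_cost(3960, "0.18")

print(f"Effective CU-hours: {cu_hours}")
print(f"Estimated run cost: ${cost:.2f}")
```

The run duration (662.64 seconds) doesn't appear anywhere in the calculation, because the CU seconds metric already accounts for it. `Decimal` is used here only to avoid floating-point rounding noise in currency values.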