How declarative agents work

Now that you know the basics of a declarative agent, let’s see how it works behind the scenes. This unit covers the pieces of a declarative agent and shows how they fit together to create an agent. This knowledge helps you decide whether a declarative agent works for you.

Custom knowledge

Declarative agents use custom knowledge to provide extra data and context to Microsoft 365 Copilot that is scoped to a specific scenario or task.

Custom knowledge consists of two parts:

- Custom instructions: define how the agent should behave and how it should shape its responses.

- Custom grounding: defines the data sources that the agent can use in its responses.

What are custom instructions?

Instructions are specific directives or guidelines that are passed to the foundation model to shape its responses. These instructions can include:

- Task definitions: outlining what the model should do, such as answering questions, summarizing text, or generating creative content.

- Behavioral guidelines: setting the tone, style, and level of detail for responses to ensure they align with user expectations.

- Content restrictions: specifying what the model should avoid, such as sensitive subjects or copyrighted material.

- Formatting rules: showing how the output should be structured, like using bullet points or specific formatting styles.

For example, in our IT support scenario our agent is given the following instructions:

You are IT support, an intelligent assistant designed to answer common IT support queries from employees at Contoso Electronics and manage support tickets. You can use the Tickets action and documents from the IT Help Desk SharePoint Online site as your sources of information. When you can't find the necessary information, prioritize the documents from the IT Help Desk site over your own training knowledge and ensure that your responses are not specific to Contoso Electronics. Always include a cited source in your answers. Your responses should be concise and suitable for a non-technical audience.
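
To make the four kinds of instruction content concrete, here's a minimal Python sketch that assembles a block of instructions from them. The `InstructionParts` class and `build_instructions` function are hypothetical helpers invented for illustration, not part of any Microsoft 365 Copilot API, and the restriction sentence is an illustrative addition rather than part of the scenario's instructions.

```python
from dataclasses import dataclass, field


@dataclass
class InstructionParts:
    """Hypothetical container for the four kinds of instruction content."""
    task: str
    behavior: list[str] = field(default_factory=list)
    restrictions: list[str] = field(default_factory=list)
    formatting: list[str] = field(default_factory=list)


def build_instructions(parts: InstructionParts) -> str:
    """Join the non-empty parts into one block of plain-text instructions."""
    sentences = [parts.task, *parts.behavior, *parts.restrictions, *parts.formatting]
    return " ".join(s.strip() for s in sentences if s.strip())


parts = InstructionParts(
    task=(
        "You are IT support, an intelligent assistant designed to answer "
        "common IT support queries from employees at Contoso Electronics "
        "and manage support tickets."
    ),
    behavior=["Your responses should be concise and suitable for a non-technical audience."],
    # Illustrative restriction (assumption, not taken from the scenario).
    restrictions=["Do not answer questions that are unrelated to IT support."],
    formatting=["Always include a cited source in your answers."],
)
instructions = build_instructions(parts)
print(instructions)
```

Keeping the parts separate makes it easier to review each kind of guidance on its own before you combine them into the text that the agent receives.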

What is custom grounding?

Grounding is the process of connecting large language models (LLMs) to real-world information, enabling more accurate and relevant responses. Grounding data gives the LLM context and supporting information when it generates a response. It reduces the need for the LLM to rely solely on its training data and improves the quality of the responses.

By default, a declarative agent isn't connected to any data sources. You configure a declarative agent with one or more Microsoft 365 data sources:

- Documents stored in OneDrive

- Documents stored in SharePoint Online

- Content ingested into Microsoft 365 by a Copilot connector

In addition, a declarative agent can be configured to use web search results from Bing.com.

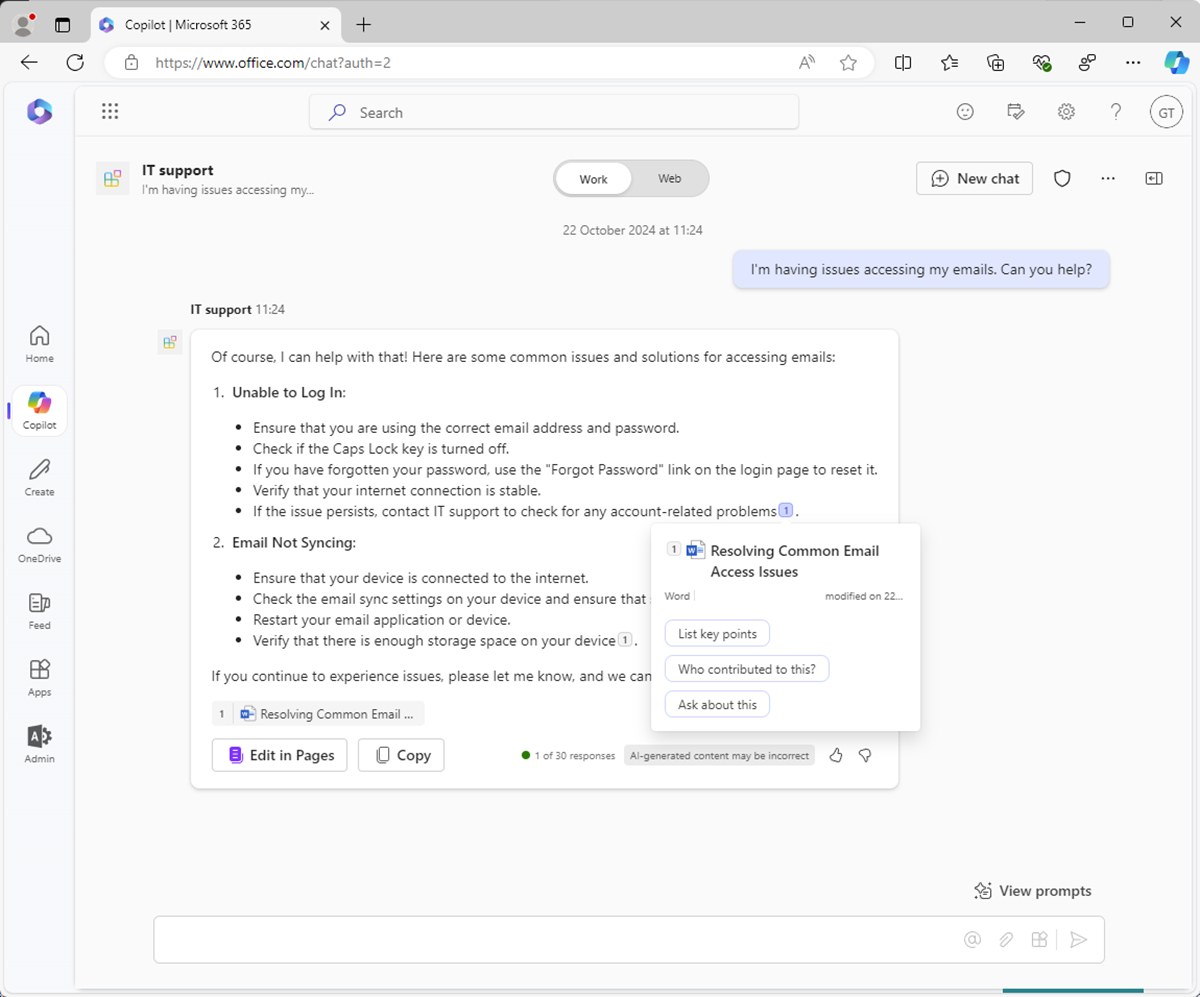

For example, in our IT support scenario, a SharePoint Online document library is used as a source of grounding data.

When Copilot uses grounding data in an answer, the source of the data is referenced and cited in the response.
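
The following Python sketch illustrates the general idea of grounding with citations. It's a toy model, not how Copilot retrieves data: the `Document` class, the word-overlap scoring, and the URLs are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Document:
    title: str
    url: str  # hypothetical location in a SharePoint Online document library
    text: str


def retrieve(query: str, library: list[Document], top_k: int = 1) -> list[Document]:
    """Rank documents by how many query words they contain (toy relevance score)."""
    words = set(query.lower().split())
    scored = [(len(words & set(doc.text.lower().split())), doc) for doc in library]
    matches = [pair for pair in scored if pair[0] > 0]
    ranked = sorted(matches, key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in ranked[:top_k]]


def build_grounded_prompt(query: str, sources: list[Document]) -> str:
    """Place the retrieved text ahead of the question, with a numbered citation per source."""
    context = "\n".join(
        f"[{i}] {doc.text} (source: {doc.url})" for i, doc in enumerate(sources, start=1)
    )
    return f"Context:\n{context}\n\nQuestion: {query}"


library = [
    Document(
        "Reset your password",
        "https://contoso.sharepoint.com/sites/ITHelpDesk/reset-password",
        "To reset your password open the self-service portal and choose reset password",
    ),
    Document(
        "Connect to VPN",
        "https://contoso.sharepoint.com/sites/ITHelpDesk/vpn",
        "Install the vpn client and sign in with your work account",
    ),
]

query = "how do I reset my password"
sources = retrieve(query, library)
print(build_grounded_prompt(query, sources))
```

Because each piece of context carries its source URL, a response that draws on it can cite where the information came from.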

Custom actions

Custom actions enable declarative agents to interact with external systems in real time. You create custom actions and integrate them with the declarative agent to read and update data in external systems by using APIs.

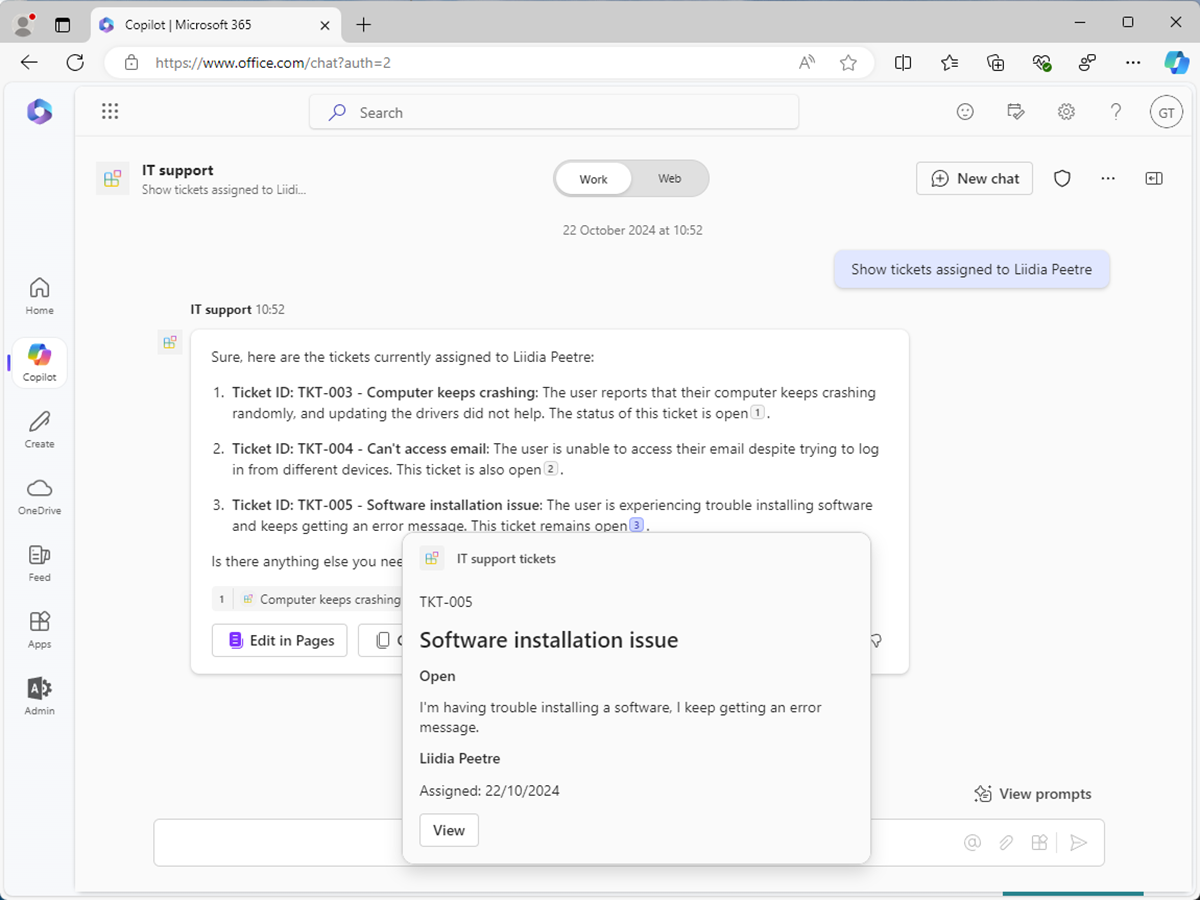

For example, in our IT support scenario, a custom action is used to read and write data in the support ticketing system via an API.
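
As a rough illustration of what reading and writing ticket data involves, here's a Python sketch of an in-memory stand-in for a ticketing API. The `TicketApi` class, its method names, and the `Ticket` fields are hypothetical. A real action would call the ticketing system's actual API over the network.

```python
from dataclasses import dataclass
from itertools import count
from typing import Optional


@dataclass
class Ticket:
    id: int
    title: str
    status: str = "open"


class TicketApi:
    """In-memory stand-in for an external ticketing system's API (hypothetical)."""

    def __init__(self) -> None:
        self._tickets: dict[int, Ticket] = {}
        self._ids = count(1)  # issues ticket IDs 1, 2, 3, ...

    def create_ticket(self, title: str) -> Ticket:
        """Write operation: add a new open ticket."""
        ticket = Ticket(id=next(self._ids), title=title)
        self._tickets[ticket.id] = ticket
        return ticket

    def list_tickets(self, status: Optional[str] = None) -> list[Ticket]:
        """Read operation: return all tickets, optionally filtered by status."""
        tickets = list(self._tickets.values())
        if status is None:
            return tickets
        return [t for t in tickets if t.status == status]

    def update_status(self, ticket_id: int, status: str) -> Ticket:
        """Write operation: change a ticket's status (raises KeyError if unknown)."""
        ticket = self._tickets[ticket_id]
        ticket.status = status
        return ticket


api = TicketApi()
ticket = api.create_ticket("Laptop won't connect to Wi-Fi")
api.update_status(ticket.id, "closed")
print(api.list_tickets(status="closed"))
```

The agent's role is to map a natural language request, such as "close my Wi-Fi ticket", to the matching operation and its parameters.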

How does a declarative agent use custom knowledge and custom actions to answer questions?

Let’s see how custom knowledge and custom actions are used together in a declarative agent to solve our IT support problem.

You build a declarative agent with the following configuration:

- Custom instructions: Use instructions to shape the responses so that they're appropriate for nontechnical users.

- Custom grounding data: Use grounding data to improve the relevancy and accuracy of responses. For example, use information stored in knowledge base articles on a SharePoint Online site.

- Custom action: Use actions to access data in real time from external systems. For example, use an action to interact with data in the support ticketing system via its API to manage support tickets using natural language.
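
The configuration above can be pictured as a single structure with three parts. The following Python sketch is illustrative only: the key names are assumptions chosen for readability and don't follow the actual declarative agent manifest schema, and the site URL is hypothetical.

```python
import json

# Illustrative configuration; key names are assumptions, not the real manifest schema.
agent_config = {
    "name": "IT support",
    "instructions": "Your responses should be concise and suitable for a non-technical audience.",
    "grounding": [
        {"type": "sharepoint", "url": "https://contoso.sharepoint.com/sites/ITHelpDesk"}
    ],
    "actions": [
        {"name": "Tickets", "operations": ["list", "create", "update"]}
    ],
}

REQUIRED_KEYS = ("instructions", "grounding", "actions")


def missing_pieces(config: dict) -> list[str]:
    """Return the names of any of the three pieces that are absent or empty."""
    return [key for key in REQUIRED_KEYS if not config.get(key)]


print(json.dumps(agent_config, indent=2))
print("Missing pieces:", missing_pieces(agent_config))
```

A check like `missing_pieces` is one simple way to confirm that instructions, grounding data, and actions are all present before you test the agent.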

The following steps describe how Microsoft 365 Copilot handles user prompts and generates a response:

- Input: The user submits a prompt.

- Preliminary checks: Copilot performs responsible AI checks and security measures to ensure the user prompt doesn't pose any risks.

- Reasoning: Copilot creates a plan to respond to the user prompt.

- Grounding data: Copilot retrieves the relevant information from grounding data.

- Actions: Copilot retrieves data from relevant actions.

- Instructions: Copilot retrieves the declarative agent instructions.

- Response: The Copilot orchestrator compiles all the data gathered during the reasoning process and passes it to the LLM to create a final response.

- Output: Copilot delivers the response to the user interface and updates the conversation.
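
The steps above can be sketched as a small Python pipeline. This is a simplified model under stated assumptions, not Copilot's actual orchestrator: every function name is hypothetical, the blocked-term check is a toy stand-in for responsible AI checks, and the grounding search, action call, and LLM are passed in as fake callables so the sketch runs on its own.

```python
from typing import Callable

# Toy stand-in for responsible AI and security checks (assumption).
BLOCKED_TERMS = ("ignore previous instructions",)


def preliminary_checks(prompt: str) -> None:
    """Reject prompts that contain a blocked term."""
    if any(term in prompt.lower() for term in BLOCKED_TERMS):
        raise ValueError("Prompt rejected by preliminary checks")


def make_plan(prompt: str) -> dict[str, bool]:
    """Toy reasoning step: decide which sources to consult."""
    return {"grounding": True, "actions": "ticket" in prompt.lower()}


def handle_prompt(
    prompt: str,
    instructions: str,
    search_grounding: Callable[[str], list[str]],
    call_action: Callable[[str], list[str]],
    llm: Callable[[dict], str],
    conversation: list[tuple[str, str]],
) -> str:
    preliminary_checks(prompt)                                         # Preliminary checks
    plan = make_plan(prompt)                                           # Reasoning
    grounding = search_grounding(prompt) if plan["grounding"] else []  # Grounding data
    action_data = call_action(prompt) if plan["actions"] else []       # Actions
    context = {                                                        # Instructions
        "instructions": instructions,
        "prompt": prompt,
        "grounding": grounding,
        "action_data": action_data,
    }
    response = llm(context)                                            # Response
    conversation.append((prompt, response))                            # Output
    return response


# Fake dependencies so the sketch runs without any external service.
conversation: list[tuple[str, str]] = []
response = handle_prompt(
    prompt="What is the status of my ticket?",
    instructions="Be concise and cite your sources.",
    search_grounding=lambda p: ["KB article: Checking ticket status"],
    call_action=lambda p: ["Ticket 1: open"],
    llm=lambda ctx: f"Used {len(ctx['grounding'])} document(s) and {len(ctx['action_data'])} ticket(s).",
    conversation=conversation,
)
print(response)
```

Passing the dependencies in as callables keeps each step visible and lets you swap a fake for a real service without changing the flow.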