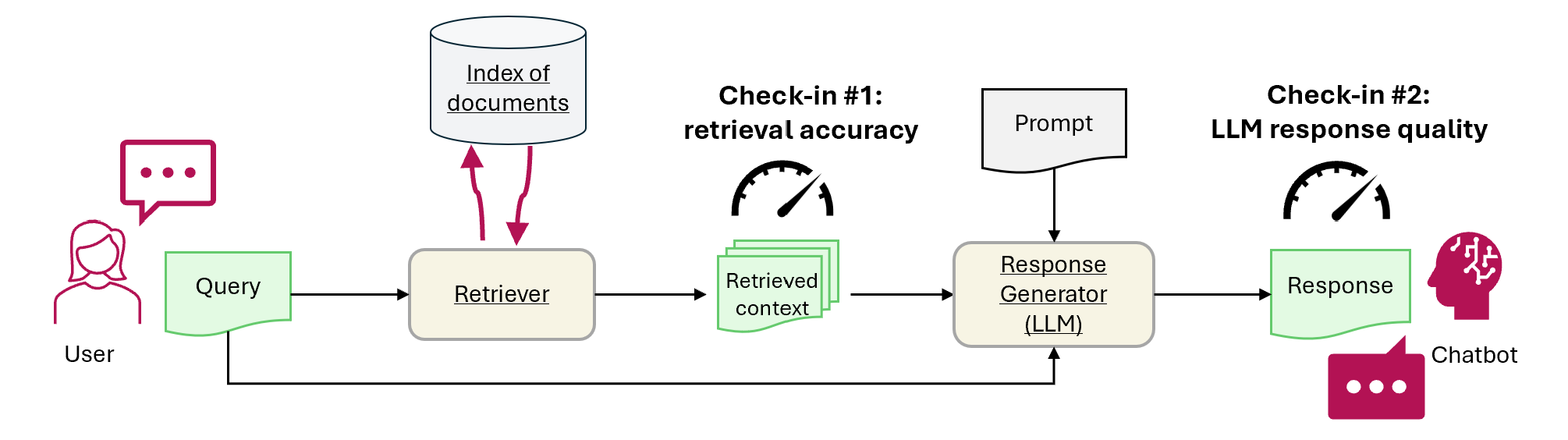

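This tutorial demonstrates how to use Fabric to evaluate the performance of a RAG application. The evaluation focuses on the two main RAG components: the retriever (Azure AI Search) and the response generator (an LLM that uses the user's query, the retrieved context, and a prompt to produce a reply). The main steps are:

- Set up the Azure OpenAI and Azure AI Search services

- Load data from CMU's QA dataset of Wikipedia articles to build a benchmark

- Run a smoke test with one query to confirm that the RAG system works end to end

- Define deterministic and AI-assisted metrics for evaluation

- Checkpoint 1: Evaluate retriever performance using top-N accuracy

- Checkpoint 2: Evaluate response generator performance using groundedness, relevance, and similarity metrics

- Visualize and store the evaluation results in OneLake for future reference and ongoing evaluation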

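Prerequisites

Before you start this tutorial, complete the step-by-step guide "Building Retrieval Augmented Generation in Fabric".

You need the following services to run the notebook:

- Microsoft Fabric

- A lakehouse added to this notebook (it contains the data you added in the previous tutorial).

- Azure AI Studio for OpenAI

- Azure AI Search (containing the data you indexed in the previous tutorial).

In the previous tutorial, you uploaded data to your lakehouse and built the document index that the RAG system uses. Use that index in this exercise to learn core techniques for evaluating RAG performance and identifying potential problems. If you didn't create the index, or you deleted it, follow the quickstart guide to complete the prerequisites.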

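Set up access to Azure OpenAI and Azure AI Search

Define the endpoints and the required keys. Import the required libraries and functions. Instantiate the clients for Azure OpenAI and Azure AI Search. Define a function wrapper with a prompt for querying the RAG system.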

# Enter your Azure OpenAI service values

aoai_endpoint = "https://<your-resource-name>.openai.azure.com" # TODO: Provide the Azure OpenAI resource endpoint (replace <your-resource-name>)

aoai_key = "" # TODO: Fill in your API key from Azure OpenAI

aoai_deployment_name_embeddings = "text-embedding-ada-002"

aoai_model_name_query = "gpt-4-32k"

aoai_model_name_metrics = "gpt-4-32k"

aoai_api_version = "2024-02-01"

# Setup key accesses to Azure AI Search

aisearch_index_name = "" # TODO: Provide the name of the index created in the previous tutorial (lowercase letters, numbers, and dashes only)

aisearch_api_key = "" # TODO: Fill in your API key from Azure AI Search

aisearch_endpoint = "https://<your-search-service-name>.search.windows.net" # TODO: Provide the URL endpoint of your Azure AI Search service (replace <your-search-service-name>)

import warnings

warnings.filterwarnings("ignore", category=DeprecationWarning)

import os, requests, json

from datetime import datetime, timedelta

from azure.core.credentials import AzureKeyCredential

from azure.search.documents import SearchClient

from pyspark.sql import functions as F

from pyspark.sql.functions import to_timestamp, current_timestamp, concat, col, split, explode, udf, monotonically_increasing_id, when, rand, coalesce, lit, input_file_name, regexp_extract, concat_ws, length, ceil

from pyspark.sql.types import StructType, StructField, StringType, IntegerType, TimestampType, ArrayType, FloatType

from pyspark.sql import Row

import pandas as pd

from azure.search.documents.indexes import SearchIndexClient

from azure.search.documents.models import (

VectorizedQuery,

)

from azure.search.documents.indexes.models import (

SearchIndex,

SearchField,

SearchFieldDataType,

SimpleField,

SearchableField,

SemanticConfiguration,

SemanticPrioritizedFields,

SemanticField,

SemanticSearch,

VectorSearch,

HnswAlgorithmConfiguration,

HnswParameters,

VectorSearchProfile,

VectorSearchAlgorithmKind,

VectorSearchAlgorithmMetric,

)

import openai

from openai import AzureOpenAI

import uuid

import matplotlib.pyplot as plt

from synapse.ml.featurize.text import PageSplitter

import ipywidgets as widgets

from IPython.display import display as w_display


# Configure access to OpenAI endpoint

openai.api_type = "azure"

openai.api_key = aoai_key

openai.api_base = aoai_endpoint

openai.api_version = aoai_api_version

# Create client for accessing embedding endpoint

embed_client = AzureOpenAI(

api_version=aoai_api_version,

azure_endpoint=aoai_endpoint,

api_key=aoai_key,

)

# Create client for accessing chat endpoint

chat_client = AzureOpenAI(

azure_endpoint=aoai_endpoint,

api_key=aoai_key,

api_version=aoai_api_version,

)

# Configure access to Azure AI Search

search_client = SearchClient(

aisearch_endpoint,

aisearch_index_name,

credential=AzureKeyCredential(aisearch_api_key)

)

The following functions implement the two main RAG components: the retriever (get_context_source) and the response generator (get_answer). The code is similar to the previous tutorial. The topN parameter sets the number of relevant resources to retrieve (this tutorial uses 3, but the best value can vary by dataset):

# Implement retriever
def get_context_source(question, topN=3):
    """
    Retrieves contextual information and sources related to a given question using embeddings and a vector search.

    Parameters:
    question (str): The question for which the context and sources are to be retrieved.
    topN (int, optional): The number of top results to retrieve. Default is 3.

    Returns:
    List: A list containing two elements:
        1. A string with the concatenated retrieved context.
        2. A list of retrieved source paths.
    """
    embed_client = openai.AzureOpenAI(
        api_version=aoai_api_version,
        azure_endpoint=aoai_endpoint,
        api_key=aoai_key,
    )

    # Embed the question and run a vector search against the "Embedding" field
    query_embedding = embed_client.embeddings.create(input=question, model=aoai_deployment_name_embeddings).data[0].embedding
    vector_query = VectorizedQuery(vector=query_embedding, k_nearest_neighbors=topN, fields="Embedding")

    results = search_client.search(
        vector_queries=[vector_query],
        top=topN,
    )

    # Concatenate the retrieved chunks and collect their source paths
    retrieved_context = ""
    retrieved_sources = []
    for result in results:
        retrieved_context += result['ExtractedPath'] + "\n" + result['Chunk'] + "\n\n"
        retrieved_sources.append(result['ExtractedPath'])

    return [retrieved_context, retrieved_sources]

# Implement response generator
def get_answer(question, context):
    """
    Generates a response to a given question using provided context and an Azure OpenAI model.

    Parameters:
    question (str): The question that needs to be answered.
    context (str): The contextual information related to the question that will help generate a relevant response.

    Returns:
    str: The response generated by the Azure OpenAI model based on the provided question and context.
    """
    messages = [
        {
            "role": "system",
            "content": "You are a chat assistant. Use provided text to ground your response. Give a one-word answer when possible ('yes'/'no' is OK where appropriate, no details). Unnecessary words incur a $500 penalty."
        }
    ]
    messages.append(
        {
            "role": "user",
            "content": question + "\n" + context,
        },
    )

    chat_client = openai.AzureOpenAI(
        azure_endpoint=aoai_endpoint,
        api_key=aoai_key,
        api_version=aoai_api_version,
    )

    chat_completion = chat_client.chat.completions.create(
        model=aoai_model_name_query,
        messages=messages,
    )

    return chat_completion.choices[0].message.content


Dataset

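Version 1.2 of the Carnegie Mellon University Question-Answer Dataset is a corpus of Wikipedia articles with manually written factual questions and answers. It is hosted in Azure Blob Storage under the GFDL. The dataset contains a single table with the following fields:

- ArticleTitle: the name of the Wikipedia article that the questions and answers come from

- Question: a manually written question about the article

- Answer: a manually written answer based on the article

- DifficultyFromQuestioner: the difficulty rating assigned by the author of the question

- DifficultyFromAnswerer: the difficulty rating assigned by the evaluator; can differ from DifficultyFromQuestioner

- ExtractedPath: the path to the original article (one article can have multiple question-answer pairs)

- text: the cleaned Wikipedia text

Download the LICENSE-S08 and LICENSE-S09 files from the same location for license details.

History and citation

Use this citation for the dataset: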

CMU Question/Answer Dataset, Release 1.2

August 23, 2013

Noah A. Smith, Michael Heilman, and Rebecca Hwa

Question Generation as a Competitive Undergraduate Course Project

In Proceedings of the NSF Workshop on the Question Generation Shared Task and Evaluation Challenge, Arlington, VA, September 2008.

Available at http://www.cs.cmu.edu/~nasmith/papers/smith+heilman+hwa.nsf08.pdf.

Original dataset acknowledgments:

This research project was supported by NSF IIS-0713265 (to Smith), an NSF Graduate Research Fellowship (to Heilman), NSF IIS-0712810 and IIS-0745914 (to Hwa), and Institute of Education Sciences, U.S. Department of Education R305B040063 (to Carnegie Mellon).

cmu-qa-08-09 (modified version)

June 12, 2024

Amir Jafari, Alexandra Savelieva, Brice Chung, Hossein Khadivi Heris, Journey McDowell

This release uses the GNU Free Documentation License (GFDL) (http://www.gnu.org/licenses/fdl.html).

The GNU license applies to all copies of the dataset.

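Create the benchmark

Import the benchmark. For this demo, use a subset of questions from the S08/set1 and S08/set2 groups. To keep one question per article, apply df.dropDuplicates(["ExtractedPath"]). Also drop duplicate questions. The curation process added difficulty labels; this example restricts them to medium.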

df = spark.sql("SELECT * FROM data_load_tests.cmu_qa")

# Filter the DataFrame to include the specified paths

df = df.filter((col("ExtractedPath").like("S08/data/set1/%")) | (col("ExtractedPath").like("S08/data/set2/%")))

# Keep only medium-difficulty questions.

df = df.filter(col("DifficultyFromQuestioner") == "medium")

# Drop duplicate questions and source paths.

df = df.dropDuplicates(["Question"])

df = df.dropDuplicates(["ExtractedPath"])

num_rows = df.count()

num_columns = len(df.columns)

print(f"Number of rows: {num_rows}, Number of columns: {num_columns}")

# Persist the DataFrame

df.persist()

display(df)

Cell output: Number of rows: 20, Number of columns: 7

The result is a DataFrame with 20 rows, which serves as the demo benchmark. The key fields are Question, Answer (the human-curated ground truth answer), and ExtractedPath (the source document). Adjust the filters to include other questions, and vary the difficulty to get a more realistic sample. Give it a try.

Run a simple end-to-end test

Start with an end-to-end smoke test of retrieval augmented generation (RAG).

question = "How many suborders are turtles divided into?"

retrieved_context, retrieved_sources = get_context_source(question)

answer = get_answer(question, retrieved_context)

print(answer)

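Cell output: Three

This smoke test helps find problems in the RAG implementation, such as incorrect credentials, a missing or empty vector index, or incompatible function interfaces. If the test fails, check for these problems. Expected output: Three. If the smoke test passes, continue to the next section to evaluate RAG further.

Establish metrics

Define a deterministic metric for evaluating the retriever. It is inspired by search engines: it checks whether the list of retrieved sources includes the ground truth source. The metric is a top-N accuracy score because the topN parameter sets the number of retrieved sources.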
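The checks behind the smoke test can be written down as explicit assertions. The following is a minimal sketch and not part of the original notebook: `run_smoke_test` is a hypothetical helper, and the two stub functions stand in for `get_context_source` and `get_answer` so that the sketch runs without Azure credentials.

```python
def run_smoke_test(retrieve, generate, question, expected_answer):
    """Run one question through the retriever and the generator.

    `retrieve` must return (context, sources); `generate` must return a string.
    Returns a list of human-readable problems; an empty list means the test passed.
    """
    problems = []
    context, sources = retrieve(question)
    if not sources:
        problems.append("retriever returned no sources (missing or empty index?)")
    if not context.strip():
        problems.append("retrieved context is empty")
    answer = generate(question, context)
    if expected_answer.lower() not in answer.lower():
        problems.append(f"unexpected answer: {answer!r}")
    return problems


# Stubs standing in for get_context_source and get_answer (assumptions for this sketch).
def fake_retrieve(question, topN=3):
    return ["S08/data/set1/a9\nPlaceholder text about turtle suborders.\n\n", ["S08/data/set1/a9"]]


def fake_generate(question, context):
    return "Three"


print(run_smoke_test(fake_retrieve, fake_generate,
                     "How many suborders are turtles divided into?", "three"))
```

In the notebook, passing `get_context_source` and `get_answer` in place of the stubs would exercise the real services; an empty list means no problem was detected.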

def get_retrieval_score(target_source, retrieved_sources):
    if target_source in retrieved_sources:
        return 1
    else:
        return 0

According to the benchmark, the answer is contained in the source with ID "S08/data/set1/a9". Testing the function on the example run above returns 1, as expected, because that source is among the top three relevant text chunks.

print("Retrieved sources:", retrieved_sources)

get_retrieval_score("S08/data/set1/a9", retrieved_sources)

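Cell output: Retrieved sources: ['S08/data/set1/a9', 'S08/data/set1/a9', 'S08/data/set1/a5']
1

This section defines the AI-assisted metrics. The prompt template includes a few example inputs (CONTEXT and ANSWER) together with the suggested output; this technique is known as few-shot prompting. The prompts are the same as those used in Azure AI Studio. Learn more in the documentation on built-in evaluation metrics. This demo relies mainly on the groundedness and relevance metrics, which are usually the most useful and reliable for evaluating GPT models. Other metrics can be useful but provide less insight; for example, an answer doesn't have to be similar to be correct, so a similarity score can be misleading. All metrics use a scale of 1 to 5, and higher is better. Groundedness takes only two inputs (the context and the generated answer), whereas relevance also takes the question, and similarity compares the generated answer with the ground truth answer.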
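Averaged over a benchmark, this 0/1 score becomes a top-N hit rate. The sketch below shows that aggregation on made-up source lists (the paths are illustrative, not benchmark results). `hit_rate_at_n` is a hypothetical helper that truncates each ranked list to N so that different values of N can be compared.

```python
def get_retrieval_score(target_source, retrieved_sources):
    """Return 1 if the ground truth source is among the retrieved sources, else 0."""
    return 1 if target_source in retrieved_sources else 0


def hit_rate_at_n(examples, n):
    """Fraction of examples whose target appears in the first n retrieved sources.

    `examples` is a list of (target_source, ranked_retrieved_sources) pairs.
    """
    if not examples:
        return 0.0
    hits = sum(get_retrieval_score(target, ranked[:n]) for target, ranked in examples)
    return hits / len(examples)


# Illustrative data only: (ground truth source, sources ranked by the retriever).
examples = [
    ("set1/a9", ["set1/a9", "set1/a9", "set1/a5"]),  # hit at rank 1
    ("set1/a2", ["set1/a7", "set1/a2", "set1/a2"]),  # hit at rank 2
    ("set2/a4", ["set2/a1", "set2/a3", "set2/a4"]),  # hit at rank 3
    ("set2/a8", ["set2/a1", "set2/a2", "set2/a3"]),  # miss
]

for n in (1, 2, 3):
    print(f"top-{n} hit rate: {hit_rate_at_n(examples, n):.2f}")
```

A hit rate that keeps rising with N suggests that the correct source is often retrieved but ranked low, which is one reason the best topN can vary by dataset.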

def get_groundedness_metric(context, answer):
    """Get the groundedness score from the LLM using the context and answer."""

    groundedness_prompt_template = """
You are presented with a CONTEXT and an ANSWER about that CONTEXT. Decide whether the ANSWER is entailed by the CONTEXT by choosing one of the following ratings:
1. 5: The ANSWER follows logically from the information contained in the CONTEXT.
2. 1: The ANSWER is logically false from the information contained in the CONTEXT.
3. an integer score between 1 and 5 and if such integer score does not exist, use 1: It is not possible to determine whether the ANSWER is true or false without further information. Read the passage of information thoroughly and select the correct answer from the three answer labels. Read the CONTEXT thoroughly to ensure you know what the CONTEXT entails. Note the ANSWER is generated by a computer system, it can contain certain symbols, which should not be a negative factor in the evaluation.

Independent Examples:
## Example Task #1 Input:
"CONTEXT": "Some are reported as not having been wanted at all.", "QUESTION": "", "ANSWER": "All are reported as being completely and fully wanted."
## Example Task #1 Output:
1
## Example Task #2 Input:
"CONTEXT": "Ten new television shows appeared during the month of September. Five of the shows were sitcoms, three were hourlong dramas, and two were news-magazine shows. By January, only seven of these new shows were still on the air. Five of the shows that remained were sitcoms.", "QUESTION": "", "ANSWER": "At least one of the shows that were cancelled was an hourlong drama."
## Example Task #2 Output:
5
## Example Task #3 Input:
"CONTEXT": "In Quebec, an allophone is a resident, usually an immigrant, whose mother tongue or home language is neither French nor English.", "QUESTION": "", "ANSWER": "In Quebec, an allophone is a resident, usually an immigrant, whose mother tongue or home language is not French."
## Example Task #3 Output:
5
## Example Task #4 Input:
"CONTEXT": "Some are reported as not having been wanted at all.", "QUESTION": "", "ANSWER": "All are reported as being completely and fully wanted."
## Example Task #4 Output:
1

## Actual Task Input:
"CONTEXT": {context}, "QUESTION": "", "ANSWER": {answer}

Reminder: The return values for each task should be correctly formatted as an integer between 1 and 5. Do not repeat the context and question. Don't explain the reasoning. The answer should include only a number: 1, 2, 3, 4, or 5.

Actual Task Output:
"""

    metric_client = openai.AzureOpenAI(
        api_version=aoai_api_version,
        azure_endpoint=aoai_endpoint,
        api_key=aoai_key,
    )

    messages = [
        {
            "role": "system",
            "content": "You are an AI assistant. You will be given the definition of an evaluation metric for assessing the quality of an answer in a question-answering task. Your job is to compute an accurate evaluation score using the provided evaluation metric."
        },
        {
            "role": "user",
            "content": groundedness_prompt_template.format(context=context, answer=answer)
        }
    ]

    # temperature=0 keeps the scoring as repeatable as the service allows
    metric_completion = metric_client.chat.completions.create(
        model=aoai_model_name_metrics,
        messages=messages,
        temperature=0,
    )

    return metric_completion.choices[0].message.content


def get_relevance_metric(context, question, answer):
    """Get the relevance score from the LLM using the context, question, and answer."""

    relevance_prompt_template = """
Relevance measures how well the answer addresses the main aspects of the question, based on the context. Consider whether all and only the important aspects are contained in the answer when evaluating relevance. Given the context and question, score the relevance of the answer between one to five stars using the following rating scale:
One star: the answer completely lacks relevance
Two stars: the answer mostly lacks relevance
Three stars: the answer is partially relevant
Four stars: the answer is mostly relevant
Five stars: the answer has perfect relevance

This rating value should always be an integer between 1 and 5. So the rating produced should be 1 or 2 or 3 or 4 or 5.

context: Marie Curie was a Polish-born physicist and chemist who pioneered research on radioactivity and was the first woman to win a Nobel Prize.
question: What field did Marie Curie excel in?
answer: Marie Curie was a renowned painter who focused mainly on impressionist styles and techniques.
stars: 1

context: The Beatles were an English rock band formed in Liverpool in 1960, and they are widely regarded as the most influential music band in history.
question: Where were The Beatles formed?
answer: The band The Beatles began their journey in London, England, and they changed the history of music.
stars: 2

context: The recent Mars rover, Perseverance, was launched in 2020 with the main goal of searching for signs of ancient life on Mars. The rover also carries an experiment called MOXIE, which aims to generate oxygen from the Martian atmosphere.
question: What are the main goals of Perseverance Mars rover mission?
answer: The Perseverance Mars rover mission focuses on searching for signs of ancient life on Mars.
stars: 3

context: The Mediterranean diet is a commonly recommended dietary plan that emphasizes fruits, vegetables, whole grains, legumes, lean proteins, and healthy fats. Studies have shown that it offers numerous health benefits, including a reduced risk of heart disease and improved cognitive health.
question: What are the main components of the Mediterranean diet?
answer: The Mediterranean diet primarily consists of fruits, vegetables, whole grains, and legumes.
stars: 4

context: The Queen's Royal Castle is a well-known tourist attraction in the United Kingdom. It spans over 500 acres and contains extensive gardens and parks. The castle was built in the 15th century and has been home to generations of royalty.
question: What are the main attractions of the Queen's Royal Castle?
answer: The main attractions of the Queen's Royal Castle are its expansive 500-acre grounds, extensive gardens, parks, and the historical castle itself, which dates back to the 15th century and has housed generations of royalty.
stars: 5

Don't explain the reasoning. The answer should include only a number: 1, 2, 3, 4, or 5.

context: {context}
question: {question}
answer: {answer}
stars:
"""

    metric_client = openai.AzureOpenAI(
        api_version=aoai_api_version,
        azure_endpoint=aoai_endpoint,
        api_key=aoai_key,
    )

    messages = [
        {
            "role": "system",
            "content": "You are an AI assistant. You are given the definition of an evaluation metric for assessing the quality of an answer in a question-answering task. Compute an accurate evaluation score using the provided evaluation metric."
        },
        {
            "role": "user",
            "content": relevance_prompt_template.format(context=context, question=question, answer=answer)
        }
    ]

    metric_completion = metric_client.chat.completions.create(
        model=aoai_model_name_metrics,
        messages=messages,
        temperature=0,
    )

    return metric_completion.choices[0].message.content


def get_similarity_metric(question, ground_truth, answer):
    """Get the similarity score from the LLM using the question, ground truth answer, and generated answer."""

    similarity_prompt_template = """
Equivalence, as a metric, measures the similarity between the predicted answer and the correct answer. If the information and content in the predicted answer is similar or equivalent to the correct answer, then the value of the Equivalence metric should be high, else it should be low. Given the question, correct answer, and predicted answer, determine the value of Equivalence metric using the following rating scale:
One star: the predicted answer is not at all similar to the correct answer
Two stars: the predicted answer is mostly not similar to the correct answer
Three stars: the predicted answer is somewhat similar to the correct answer
Four stars: the predicted answer is mostly similar to the correct answer
Five stars: the predicted answer is completely similar to the correct answer

This rating value should always be an integer between 1 and 5. So the rating produced should be 1 or 2 or 3 or 4 or 5.

The examples below show the Equivalence score for a question, a correct answer, and a predicted answer.

question: What is the role of ribosomes?
correct answer: Ribosomes are cellular structures responsible for protein synthesis. They interpret the genetic information carried by messenger RNA (mRNA) and use it to assemble amino acids into proteins.
predicted answer: Ribosomes participate in carbohydrate breakdown by removing nutrients from complex sugar molecules.
stars: 1

question: Why did the Titanic sink?
correct answer: The Titanic sank after it struck an iceberg during its maiden voyage in 1912. The impact caused the ship's hull to breach, allowing water to flood into the vessel. The ship's design, lifeboat shortage, and lack of timely rescue efforts contributed to the tragic loss of life.
predicted answer: The sinking of the Titanic was a result of a large iceberg collision. This caused the ship to take on water and eventually sink, leading to the death of many passengers due to a shortage of lifeboats and insufficient rescue attempts.
stars: 2

question: What causes seasons on Earth?
correct answer: Seasons on Earth are caused by the tilt of the Earth's axis and its revolution around the Sun. As the Earth orbits the Sun, the tilt causes different parts of the planet to receive varying amounts of sunlight, resulting in changes in temperature and weather patterns.
predicted answer: Seasons occur because of the Earth's rotation and its elliptical orbit around the Sun. The tilt of the Earth's axis causes regions to be subjected to different sunlight intensities, which leads to temperature fluctuations and alternating weather conditions.
stars: 3

question: How does photosynthesis work?
correct answer: Photosynthesis is a process by which green plants and some other organisms convert light energy into chemical energy. This occurs as light is absorbed by chlorophyll molecules, and then carbon dioxide and water are converted into glucose and oxygen through a series of reactions.
predicted answer: In photosynthesis, sunlight is transformed into nutrients by plants and certain microorganisms. Light is captured by chlorophyll molecules, followed by the conversion of carbon dioxide and water into sugar and oxygen through multiple reactions.
stars: 4

question: What are the health benefits of regular exercise?
correct answer: Regular exercise can help maintain a healthy weight, increase muscle and bone strength, and reduce the risk of chronic diseases. It also promotes mental well-being by reducing stress and improving overall mood.
predicted answer: Routine physical activity can contribute to maintaining ideal body weight, enhancing muscle and bone strength, and preventing chronic illnesses. In addition, it supports mental health by alleviating stress and augmenting general mood.
stars: 5

Don't explain the reasoning. The answer should include only a number: 1, 2, 3, 4, or 5.

question: {question}
correct answer:{ground_truth}
predicted answer: {answer}
stars:
"""

    metric_client = openai.AzureOpenAI(
        api_version=aoai_api_version,
        azure_endpoint=aoai_endpoint,
        api_key=aoai_key,
    )

    messages = [
        {
            "role": "system",
            "content": "You are an AI assistant. You will be given the definition of an evaluation metric for assessing the quality of an answer in a question-answering task. Your job is to compute an accurate evaluation score using the provided evaluation metric."
        },
        {
            "role": "user",
            "content": similarity_prompt_template.format(question=question, ground_truth=ground_truth, answer=answer)
        }
    ]

    metric_completion = metric_client.chat.completions.create(
        model=aoai_model_name_metrics,
        messages=messages,
        temperature=0,
    )

    return metric_completion.choices[0].message.content

Test the relevance metric:

get_relevance_metric(retrieved_context, question, answer)

Cell output: '2'

On the 1-to-5 scale, a score of 5 indicates that the answer is fully relevant; the run shown here returned 2. The following code gets the similarity metric:

get_similarity_metric(question, 'three', answer)

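Cell output: '5'

A score of 5 means that the answer matches the ground truth answer curated by a human expert. AI-assisted metric scores can fluctuate for the same input, but they are faster to obtain than scores from human judges.

Evaluate RAG performance on the benchmark Q&A

Create function wrappers that can run at scale. Each wrapper's name ends in _udf (short for user-defined function); the wrappers conform to Spark requirements and let computations on large data run faster across the cluster.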
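Because the metric functions return the model's reply as a string and the score can fluctuate between runs, it can help to parse the reply defensively and to aggregate several runs. The sketch below is one possible approach, not part of the original notebook; `parse_score` and `stable_score` are hypothetical helpers, and the fake metric function only simulates three model replies.

```python
import re
from statistics import median


def parse_score(raw):
    """Extract an integer score from 1 to 5 from the model's reply, or return None."""
    if raw is None:
        return None
    match = re.search(r"\b([1-5])\b", str(raw))
    return int(match.group(1)) if match else None


def stable_score(metric_fn, *args, runs=3):
    """Call metric_fn several times and return the median of the valid scores."""
    scores = [parse_score(metric_fn(*args)) for _ in range(runs)]
    valid = [s for s in scores if s is not None]
    return median(valid) if valid else None


# Simulated replies standing in for three calls to a metric function.
replies = iter(["5", "stars: 4", "5"])
print(stable_score(lambda *_: next(replies), "some context", "some answer"))
```

Repeating each call multiplies the number of LLM requests, so whether the extra stability is worth the cost is a judgment call for your benchmark size.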

# UDF wrappers for RAG components
@udf(returnType=StructType([
    StructField("retrieved_context", StringType(), True),
    StructField("retrieved_sources", ArrayType(StringType()), True)
]))
def get_context_source_udf(question, topN=3):
    return get_context_source(question, topN)

@udf(returnType=StringType())
def get_answer_udf(question, context):
    return get_answer(question, context)

# UDF wrapper for retrieval score
@udf(returnType=StringType())
def get_retrieval_score_udf(target_source, retrieved_sources):
    return get_retrieval_score(target_source, retrieved_sources)

# UDF wrappers for AI-assisted metrics
@udf(returnType=StringType())
def get_groundedness_metric_udf(context, answer):
    return get_groundedness_metric(context, answer)

@udf(returnType=StringType())
def get_relevance_metric_udf(context, question, answer):
    return get_relevance_metric(context, question, answer)

@udf(returnType=StringType())
def get_similarity_metric_udf(question, ground_truth, answer):
    return get_similarity_metric(question, ground_truth, answer)

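Checkpoint #1: Retriever performance

The following code creates the result and retrieval_score columns in the benchmark DataFrame. The result column holds the retrieved context and sources (expanded into retrieved_context and retrieved_sources), and retrieval_score indicates whether the context provided to the LLM includes the article that the question is based on.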
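Each wrapped function calls a remote service, so one transient error can fail an entire Spark job. One possible mitigation, sketched here in plain Python so that it runs without a Spark session, is to retry and then return None (which Spark stores as null). `with_retries` is a hypothetical helper and not part of the original notebook; whether retrying is appropriate depends on your quota and on the kinds of errors you see.

```python
import functools
import time


def with_retries(max_attempts=3, delay_seconds=0.0):
    """Decorator: retry a function on any exception; return None if all attempts fail."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            for attempt in range(1, max_attempts + 1):
                try:
                    return fn(*args, **kwargs)
                except Exception:
                    # Wait before the next attempt, but not after the last one.
                    if attempt < max_attempts:
                        time.sleep(delay_seconds)
            return None
        return wrapper
    return decorator


# Demonstration with a function that fails twice before succeeding.
calls = {"count": 0}


@with_retries(max_attempts=3)
def flaky_metric(context, answer):
    calls["count"] += 1
    if calls["count"] < 3:
        raise RuntimeError("simulated transient error")
    return "5"


print(flaky_metric("some context", "some answer"))
```

In the notebook, a `_udf` wrapper could call a decorated version of the underlying function, for example `with_retries()(get_groundedness_metric)`; rows that still fail would then show up as nulls that you can count and rerun.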

df = df.withColumn("result", get_context_source_udf(df.Question)).select(df.columns+["result.*"])

df = df.withColumn('retrieval_score', get_retrieval_score_udf(df.ExtractedPath, df.retrieved_sources))

print("Aggregate Retrieval score: {:.2f}%".format((df.where(df["retrieval_score"] == 1).count() / df.count()) * 100))

display(df.select(["question", "retrieval_score", "ExtractedPath", "retrieved_sources"]))

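Cell output: Aggregate Retrieval score: 100.00%

For all questions, the retriever fetches the correct context, and in most cases it is the first entry. Azure AI Search performs well. You might wonder why the retrieved sources sometimes contain two or three identical values. This isn't an error: it means that the retriever fetched several chunks of the same article, which was split into multiple chunks because it didn't fit into one.

Checkpoint #2: Response generator performance

Pass the question and context to the LLM to generate an answer. Store the result in the generated_answer column of the DataFrame.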
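To inspect these two observations (repeated sources, and the correct source usually ranked first) on a single row, a small sketch like the following can help. Both helpers are hypothetical and not part of the original notebook; the source list is the one printed in the earlier retrieval test.

```python
def unique_sources(retrieved_sources):
    """Remove repeated sources while keeping the retriever's ranking order."""
    return list(dict.fromkeys(retrieved_sources))


def first_hit_rank(target_source, retrieved_sources):
    """Return the 1-based rank of the first chunk from the target source, or None."""
    for rank, source in enumerate(retrieved_sources, start=1):
        if source == target_source:
            return rank
    return None


retrieved = ["S08/data/set1/a9", "S08/data/set1/a9", "S08/data/set1/a5"]
print(unique_sources(retrieved))                      # two distinct articles
print(first_hit_rank("S08/data/set1/a9", retrieved))  # rank 1
print(first_hit_rank("S08/data/set1/a5", retrieved))  # rank 3
```

Counting distinct articles shows how much of the topN budget is spent on chunks of the same document, which is worth knowing before you change topN.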

df = df.withColumn('generated_answer', get_answer_udf(df.Question, df.retrieved_context))

Use the generated answer, the ground truth answer, the question, and the context to compute the metrics. Display the evaluation results for each question-answer pair:

df = df.withColumn('gpt_groundedness', get_groundedness_metric_udf(df.retrieved_context, df.generated_answer))

df = df.withColumn('gpt_relevance', get_relevance_metric_udf(df.retrieved_context, df.Question, df.generated_answer))

df = df.withColumn('gpt_similarity', get_similarity_metric_udf(df.Question, df.Answer, df.generated_answer))

display(df.select(["question", "answer", "generated_answer", "retrieval_score", "gpt_groundedness","gpt_relevance", "gpt_similarity"]))


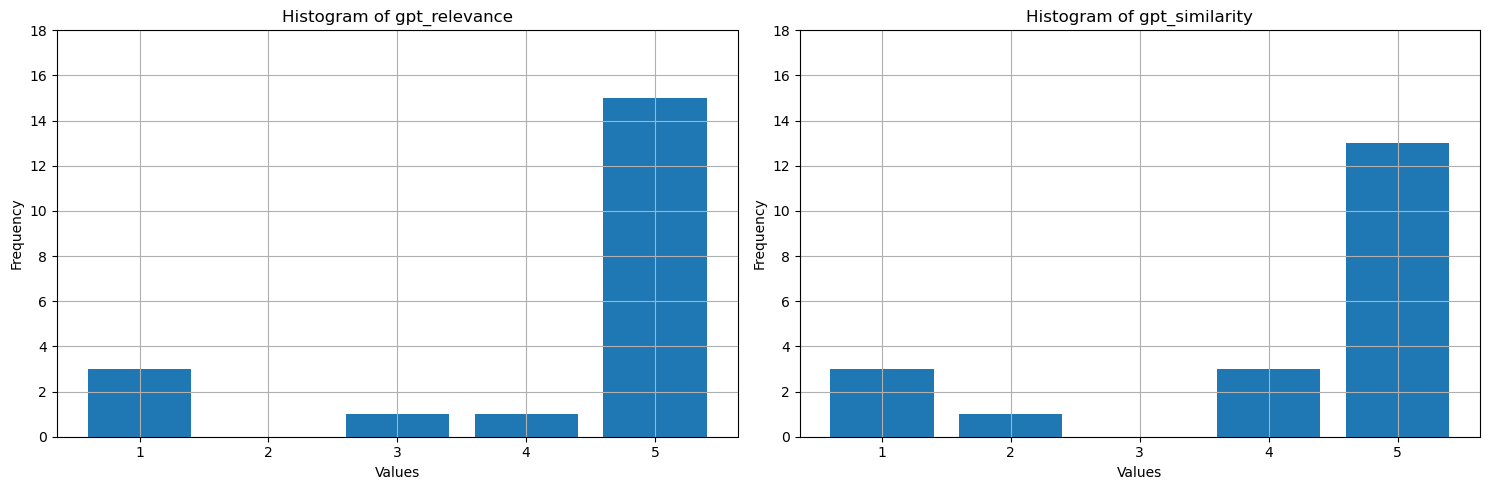

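What do these values show? To make them easier to interpret, plot histograms of groundedness, relevance, and similarity. The LLM is more verbose than the human ground truth answers, which lowers the similarity metric: about half of the answers are semantically correct but receive four stars, meaning mostly similar. Most values for all three metrics are 4 or 5, which indicates that RAG performance is good. There are some outliers. For example, for the question "How many species of otter are there?", the model generated "There are 13 species of otter", which is correct and has high relevance and similarity (5). For some reason, GPT considered it poorly grounded in the provided context and gave it only one star. In the other three cases where at least one AI-assisted metric is one star, the low score reflects a poor answer. The LLM occasionally mis-scores, but it usually scores accurately.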

# Convert Spark DataFrame to Pandas DataFrame

pandas_df = df.toPandas()

selected_columns = ['gpt_groundedness', 'gpt_relevance', 'gpt_similarity']

trimmed_df = pandas_df[selected_columns].astype(int)

# Define a function to plot histograms for the specified columns
def plot_histograms(dataframe, columns):
    # Set up the figure size and subplots
    plt.figure(figsize=(15, 5))
    for i, column in enumerate(columns, 1):
        plt.subplot(1, len(columns), i)
        # Filter the dataframe to only include rows with values 1, 2, 3, 4, 5
        filtered_df = dataframe[dataframe[column].isin([1, 2, 3, 4, 5])]
        filtered_df[column].hist(bins=range(1, 7), align='left', rwidth=0.8)
        plt.title(f'Histogram of {column}')
        plt.xlabel('Values')
        plt.ylabel('Frequency')
        plt.xticks(range(1, 6))
        plt.yticks(range(0, 20, 2))

# Call the function to plot histograms for the specified columns

plot_histograms(trimmed_df, selected_columns)

# Show the plots

plt.tight_layout()

plt.show()

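As a final step, save the benchmark results to a table in the lakehouse. This step is optional but strongly recommended, because it makes your findings more useful. When you change something in the RAG system (for example, modify the prompt, update the index, or use a different GPT model in the response generator), you can measure the impact, quantify improvements, and detect regressions.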
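The same reading of the histograms can be done numerically. The following sketch counts scores per metric and lists rows where any metric is one star; it uses plain Python and made-up rows (not results from the benchmark), and `score_distribution` and `find_outliers` are hypothetical helpers.

```python
from collections import Counter

METRICS = ["gpt_groundedness", "gpt_relevance", "gpt_similarity"]


def score_distribution(rows, metric):
    """Count how many rows received each score from 1 to 5 for one metric."""
    counts = Counter(int(row[metric]) for row in rows)
    return {score: counts.get(score, 0) for score in range(1, 6)}


def find_outliers(rows, threshold=1):
    """Return the rows where at least one metric is at or below the threshold."""
    return [row for row in rows if any(int(row[m]) <= threshold for m in METRICS)]


# Illustrative rows only; scores arrive as strings, as in the notebook.
rows = [
    {"question": "q1", "gpt_groundedness": "5", "gpt_relevance": "5", "gpt_similarity": "4"},
    {"question": "q2", "gpt_groundedness": "1", "gpt_relevance": "5", "gpt_similarity": "5"},
    {"question": "q3", "gpt_groundedness": "4", "gpt_relevance": "4", "gpt_similarity": "4"},
]

print(score_distribution(rows, "gpt_similarity"))
print([row["question"] for row in find_outliers(rows)])
```

Rows flagged this way are the ones worth reading by hand, since a one-star score can mean either a poor answer or a scoring mistake by the LLM.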

# create name of experiment that is easy to refer to

friendly_name_of_experiment = "rag_tutorial_experiment_1"

# Note the current date and time

time_of_experiment = current_timestamp()

# Generate a unique GUID for all rows

experiment_id = str(uuid.uuid4())

# Add three new columns to the Spark DataFrame
updated_df = df.withColumn("execution_time", time_of_experiment) \
    .withColumn("experiment_id", lit(experiment_id)) \
    .withColumn("experiment_friendly_name", lit(friendly_name_of_experiment))

# Store the updated DataFrame in the default lakehouse as a table named 'rag_experiment_run_demo1'
table_name = "rag_experiment_run_demo1"
updated_df.write.format("parquet").mode("append").saveAsTable(table_name)

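Return to the experiment results at any time to review them, compare them with new experiments, and choose the configuration that works best for production.

Summary

Use AI-assisted metrics and the top-N retrieval rate to build your retrieval-augmented generation (RAG) solution.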
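Comparing two stored runs can be as simple as comparing the mean of each metric. The sketch below illustrates that idea on made-up scores (not results from this tutorial); `summarize` and `find_regressions` are hypothetical helpers, and the 0.1 tolerance is an arbitrary assumption that you would tune to your benchmark size.

```python
from statistics import mean


def summarize(scores_by_metric):
    """Average each metric's list of scores."""
    return {metric: mean(scores) for metric, scores in scores_by_metric.items()}


def find_regressions(baseline, candidate, tolerance=0.1):
    """Return metrics whose mean dropped by more than `tolerance` versus the baseline."""
    base, cand = summarize(baseline), summarize(candidate)
    return {
        metric: round(cand[metric] - base[metric], 2)
        for metric in base
        if metric in cand and base[metric] - cand[metric] > tolerance
    }


# Illustrative scores only, keyed by metric column name.
experiment_1 = {"gpt_groundedness": [5, 4, 5, 5], "gpt_relevance": [5, 5, 4, 4]}
experiment_2 = {"gpt_groundedness": [5, 5, 5, 5], "gpt_relevance": [4, 4, 3, 4]}

print(find_regressions(experiment_1, experiment_2))
```

With the results table saved above, the per-experiment score lists could be obtained by filtering on experiment_id; on a 20-question benchmark, small differences in the mean may be noise rather than a real regression.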