Training is the process where the model learns from your labeled data. After training is completed, you can view the model's performance to determine if you need to improve your model.

To train a model, start a training job. Only successfully completed jobs create a usable model. Training jobs expire after seven days, and after that you can no longer retrieve the job details. If your training job completed successfully and a model was created, the model isn't affected by the job expiration. You can only have one training job running at a time, and you can't start other jobs in the same project until it completes.

Depending on the dataset size and the complexity of your schema, training times can vary from a few minutes up to several hours.

Prerequisites

Before you train your model, you need:

- A successfully created project
- Labeled data

For more information, see the project development lifecycle.

Data splitting

Before you start the training process, the labeled documents in your project are divided into a training set and a testing set. Each set serves a different function.

The training set is used to train the model. This is where the model learns the class or classes assigned to each document.

The testing set is a blind set. The model doesn't see it during training, only during evaluation.

After the model is trained successfully, it makes predictions on the documents in the testing set. The model's evaluation metrics are calculated from these predictions.

We recommend making sure that all your classes are adequately represented in both the training and testing sets.
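If you keep a local copy of your labels, a quick check like the following can flag classes that are missing from one of the two sets before you train. This is a minimal sketch with some assumptions. The "document name to list of classes" mapping is a hypothetical local format, not something the service returns. The helper names `class_counts` and `missing_classes` are also illustrative.

```python
from collections import Counter
from typing import Dict, List, Set

# Hypothetical local label format: document name -> list of classes assigned to it.
Labels = Dict[str, List[str]]


def class_counts(labels: Labels) -> Counter:
    """Count how many documents carry each class."""
    counts: Counter = Counter()
    for classes in labels.values():
        counts.update(set(classes))  # count a class at most once per document
    return counts


def missing_classes(train: Labels, test: Labels) -> Dict[str, Set[str]]:
    """Report classes that appear in one set but not in the other."""
    train_classes = set(class_counts(train))
    test_classes = set(class_counts(test))
    return {
        "missing_from_training": test_classes - train_classes,
        "missing_from_testing": train_classes - test_classes,
    }


if __name__ == "__main__":
    train = {"doc1.txt": ["Sports"], "doc2.txt": ["Politics"], "doc3.txt": ["Sports", "Health"]}
    test = {"doc4.txt": ["Sports"], "doc5.txt": ["Politics"]}
    print(missing_classes(train, test))
    # {'missing_from_training': set(), 'missing_from_testing': {'Health'}}
```

`class_counts` also reports how many documents carry each class, which helps you judge whether a class is *adequately* represented, not just present.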

Custom text classification supports two methods for data splitting:

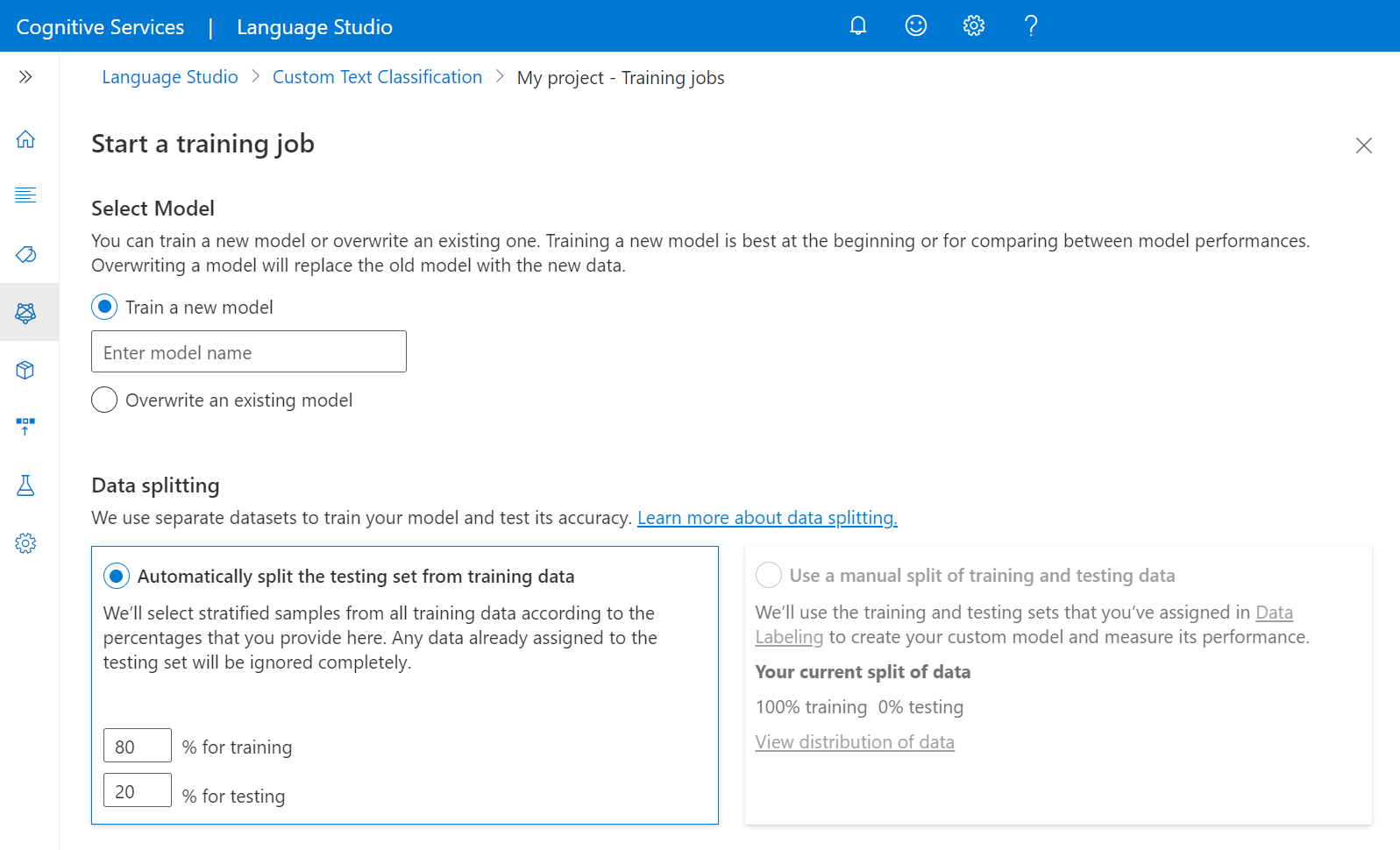

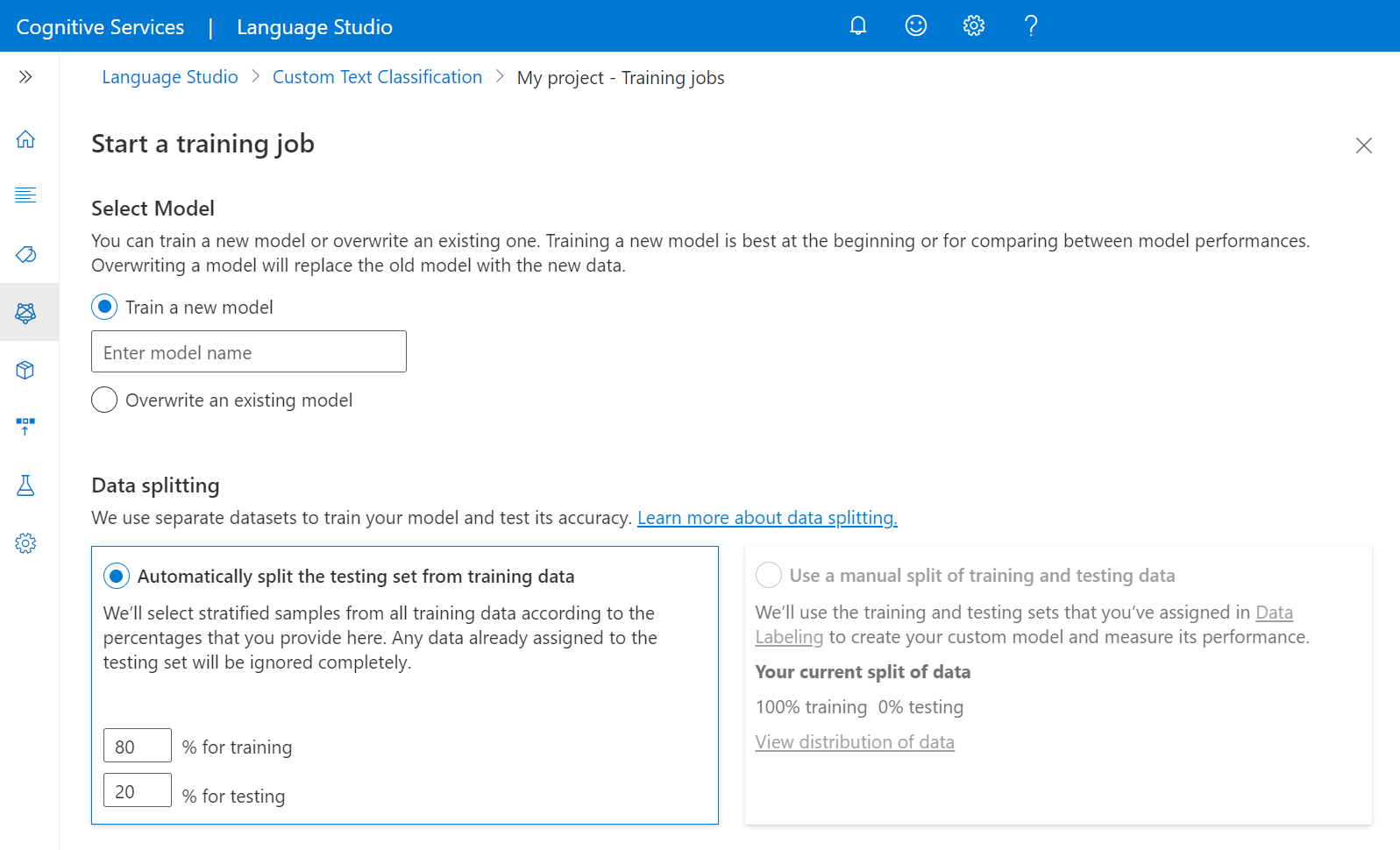

- Automatically splitting the testing set from training data: The system splits your labeled data between the training and testing sets according to the percentages you choose. The system tries to represent all classes in your training set. The recommended split is 80% for training and 20% for testing.

Note

If you choose the Automatically splitting the testing set from training data option, only the data assigned to the training set is split according to the percentages provided.

- Use a manual split of training and testing data: This method lets you define which labeled documents belong to which set. This option is only enabled if you added documents to your testing set during data labeling.

Train model

To start training your model from within the Language Studio:

Select Training jobs from the left side menu.

Select Start a training job from the top menu.

Select Train a new model and type the model name in the text box. You can also overwrite an existing model by selecting the option to overwrite an existing model and choosing the model you want to overwrite from the dropdown menu. Overwriting a trained model is irreversible, but it doesn't affect your deployed models until you deploy the new model.

Select a data splitting method. You can choose Automatically splitting the testing set from training data, where the system splits your labeled data between the training and testing sets according to the specified percentages. You can also choose Use a manual split of training and testing data. This option is only enabled if you added documents to your testing set during data labeling. For more information, see Data splitting.

Select the Train button.

If you select the training job ID from the list, a side pane appears where you can check the Training progress, Job status, and other details for this job.

Note

- Only successfully completed training jobs will generate models.

- Training can take anywhere from a few minutes to several hours, depending on the size of your labeled data.

- You can only have one training job running at a time. You can't start another training job within the same project until the running job completes.

Start training job

Submit a POST request using the following URL, headers, and JSON body to submit a training job. Replace the placeholder values with your own values.

{ENDPOINT}/language/authoring/analyze-text/projects/{PROJECT-NAME}/:train?api-version={API-VERSION}

| Placeholder | Value | Example |
|---|---|---|
| {ENDPOINT} | The endpoint for authenticating your API request. | https://<your-custom-subdomain>.cognitiveservices.azure.com |
| {PROJECT-NAME} | The name of your project. This value is case-sensitive. | myProject |
| {API-VERSION} | The version of the API you're calling. The value referenced is for the latest version released. Learn more about other available API versions. | 2022-05-01 |

Use the following header to authenticate your request.

| Key | Value |
|---|---|
| Ocp-Apim-Subscription-Key | The key to your resource. Used for authenticating your API requests. |

Request body

Use the following JSON in your request body. The model is given the name {MODEL-NAME} once training completes. Only successful training jobs produce models.

```json
{
  "modelLabel": "{MODEL-NAME}",
  "trainingConfigVersion": "{CONFIG-VERSION}",
  "evaluationOptions": {
    "kind": "percentage",
    "trainingSplitPercentage": 80,
    "testingSplitPercentage": 20
  }
}
```

| Key | Placeholder | Value | Example |
|---|---|---|---|
| modelLabel | {MODEL-NAME} | The model name that is assigned to your model once it's trained successfully. | myModel |
| trainingConfigVersion | {CONFIG-VERSION} | The model version used to train the model. | 2022-05-01 |
| evaluationOptions | | Option to split your data across training and testing sets. | {} |
| kind | percentage | Split method. Possible values are percentage or manual. For more information, see Data splitting. | percentage |
| trainingSplitPercentage | 80 | Percentage of your labeled data to include in the training set. Recommended value is 80. | 80 |
| testingSplitPercentage | 20 | Percentage of your labeled data to include in the testing set. Recommended value is 20. | 20 |
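The URL, header, and body described above can be assembled with Python's standard library. The following is a minimal sketch, not an official client. `build_train_request` is a hypothetical helper name. The `Content-Type: application/json` header is an assumption, since it isn't listed in the header table. The function only builds the request and doesn't send it.

```python
import json
import urllib.parse
import urllib.request


def build_train_request(endpoint: str, project_name: str, api_version: str, key: str,
                        model_label: str, config_version: str,
                        training_pct: int = 80, testing_pct: int = 20) -> urllib.request.Request:
    """Build (but don't send) the POST request that starts a training job."""
    # Project names are case-sensitive, so the name is only URL-escaped, never lowercased.
    url = (f"{endpoint.rstrip('/')}/language/authoring/analyze-text/projects/"
           f"{urllib.parse.quote(project_name)}/:train?api-version={api_version}")
    body = {
        "modelLabel": model_label,
        "trainingConfigVersion": config_version,
        "evaluationOptions": {
            "kind": "percentage",
            "trainingSplitPercentage": training_pct,
            "testingSplitPercentage": testing_pct,
        },
    }
    headers = {
        "Ocp-Apim-Subscription-Key": key,
        # Assumption: the body is sent with the standard JSON media type.
        "Content-Type": "application/json",
    }
    return urllib.request.Request(url, data=json.dumps(body).encode("utf-8"),
                                  headers=headers, method="POST")


if __name__ == "__main__":
    req = build_train_request("https://<your-custom-subdomain>.cognitiveservices.azure.com",
                              "myProject", "2022-05-01", "<your-key>", "myModel", "2022-05-01")
    print(req.get_method(), req.full_url)
    # To actually submit the job (a 202 response is expected):
    # with urllib.request.urlopen(req) as resp:
    #     print(resp.status, resp.headers["location"])
```

Keeping request construction separate from sending makes the URL and body easy to inspect or test before any call reaches your resource.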

Note

The trainingSplitPercentage and testingSplitPercentage values are required only if kind is set to percentage, and the two percentages should add up to 100.
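The rules in the note above can be checked locally before you submit the request. This is a sketch under assumptions. `evaluation_options` is a hypothetical helper. For the manual split, it sends only `{"kind": "manual"}`, which is an assumption consistent with the percentages being required only for percentage.

```python
from typing import Any, Dict, Optional


def evaluation_options(kind: str, training_pct: Optional[int] = None,
                       testing_pct: Optional[int] = None) -> Dict[str, Any]:
    """Build an evaluationOptions object, enforcing the split rules before submission."""
    if kind == "manual":
        # Manual split: the sets were chosen during labeling, so no percentages are sent.
        return {"kind": "manual"}
    if kind != "percentage":
        raise ValueError(f"kind must be 'percentage' or 'manual', got {kind!r}")
    if training_pct is None or testing_pct is None:
        raise ValueError("a percentage split needs both training and testing percentages")
    if training_pct + testing_pct != 100:
        raise ValueError(f"percentages must add up to 100, got {training_pct + testing_pct}")
    return {
        "kind": "percentage",
        "trainingSplitPercentage": training_pct,
        "testingSplitPercentage": testing_pct,
    }


if __name__ == "__main__":
    print(evaluation_options("percentage", 80, 20))
    print(evaluation_options("manual"))
```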

Once you send your API request, you receive a 202 response indicating that the job was submitted correctly. In the response headers, extract the location value formatted like this:

{ENDPOINT}/language/authoring/analyze-text/projects/{PROJECT-NAME}/train/jobs/{JOB-ID}?api-version={API-VERSION}

{JOB-ID} is used to identify your request, since this operation is asynchronous. You can use this URL to get the training status.
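Because {JOB-ID} is the last path segment of the location URL shown above, the standard library can extract it. This is a minimal sketch. It assumes the header always has the `.../train/jobs/{JOB-ID}?api-version=...` shape shown here. `job_id_from_location` is a hypothetical helper name.

```python
from urllib.parse import urlparse


def job_id_from_location(location: str) -> str:
    """Return the {JOB-ID} segment of a .../train/jobs/{JOB-ID}?api-version=... URL."""
    path = urlparse(location).path.rstrip("/")  # drops the ?api-version query string
    parts = path.split("/")
    # The job ID is the path segment that directly follows "jobs".
    if len(parts) < 2 or parts[-2] != "jobs":
        raise ValueError(f"unexpected location format: {location}")
    return parts[-1]


if __name__ == "__main__":
    loc = ("https://<your-custom-subdomain>.cognitiveservices.azure.com/language/authoring/"
           "analyze-text/projects/myProject/train/jobs/1234-abcd?api-version=2022-05-01")
    print(job_id_from_location(loc))  # 1234-abcd
```

In practice, you can also poll the full location URL directly. The job ID is mainly useful for logging or for building the cancel URL.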

Get training job status

Training can take some time, depending on the size of your training data and the complexity of your schema. You can use the following request to poll the status of the training job until it completes.

Use the following GET request to get the status of your model's training progress. Replace the placeholder values with your own values.

Request URL

{ENDPOINT}/language/authoring/analyze-text/projects/{PROJECT-NAME}/train/jobs/{JOB-ID}?api-version={API-VERSION}

| Placeholder | Value | Example |
|---|---|---|
| {ENDPOINT} | The endpoint for authenticating your API request. | https://<your-custom-subdomain>.cognitiveservices.azure.com |
| {PROJECT-NAME} | The name of your project. This value is case-sensitive. | myProject |
| {JOB-ID} | The ID for locating your model's training status. This value is in the location header value you received in the previous step. | xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxxx |
| {API-VERSION} | The version of the API you're calling. The value referenced is for the latest version released. For more information, see Model lifecycle. | 2022-05-01 |

Use the following header to authenticate your request.

| Key | Value |
|---|---|
| Ocp-Apim-Subscription-Key | The key to your resource. Used for authenticating your API requests. |

Response body

Once you send the request, you get the following response.

```json
{
  "result": {
    "modelLabel": "{MODEL-NAME}",
    "trainingConfigVersion": "{CONFIG-VERSION}",
    "estimatedEndDateTime": "2022-04-18T15:47:58.8190649Z",
    "trainingStatus": {
      "percentComplete": 3,
      "startDateTime": "2022-04-18T15:45:06.8190649Z",
      "status": "running"
    },
    "evaluationStatus": {
      "percentComplete": 0,
      "status": "notStarted"
    }
  },
  "jobId": "{JOB-ID}",
  "createdDateTime": "2022-04-18T15:44:44Z",
  "lastUpdatedDateTime": "2022-04-18T15:45:48Z",
  "expirationDateTime": "2022-04-25T15:44:44Z",
  "status": "running"
}
```
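The polling described above can be sketched as a loop over the top-level `status` field of this response. This is a sketch with several assumptions. `fetch_status` and `wait_for_training` are hypothetical helpers. Only `running` and `notStarted` appear in the sample response, so the set of terminal statuses (`succeeded`, `failed`, `cancelled`) is an assumption. The poll interval is also arbitrary. The fetch step is passed in as a function so that the loop can be exercised without network access.

```python
import json
import time
import urllib.request
from typing import Any, Callable, Dict

# Assumption: these values end a job. Only "running" and "notStarted" appear in the sample.
TERMINAL_STATUSES = {"succeeded", "failed", "cancelled"}


def fetch_status(status_url: str, key: str) -> Dict[str, Any]:
    """GET the training job status (the location URL) and decode the JSON body."""
    req = urllib.request.Request(status_url, headers={"Ocp-Apim-Subscription-Key": key})
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


def wait_for_training(fetch: Callable[[], Dict[str, Any]], interval_s: float = 30.0,
                      max_polls: int = 240) -> Dict[str, Any]:
    """Poll until the job's top-level status is terminal, printing training progress."""
    for _ in range(max_polls):
        job = fetch()
        training = job.get("result", {}).get("trainingStatus", {})
        print(f"{job.get('status')}: training {training.get('percentComplete', 0)}% complete")
        if job.get("status") in TERMINAL_STATUSES:
            return job
        time.sleep(interval_s)
    raise TimeoutError("training job did not reach a terminal status in time")


# Usage against a real resource (not run here):
# job = wait_for_training(lambda: fetch_status(location_url, "<your-key>"))
```

Capping the number of polls avoids waiting forever if a job stalls. Because jobs expire after seven days, polling indefinitely isn't useful anyway.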

Cancel training job

To cancel a training job in Language Studio, go to the Training jobs page. Select the training job you want to cancel, and select Cancel from the top menu.

Create a POST request by using the following URL and headers to cancel a training job.

Request URL

Use the following URL when creating your API request. Replace the placeholder values with your own values.

{ENDPOINT}/language/authoring/analyze-text/projects/{PROJECT-NAME}/train/jobs/{JOB-ID}/:cancel?api-version={API-VERSION}

| Placeholder | Value | Example |
|---|---|---|
| {ENDPOINT} | The endpoint for authenticating your API request. | https://<your-custom-subdomain>.cognitiveservices.azure.com |
| {PROJECT-NAME} | The name of your project. This value is case-sensitive. | EmailApp |
| {JOB-ID} | The training job ID. | XXXXX-XXXXX-XXXX-XX |
| {API-VERSION} | The version of the API you're calling. The value referenced is for the latest version released. | 2022-05-01 |

Use the following header to authenticate your request.

| Key | Value |
|---|---|
| Ocp-Apim-Subscription-Key | The key to your resource. Used for authenticating your API requests. |

After you send your API request, you receive a 202 response with an Operation-Location header used to check the status of the job.
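The cancel call can be built the same way as the other requests. This is a minimal sketch. `build_cancel_request` is a hypothetical helper name. No request body is documented for this call, so the sketch sends an empty one, which is an assumption. The request is built but not sent.

```python
import urllib.request


def build_cancel_request(endpoint: str, project_name: str, job_id: str,
                         api_version: str, key: str) -> urllib.request.Request:
    """Build (but don't send) the POST request that cancels a training job."""
    url = (f"{endpoint.rstrip('/')}/language/authoring/analyze-text/projects/{project_name}"
           f"/train/jobs/{job_id}/:cancel?api-version={api_version}")
    # Assumption: no JSON body is needed, so an empty payload is sent with the POST.
    return urllib.request.Request(url, data=b"",
                                  headers={"Ocp-Apim-Subscription-Key": key}, method="POST")


if __name__ == "__main__":
    req = build_cancel_request("https://<your-custom-subdomain>.cognitiveservices.azure.com",
                               "EmailApp", "XXXXX-XXXXX-XXXX-XX", "2022-05-01", "<your-key>")
    print(req.get_method(), req.full_url)
    # To send it and read the status URL from the 202 response:
    # with urllib.request.urlopen(req) as resp:
    #     status_url = resp.headers["Operation-Location"]  # header lookup is case-insensitive
```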

Next steps

After training completes, you can view the model's performance and improve the model if needed. Once you're satisfied with your model, you can deploy it, which makes it available for classifying text.