APPLIES TO: Azure Data Factory, Azure Synapse Analytics

Tip

Try out Data Factory in Microsoft Fabric, an all-in-one analytics solution for enterprises. Microsoft Fabric covers everything from data movement to data science, real-time analytics, business intelligence, and reporting. Learn how to start a new trial for free!

This article outlines how to use Data Flow to transform data in data.world (Preview). To learn more, read the introductory article for Azure Data Factory or Azure Synapse Analytics.

Important

This connector is currently in preview. You can try it out and give us feedback. If you want to take a dependency on preview connectors in your solution, please contact Azure support.

Supported capabilities

This data.world connector is supported for the following capabilities:

| Supported capabilities | IR |
|---|---|
| Mapping data flow (source/-) | ① |

① Azure integration runtime ② Self-hosted integration runtime

For a list of data stores that are supported as sources/sinks, see the Supported data stores table.

Create a data.world linked service using UI

Use the following steps to create a data.world linked service in the Azure portal UI.

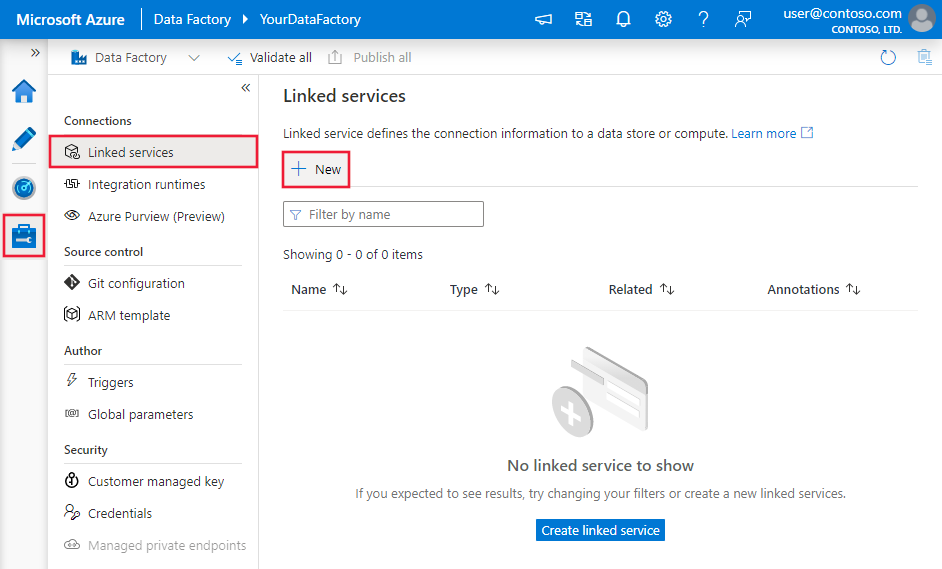

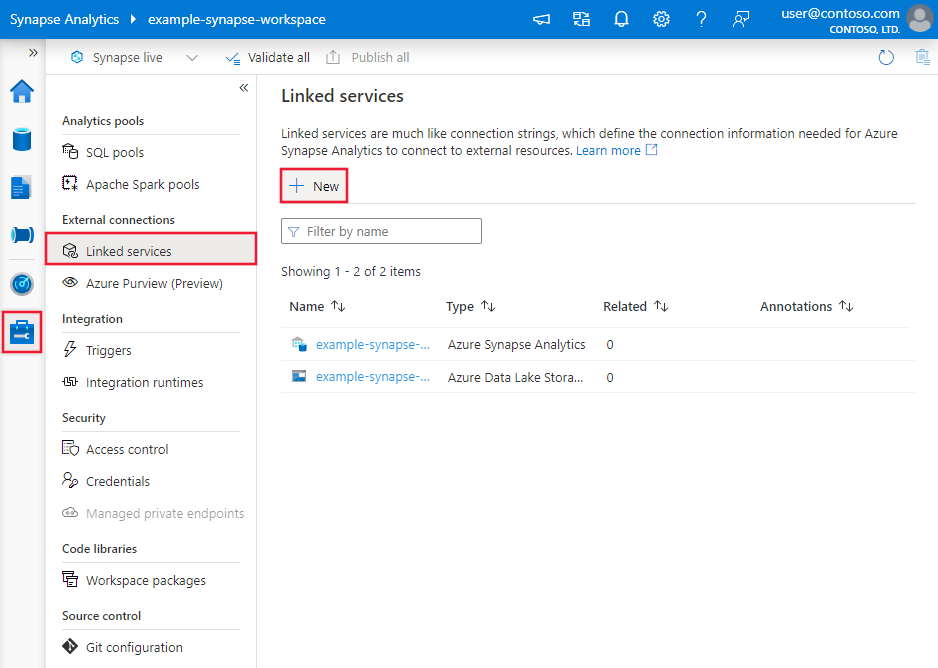

Browse to the Manage tab in your Azure Data Factory or Synapse workspace and select Linked Services, then select New:

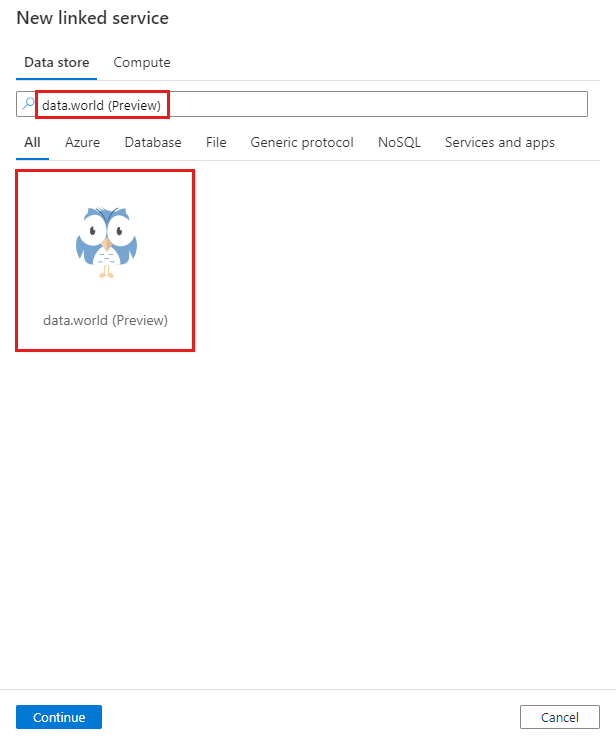

Search for data.world (Preview) and select the data.world (Preview) connector.

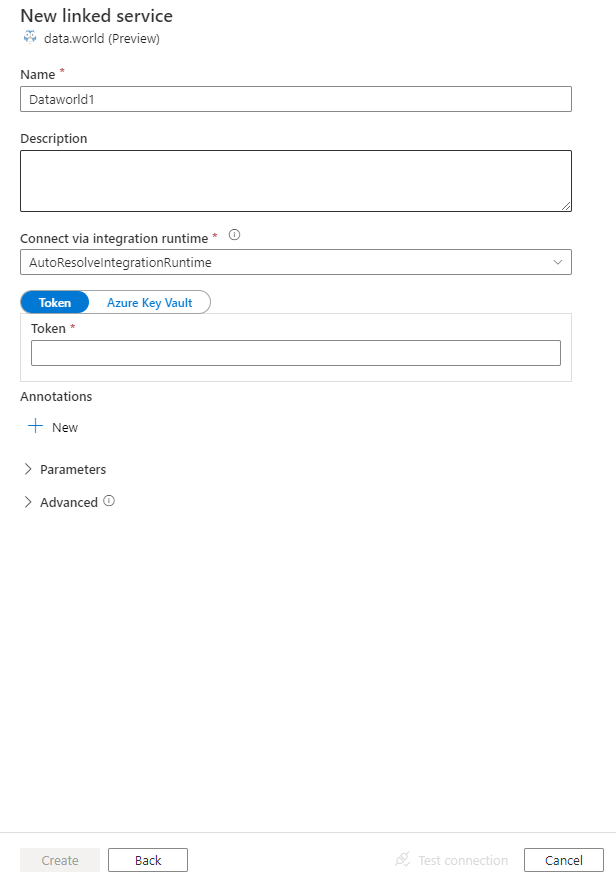

Configure the service details, test the connection, and create the new linked service.

Connector configuration details

The following sections provide information about properties that are used to define Data Factory and Synapse pipeline entities specific to data.world.

Linked service properties

The following properties are supported for the data.world linked service:

| Property | Description | Required |
|---|---|---|
| type | The type property must be set to Dataworld. | Yes |
| apiToken | Specify an API token for data.world. Mark this field as SecureString to store it securely, or reference a secret stored in Azure Key Vault. | Yes |

Example:

    {
        "name": "DataworldLinkedService",
        "properties": {
            "type": "Dataworld",
            "typeProperties": {
                "apiToken": {
                    "type": "SecureString",
                    "value": "<API token>"
                }
            }
        }
    }
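The apiToken description also allows referencing a secret stored in Azure Key Vault. A minimal sketch of that variant follows, assuming the general Key Vault secret reference shape used by other Data Factory linked services. The linked service name, the Key Vault linked service name, and the secret name are placeholders, not values from this article.

```json
{
    "name": "DataworldLinkedService",
    "properties": {
        "type": "Dataworld",
        "typeProperties": {
            "apiToken": {
                "type": "AzureKeyVaultSecret",
                "store": {
                    "referenceName": "<Azure Key Vault linked service name>",
                    "type": "LinkedServiceReference"
                },
                "secretName": "<name of the secret that holds the API token>"
            }
        }
    }
}
```

With this form the token value is not stored in the linked service definition. It is resolved from the vault at run time, so the factory or workspace identity needs permission to read that secret.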

Mapping data flow properties

When transforming data in mapping data flow, you can read tables from data.world. For more information, see the source transformation in mapping data flows. Only an inline dataset can be used as the source type.

Source transformation

The following table lists the properties supported by the data.world source. You can edit these properties in the Source options tab.

| Name | Description | Required | Allowed values | Data flow script property |
|---|---|---|---|---|
| Dataset name | The ID of the dataset in data.world. | Yes | String | datasetId |
| Table name | The ID of the table within the dataset in data.world. | No (if query is specified) | String | tableId |
| Query | Enter a SQL query to fetch data from data.world. An example is select * from MyTable. | No (if tableId is specified) | String | query |
| Owner | The owner of the dataset in data.world. | Yes | String | owner |

data.world source script example

When you use data.world as the source type, the associated data flow script is:

    source(allowSchemaDrift: true,
        validateSchema: false,
        store: 'dataworld',
        format: 'rest',
        owner: 'owner1',
        datasetId: 'dataset1',
        tableId: 'MyTable') ~> DataworldSource
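The properties table lists query as an alternative to tableId. The following sketch shows what a query-based source might look like, assuming the query property simply takes the place of tableId in the script. The stream name DataworldQuerySource and the values owner1, dataset1, and MyTable are illustrative only.

```
source(allowSchemaDrift: true,
    validateSchema: false,
    store: 'dataworld',
    format: 'rest',
    owner: 'owner1',
    datasetId: 'dataset1',
    query: 'select * from MyTable') ~> DataworldQuerySource
```

Because each of tableId and query is optional only when the other is specified, a source would normally set one of them.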

Related content

For a list of data stores supported as sources and sinks by the copy activity, see Supported data stores.