Tunnel connectivity issues

Microsoft Azure Kubernetes Service (AKS) uses a specific component for tunneled, secure communication between the nodes and the control plane. The tunnel consists of a server on the control plane side and a client on the cluster nodes side. This article discusses how to troubleshoot and resolve issues that relate to tunnel connectivity in AKS.

Note

Previously, the AKS tunnel component was tunnel-front. It has now been migrated to the Konnectivity service, an upstream Kubernetes component. For more information about this migration, see the AKS release notes and changelog.

Prerequisites

Symptoms

You receive an error message that resembles the following examples about port 10250:

Error from server: Get "https://<aks-node-name>:10250/containerLogs/<namespace>/<pod-name>/<container-name>": dial tcp <aks-node-ip>:10250: i/o timeout

Error from server: error dialing backend: dial tcp <aks-node-ip>:10250: i/o timeout

The Kubernetes API server uses port 10250 to connect to a node's kubelet to retrieve the logs. If port 10250 is blocked, kubectl logs and related features work only for pods that run on the nodes on which the tunnel component is scheduled. For more information, see Kubernetes ports and protocols: Worker nodes.

Because the tunnel connection between the server and the client can't be established, functionality such as the following won't work as expected:

Admission controller webhooks

Log retrieval (using the kubectl logs command)

Running a command in a container or getting inside a container (using the kubectl exec command)

Forwarding one or more local ports of a pod (using the kubectl port-forward command)
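To tell which nodes host the tunnel component, and therefore which pods can still be expected to work, you can list the tunnel pods together with their nodes. The following is a minimal sketch for a cluster that uses Konnectivity. The `app=konnectivity-agent` label selector and the helper name `list_tunnel_pods` are assumptions, so verify the label in your own cluster first.

```shell
# Hypothetical helper: list the tunnel pods and the nodes that run them.
# Assumption: the Konnectivity agent pods carry the label app=konnectivity-agent.
list_tunnel_pods() {
  # $1 = label selector (optional; defaults to the assumed Konnectivity label)
  kubectl get pods \
    --namespace kube-system \
    --selector "${1:-app=konnectivity-agent}" \
    --output custom-columns=POD:.metadata.name,NODE:.spec.nodeName,NODE_IP:.status.hostIP
}
```

Calling `list_tunnel_pods` without arguments uses the assumed label. Pass a different selector as the first argument if your cluster runs another tunnel component.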

Cause 1: A network security group (NSG) is blocking port 10250

Note

This cause is applicable to any tunnel components that you might have in your AKS cluster.

You can use an Azure network security group (NSG) to filter network traffic to and from Azure resources in an Azure virtual network. A network security group contains security rules that allow or deny inbound and outbound network traffic between several types of Azure resources. For each rule, you can specify source and destination, port, and protocol. For more information, see How network security groups filter network traffic.

If the NSG blocks port 10250 at the virtual network level, tunnel functionalities (such as logs and code execution) will work for only the pods that are scheduled on the nodes where tunnel pods are scheduled. The other pods won't work because their nodes won't be able to reach the tunnel, and the tunnel is scheduled on other nodes. To verify this state, you can test the connectivity by using netcat (nc) or telnet commands. You can run the az vmss run-command invoke command to conduct the connectivity test and verify whether it succeeds, times out, or causes some other issue:

az vmss run-command invoke --resource-group <infra-or-MC-resource-group> \
    --name <virtual-machine-scale-set-name> \
    --command-id RunShellScript \
    --instance-id <instance-id> \
    --scripts "nc -v -w 2 <ip-of-node-that-schedules-the-tunnel-component> 10250" \
    --output tsv \
    --query 'value[0].message'
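If you run the connectivity test against several nodes, it can help to classify the returned message automatically. The following sketch assumes the wording that the OpenBSD netcat variant typically prints ("succeeded", "timed out", "Connection refused"). Other netcat builds may word their messages differently, and the helper name `classify_nc_result` is hypothetical.

```shell
# Hypothetical helper: classify the message returned by the netcat test.
# Assumption: the matched substrings follow typical OpenBSD netcat output.
classify_nc_result() {
  # $1 = message returned by the run-command invocation
  case "$1" in
    *succeeded*)            echo "open" ;;
    *"timed out"*)          echo "blocked-or-filtered" ;;
    *"Connection refused"*) echo "refused" ;;
    *)                      echo "unknown" ;;
  esac
}
```

A result of `blocked-or-filtered` is consistent with an NSG or host firewall that drops traffic to port 10250. A result of `refused` suggests that the port is reachable but nothing is listening on it.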

Solution 1: Add an NSG rule to allow access to port 10250

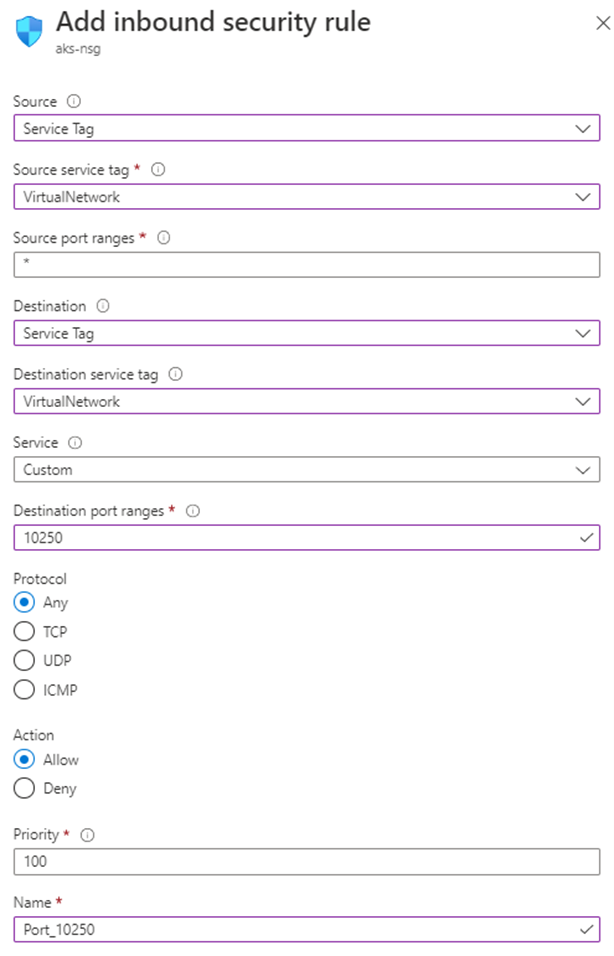

If you use an NSG, and you have specific restrictions, make sure that you add a security rule that allows traffic for port 10250 at the virtual network level. The following Azure portal image shows an example security rule:

If you want to be more restrictive, you can allow access to port 10250 at the subnet level only.

Note

The Priority field must be adjusted accordingly. For example, if you have a rule that denies multiple ports (including port 10250), the rule that's shown in the image should have a lower priority number (lower numbers have higher priority). For more information about Priority, see Security rules.
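The same kind of rule can also be created from the Azure CLI. The following is a sketch only: the helper name `allow_kubelet_port`, the rule name `AllowKubelet10250`, and the default priority of 100 are illustrative assumptions, and the priority must be lower than that of any rule that denies port 10250.

```shell
# Hypothetical helper: create an inbound NSG rule that allows TCP 10250
# inside the virtual network. Rule name and default priority are illustrative.
allow_kubelet_port() {
  # $1 = resource group that contains the NSG
  # $2 = NSG name
  # $3 = priority (optional; lower numbers are evaluated first)
  az network nsg rule create \
    --resource-group "$1" \
    --nsg-name "$2" \
    --name AllowKubelet10250 \
    --priority "${3:-100}" \
    --direction Inbound \
    --access Allow \
    --protocol Tcp \
    --source-address-prefixes VirtualNetwork \
    --destination-address-prefixes VirtualNetwork \
    --destination-port-ranges 10250
}
```

For example, `allow_kubelet_port <nsg-resource-group> <nsg-name> 200` creates the rule with priority 200. To restrict the rule to a subnet, replace the `VirtualNetwork` service tag with the subnet address range.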

If you don't see any behavioral change after you apply this solution, you can re-create the tunnel component pods. Deleting these pods causes them to be re-created.
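As a sketch of how the tunnel pods might be re-created on a cluster that uses Konnectivity, the following helper deletes them by label so that their controller schedules replacements. The `app=konnectivity-agent` label and the helper name `recreate_tunnel_pods` are assumptions. Check the labels on the tunnel pods in the kube-system namespace before you run it.

```shell
# Hypothetical helper: delete the tunnel pods so that they are re-created.
# Assumption: the Konnectivity agent pods carry the label app=konnectivity-agent.
recreate_tunnel_pods() {
  # $1 = label selector (optional; defaults to the assumed Konnectivity label)
  kubectl delete pods \
    --namespace kube-system \
    --selector "${1:-app=konnectivity-agent}"
}
```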

Cause 2: The Uncomplicated Firewall (UFW) tool is blocking port 10250

Note

This cause applies to any tunnel component that you have in your AKS cluster.

Uncomplicated Firewall (UFW) is a command-line program for managing a netfilter firewall. AKS nodes use Ubuntu. Therefore, UFW is installed on AKS nodes by default, but UFW is disabled.

By default, if UFW is enabled, it will block access to all ports, including port 10250. In this case, it's unlikely that you can use Secure Shell (SSH) to connect to AKS cluster nodes for troubleshooting. This is because UFW might also be blocking port 22. To troubleshoot, you can run the az vmss run-command invoke command to invoke a ufw command that checks whether UFW is enabled:

az vmss run-command invoke --resource-group <infra-or-MC-resource-group> \
    --name <virtual-machine-scale-set-name> \
    --command-id RunShellScript \
    --instance-id <instance-id> \
    --scripts "ufw status" \
    --output tsv \
    --query 'value[0].message'
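To interpret the returned status text in a script, a small parser can help. The following sketch assumes the usual `ufw status` layout: a `Status:` line followed by a rule table whose rows start with the port. The helper name `ufw_port_state` is hypothetical, and the check covers only plain `<port>` or `<port>/tcp` ALLOW rules.

```shell
# Hypothetical helper: decide from "ufw status" text whether a port is allowed.
# Assumption: rule rows look like "10250  ALLOW  Anywhere" or "10250/tcp  ALLOW  Anywhere".
ufw_port_state() {
  # $1 = text printed by "ufw status", $2 = port number
  if ! printf '%s\n' "$1" | grep -q 'Status: active'; then
    echo "ufw-inactive"
  elif printf '%s\n' "$1" | grep -Eq "(^|[[:space:]])$2(/tcp)?[[:space:]]+ALLOW"; then
    echo "allowed"
  else
    echo "not-allowed"
  fi
}
```

A result of `not-allowed` corresponds to the case in which UFW is enabled but has no rule that permits port 10250.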

What if the results indicate that UFW is enabled, and it doesn't specifically allow port 10250? In this case, tunnel functionalities (such as logs and code execution) won't work for the pods that are scheduled on the nodes that have UFW enabled. To fix the problem, apply one of the following solutions on UFW.

Important

Before you use this tool to make any changes, review the AKS support policy (especially node maintenance and access) to prevent your cluster from entering into an unsupported scenario.

Note

If you don't see any behavioral change after you apply a solution, you can re-create the tunnel component pods. Deleting these pods will cause them to be re-created.

Solution 2a: Disable Uncomplicated Firewall

Run the following az vmss run-command invoke command to disable UFW:

az vmss run-command invoke --resource-group <infra-or-MC-resource-group> \
    --name <virtual-machine-scale-set-name> \
    --command-id RunShellScript \
    --instance-id <instance-id> \
    --scripts "ufw disable" \
    --output tsv \
    --query 'value[0].message'

Solution 2b: Configure Uncomplicated Firewall to permit access to port 10250

To force UFW to allow access to port 10250, run the following az vmss run-command invoke command:

az vmss run-command invoke --resource-group <infra-or-MC-resource-group> \
    --name <virtual-machine-scale-set-name> \
    --command-id RunShellScript \
    --instance-id <instance-id> \
    --scripts "ufw allow 10250" \
    --output tsv \
    --query 'value[0].message'

Cause 3: The iptables tool is blocking port 10250

Note

This cause applies to any tunnel component that you have in your AKS cluster.

The iptables tool lets a system administrator configure the IP packet filter rules of a Linux firewall. You can configure the iptables rules to block communication on port 10250.

You can view the rules for your nodes to check whether port 10250 is blocked or the associated packets are dropped. To do this, run the following iptables command:

iptables --list --line-numbers

In the output, the data is grouped into several chains, including the INPUT chain. Each chain contains a table of rules under the following column headings:

num (rule number), target, prot (protocol), opt, source, destination

Does the INPUT chain contain a rule in which the target is DROP, the protocol is tcp, and the destination is tcp dpt:10250? If it does, iptables is blocking access to destination port 10250.
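The check described above can be sketched as a small filter over the iptables listing. The helper name `find_drop_rules` is hypothetical, and the sketch assumes that the listing was produced with `iptables --list INPUT --line-numbers --numeric`, so that ports appear as numbers.

```shell
# Hypothetical helper: print the rule numbers of INPUT rules that DROP tcp
# traffic to a given destination port.
# Input (stdin): output of "iptables --list INPUT --line-numbers --numeric".
find_drop_rules() {
  # $1 = destination port to look for
  awk -v port="$1" '
    $2 == "DROP" && $3 == "tcp" && $0 ~ ("dpt:" port "($|[^0-9])") { print $1 }
  '
}
```

On a node, this could be used as `iptables --list INPUT --line-numbers --numeric | find_drop_rules 10250`. Each number that is printed identifies a rule that blocks the port.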

Solution 3: Delete the iptables rule that blocks access on port 10250

Run one of the following commands to delete the iptables rule that prevents access to port 10250:

iptables --delete INPUT --jump DROP --protocol tcp --source <ip-number> --destination-port 10250

iptables --delete INPUT <input-rule-number>

To address your exact or potential scenario, we recommend that you check the iptables manual by running the iptables --help command.

Important

Before you use this tool to make any changes, review the AKS support policy (especially node maintenance and access) to prevent your cluster from entering into an unsupported scenario.

Cause 4: Egress port 1194 or 9000 isn't opened

Note

This cause applies to only the tunnel-front and aks-link pods.

Are there any egress traffic restrictions, such as from an AKS firewall? If there are, port 9000 is required in order to enable correct functionality of the tunnel-front pod. Similarly, port 1194 is required for the aks-link pod.

Konnectivity relies on port 443. By default, this port is open. Therefore, you don't have to worry about connectivity issues on that port.

Solution 4: Open port 9000

Although tunnel-front has been moved to the Konnectivity service, some AKS clusters still use tunnel-front, which relies on port 9000. Make sure that the virtual appliance or any network device or software allows access to port 9000. Likewise, if the cluster uses the aks-link pod, make sure that access to port 1194 is allowed. For more information about the required rules and dependencies, see Azure Global required network rules.

Cause 5: Source Network Address Translation (SNAT) port exhaustion

Note

This cause applies to any tunnel component that you have in your AKS cluster. However, it doesn't apply to private AKS clusters. Source Network Address Translation (SNAT) port exhaustion can occur for public communication only. For private AKS clusters, the API server is inside the AKS virtual network or subnet.

If SNAT port exhaustion occurs (failed SNAT ports), the nodes can't connect to the API server. The tunnel container is on the API server side. Therefore, tunnel connectivity won't be established.

If the SNAT port resources are exhausted, the outbound flows fail until the existing flows release some SNAT ports. Azure Load Balancer reclaims the SNAT ports when the flow closes. It uses a four-minute idle time-out to reclaim the SNAT ports from the idle flows.

You can view the SNAT ports from either the AKS load balancer metrics or the service diagnostics, as described in the following sections. For more information about how to view SNAT ports, see How do I check my outbound connection statistics?

AKS load balancer metrics

To use AKS load balancer metrics to view the SNAT ports, follow these steps:

In the Azure portal, search for and select Kubernetes services.

In the list of Kubernetes services, select the name of your cluster.

In the menu pane of the cluster, find the Settings heading, and then select Properties.

Select the name that's listed under Infrastructure resource group.

Select the kubernetes load balancer.

In the menu pane of the load balancer, find the Monitoring heading, and then select Metrics.

For the metric type, select SNAT Connection Count.

Select Apply splitting.

Set Split by to Connection State.
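The same metric can also be queried from the Azure CLI. The following sketch assumes that the load balancer metric is exposed under the name `SnatConnectionCount` with a `ConnectionState` dimension, which matches the portal labels used in the steps above. The helper name `failed_snat_connections` is hypothetical.

```shell
# Hypothetical helper: list failed SNAT connections for a load balancer.
# Assumption: metric name SnatConnectionCount and dimension ConnectionState.
failed_snat_connections() {
  # $1 = resource ID of the kubernetes load balancer
  az monitor metrics list \
    --resource "$1" \
    --metric SnatConnectionCount \
    --filter "ConnectionState eq 'Failed'" \
    --aggregation Total \
    --interval PT1M \
    --output table
}
```

The resource ID can be read with `az network lb show --resource-group <infra-or-MC-resource-group> --name kubernetes --query id --output tsv`.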

Service diagnostics

To use service diagnostics to view the SNAT ports, follow these steps:

In the Azure portal, search for and select Kubernetes services.

In the list of Kubernetes services, select the name of your cluster.

In the menu pane of the cluster, select Diagnose and solve problems.

Select Connectivity Issues.

Under SNAT Connection and Port Allocation, select View details.

If necessary, use the Time Range button to customize the time frame.

Solution 5a: Make sure the application is using connection pooling

This behavior might occur because an application isn't reusing existing connections. We recommend that you don't create one outbound connection per request. Such a configuration can cause connection exhaustion. Check whether the application code is following best practices and using connection pooling. Most libraries support connection pooling. Therefore, you shouldn't have to create a new outbound connection per request.

Solution 5b: Adjust the allocated outbound ports

If everything is OK within the application, you'll have to adjust the allocated outbound ports. For more information about outbound port allocation, see Configure the allocated outbound ports.
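As a rough sizing sketch, the number of nodes that a given port allocation supports can be estimated before you change it. The figure of 64,000 SNAT ports per outbound IP comes from the Azure Load Balancer documentation, not from this article, and both helper names are hypothetical.

```shell
# Hypothetical helper: estimate how many nodes an allocation can serve.
# Assumption: each outbound IP provides 64,000 SNAT ports.
max_nodes_for_snat() {
  # $1 = number of outbound IPs, $2 = allocated outbound ports per node
  echo $(( 64000 * $1 / $2 ))
}

# Hypothetical helper: apply a new outbound IP count and per-node port allocation.
set_outbound_ports() {
  # $1 = resource group, $2 = cluster name, $3 = outbound IP count, $4 = ports per node
  az aks update \
    --resource-group "$1" \
    --name "$2" \
    --load-balancer-managed-outbound-ip-count "$3" \
    --load-balancer-outbound-ports "$4"
}
```

For example, `max_nodes_for_snat 2 4000` prints 32, so two outbound IPs with 4,000 ports per node cover a cluster of up to 32 nodes under this assumption.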

Solution 5c: Use a Managed Network Address Translation (NAT) Gateway when you create a cluster

You can set up a new cluster to use a Managed Network Address Translation (NAT) Gateway for outbound connections. For more information, see Create an AKS cluster with a Managed NAT Gateway.
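A minimal sketch of such a cluster creation follows. The helper name `create_cluster_with_nat_gateway`, the outbound IP count of 2, and the idle time-out of 4 minutes are illustrative assumptions. Choose values that fit your workload.

```shell
# Hypothetical helper: create an AKS cluster that uses a managed NAT gateway
# for outbound connections. IP count and idle time-out are illustrative.
create_cluster_with_nat_gateway() {
  # $1 = resource group, $2 = cluster name
  az aks create \
    --resource-group "$1" \
    --name "$2" \
    --outbound-type managedNATGateway \
    --nat-gateway-managed-outbound-ip-count 2 \
    --nat-gateway-idle-timeout 4 \
    --generate-ssh-keys
}
```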

Third-party contact disclaimer

Microsoft provides third-party contact information to help you find additional information about this topic. This contact information may change without notice. Microsoft does not guarantee the accuracy of third-party contact information.
