Monitor online endpoints

Azure Machine Learning integrates with Azure Monitor to track and monitor metrics and logs for online endpoints. You can view metrics in charts, compare endpoints and deployments, pin charts to Azure portal dashboards, configure alerts, query log tables, and send logs to supported targets. You can also use Application Insights to analyze events from user containers.

Metrics: For endpoint-level metrics such as request latency, requests per minute, new connections per second, and network bytes, you can drill down to see details at the deployment level or the status level. Deployment-level metrics such as CPU/GPU utilization and memory or disk utilization can also be drilled down to the instance level. With Azure Monitor, you can track these metrics in charts and set up dashboards and alerts for further analysis.

Logs: You can send metrics to a Log Analytics workspace, where you can query the logs with Kusto query syntax. You can also send metrics to Azure Storage accounts, Event Hubs, or both for further processing. In addition, dedicated log tables are available for online endpoint events, traffic, and console (container) logs. Kusto queries support complex analysis, including joins across multiple tables.

Application Insights: Curated environments include integration with Application Insights, and you can enable or disable this integration when you create an online deployment. Built-in metrics and logs are sent to Application Insights, and you can use its built-in features (such as Live metrics, Transaction search, Failures, and Performance) for further analysis.

In this article, you learn how to:

- Choose the right method to view and track metrics and logs

- View metrics for your online endpoint

- Create a dashboard for your metrics

- Create a metric alert

- View logs for your online endpoint

- Use Application Insights to track metrics and logs

Prerequisites

- Deploy an Azure Machine Learning online endpoint.

- You must have at least Reader access on the endpoint.

Metrics

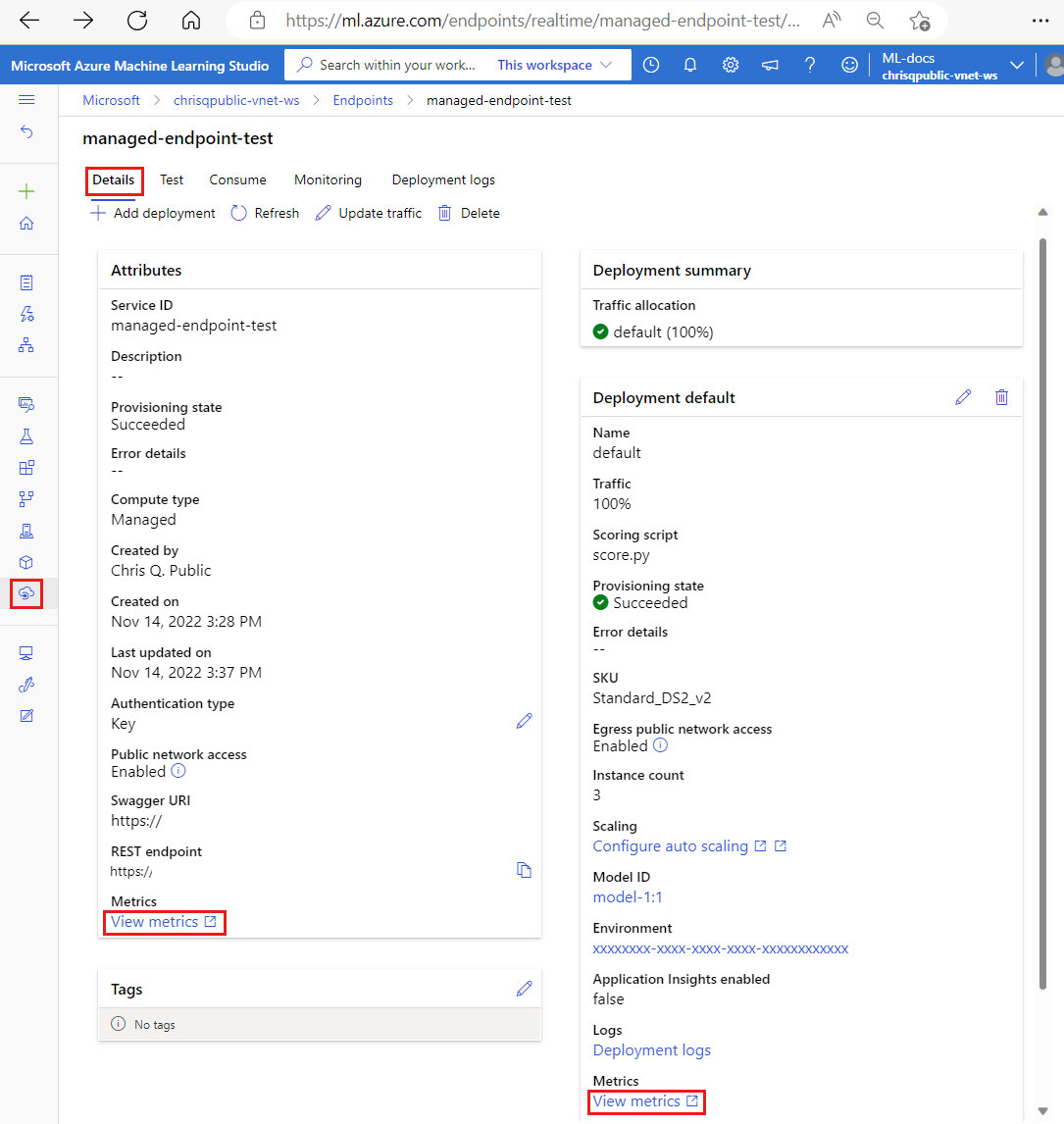

You can view metrics pages for online endpoints or deployments in the Azure portal. An easy way to reach these pages is through the links in the Azure Machine Learning studio user interface, specifically on the Details tab of an endpoint's page. These links take you to the exact metrics page in the Azure portal for the endpoint or deployment. Alternatively, you can go to the Azure portal and search for the metrics page of the endpoint or deployment.

To access the metrics pages through links available in the studio:

Go to Azure Machine Learning studio.

In the left navigation bar, select the Endpoints page.

Select an endpoint by clicking its name.

Select View metrics in the Attributes section of the endpoint to open the endpoint's metrics page in the Azure portal.

Select View metrics in the section for each available deployment to open that deployment's metrics page in the Azure portal.

To access metrics directly from the Azure portal:

Sign in to the Azure portal.

Navigate to the online endpoint or deployment resource.

Online endpoints and deployments are Azure Resource Manager (ARM) resources that you can find by going to the resource group that owns them. Look for the resource types Machine Learning online endpoint and Machine Learning online deployment.

In the left-hand column, select Metrics.

Available metrics

The metrics that you see depend on the resource that you select. Metrics are scoped differently for online endpoints and online deployments.

Metrics at endpoint scope

| Category | Metric | Name in REST API | Unit | Aggregation | Dimensions | Time grains | DS export |
|---|---|---|---|---|---|---|---|
| Traffic | Active connections: The total number of concurrent TCP connections active from clients. | ConnectionsActive | Count | Average | <none> | PT1M | No |
| Traffic | Data collection errors per minute: The number of data collection events dropped per minute. | DataCollectionErrorsPerMinute | Count | Minimum, Maximum, Average | deployment, reason, type | PT1M | No |
| Traffic | Data collection events per minute: The number of data collection events processed per minute. | DataCollectionEventsPerMinute | Count | Minimum, Maximum, Average | deployment, type | PT1M | No |
| Traffic | Network bytes: The bytes per second served for the endpoint. | NetworkBytes | BytesPerSecond | Average | <none> | PT1M | No |
| Traffic | New connections per second: The average number of new TCP connections per second established from clients. | NewConnectionsPerSecond | CountPerSecond | Average | <none> | PT1M | No |
| Traffic | Request latency: The average complete interval of time taken for a request to be responded to, in milliseconds. | RequestLatency | Milliseconds | Average | deployment | PT1M | Yes |
| Traffic | Request latency P50: The average P50 request latency aggregated over all request latency values collected during the selected time period. | RequestLatency_P50 | Milliseconds | Average | deployment | PT1M | Yes |
| Traffic | Request latency P90: The average P90 request latency aggregated over all request latency values collected during the selected time period. | RequestLatency_P90 | Milliseconds | Average | deployment | PT1M | Yes |
| Traffic | Request latency P95: The average P95 request latency aggregated over all request latency values collected during the selected time period. | RequestLatency_P95 | Milliseconds | Average | deployment | PT1M | Yes |
| Traffic | Request latency P99: The average P99 request latency aggregated over all request latency values collected during the selected time period. | RequestLatency_P99 | Milliseconds | Average | deployment | PT1M | Yes |
| Traffic | Requests per minute: The number of requests sent to the online endpoint within a minute. | RequestsPerMinute | Count | Average | deployment, statusCode, statusCodeClass, modelStatusCode | PT1M | No |
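The "Name in REST API" column is the identifier to pass when querying metrics programmatically. The following is a minimal sketch that only builds the query URL for the Azure Monitor metrics REST endpoint; it sends nothing. The API version, the shape of the resource ID, and the function name `build_metrics_url` are assumptions for illustration and should be checked against the Azure Monitor REST reference before use.

```python
from typing import Optional, Sequence
from urllib.parse import urlencode

# Assumed values: confirm the host and API version against the Azure Monitor REST reference.
ARM_BASE = "https://management.azure.com"
API_VERSION = "2018-01-01"


def build_metrics_url(resource_id: str,
                      metric_names: Sequence[str],
                      aggregation: str = "Average",
                      interval: str = "PT1M",
                      timespan: Optional[str] = None) -> str:
    """Build a metrics query URL for one ARM resource (hypothetical helper)."""
    params = {
        "api-version": API_VERSION,
        # Names come from the "Name in REST API" column, comma separated.
        "metricnames": ",".join(metric_names),
        "aggregation": aggregation,
        "interval": interval,  # PT1M is the time grain listed in the table
    }
    if timespan:
        params["timespan"] = timespan  # ISO 8601 start/end pair
    return f"{ARM_BASE}{resource_id}/providers/Microsoft.Insights/metrics?{urlencode(params)}"


# Placeholder resource ID; every segment in angle brackets is hypothetical.
example_url = build_metrics_url(
    "/subscriptions/<sub>/resourceGroups/<rg>/providers/"
    "Microsoft.MachineLearningServices/workspaces/<ws>/onlineEndpoints/<endpoint>",
    ["RequestLatency", "RequestsPerMinute"],
)
```

A real call would also need an authenticated request, for example a bearer token in the Authorization header, which is out of scope for this sketch.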

Bandwidth throttling

Bandwidth is throttled if the quota limits for managed online endpoints are exceeded. For more information on the limits, see the article on limits for online endpoints. To determine whether requests are being throttled:

- Monitor the "Network bytes" metric.

- Check the response trailers for the fields ms-azureml-bandwidth-request-delay-ms and ms-azureml-bandwidth-response-delay-ms. The values of these fields are the delays, in milliseconds, caused by bandwidth throttling.

For more information, see Bandwidth limit issues.
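As an illustration of the trailer check described above, the sketch below inspects a mapping of response trailers for the two delay fields. It assumes the trailers have already been read into a dictionary by whatever HTTP client is in use, and that the values are numeric strings; the helper names are hypothetical.

```python
REQUEST_DELAY = "ms-azureml-bandwidth-request-delay-ms"
RESPONSE_DELAY = "ms-azureml-bandwidth-response-delay-ms"


def bandwidth_delays_ms(trailers):
    """Return (request_delay, response_delay) in milliseconds.

    Field names are matched case-insensitively. Missing or unparsable
    values are treated as 0 (an assumption of this sketch).
    """
    lowered = {str(key).lower(): value for key, value in trailers.items()}

    def read(name):
        try:
            return float(lowered.get(name, 0))
        except (TypeError, ValueError):
            return 0.0

    return read(REQUEST_DELAY), read(RESPONSE_DELAY)


def was_throttled(trailers):
    """True if either trailer field reports a positive delay."""
    return any(delay > 0 for delay in bandwidth_delays_ms(trailers))
```

Combining this check with the "Network bytes" metric helps separate throttling delays from slow model responses.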

Metrics at deployment scope

| Category | Metric | Name in REST API | Unit | Aggregation | Dimensions | Time grains | DS export |
|---|---|---|---|---|---|---|---|
| Resource | CPU memory utilization percentage: Percentage of memory utilization on an instance. Utilization is reported at one-minute intervals. | CpuMemoryUtilizationPercentage | Percent | Minimum, Maximum, Average | instanceId | PT1M | Yes |
| Resource | CPU utilization percentage: Percentage of CPU utilization on an instance. Utilization is reported at one-minute intervals. | CpuUtilizationPercentage | Percent | Minimum, Maximum, Average | instanceId | PT1M | Yes |
| Resource | Data collection errors per minute: The number of data collection events dropped per minute. | DataCollectionErrorsPerMinute | Count | Minimum, Maximum, Average | instanceId, reason, type | PT1M | No |
| Resource | Data collection events per minute: The number of data collection events processed per minute. | DataCollectionEventsPerMinute | Count | Minimum, Maximum, Average | instanceId, type | PT1M | No |
| Resource | Deployment capacity: The number of instances in the deployment. | DeploymentCapacity | Count | Minimum, Maximum, Average | instanceId, State | PT1M | No |
| Resource | Disk utilization: Percentage of disk utilization on an instance. Utilization is reported at one-minute intervals. | DiskUtilization | Percent | Minimum, Maximum, Average | instanceId, disk | PT1M | Yes |
| Resource | GPU energy in joules: Interval energy in joules on a GPU node. Energy is reported at one-minute intervals. | GpuEnergyJoules | Count | Minimum, Maximum, Average | instanceId | PT1M | No |
| Resource | GPU memory utilization percentage: Percentage of GPU memory utilization on an instance. Utilization is reported at one-minute intervals. | GpuMemoryUtilizationPercentage | Percent | Minimum, Maximum, Average | instanceId | PT1M | Yes |
| Resource | GPU utilization percentage: Percentage of GPU utilization on an instance. Utilization is reported at one-minute intervals. | GpuUtilizationPercentage | Percent | Minimum, Maximum, Average | instanceId | PT1M | Yes |
| Traffic | Request latency P50: The average P50 request latency aggregated over all request latency values collected during the selected time period. | RequestLatency_P50 | Milliseconds | Average | <none> | PT1M | Yes |
| Traffic | Request latency P90: The average P90 request latency aggregated over all request latency values collected during the selected time period. | RequestLatency_P90 | Milliseconds | Average | <none> | PT1M | Yes |
| Traffic | Request latency P95: The average P95 request latency aggregated over all request latency values collected during the selected time period. | RequestLatency_P95 | Milliseconds | Average | <none> | PT1M | Yes |
| Traffic | Request latency P99: The average P99 request latency aggregated over all request latency values collected during the selected time period. | RequestLatency_P99 | Milliseconds | Average | <none> | PT1M | Yes |
| Traffic | Requests per minute: The number of requests sent to the online deployment within a minute. | RequestsPerMinute | Count | Average | envoy_response_code | PT1M | No |

Create dashboards and alerts

Azure Monitor lets you create dashboards and alerts based on metrics.

Create dashboards and visualize queries

You can create custom dashboards and visualize metrics from multiple sources in the Azure portal, including the metrics for your online endpoint. For more information on creating dashboards and visualizing queries, see Dashboards using log data and Dashboards using application data.

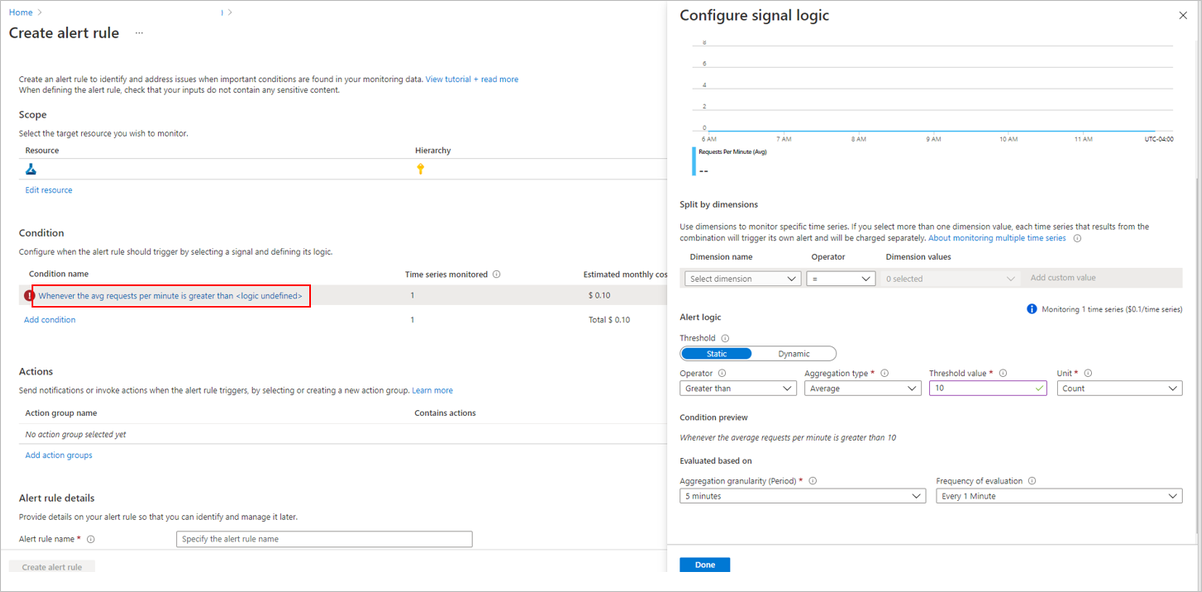

Create alerts

You can also create custom alerts that notify you of important status updates to your online endpoint:

At the top right of the metrics page, select New alert rule.

Select a condition name to specify when the alert should be triggered.

Select Add action groups > Create action groups to specify what should happen when the alert is triggered.

Select Create alert rule to finish creating the alert.

For more information, see Create Azure Monitor alert rules.

Enable autoscale based on metrics

You can enable autoscaling of deployments based on metrics by using either the UI or code. When you use code (the CLI or the SDK), you can reference the metric IDs listed in the tables of available metrics in the conditions that trigger autoscaling. For more information, see Autoscaling online endpoints.
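To make the idea of a metric-based autoscale condition concrete, here is a small local model of how such a condition could be evaluated. It is not the Azure autoscale API: the `ScaleRule` class, the operator names, and the averaging over samples are all assumptions of this sketch. Only the metric ID comes from the deployment-scope table.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Sequence


@dataclass(frozen=True)
class ScaleRule:
    metric_id: str    # e.g. "CpuUtilizationPercentage" from the metrics table
    operator: str     # "GreaterThan" or "LessThan" (names chosen for this sketch)
    threshold: float
    change: int       # instances to add (positive) or remove (negative)


def evaluate(rule: ScaleRule, samples: Sequence[float]) -> int:
    """Return the instance-count change requested by the rule.

    `samples` holds the metric values for the evaluation window,
    for example one value per PT1M time grain.
    """
    if not samples:
        return 0  # no data, no scaling decision
    average = mean(samples)
    if rule.operator == "GreaterThan":
        fired = average > rule.threshold
    elif rule.operator == "LessThan":
        fired = average < rule.threshold
    else:
        raise ValueError(f"unsupported operator: {rule.operator}")
    return rule.change if fired else 0


scale_out = ScaleRule("CpuUtilizationPercentage", "GreaterThan", 70.0, 1)
scale_in = ScaleRule("CpuUtilizationPercentage", "LessThan", 30.0, -1)
```

Keeping the scale-out and scale-in thresholds well apart, as in the two example rules, is a common way to avoid oscillating between instance counts.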

Logs

There are three logs that can be enabled for online endpoints:

AmlOnlineEndpointTrafficLog: Enable traffic logs if you want to inspect the details of your requests. Some cases where this is useful:

- If the response isn't 200, check the value of the "ResponseCodeReason" column to see what happened. Also check the reason in the "HTTPS status codes" section of the Troubleshoot online endpoints article.

- You can check the response code and response reason from your model in the "ModelStatusCode" and "ModelStatusReason" columns.

- You want to check the duration of a request, such as the total duration, the request and response durations, and the delay caused by network throttling. The logs give you this latency breakdown.

- You want to check how many requests, or how many failed requests, arrived recently.

AmlOnlineEndpointConsoleLog: Contains the logs that the containers write to the console. Some cases where this is useful:

- If the container fails to start, the console log can help with debugging.

- Monitor container behavior and make sure that all requests are handled correctly.

- Write the request ID to the console log. By joining AmlOnlineEndpointConsoleLog and AmlOnlineEndpointTrafficLog on the request ID in the Log Analytics workspace, you can trace a request from the network entry point of an online endpoint to the container.

- You can also use this log for performance analysis, to determine the time the model needs to process each request.

AmlOnlineEndpointEventLog: Contains event information about the container's life cycle. Currently, information is provided for the following types of events:

| Name | Message |
|---|---|
| BackOff | Back-off restarting failed container |
| Pulled | Container image "<IMAGE_NAME>" already present on machine |
| Killing | Container inference-server failed liveness probe, will be restarted |
| Created | Created container image-fetcher |
| Created | Created container inference-server |
| Created | Created container model-mount |
| LivenessProbeFailed | Liveness probe failed: <FAILURE_CONTENT> |
| ReadinessProbeFailed | Readiness probe failed: <FAILURE_CONTENT> |
| Started | Started container image-fetcher |
| Started | Started container inference-server |
| Started | Started container model-mount |
| Killing | Stopping container inference-server |
| Killing | Stopping container model-mount |
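The request-tracing case described for AmlOnlineEndpointConsoleLog can be sketched as a pair of Kusto queries, generated here from Python. This assumes that the scoring code writes the same ID that appears in the XRequestId column into its console output; whether it does depends on your own logging. The helper names are hypothetical, and the queries use only column names documented for these tables.

```python
def kql_string(value: str) -> str:
    """Quote a value as a KQL string literal, escaping backslashes and double quotes."""
    return '"' + value.replace("\\", "\\\\").replace('"', '\\"') + '"'


def trace_request_queries(request_id: str) -> dict:
    """Return Kusto queries that follow one request through both log tables."""
    rid = kql_string(request_id)
    traffic = (
        "AmlOnlineEndpointTrafficLog\n"
        f"| where XRequestId == {rid}\n"
        "| project DeploymentName, ResponseCode, ResponseCodeReason, "
        "ModelStatusCode, TotalDurationMs"
    )
    console = (
        "AmlOnlineEndpointConsoleLog\n"
        # Assumes the container logged the request ID somewhere in the message text.
        f"| where Message contains {rid}\n"
        "| project TimeGenerated, InstanceId, ContainerName, Message\n"
        "| order by TimeGenerated asc"
    )
    return {"traffic": traffic, "console": console}
```

Paste either query into the Logs view of the Log Analytics workspace. The traffic query shows how the endpoint answered the request, and the console query shows what the container logged while handling it.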

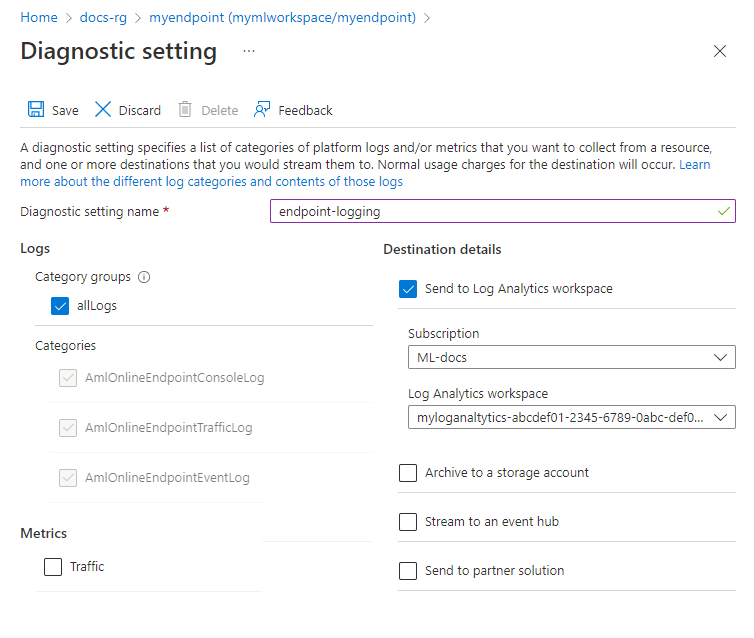

How to enable or disable logs

Important

Logging uses Azure Log Analytics. If you don't currently have a Log Analytics workspace, you can create one by following the steps in Create a Log Analytics workspace in the Azure portal.

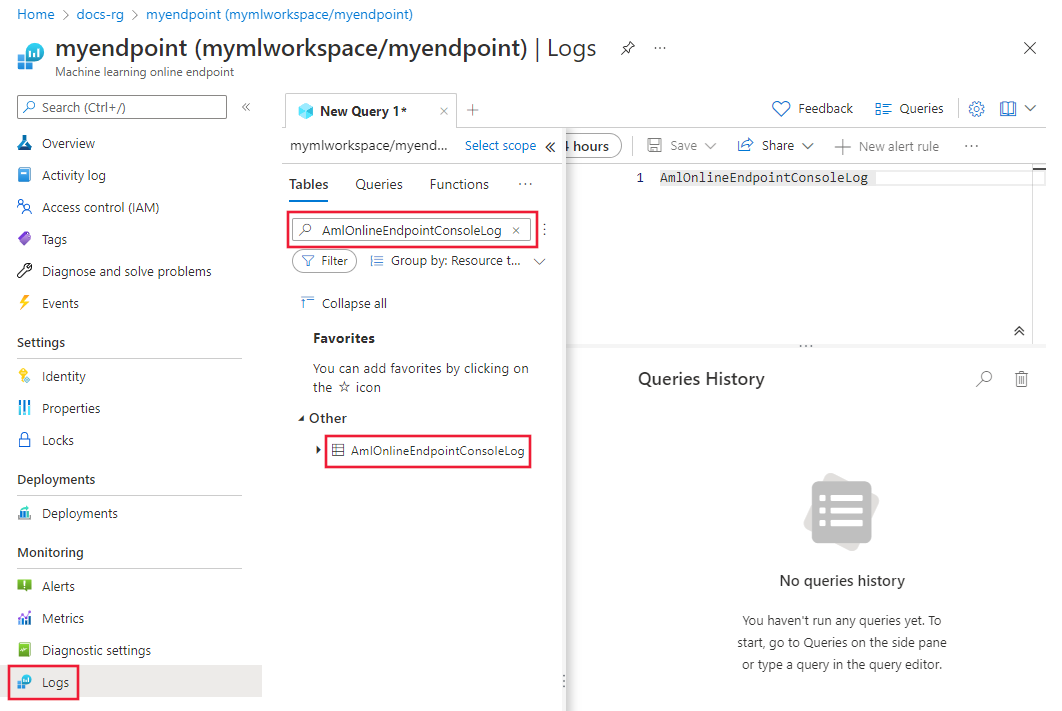

In the Azure portal, go to the resource group that contains your endpoint, and then select the endpoint.

In the Monitoring section on the left of the page, select Diagnostic settings and then Add settings.

Select the log categories to enable, select Send to Log Analytics workspace, and then select the Log Analytics workspace to use. Finally, enter a name for the diagnostic setting and select Save.

Important

It can take up to an hour for the connection to the Log Analytics workspace to be enabled. Wait an hour before continuing with the next steps.

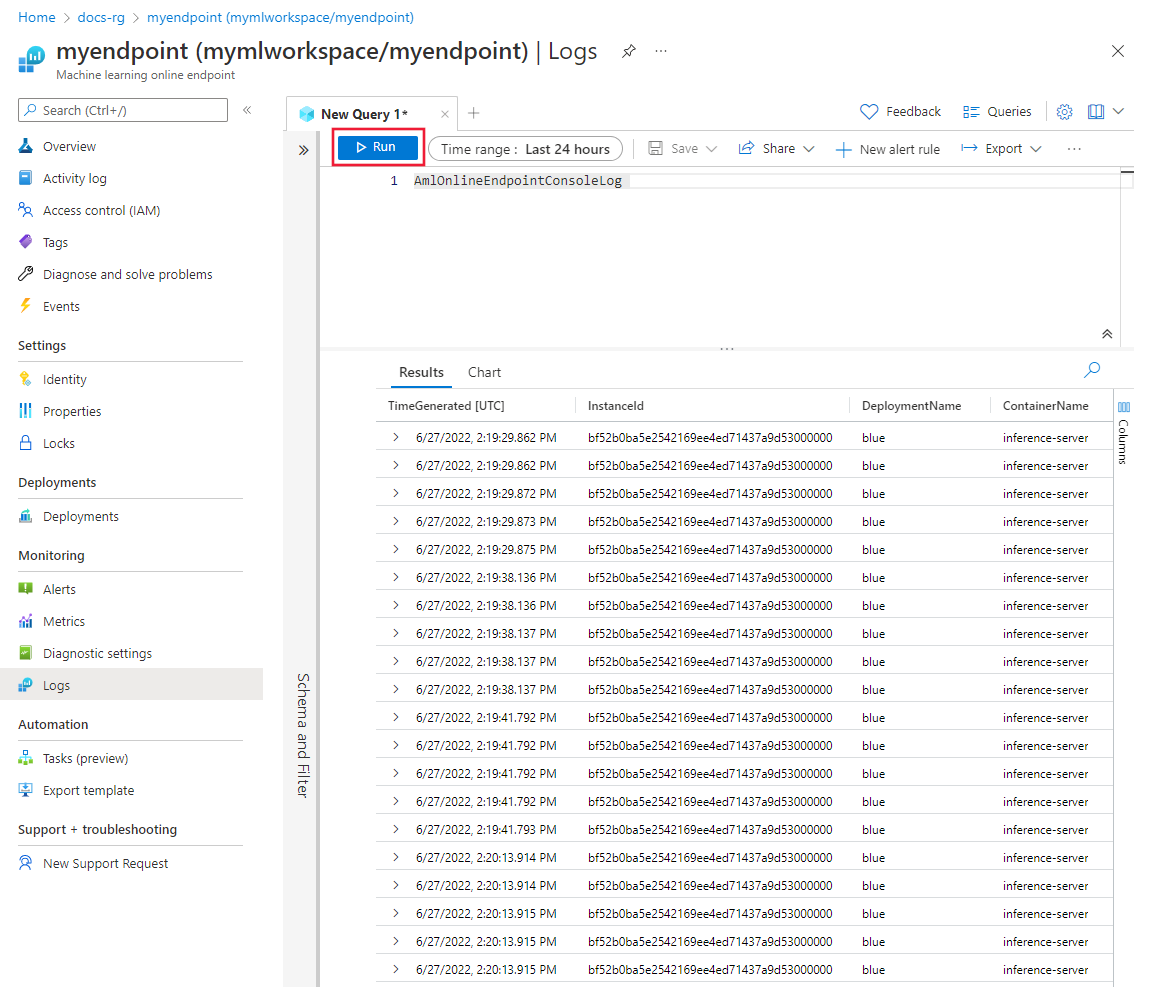

Submit scoring requests to the endpoint. This activity should create entries in the logs.

From either the online endpoint properties or the Log Analytics workspace, select Logs on the left of the screen.

Close the Queries dialog that opens automatically, and then double-click AmlOnlineEndpointConsoleLog. If you don't see it, use the Search field.

Select Run.

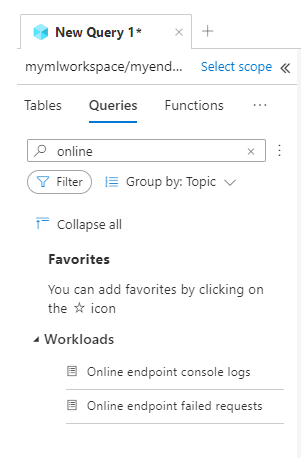

Example queries

You can find example queries on the Queries tab while viewing logs. Search for Online endpoint to find them.

Log column details

The following tables describe the data stored in each log:

AmlOnlineEndpointTrafficLog

| Property | Description |
|---|---|
| Method | The method requested by the client. |
| Path | The path requested by the client. |
| SubscriptionId | The machine learning subscription ID of the online endpoint. |
| AzureMLWorkspaceId | The machine learning workspace ID of the online endpoint. |
| AzureMLWorkspaceName | The machine learning workspace name of the online endpoint. |
| EndpointName | The name of the online endpoint. |
| DeploymentName | The name of the online deployment. |
| Protocol | The protocol of the request. |
| ResponseCode | The final response code returned to the client. |
| ResponseCodeReason | The final response code reason returned to the client. |
| ModelStatusCode | The response status code from the model. |
| ModelStatusReason | The response status reason from the model. |
| RequestPayloadSize | The total number of bytes received from the client. |
| ResponsePayloadSize | The total number of bytes sent back to the client. |
| UserAgent | The user-agent header of the request, including comments but truncated to a maximum of 70 characters. |
| XRequestId | The request ID generated by Azure Machine Learning for internal tracing. |
| XMSClientRequestId | The tracking ID generated by the client. |
| TotalDurationMs | Duration in milliseconds from the request start time to the last response byte sent back to the client. If the client disconnected, it is measured from the start time to the client disconnect time. |
| RequestDurationMs | Duration in milliseconds from the request start time to the last byte of the request received from the client. |
| ResponseDurationMs | Duration in milliseconds from the request start time to the first response byte read from the model. |
| RequestThrottlingDelayMs | Delay in milliseconds in request data transfer due to network throttling. |
| ResponseThrottlingDelayMs | Delay in milliseconds in response data transfer due to network throttling. |
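The duration and throttling columns can be combined into a simple latency breakdown. The sketch below works on a single exported row represented as a dictionary. It assumes that the throttling delays are contained within TotalDurationMs, which the column descriptions suggest but do not state outright; the function name is hypothetical.

```python
def throttling_summary(row):
    """Summarize how much of a request's total duration was throttling delay.

    `row` is a mapping with the AmlOnlineEndpointTrafficLog column names.
    Missing throttling columns are treated as 0.
    """
    total = float(row["TotalDurationMs"])
    delay = (float(row.get("RequestThrottlingDelayMs", 0))
             + float(row.get("ResponseThrottlingDelayMs", 0)))
    # Guard against zero-duration rows to avoid dividing by zero.
    share = delay / total if total > 0 else 0.0
    return {"throttling_delay_ms": delay, "throttling_share": share}


example = throttling_summary({
    "TotalDurationMs": 200,
    "RequestThrottlingDelayMs": 30,
    "ResponseThrottlingDelayMs": 20,
})
```

A consistently high share points to bandwidth limits rather than model latency as the main contributor to slow requests.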

AmlOnlineEndpointConsoleLog

| Property | Description |
|---|---|
| TimeGenerated | The timestamp (UTC) of when the log was generated. |
| OperationName | The operation associated with the log record. |
| InstanceId | The ID of the instance that generated this log record. |
| DeploymentName | The name of the deployment associated with the log record. |
| ContainerName | The name of the container where the log was generated. |
| Message | The content of the log. |

AmlOnlineEndpointEventLog

| Property | Description |
|---|---|
| TimeGenerated | The timestamp (UTC) of when the log was generated. |
| OperationName | The operation associated with the log record. |
| InstanceId | The ID of the instance that generated this log record. |
| DeploymentName | The name of the deployment associated with the log record. |
| Name | The name of the event. |
| Message | The content of the event. |

Using Application Insights

Curated environments include integration with Application Insights, and you can enable or disable this integration when you create an online deployment. Built-in metrics and logs are sent to Application Insights, and you can use its built-in features (such as Live metrics, Transaction search, Failures, and Performance) for further analysis.

For more information, see Application Insights overview.

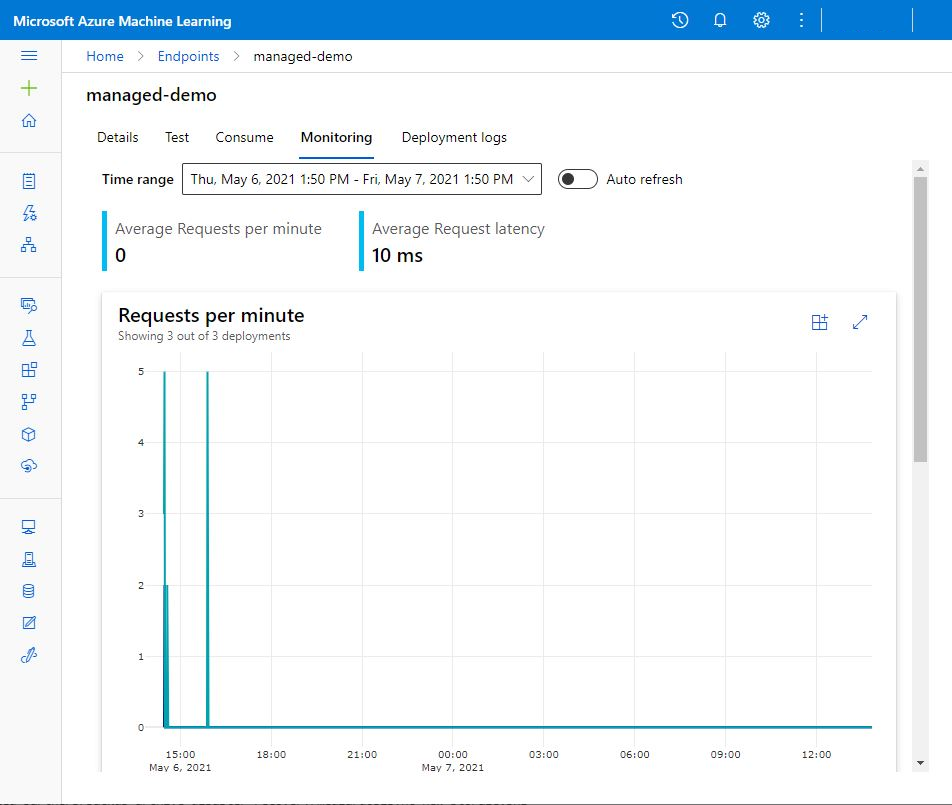

In the studio, you can use the Monitoring tab on an online endpoint's page to see high-level activity monitor graphs for the managed online endpoint. To use the Monitoring tab, you must select Enable Application Insight diagnostic and data collection when you create the endpoint.

Related content

- Learn how to view costs for your deployed endpoint.

- Read more about metrics explorer.