A Log Analytics workspace is a data store into which you can collect any type of log data from all of your Azure and non-Azure resources and applications. We recommend that you send all log data to one Log Analytics workspace, unless you have specific business needs that require you to create multiple workspaces, as described in Design a Log Analytics workspace architecture.

This article explains how to create a Log Analytics workspace.

Prerequisites

To create a Log Analytics workspace, you need an Azure account with an active subscription. You can create an account for free.

Permissions required

You need Microsoft.OperationalInsights/workspaces/write permissions on the resource group where you want to create the Log Analytics workspace. The Log Analytics Contributor built-in role, for example, provides this permission.
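As a rough illustration of the permission requirement, the following sketch checks whether a list of role action patterns covers the required write action. It assumes that action patterns use `*` as a wildcard and are compared case-insensitively. The function name `grants_action` and both example action lists are hypothetical and are not copied from any real role definition.

```python
from fnmatch import fnmatchcase

# The action named in this section.
REQUIRED_ACTION = "Microsoft.OperationalInsights/workspaces/write"


def grants_action(allowed_actions, not_actions=(), required=REQUIRED_ACTION):
    """Return True if the action patterns permit `required`.

    Assumption: patterns use `*` as a wildcard and matching ignores case.
    """
    target = required.lower()

    def matches(patterns):
        return any(fnmatchcase(target, pattern.lower()) for pattern in patterns)

    # An action is granted when it is allowed and not explicitly excluded.
    return matches(allowed_actions) and not matches(not_actions)


# Hypothetical action lists, for illustration only.
example_contributor_actions = ["Microsoft.OperationalInsights/*"]
example_reader_actions = ["*/read"]

print(grants_action(example_contributor_actions))
print(grants_action(example_reader_actions))
```

With these example lists, the first call reports that the write action is covered and the second reports that it is not.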

Create a workspace

Use the Log Analytics workspaces menu to create a workspace.

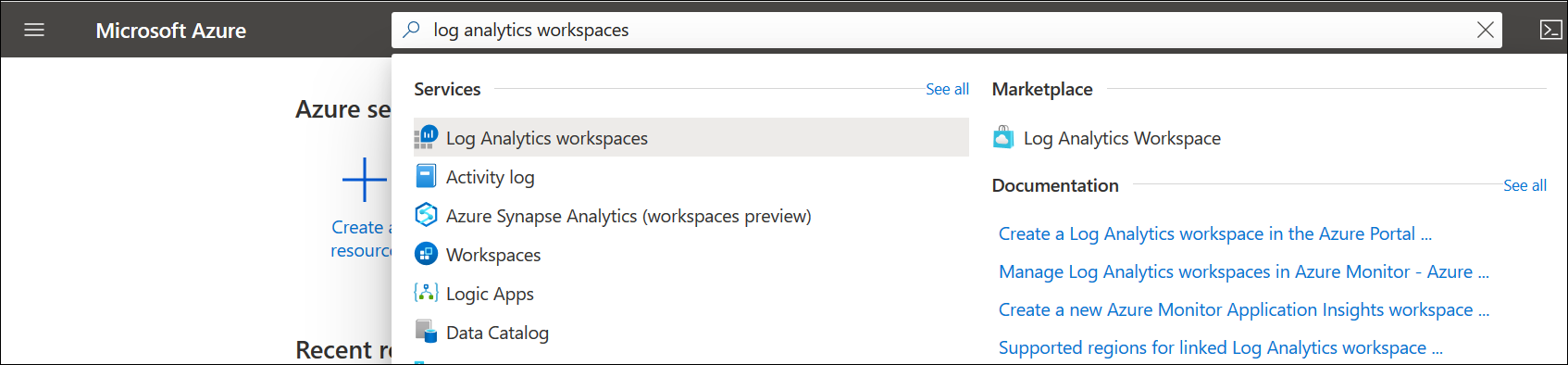

In the Azure portal, enter Log Analytics in the search box. As you begin typing, the list filters based on your input. Select Log Analytics workspaces.

Select Create.

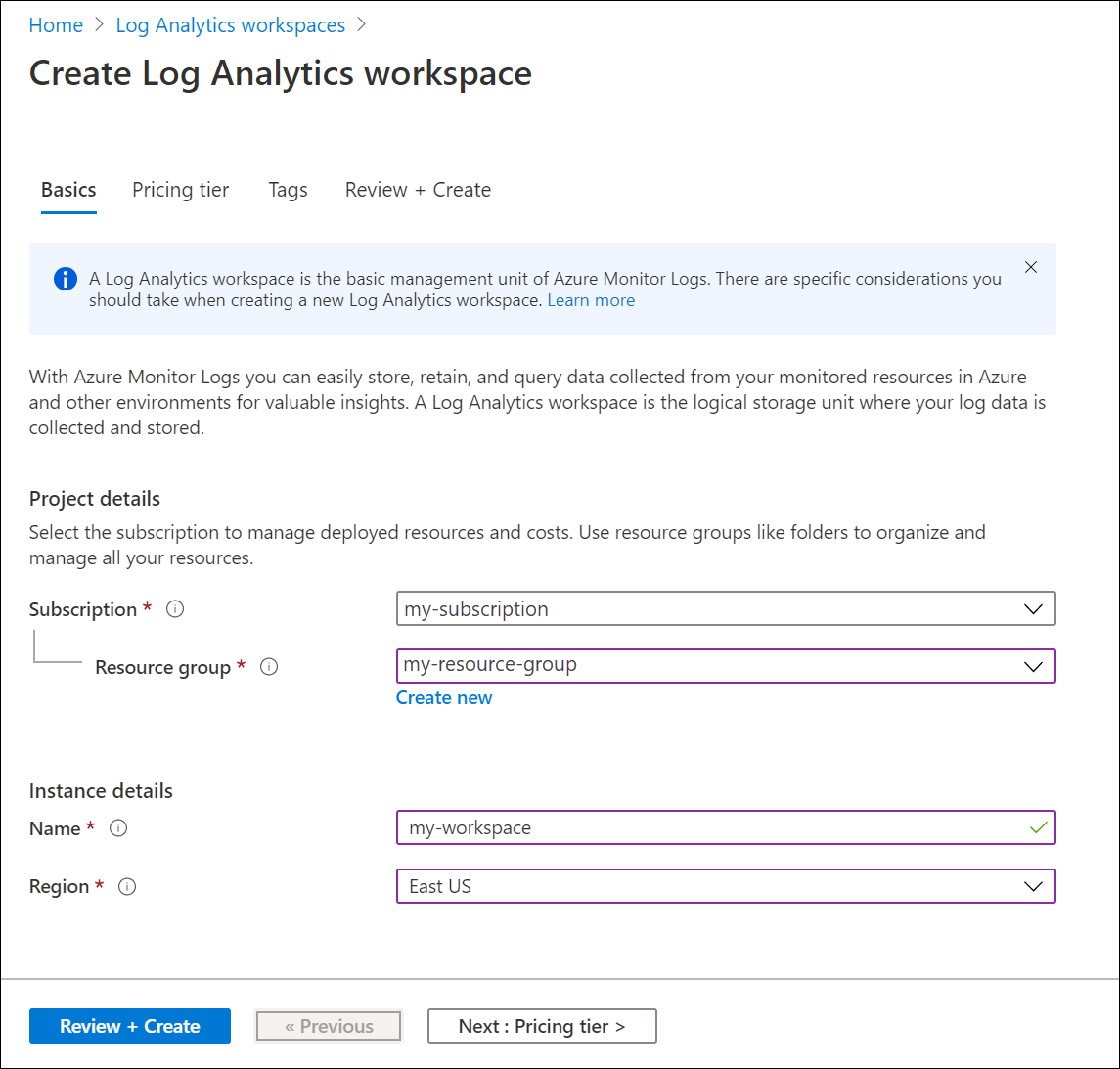

Select a Subscription from the dropdown.

Use an existing Resource Group or create a new one.

Provide a name for the new Log Analytics workspace, such as DefaultLAWorkspace. This name must be unique per resource group.

Select an available Region. For more information, see which regions Log Analytics is available in, and search for Azure Monitor in the Search for a product box on that page.

Select Review + Create to review the settings, and then select Create to create the workspace. The default pay-as-you-go pricing tier is applied. No charges are incurred until you start collecting enough data. For more information about other pricing tiers, see Log Analytics pricing details.
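The same choices you make in the portal (subscription, resource group, name, and region) can also be expressed as a single Azure Resource Manager request. The sketch below only builds that request and doesn't send it. The API version `2022-10-01`, the `PerGB2018` SKU name for pay-as-you-go, and the helper name `build_create_workspace_request` are assumptions for illustration. Check the current REST reference before relying on them.

```python
import json
from urllib.parse import quote

ARM_ENDPOINT = "https://management.azure.com"
API_VERSION = "2022-10-01"  # assumption: verify against the REST reference


def build_create_workspace_request(subscription_id, resource_group,
                                   workspace_name, region):
    """Build (but don't send) a PUT request that creates a workspace."""
    path = (
        f"/subscriptions/{quote(subscription_id, safe='')}"
        f"/resourceGroups/{quote(resource_group, safe='')}"
        "/providers/Microsoft.OperationalInsights"
        f"/workspaces/{quote(workspace_name, safe='')}"
    )
    body = {
        "location": region,
        # Assumption: PerGB2018 is the SKU behind the pay-as-you-go tier.
        "properties": {"sku": {"name": "PerGB2018"}},
    }
    return {
        "method": "PUT",
        "url": f"{ARM_ENDPOINT}{path}?api-version={API_VERSION}",
        "body": json.dumps(body),
    }


# Placeholder values; replace them with your own.
request = build_create_workspace_request(
    "00000000-0000-0000-0000-000000000000",
    "my-resource-group",
    "DefaultLAWorkspace",
    "eastus",
)
print(request["method"], request["url"])
print(request["body"])
```

To send the request, you would add an `Authorization: Bearer <token>` header for an identity that holds the write permission described earlier.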

Troubleshooting

When you create a workspace that was deleted in the last 14 days and is in a soft-delete state, the outcome of the operation depends on your workspace configuration:

If you provide the same workspace name, resource group, subscription, and region as the deleted workspace, your workspace is recovered, including its data, configuration, and connected agents.

Workspace names must be unique within a resource group. If you use a workspace name that already exists, or that belongs to a soft-deleted workspace, an error is returned. To permanently delete your soft-deleted workspace and create a new workspace with the same name, follow these steps:

- Recover your workspace.

- Permanently delete your workspace.

- Create a new workspace by using the same workspace name.
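The three steps above can be sketched as an ordered list of Azure Resource Manager requests, together with a check for the 14-day soft-delete window. This is a minimal sketch under stated assumptions: the API version `2022-10-01`, the `force=true` query parameter for permanent deletion, and the helper names are illustrative, and whether the boundary of the 14-day window is inclusive is also an assumption. The code builds the plan only and doesn't call Azure.

```python
from datetime import datetime, timedelta, timezone

SOFT_DELETE_WINDOW = timedelta(days=14)  # recovery period described above
API_VERSION = "2022-10-01"  # assumption: verify against the REST reference


def is_in_soft_delete_window(deleted_at, now=None):
    """Return True if a workspace deleted at `deleted_at` may still be recoverable."""
    if now is None:
        now = datetime.now(timezone.utc)
    return timedelta(0) <= now - deleted_at <= SOFT_DELETE_WINDOW


def plan_name_reuse(workspace_resource_url):
    """List the requests for recover, permanent delete, and re-create, in order."""
    base = f"{workspace_resource_url}?api-version={API_VERSION}"
    return [
        # Recreating with the same name, resource group, subscription,
        # and region recovers the soft-deleted workspace.
        ("PUT", base, "recover the soft-deleted workspace"),
        # Assumption: force=true deletes without the recovery option.
        ("DELETE", f"{base}&force=true", "permanently delete the workspace"),
        ("PUT", base, "create a new workspace with the same name"),
    ]


# Placeholder resource URL; the angle-bracket segments are not real values.
url = ("https://management.azure.com/subscriptions/<subscription-id>"
       "/resourceGroups/<resource-group>/providers"
       "/Microsoft.OperationalInsights/workspaces/<workspace-name>")
for method, target, purpose in plan_name_reuse(url):
    print(f"{method:6} {purpose}")
```

Keeping the plan as data makes the order explicit: the permanent delete only makes sense after the recovery request succeeds.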

Next steps

Now that you have a workspace available, you can configure collection of monitoring telemetry, run log searches to analyze that data, and add a management solution to provide more data and analytic insights. To learn more:

- See Monitor health of Log Analytics workspace in Azure Monitor to create alert rules to monitor the health of your workspace.

- See Collect Azure service logs and metrics for use in Log Analytics to enable data collection from Azure resources with Azure Diagnostics or Azure Storage.